Build a full-stack, server-rendered Nuxt site with Azure Static Web Apps

Nuxt is a powerful frontend JavaScript framework for Vue.js with full-stack capabilities such as server-side rendering and server routes. Since Nuxt 3, we can deploy full-stack Nuxt applications to Azure Static Web Apps with zero configuration required! Let’s jump in and see how to build and deploy a full-stack Nuxt app on Azure with Azure Static Web Apps. (The complete application is available on GitHub, and the deployed application is at https://happy-beach-029f0310f.5.azurestaticapps.net/items.)

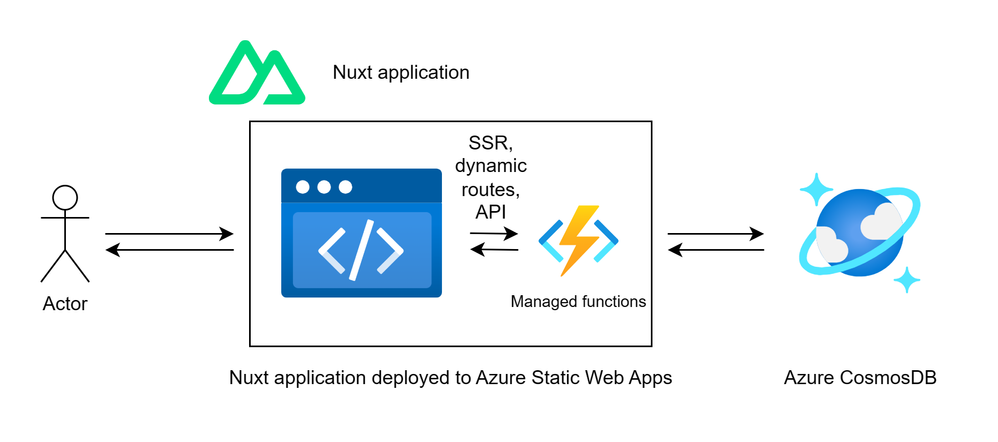

Architecture diagram Nuxt app deployed to Azure Static Web Apps

When we deploy our Nuxt site to Azure Static Web Apps, Nuxt detects the deployment target and automatically builds the project into two separate bundles: a bundle of static files, which is deployed to Azure Static Web Apps’ globally distributed static host, and a bundle of Azure Functions files, which is deployed to Azure Static Web Apps’ managed functions. This makes optimized frontend hosting for Nuxt on Static Web Apps a breeze! Alternatively, we can specify the Azure preset in our build command.

Illustration of Nuxt build step into split static and Azure Functions bundles

Prerequisites

This tutorial requires Node.js installed locally, an Azure subscription, and a GitHub account. For this sample application, we’ll use an existing Azure CosmosDB for NoSQL database to demonstrate how we can access sensitive backend services, such as databases or third-party APIs, from Nuxt’s own server routes. The schema of the Azure CosmosDB for NoSQL database is as follows:

SWAStore (Database)
  Items (Container)
    Item (Item)
    {
      id: string
      title: string
      price: number
    }
  Sales (Container)
    Item (Item)
    {
      id: string
      date: string (date in ISO String format)
      items: [
        {
          id: string
          quantity: number
        }
      ]
    }
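The schema above can also be written down as TypeScript types, which is handy when validating request bodies in server routes. This is only a sketch: the names `SaleLine` and `isItem` are hypothetical and not part of the sample application, and the comment about the sold item's id is an assumption about how sale entries reference items.

```typescript
// Types mirroring the Cosmos DB schema described above.
interface Item {
  id: string;
  title: string;
  price: number;
}

// One entry in a sale's `items` array (hypothetical name).
interface SaleLine {
  id: string; // assumed to be the id of the Item that was sold
  quantity: number;
}

interface Sale {
  id: string;
  date: string; // date in ISO string format, e.g. new Date().toISOString()
  items: SaleLine[];
}

// Hypothetical runtime guard for untrusted input such as a POST body.
function isItem(value: unknown): value is Item {
  if (typeof value !== "object" || value === null) return false;
  const v = value as Record<string, unknown>;
  return (
    typeof v.id === "string" &&
    typeof v.title === "string" &&
    typeof v.price === "number"
  );
}

// Example value that satisfies the Sale shape.
const exampleSale: Sale = {
  id: "sale-1",
  date: new Date(0).toISOString(),
  items: [{ id: "item-1", quantity: 2 }],
};
```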

Getting started

We’ll get started by creating a Nuxt project. This project will contain the pages for our website with server-side rendering, along with server routes responsible for fetching data from our database. It will be a sample dashboard application that allows us to manage sales for an e-commerce site, specifying the items in our store and the sales that occurred.

npx nuxi@latest init nuxt-swa-full-stack-app

cd nuxt-swa-full-stack-app

npm install @azure/cosmos

code .

npm run dev

These commands will scaffold a Nuxt project, install Node dependencies with npm, open the project in Visual Studio Code and run the Nuxt development server. We can now access our project at http://localhost:3000. We’ll follow up by setting our environment variables with our CosmosDB credentials so that we can access our database from our application. Within nuxt-swa-full-stack-app, run the following:

echo 'COSMOSDB_KEY="<ENTER COSMOSDB KEY HERE>"
COSMOSDB_ENDPOINT="<ENTER COSMOSDB URL HERE>"' > .env

This will create a .env file which will be read by Nuxt and make these environment variables available within our Nuxt project, as indicated in Nuxt docs. We’ll make use of these environment variables in the nuxt.config.ts file, setting these environment variables as configuration that will be accessible in our project.

export default defineNuxtConfig({
  devtools: { enabled: true },
  runtimeConfig: {
    // Will be available at runtime
    cosmosdbKey: process.env.COSMOSDB_KEY,
    cosmosdbEndpoint: process.env.COSMOSDB_ENDPOINT
  }
})

Create the server API routes

We’ll start by creating the server routes which will access our Azure CosmosDB database and provide an API that can be accessed from our Nuxt pages. Create the file /server/api/Items/index.js which will contain our server route code, and add the logic to handle the request.

import { CosmosClient } from "@azure/cosmos";

export default defineEventHandler(async (event) => {
  const config = useRuntimeConfig(event)
  const client = new CosmosClient({ endpoint: config.cosmosdbEndpoint, key: config.cosmosdbKey });
  const method = event.node.req.method;
  const database = client.database("SWAStore");
  const container = database.container("Items");

  if (method === "GET") {
    try {
      const { resources } = await container.items.readAll().fetchAll();
      return resources;
    } catch (error) {
      setResponseStatus(event, 500);
      return `Error retrieving items from the database: ${error.message}`;
    }
  }
  // [ POST, PUT AND DELETE ENDPOINTS OMITTED FOR SIMPLICITY, AVAILABLE IN SOURCE CODE ]
  else {
    setResponseStatus(event, 405);
    return "Method Not Allowed";
  }
});

We now have CRUD endpoints for our Items, with the above snippet showing the GET endpoint and the POST, PUT, and DELETE implementations available in the source code. We can access our GET Items at http://localhost:3000/api/Items, which will return an array of our items.

Screenshot of GET request to /api/Items returning our array of items

The rest of the CRUD endpoints for Sales entities will be available in the source code accompanying this article.

Create the pages in the Nuxt app

Now that we have an API for our Items and Sales, we can use these API endpoints within our Nuxt frontend application to view and edit the sales and items of our e-commerce store. Start by creating a file for the Items page at /pages/Items/index.vue with the following contents:

<template>
  <div>
    <div>
      <div v-for="item in items" :key="item.id">
        <div>
          <div>
            #{{ item.id }} – {{ item.title }}
          </div>
          <div>${{ item.price }}</div>
        </div>
        <div>
          <NuxtLink :to="`/items/edit/${item.id}`">Edit</NuxtLink>
          <button @click="handleDelete(item.id)">Delete</button>
        </div>
      </div>
    </div>
    <div>
      <NuxtLink to="/items/create">Create New Item</NuxtLink>
    </div>
  </div>
</template>

<script setup>
const { data: items, refresh: refreshItems } = await useFetch("/api/Items");

const handleDelete = async (itemId) => {
  try {
    // $fetch throws on non-2xx responses, so failures land in the catch block
    await $fetch(`/api/Items/${itemId}`, {
      method: "DELETE",
    });
  } catch (error) {
    console.error("Error deleting item:", error);
  }
  // Trigger re-fetch after deletion
  await refreshItems();
};
</script>

This page now presents our Items list within our application, by calling our Items API and displaying all items. We also added delete functionality, and we can further iterate on this application to add CRUD access for the Items and the Sales.

Screenshot of our full-stack Nuxt application with CRUD functionality

With our full-stack application complete, we can configure our application to make use of the rendering mode we want to leverage.

Configuring server-side rendering

By default, Nuxt.js provides universal rendering for every page, without additional configuration changes. This means that page requests that come from an external source, such as Google, will be rendered server-side, providing our application with better search engine optimization. Page requests that come from another page in our Nuxt application will make the fetch requests to our backend APIs instead, providing a smoother application experience. Universal rendering provides the best of both worlds, improved search engine optimization and initial application load, while providing SPA-level fluidity across pages.

Inspect the network tab in your browser to see this for yourself: fresh page loads respond with HTML that already contains the data, whereas page navigations within the application make API calls! Alternatively, we can configure the rendering mode within Nuxt to use server-side rendering or client-side rendering as we prefer.

Deploying our Nuxt application to Azure Static Web Apps

We can easily deploy our Nuxt application to Azure Static Web Apps with the zero-configuration deployment. We’ll start by creating a GitHub repository for the nuxt-swa-full-stack-app project, which contains our Nuxt app.

Once the GitHub repository has been created, we can navigate to the Azure Portal and create a new Static Web Apps resource. In the deployment details, we must select the Nuxt build preset. This will create a GitHub Actions workflow file that builds and deploys our application to our Static Web Apps resource with the proper configuration.

Finally, we set our database environment variables for our Azure Static Web Apps resource. From the Azure Static Web Apps resource in the Azure Portal, we navigate to Environment variables and set NUXT_COSMOSDB_KEY and NUXT_COSMOSDB_ENDPOINT. By convention, Nuxt automatically overrides the runtimeConfig values cosmosdbKey and cosmosdbEndpoint with these variables at runtime.
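To make the naming convention concrete, here is a minimal sketch of how a top-level runtimeConfig key maps to its environment variable name. The helper `runtimeConfigEnvName` is hypothetical and only approximates the convention for simple camelCase keys; it is not Nuxt's own implementation.

```typescript
// Hypothetical helper: derive the NUXT_-prefixed environment variable name
// that corresponds to a camelCase runtimeConfig key.
function runtimeConfigEnvName(key: string): string {
  // Insert an underscore at each lowercase-to-uppercase boundary: cosmosdbKey -> cosmosdb_Key
  const snake = key.replace(/([a-z0-9])([A-Z])/g, "$1_$2");
  return `NUXT_${snake.toUpperCase()}`;
}

console.log(runtimeConfigEnvName("cosmosdbKey"));      // NUXT_COSMOSDB_KEY
console.log(runtimeConfigEnvName("cosmosdbEndpoint")); // NUXT_COSMOSDB_ENDPOINT
```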

And here is our final full-stack Nuxt application on Azure Static Web Apps: https://happy-beach-029f0310f.5.azurestaticapps.net/items

Conclusion

We’ve finally built and deployed a full-stack Nuxt application with universal/server-side rendering to Azure Static Web Apps! This sample demonstrates how we can benefit from Nuxt’s zero-configuration deployments to Azure Static Web Apps and leverage Azure Static Web Apps’ distributed content host and serverless managed functions backends. Azure Static Web Apps also provides built-in preview environments and authorization to take this application to the next step, so make sure to try those out!

Get started deploying full-stack web apps for free on Azure with Azure Static Web Apps!

Microsoft Tech Community – Latest Blogs –Read More

How to use Comments as Prompts in GitHub Copilot for Visual Studio

GitHub Copilot is a coding assistant powered by Artificial Intelligence (AI), which can run in various environments and help you be more efficient in your daily coding tasks. In this new series of content, we will show you how GitHub Copilot works in Visual Studio specifically and how it helps you be more productive.

In the second short video in this series, my colleague Bruno Capuano will use comments to trigger GitHub Copilot to generate code directly inline in the current file.

In case you are not sure, check how to install GitHub Copilot for Visual Studio in the first article of this series.

What’s interesting with GitHub Copilot for Visual Studio is that it’s not just suggesting code, but it is also suggesting completion for your comments. This can be quite useful when you write documentation, for example.

In the example, Bruno is typing a comment looking like this:

// function to get the year of birth

Immediately Copilot jumps to action and proposes to complete the comment as follows:

// function to get the year of birth *from the age*

(Note: in this example I added *stars* around the suggested code. In the Visual Studio IDE, the suggestion would appear greyed out).

To accept the suggestion and add it to the code, simply press the Tab key. Then, if you press Enter, Copilot will suggest some code. Depending on your internet connection, this can be immediate or take a couple of seconds. The code suggestion is also added inline and greyed out, and here too you can accept it by pressing the Tab key.
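As a purely illustrative sketch, this is the kind of code that a comment prompt like the one above might lead to. It is not actual Copilot output, it is written in TypeScript rather than the language used in the video, and the name `getYearOfBirth` and its optional `currentYear` parameter are assumptions made for the example.

```typescript
// function to get the year of birth from the age
function getYearOfBirth(
  age: number,
  currentYear: number = new Date().getFullYear()
): number {
  // Simple subtraction; ignores whether this year's birthday has already passed.
  return currentYear - age;
}

console.log(getYearOfBirth(30, 2024)); // 1994
```

Note that the result can be off by one year if the person has not yet had their birthday this year, which is exactly the kind of detail worth checking before accepting a suggestion.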

Validating the output

The most important concept to keep in mind when dealing with Artificial Intelligence is that the user (that’s you) should always validate the output of the model. You have the opportunity to check the Copilot output when the code is added and greyed out, or after you press the Tab key. I often accept the suggestion and then go back to the generated code to change small details to “make the code mine” or even to fix it.

GitHub Copilot is not a compiler!

What this means is that the code generated by GitHub Copilot can very well fail compilation, leaving you, the Pilot in charge, responsible for checking and correcting the code.

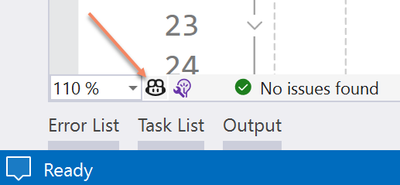

Disabling completion

Note that you might not always want Copilot to suggest completions in your code files. It’s quite easy to deactivate it, either for all code files, or even just for specific files such as markdown or C#. Simply click on the small Copilot icon on the Visual Studio taskbar and then select the appropriate option.

More information

For more information, check our collection with resources here. Also, you can see the full length video here. Stay tuned to this blog for more content posted regularly. And of course, you can also subscribe to our YouTube channel to get more video content!

Azure Monitor: Create Dedicated Clusters Using Any Commitment Tier

Hello readers,

You might have noticed that the Azure Monitor product group has announced support for any existing commitment tier, including the smaller 100, 200, 300, and 400 GB/Day tiers, for Azure Monitor Logs Dedicated Clusters. The official announcement went live on January 25, 2024 and can be found HERE.

As a short recap, an Azure Monitor Logs Dedicated Cluster might be required if you would like to use one or more of the capabilities listed below:

Customer-managed keys – Encrypt cluster data using keys that you provide and control.

Lockbox – Control Microsoft Support engineer access requests to your data.

Double encryption – Protect against a scenario where one of the encryption algorithms or keys may be compromised. In this case, the extra layer of encryption continues to protect your data.

Cross-query optimization – Cross-workspace queries run faster when workspaces are on the same cluster.

Cost optimization – Link your workspaces in the same region to a cluster to apply the commitment tier discount to all workspaces, even those whose ingestion is too low to qualify for a commitment tier discount on their own.

Availability zones – Protect your data from datacenter failures by relying on datacenters in different physical locations, equipped with independent power, cooling, and networking. The physical separation in zones and independent infrastructure makes an incident far less likely since the workspace can rely on the resources from any of the zones. Azure Monitor availability zones covers broader parts of the service and when available in your region, extends your Azure Monitor resilience automatically. Azure Monitor creates dedicated clusters as availability-zone-enabled (isAvailabilityZonesEnabled: ‘true’) by default in supported regions. Dedicated clusters Availability zones aren’t supported in all regions currently.

Ingest from Azure Event Hubs – Lets you ingest data directly from an event hub into a Log Analytics workspace. A dedicated cluster lets you use this capability when the combined ingestion from all linked workspaces meets the commitment tier.

As per the announcement, configuration is first available through the Clusters – Create Or Update REST API. The Microsoft documentation also has good examples of cluster provisioning using the Azure portal, Azure CLI, PowerShell, and the REST API.

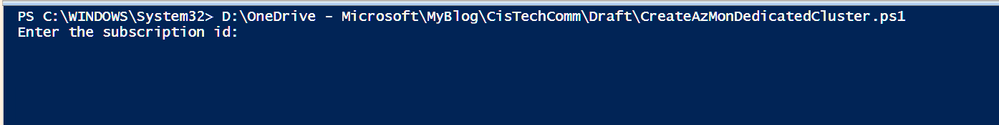

To make your life easier, I created a PowerShell script that uses REST API calls to create a Dedicated Cluster with any commitment tier, starting with the lowest level of 100 GB/Day.

Let’s have a look at the information you need before running the script:

An account with at least the Log Analytics Contributor built-in role permissions

The SKU capacity or commitment tier you would like to use

The subscription where you want to create the cluster

The location in which the cluster will be created. Remember that you can link Log Analytics workspaces to a dedicated cluster only if they are in the same region

The resource group in which to create the dedicated cluster

With this information in hand, you can start creating a PowerShell script that:

Asks for all the above information

Makes the connection to Azure

Retrieves the authentication token

Creates the cluster

Not really happy with creating the above-mentioned PowerShell script? Don’t worry, I have one created for you. Here is the source code:

<#
.SYNOPSIS
    This sample script is designed to ease the creation of Azure Monitor Logs Dedicated Cluster using any supported/available tier.

.DESCRIPTION
    This sample script is designed to ease the creation of Azure Monitor Logs Dedicated Cluster using any supported/available tier.
    It will ask for the following information during the execution:
    - Subscription Id
    - Resource group
    - Azure Monitor Logs Dedicated Cluster name
    - Azure Monitor Logs Dedicated Cluster commitment tier

    Once all the necessary information has been entered, the script will log you in and retrieve a list of Azure locations to deploy
    the resource in. Select your preferred location from the grid to continue with the execution and resource creation.

.REQUIREMENTS
    The following PowerShell modules are required:
    - AZ.ACCOUNTS
    - AZ.RESOURCES

.NOTES
    AUTHOR: Bruno Gabrielli
    LASTEDIT: March 5th, 2024
    - VERSION: 1.0 // March 5th, 2024
        - First version
#>

# Forcing use of TLS 1.2
[System.Net.ServicePointManager]::SecurityProtocol = [System.Net.SecurityProtocolType]::Tls12

# Reading input values
[string]$subscriptionId = Read-Host "Enter the subscription id"
[string]$resourceGroup = Read-Host "Enter the resource group name"
[string]$clusterName = Read-Host "Enter the Dedicated cluster name"
[string]$sku = Read-Host "Enter the Dedicated cluster commitment tier (only the size number)"

# Authenticating to Azure and setting the context on the selected subscription
Connect-AzAccount
Set-AzContext -subscriptionId "$subscriptionId"

# Getting Azure Location where to deploy the cluster
$selectedLocation = (Get-AzLocation | Select-Object -Property DisplayName, Location | Out-GridView -OutputMode Single -Title "Select the region to deploy the resource in").Location

# Retrieving bearer token to be used for REST API call authentication
$bearerToken = (Get-AzAccessToken).Token

# Assembling body based on the input values
$body = @"
{
    "identity": {
        "type" : "systemAssigned"
    },
    "sku": {
        "name" : "capacityReservation",
        "Capacity" : $sku
    },
    "properties" : {
        "billingType" : "Cluster"
    },
    "location" : "$selectedLocation"
}
"@

# Setting variables and constants
$method = "PUT"
$contentType = "application/json"
$headers = @{"Authorization" = "Bearer $bearerToken"}
$uri = "https://management.azure.com/subscriptions/$subscriptionId/resourcegroups/$resourceGroup/providers/Microsoft.OperationalInsights/clusters/$($clusterName)?api-version=2022-10-01"

# Create Cluster
$createResponse = Invoke-WebRequest -Uri $uri -Method $method -ContentType $contentType -Headers $headers -Body $body -UseBasicParsing
return $createResponse.StatusCode

<#
## USE THESE LINES BELOW TO CHECK FOR RESOURCE PROVISIONING STATUS

#Get cluster provisioning state
$getClusterProvisioningState = Invoke-WebRequest -Uri $uri -Method "GET" -Headers $headers -UseBasicParsing
$getClusterProvisioningStateDetails = $getClusterProvisioningState | ConvertFrom-JSON
Write-Host "Resource provisioning status == $($getClusterProvisioningStateDetails.Properties.provisioningState)"
#>

Run the script and provide the necessary information as prompted by PowerShell (in this example I am going to create a 100GB/Day instance):

Once all the information has been entered, the script will present a grid view with all the Azure regions so you can select the one you would like to use and click OK:

The script will continue with the execution and, once done, return HTTP status code 202, which means the request was accepted.
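For readers who prefer to see the request outside of PowerShell, the following TypeScript sketch assembles the same URI and JSON body that the script sends. The function name `buildClusterRequest`, the `ClusterRequest` type, and the sample argument values are hypothetical; authentication and the actual HTTP call are deliberately left out.

```typescript
// Describes the PUT request the script issues (hypothetical type name).
interface ClusterRequest {
  uri: string;
  method: "PUT";
  body: string;
}

// Builds the same URI and body as the PowerShell script, without sending anything.
function buildClusterRequest(
  subscriptionId: string,
  resourceGroup: string,
  clusterName: string,
  capacityGbPerDay: number,
  location: string
): ClusterRequest {
  const uri =
    `https://management.azure.com/subscriptions/${subscriptionId}` +
    `/resourcegroups/${resourceGroup}` +
    `/providers/Microsoft.OperationalInsights/clusters/${clusterName}` +
    `?api-version=2022-10-01`;

  const body = JSON.stringify({
    identity: { type: "systemAssigned" },
    sku: { name: "capacityReservation", Capacity: capacityGbPerDay },
    properties: { billingType: "Cluster" },
    location,
  });

  return { uri, method: "PUT", body };
}

// Placeholder values for illustration only.
const request = buildClusterRequest("my-subscription-id", "my-rg", "my-cluster", 100, "westeurope");
console.log(request.uri);
```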

Provisioning this type of resource takes a considerable amount of time (a few hours), so from this point on you just have to sit back, relax, and wait. Of course, you can check the provisioning status every now and then by running the following commands from the same PowerShell prompt you used to invoke the cluster creation:

#Get cluster provisioning state
$getClusterProvisioningState = Invoke-WebRequest -Uri $uri -Method "GET" -Headers $headers -UseBasicParsing
$getClusterProvisioningStateDetails = $getClusterProvisioningState | ConvertFrom-JSON
Write-Host "Resource provisioning status = $($getClusterProvisioningStateDetails.Properties.provisioningState)"

Once the value of provisioningState changes to Succeeded, it means that your cluster has been successfully created and you can start linking your workspaces.
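The check itself boils down to reading one property from the JSON returned by the GET call. A minimal TypeScript sketch of that logic follows; the helper names and the sample response strings are made up for illustration, and a real response contains many more fields.

```typescript
// Extracts properties.provisioningState from a cluster GET response body.
function provisioningStateOf(responseBody: string): string | undefined {
  const parsed = JSON.parse(responseBody) as {
    properties?: { provisioningState?: string };
  };
  return parsed.properties?.provisioningState;
}

// True only when the cluster reports the Succeeded state.
function isProvisioned(responseBody: string): boolean {
  return provisioningStateOf(responseBody) === "Succeeded";
}

// Made-up sample bodies, trimmed to the single field of interest.
const stillWorking = '{"properties":{"provisioningState":"Creating"}}';
const done = '{"properties":{"provisioningState":"Succeeded"}}';
console.log(isProvisioned(stillWorking), isProvisioned(done));
```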

REMEMBER: this script is provided AS IS, so do not forget to test it thoroughly.

Curious to see all the details about your cluster, including linked workspaces with their settings, the data ingested by each of them, the total daily ingestion for the cluster, and an estimation of chargeback? We have a nice workbook for you in the Azure Monitor Workbook gallery.

This is an example of what the workbook looks like:

With that said, I can only close by saying: happy linking and good saving!

Disclaimer

The sample scripts are not supported under any Microsoft standard support program or service. The sample scripts are provided AS IS without warranty of any kind. Microsoft further disclaims all implied warranties including, without limitation, any implied warranties of merchantability or of fitness for a particular purpose. The entire risk arising out of the use or performance of the sample scripts and documentation remains with you. In no event shall Microsoft, its authors, or anyone else involved in the creation, production, or delivery of the scripts be liable for any damages whatsoever (including, without limitation, damages for loss of business profits, business interruption, loss of business information, or other pecuniary loss) arising out of the use of or inability to use the sample scripts or documentation, even if Microsoft has been advised of the possibility of such damages.

Memorable Community-Led Microsoft Mesh Launch Events

Microsoft Mesh is a technology that transforms remote interactions, making them feel akin to face-to-face experiences and elevating productivity, digital presence, and interactivity to the next level. Anyone can elevate their collaborative experience with immersive 3D spaces within Microsoft Teams. It enables dynamic team meetings, brainstorming sessions, and networking events, tailored for both large enterprises and small businesses, offering a distinctive platform for engagement and connection.

In honor of Microsoft Mesh’s launch on January 24, 2024, two Mixed Reality MVPs in Germany, Zaid Zaim and Christian Glessner, orchestrated influential events that resonated with both local and global tech communities in partnership with ignore gravity, Hololux, Malt Community, iLoveMesh, Metaverse Playground, and Unity Developer community Germany.

January 30, 2024: Beyond Reality – Metaverse and AI Developers Hangout {Berlin}

The event served as a vibrant platform for artists, game developers, and technology and AI experts to engage in enlightening dialogues and networking focused on the metaverse and AI realms. Featuring insightful sessions and expert speakers, including Christian Heilmann, Marc Plogas, Alexander Wachtel, Christoph Spinger, and Norbert Nemec, the gathering highlighted the potential of XR and AI technologies.

MVP Zaid Zaim and Norbert collaborated to demonstrate Microsoft Mesh, redefining the hybrid work landscape with XR collaborative experiences, immersive workspaces, avatars, spatial audio, and interactive tools. They showcased the technology’s ability to transform remote interactions into engaging and shared environments and provided insights into the developer workflow with Unity demos for creating effective interactive hybrid work solutions.

As an organizer, Zaim shares his insights gained from engaging with the participants, stating, “In addition to the main event program I enjoyed the side conversations with participants learning about projects and initiatives they drive. One of the participants is an entrepreneur who is developing a VR App for fashion, and it was fun to brainstorm how she can demonstrate her art in technologies like Microsoft Mesh.”

Zaim says, “XR/Metaverse and AI are currently the most trending topics,” and explains the impact this event had on the participants, “providing a foundation for people coming from these fields is highly important, enabling space for discussion and interaction between both areas. I love to follow this path: Inspire, Learn, Apply, and Experience!”

He continues, “This event offered content from experts from these areas. Participants had specific takeaways from the different talks and learned and connected with new friends. I enjoy supporting those interested and applicable to jump into the Microsoft technology stack. Finally, there are cool demos that people can try and experience. I felt very happy when my community members and speakers told me that it was worth spending time here!”

Picture Credits: Fares Al Shawaf

February 8, 2024: Microsoft Mesh Launch party (Recap)

In a vibrant showcase of immersive collaboration, two Mixed Reality MVPs, Christian Glessner and Zaid Zaim, led an unforgettable afterparty on Neon Planet hosted live in Microsoft Mesh. This event highlighted the endless possibilities of 3D experiences in the workplace and beyond. According to one of the organizers, Christian, this event inspired attendees by showcasing the possibilities available to creators, designers, and developers.

Christian and Zaid, renowned for their innovative spirit, played crucial roles in uniting technology enthusiasts and professionals within the XR/Metaverse communities all around the world. Their commitment to enhancing community engagement radiated throughout the event as attendees delved into the captivating world of Neon Planet, demonstrating the remarkable capabilities of Microsoft Mesh, like Physics and Visual Scripting in facilitating shared virtual environments.

The afterparty not only provided a platform for networking and discovery but also emphasized the significance of community in the digital age. Attendees left with new connections and inspirations, eager for future adventures in virtual spaces. Christian says, “They’ve enjoyed the experience. Instead of just talking about Mesh they were happy to jump in and discover the potential of immersive events firsthand.”

As the iLoveMesh community anticipates more events, the impact of the Launch Party remains clear: when technology meets community, the possibilities are boundless. Due to the visionary leadership of Christian and Zaid, the event stands as a beacon for future explorations in the metaverse, showcasing the power of collective innovation and engagement.

Picture Credits: Carlos Austin

Zaid and Christian both anticipate reaching and assisting a larger audience in the future. Zaid says, “I’m looking forward to scaling up these events and multiply hosting these in different countries and cities and supporting tech communities to master their jump into XR and AI. I very much enjoy giving talks and workshops about these topics and am thrilled to engage further with topics NGOs and Women in Tech.”

Christian also shares his outlook on current and future community activities: “I’m just on my iLoveMesh World Tour where I engage communities in Thailand, Malaysia, and Singapore.”

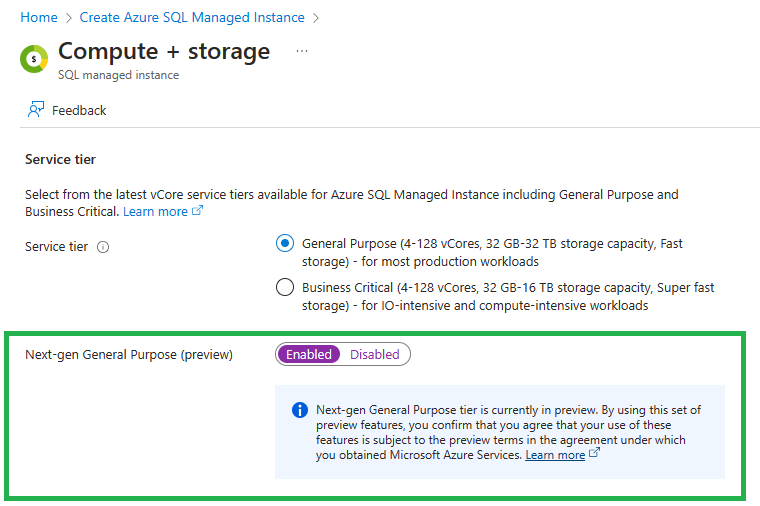

Introducing Azure SQL Managed Instance Next-gen GP

We are excited to announce the public preview of the Next-gen General Purpose (GP) service tier for Azure SQL Managed Instance (SQL MI). Next-gen GP brings significantly improved performance and scalability to power up your existing Azure SQL Managed Instance fleet and help you bring more of your mission-critical SQL workloads to Azure.

What is Azure SQL Managed Instance Next-gen GP?

Under the hood, the Next-gen GP is built using the latest technology from Azure Storage: premium managed disks. This upgrade enables major enhancements in the areas of storage performance, instance scalability and cost optimization, greatly improving the value you’re getting for your money!

Starting today, Next-gen GP is available in Preview for both existing and newly created Azure SQL MI GP instances. The upgrade to Next-gen GP is a fully online operation that is easily reversible (if needed), so you can confidently try Next-gen GP on your existing instances. All it takes is one click of a button (two clicks, actually: you also need to click “Apply” for the upgrade process to start). The upgrade process can take a bit of time (data needs to be copied to a new type of storage), but everything happens in the background, and you can use your instance during the upgrade.

Significantly improved storage performance

To deliver maximum storage performance, Next-gen GP uses premium managed disks instead of premium page blobs. Premium managed disks offer significantly lower I/O latency relative to blobs, leading to better performance for all workloads, especially for those heavy on the writes. In addition, Next-gen GP provides more data IOPS and larger transaction log throughput to really make your database applications sing!

Here’s an overview of the performance enhancements in the Next-gen GP:

| Category | Item | Current GP | Next-gen GP | Improvement |
| --- | --- | --- | --- | --- |
| Storage performance | Average I/O latency (approx.) | 5-10 ms | 3-4 ms | 2x lower I/O latency |
| | Max data IOPS | 30-50k IOPS | 80k IOPS | 60% better |
| | Max log throughput | 120 MB/s (instance), 22-65 MB/s (per-DB) | 192 MB/s (instance), no per-DB limits! | 60% better, more flexible |

Support for more storage, vCores, databases and files

Next-gen GP greatly extends the current maximum resource limits, starting with support for 32 TB of storage, up to 500 DBs, a larger maximum of 128 vCores, and a significantly improved limit for the number of files, with 4096 files per database. These new limits will allow you to migrate your more demanding workloads, or to consolidate some of your existing workloads into fewer instances – the opportunities are endless.

Here’s a quick breakdown of the scalability enhancements in the Next-gen GP:

| Category | Item | Current GP | Next-gen GP | Improvement |
| --- | --- | --- | --- | --- |
| Instance scalability | Max storage | 16 TB | 32 TB | 2x better |
| | Max number of DBs | 100 DBs | 500 DBs | 5x better |
| | Max number of files | 280 per instance | 4096 per database | Huge improvement! |
| | Max number of vCores | 80 vCores | 128 vCores | 60% better |

More flexibility to right-size the resources and optimize your spending

Next-gen GP offers significantly more sizing flexibility, helping you optimize the hardware configuration and performance levels for your database workloads.

First off, performance limits are shared across the Next-gen GP instance, freeing you from having to manage database file sizes to optimize your performance (which is the case with the current SQL MI GP). The shared performance quotas are much easier to use, and this model is familiar to the existing SQL Server users (be it on-prem, in VMs, or in SQL MI BC).

In addition, Next-gen GP offers 2x the number of vCore choices making it more cost-effective to achieve the required resource levels for your workload.

Finally, Next-gen GP introduces the ability to further improve performance by requesting additional IOPS via the new IOPS slider. The following section goes into more detail on the IOPS slider.

| Category | Item | Current GP | Next-gen GP | Improvement |
| --- | --- | --- | --- | --- |
| Resource flexibility | Performance limits | Separate limits for each file | Shared limits for the instance | Easier to use, familiar model |
| | Number of vCore sizes | 8 click stops | 16 click stops | 2x better |
| | Independent sizing of I/O performance and size | N/A | Slider for additional IOPS | New option!! |

Further improve database performance with the IOPS slider

Next-gen GP allows you to provision additional IOPS on top of the baseline I/O performance provided by the service out of the box. The additional IOPS are provisioned via the new IOPS slider:

Here’s how the IOPS provisioning works in the Next-gen GP:

To provide great performance out-of-the-box, each instance gets a certain amount of IOPS included by default, and these “included IOPS” are free of charge! Each instance gets 3 IOPS per GB of storage for free, with a minimum of 300 IOPS.

The maximum amount of IOPS is limited by the number of vCores configured for the instance. The limit is 1600 IOPS per vCore, with a maximum of 80,000 IOPS.

Here’s how this could look in practice for our sample Next-Gen GP instance from the picture above (our instance is using 8 vCores of compute and 2400 GB of storage):

Included (free) IOPS: 2400 GB of storage x (3 IOPS per GB) = 7200 IOPS included for free

Maximum possible IOPS: 8 vCores x (1600 IOPS per vCore) = 12800 IOPS maximum

This means we can use the IOPS slider to provision up to 5600 additional IOPS
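The same arithmetic can be sketched in a few lines of Python. This is only an illustration of the rules quoted above, not an Azure API: the constant and function names are made up for this example, and clamping the included IOPS to the vCore-based maximum is an assumption, since the post does not say what happens when the storage-based figure exceeds that cap.

```python
from dataclasses import dataclass

# Limits quoted in the post. The names are illustrative, not an Azure API.
INCLUDED_IOPS_PER_GB = 3        # free IOPS per GB of storage
MIN_INCLUDED_IOPS = 300         # floor for the free IOPS
MAX_IOPS_PER_VCORE = 1600       # cap per configured vCore
MAX_IOPS_PER_INSTANCE = 80_000  # overall cap per instance


@dataclass(frozen=True)
class IopsBudget:
    included: int  # IOPS that come free with the instance
    maximum: int   # highest total IOPS the instance can be configured for

    @property
    def additional(self) -> int:
        """IOPS that can still be added with the IOPS slider."""
        return max(0, self.maximum - self.included)


def iops_budget(vcores: int, storage_gb: int) -> IopsBudget:
    """Apply the included-IOPS and maximum-IOPS rules to one instance."""
    maximum = min(MAX_IOPS_PER_INSTANCE, MAX_IOPS_PER_VCORE * vcores)
    included = max(MIN_INCLUDED_IOPS, INCLUDED_IOPS_PER_GB * storage_gb)
    # Assumption: included IOPS never exceed the vCore-based maximum.
    included = min(included, maximum)
    return IopsBudget(included=included, maximum=maximum)


# The sample instance from the post: 8 vCores and 2400 GB of storage.
sample = iops_budget(vcores=8, storage_gb=2400)
print(sample.included, sample.maximum, sample.additional)
```

For the sample instance this reproduces the figures above: 7200 included IOPS, a 12800 IOPS maximum, and 5600 IOPS available through the slider.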

Here’s a chart that illustrates the options for provisioned IOPS for an 8-vCore Next-gen GP instance, depending on instance storage size:

The additional IOPS improve the performance of our workload, but come with extra charge, which we’ll cover in the following section.

One final remark: changing the amount of additional IOPS for your instance is an online scaling operation, so you can leverage this to your advantage. Need some extra IO performance for the end-of-month processing, or for an occasional bulk-load of a large quantity of data? IOPS slider is your friend!

Next-gen GP pricing

The compute and storage pricing remains the same as with the current GP tier. Moreover, any Reserved Instance pricing deals you have purchased for the current GP service tier will automatically apply for the Next-gen GP as well. This means that with Next-gen GP you are getting significantly improved performance for the same price!

However, if you’d like to provision additional IOPS for your instance using the IOPS slider, those additional IOPS come at an extra cost of $0.038 per IOPS (East US prices, 3/21/2024).

Let’s push the IOPS to max for our new instance and examine the costs:

| SQL MI GP pricing (East US prices, 3/21/2024) | Current GP (IOPS depend on file sizes) | Next-gen GP, baseline (7200 included IOPS, no additional IOPS) | Next-gen GP, max IOPS (7200 included IOPS, 5600 additional IOPS) |
| --- | --- | --- | --- |
| Compute cost (8 vCores, license included, premium-series HW) | $1611.64 | $1611.64 | $1611.64 |
| Storage cost (2400 GB x $0.115/GB, 32 GB free of charge) | $272.32 | $272.32 | $272.32 |
| Additional IOPS cost (5600 IOPS x $0.038/IOPS) | – | – | $212.80 |
| TOTAL (per month) | $1883.96 | $1883.96 | $2096.76 |
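The totals in the pricing comparison can be reproduced with a short calculation. The sketch below is illustrative only: the function and constant names are invented for this example, the prices are the East US figures quoted in this post (3/21/2024), and the compute cost is taken as a fixed input rather than derived from a per-vCore rate.

```python
from decimal import Decimal

# East US prices quoted in the post (3/21/2024). Names are illustrative.
STORAGE_PRICE_PER_GB = Decimal("0.115")
FREE_STORAGE_GB = 32
ADDITIONAL_IOPS_PRICE = Decimal("0.038")
COMPUTE_COST_8_VCORES = Decimal("1611.64")  # license included, premium-series HW


def monthly_cost(storage_gb: int, additional_iops: int,
                 compute_cost: Decimal = COMPUTE_COST_8_VCORES) -> Decimal:
    """Estimate the monthly bill: compute + billable storage + extra IOPS."""
    billable_gb = max(0, storage_gb - FREE_STORAGE_GB)
    storage_cost = billable_gb * STORAGE_PRICE_PER_GB
    iops_cost = additional_iops * ADDITIONAL_IOPS_PRICE
    total = compute_cost + storage_cost + iops_cost
    return total.quantize(Decimal("0.01"))


# Baseline Next-gen GP instance, and the same instance with the slider at max.
print(monthly_cost(storage_gb=2400, additional_iops=0))
print(monthly_cost(storage_gb=2400, additional_iops=5600))
```

`Decimal` is used instead of floats so that the cents match the table exactly; the only difference between the two Next-gen GP columns is the 5600 x $0.038 term.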

Here’s how these costs are represented in the “Estimated costs” panel in Azure Portal:

One final point: billing for additional IOPS for Next-gen GP will start on 4/1/2024. There will be no charges for additional IOPS in March 2024.

What about the Business Critical service tier?

Business Critical (BC) service tier is an important part of the Azure SQL Managed Instance value prop, and it’s here to stay for the long term! We recently released a broad set of performance and scalability enhancements for the BC tier that make it even better suited for your most demanding and critical workloads.

This blog summarizes the recent BC enhancements, many of which are now made available to GP customers with the Next-gen GP tier.

Next-gen GP Availability

Next-gen GP requires the November 2022 Feature Wave (in short: FW) as a prerequisite.

FW is already available for all SQL MI instances created in new subnets. This means that you can try the Next-gen GP today if you have the flexibility to create a new subnet for your instance.

The rollout of FW across the existing fleet of SQL MI instances is well under way and is on track to be completed over the course of the next several months. So, if your instances have not yet received the FW update, they should receive it quickly, opening up the option to upgrade them to the Next-gen GP!

Summary

Next-gen GP brings a ton of exciting new innovation into Azure SQL Managed Instance, making sure it’s ready for more of your resource-intensive workloads. So try the Next-gen GP to maximize your performance and further improve your cost efficiency.

If you’re new to Azure SQL Managed Instance, get started today with our free offer – it gives you access to one instance that supports up to 100 databases for up to one year, making it a great way to test-drive our service.

To learn more about Azure SQL Managed Instance, visit our documentation and YouTube channel.

Microsoft Tech Community – Latest Blogs –Read More

System Center 2019 Update Rollup 6

We are delighted to announce the release of Update Rollup 6 for System Center 2019. As System Center 2019 is approaching the end of mainstream support next month, this Update Rollup comes with timely enhancements and bug fixes to support your existing configurations. This article gives detailed information on the new enhancements.

Virtual Machine Manager

Arc blade enhancements

We recently introduced Azure management capabilities like Microsoft Defender for Cloud, Azure Update Manager, VM Insights and more for Arc-enabled SCVMM VMs. You can discover guidance on how to get started with Azure Arc-enabled SCVMM and install Arc agents at scale from the Azure Arc blade in the SCVMM product. If you are running Windows Server 2012 and 2012 R2 hosts and VMs, you can also review the forward-looking options, because these operating systems went out of support on October 10, 2023. Read more about Arc-enabled SCVMM here.

Refer to the KB article for additional details on the issues that are fixed with VMM 2019 UR6.

Data Protection Manager

Update Rollup 6 for System Center Data Protection Manager 2019 brings you stability improvements and bug fixes along with the following new features:

Support for Windows and Basic SMTP Authentication for sending DPM email reports and alerts.

List online recovery points for a data source along with the expiration dates.

Refer to the KB article for additional details on the issues that are fixed with DPM 2019 UR6.

Operations Manager

SCOM 2019 UR6 is available with a rich set of bug fixes, security enhancements and Unix/Linux/Network monitoring fixes and changes. Refer to this KB article for details.

Orchestrator

Web Console filtering is now improved for both Job and Instance views. The initial load time of the navigation tree on the Web Console is reduced thanks to optimizations made in the API authorization cache. Refer to the KB article for complete details on the issues that are fixed with SCO 2019 UR6.

We are committed to delivering new features and quality updates with UR releases at a regular cadence. For any feedback and queries, you can reach us at systemcenterfeedback@microsoft.com.

Azure SQL Database Watcher and Azure Data Explorer Integration

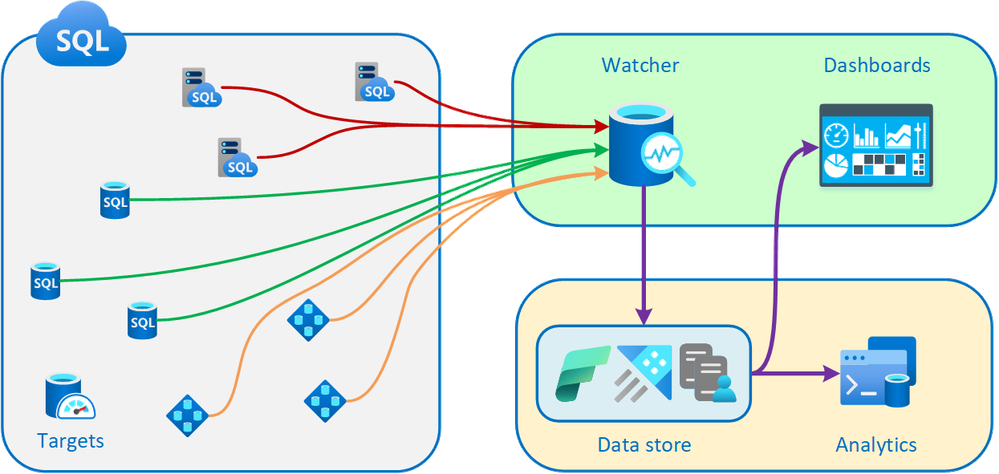

Azure SQL family users can now take advantage of an enhanced monitoring solution for their databases and leverage Azure Data Explorer or the Microsoft Fabric integration. With the introduction of the new Database Watcher for Azure SQL (preview), users gain access to advanced monitoring capabilities.

Database watcher is a new managed monitoring solution for database services in the Azure SQL family. It supports Azure SQL Database and Azure SQL Managed Instance.

Database watcher collects in-depth workload monitoring data to give you a detailed view of database performance, configuration, and health. Monitoring data from the databases, elastic pools, and SQL managed instances you select is collected in near real time into a central data store. To store and analyze SQL monitoring data, database watcher uses Azure Data Explorer. You can also try a free Azure Data Explorer cluster. As an alternative, an Eventhouse KQL database in Fabric Real-Time Analytics is supported as a destination.

Out-of-the-box dashboards in Azure portal provide a single-pane-of-glass view of your Azure SQL estate and a detailed view of each database, elastic pool, and SQL managed instance.

A conceptual diagram of a database watcher deployment, showing the flow of monitoring data from Azure SQL resources to a database watcher. The monitoring data is stored in Azure Data Explorer or Fabric Real-Time Analytics. Dashboards in the Azure portal show you a single-pane-of-glass view across your Azure SQL estate, and a detailed view of each database, elastic pool, and managed instance.

From the database watcher resource page in the Azure portal, you can easily select an Azure Data Explorer cluster and database to stream the data to.

Optimal Data Analysis with KQL (Kusto Query Language)

To analyze collected monitoring data, the recommended method is to use KQL. KQL is optimal for querying telemetry, metrics, and logs. It provides extensive support for text search and parsing, time-series operators and functions, analytics and aggregation, and many other language constructs that facilitate data analysis. You can find examples of analyzing database watcher monitoring data with KQL in the documentation.

Evolution of Azure SQL Database Monitoring

Over the past decade, our customers have emphasized the importance of reliable, low-latency, and comprehensive monitoring for cloud database services. Initially, Azure Monitor metrics and diagnostic telemetry sufficed for many Azure SQL users. However, as large and complex mission-critical applications became prevalent, it became evident that more robust monitoring was necessary. While Azure SQL Analytics and SQL Insights were introduced to address this need, feedback revealed gaps in the monitoring data coverage, an excessively high data latency, and high cost at scale. Despite attempts to improve with SQL Insights, concerns persisted, especially regarding the setup and maintenance of IaaS VMs to monitor PaaS database services. Reliability issues further underscored the need for a better solution.

Thus, Database Watcher was born.

This managed monitoring solution offers extensive data coverage, collecting information from over 70 SQL system views and presenting it directly in the Azure portal. With minimal latency, typically in single-digit seconds, and leveraging Azure Data Explorer or Fabric Real-Time Analytics, Database Watcher empowers users to derive actionable insights from the rich, real-time data it collects.

Summary

The dashboards, complemented by KQL queries, enable you to delve deep into the performance and configuration of your databases. This means you can detect, investigate, and troubleshoot a wide variety of database performance and health issues.

Whether you’re tackling resource bottlenecks or fine-tuning your Azure SQL resources for the best balance of cost and performance, Database Watcher equips you with the insights needed to make informed decisions. It’s your pathway to optimizing your Azure SQL setup for peak efficiency and cost-effectiveness.

Next steps

One effective approach to grasp the potential and the power of Database Watcher is to give it a try yourself. Set up your Azure SQL resources with Database Watcher, explore the dashboards, and start running some queries with KQL.

To read more about Database Watcher, check out the documentation.

Viva Connections Partner Showcase – Accelerator 365

We are excited to share a Partner Showcase story on Accelerator 365. Glyn Clough, Senior Manager at Accelerator 365, highlights their solutions and extensions, which are available to customers from Microsoft AppSource.

Viva Connections is a company-branded employee experience destination that seamlessly integrates news, conversations, and resources within the apps and devices you use daily. It is designed to foster a culture of inclusion, allowing everyone’s ideas and voices to matter, while providing employees with the flexibility to engage and participate from anywhere.

Microsoft AppSource is an online store that contains thousands of business applications and services built by industry-leading software providers. It is a platform where you can browse, try, buy, and deploy cloud-ready solutions for Microsoft Power Platform, Microsoft 365, Dynamics 365, and more, helping you drive your business and get more done with the technology you already have.

Presenters – Glyn Clough (Accelerator 365) & Vesa Juvonen (Microsoft)

Company – Accelerator 365

Covered solutions in the AppSource:

Page Tour by Accelerator 365

Launchpad by Accelerator 365

Stock Price by Accelerator 365

My Emails by Accelerator 365

Poll by Accelerator 365

Alerts by Accelerator 365

Accordion by Accelerator 365

References

Overview of Viva Connections

Viva Connections solutions in the Microsoft AppSource

Overview of Viva Connections Extensibility

Have you created an offering for Viva Connections? Do you have apps available in AppSource? Let us showcase your solutions. Please fill in the following form to connect with us so that we can plan the right model together – https://aka.ms/viva/connections/partnershowcase.

Providing a great employee experience platform with our partner ecosystem 👏

New on Microsoft AppSource: March 8-14, 2024

We continue to expand the Microsoft AppSource ecosystem. For this volume, 143 new offers successfully met the onboarding criteria and went live. See details of the new offers below:

Get it now in our marketplace

Kezzler Connected Products Platform: Kezzler’s cloud-based platform provides unit-level traceability for brands to meet customer and government requirements, improve supply chain control, and gain insights across the entire value chain. The platform is scalable, flexible, and independent of packaging, factory automation, and ERP providers. Kezzler’s patented core technologies give it a competitive advantage in handling massive volumes.

Limitless Digital Workplace News Portal: This offer from IT-Dev integrates Microsoft 365 communication tools, offering predefined templates for corporate news and communication areas. It allows easy and quick content creation, push notifications, and error-reporting functionality. The portal is customizable and offers a base of corporate photos. The company has delivered more than 500 successful digital workplace projects internationally.

RISIKA Risk and Credit App: From Siteshop, the Risika Risk and Credit app for Microsoft Dynamics 365 CRM provides financial insights and credit recommendations for Nordic companies, helping businesses avoid wasting time and resources on prospects who won’t pay on time. The app works for companies in Denmark, Sweden, Norway, and Finland, and requires a separate subscription for Risika API.

Zenya: This offer from Infoland is a quality control and risk management software for healthcare organizations. With more than 20 years of experience, it supports guidelines and standards such as Qmentum, JCI, and ISO 9001. Used by more than 400 healthcare organizations and 1.4 million professionals, it offers insight into risks and opportunities to focus on patient care.

Go further with workshops, proofs of concept, and implementations

Copilot Consulting: This training program from Supremo covers basic concepts of AI and Copilot for Microsoft 365, configuration and customization, practical workshops, advanced features, and support. Participants will learn how to automate tasks, analyze data, and make decisions using Copilot. Technical support and mentoring are also provided.

Copilot for Microsoft 365 Center of Excellence as a Service: Monthly Managed Service: This service from adaQuest guides organizations in deploying, managing, and optimizing Copilot for Microsoft 365. It fosters a culture of continuous learning, collaboration, and technological fluency, positioning businesses at the forefront of digital innovation. This CoE as a Service improves adoption and implementation results for Copilot for Microsoft 365.

Copilot for Microsoft 365 Advisory: SoftwareOne offers a Copilot for Microsoft 365 workshop engagement to assess needs, prioritize scenarios, and define an actionable roadmap. The deliverables include a clear plan for Copilot for Microsoft 365 deployment, understanding of licensing, security, technology, adoption, and governance needs, and a simple readiness plan.

Dynamics 365 Mixed Reality Guides GxP Framework: 6-Week Implementation: Microsoft Dynamics 365 Mixed Reality guides combined with NNIT’s GxP framework ensure compliance and control over the life cycle of SOPs and JIs. The framework reduces errors, standardizes skills, and improves training.

ITVT Quick Start App for Microsoft Dynamics 365 Business Central: 1-Month Implementation: This service from ITVT optimizes business structures for startups and companies. It offers a fully functional system that provides information on cost-saving and logistics optimization. The app has an interface with the Datev system, allowing for easy management of personnel and accounting issues. This offer is available in Belgium, Germany, The Netherlands, and Switzerland.

Microsoft 365 Cloud Accelerator Experience: Workshop: This workshop from Seer Strategic Solutions helps businesses explore the capabilities and benefits of Microsoft 365 products. Led by a Microsoft-certified facilitator, the workshop allows participants to try out different scenarios in a virtual “sandbox” environment and learn how to unlock the potential of Microsoft 365 for their business.

Microsoft 365 Copilot: 4-Week Workshop: Microsoft 365 Copilot is an AI-powered assistant designed to integrate with Microsoft 365 apps, helping businesses delegate basic tasks to AI and improve productivity. Embee Software offers a four-week program to integrate Copilot into businesses, including assessment, technical readiness, and compliance checks. It also provides customized solutions using Microsoft 365 Copilot, Azure OpenAI Service, and GitHub Copilot.

Microsoft 365 Copilot Adoption Accelerator: This service from Fujitsu helps organizations maximize AI potential. It includes deployment, security optimization, training, adoption, and change management. Fujitsu offers additional on-demand services and specializes in adoption and change management. It guides customers to identify potential, prerequisites, and technical gaps for secure and productive use of Copilot for Microsoft 365.

Microsoft 365 Copilot Workshop: 2-Day Workshop: This workshop from Elgon helps organizations assess readiness for AI with Copilot and create an implementation plan. The workshop includes an “Art of the Possible” session showcasing Copilot’s capabilities and a phase to build an actionable plan. The goal is to accelerate the AI and Copilot journey, empower users, increase satisfaction, and drive innovation.

Microsoft 365 Copilot: 3-Day Workshop: This Fujitsu workshop helps organizations unlock the full potential of AI. It includes an assessment phase, a demonstration phase, and a plan development phase. Fujitsu provides additional on-demand services, such as building a business case or cost/benefit analysis. The workshop helps organizations understand AI readiness, envision possibilities, and create a clear, actionable roadmap for integration.

Microsoft 365 Copilot: 1-Day Envisioning Workshop: Click2Cloud offers this AI readiness workshop to help organizations understand the benefits of Copilot and formulate a strategy for adoption. The workshop provides a plan with recommendations and a high-level roadmap for implementation. It is designed for those considering Copilot licensing and aims to assess the value of integrating AI within an organization.

Microsoft 365 Copilot: Security Pre-Settings: Overcast offers a tool designed to optimize productivity and creativity within the Microsoft suite of applications. It offers features such as automating repetitive tasks and real-time collaboration, speeding up workflows, and freeing up time for more strategic tasks. It integrates seamlessly with daily Microsoft 365 applications, improving efficiency. It is available as an add-on plan with specific licensing prerequisites.

Microsoft 365: 12-Day Deployment of Security Basic Services: Cosmos Business Systems proposes a Microsoft 365-based transformation strategy for optimizing digital landscape with a focus on zero trust architecture. The plan includes streamlined mobile and application management with Microsoft Intune, enhanced security with Microsoft Defender, and augmented security measures and user empowerment with Microsoft Entra ID.

Microsoft Copilot for Security: 2- to 4-Hour Workshop: This workshop from Water IT Security & Defense teaches users how to use Microsoft Copilot for Security efficiently and cost-effectively. The workshop is designed to put organizations in the best position to take advantage of the benefits of AI in their day-to-day operations.

Microsoft Dynamics 365 Business Central: 2-Hour Workshop: This two-hour workshop from Knowit is offered to review current solutions and map out complexity. Dynamics 365 Business Central is discussed, with a tailored mapping session based on a questionnaire. The resulting report, which becomes your company’s property, includes recommendations for the way forward.

Microsoft Entra Private Access and Entra Internet Access: Fortify your remote workforce’s security with Sentinel Technologies’ latest jumpstart. We’ll identify prime applications for onboarding to Private Access, configure traffic forwarding policies, and implement conditional access restrictions to secure Microsoft 365 resources in the cloud. This proactive approach ensures a robust defense against evolving cyberthreats.

Migrate to Power BI: This consulting package from DataFactZ helps organizations transition their data analytics and visualization processes to Microsoft Power BI on Azure. The package includes assessment, migration strategy, data migration, integration, training, and ongoing support. Benefits include improved data visualization and analytics capabilities, increased efficiency and productivity, and better decision-making.

Mobile Device Management with Intune: 1-Hour Workshop: Available in German, this workshop from Public Cloud Group helps companies save on infrastructure and increase device security. It covers Microsoft Intune features, potential scenarios, and identifying opportunities for consolidation and improved security. Participants will gain familiarity with Intune, understand potential use cases, and assess benefits and solutions.

Mixed Reality Booster Program for Dynamics 365 Guides: 12-Week Implementation: This NNIT implementation enables organizations to explore the potential of Microsoft Dynamics 365 Guides. The program includes an envisioning workshop, pilot, deployment, platform deployment, and conclusion presentation. NNIT provides end-to-end services and a complete knowledge base for companies in highly regulated industries.

Mixed Reality Booster Program for Remote Assist: 5-Week Implementation: This NNIT implementation offers a four-phase process to explore and realize the benefits of mixed reality within an organization. The program includes an envisioning workshop, pilot, deployment, and achievement phase. NNIT provides end-to-end services, including device procurement, configuration, scaling, user training, and daily support.

Support for Fiscal Printers for Microsoft Dynamics 365: This solution from Retcon enables retail sales orders to be printed on fiscal devices implementing the POSNET protocol. It includes a module that connects standard sales orders to the fiscal service. The solution can be installed on-premises or in the cloud and includes functionalities such as programming headers and footers, defining VAT rates, and automatic daily sales reports. This offer is available in Poland.

Operations Assist: 5-Day Proof of Concept: Argano will demonstrate how Microsoft Store Operations Assist integrates with Microsoft Power Apps and Microsoft Teams to provide a unified view of operations, advanced analytics, personalized recommendations, automation tools, and scalability. It offers a cloud-based AI solution for healthcare, manufacturing, and retail industries. The offering includes a demonstration of a custom solution built using Store Operations Assist.

Power Pages: 2-Week Pilot Design Sprint: Velrada offers a two-week process to develop a lean Microsoft Power Pages solution for customer, vendor, and supplier portals. The engagement includes discovery, design, development, showcase, and refinement activities. The result is a secure and scalable web application that can be deployed quickly. The engagement aims to boost confidence and understanding of Power Pages among internal developers.

Scale Mixed Reality with Center of Excellence: 12-Month Implementation: This NNIT implementation helps organizations implement and adopt Mixed Reality. The framework consists of seven phases, including planning, deployment, and operations. NNIT provides end-to-end services, from procurement to daily support, and has experience in driving Mixed Reality in small and large organizations.

Simple Sales: 1-Month Implementation: Boyer offers a pre-packaged solution for rapid implementation of core tables, views, charts, and data import from Excel. It also includes configuration of OOB dashboard and enabling of Microsoft products. Key benefits include rapid time to value, strong foundation, and extension of current Microsoft products.

Contact our partners

Aptean Food and Beverage ERP Drink – IT Edition for Microbreweries

Boost Your SEO with Online Listings by Yext

CluedIn Master Data Management

Cognizant Call Desk Assistant and Insights

CollectSmart Payments Standard

Copilot Data Governance Readiness with Microsoft Purview

Copilot for Microsoft 365: 4-Week Assessment

Copilot for Microsoft 365: 8-Hour Readiness Assessment

Copilot for Security Readiness Assessment

Copilot Readiness PLUS Tenant Wide Data Oversharing Analysis and Report: 2-Week Assessment

Crave Retail – Smart Fitting Room + Assisted Seller

CS Core-Suite Fashion Connector

Cyberpact – OT Cybersecurity Services

Data Quality App for Non-Profits

Data and AI Services: 1-Hour Briefing

Distillery Workflows – Supply Chain

Dynamics 365 Business Central – Finance Report

Dynamicweb B2B with Business Central

ECHO by Selected Interventions

EduBot – University AI Chatbot Solution

Enhance Customer Interactions with Conversation AI Bot

FactoryStack: Same-Day Implementation

Fluid Eye – Lubricants Emission Simulation

FruitPunch AI Challenge Based Learning

Fusion Chat AI Chatbot and Virtual Agent Solutions

GL Suite Licensing and Credentialing Regulatory Solution

Guidehouse (in)Sight Health Market Advisor

Imperium Email Marketing – SaaS

Imperium Power Platform Consulting – SaaS

Imperium Text Messaging for Dynamics Customer Service – SaaS

Imperium Text Messaging for Dynamics Sales

ISLAA (Investments Surveys Leases Adjustments and Annuities)

MaaS – Mood as a Service, Powered by Maaind

Microsoft 365 Copilot Readiness: 2-Day Assessment and Adoption Workshop

Migration and Assessment – Dynamics 365 Cloud

Mobile Device Management with Microsoft Intune: 2-Day Assessment

Nubovi Cloud Spend Management and Monitoring

Ogi Pro Consulting Services – Identity Management: 2-Week Assessment

OnePact Renewable Energy Management Software

Peppermint CX365 Work Management

POLUMANA Itinerary Planning App

Power Automate: 2-Week Assessment

ProductPlan – Product Management Platform

QuickBooks to Business Central Migration: 4-Hour Readiness Assessment

SaaS Credit Transfer Higher Education

shopfloor.GPT – Industrial AI Assistant for Smart Manufacturing

True Sky Budgeting, Planning, and Forecasting

tyGraph Copilot for Microsoft 365 Analytics

VoyagerAnalytics: 1-Year License, Tier 1

VoyagerAnalytics: 1-Year License, Tier 2

This content was generated by Microsoft Azure OpenAI and then revised by human editors.

AI LAB Finland workshops for customers, spring 2024

AI LAB Finland is a workshop series in which we focus on the benefits and opportunities of generative AI, each time from the perspective of a single business role: sales directors, chief financial officers, customer relationship directors, HR directors, general counsels, and CEOs. During the workshop, you get to discuss and learn about the possibilities of generative AI and hear directly from industry professionals how you can use the tools in your own work.

The workshops last about four hours. They include breakfast provided by Microsoft and, after the workshop, a lunch and networking session for participants. The workshops are built as participatory experiences. You won't just sit and listen: we want to spark discussion and go deeper into the questions that you in particular have about generative AI and how to use it.

NOTE! AI LAB Finland workshops are aimed at people working in company management. The workshops are not technical; instead, we approach AI and its uses from the perspective of the participant's role.

The upcoming workshops for spring 2024 are listed below. Welcome!

April 23, 2024 AI LAB: Communications and marketing directors

To attend this workshop, contact Microsoft's Tapio Hurmerinta by email (v-tapiohu@microsoft.com). In your message, include your industry, your company's name, and your title.

April 24, 2024 AI LAB: Customer relationship directors

To attend this workshop, contact Microsoft's Tapio Hurmerinta by email (v-tapiohu@microsoft.com). In your message, include your industry, your company's name, and your title.

May 10, 2024 AI LAB: General counsels

More information and registration for the workshop are available at this link.

May 21 AI LAB: Chief financial officers

More information and registration for the workshop are available at this link.

May 22 AI LAB: HR directors

To attend this workshop, contact Microsoft's Tapio Hurmerinta by email (v-tapiohu@microsoft.com). In your message, include your industry, your company's name, and your title.

Any other upcoming AI LAB Finland workshops will be added to this page.

Introducing database watcher for Azure SQL

Reliable, in-depth, and at-scale monitoring of database performance has been a long-standing top priority for SQL customers. Today, we are pleased to announce the public preview of database watcher for Azure SQL, a managed database monitoring solution to help our customers use Azure SQL reliably and efficiently.

Managed monitoring for Azure SQL

To enable database watcher, you do not need to deploy any monitoring infrastructure or install and maintain any monitoring agents. You can create a new watcher and start monitoring your Azure SQL estate in minutes.

Once enabled, database watcher collects detailed monitoring data from your databases, elastic pools, and managed instances into a central data store in your Azure subscription. Data is collected with minimal latency – when you open a monitoring dashboard, you see database state as of just a few seconds ago.

Figure 1: A conceptual diagram of a database watcher deployment, showing the flow of monitoring data from Azure SQL resources to a database watcher, its data store, dashboards, and an analytic query console.

Dashboards in the Azure portal show you a single-pane-of-glass view across your Azure SQL estate, and a detailed view of each database, elastic pool, and managed instance.

Figure 2: An estate dashboard in database watcher, shown in dark mode. In this example, each hexagon represents a primary, secondary, or geo-replica of an Azure SQL database.

Figure 3: A partial view of the detailed resource dashboard, showing performance metrics for an Azure SQL database.

Database watcher is powered by Azure Data Explorer, a fully managed, highly scalable data service, purpose-built for fast ingestion and analytics on time-series monitoring data. A single Azure Data Explorer cluster can scale to support monitoring data from thousands of Azure SQL resources. You have an option to use the same core engine in Real-Time Analytics in Microsoft Fabric, an all-in-one SaaS analytics solution for enterprises. Real-Time Analytics seamlessly integrates with other experiences and items in Microsoft Fabric.

Once the SQL monitoring data is in Azure Data Explorer or Real-Time Analytics, it becomes available not just on dashboards in the Azure portal, but also for a wide variety of other uses: you can analyze data on-demand using KQL or T-SQL, build custom visualizations in Power BI and Grafana or use native Azure Data Explorer dashboards, analyze monitoring data in Excel, or export it for integration with downstream systems.
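As a rough illustration of the on-demand KQL analysis mentioned above, the following TypeScript sketch composes a KQL query that averages a CPU metric per database in fixed time bins. The table and column names in the usage example (`example_resource_utilization`, `sample_time_utc`, `avg_cpu_percent`, `database_name`) are hypothetical placeholders, not the actual database watcher dataset schema, and `buildCpuTrendQuery` is a helper invented for this example. Check the data collection and datasets documentation for the real names before running a query like this against your data store.

```typescript
// Sketch only: builds KQL text; it does not connect to Azure Data Explorer.
// All table and column names passed in are assumptions supplied by the caller.
interface CpuTrendOptions {
  table: string;       // dataset (table) name in the data store
  timeColumn: string;  // datetime column holding the sample time
  cpuColumn: string;   // numeric column holding a CPU percentage
  groupColumn: string; // column to group by, such as a database name
  lookback: string;    // KQL timespan literal, e.g. "1h"
  binSize: string;     // KQL timespan literal, e.g. "5m"
}

const IDENTIFIER = /^[A-Za-z_][A-Za-z0-9_]*$/;
const TIMESPAN = /^\d+(d|h|m|s)$/;

function buildCpuTrendQuery(o: CpuTrendOptions): string {
  // Reject anything that is not a plain identifier or a simple timespan,
  // so that caller input cannot change the shape of the query.
  for (const name of [o.table, o.timeColumn, o.cpuColumn, o.groupColumn]) {
    if (!IDENTIFIER.test(name)) {
      throw new Error(`Invalid identifier: ${name}`);
    }
  }
  for (const span of [o.lookback, o.binSize]) {
    if (!TIMESPAN.test(span)) {
      throw new Error(`Invalid timespan: ${span}`);
    }
  }
  return [
    o.table,
    `| where ${o.timeColumn} > ago(${o.lookback})`,
    `| summarize avg_cpu = avg(${o.cpuColumn}) by ${o.groupColumn}, bin(${o.timeColumn}, ${o.binSize})`,
    `| order by ${o.groupColumn} asc, ${o.timeColumn} asc`,
  ].join("\n");
}

// Usage with placeholder names; replace them with names from your data store.
console.log(
  buildCpuTrendQuery({
    table: "example_resource_utilization",
    timeColumn: "sample_time_utc",
    cpuColumn: "avg_cpu_percent",
    groupColumn: "database_name",
    lookback: "1h",
    binSize: "5m",
  })
);
```

The resulting text can be pasted into any KQL query console that is connected to the data store. Validating identifiers before interpolating them is a deliberate choice here, since the query is assembled from strings.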

Database watcher supports all service tiers and SKUs of Azure SQL Database and Azure SQL Managed Instance. This includes vCore and DTU purchasing models, provisioned and serverless compute tiers, single databases and elastic pools, and Hyperscale. It can monitor all types of readable secondary replicas, including high availability replicas, geo-replicas, and Hyperscale named replicas.

To get started and to learn more, review the database watcher documentation:

Overview

Quickstart

Create and configure

Data collection and datasets

Analyze monitoring data

FAQ

A retrospective of database monitoring in Azure SQL

Over the last decade, our customers have consistently told us that reliable, low-latency, and detailed monitoring is a must-have for a cloud database service. In the early days of Azure SQL, Azure Monitor metrics and diagnostic telemetry met database monitoring requirements for many of our customers. But when large and complex mission-critical database applications became common in Azure, it was clear that we needed to do more.

In the following years, we released two monitoring solutions that were intended to bring advanced monitoring capabilities to Azure SQL: Azure SQL Analytics and SQL Insights. However, customer feedback on Azure SQL Analytics highlighted gaps in monitoring data coverage, excessively high data latency, and high cost at scale. SQL Insights improved data coverage to a degree, but the data latency and cost concerns remained. Even stronger feedback on SQL Insights was that customers didn’t want to set up and maintain an IaaS VM to monitor PaaS database services. Reliability problems in Azure SQL Analytics and SQL Insights have affected customers as well. Today, these earlier solutions remain in preview.

We learned from this experience and feedback when designing and building database watcher. This new managed database monitoring solution brings truly in-depth data coverage, collecting data from over 70 SQL system views and visualizing it directly in the Azure portal. Data latency is typically in single-digit seconds or less, not minutes. Azure Data Explorer and Real-Time Analytics bring their advanced analytics capabilities, enabling us, our customers, and partners to develop actionable advice and insights from the rich data that database watcher collects.

Roadmap

The preview we are launching today makes a managed monitoring solution for Azure SQL broadly available to our customers. But our journey is not yet complete. As we make progress toward general availability and beyond, customers can expect the following new features and improvements:

Alerts

Monitoring for SQL Server on Azure VM

Support for an expanded set of Azure regions

An increased limit on the number of monitoring targets per watcher

Configuration and management with PowerShell and Azure CLI

Support for user-assigned managed identity

Database watcher-specific RBAC roles and actions

Configurable data collection

Extended events collection

Manageability improvements for monitoring larger estates

This is not a static list. Our work on database watcher is largely driven by your feedback. We encourage you to tell us what is most important to you in the SQL monitoring space, both in the short term and in the long term.

Your feedback

During the private preview, dozens of Azure SQL customers evaluated database watcher for their monitoring scenarios and provided detailed feedback to help us deliver a functional and reliable database monitoring solution. We are very grateful to every private preview participant – you have all made database watcher better!

Now that database watcher is available in public preview, we invite and encourage all Azure SQL customers worldwide to do the same: set up and use database watcher in your environments, tell us what works well, what needs improvement, and what might be missing. Your feedback is critical to help us deliver a generally available solution that you will like. We are looking forward to hearing from you!

Microsoft Tech Community – Latest Blogs – Read More

Last chance to nominate for POTYA!

The Microsoft Partner of the Year Awards nominations are closing on April 3, 2024. Make sure to get your submissions in!

These awards celebrate partner achievements, cloud technologies, entrepreneurial spirit, and highlight the tremendous work done by partners in various industries and in driving social impact. The Country/Region Partner of the Year Awards recognize partner successes in over 100 countries/regions around the world.

Our partner ecosystem provides value to customers by delivering innovative solutions and services to businesses and local economies around the world, and it’s critical that those solutions and services are built to deliver meaningful outcomes for customers and to create inclusive economic opportunity. We believe technology should be a force for global good and we’re intentional about recognizing partner organizations and technology that is inclusive, trusted, and that enables a more sustainable and equitable future, empowering every person and organization to achieve more.

POTYA site: Awards (microsoft.com)

Get inspired by winners: Partner of the Year Awards Winners (microsoft.com)

Guidelines: https://aka.ms/potya_guidelines

Learn more

