Category: Microsoft


MGDC for SharePoint FAQ: What counts as an object?

1. Overview

When gathering SharePoint data through Microsoft Graph Data Connect (MGDC), you are billed through Azure. You can find the official MGDC pricing information at Pricing – Microsoft Graph Data Connect. As I write this blog, the price to pull 1,000 objects from the available MGDC for SharePoint datasets (Sites, Groups and Permissions) in the US is $0.75, plus the cost for infrastructure like Azure Storage, Azure Data Factory or Azure Synapse.

With all that said, there is still a question that comes up frequently. What counts as an object? Well, that’s what we will cover in this blog post.

2. What MGDC provides

What MGDC delivers to you are datasets. After you run a pipeline with a copy data action, you end up with a collection of files in your Azure Storage account. Each of these files will contain objects. It’s an interesting file format that contains text using JavaScript Object Notation, also known as JSON.

Here is what the contents of the file would look like:

{"property1":"valuea","property2":"valueb","property3":"valuec"}
{"property1":"valued","property2":"valuee","property3":"valuef"}
{"property1":"valueg","property2":"valueh","property3":"valuei"}
{"property1":"valuej","property2":"valuek","property3":"valuel"}

In the example above, you have a file with 4 JSON objects, each with 3 properties. The file contains one line per object and these lines can get quite long.
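As a rough illustration, here is a minimal Python sketch that reads this one-object-per-line layout and counts the objects. The sample string mirrors the four example lines above; the helper name `parse_objects` is only illustrative and is not part of MGDC.

```python
import json

# Sample content in the one-object-per-line layout described above.
sample = "\n".join([
    '{"property1":"valuea","property2":"valueb","property3":"valuec"}',
    '{"property1":"valued","property2":"valuee","property3":"valuef"}',
    '{"property1":"valueg","property2":"valueh","property3":"valuei"}',
    '{"property1":"valuej","property2":"valuek","property3":"valuel"}',
])


def parse_objects(text):
    """Parse one JSON object per non-empty line."""
    return [json.loads(line) for line in text.splitlines() if line.strip()]


objects = parse_objects(sample)
print(len(objects))        # number of objects in the sample
print(sorted(objects[0]))  # property names of the first object
```

For a real file, you would pass the text of a downloaded file to the same helper. The object count it returns is the number of lines that hold an object.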

3. Multiple JSON objects per file

Even though the files have a JSON file extension, the files you get are not proper JSON files. First, you typically don’t want your JSON content to be one long line. The proper formatting would be something like this:

{
    "property1": "valuea",
    "property2": "valueb",
    "property3": "valuec"
}

{
    "property1": "valued",
    "property2": "valuee",
    "property3": "valuef"
}

{
    "property1": "valueg",
    "property2": "valueh",
    "property3": "valuei"
}

{
    "property1": "valuej",
    "property2": "valuek",
    "property3": "valuel"
}

That’s more readable, but this is still not a proper JSON file. A proper JSON file holds a single top-level value (one object, or one array of objects), not several objects back to back. But if you have lots of objects, having one file for each object would be far less efficient to process. That’s why MGDC packs lots of JSON objects into a single file with the “json” extension.

Also, if you have lots and lots of objects, MGDC will pack the results as multiple “json” files, each containing many JSON objects packed together.

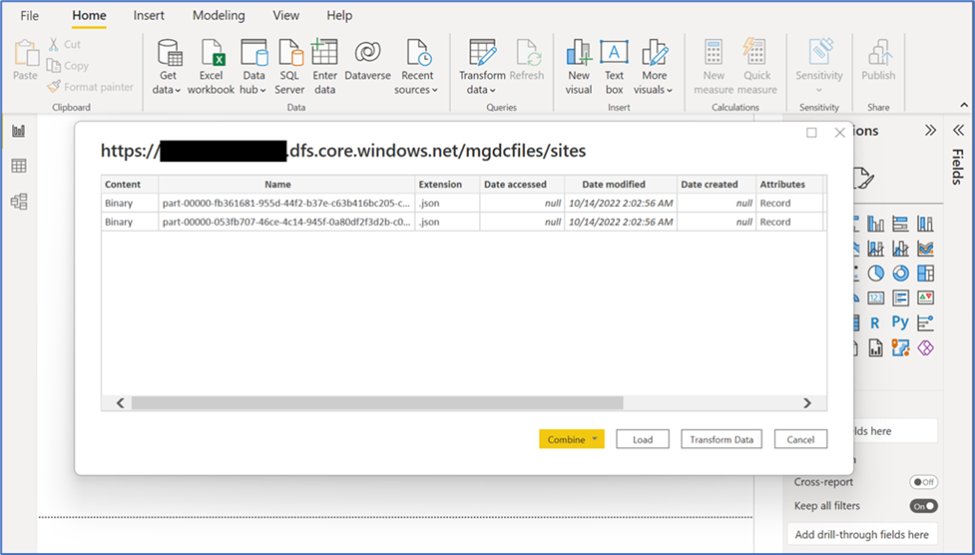

Most data tools have no problem loading this kind of file. Power BI, for example, not only can load files with multiple JSON objects, but it can also load multiple files in a single Power Query. Here’s an example:

Importing JSON files in Power BI

4. What constitutes a SharePoint object in MGDC

With all that information, we’re ready to state what counts as an object for MGDC. Each (long) line in those “json” files is an object, matching the schema published at Datasets, regions, and sinks supported by Microsoft Graph Data Connect.

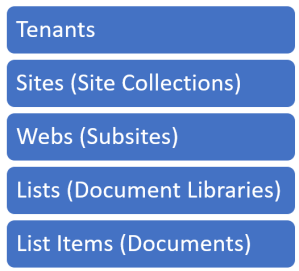

For the SharePoint datasets currently available, you have:

Sites: One object is one site collection. This includes Team sites and OneDrive sites.

Groups: One object is one group. These groups could have multiple members, but those members are all included in that single group object.

Sharing Permissions: One object is a permission granted to a specific scope (site, web, library, folder or file). This single object includes a set of users granted a permission in that scope.

5. Sharing Permission Objects

The SharePoint datasets above are easy to grasp but Sharing Permissions needs further explanation. Permissions captures a more complex concept commonly referred to as an Access Control List (ACL). That is how SharePoint stores permissions granted to users.

Permissions includes different types of permissions (Full Control, Contribute, Read, etc.) that are granted at different scopes (site, web, library, folder or file). This covers permissions granted directly to users and groups, plus those permissions granted using sharing links. Each unique scope and permission combination gets its own Permission object, which could include multiple users and groups in the “shared with” list.

For instance, if you grant full permissions on a file to 10 users, that is a single Permission object where the “Full Control” role definition for the scope of that file is granted to a set of 10 users. That entire information is captured in one Permission object.

If you want to grant permission to a file with 5 users having read/write permission and another 5 users having read-only permissions, then you need 2 Permission objects. One with the “Contribute” role definition for the file being granted to 5 users and another with the “Read” role for that file being granted to the other 5 users.
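The two cases above can be sketched in Python as a grouping problem. This is a simplified model, not the actual Permissions dataset schema: the tuple layout, the file path and the user names are all hypothetical. It only shows the counting rule of one object per unique scope and role combination.

```python
from collections import defaultdict


def permission_objects(grants):
    """Group (scope, role, user) grants into one entry per (scope, role)."""
    grouped = defaultdict(set)
    for scope, role, user in grants:
        grouped[(scope, role)].add(user)
    return dict(grouped)


# Hypothetical file path and user names, for illustration only.
file_scope = "/sites/demo/Shared Documents/report.docx"

# Case 1: 10 users with Full Control on one file.
case1 = [(file_scope, "Full Control", f"user{i}") for i in range(10)]

# Case 2: 5 users with Contribute and 5 other users with Read on the same file.
case2 = [(file_scope, "Contribute", f"user{i}") for i in range(5)]
case2 += [(file_scope, "Read", f"user{i}") for i in range(5, 10)]

print(len(permission_objects(case1)))  # count of (scope, role) pairs in case 1
print(len(permission_objects(case2)))  # count of (scope, role) pairs in case 2
```

Under this model, adding more users to an existing scope and role grows the “shared with” set but does not add a new object.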

You can read more about the Sharing Permissions dataset in the blog at SharePoint on MGDC FAQ: What is in the Permissions dataset?

6. Can I predict how many Sharing Permission objects?

The exact number of Sharing Permission objects for a given SharePoint tenant is hard to predict.

If you have 100 sites, for instance, you could reasonably assume that there will be at the very least 300 Permission objects. That’s because each site, by default, gets an Owners, a Members and a Visitors group. Each of these 3 SharePoint groups is granted a specific permission on the site. So that’s 3 Permission objects per site, even if you don’t grant any other permissions after creating the site.

In addition to those, you could grant further permissions at other levels. There is also the common scenario of using sharing links. The permissions for each of those links are captured in another Permission object.

Obviously, the more sharing happens in your company, the more Sharing Permission objects you will have. For a sample of tenants with at least 5K sites, I see an average of 53 Permission objects per site, with a median of 35 Permission objects per site. I have seen 100 Permission objects per site in a few companies with heavy usage of SharePoint and its collaboration capabilities. I have also seen companies with less collaboration activity that have 10 or 20 Permission objects per site on average.
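To put those per-site figures side by side, here is a small Python sketch that multiplies a site count by each of the figures quoted in this section. The scenario labels are only illustrative, and which figure fits a given tenant remains a guess until you pull the data.

```python
def estimate_permission_objects(site_count, per_site):
    """Rough Permission-object estimate: sites times an assumed per-site figure."""
    return site_count * per_site


# Per-site figures quoted in the text; the labels are illustrative.
scenarios = {
    "default groups only": 3,
    "light collaboration": 10,
    "median of sample": 35,
    "average of sample": 53,
    "heavy collaboration": 100,
}

site_count = 100  # the example tenant size used above
estimates = {
    name: estimate_permission_objects(site_count, per_site)
    for name, per_site in scenarios.items()
}
for name, total in estimates.items():
    print(f"{name}: {total}")
```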

7. Objects in Delta datasets

An important topic for those concerned with a high number of objects is Delta Datasets. The idea is simple: instead of pulling all objects every day or every week, you can ask SharePoint on MGDC to deliver just what has changed. This mechanism will drastically reduce the number of objects delivered, by providing only objects that were created, updated, or deleted.

For more details about Delta Datasets, read the blog at SharePoint on MGDC FAQ: How can I use Delta State Datasets?
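As a sketch of the idea, the Python below applies a small delta to a previous full pull. The field names `Id` and `Operation`, the operation values and the sample sites are assumptions made for illustration; check the published dataset schema for the real column names.

```python
def apply_delta(snapshot, delta_records):
    """Return a new snapshot with created/updated objects upserted and deleted ones removed."""
    result = dict(snapshot)
    for record in delta_records:
        key = record["Id"]                    # hypothetical key field
        if record["Operation"] == "Deleted":  # hypothetical change marker
            result.pop(key, None)
        else:                                 # "Created" or "Updated"
            result[key] = record
    return result


# Hypothetical previous full pull, keyed by object id.
full_pull = {
    "site-1": {"Id": "site-1", "Title": "HR"},
    "site-2": {"Id": "site-2", "Title": "Finance"},
    "site-3": {"Id": "site-3", "Title": "Legal"},
}

# Hypothetical delta: one update, one delete, one create.
delta = [
    {"Id": "site-2", "Title": "Finance EMEA", "Operation": "Updated"},
    {"Id": "site-3", "Operation": "Deleted"},
    {"Id": "site-4", "Title": "Sales", "Operation": "Created"},
]

current = apply_delta(full_pull, delta)
print(sorted(current))  # ids present after applying the delta
print(len(delta))       # objects delivered by the delta pull
```

The point of the sketch is the object count: the delta pull delivers only the changed objects, while the merged snapshot still covers every site.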

8. Summary

In summary, SharePoint on MGDC delivers data to you as JSON objects, packed into files that are pulled into your Azure Storage account. MGDC objects transferred will show in your Azure bill. The number of SharePoint objects depends on your number of sites/groups/files, as well as the amount of collaboration in your tenant.

I hope this blog post helped you understand what constitutes an object in SharePoint on MGDC. For more information about SharePoint Data in MGDC, please visit the collection of links I keep at https://aka.ms/SharePointData.

Microsoft Tech Community – Latest Blogs –Read More

MGDC for SharePoint FAQ: How can I estimate my Azure bill?

Introduction

When gathering SharePoint data through Microsoft Graph Data Connect, you are billed through Azure. As I write this blog, the price to pull 1,000 objects from Microsoft Graph Data Connect in the US is $0.75, plus the cost for Azure infrastructure like Azure Storage and Azure Synapse.

I wrote a blog about what counts as an object, but I frequently get questions about how to estimate the overall Azure bill for the Microsoft Graph Data Connect for SharePoint for a specific project. Let me try to clarify things…

Before we start, here are a few notes and disclaimers:

These are estimates and your specific Azure bill will vary.

Check the official Azure links provided. Rates may vary by country and over time.

These are Azure pay-as-you-go list prices in the US as of March 2024.

You may benefit from Azure discounts, like savings using a pre-paid plan.

How many objects?

To estimate the number of objects, you start by finding out the number of sites in the tenant. This should include all sites (not just active sites) in your tenant. You can find this number easily in the SharePoint Admin Center. That will be the number of objects in your SharePoint Sites dataset.

Finding the number of SharePoint Groups and SharePoint Permissions will require some estimation. I recently collected some telemetry and saw that the average number of SharePoint Groups per Site for a sample of 100 large tenants was around 22. The average SharePoint permissions per site was around 53.

Delta pulls (gathering just what changed) will be smaller, but that also varies depending on how much collaboration happens in your tenant (in the Delta numbers below, I am estimating a 10% change).

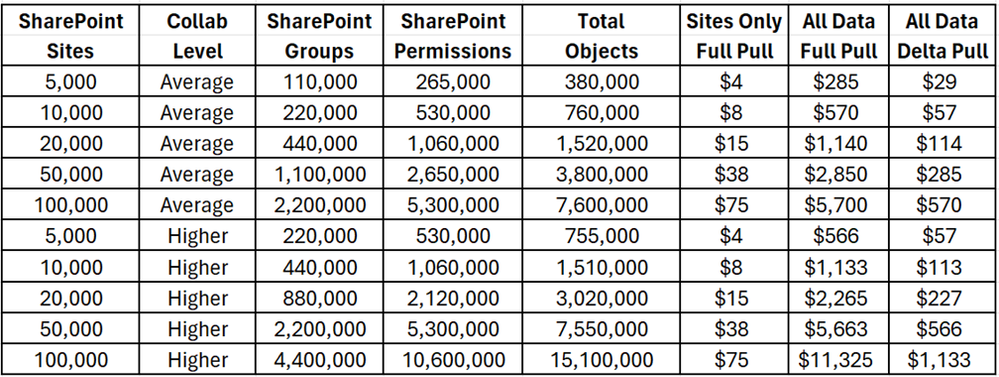

Here’s a table to help you estimate your Microsoft Graph Data Connect for SharePoint costs:

Microsoft Graph Data Connect for SharePoint – Object Costs

The official information about Microsoft Graph Data Connect pricing is at https://azure.microsoft.com/en-us/pricing/details/graph-data-connect/
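The arithmetic behind such an estimate can be sketched in a few lines of Python. The rate and the per-site averages come from the text above. The tenant size is hypothetical, and the sketch assumes the charge scales linearly with the object count, which you should confirm against the official pricing page.

```python
PRICE_PER_1000_OBJECTS = 0.75  # USD, US list price quoted in the text
GROUPS_PER_SITE = 22           # sample average quoted in the text
PERMISSIONS_PER_SITE = 53      # sample average quoted in the text
DELTA_CHANGE_RATE = 0.10       # the 10% change assumed in the text


def estimate_objects(site_count):
    """Estimated objects per dataset for one full pull."""
    return {
        "sites": site_count,
        "groups": site_count * GROUPS_PER_SITE,
        "permissions": site_count * PERMISSIONS_PER_SITE,
    }


def pull_cost(object_count):
    """MGDC charge in USD for pulling object_count objects (assumes linear pricing)."""
    return object_count / 1000 * PRICE_PER_1000_OBJECTS


site_count = 10_000  # hypothetical tenant size
full_objects = sum(estimate_objects(site_count).values())
delta_objects = round(full_objects * DELTA_CHANGE_RATE)

print(full_objects, pull_cost(full_objects))
print(delta_objects, pull_cost(delta_objects))
```

Swap in your own site count from the SharePoint Admin Center, and adjust the per-site averages once you have pulled real data for your tenant.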

How much storage?

The SharePoint information you get from Microsoft Graph Data Connect will be stored in an Azure Storage account. That also incurs some cost, but it’s usually small when compared to the Microsoft Graph Data Connect costs for data pulls. The storage will be proportional to the number of objects and to the size of these objects.

Again, this will vary depending on the amount of collaboration in the tenant. More sharing means more members in groups and more people in the permissions, which will result in more objects and also larger objects.

I also did some estimating of object size and arrived at around 2KB per SharePoint Site object, 20KB per SharePoint Group object and 3KB per Permission object. There are several Azure storage options including Standard vs. Premium, LRS vs. GRS, v1 vs. v2 and Hot vs. Cool. For Microsoft Graph Data Connect, you can go with a Standard + LRS + V2 + Cool blob storage account, which costs $0.01 per GB per month.

Here’s a table to help you estimate your Azure Storage costs:

Microsoft Graph Data Connect for SharePoint – Storage Costs

As you can see, smaller tenants will see storage costs below $10/month and larger tenants will see costs below $100/month. And that’s assuming you pull all data daily and keep it for one month. If you keep your Microsoft Graph Data Connect for SharePoint data for multiple months, these costs will increase proportionally. There are additional costs per storage operation like read and write but those are negligible at this scale (for instance, $0.065 per 10,000 writes and $0.005 per 10,000 reads).

The official information about Azure Storage pricing is at https://azure.microsoft.com/en-us/pricing/details/storage/blobs/
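Here is a rough Python sketch of the storage arithmetic, using the object sizes and the per-GB rate quoted above. The tenant numbers are hypothetical, the sketch treats 1 GB as 1024 × 1024 KB, and it ignores per-operation charges.

```python
KB_PER_GB = 1024 * 1024    # assumption: binary units
PRICE_PER_GB_MONTH = 0.01  # USD, rate quoted in the text

# Approximate object sizes in KB, as quoted in the text.
SIZE_KB = {"sites": 2, "groups": 20, "permissions": 3}


def pull_size_gb(object_counts):
    """Size in GB of one full pull, given object counts per dataset."""
    total_kb = sum(object_counts[name] * SIZE_KB[name] for name in SIZE_KB)
    return total_kb / KB_PER_GB


def monthly_storage_cost(object_counts, pulls_retained=30):
    """Storage cost in USD per month when pulls_retained full pulls are kept."""
    return pull_size_gb(object_counts) * pulls_retained * PRICE_PER_GB_MONTH


# Hypothetical tenant: 10,000 sites with 22 groups and 53 permissions per site.
counts = {"sites": 10_000, "groups": 220_000, "permissions": 530_000}
print(round(pull_size_gb(counts), 2))
print(round(monthly_storage_cost(counts), 2))
```

The `pulls_retained` parameter models the daily pull kept for one month described above. Raise it if you keep data longer.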

What about Synapse?

You will also typically use Azure Synapse to move the SharePoint data from Microsoft 365 to your Azure account. You could run a pipeline daily to get the information and do some basic processing, like computing deltas or creating aggregations.

Here are a few of the items that are billed for Azure Synapse when running Microsoft Graph Data Connect pipelines:

Azure Hosted – Integration Runtime – Data Movement – $0.25/DIU-hour

Azure Hosted – Integration Runtime – Pipeline Activity (Azure Hosted) – $0.005/hour

Azure Hosted – Integration Runtime – Orchestration Activity Run – $1 per 1,000 runs

vCore – $0.15 per vCore-hour

As with Azure Storage, the costs here are small. You will likely need one pipeline run per day and it will typically run in less than one hour for a small tenant. Large tenants might need a few hours per run to gather all their SharePoint datasets. You should expect less than $10/month for smaller tenants and less than $100/month for larger and/or more collaborative tenants.

The official information about Azure Synapse pricing is at https://azure.microsoft.com/en-us/pricing/details/synapse-analytics/
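As a back-of-the-envelope check, this Python sketch combines three of the meters listed above for a daily pipeline. The run duration, the number of DIUs and the activity count are hypothetical inputs, and the vCore meter is left out to keep the sketch short.

```python
DATA_MOVEMENT_PER_DIU_HOUR = 0.25   # USD, quoted in the text
PIPELINE_ACTIVITY_PER_HOUR = 0.005  # USD, quoted in the text
ORCHESTRATION_PER_1000_RUNS = 1.0   # USD, quoted in the text


def monthly_pipeline_cost(hours_per_run, dius, activity_runs_per_pipeline, runs_per_month=30):
    """Estimated monthly USD cost for a pipeline that runs runs_per_month times."""
    movement = hours_per_run * dius * DATA_MOVEMENT_PER_DIU_HOUR
    activity = hours_per_run * PIPELINE_ACTIVITY_PER_HOUR
    orchestration = activity_runs_per_pipeline / 1000 * ORCHESTRATION_PER_1000_RUNS
    return (movement + activity + orchestration) * runs_per_month


# Hypothetical small tenant: 15 minutes per run, 4 DIUs, 3 activity runs per pipeline run.
small = monthly_pipeline_cost(hours_per_run=0.25, dius=4, activity_runs_per_pipeline=3)
print(round(small, 2))
```

Data movement dominates the result, so the run duration and DIU count are the inputs worth measuring on a test tenant first.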

Closing notes

These are the main meters in Azure to get you started with costs related to Microsoft Graph Data Connect for SharePoint. I suggest experimenting with a small test/dev tenant to get familiar with Azure billing.

For more information about Microsoft Graph Data Connect for SharePoint, see the links at https://aka.ms/SharePointData.


The Future of Windows Server Hyper-V is Bright!

Greetings folks!

There have been several recent changes in the virtualization market, so this month, I wanted to take a moment to respond to the flood of questions we are receiving about Hyper-V futures, Windows Server 2025, and more. I surmise this blog will garner questions in the comments section, so I plan to answer those questions in the next blog. Let’s get started beginning with Hyper-V itself.

Hyper-V is Microsoft’s hardware virtualization product. It lets you create and run a software version of a computer, called a virtual machine (VM). Each virtual machine acts like a complete computer, running an operating system and programs. When you need computing resources, virtual machines give you more flexibility, help save time and money, and are a more efficient way to use hardware than just running one operating system on physical hardware. This quick description is just the beginning of what Hyper-V delivers…

Hyper-V is a strategic technology at Microsoft.

Please reread that last sentence. When I say strategic technology, I say this because Hyper-V is used throughout Microsoft in:

Azure

Windows Server

Azure Stack HCI

Windows client

Xbox

If you are using Windows Server, you already have Hyper-V. There is no additional charge; it’s built in, just as it has been for over 15 years. The difference between Hyper-V in Windows Server Standard and Datacenter is the number of Windows Server guest OS instances that are included:

With Windows Server Standard, you are licensed to run two instances of Windows Server guest OS environments.

With Windows Server Datacenter, you are licensed to run unlimited instances of Windows Server guest OS environments.

If you are running Linux as a guest OS, just make sure you are licensed by your distributor, and you can run as many Linux guests as you’d like on either Windows Server Standard or Datacenter.

In terms of Linux guest OS support, Hyper-V supports Red Hat Enterprise Linux, CentOS, Debian, Oracle Linux, SUSE, and Ubuntu. Linux integration services are included in the Linux kernel and updated for new releases. Hyper-V also supports FreeBSD with FreeBSD Integration Services built into FreeBSD 10.0 and later.

The unlimited use rights of Windows Server Datacenter coupled with the complete package of Hyper-V, Software-defined storage (Storage Spaces Direct) and Software-defined networking (SDN) deliver the best bang for your buck, making it extremely popular. Considering the power and scale of modern compute and storage (Local, SAN, File, Hyperconverged), Windows Server Datacenter is great for virtualization hosts.

Hyper-V is used for more than just virtualization

Hyper-V is used for platform security. Virtualization-based security, or VBS, uses hardware virtualization and the hypervisor to create an isolated virtual environment that becomes the root of trust of the OS, on the assumption that the kernel can be compromised. Windows uses this isolated environment to host several security solutions, providing them with increased protection from vulnerabilities and preventing the use of malicious exploits which attempt to defeat protections. VBS enforces restrictions to protect vital system and operating system resources, or to protect security assets such as authenticated user credentials.

Hyper-V is used for containers. Hyper-V isolation for containers offers enhanced security and broader compatibility between host and container versions. With Hyper-V isolation, multiple container instances run concurrently on a host; however, each container runs inside of a highly optimized virtual machine and effectively gets its own kernel. The presence of the virtual machine provides hardware-level isolation between each container as well as the container host.

Hyper-V in Azure

Below is a screen shot of a virtual machine in Azure. Take a close look. This single VM supports up to 1,792 Virtual Processors and 29.7 Terabytes of RAM. I apologize that this VM only has 29.7 Terabytes of RAM (we support up to 48 TB of RAM), but those machines are busy running some of the largest workloads on the planet.

Because Hyper-V is used throughout Microsoft and in Azure, you reap the benefits of innovation we deliver in Azure that percolates through the other products. For example, today in Azure we have a wide range of VM offerings from small to gargantuan with a wide range of CPU, memory, networking, storage options and GPUs. Azure VMs with GPUs are available with single or fractional GPUs and designed for compute intensive, graphics intensive and visualization workloads from Virtual Desktops to AI. To enable these VM offerings in Azure with GPUs required changes to Hyper-V. Guess what is coming in Windows Server 2025?

Windows Server 2025 is introducing GPU partitioning (GPU-P) to enable scenarios on-premises or at the edge. You will be able to partition GPUs and assign them to VMs while retaining high availability and live migration. GPU-P is so flexible that you can live migrate VMs with partitioned GPUs between two standalone servers. No cluster required and great for test/dev!

Windows Server 2025 is introducing Workgroup Clusters

Speaking of no cluster required, we are making significant changes to make Hyper-V deployments at the edge easier. One thing we are hearing from you is that due to the power of modern servers, it is easier than ever to deploy small footprints at the edge. Today, you can purchase two and three node clusters that are small enough to fit in the overhead compartment of an airplane. Up to Windows Server 2022, deploying a cluster requires Active Directory. While this is not an issue in the datacenter, this adds complexity at the edge. With Windows Server 2025, we are introducing the ability to deploy “Workgroup Clusters.” Workgroup clusters do not require AD and are a certificate-based solution!

Windows Server 2025 is chock full of innovation, and GPU-P and workgroup clusters are just the beginning. If you would like to learn more about Windows Server 2025 with demos, check out this Ignite Session, “What’s New In Windows Server vNext (2025).”

Windows Server 2025 Insider Preview: Now with Flighting!

If you want to start evaluating Windows Server 2025, there is no better time than right now and we’re making it easier than ever with Windows Server flighting! If you have a recent Windows Server insider build installed, you can now go to Windows Update in Settings, and check for updates. This will provide an update to a newer build, as a Feature update (also known as “in place OS upgrade”). That’s it! The process is easy and has worked well for hundreds of thousands of Windows 10 and Windows 11 insiders over the years.

Windows Server 2025 Hyper-V

As I stated earlier, Hyper-V is a strategic technology at Microsoft used throughout our products. Since the first release of Hyper-V in Windows Server 2008, we have never stopped innovating in Hyper-V and there are no plans to stop. In the next blog, I will be answering your questions, and we will see where that takes us!

One more thing: Windows Server Engineering Summit 2024

I’m pleased to announce the Windows Server Engineering Summit 2024. This year, we bring you three days of demos, technical sessions, and Q&A, led by Microsoft engineers, guest experts from Intel, and our MVP community. RSVP now to learn:

What’s coming next in Windows Server 2025

Get best practices for security and identity

Tips for cloud migration and hybrid cloud management

Cover new technologies and capabilities

Hybrid cloud with Azure Arc

Security and hardening (everyone’s favorite)

Migration, and much more

We’ll also offer live Q&A during all the sessions so watch, learn, and post your questions early and often!

Cheers,

Jeff Woolsey

Microsoft


AI/ML ModelOps is a Journey. Get Ready with SAS® Viya® Platform on Azure

Why do you need a ModelOps Platform for your organization?

If you are a data scientist or an analytics leader, you know the challenges of developing and deploying analytical models in a fast-paced and competitive business environment. You may have hundreds or thousands of models in various stages of the analytics life cycle, but only a fraction of them are actually delivering value to your organization. You may face issues such as long development cycles, manual processes, lack of visibility, poor performance, and loss of intellectual property. These issues can prevent you from realizing the full potential of your analytics investments and hinder your ability to innovate and respond to changing needs.

That’s why you need ModelOps in your organization. ModelOps is a set of practices and technologies that enables you to automate, monitor, and manage your analytical models throughout their life cycle. ModelOps can help you streamline your workflows, improve your model quality, increase your productivity, and ensure your models are always aligned with your business goals. ModelOps can also help you foster collaboration and trust among your stakeholders, such as data scientists, IT, business users, and regulators. With ModelOps, you can turn your models into assets that drive value and competitive advantage for your organization.

SAS Viya Platform: A powerful AI/ML Model management Platform

SAS Viya is a powerful cloud-based analytics platform built by Microsoft’s coveted partner SAS Institute Inc. that combines AI (Artificial Intelligence) and traditional analytics capabilities. SAS Viya seamlessly integrates with Microsoft Azure services, enhancing the analytics capabilities and providing a powerful platform for data-driven decision-making.

Simplified Acquisition Process for SAS Viya Platform @Azure Marketplace

The simplified acquisition process of SAS Viya on Azure marks a significant departure from the traditional method of obtaining SAS Viya. With the introduction of SAS Viya on Azure, users now benefit from a streamlined approach via the Azure Marketplace. This marketplace serves as a user-friendly platform where, with just a click on the “Create” button, customers initiate the acquisition process.

Automated deployment, a key feature of this approach, eliminates the need for extensive IT involvement. Azure Marketplace also expedites the transaction, offering a faster and more accessible path for users to access and use the advanced analytics capabilities of SAS Viya.

Key Features of SAS Viya on Azure:

SAS Visual Machine Learning (VML): SAS Viya includes robust machine learning capabilities. With VML, you can build, train, and deploy machine learning models efficiently. Whether you’re a Python enthusiast, an R aficionado, or prefer Jupyter Notebooks, SAS Viya integrates seamlessly with these languages to enhance your data science workflows.

Integration with Python, R, and Jupyter Notebooks: SAS Viya provides native integration with popular programming languages. You can leverage your existing Python or R code within the SAS Viya environment. Additionally, Jupyter Notebooks allow for interactive exploration and documentation of your analyses.

Azure Data Sources and Services Integration:

Azure Synapse: SAS Viya connects to Azure Synapse, enabling you to enrich data from various sources. Use SAS Information Catalog and SAS Visual Analytics to explore and prepare data efficiently.

Azure Machine Learning: Collaborate between SAS Viya and Azure Machine Learning to build and deploy analytic models. You can choose your preferred programming language and even use a visual drag-and-drop interface for model components. Seamlessly move models to production.

Power Automate and Power Apps: Automate decision-making processes by integrating SAS Viya with Microsoft Power Automate and Power Apps. Enable real-time, calculated decisions across various domains, such as claims processing, credit decisioning, fraud detection, and more.

Azure IoT Hub and Azure IoT Edge: Stream data from IoT devices across environments, allowing real-time decisioning and analysis. Benefit from in-stream automation, data curation, and modeling while maintaining total governance.

Azure Data Sources: SAS Viya uses high-performance connectors to source data from Azure environments, provisioning data for downstream AI needs.

SAS Analytic Lifecycle Capabilities

Access and Prepare Data:

SAS Viya allows you to handle complex and large datasets efficiently. You can perform data preparation tasks such as cleaning, transforming, and structuring data for analysis.

Whether your data resides in databases, spreadsheets, or cloud storage, SAS Viya provides seamless connectivity to various data sources.

Visualize Data:

Data visualization is crucial for understanding data relationships, patterns, and trends. SAS Viya offers powerful visualization tools to create insightful charts, graphs, and dashboards.

Explore your data visually, identify outliers, and gain valuable insights before diving into modeling.

Build Models:

SAS Viya leverages AI techniques to build predictive and prescriptive models. Whether you’re solving real-world business problems or conducting research, you can utilize machine learning algorithms, statistical methods, and optimization techniques.

Experiment with different models, evaluate their performance, and choose the best one for your specific use case.

Automation:

Automate repetitive tasks within your analytic workflows. SAS Viya allows you to create data pipelines, schedule data refreshes, and automate model deployment.

Collaborate with other users by sharing workflows and automating decision-making processes.

Integration:

Connect seamlessly with open-source languages such as Python and R. If you have existing code or libraries, integrate them into your SAS Viya environment.

Leverage the power of both SAS and open-source tools to enhance your analytics capabilities.

ModelOps:

Managing models over time is critical for maintaining their accuracy and relevance. SAS Viya provides tools for monitoring model performance, adapting to changes in data, and retraining models as needed.

Stay on top of your models’ health and ensure they continue to deliver value.

Demonstration of Deployment on Azure:

Let’s walk through the steps for deploying SAS Viya on Microsoft Azure. This process typically takes about an hour to complete. Here’s how you can get started:

Access SAS Viya on Azure:

Visit the Azure Marketplace and search for “SAS Viya (Pay-As-You-Go).”

Click “Get It Now” and then select “Continue.”

Deployment Form:

Click “Create” next to the plan.

Complete the form with the necessary information:

Project Details:

Specify your Subscription and Resource Group. These values depend on your organization’s Azure resource management practices. (Don’t forget to prepare a suitable landing zone for your Analytical platform)

Instance Details:

Personalize the URL that users will use to access SAS Viya. The Region and Deployment DNS Prefix contribute to the URL. Choose a region geographically close to your users.

Provide a Deployment Name for reference within the Azure interface.

Security and Access:

Set an administrator password for SAS Viya (remember or record it).

Choose one of the following options for SSH public key source:

Generate a new key pair.

Use a key stored in Azure.

Copy and paste a public key.

Optionally, secure access by specifying Authorized IP Ranges.

Review and Create:

Click “Review + create” to proceed.

Confirm the information, accept the terms and conditions, and click “Create.”

If you opted to create SSH keys, select “Download + create” when prompted.

Deployment Completion:

The deployment process may take up to an hour.

Once completed, sign in to SAS Viya using the URL you personalized earlier.

Summary

SAS Viya on Azure provides a user-friendly, automated approach to access advanced analytics capabilities.

It offers seamless integration with Azure tools and services, catering to a wide range of users in the analytics space.

Next Steps

In the next part, I will dive deep into the ModelOps capabilities and the advantages of combining Azure and the SAS Viya platform.



Explore your data visually, identify outliers, and gain valuable insights before diving into modeling.

Build Models:

SAS Viya leverages AI techniques to build predictive and prescriptive models. Whether you’re solving real-world business problems or conducting research, you can utilize machine learning algorithms, statistical methods, and optimization techniques.

Experiment with different models, evaluate their performance, and choose the best one for your specific use case.

Automation:

Automate repetitive tasks within your analytic workflows. SAS Viya allows you to create data pipelines, schedule data refreshes, and automate model deployment.

Collaborate with other users by sharing workflows and automating decision-making processes.

Integration:

Connect seamlessly with open-source languages such as Python and R. If you have existing code or libraries, integrate them into your SAS Viya environment.

Leverage the power of both SAS and open-source tools to enhance your analytics capabilities.

ModelOps:

Managing models over time is critical for maintaining their accuracy and relevance. SAS Viya provides tools for monitoring model performance, adapting to changes in data, and retraining models as needed.

Stay on top of your models’ health and ensure they continue to deliver value.
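To make the monitoring idea above a bit more concrete, here is a minimal Python sketch of a model health check. It is not a SAS Viya API: the names `accuracy`, `check_model_health`, and `MonitorResult`, as well as the 5-point `max_drop` threshold, are hypothetical choices for illustration only. The sketch compares accuracy on recent scored data against a baseline recorded at deployment time and flags the model for retraining when the drop exceeds the threshold.

```python
from dataclasses import dataclass


@dataclass
class MonitorResult:
    baseline_accuracy: float
    recent_accuracy: float
    needs_retraining: bool


def accuracy(y_true, y_pred):
    """Fraction of predictions that match the observed labels."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("y_true and y_pred must be non-empty and of equal length")
    matches = sum(1 for t, p in zip(y_true, y_pred) if t == p)
    return matches / len(y_true)


def check_model_health(baseline_accuracy, y_true, y_pred, max_drop=0.05):
    """Flag retraining when recent accuracy falls more than max_drop below baseline.

    max_drop is a hypothetical tolerance; pick one that fits your use case.
    """
    recent = accuracy(y_true, y_pred)
    needs_retraining = (baseline_accuracy - recent) > max_drop
    return MonitorResult(baseline_accuracy, recent, needs_retraining)


if __name__ == "__main__":
    # Made-up labels and predictions from a recent scoring window.
    observed = [1, 0, 1, 1, 0, 1, 0, 0, 1, 1]
    predicted = [1, 0, 0, 1, 0, 1, 1, 0, 0, 1]
    result = check_model_health(0.90, observed, predicted)
    print(result)
```

In practice a check like this would run on a schedule, and a real platform would typically track more than one metric (for example, input data drift as well as accuracy) before triggering a retrain.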

Demonstration of Deployment on Azure:

Let’s walk through the steps for deploying SAS Viya on Microsoft Azure. This process typically takes about an hour to complete. Here’s how you can get started:

Access SAS Viya on Azure:

Visit the Azure Marketplace and search for “SAS Viya (Pay-As-You-Go).”

Click “Get It Now” and then select “Continue.”

Deployment Form:

Click “Create” next to the plan.

Complete the form with the necessary information:

Project Details:

Specify your Subscription and Resource Group. These values depend on your organization’s Azure resource management practices. (Don’t forget to prepare a suitable landing zone for your analytics platform.)

Instance Details:

Personalize the URL that users will use to access SAS Viya. The Region and Deployment DNS Prefix contribute to the URL. Choose a region geographically close to your users.

Provide a Deployment Name for reference within the Azure interface.

Security and Access:

Set an administrator password for SAS Viya (remember or record it).

Choose one of the following options for SSH public key source:

Generate a new key pair.

Use a key stored in Azure.

Copy and paste a public key.

Optionally, secure access by specifying Authorized IP Ranges.

Review and Create:

Click “Review + create” to proceed.

Confirm the information, accept the terms and conditions, and click “Create.”

If you opted to create SSH keys, select “Download + create” when prompted.

Deployment Completion:

The deployment process may take up to an hour.

Once completed, sign in to SAS Viya using the URL you personalized earlier.
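If you want to sanity-check your form inputs before you start, the short Python sketch below may help. It relies on assumptions that are not stated in this post: the DNS prefix rule is the usual Azure DNS-label pattern (lowercase letters, digits, and hyphens, 3 to 63 characters), the previewed URL follows the common `<prefix>.<region>.cloudapp.azure.com` convention, and Authorized IP Ranges are entered as a comma-separated list of CIDR ranges. The helper names are hypothetical, and the deployment form remains the authority on what it accepts.

```python
import ipaddress
import re

# Assumption: the usual Azure DNS-label rule, not a rule taken from the SAS form.
DNS_PREFIX_PATTERN = re.compile(r"[a-z][a-z0-9-]{1,61}[a-z0-9]")


def validate_dns_prefix(prefix):
    """Return True if the prefix looks like a valid Azure DNS label."""
    return DNS_PREFIX_PATTERN.fullmatch(prefix) is not None


def preview_url(prefix, region):
    """Preview the sign-in URL (assumed <prefix>.<region>.cloudapp.azure.com pattern)."""
    return f"https://{prefix}.{region}.cloudapp.azure.com"


def parse_authorized_ranges(text):
    """Parse comma-separated CIDR ranges; raises ValueError on a malformed entry."""
    ranges = []
    for item in text.split(","):
        item = item.strip()
        if item:
            # strict=True rejects entries with host bits set, such as 10.0.0.1/24.
            ranges.append(ipaddress.ip_network(item, strict=True))
    return ranges


if __name__ == "__main__":
    # Hypothetical values for illustration only.
    prefix, region = "viya-demo", "eastus"
    if validate_dns_prefix(prefix):
        print(preview_url(prefix, region))
    for network in parse_authorized_ranges("203.0.113.0/24, 198.51.100.7"):
        print(network)
```

A single address such as `198.51.100.7` is treated as a `/32` range here, which is how Python's `ipaddress` module interprets an address without a prefix length.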

Summary

SAS Viya on Azure provides a user-friendly, automated approach to access advanced analytics capabilities.

It offers seamless integration with Azure tools and services, catering to a wide range of users in the analytics space.

Next Steps

In the next part, I will dive deep into the model ops capabilities and the advantages of combining Azure and the SAS Viya platform.

Microsoft Tech Community – Latest Blogs –Read More

New opportunities for sales, services, and education partners

Today, we are excited to announce the expansion of Copilot for Microsoft 365 with the general availability of new offerings for sales, services, and education—providing new ways for customers and partners to embrace AI to achieve their goals and significantly enhance how they work.

Copilot for Sales is an AI assistant for sales professionals that brings together the power of generative AI through Copilot for Microsoft 365, Copilot Studio, and data from any CRM system to accelerate productivity, keep data fresh, unlock seller-specific insights, and help sellers personalize customer interactions – all leading to closure of more deals.

Copilot for Service modernizes existing service solutions with generative AI to enhance customer experiences and boost agent productivity. It infuses AI into the contact center to accelerate time to production with point-and-click setup, direct access within major service vendors (including Salesforce, ServiceNow, and Zendesk), and connection to public websites, SharePoint, knowledgebase articles, and offline files. Agents can ask questions in natural language and answers are delivered in the tools they use every day—Outlook, Teams, Word, and others.

Learn more about Copilot for Sales and Copilot for Service announcements.

In addition, Copilot for Microsoft 365 is now also generally available for education customers to purchase for their faculty users through the Cloud Solution Provider (CSP) program.

Read the announcement

Plan to join us on Wednesday, March 6, 2024, for the Reimagine education event: the future of AI in Education for an opportunity to deep dive on Copilot for Education.

Read more in our blog about specific opportunities for education partners with Copilot for Microsoft 365.

Additional opportunities for all partners

Register for the Copilot partner incentives overview webinar – February 28/29 to learn about the latest priorities, strategy, and earning opportunities for Copilot & AI.

Sign up for a Copilot for Microsoft 365 pre-sales and technical bootcamp

Join the conversation on our Copilot for Microsoft 365 community

How Microsoft Copilot in the Edge Browser can help you learn from YouTube videos

A new feature that lets you summarize, transcribe, and ask questions from any YouTube video

YouTube is a great source of information and learning for professionals, especially in the fields of technology, science, and education. You can find thousands of videos on topics ranging from programming languages, frameworks, and tools, to data science, machine learning, and artificial intelligence, to web development, design, and user experience, and much more. However, watching YouTube videos can also be time-consuming, distracting, and overwhelming. You might not have the time or patience to watch a long video, or you might get lost in the details and miss the main points. You might also have questions that are not answered in the video, or you might want to review the content later without having to watch the whole video again.

That’s where Microsoft Copilot in the Edge Browser can help you. Microsoft Copilot is a new feature that lets you interact with any YouTube video in a smart and convenient way. You can use Microsoft Copilot to summarize the video, create a transcript, and ask questions from the video content. You can also save the summaries, transcripts, and answers for later reference, or share them with others. Microsoft Copilot can help you learn more from YouTube videos, without wasting time or losing focus.

In this video, Microsoft’s Darryl Rowe shows how you can leverage the power of Microsoft Copilot in the Edge browser to summarize YouTube videos, generate YouTube transcripts, ask questions of the video, and more!

Resources:

Copilot in Edge | Microsoft Learn

Thanks for visiting – Michael Gannotti LinkedIn

MGDC for SharePoint FAQ: Which date should I query?

1. Filter by SnapshotDate

When gathering SharePoint data through Microsoft Graph Data Connect (MGDC), you must choose the date to query. This is expressed as a required filter on the SnapshotDate column (see picture below for an example using the Synapse Copy Data tool). You typically want the “Start time” and “End time” to be the same date (we don’t look at the time portion), so you capture the data for that specific day.

Applying a date filter

2. The latest data

You typically want the latest data available, which for SharePoint on MGDC is two days ago. So, if today is 2022-10-29, you probably want to set both the “Start time” and the “End time” to 2022-10-27. However, you can query any of the last 21 days, counting from 2 days ago. That means you can query from today’s date minus 2 to today’s date minus 23. For instance, if today is 2022-10-29, you can query any date from 2022-10-06 to 2022-10-27.
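The date arithmetic above can be sketched in a few lines of Python. This is only an illustration of the offsets described in this section (today minus 2 through today minus 23). The function name `queryable_window` and the constants are hypothetical names chosen for this example and are not part of MGDC.

```python
from datetime import date, timedelta
from typing import Tuple

# Offsets taken from the text above; the names are made up for this sketch.
LATEST_OFFSET_DAYS = 2    # the latest available data is from two days ago
OLDEST_OFFSET_DAYS = 23   # the oldest queryable date is today minus 23


def queryable_window(today: date) -> Tuple[date, date]:
    """Return (oldest, latest) snapshot dates you could query on 'today'."""
    latest = today - timedelta(days=LATEST_OFFSET_DAYS)
    oldest = today - timedelta(days=OLDEST_OFFSET_DAYS)
    return oldest, latest


oldest, latest = queryable_window(date(2022, 10, 29))
print(oldest.isoformat(), latest.isoformat())  # 2022-10-06 2022-10-27
```

For the latest data, you would use the second value as both the “Start time” and the “End time”.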

3. State datasets

This 21-day range applies to many of the SharePoint datasets on MGDC, including:

BasicDataSet_v0.SharePointSites_v1

BasicDataSet_v0.SharePointGroups_v1

BasicDataSet_v0.SharePointPermissions_v1

BasicDataSet_v0.SharePointFiles_v1

These are state datasets, which means they include all objects of that type in SharePoint at the time of the request. So, if you query for Sites on a specific day (as shown in the picture), you’ll get a full list of all the Sites in your tenant as of that date, not just the ones created or updated on that day.

4. Looking back

Why look back, then? Well, you might want to keep regular snapshots of the data to track how things are growing over time, and you might have missed a recent day in your capture process due to operational issues. Looking back a bit lets you fill that gap and “complete your collection”.

5. There is a limit

Why have that limit at all? Well, if we kept the data for longer than a month there would be additional compliance requirements. We need to make sure it does not break any data retention rules. We also give ourselves a week to clean up the older data and that’s why we use 21 days instead of a full month.

By the way, we do encourage you to also check your compliance requirements if you intend to keep the data from MGDC in your Azure Storage account for a long time. You might have similar restrictions.

6. The sign-up date

There is another detail about this. SharePoint on MGDC does not do backfills, which means you can only look back to dates on or after the date you enabled the collection of data in the Admin Center (see picture below).

If you signed up for MGDC with SharePoint on 2022-10-15, it will take 48 hours to set up your first collection, which means the first date you can query would be 2022-10-17. Since we’re always looking 48 hours in the past, you will need to wait until 2022-10-19 to query that date. Effectively, you need to wait 96 hours after you check the box before you can run your first request. After that, you can query daily, always looking back at least 2 days.

Also, if you signed up for MGDC with SharePoint on 2022-10-15 and today is 2022-10-29, you can only query from 2022-10-17 (date of the first collection) to 2022-10-27 (two days ago). Once it’s been 21 days since you enabled MGDC with SharePoint datasets, this is no longer an issue.

Enabling Microsoft Graph Data Connect for SharePoint
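Here is a minimal Python sketch of the sign-up rule described in this section, assuming the offsets given in the text: the first collection happens 2 days after sign-up, and you always look back at least 2 days. All function names are hypothetical and exist only for this illustration.

```python
from datetime import date, timedelta
from typing import Optional, Tuple


def first_collection_date(signup: date) -> date:
    """First snapshot date that exists: sign-up date plus 48 hours."""
    return signup + timedelta(days=2)


def first_request_day(signup: date) -> date:
    """First day you can run a request: 96 hours after sign-up."""
    return first_collection_date(signup) + timedelta(days=2)


def window_after_signup(today: date, signup: date) -> Optional[Tuple[date, date]]:
    """Return (oldest, latest) queryable dates, or None if nothing is ready yet."""
    latest = today - timedelta(days=2)
    # Whichever is more recent: today minus 23, or the first collection date.
    oldest = max(today - timedelta(days=23), first_collection_date(signup))
    if oldest > latest:
        return None
    return oldest, latest


signup = date(2022, 10, 15)
print(first_request_day(signup))                       # 2022-10-19
print(window_after_signup(date(2022, 10, 29), signup))
```

With the dates from the example above, the window runs from 2022-10-17 to 2022-10-27. Returning `None` before the first request day is a design choice of this sketch, not MGDC behavior.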

7. Error messages

You might be wondering what happens if you query a date outside of these boundaries. Well, your request to MGDC will fail ☹.

You will see the error message when you monitor your Copy Data activity in Azure Data Factory or Azure Synapse. If the SharePoint data extract failed for a dataset because the date is outside of the valid date range, your error message (shown here for the Sites dataset) will look like this:

“ErrorCode=UserErrorOffice365DataLoaderError,

‘Type=Microsoft.DataTransfer.Common.Shared.HybridDeliveryException,

Message=Office365 data loading failed to execute.

office365LoadErrorType: PermanentError.

Table [BasicDataSet_v0.SharePointSites_v1] only support data for the past [21]days.

Please rerun the job with valid start and end dates,

Source=Microsoft.DataTransfer.ClientLibrary,'”

If the SharePoint data extract failed for a dataset because the data is not available for the date you requested, your error message will look like this:

“ErrorCode=UserErrorOffice365DataLoaderError,

‘Type=Microsoft.DataTransfer.Common.Shared.HybridDeliveryException,

Message=Office365 data loading failed to execute.

office365LoaderErrorType: PermanentError.

Your dataset request failed. Please verify the dates you used in the request. You might also want to review our documentation at https://aka.ms/mgdcdocs. If you just enabled the MGDC, try your request again in 48 hours. Please reach out to dataconnect@microsoft.com for further support.

Source=Microsoft.DataTransfer.ClientLibrary,'”
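If you capture these messages in your own monitoring scripts, you could tell the two cases apart by matching on the text shown above. The Python sketch below is only an illustration. It assumes the wording stays exactly as quoted in this post, which may change over time, and the function name and result labels are hypothetical.

```python
import re

# Pattern based on the first sample message quoted above (an assumption).
OUT_OF_RANGE = re.compile(
    r"Table \[(?P<table>[^\]]+)\] only support data for the past \[(?P<days>\d+)\]\s*days"
)
# Marker based on the second sample message quoted above (an assumption).
NOT_AVAILABLE_MARKER = "Your dataset request failed. Please verify the dates"


def classify_date_error(message: str) -> dict:
    """Label an error message as one of the two date-related failures."""
    match = OUT_OF_RANGE.search(message)
    if match:
        return {
            "kind": "outside_valid_range",
            "table": match.group("table"),
            "days": int(match.group("days")),
        }
    if NOT_AVAILABLE_MARKER in message:
        return {"kind": "data_not_available"}
    return {"kind": "unknown"}


sample = (
    "Table [BasicDataSet_v0.SharePointSites_v1] only support data "
    "for the past [21]days."
)
print(classify_date_error(sample))
```

Anything that matches neither pattern is labeled `unknown`, so other failures are not mistaken for date problems.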

8. Delta State Datasets

As mentioned earlier, you should specify the same date for both start date and end date to get a complete set of objects for that specific date, also called a “full pull”. If you specify different dates, you are asking for only the objects that changed between those two dates, also called a “delta pull”. For more details about deltas, read this blog: How to Use Delta State Datasets.
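As a small illustration of the rule above, this Python sketch labels a request as a full pull or a delta pull based on its start and end dates. The function name is hypothetical, and rejecting an end date earlier than the start date is an assumption of this sketch, not documented MGDC behavior.

```python
from datetime import date


def pull_type(start: date, end: date) -> str:
    """Return 'full' when both dates match, 'delta' when they differ."""
    if end < start:
        # Assumption for this sketch: treat a reversed range as a caller error.
        raise ValueError("end date must not be earlier than start date")
    return "full" if start == end else "delta"


print(pull_type(date(2022, 10, 27), date(2022, 10, 27)))  # full
print(pull_type(date(2022, 10, 20), date(2022, 10, 27)))  # delta
```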

9. Summary

In summary, you can query dates from [Today – 2 days] to [Today – 23 days] (or the day of your first collection, after you enabled MGDC for SharePoint, whichever is more recent). The latest date you can query is 2 days ago and that is probably what you are looking for. You should always use the same start date and end date to get a complete set of objects, unless you want only the objects that changed between the two dates.

I hope this blog post helped you better understand which dates you can query for SharePoint Data on the Microsoft Graph Data Connect. For more information about SharePoint Data in MGDC, please visit the collection of links I keep at https://aka.ms/SharePointData.

Announcing the limited general availability of Accelerated Connections

This is the first blog in a series of detailed efforts and offerings addressing network disaggregation in the datacenter and how it intersects with Azure’s new Accelerated Connections offering.

Part 1: Introduction to Accelerated Connections.

Part 2: How did we get here, where are we going?

Part 3: How can I tell which Azure Networking options are right for me?

Part 4: Optimizing your Azure workloads to take advantage of Accelerated Connections (NVAs, VMSS, web front ends, etc.).

We are pleased to announce limited GA of Accelerated Connections, a new Azure Network feature that delivers the highest connections per second (CPS) and total active connections (TAC) performance to the most demanding VM workloads through specialized hardware in the Azure fleet. Our technologies enhance VMs to perform at previously unattainable levels (up to 10 to 25 times previous performance). This is targeted at customers who need large numbers of connections held over time or established quickly, such as network virtual appliances, web front ends, and other connection-heavy critical infrastructure. This offering is part of a Microsoft technology stack that enables our customers to enhance and build network functionality in the cloud with a high degree of flexibility and performance.

Microsoft previously released Accelerated Networking, which provides high bandwidth and packets per second with ultra-low latency and jitter. We recently augmented our offerings with Azure Boost, which enables faster storage and networking performance for Azure VM customers (among other benefits). As part of the continued cloud story and delivery to customers, Accelerated Connections can be enabled to enhance both offerings. Currently, Accelerated Connections works with Accelerated Networking, and Boost support will roll out later this year. Both will receive enhanced CPS and TAC along with the additional improvements explained in the benefits section below. Together, these offerings allow Azure customers to scale to the most demanding cloud workloads and achieve near-bare-metal network performance in the cloud.

To learn more, watch the Ignite Video, read our industry papers or experience Accelerated Connections today by provisioning VMs listed in the documentation.

Continue reading to learn more about the benefits of using Accelerated Connections.

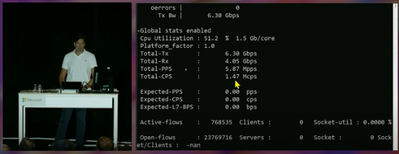

Pictured: Mark Russinovich demonstrating 1.5 million connections per second on a single Azure VM at Ignite using Accelerated Connections technologies.

Below, we continue to explore the benefits of enabling this feature…

Accelerated Connection Benefits:

Increased CPS

Increased TAC

Increased simultaneous active connections (SAC) and better connection handling

Increased reliability through disaggregation

Compatible with most VMs and highly configurable

Complementary to Boost and Network Acceleration

Potential cost savings by using fewer VMs for same workloads

Increased CPS:

Up to 25x improvement in CPS performance for NVAs in the Azure Marketplace versus current NVA capabilities

Up to 10x CPS performance on a single VM versus current VM capabilities

Increased performance for both UDP and TCP protocols confirmed and attested to by customers in public preview

Increased TAC:

Increased number of TAC (Flow Table) for VMs dependent on auxiliary SKU selection

Increased SAC and better connection handling:

Improved latency and connection handling for high-demand workloads, such as voice and video, that need to process many connections

Consistent throughput across a very large number of active connections

Reduced jitter on connection creation

Increased reliability through disaggregation:

Further isolation of complex networking operations by moving them off the host, resulting in improved overall VM performance on Azure hosts in the fleet

Compatible with most VM sizes in Azure and highly configurable:

Accelerated Connections uses new vNIC attributes on VMs, ‘auxiliary mode’ and ‘auxiliary sku’, that let you pick the performance level of the VM.

Future offering: Can be configured on existing VMs in your network without needing to create a new VM to support the feature

Runs on a wide list of compatible VM types that have 4 or more vCPUs

See Accelerated Connections for more details

Complementary to Boost and Network Acceleration: