Category: Microsoft

Sync Up Episode 09: Creating a New Future with OneDrive

Sync Up Episode 9 is now available on all your favorite podcast apps! This month, Arvind Mishra and I are talking with Liz Scoble and Libby McCormick about the power of Create.Microsoft.com and how we’re bringing that power into the OneDrive experience! Along the way, we learn a little more about ourselves, about TPS reports, and much more!

Show: https://aka.ms/SyncUp | Apple Podcasts: https://aka.ms/SyncUp/Apple | Spotify: https://aka.ms/SyncUp/Spotify | RSS: https://aka.ms/SyncUp/RSS

As always, we hope you enjoyed this episode! Let us know what you think in the comments below!

Microsoft Tech Community – Latest Blogs –Read More

Unlock the power of video with Microsoft Stream

Hi Microsoft 365 Insiders!

Experience seamless video collaboration with Microsoft Stream, a powerful platform that enables you to create, share, and view videos securely across the Microsoft 365 apps you use every day. You can use it to easily create useful and interesting video content, and leverage features like sharing, transcriptions, translations, chapters, search, and more.

Read the full overview in our latest blog!

We have also shared out on X/LinkedIn:

X: https://twitter.com/Msft365Insider/status/1767581318089482328

LinkedIn: https://www.linkedin.com/feed/update/urn:li:activity:7173347029945966592

Thanks!

Perry Sjogren

Microsoft 365 Insider Social Media Manager

Become a Microsoft 365 Insider and gain exclusive access to new features and help shape the future of Microsoft 365. Join Now: Windows | Mac | iOS | Android

Recording – Copilot Use Cases for HR: Copilot for Microsoft 365 Starter Series

Copilot Use Cases for HR: Copilot for Microsoft 365 Starter Series

Session 1 of 8

Watch! Copilot Use Cases for HR: Copilot for Microsoft 365 Starter Series with Microsoft’s Jaspreet Dhamija and Helen Guan, as well as specialist guest Helen Boter, Senior HR Leader at Microsoft. Together they demonstrate and discuss the use of Copilot for Microsoft 365 for HR.

Copilot for Microsoft 365 is a powerful tool that can significantly enhance the efficiency and effectiveness of Human Resources (HR) professionals in Healthcare and Life Sciences organizations. Here are some ways it can be beneficial:

Streamlined Recruitment:

Copilot automates resume screening, making it easier to identify top talent.

It assists in creating job descriptions, ensuring clarity and alignment with organizational needs.

Efficient Onboarding:

Simplifies document management during the onboarding process.

Provides personalized onboarding plans for new hires, ensuring a smooth transition.

Enhanced Employee Engagement:

Analyzes employee feedback to provide valuable insights.

Recommends tailored training programs to boost engagement and productivity.

Data-Driven Decision-Making:

Leverages Copilot’s big data platform to make informed HR decisions.

Enables evidence-based strategies for workforce management.

In the healthcare and life sciences sectors, Copilot can address specific use cases such as optimizing recruitment workflows, improving employee satisfaction, and ensuring compliance with industry regulations. By leveraging artificial intelligence and automation, Copilot empowers HR professionals to work smarter and achieve more with fewer resources.

Resources:

Microsoft Copilot for Microsoft 365 | Microsoft 365

Copilot for Microsoft 365 – Microsoft Adoption

Microsoft Copilot for Microsoft 365—Features and Plans | Microsoft 365

Get started with Copilot for Microsoft 365 – Training | Microsoft Learn

Microsoft Copilot for Microsoft 365 documentation | Microsoft Learn

Download the Slides from the Webinar in PDF format

You can see the rest of the upcoming Copilot for Microsoft 365 Starter series here.

Thanks for visiting – Michael Gannotti LinkedIn

Navigating Billing & Payouts in Azure Marketplace: Insights from Mastering the Marketplace

In the ever-evolving landscape of digital commerce, understanding the intricacies of billing and payouts is paramount for vendors looking to thrive in platforms like the Azure Marketplace. In this Mastering the Marketplace session, our subject matter experts delve deep into the nuances of setting up Billing and Payouts, offering invaluable guidance for those venturing into the realm of transactable offers. Led by the Marketplace FastTrack team, this webinar not only provides a comprehensive overview of how payouts operate within the Azure Marketplace but also sheds light on the intricate monthly payout processes. Join us as we unravel the complexities of billing and payouts, empowering vendors with the knowledge needed to navigate the Azure Marketplace with confidence and success.

Key areas covered:

Billing and Payouts

Learn how to set up your tax and payout profile for the Azure Marketplace

Setting up your Partner Center account and information on setting up taxes

Setting up users (add, create, remove) and roles & permissions

How to validate Partner Center account information

Create a tax and payout profile with guides and scenarios, for both US and foreign publishers, to help determine the best tax profile for your business scenario.

Create a payout profile in the currency to be paid.

Create a profile where the payout should be routed to.

Understand billing and payout process cycle

Currency– covers pricing of transactable offers and currency exchange impacts from the customer purchase to the publisher payout

Payout Schedule– based on the type of purchase made in the Azure Marketplace such as usage/consumption

Payout Requirements– provides information on when a publisher is eligible for payout

Payout Timeline– payouts are typically disbursed on or before the 15th of each month in the currency selected when setting up the payment profile. Payment can take up to 10 business days to arrive, depending on the payment method used

Explore the Partner Center Revenue dashboard and Earnings report

Walk through demo of the Revenue Dashboard and Earnings Report features, use cases, and details on best practices

To expand your knowledge of Billing and Payouts, register to watch the full recording of this Mastering the Marketplace webinar: Billing and Payouts

The session provides many resources that will help you navigate the Billing and Payment setup process. We have listed them below for your convenience.

Create a Partner Center account and enable commercial marketplace

Set up users and roles

Check account validation

Create a tax and payout profile

Microsoft Publisher Agreement

Add and Manage Users for the Commercial Marketplace

Assign User Roles and Permissions

Manage a commercial marketplace account in Partner Center

Set up commercial marketplace payout and tax profiles

Tax details for commercial marketplace publishers

Geographic availability and currency support for the commercial marketplace

Payout schedules and processes

Getting Paid in Partner Center

Payment thresholds, methods, and timeframes

Revenue dashboard in commercial marketplace analytics

Earnings pages in Partner Center

Earnings – Reports overview in Partner Center

Additional Mastering the Marketplace sessions are available Live and OnDemand to provide publishers with resources to successfully publish your solution to the Commercial Marketplace.

Have follow up questions about this presentation’s content? Comment below to continue the conversation with our subject matter experts!

__________________________________________________________________________________________________________________________________________________

Additional Resources:

Billing and Payouts

Govern and control using private Azure Marketplace – Microsoft marketplace

Azure Consumption Commitment enrollment – Marketplace publisher

Your commercial marketplace benefits – Marketplace publisher

Introduction to listing options – Microsoft commercial marketplace

Get support for the commercial marketplace program in Partner Center – Marketplace publisher

Payouts and tax profile FAQ – Marketplace publisher

Customer Billing and Invoice

Overview of billing and invoicing for marketplace customers – Microsoft marketplace

Review your individual Azure subscription bill – Microsoft Cost Management

Review your Azure Enterprise Agreement bill – Microsoft Cost Management

Review your Microsoft Customer Agreement bill – Azure – Microsoft Cost Management

Commercial Marketplace

Microsoft commercial marketplace publisher FAQ

Cloud Partner Portal migration FAQ

Get help and contact support in Partner Center

Partner Center announcements

Microsoft Partner Community – Microsoft Community Hub

Marketplace Community – Microsoft Community Hub

Publish an integrated solution

Configure your SaaS offer properties in Azure Marketplace

Standard Contract for Microsoft commercial marketplace

Cloud Solution Provider – Microsoft commercial marketplace – Azure – Marketplace publisher

Configure ISV to CSP partner private offers in Microsoft Partner Center – Marketplace publisher

Introducing SharePoint Embedded: Microsoft 365 features for Your Marketplace App

If you manage files and documents as part of your app, Microsoft SharePoint Embedded is a new product that makes it easier than ever to do this in a trusted, reliable way, while surfacing Microsoft 365 features as part of your app. In this webinar Microsoft’s Principal Product Manager, Akanksha Rakesh, and I share a technical overview of the product, information on M365 integration and billing, and much more.

Microsoft SharePoint Embedded is a cloud-based file and document management system suitable for use in any application. SharePoint Embedded is a new API-only solution that enables app developers to harness the power of the Microsoft 365 file and document storage platform for any app and is suitable for enterprises building line of business applications and ISVs building multitenant applications.

In this webinar you will learn about:

The core business problem that SharePoint Embedded solves is providing a unified content platform for enterprises that have multiple applications with different document repositories and capabilities.

A technical overview of SharePoint Embedded, including its architecture, features, permissions, integration with M365 experiences and Microsoft Purview, and billing models. A few of the SharePoint Embedded technical features covered are:

Creates a dedicated partition within the M365 tenant to store files and documents with consistent security, compliance, collaboration, and content AI features.

Manages files and documents inside SharePoint Embedded containers, a new, lightweight approach designed for apps.

Containers are flexible, scalable, and integrated with M365 experiences such as Office, Teams, and Purview.

Supports a consumptive billing model with two options: ISV-led billing and direct-to-customer billing.

Provides enterprise manageability with PowerShell, SharePoint Admin UX, and Graph APIs.

Supports a new admin role for logical isolation and separation of concerns.

Hear how Peppermint Connect, a legal workflow solution, leverages SharePoint Embedded for document management within Teams and Outlook

To learn more register to watch the webinar SharePoint Embedded: Microsoft 365 features for Your Marketplace App.

Comment below with questions to continue the conversation!

__________________________________________________________________________________________________________________________________________________

Additional Resources:

SharePoint Embedded Overview: SharePoint Embedded Overview

Enable SharePoint Embedded in your Tenant Enable SharePoint Embedded

Go deeper with self-paced learning modules: SharePoint Embedded – building applications – Training

Sample apps: SharePoint-Embedded-Samples/Samples at main · microsoft/SharePoint-Embedded-Samples · GitHub

VS Code extension

SharePoint Embedded for Visual Studio Code | Microsoft Learn

Introducing the SharePoint Embedded Visual Studio Code extension – Microsoft Community Hub

Armchair Architects: Considerations for Ethical and Responsible Use of AI in Applications

In this blog, our host, David Blank-Edelman, and our armchair architects Uli Homann and Eric Charran discuss the ethical and responsible considerations architects should weigh when using large language models (LLMs) in their applications, including the risks of leaking confidential information, societal biases, and increasing regulations.

Thinking about Confidentiality

As an architect, Eric worries about a couple of main areas in the AI space. First, there are ethical and responsible considerations for architects integrating LLMs into any type of design, collaboration, or application experience.

There are real-world examples of LLMs being unintentionally trained on confidential information that leaked, or of secure information unintentionally ending up in the corpus of data used to train them. In addition, societal biases and discrimination may be embedded in that training corpus. Architects need to think about platform-level strategies for data anonymization, access control, and algorithmic transparency, about how to assert those things to regulatory bodies, and about the specter of increasing regulation in this space.

Transparency in Algorithms and Responsible AI

Transparency of algorithms is a nice idea if you’re doing a little bit of data science and have some statistical models. However, if you have an LLM with trillions of variables, and the model is a closed model from OpenAI, Gemini, Anthropic, and so forth, how are you going to get transparency in algorithms?

Uli believes you won’t be able to get transparency in algorithms, and folks in the responsible AI space will then start to request explainable AI. But you can’t explain an LLM with trillions of variables; it just doesn’t work. In addition, LLMs expose the need to rethink what responsible AI means and to answer some questions before choosing an LLM.

Picking a Model and Partner

There needs to be a very clear picture of what you are going to choose as the basis of your LLM. There are really two choices: closed models such as OpenAI, Gemini, or whatever it may be, or open-source LLMs such as Llama and many other models. While open-source models offer a little more transparency because the source is open, the code is still very complex. You might not be the expert to really understand what that model is, and you also might not know who is behind the model.

Some considerations for the open-source LLMs are:

Is this a viable organization that has the right intention?

Where did this model come from?

Do they adhere to the specific promises they give you about what data is not misappropriated for their LLM?

How do you know that the model doesn’t have code embedded that you just can’t find?

Effectively, it starts with which model are you going to pick, which really means which partner are you going to pick? Then when you pick a partner, you have to ask “What are the promises that the partner is actually giving you?”

With OpenAI, there’s a version called Azure OpenAI and Azure OpenAI gives a couple of promises, for example:

Microsoft will never use your data to train the foundational model. That’s a guarantee Microsoft gives you and stands behind. Other competitors such as Google and AWS may be doing something similar.

Microsoft also guarantees that all data that you feed into the Azure OpenAI model is isolated from any other data that is being hosted in the same multi-tenant service.

After you have picked the right partnership, either open source or closed source, think through what you want to achieve with that capability. Then you have to take into consideration your company’s stance on ethics and responsible AI usage. When Uli talks about AI on stage, either publicly or in conversations with specific customers or partners, people get very excited. However, he recommends that one of the first things you do is create an AI charter.

One consideration is, “What is your company going to do with AI and what is it not going to do with AI?” This is done so that every employee and every partner that you work with understands what the job of AI in your world is.

Microsoft has been public since 2015 about what it thinks AI will and will not do. Internally, every Microsoft employee also has a path to escalate if a proposed usage of AI is one that they, as an individual, don’t feel comfortable with. That path is not part of the employee’s organizational management path, and they can use it to ask, “Is this really in line with our values?”

In summary, you first pick the model and the partner(s); the second thing is deciding what you are and are not going to do with AI. The last piece that Uli thinks about is “When you’re looking at large language models, you can’t go for explainable AI.” He believes that, unfortunately, it doesn’t work.

However, what you can do is go to observability, not explainability anymore. You can observe what’s going on. In an LLM, when you interact with it you use a prompt. You could argue that a prompt is like a model itself; you know exactly what the structure of the prompt is going to be, what you are going to add to the prompt before it enters the LLM, etc…

Create Model using JSON

You can observe what goes into the prompt, and you also know what’s coming out of the LLM. You can observe what the output is, and you can also create a model for the output, using JSON for example. OpenAI has a model, but there are also open-source capabilities like TypeChat that force the LLM to respond with output that conforms to a JSON schema.

This means you can now reason over that schema as a model and say, “Only data that fits that schema will effectively end up in my response.” This is a very good way of saying, “I don’t know how the LLM gets to the result, but I can guarantee the shape of what comes out.” We can prove that the output conforms to the schema, even though we don’t know exactly how the code path works. We know what we fed in, we know what came out, and we can enforce rules against the input and the output. That’s what Uli means by observability.
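To make the idea of enforcing rules on the output concrete, here is a minimal sketch in Python using only the standard library. The schema, its field names, and the `validate_llm_output` helper are hypothetical and chosen purely for illustration; they are not part of OpenAI, TypeChat, or any other product mentioned here. The sketch assumes the LLM was asked to reply with a single JSON object.

```python
import json

# Hypothetical schema for illustration: field name -> required Python type.
RESPONSE_SCHEMA = {"answer": str, "confidence": float, "sources": list}


def validate_llm_output(raw_text, schema=RESPONSE_SCHEMA):
    """Return the parsed object if it fits the schema, otherwise raise ValueError."""
    try:
        data = json.loads(raw_text)
    except json.JSONDecodeError as exc:
        raise ValueError(f"output is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("output must be a JSON object")
    # Reject both missing fields and fields the schema does not know about.
    extra = set(data) - set(schema)
    missing = set(schema) - set(data)
    if extra or missing:
        raise ValueError(
            f"unexpected fields {sorted(extra)}, missing fields {sorted(missing)}"
        )
    # Check the type of every declared field.
    for field, expected_type in schema.items():
        if not isinstance(data[field], expected_type):
            raise ValueError(f"field {field!r} should be {expected_type.__name__}")
    return data
```

Anything that fails validation can be logged and rejected before it reaches the rest of the application, which is the observable boundary described above: the check says nothing about how the model produced the text, only whether the text fits the agreed shape.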

These are the three elements that Uli generally talks through with customers and partners: start with the right partnership, define your AI perspective, and then work out how to observe rather than explain AI.

Practical Tips to get Started

From Eric’s perspective, some ways to get started are to focus on model reporting analytics and to investigate visualization and explanation tools. Then aggressively engage in studied user interaction and feedback, while keeping within the guidelines of the ethical considerations and your company’s AI charter that Uli mentioned earlier.

Model reporting analytics: clearly document the model architecture. You might not be able to dive into the layers, but you can also focus on the training data and sources. That includes understanding the dimensionality and cardinality of that data, what you’re obfuscating and tokenizing, what you’re removing from the data set, and what biases might exist in the underlying training data. Eric believes this will help developers understand how the model works and potentially pre-empt issues even before the foundational model development process or the fine-tuning process begins.

Uli has a differing opinion: what Eric is describing is awesome if you’re in a traditional model world, but LLMs are not traditional models. You don’t control the data set that OpenAI uses, that Gemini uses, that Anthropic uses. You don’t even know what they’re training on.

Financial Services Approach

Eric agreed with Uli’s point that LLMs are not traditional models and that you do not know what they are trained on. In the financial services industry, Eric is taking a combination of approaches.

One approach is consuming foundational models, whether from Hugging Face, by subscribing to cloud-based models, or through a hosted foundational model. Another, for organizations with proprietary information and data sets, is creating your own foundational model.

When you are curating and creating a data platform and using your own foundational model, you will have purview over and visibility into the dimensionality of the data that you feed your LLM to create your own hosted version. In this circumstance, documenting training data sources helps you get that visibility. Likewise, if Eric is downloading an LLM from GitHub and using it, he wants to start asking himself, “How much documentation is associated with that foundational model, and how do I know how it was made and trained?”

The owners or the creators of that LLM might not provide that in great detail, but it doesn’t mean that you shouldn’t be asking yourself these questions before you just start using it.

Commoditization of Generative AI

Uli is hearing a very large conversation going on around “Should I build my own foundation models, and what’s the return on investment on that capability?” Never in Eric’s career has he seen such a rapid commoditization of a computing workload as he has seen with generative AI.

Organizations need to ask themselves some questions such as:

Why would I create my own foundational model?

Why would I accrue a data platform which has petabytes of training data and then try to create my own custom infrastructure, build my own spines, and host this giant model on my own infrastructure?

The hyperscalers have yet to come up with their own concrete version of a custom LLM hosting environment. So why would I do all of that versus just subscribing to a model that already exists, one that I can configure and use, where my data transmissions are secure and will not be used for training, and where I don’t have to worry about betraying trade secrets in prompt interactions?

Feedback for Prompt Tuning

Let’s assume that an LLM is still a black box, and user interaction and feedback are very important. As you engage in prompt tuning, you want to see if you can develop confidence scores and human-in-the-loop systems that allow you to say “What if I ask this thing, these sets of questions, or provide these prompts” as you are tuning.

Some questions for consideration are:

How do I understand what the scope and spectrum of the models’ outputs are?

How can I root out problems prior to going to production, based on the prompts that people are asking, or maybe unexpected prompts that people are asking?

How can I avoid or minimize my surprise at a model’s particular outputs, in terms of veracity and accuracy, whether or not it’s hallucinating, whether or not it’s actually doing what you asked, or whether it’s just giving up too early in the process?

These are all things that you’re going to want to try to root out through aggressive interaction and feedback mechanisms prior to actually putting this thing in your app or releasing it to the world.
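One way to structure that kind of pre-production probing is a small harness that runs a fixed set of prompts through the model, applies an automated check to each output, and flags failures for a human reviewer. The sketch below is illustrative only: `stub_model` is a hypothetical stand-in for a real LLM call, `non_empty` is a deliberately trivial check, and the pass rate is just a crude aggregate, not a calibrated confidence score.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class PromptResult:
    prompt: str
    output: str
    passed: bool
    needs_human_review: bool


def run_prompt_suite(model: Callable[[str], str],
                     prompts: List[str],
                     check: Callable[[str, str], bool]) -> List[PromptResult]:
    """Run every prompt through the model and record whether the check passed."""
    results = []
    for prompt in prompts:
        output = model(prompt)
        passed = check(prompt, output)
        # Anything the automated check rejects goes to a human reviewer.
        results.append(PromptResult(prompt, output, passed, needs_human_review=not passed))
    return results


def pass_rate(results: List[PromptResult]) -> float:
    """Fraction of prompts whose output passed the check (0.0 for an empty suite)."""
    return sum(r.passed for r in results) / len(results) if results else 0.0


def stub_model(prompt: str) -> str:
    # Hypothetical stand-in for a real LLM call: gives up on "unexpected" prompts.
    return "" if "unexpected" in prompt else f"Echo: {prompt}"


def non_empty(prompt: str, output: str) -> bool:
    # Trivial check: the model did not give up with an empty answer.
    return bool(output.strip())
```

In practice the prompt list would mix expected and deliberately unexpected prompts, and the check would encode whatever "surprise" means for the application, such as a schema check or a comparison against a reference answer.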

Then finally, for Eric, there are the underpinning ethical considerations. As Uli mentioned earlier, if you’re in a foundational model world, you don’t know what the data sets are. They can tell you what the data sets are; you can believe them, or they might not even tell you. But the idea here is that through that exploration, that prompt tuning, and the very specific and stringent study of interactions, you can ask: how can you root out bias and mitigate it?

Some considerations are:

How can you make sure that it’s respectful of governance and privacy implementation considerations for your particular vertical?

When it goes wrong, how can you take accountability for that?

How can you understand the prompts and even create your own kind of catalogue of what the types of the spectrum of responses are?

If you’re using retrieval-augmented generation (RAG), how can RAG synergistically provide quality gates during the prompt and response process?

Content safety

Uli discusses foundational models as a service, a capability that the hyperscalers are providing alongside capabilities to host your own model. Uli is going to focus the discussion on closed models such as OpenAI, Gemini and so forth. They are just LLMs that provide you with answers depending on what you ask, whether through RAG or other patterns. Now there are complementary services that companies like Microsoft have built, called content safety.

They allow you to take your prompt and feed it into another LLM, which goes through the prompt to see if there’s anything offensive in it and to detect things like jailbreaks, where someone tries to trick the model into doing something that you don’t want it to do.

Content safety is a service from Microsoft, and there are other capabilities out there that you can use to safeguard your prompt input and your output. When you are using Azure OpenAI, it is built in; you can’t avoid it.

For example, the prompt coming in is filtered, and the result coming out is reviewed and filtered according to policies that you can set up. There’s a default set of policies, but you can tune them based on your requirements. These content safety capabilities draw on the entire learning from responsible AI journeys.
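The shape of that input and output filtering can be sketched as a wrapper around the model call. This is not how the Azure service is implemented or called: the real service uses a model-based classifier, whereas the substring check here is a deliberately naive stand-in, and `guarded_call`, `violates_policy`, and the default blocked terms are all hypothetical names chosen for illustration.

```python
from typing import Callable, Sequence

# Hypothetical default policy; a real deployment would tune this per requirement.
DEFAULT_BLOCKED_TERMS = ("ignore previous instructions",)


def violates_policy(text: str, blocked_terms: Sequence[str]) -> bool:
    """Naive stand-in for a content classifier: case-insensitive substring match."""
    lowered = text.lower()
    return any(term.lower() in lowered for term in blocked_terms)


def guarded_call(model: Callable[[str], str],
                 prompt: str,
                 blocked_terms: Sequence[str] = DEFAULT_BLOCKED_TERMS) -> str:
    """Filter the prompt on the way in and the response on the way out."""
    if violates_policy(prompt, blocked_terms):
        return "[prompt blocked by policy]"
    output = model(prompt)
    if violates_policy(output, blocked_terms):
        return "[response blocked by policy]"
    return output
```

The point of the sketch is the control flow: the same policy is applied on both sides of the model, and the model itself is treated as an opaque function.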

This is not new, this is something Microsoft has been doing for a while as an industry and are incorporating that capability set into a single service that filters bias all that stuff based upon what you know. The goal is to not go and say” I know exactly how this thing got trained, I know exactly and control what it got fed.” There are certain areas where you just can’t know. So, you now focus on what you can control, which is the input and the output into the system. Uli thinks that’s a different philosophy for responsible AI than we had before.

Thank you for reading the insights provided by Uli and Eric. You can also view the video version below.

Resources

Microsoft Azure AI Fundamentals: Generative AI

Responsible and trusted AI

Architectural approaches for AI and ML in multitenant solutions

Training: AI engineer

Responsible use of AI with Azure AI services

Check out the other Azure Enablement Show videos


Seamless Integration: Enhancing your Static Web App by adding an Azure Functions backed AI Chatbot

Introduction

Prerequisites

.NET 6.0 or greater (Download here: Download .NET 6.0)

Node.js installed on your machine

An Azure Account

Azure OpenAI resource or an OpenAI account (If you are using Azure OpenAI follow these steps: Create and deploy an Azure OpenAI Service resource)

Azure Storage emulator (e.g., Azurite setup guide here: Use the Azurite emulator for local Azure Storage development)

Step-by-Step Guide

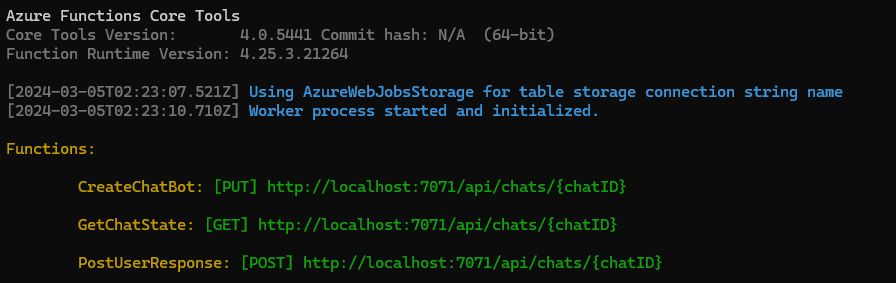

Deploy an Azure Function App with OpenAI Extension

Getting Started

1. Clone the Extension and Sample Repository: Start by cloning the repository from https://github.com/Azure/azure-functions-openai-extension/. To better understand how this integration works I also recommend reading the docs regarding the general requirements as well as the chatbot sample specific instructions.

2. Navigate to the Sample Chatbot Project: Once cloned, navigate to the sample chatbot project located at samples/chat/nodejs. We will use this sample project to deploy to our Azure Function App and expose our chatbot endpoints that will later on be consumed by our Static Web App.

3. Configure Environment Variables for testing: To connect to your OpenAI resource locally during testing, configure the required environment variables in the samples/chat/nodejs/local.settings.json file. Depending on your setup, use one of the following templates:

For Azure OpenAI resource you will have to add CHAT_MODEL_DEPLOYMENT_NAME, AZURE_OPENAI_KEY and AZURE_OPENAI_ENDPOINT:

{
  "IsEncrypted": false,
  "Values": {
    "AzureWebJobsStorage": "UseDevelopmentStorage=true",
    "AzureWebJobsFeatureFlags": "EnableWorkerIndexing",
    "FUNCTIONS_WORKER_RUNTIME": "node",
    "CHAT_MODEL_DEPLOYMENT_NAME": "<your-deployment_name>",
    "AZURE_OPENAI_KEY": "<your_key_here>",
    "AZURE_OPENAI_ENDPOINT": "https://<your-openai-endpoint>.openai.azure.com/"
  }
}

Or, for direct OpenAI API access you will have to add OPENAI_KEY. You can also override the CHAT_MODEL_DEPLOYMENT_NAME if needed:

{
  "IsEncrypted": false,
  "Values": {
    "AzureWebJobsStorage": "UseDevelopmentStorage=true",
    "AzureWebJobsFeatureFlags": "EnableWorkerIndexing",
    "FUNCTIONS_WORKER_RUNTIME": "node",
    "CHAT_MODEL_DEPLOYMENT_NAME": "gpt-3.5-turbo",
    "OPENAI_KEY": "<your_openai_key_here>"
  }
}
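Before starting the app, it can help to confirm that the variables named above are actually filled in. The following is a minimal sketch, not part of the sample repository: `missingSettings` and the `REQUIRED` table are hypothetical names, and the only facts taken from the post are the setting keys themselves.

```javascript
// Required keys per setup, taken from the two templates above.
const REQUIRED = {
  azure: ['CHAT_MODEL_DEPLOYMENT_NAME', 'AZURE_OPENAI_KEY', 'AZURE_OPENAI_ENDPOINT'],
  openai: ['OPENAI_KEY'],
};

// Returns the required keys that are absent, empty, or still contain a <placeholder>.
function missingSettings(settings, mode) {
  const values = (settings && settings.Values) || {};
  return REQUIRED[mode].filter((key) => {
    const value = values[key];
    return typeof value !== 'string' || value.trim() === '' || /<[^>]+>/.test(value);
  });
}
```

In a Node.js script you could pass it the parsed file, for example `missingSettings(JSON.parse(fs.readFileSync('local.settings.json', 'utf8')), 'azure')`, and stop early if the returned list is not empty.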

4. Start the Application Locally: Test your chatbot by running it locally. Use the following commands:

azurite --silent --location c:\azurite --debug c:\azurite\debug.log --blobPort 8888 --queuePort 9999 --tablePort 11111

npm install && dotnet build --output bin && npm run build && npm run start

Create a chat session:

PUT http://localhost:7071/api/chats/TailoredTales

{
  "instructions": "You are TailoredTales, a guide in the quest for the perfect read. Respond with brief, engaging hints, leading seekers to books that fit as if destined, blending curiosity with the promise of a great discovery."
}

To send messages to the bot, execute the following request:

POST http://localhost:7071/api/chats/TailoredTales

What’s a captivating book that blends mystery with a touch of magic, ideal for someone who loves both genres and is looking for a new book?

To list all questions with answers for later use in populating your chatbot window, execute the following:

GET http://localhost:7071/api/chats/TailoredTales?timestampUTC=2023-11-9T22:00

Deploy to an Azure Function App

Develop and deploy the front end to Azure Static Web Apps

Let’s create a new React app

1. Set Up the Project: Create a new project for your front-end app. Initialize it with the chosen framework or tools. For this demo we will be using React.js. We will also install axios to make HTTP requests to interact with our chatbot backend. Feel free to use any other technology you are comfortable with.

npx create-react-app chatbot-app

cd chatbot-app

npm install axios

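2. Creating the Chat Service: Create a ChatService module (imported later as ./ChatService) that wraps the calls to the chatbot backend. Here’s a summary of its functionality:

It can create a new chat session by calling the createChat API.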

It can post messages to an existing chat session using the postMessage API.

It can retrieve the state of a chat session at a specific timestamp using the getChatState API.

The <function-hostname> in the BASE_URL is only required for local testing. As soon as we have linked the deployed Static Web App to our Function App, the integration will fully handle routing and authentication for us, and we can simply make calls to the Static Web App’s hostname by changing the BASE_URL to /api/chats/. For now, replace the value with either the local host running the chatbot app or your Function App’s hostname (in that case you will also have to configure CORS on the Function App).

import axios from 'axios';

const BASE_URL = '<function-hostname>/api/chats/';

// Creates a chat session with the given instructions.
export const createChat = async (chatId, instructions) => {
  try {
    const response = await axios.put(`${BASE_URL}${chatId}`, {
      instructions,
    });
    return response.data;
  } catch (error) {
    console.error("Error creating chat:", error);
    throw error;
  }
};

// Posts a plain-text user message to an existing chat session.
export const postMessage = async (chatId, message) => {
  try {
    await axios.post(`${BASE_URL}${chatId}`, message, {
      headers: {
        'Content-Type': 'text/plain',
      },
    });
  } catch (error) {
    console.error("Error posting message:", error);
    throw error;
  }
};

// Retrieves the chat state as of the given UTC timestamp.
export const getChatState = async (chatId, timestampUTC) => {
  try {
    const response = await axios.get(`${BASE_URL}${chatId}`, {
      params: { timestampUTC },
    });
    return response.data;
  } catch (error) {
    console.error("Error getting chat state:", error);
    throw error;
  }
};
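The switch between a local Function host and the linked Static Web App route, described before the service code, could be captured in one small helper instead of editing BASE_URL by hand. This is a sketch under assumptions: `resolveBaseUrl` is a hypothetical name, and reading the host from an environment variable such as `REACT_APP_FUNCTION_HOST` is my own suggestion, not something the original project does.

```javascript
// Hypothetical helper: returns the base URL for chat API calls.
// An empty or missing host means "use the Static Web App's own /api route".
function resolveBaseUrl(functionHost) {
  if (!functionHost) {
    return '/api/chats/';
  }
  // Trim trailing slashes so the result never contains "//api".
  return `${functionHost.replace(/\/+$/, '')}/api/chats/`;
}
```

With Create React App, which exposes variables prefixed with `REACT_APP_` at build time, usage might look like `const BASE_URL = resolveBaseUrl(process.env.REACT_APP_FUNCTION_HOST);`. Leaving the variable unset in the deployed build then falls back to the relative route.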

3. Creating the Chat Box Component: We are creating a component responsible for creating a chat interface and handling chat interactions with a chatbot backend. Here’s a summary of its functionality:

State Management: It uses React state hooks to manage the following state variables:

chatId: Stores the unique identifier for the chat session.

message: Stores the user’s input message.

messages: Stores the chat messages exchanged between the user and the chatbot.

lastUpdate: Keeps track of the timestamp of the last chat message update.

Initialization: When the component mounts, it initializes a chat session by calling the createChat function from the ChatService and sets the chatId.

Polling for Messages: It sets up a polling mechanism (pollForMessages) to check for new chatbot responses at regular intervals. When new responses are received, they are added to the messages state.

Message Submission: When the user submits a message, it adds the user’s message to the chat interface, calls the postMessage function to send the message to the chatbot backend, and triggers the polling for new chatbot responses.

Scrolling: It ensures that the chat interface automatically scrolls to display the latest messages at the bottom.

Rendered Elements: It renders a chat interface with the chat messages and an input field for users to type their messages.

Conditional Rendering: Messages from the chatbot are displayed with an “Assistant” label.

import React, { useState, useEffect, useRef } from 'react';
import { createChat, postMessage, getChatState } from './ChatService';
import './ChatBox.css';

const ChatBox = () => {
  const [chatId, setChatId] = useState('');
  const [message, setMessage] = useState('');
  const [messages, setMessages] = useState([]);
  const [lastUpdate, setLastUpdate] = useState(new Date().toISOString());
  const messagesEndRef = useRef(null);

  // Create a chat session once, when the component mounts.
  useEffect(() => {
    const initChat = async () => {
      const chatSessionId = `chat_${new Date().getTime()}`;
      const instructions = "You are TailoredTales, a guide in the quest for the perfect read. Respond with brief, engaging hints, leading seekers to books that fit as if destined, blending curiosity with the promise of a great discovery.";
      setChatId(chatSessionId);
      await createChat(chatSessionId, instructions);
    };
    initChat();
  }, []);

  // Poll for assistant replies every 200 ms, giving up after 10 seconds.
  const pollForMessages = async () => {
    const maxPollingDuration = 10000;
    const pollingInterval = 200;
    let totalPollingTime = 0;

    const poll = setInterval(() => {
      getChatState(chatId, lastUpdate).then(data => {
        if (data !== null) {
          const assistantMessages = data.RecentMessages.filter(msg => msg.Role === 'assistant');
          if (assistantMessages.length > 0) {
            setMessages(prevMessages => [...prevMessages, ...assistantMessages]);
            setLastUpdate(new Date().toISOString());
            clearInterval(poll);
          }
        }
      });
      totalPollingTime += pollingInterval;
      if (totalPollingTime >= maxPollingDuration) {
        clearInterval(poll);
      }
    }, pollingInterval);
  };

  // Show the user's message immediately, send it, then wait for the reply.
  const handleSubmit = async (e) => {
    e.preventDefault();
    if (message) {
      const tempMessage = {
        Content: message,
        Role: 'user',
        id: new Date().getTime(),
      };
      setMessages(prevMessages => [...prevMessages, tempMessage]);
      await postMessage(chatId, message);
      setMessage('');
      pollForMessages();
    }
  };

  // Keep the newest message in view.
  useEffect(() => {
    const messagesContainer = messagesEndRef.current;
    if (messagesContainer) {
      messagesContainer.scrollTop = messagesContainer.scrollHeight;
    }
  }, [messages]);

  return (
    <div className="chatbox-container">
      <div className="chatbox-header">
        Tailored Tales
      </div>
      <div className="chatbox-messages" ref={messagesEndRef}>
        {messages.map((msg, index) => (
          <div key={index} className={`message ${msg.Role}`}>
            {msg.Role === 'assistant' && <div className="message-role">Assistant</div>}
            <span>{msg.Content}</span>
          </div>
        ))}
      </div>
      <form onSubmit={handleSubmit} className="chatbox-form">
        <input
          type="text"
          value={message}
          onChange={(e) => setMessage(e.target.value)}
          placeholder="Type here…"
          className="chatbox-input"
        />
      </form>
    </div>
  );
};

export default ChatBox;
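The component polls with setInterval, which can start a new request before the previous one has returned. One possible alternative, shown here only as a sketch, awaits each request before scheduling the next. The helper name `pollForAssistantMessages` and its injected `fetchState` and `sleep` parameters are assumptions made for illustration and testability; the `RecentMessages` and `Role` fields are the ones the component already reads.

```javascript
// Sketch: sequential polling with a time budget.
// fetchState: async function returning the chat state (or null).
// sleep: async function that waits the given number of milliseconds.
async function pollForAssistantMessages({ fetchState, sleep, intervalMs = 200, maxDurationMs = 10000 }) {
  let elapsed = 0;
  while (elapsed < maxDurationMs) {
    const data = await fetchState();
    const recent = (data && data.RecentMessages) || [];
    const assistantMessages = recent.filter((msg) => msg.Role === 'assistant');
    if (assistantMessages.length > 0) {
      return assistantMessages;
    }
    await sleep(intervalMs);
    elapsed += intervalMs;
  }
  // Time budget exhausted without an assistant reply.
  return [];
}
```

Inside the component this could be called with `fetchState: () => getChatState(chatId, lastUpdate)` and `sleep: (ms) => new Promise((resolve) => setTimeout(resolve, ms))`, appending the returned messages to state. Note that the budget counts only the waits between requests, not the time the requests themselves take.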

import ChatBox from './ChatBox';

function App() {
  return (
    <div className="App">
      <ChatBox />
    </div>
  );
}

export default App;

@import url('https://fonts.googleapis.com/css2?family=Inter:wght@400;500;600&display=swap');

* {

font-family: 'Inter', sans-serif;

}

@import url('https://fonts.googleapis.com/css2?family=Inter:wght@400;500;600&display=swap');

.chatbox-container {

position: fixed;

bottom: 10px;

right: 10px;

width: 320px;

height: 600px;

display: flex;

flex-direction: column;

justify-content: space-between;

background-color: #fff;

border-radius: 16px;

box-shadow: rgba(9, 30, 66, 0.25) 0px 1px 1px, rgba(9, 30, 66, 0.13) 0px 0px 1px 1px;

font-family: 'Inter', sans-serif;

padding-top: 0;

}

.chatbox-header {

padding: 12px 20px;

border-top-left-radius: 8px;

border-top-right-radius: 8px;

font-size: 1.2em;

box-shadow: 0 2px 2px rgba(0, 0, 0, 0.05);

text-align: left;

font-weight: 900;

}

.chatbox-messages {

flex-grow: 1;

padding: 15px;

overflow-y: auto;

display: flex;

flex-direction: column;

gap: 10px;

overflow-y: auto;

margin-top: 0;

scrollbar-width: none;

}

.message {

max-width: 75%;

word-wrap: break-word;

padding: 10px 14px;

border-radius: 18px;

line-height: 1.4;

position: relative;

margin-bottom: 4px;

}

.message.user {

align-self: flex-end;

background-color: #5851ff;

color: #fff;

border-bottom-right-radius: 4px;

}

.message.assistant {

align-self: flex-start;

background-color: #efefef;

color: #333;

border-bottom-left-radius: 4px;

}

.chatbox-form {

display: flex;

padding: 10px 15px;

background-color: #ffffff;

box-shadow: 0 -2px 2px rgba(0, 0, 0, 0.1);

border-bottom-left-radius: 16px;

border-bottom-right-radius: 16px;

}

.chatbox-input {

flex-grow: 1;

margin-right: 8px;

padding: 10px;

border: 0px solid #d1d1d4;

border-radius: 18px;

background-color: #ffffff;

outline: none;

}

.message-role {

font-size: 0.7rem;

color: #6c757d;

margin-bottom: 2px;

}

Deploy Your React App to Azure Static Web Apps

Link your Function App to your Static Web Apps

You are all done! 🥳

You can now go to your static web app and start asking your Tailored Tales bot anything about books.

Links

Final React Project: https://github.com/annikel/chatbot-app

Annina Keller is a software engineer on the Azure Static Web Apps team. (Twitter: @anninake)


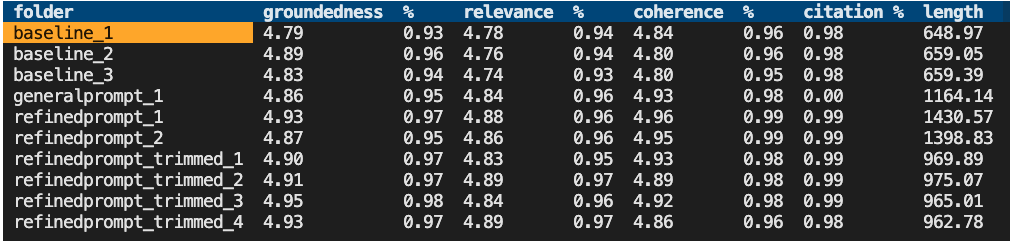

Evaluating a RAG chat app: Approach, SDKs, and Tools

When we’re programming user-facing experiences, we want to feel confident that we’re creating a functional user experience – not a broken one! How do we do that? We write tests, like unit tests, integration tests, smoke tests, accessibility tests, load tests, and property-based tests. We can’t automate all forms of testing, so we test what we can, and hire humans to audit what we can’t.

But when we’re building RAG chat apps built on LLMs, we need to introduce an entirely new form of testing to give us confidence that our LLM responses are coherent, grounded, and well-formed.

We call this form of testing “evaluation”, and we can now automate it with the help of the most powerful LLM in town: GPT-4.

How to evaluate a RAG chat app

The general approach is:

Generate a set of “ground truth” data: at least 200 question-answer pairs. We can use an LLM to generate that data, but it’s best to have humans review it and update it continually based on real usage examples.

For each question, pose the question to your chat app and record the answer and context (data chunks used).

Send the ground truth data with the newly recorded data to GPT-4 and prompt it to evaluate its quality, rating answers on 1-5 scales for each metric. This step involves careful prompt engineering and experimentation.

Record the ratings for each question, compute average ratings and overall pass rates, and compare to previous runs.

If your statistics are better or equal to previous runs, then you can feel fairly confident that your chat experience has not regressed.
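The last two steps, computing averages and pass rates and then comparing them with a previous run, can be sketched as follows. This is an illustration only and not code from the azure-ai-generative SDK or the evaluator repository: the function names, the metric names in the usage note, and the pass threshold of 4 are all assumptions.

```javascript
// Each result holds the 1-5 ratings for one question, keyed by metric name.
// Returns, per metric, the mean rating and the share of ratings at or above the threshold.
function summarize(results, passThreshold = 4) {
  const totals = {};
  for (const result of results) {
    for (const [metric, rating] of Object.entries(result)) {
      const entry = totals[metric] || (totals[metric] = { total: 0, passed: 0, count: 0 });
      entry.total += rating;
      entry.count += 1;
      if (rating >= passThreshold) entry.passed += 1;
    }
  }
  const stats = {};
  for (const [metric, { total, passed, count }] of Object.entries(totals)) {
    stats[metric] = { mean: total / count, passRate: passed / count };
  }
  return stats;
}

// True when any metric in the baseline is missing or worse in the candidate run.
function hasRegressed(baseline, candidate) {
  return Object.keys(baseline).some((metric) => {
    const current = candidate[metric];
    return !current
      || current.mean < baseline[metric].mean
      || current.passRate < baseline[metric].passRate;
  });
}
```

For example, `summarize([{ groundedness: 5, relevance: 4 }, { groundedness: 3, relevance: 4 }])` gives groundedness a mean of 4 and a pass rate of 0.5, and `hasRegressed(previousStats, newStats)` returning false corresponds to the "better or equal" condition above.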

Evaluate using the Azure AI Generative SDK

A team of ML experts at Azure have put together an SDK to run evaluations on chat apps, in the azure-ai-generative Python package. The key functions are:

QADataGenerator.generate(text, qa_type, num_questions): Pass a document, and it will use a configured GPT-4 model to generate multiple Q/A pairs based on it.

evaluate(target, data, data_mapping, metrics_list, …): Point this function at a chat app function and ground truth data, configure what metrics you’re interested in, and it will ask GPT-4 to rate the answers.

Start with our batch evaluator repo

Since I’ve been spending a lot of time maintaining our most popular RAG chat app solution, I wanted to make it easy to test changes to that app’s base configuration – but also make it easy for any developers to test changes to their own RAG chat apps. So I’ve put together ai-rag-chat-evaluator, a repository with command-line tools for generating data, evaluating apps (local or deployed), and reviewing the results.

For example, after configuring an OpenAI connection and Azure AI Search connection, generate data with this command:

python3 -m scripts generate --output=example_input/qa.jsonl --numquestions=200

To run an evaluation against ground truth data, run this command:

python3 -m scripts evaluate --config=example_config.json

You’ll then be able to view a summary of results with the summary tool:

You’ll also be able to easily compare answers across runs with the compare tool:

For more details on using the project, check the README and please file an issue with any questions, concerns, or bug reports.

When to run evaluation tests

This evaluation process isn’t like other automated testing that CI would run on every commit, as it is too time-intensive and costly.

Instead, RAG development teams should run an evaluation flow when something has changed about the RAG flow itself, like the system message, LLM parameters, or search parameters.

Here is one possible workflow:

A developer tests a modification of the RAG prompt and runs the evaluation on their local machine, against a locally running app, and compares to an evaluation for the previous state (“baseline”).

That developer makes a PR to the app repository with their prompt change.

A CI action notices that the prompt has changed, and adds a comment requiring the developer to point to their evaluation results, or possibly copy them into the repo into a specified folder.

The CI action could confirm the evaluation results exceed or are equal to the current statistics, and mark the PR as mergeable. (It could also run the evaluation itself at this point, but I’m wary of recommending running expensive evaluations twice).

After any changes are merged, the development team could use an A/B or canary test alongside feedback buttons (thumbs up/down) to make sure that the chat app is working as well as expected.
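The CI step in this workflow, noticing that the RAG flow changed and asking for evaluation results, could be sketched as a small decision function. Everything here is hypothetical: the watched paths, the function names, and the status strings are placeholders for whatever your repository and CI system actually use.

```javascript
// Hypothetical locations of the files that define the RAG flow.
const WATCHED_PATHS = ['app/prompts/', 'app/search_config.json'];

// True when any changed file is a watched file or lives under a watched folder.
function touchesRagFlow(changedFiles, watchedPaths = WATCHED_PATHS) {
  return changedFiles.some((file) =>
    watchedPaths.some((watched) =>
      watched.endsWith('/') ? file.startsWith(watched) : file === watched
    )
  );
}

// Decides what the CI check should report for a pull request.
function checkPullRequest(changedFiles, hasEvaluationResults) {
  if (!touchesRagFlow(changedFiles)) {
    return 'mergeable';
  }
  return hasEvaluationResults ? 'compare-with-baseline' : 'evaluation-required';
}
```

A pull request that only edits documentation would pass straight through, while one that edits a prompt file without attached results would be flagged until the developer supplies them.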

We’d love to hear how RAG chat app development teams are running their evaluation flows, to see how we can help in providing reusable tools for all of you. Please let us know!


Can’t Enable DKIM in Office 365

AOL, Yahoo, and Verizon (using the same email server) are now requiring that SPF, DKIM, and DMARC be enabled for emails sent to those addresses. I saw a report that Google will soon require this too. My problem is that to enable DKIM, I have to create 2 CNAME records. I have created these records on 4 accounts on 4 different providers. I have another account that actually works, but DKIM was enabled even without the CNAMES. I also passed the DMARC check on that account, so I know that I have managed to put in the correct entries in the CNAME records. But, I still get the error message about needing the CNAME records before enabling DKIM.

Another curiosity is that on none of my accounts can I detect CNAME records, even CNAMEs that were already there? What do I have to do to enable DKIM on these accounts (a total of 6 accounts on 4 different hosts)?


Empowering.Cloud Community Update – March 2024

In this month’s Empowering.Cloud community update, we cover the latest briefings from MVPs, the Microsoft Teams Monthly Update, updates in the Operator Connect world and upcoming industry events. There’s lots to look forward to!

Troubleshooting your Meeting Room Experience

https://app.empowering.cloud/briefings/329/Troubleshooting-your-Meeting-Room-Experience

Jason Wynn, MVP and Presales Specialist at Carillion, shows us how to get the most out of our meeting room experience, troubleshoot some common issues and explains how and why we’re trying to get all this information together.

Use of Teams Admin Center, Microsoft Pro Portal and Power BI reports

Configuration and live information from Microsoft

Monitoring hardware health and connectivity

Live environment analysis and troubleshooting

Network performance and jitter rates

Call performance analysis and optimisation

Introduction to Frontline Workers in M365

https://app.empowering.cloud/briefings/327/Take-Charge-of-The-Microsoft-Teams-Phone-Experience

MVP Kevin McDonnell introduces us to the topic of M365 for Frontline Workers, including challenges faced by frontline workers and how Microsoft 365 can help provide a solution to some of these.

Challenges faced by Frontline Workers

Challenges faced by managers and organizers

Solutions in Microsoft 365 for Frontline Workers include:

M365 can help boost productivity, improve employee experience and provide personalized information and support for Frontline Workers

Where is My Microsoft 365 Data Stored?

https://app.empowering.cloud/briefings/350/Where-is-my-Microsoft365-Data-Stored

In another one of the latest community briefings, MVP Nikki Chapple tells us all about where our M365 data is stored.

The importance of knowing where your Microsoft 365 data is stored

Microsoft 365 data is stored in various locations including user mailboxes, group mailboxes, OneDrive, SharePoint sites and external locations

The location of data storage may vary depending on user location and compliance requirements

Microsoft Teams Monthly Update – February 2024

https://app.empowering.cloud/briefings/349/Microsoft-Teams-Monthly-Update-February-2024

In this month’s Microsoft Teams monthly update, MVP Tom Arbuthnot gives us the rundown on all the latest Microsoft Teams news, including new certified devices and Shared Calling in TAC.

Teams and Microsoft Apps on Apple Vision Pro

Shared Calling now in Teams Admin Center

Teams 2.1 client cutover coming soon

Improved Copilot in Teams and in Windows for prompting, chat history and a prompt library

Microsoft 365 Backup Public Preview with fast restorability and native data format

Android 9 and Android 10 Device Certificate Extensions

Pexip bringing a ‘Teams-like experience’ to Cloud Video Interop (CVI)

Microsoft Teams Insider Podcast

Complex Voice Strategies for Global Organizations with Zach Bennett

https://www.teamsinsider.show/2111467/14508325

Zach Bennett, Principal Architect at LoopUp, came along to the Teams Insider Show to discuss Teams Phone options for complex and global organizations.

The Role of AI in Contact Centers and Regulatory Considerations with Philipp Beck

Philipp Beck, Former CEO and Founder of Luware, and MVP Tom Arbuthnot delve into key developments in the world of Microsoft Teams and Contact Center.

Microsoft Teams Operator Connect Updates

The numbers are continuing to rise in the Microsoft Teams Operator Connect world with there now being 89 operators and 86 countries covered. Will we reach 100 providers or countries first?!

Check out our full Power BI report of all the Operators here:

https://app.empowering.cloud/research/microsoft-teams-operator-connect-providers-comparison

Upcoming Community Events

Teams Fireside Chat – 14th March, 16:00 GMT | Virtual

Hosts: MVP Tom Arbuthnot

Guest Speaker: MVPs and Microsoft speakers LIVE from MVP summit

This month’s Teams Fireside Chat is a special one as Tom Arbuthnot will be hosting live from the MVP Summit at the Microsoft campus in Redmond, where he’ll be joined by other MVPs for an expertise-filled session.

Registration Link: https://events.empowering.cloud/event-details?recordId=recJNyAGoTadbcfMN

Microsoft Teams Devices Ask Me Anything – 18/19th March | Virtual

Microsoft Teams Devices Ask Me Anything is a monthly community event that gives you an update on the important Microsoft Teams devices news, as well as the chance to ask questions and get them answered by the experts. We have 2 sessions to cover different time zones, so there’s really no excuse not to come along to at least one!

EMEA/NA – 18th March, 16:00 GMT | Virtual

Hosts: MVP Graham Walsh, Michael Tressler, Jimmy Vaughan

Registration Link: https://events.empowering.cloud/event-details?recordId=recnbltzoOt2pQ2wF

APAC – 19th March, 17:30 AEST | Virtual

Hosts: MVP Graham Walsh, Phil Clapham, Andrew Higgs, Justin O’Meara

Registration Link: https://events.empowering.cloud/event-details?recordId=recsMBe3O6J10xSC2

Everything You Need to Know as a Microsoft Teams Service Owner at Enterprise Connect – 25th March | In-Person

Training Session: led by MVP Tom Arbuthnot

Whether you’re in the network team, telecoms team or part of the Microsoft 365 team, MVP Tom Arbuthnot’s training session will help you avoid common pitfalls and boost your success as he takes you through everything you need to know as a Microsoft Teams Service Owner.

Registration Link: https://enterpriseconnect.com/training

Teams Fireside Chat – 11th April, 16:00 GMT | Virtual

Hosts: MVP Tom Arbuthnot

Guest Speaker: Vandana Thomas, Product Leader, Microsoft Teams Phone Mobile

Join other community members as we chat with Microsoft’s Product Leader for Teams Phone Mobile, Vandana Thomas, on April’s Teams Fireside Chat. As usual, we’ll open up the floor to discussion, so bring along your burning Microsoft Teams questions to get them answered by the experts.

Registration Link: https://events.empowering.cloud/event-details?recordId=recLQiD9PjZbQahog

Comms vNext – 23-24 April | In-Person | Denver, CO

Comms vNext is the only conference in North America dedicated to Microsoft Communications and Collaboration Technologies and aims to bring the community together for an event full of deep technical sessions from experts, an exhibition hall with 40 exhibitors and some great catering too!

Registration Link: https://www.commsvnext.com/

That’s all for this month, so look out for the next update in April with all the news from Enterprise Connect!


Unable to enter network after launching update KB5034848

Today I reactivated my computer and updated to the latest version of Windows 11 (23H2, x64, KB5034848). Some time later I lost my connection to the internet, while my router and another laptop are still able to access the internet.

Using the help tool, I get the message that the internet cannot be accessed using a fixed IP address. Automatic IP address assignment with DHCP is active (this has not been changed since updating Windows).

What to do?


Copilot for Microsoft 365 Strategy Briefing – Malvern, PA

Copilot for Microsoft 365 Strategy Briefing

Join us for the Microsoft 365 Copilot Briefing with Cyclotron & Microsoft on April 5th. Attend this in-person strategy briefing to learn how AI and Microsoft 365 Copilot are transforming the way we work.

Topics will include:

How the Era of AI is changing work as we know it

What it means to be an AI-powered organization and how to prepare your workforce for AI transformation success

How Microsoft can help securely and responsibly guide your organization’s AI transformation

Opportunity to discuss and learn from peers including hearing from one of our Early Access Program customers

Register now. Space is limited.


Publishing a SaaS offer for a PowerApps canvas app in AppSource

Hello,

We have been trying for many months now to publish our PowerApps canvas apps as a SaaS offer, thereby allowing us to have transactable, licensed customers.

Through a lot of effort, we managed to get some support where we were told quite vaguely how to achieve this, but without any meaningful guidance we are struggling as we are a small ISV with expertise in our actual business, and Power Platform. We are not, however, experts in SQL (that’s being generous) or in creating APIs which seem to be a requirement to keep track of user licenses and feeding the data to our canvas app.

If anyone here has any info on this, or how they might have achieved it themselves it would be massively appreciated, as we can’t seem to get there by ourselves and MS support is very difficult to find.

It does feel as though the app development part is so much simpler than getting it onto the marketplace, which is surely the wrong way round.

Thank you,

Craig
