Category: News

Goodbye Planner

Just checked out the new Planner. It changed my board categories to something I don’t want, and I hate it. It has no useful features like “@” mentions in comments, which Trello has had for a decade. Why don’t we have the flexibility to use it as we see fit? Leaving this stuff up to developers will never be a good fit.

Revolutionizing hyperscale application delivery and security: The New Azure Front Door edge platform

Prologue – The creation of a new proxy with Linux, Rust, and OSS

In this introductory blog to the new Azure Front Door next generation platform, we will go over the motivations, design choices and learnings from this undertaking which helped us successfully achieve massive gains in scalability, security and resiliency.

Introduction

Azure Front Door is a global, scalable, and secure entry point for caching and acceleration of your web content. It offers a range of features such as load balancing, caching, web application firewall, and a rich rules engine for request transformation. Azure Front Door operates at the edge of Microsoft’s global network and handles trillions of requests per day from millions of clients around the world.

Azure Front Door, originally built upon a Windows-based proxy, has been a critical component in serving and protecting traffic for Microsoft’s core internet services. As the commercial offering of Azure Front Door expanded, and with the ever-evolving landscape of security and application delivery, we recognized the need for a new platform. This new platform would address the growing demands of scale, performance, cost-effectiveness, and innovation, ensuring we are able to meet the challenging scale and security demands from our largest enterprise customers. For our next-generation Azure Front Door platform, we opted to build it on Linux and embrace the open-source software community. The new edge platform was designed to incorporate learnings from the previous proxy implementation, while allowing us to accelerate innovation and deliver enhanced value to our customers. We will delve into the key design and development decisions that shaped the next generation proxy, and a modern edge platform that meets innovation, resiliency, scale and performance requirements of Azure and Microsoft customers.

Why Linux and Open Source?

A key choice that we made during the development of the new proxy platform was to use Linux as the operating system for the proxy. Linux offers a mature and stable platform for running high-performance network applications and it has a rich ecosystem of tools and libraries for network programming which allows us to leverage the expertise and experience of the open-source community.

Another reason for choosing Linux was that it offers a vibrant ecosystem with containers and Kubernetes for deploying and managing the proxy instances. The use of containers and Kubernetes offers many benefits for cloud-native applications, such as faster and easier deployment, scaling, and updates, as well as better resource utilization and isolation. By using containers and Kubernetes, we were also able to take advantage of the existing infrastructure and tooling that Microsoft has built for running Linux-based services on Azure.

The next decision that we made was to use open-source software as the basis of the platform. We selected high-quality and widely used open-source software for tasks like TLS termination, caching, and basic HTTP proxying capabilities. By using existing and reliable open-source software as the foundation of the new edge platform, we can concentrate on developing the features and capabilities that are unique to Azure Front Door. We also gain from continuous development and enhancement by the open-source community.

How did we build the next generation proxy?

While open-source software provides a solid foundation for the new proxy, it does not cover all the features and capabilities that we need for Azure Front Door. Azure Front Door is a multi-tenant service that supports many custom proxy features that are not supported by any open-source proxy. Building the proxy posed multiple design challenges, but in this blog we will focus on the top two, which shaped the foundation of the new proxy. We will discuss other aspects such as resilient architecture and protection features in later parts of this blog series.

Challenge 1: Multi-Tenancy

The first major challenge in developing Azure Front Door as a multi-tenant service was ensuring that the proxy could efficiently manage the configurations of hundreds of thousands of tenants, far surpassing the few hundred tenants typically supported by most open-source proxies. Each tenant’s configuration dictates how the proxy handles their HTTP traffic, making the configuration lookup an extremely critical aspect of the system. This requires all tenant configurations to be loaded into memory for high performance.

Processing configuration for hundreds of thousands of tenants means that the system needs to handle hundreds of config updates every second which requires dynamic updates to the data path without disrupting any packets. To address this, Azure Front Door adopted a binary configuration format which supports zero-copy deserialization and ensures fast lookup times. This choice is crucial not only for efficiently managing current tenant configurations but also for scaling up to accommodate future growth, potentially increasing the customer base tenfold. Additionally, to handle dynamic updates to the customer configuration delivered by the Azure Front Door’s configuration pipeline, a custom module was developed to asynchronously monitor and update the config in-memory.
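To make the update path concrete, here is a minimal sketch of one common way to publish configuration changes without blocking request processing: readers take a cheap reference to an immutable snapshot, and an updater swaps in a new snapshot. This is not Azure Front Door's implementation. The names `TenantConfig`, `ConfigStore`, `snapshot`, and `upsert` are hypothetical, the fields are invented for illustration, and the sketch does not model the binary format or zero-copy deserialization described above.

```rust
use std::collections::HashMap;
use std::sync::{Arc, RwLock};

/// Hypothetical per-tenant settings; the real schema is not described in the post.
#[derive(Debug, Clone, PartialEq)]
pub struct TenantConfig {
    pub origin: String,
    pub cache_ttl_secs: u32,
}

/// An immutable snapshot of all tenant configurations, keyed by host name.
pub type Snapshot = HashMap<String, TenantConfig>;

/// Holds the current snapshot behind a lock that is only held briefly.
pub struct ConfigStore {
    current: RwLock<Arc<Snapshot>>,
}

impl ConfigStore {
    pub fn new(initial: Snapshot) -> Self {
        Self { current: RwLock::new(Arc::new(initial)) }
    }

    /// Data path: clone the Arc (a reference-count bump) and release the lock.
    /// A request keeps using this snapshot even if an update lands meanwhile.
    pub fn snapshot(&self) -> Arc<Snapshot> {
        let guard = self.current.read().unwrap();
        Arc::clone(&*guard)
    }

    /// Update path: build a new snapshot with one tenant changed, then swap it in.
    /// Copying the whole map is a simplification that keeps the sketch short.
    pub fn upsert(&self, host: &str, cfg: TenantConfig) {
        let mut guard = self.current.write().unwrap();
        let mut next: Snapshot = (**guard).clone();
        next.insert(host.to_string(), cfg);
        *guard = Arc::new(next);
    }
}
```

A request that called `snapshot()` before an `upsert` continues to see the old values until it finishes, while new requests see the new ones. At the scale described above, a production design would likely avoid copying the full map on every change.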

Challenge 2: Customer business logic

One of the most widely adopted features of Azure Front Door is our Rules Engine, which allows our customers to set up custom rules tailored for their traffic. Building the new proxy meant that we had to enable this extremely powerful use case on top of the open-source proxy, which brings us to our second challenge. Rather than creating fixed modules for each rule, we chose to innovate.

We developed a new domain-specific language (DSL) named AXE (Arbitrary eXecution Engine), specifically designed to add and evolve data plane capabilities swiftly. AXE is declarative and expressive, enabling the definition and execution of data plane processing logic in a structured yet flexible manner. It represents the rules as a directed acyclic graph (DAG), where each node signifies an operation or condition, and each edge denotes data or control flow. This allows AXE to support a vast array of operations and conditions, including:

Manipulating headers, cookies, and query parameters

Regex processing

URL rewriting

Filtering and transforming requests and responses

Invoking external services

These capabilities are integrated at various phases of the request processing cycle, such as parsing, routing, filtering, and logging.

AXE is implemented as a custom module in the new proxy, where it interprets and executes AXE scripts for each incoming request. The module is built on a fast, lightweight interpreter that operates in a secure, sandboxed environment, granting access to necessary proxy variables and functions. It also supports asynchronous and non-blocking operations, vital for non-disruptive external service interactions and timely processing.
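AXE itself is not shown in this post, so the following is only an illustrative sketch of the general idea of rules represented as a directed acyclic graph, where condition nodes choose an outgoing edge and action nodes modify the request. The `Request` and `Node` types and the `run` function are hypothetical, and the sketch assumes edges may only point to later nodes, which is one simple way to keep the graph acyclic.

```rust
use std::collections::HashMap;

/// Hypothetical request model: a path plus headers.
#[derive(Debug, Default)]
pub struct Request {
    pub path: String,
    pub headers: HashMap<String, String>,
}

/// One node of the rule graph. Edges are indices into the node list.
pub enum Node {
    /// Condition: follow `then` if the path starts with `prefix`, else `otherwise`.
    PathStartsWith { prefix: String, then: usize, otherwise: usize },
    /// Action: set a header, then continue at `next`.
    SetHeader { name: String, value: String, next: usize },
    /// Action: replace the path, then continue at `next`.
    RewritePath { to: String, next: usize },
    /// Terminal node: stop processing.
    Done,
}

/// Walks the graph from node 0, applying actions to `req` along the way.
/// Edges must point forward, so every walk terminates.
pub fn run(graph: &[Node], req: &mut Request) -> Result<(), String> {
    let mut at = 0;
    loop {
        let node = graph
            .get(at)
            .ok_or_else(|| format!("node {} is out of range", at))?;
        let next = match node {
            Node::PathStartsWith { prefix, then, otherwise } => {
                if req.path.starts_with(prefix.as_str()) { *then } else { *otherwise }
            }
            Node::SetHeader { name, value, next } => {
                req.headers.insert(name.clone(), value.clone());
                *next
            }
            Node::RewritePath { to, next } => {
                req.path = to.clone();
                *next
            }
            Node::Done => return Ok(()),
        };
        if next <= at {
            return Err(format!("edge {} -> {} would form a cycle", at, next));
        }
        at = next;
    }
}
```

For example, a four-node graph could rewrite paths under `/old/` to `/new/`, tag the request with a header, and leave every other request untouched. A real engine would also need phases, sandboxing, and asynchronous calls to external services, none of which are modeled here.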

This innovative approach to building and integrating the Rules Engine using AXE ensures that Azure Front Door remains a cutting-edge solution, capable of meeting and exceeding the dynamic requirements of our customers. Though AXE was developed to support the Rules Engine feature of Azure Front Door, it proved so flexible that we now use it to power our WAF module as well.

Why Rust?

Another important decision that we made while building the next generation proxy was to write new code in Rust, a modern and safe systems programming language. All the components we mentioned in the section above are either written in Rust or being actively rewritten in Rust. Rust is a language that offers high performance, reliability, and productivity, and it is gaining popularity and adoption in the network programming community. Rust has several features and benefits that make it a great choice for the next generation proxy, such as:

Rust has a powerful and expressive type system that helps us write correct and robust code. Rust enforces strict rules, most of them checked at compile time, to prevent common errors and bugs, such as buffer overflows, null pointer dereferences, and data races. Rust also supports advanced features found in modern high-level languages, such as generics, traits, and macros, that allow us to write generic and reusable code.

Rust has a concise and consistent syntax that avoids unnecessary boilerplate and encourages common conventions and best practices. Rust also has a rich and standard library that provides a wide range of useful and high-quality functionality with an emphasis on safety and performance, such as collections, iterators, string manipulation, error handling, networking, threading, and asynchronous execution abstractions.

Rust has a strong and vibrant community that supports and contributes to the language and its ecosystem. It has a large and growing number of users and developers who share their feedback, experience, and knowledge through various channels, such as forums, blogs, podcasts, and conferences. Rust also has a thriving and diverse ecosystem of tools and libraries that enhance and extend the language and its capabilities, such as IDEs, debuggers, test frameworks, web frameworks, network libraries, and AI/ML libraries.
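As a small illustration of the type-system point above, the sketch below shows how `Option` and `Result` make a missing value or a parse failure part of a function's signature, so the caller has to handle it before the code compiles. The functions `origin_for`, `parse_port`, and `describe` are hypothetical examples and are not taken from the proxy.

```rust
/// Looks up the origin for a host. A missing route is `None`, not a null pointer.
pub fn origin_for<'a>(routes: &[(&'a str, &'a str)], host: &str) -> Option<&'a str> {
    routes
        .iter()
        .find(|(h, _)| *h == host)
        .map(|(_, origin)| *origin)
}

/// Parses a TCP port. Malformed or out-of-range input is an `Err` value.
pub fn parse_port(text: &str) -> Result<u16, std::num::ParseIntError> {
    text.trim().parse::<u16>()
}

/// The `match` must cover both cases; leaving out `None` is a compile error.
pub fn describe(routes: &[(&str, &str)], host: &str) -> String {
    match origin_for(routes, host) {
        Some(origin) => format!("{} -> {}", host, origin),
        None => format!("{} has no route", host),
    }
}
```

Because the absent case is encoded in the return type, forgetting to handle it is caught by the compiler rather than discovered in production.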

We used Rust to write most of the new code for the proxy. By using Rust, we were able to write highly performant and reliable code for the proxy, while also improving our development velocity by leveraging existing Rust libraries. Rust helped us avoid many errors and bugs that could have compromised the security and stability of the proxy, and it also made our code more readable and maintainable.

Conclusion

The Azure Front Door team embarked on this journey to overhaul the entire platform a few years ago by rewriting the proxy and changing the infrastructure hosting the proxy. This effort enabled us to more than double our density and throughput, along with significant enhancements to our resiliency and scalability. We have successfully completed the transition of Azure Front Door customers from the old platform to the new one without any disruption. This challenging task was like changing the wings of a plane while it is airborne.

In this blog post, we shared some of the design and development challenges and decisions that we made while building the next generation edge platform for Azure Front Door that is based on Linux and uses Rust and OSS to extend and customize its functionality. We will share more details about AXE and other data plane and infrastructure innovations in later posts.

If you want to work with us and help us make the internet better and safer, we have some great opportunities for you. The Azure Front Door team is looking to hire more engineers in different locations, such as the USA, Australia, and Ireland. You can see more details and apply online at the Microsoft careers website. We hope to hear from you and welcome you to our team.

The 2024 Work Trend Index is now available

Every year Microsoft releases the Work Trend Index report – a report that collects data from over 30,000 people across over 30 countries via global plus industry-spanning surveys, observational studies and labor trends from the LinkedIn Economic Graph. It provides an unparalleled view into some of the most important trends underway now, and gives us a glimpse into the future of work. The latest report dropped today. Check it out now!

Does anyone know if Microsoft Dynamics Sequences are in the On Premises Version of Dynamics 365 CE ?

We have an enterprise customer that uses Dynamics 365 CE On Premises (2104 9.1.18.22) and wishes to use Sequences (not all of Customer Insights, just Sequences). They are asking whether there is something they need to install to get it for the On Premises version.

No licenses or products showing on my newly created standard business account

More than a day and a half ago, I created a Microsoft 365 Business Standard account and started the one-month free trial. I “bought” it for 3 accounts and linked it to my domain. Then I started noticing some issues:

– I couldn’t log in to outlook, it said error 500, too many redirections.

– I tried to create two accounts for my two workers, but no licenses were showing. I created their accounts without licenses, then I checked the licenses list and product list and they are both empty. Maybe I’m doing something wrong, but I expected to see there the apps that are “included” with the subscription. I think that’s also why I can’t log in to outlook.

– I can’t go into “my sign ins or security information” , they both go to a blank page.

– And I thought the process of linking my domain to Microsoft 365 was done, because it said “the configuration is completed”, but whenever I check on “domains”, the domain shows in state “configuration incomplete”. After clicking on that domain and into “continue configuration”, it goes again to “configuration is complete” (but in domains, it still shows “configuration incomplete”).

I called support yesterday, but got no response on any of the 3 tries. Today I got a response, they told me they raised a ticket with my issues, but even though they say it could take from 30 minutes to a full day, it’s been almost 18 hours and still I got no response. I’m inclined to not trust the help service because the responder kept telling me a notification would be sent to my email even after I explained to him multiple times that one of the things that wasn’t working was outlook.

May Modern Work & Security Partner Community Call

Thank you for joining us for the May Modern Work & Security Partner Community Call.

Our next call will be on Friday, 7 June. You can add these sessions to your diary via the link: https://aka.ms/MWAddToCalendar

For those of you who were unable to attend, you can find the slides attached to this post, and the recording will be available on demand within the next week HERE.

We couldn’t retrieve the updated values from a linked Excel workbook

Hello, good morning,

I hope you’re doing well.

I have the following issue. I’m trying to link 2 Excel workbooks within a Microsoft Teams group, but when someone else accesses the workbook, the following message appears: no pudimos obtener los valores actualizados de un libro vinculado excel, which translates to We couldn’t retrieve the updated values from a linked Excel workbook. Instead of the data, #¡REF! is displayed. I don’t know what to do about it; I would greatly appreciate any help with this issue.

Thank you very much!

NEW ILT Copilot Executive Challenge Coming to you this Friday! 5/10

Release Date: May 10th, 2024

(This course will be included in the newest title plan coming out this Friday)

MS-4008: Copilot for Microsoft 365 Interactive Experience for Executives

_____________________________________________________________________________________________

MS-4008 ‘Copilot for Microsoft 365 Interactive Experience for Executives,’ will launch on May 10th. MS-4008 is a 60-minute interactive course that is tailored for executive-level professionals and business leaders aiming to augment their strategic and operational capabilities using AI. It’s ideal for those leaders looking to maximize the benefits of Microsoft Copilot within Microsoft 365 to enhance productivity, decision-making, and organizational impact.

Course Overview:

Learn how Microsoft Copilot for Microsoft 365 can transform workplace productivity and spur innovation. This course provides practical insights into creating contextual prompts for Copilot and features engaging exercises that showcase its application in everyday workflows.

What’s Included:

Introduction to Copilot for Microsoft 365: Understand the capabilities, functionality, and security features of Copilot. Gain a foundational knowledge of its impact on business operations.

Hands-on Demonstrations: Experience the versatility of Copilot through demonstrations that emphasize its integration into business workflows.

Interactive Experience: Engage in hands-on sessions that demonstrate Copilot’s practical uses in real-time scenarios.

This course offers a thorough exploration of Microsoft Copilot, illustrating its potential to streamline operations and boost productivity across your workforce.

ISSUES WITH GUEST ACCOUNT IN TEAMS

Hello

Please, I need your help with this issue.

When the user tries to log in, they are prompted for a password instead of the verification code they were previously using.

Here is the issue: this user is showing in the system and cannot be added to Teams as a guest contact.

It is already strange that they show up twice with different emails.

I have deleted the two guest accounts from the Microsoft Entra portal.

The user is no longer showing there.

Lastly, I searched Active Directory and nothing was there either.

However, when I try to add them to Teams as a guest, it will not allow it, saying the user is already added. It also asks for a password for the user to log in.

Normally, when adding someone to Teams as a guest contact, they just use the ID verification number that Microsoft emails to them, but that is not the case for this user.

Port forwarding in Azure front door standard tier

We have an on-premises web application that runs on domainname.com:7071. We are now migrating this app to Azure, using Web App + Front Door. The client wants to keep the port in the URL and does not want to migrate without it. How can I achieve this in Front Door? As per my research, Front Door works on ports 443/80 only.

how to….copy column layout from one folder to all other email folders.

I’ve done this before but am unable to find the correct navigation to do it again.

I have selected specific columns, in a specific order, for my email display in my primary inbox.

I want to propagate that column selection/order to all other folders.

Every step-by-step I’ve found online hits a step that doesn’t work.

I’m running Outlook as part of MS 365.

I’ve been using Outlook for over 30 years, but it keeps changing in many ways that make online answers not work. And MS help didn’t understand my question, despite multiple re-phrasing.

New Blog | Empower multiple teams and prioritize investigations with Insider Risk Management

Your data is a prime target in most security incidents. But when an incident occurs, do you have the information you need to prioritize incidents and contain them based on the importance of the data itself?

With insider incidents becoming a bigger concern each year and 74% of organizations saying these occurrences have become more frequent[1], detecting insider risks is now a vital part of safeguarding digital landscapes. Microsoft Purview Insider Risk Management is used by organizations across the world to correlate various signals to identify potential insider risks while ensuring user privacy by design, but it can also be used to detect data security risks coming from external attackers. The past year saw a dramatic surge in identity attacks, with an average of 4,000 password attacks per second. Some of these attacks are successful in compromising user credentials, enabling the attacker to persist in the organization’s systems as an insider, having access to sensitive data.

That is why, besides data security and data compliance teams, SOC (security operations center) teams also play a pivotal role in safeguarding organizations’ data against a myriad of threats, coming from both insiders and external attackers. However, security admins are challenged by a fragmented tooling landscape that often requires them to analyze repeated alerts and manually correlate insights across solutions, restricting visibility into the risky data and users involved in an incident. With customers that employ more security tools experiencing 2.8x more data security incidents[2], it is crucial that security teams have access to integrated solutions across their data landscape to help them triage and prioritize incidents with broader context for their investigations.

Microsoft Purview Insider Risk Management correlates various signals, such as unusual access patterns and data exfiltration, to identify potential malicious or inadvertent insider risks, including IP theft, data leakage, and security violations. Insider Risk Management enables customers to create data handling policies based on their own internal policies, governance, and organizational requirements. Built with privacy by design, users are pseudonymized by default, and role-based access controls and audit logs are in place to help ensure user-level privacy.

Empowering SOC teams to better investigate insider risks

Today, we are excited to announce the public preview of Insider Risk Management context on the Microsoft Defender XDR user entity page. With this update, SOC analysts with the required customer-determined permissions can access an insider risk summary of user exfiltration activities that may lead to potential data security incidents, as a part of the user entity investigation experience in Microsoft Defender. This feature can help SOC analysts gain data security context for a specific user, prioritize incidents, and make more informed decisions on responses to potential incidents.

When looking into an occurrence in Microsoft Defender’s Incidents view, the security analyst can now dig further into an incident’s source. In the following example, a multi-stage attack stole an employee’s credentials, followed by exfiltration activities that triggered multiple data loss prevention (DLP) alerts, such as sharing payment card information externally.

Figure 1: Incident in Microsoft Defender showing a user’s Insider Risk Level

Read the full post here: Empower multiple teams and prioritize investigations with Insider Risk Management

By Nathalia Borges

New Blog | Secure your AI transformation with Microsoft Security

Generative AI is reshaping business today for every individual, every team, and every industry. Organizations engage with GenAI in a variety of ways – from purchasing and using finished GenAI apps to developing, deploying, and operating custom-built GenAI apps.

GenAI broadens the attack surface of applications through prompts, training data, models, and more – thereby effectively changing the threat landscape with new risks such as direct or indirect prompt injection attacks, data leakage, and data oversharing.

In March this year, we shared how Microsoft Security helps organizations discover, protect, and govern the use of GenAI apps like Copilot for M365. Today, we’re thrilled to introduce additional capabilities for that scenario and new capabilities to secure and govern the development, deployment, and runtime of custom-built GenAI apps.

With these new innovations, Microsoft Security is at the forefront of AI security to support our customers on their AI journey by being the first security solution provider to offer threat protection for AI workloads and providing comprehensive security to secure and govern AI usage and applications.

Secure and govern GenAI you build:

Discover new AI attack surfaces with AI security posture management (AI-SPM) in Microsoft Defender for Cloud for AI apps using Azure OpenAI Service, Azure Machine Learning, and Amazon Bedrock

Protect your AI apps using Azure OpenAI in runtime with threat protection for AI workloads in Microsoft Defender for Cloud, the first cloud-native application protection platform (CNAPP) to provide runtime protection for enterprise-built AI apps using Azure OpenAI Service

Secure and govern GenAI you use:

Discover and mitigate data security and compliance risks with Microsoft Purview AI Hub, now offering new insights, including visibility into unlabeled data and SharePoint sites that are referenced by Copilot for M365 and non-compliant usage such as regulatory collusion, money laundering, and targeted harassment for M365 interactions

Govern AI use to comply with regulatory requirements with 4 new AI compliance assessments in Microsoft Purview Compliance Manager

Discover new AI attack surfaces

As organizations embrace GenAI, many accelerate adoption with pre-built GenAI applications while others choose to develop GenAI applications in-house, tailored to their unique use cases, security controls and compliance requirements. Organizations from all industries are racing to transform their applications with AI, with over half of Fortune 500 companies using Azure OpenAI.

With all the new components of AI workloads, such as models, SDKs, and training and grounding data, visibility into the configurations of these components and the risks associated with them is more important than ever.

With new AI security posture management (AI-SPM) capabilities in Microsoft Defender for Cloud, security admins can continuously discover and inventory their organization’s AI components across Azure OpenAI Service, Azure Machine Learning, and Amazon Bedrock – including models, SDKs, and data – as well as sensitive data used in grounding, training, and fine tuning LLMs. Admins can find vulnerabilities, identify exploitable attack paths, and easily remediate risks to get ahead of active threats.

Figure 1: Attack path analysis in Defender for Cloud identifies an indirect risk to an Azure OpenAI resource where an attacker can exploit vulnerabilities via an internet exposed VM to potentially gain access and control of the AI resource, model deployments, and data.

Read the full post here: Secure your AI transformation with Microsoft Security

By Daniela Villarreal

Standardize your customer configurations in Microsoft 365 Lighthouse

Default baseline tasks keep users secure and productive

We’re excited to continue enhancing our default baseline in Microsoft 365 Lighthouse to provide a set of tasks that help Managed Service Providers (MSPs) secure users, devices, and data in their customer tenants to ensure customers remain secure and productive in a scalable way.

This post introduces tasks we added to our default baseline that are focused on several key areas, including new areas like user education and Microsoft Teams.

To learn more about the benefits of using baselines, check out the webinar on May 22, 2024: Unlock efficiency and scale with Microsoft 365 Lighthouse.

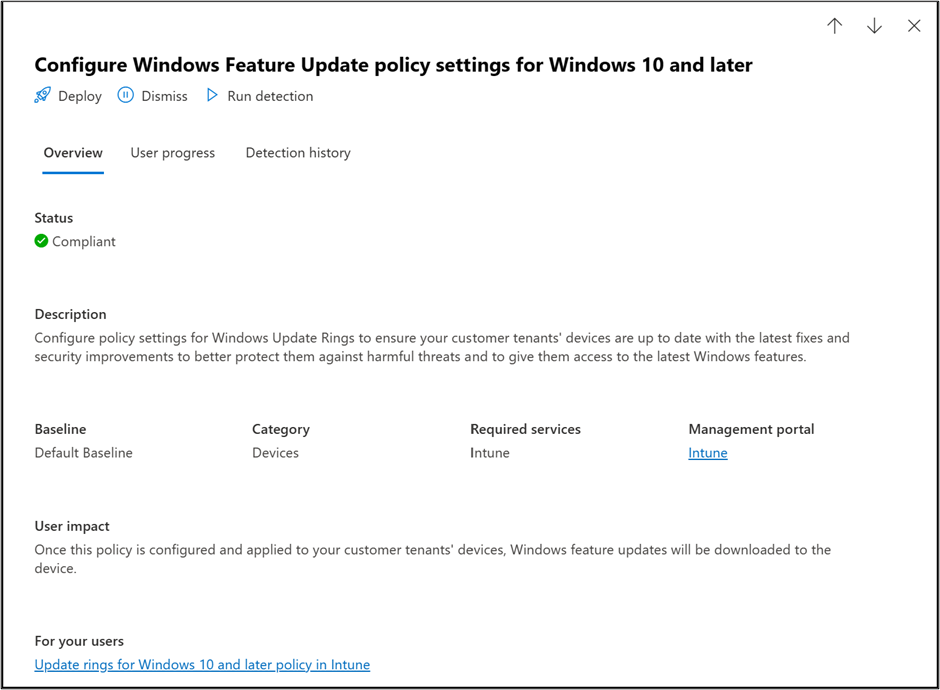

Keep your devices secure and updated with a Windows Feature Update policy

A screenshot showing the Windows Feature Update policy task details pane

To keep Windows devices secure and updated, users need the latest security updates. Our Windows Update deployment task standardizes deployment windows, deployment rings, and update behavior. This helps you apply updates quickly and consistently. Our drift and variance detection and alerts notify you of any policy changes that could pose a security risk.

Protect company data by using a OneDrive policy

A screenshot showing the OneDrive configuration task details pane

Setting up a policy for OneDrive configuration helps keep user and company data secure and provides the ability to view the status of the OneDrive sync client. The ability to view when a OneDrive client is no longer syncing or in a healthy state can help prevent data loss and maintain continuity for user data.

Configure SSPR to allow users to reset their own passwords

A screenshot showing the Configure self-service password reset task within the default baseline

Self-service password reset (SSPR) gives users the ability to change or reset their password, with no administrator or help desk involvement. This ability reduces help desk calls and loss of productivity when a user can’t sign in to their device or an application.

Configure Viva Learning paths for user education

A screenshot showing the Configure Viva Learning paths task within the default baseline

Help users onboard to Microsoft 365 and learn about features and functionality with Viva Learning paths. The set of courses included in this task also helps educate users on best practices for safety and security.

Baseline tasks focused on Microsoft Teams

A screenshot showing the Disable anonymous access for Teams meeting task within the default baseline

We’ve also added several tasks focused on Microsoft Teams. From disabling anonymous access to Teams meetings, to turning on Safe Attachments for Teams, OneDrive, and SharePoint, this set of tasks helps ensure that your customers’ productivity suite is properly configured and secure.

ASR policies and Windows Update tasks

A screenshot showing the Create an attack surface reduction policy task within the default baseline

Lastly, we’ve added several tasks focused on keeping endpoints up to date and secure. From configuration of an attack surface reduction (ASR) policy, to enabling cloud updates for Microsoft 365 apps to ensure apps are on the latest security and feature updates, we’ve made it even easier to ensure all your managed endpoints are protected and in a consistent state across your customer tenants.

Start using Microsoft 365 Lighthouse to configure your managed tenants today

These tasks are just a sampling of the deployment tasks available as part of the Microsoft 365 Lighthouse default baseline. We continue to expand into new areas to ensure that your customer tenants are securely configured to enhance productivity and usability.

To learn more about Microsoft 365 and the default baseline, check out these resources:

Start using Microsoft 365 Lighthouse

Check out default baselines in Microsoft 365 Lighthouse

Sign up for Microsoft 365 Lighthouse | Microsoft Learn

Overview of Microsoft 365 Lighthouse | Microsoft Learn

Microsoft Tech Community – Latest Blogs –Read More

Export DLP Policies, Rules and Settings using PowerShell

This blog outlines the steps to export the DLP policies, rules and settings in bulk.

Here’s a summary of the items covered:

Exporting DLP policies, rules and settings: The document explains how to use PowerShell cmdlets to export the DLP policies, rules and settings in bulk from the Security and Compliance Center PowerShell.

Viewing the value of switches: The document shows how to view the value of switches that are parsed by the cmdlets, such as the groups or users that are scoped or excluded from a policy.

Exporting as a CSV file: The document provides examples of how to export the policy scoping or exclusion details as a CSV file by using the Select -ExpandProperty parameter.

Exporting as a JSON file: The document demonstrates how to export all the policies and their attributes or sub-attributes as a JSON file by using the ConvertTo-Json cmdlet.

We have cmdlets to export the DLP policies, rules and settings. However, one of the main issues we come across is the inability to view the full value of some properties, since the output is abbreviated.

Consider a scenario where you want a list of all the groups/users scoped or excluded in a particular policy along with the Display Names, Email and Immutable IDs.

When you run the cmdlet, you would see that the content is enclosed in braces { } and truncated. In formatted output, braces indicate that the property holds a collection of values.

Get-DlpCompliancePolicy "Credit Card Policy - Audit" | Select EndpointDLPLocation

EndpointDlpLocation

-------------------

{Tailspin, Traders, Contoso, contosoteam…}

When there are hundreds of entries, you can use the cmdlet below to expand the property and export it as a CSV file.

Get-DlpCompliancePolicy "Credit Card Policy - Audit" | Select -ExpandProperty EndpointDLPLocation | Export-Csv c:\temp\Policyscoping.csv -NoTypeInformation

Similarly, you can use the cmdlet below to export the list of users/groups that are excluded from the policy.

Get-DlpCompliancePolicy "Credit Card Policy - Audit" | Select -ExpandProperty EndpointDLPLocationException | Export-Csv c:\temp\PolicyExclusion.csv -NoTypeInformation

You can also choose to export all the policies and their attributes/sub-attributes as a JSON file using the command below.

You can then use a parser or import the JSON file into Power Query/Power BI to parse the data and view all the policies and their details.

$dlppolicy = Get-DlpCompliancePolicy

$dlppolicy | ConvertTo-Json -Depth 100 | Out-File -Encoding UTF8 -FilePath c:\policy.json
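If you prefer a small script over Power Query for the parsing step, the following is a minimal Python sketch, not a definitive implementation. It assumes the JSON file was produced by the command above and that each policy object carries a `Name` property and a location property such as `EndpointDlpLocation`. The exact shape of the nested entries, and the `DisplayName` key, are assumptions that should be checked against your own export. The helper names `load_policies` and `scoped_locations` are hypothetical.

```python
import json
from pathlib import Path


def load_policies(path):
    """Load a ConvertTo-Json export and always return a list of policy dicts."""
    # utf-8-sig tolerates the byte order mark that Out-File -Encoding UTF8
    # writes in Windows PowerShell.
    data = json.loads(Path(path).read_text(encoding="utf-8-sig"))
    # A single policy serializes as one object, several policies as an array.
    return data if isinstance(data, list) else [data]


def scoped_locations(policy, prop="EndpointDlpLocation"):
    """Return the entries of a location property as a list of strings.

    Entries may be plain strings or nested objects, so each one is reduced
    to a display value on a best-effort basis (the key names are assumptions).
    """
    entries = policy.get(prop) or []
    if not isinstance(entries, list):
        entries = [entries]
    result = []
    for entry in entries:
        if isinstance(entry, dict):
            result.append(str(entry.get("DisplayName") or entry.get("Name") or entry))
        else:
            result.append(str(entry))
    return result
```

Calling `load_policies` on the exported file and then `scoped_locations(policy)` for each policy gives one flat list per policy, which can be printed or written to CSV as needed.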

You can also choose to export a single policy, or the rule information, to JSON and view the details by using the cmdlets below.

$dlppolicy = Get-DlpCompliancePolicy "Credit Card Policy - Audit"

$dlppolicy | ConvertTo-Json -Depth 100 | Out-File -Encoding UTF8 -FilePath c:\CCpolicy.json

$dlprule = Get-DlpComplianceRule

$dlprule | ConvertTo-Json -Depth 100 | Out-File -Encoding UTF8 -FilePath c:\rule.json

To export the policy configuration, you can use the cmdlets below.

$config = Get-PolicyConfig

$config | ConvertTo-Json -Depth 100 | Out-File -Encoding UTF8 -FilePath c:\policyconfig.json

Hope this article helps in your DLP journey!

Tenant health transparency and observability

In previous resilience blog posts, we’ve shared updates about the continuous improvements we’re making to resilience and reliability, including our most recent update on regionally isolated authentication endpoints and an announcement last year of our industry-leading and first of its kind backup authentication service. These and other innovations behind the scenes enable us to deliver consistently very high rates of availability globally each month.

In this post, we’ll outline what we’re doing to help customers see how available and resilient Microsoft Entra really is for them, to not only hold us accountable when issues arise, but also better understand what actions to take within their tenant to improve its health. At the global level, you see it in the form of retrospective SLA reporting, which shows authentication availability exceeding our 4 9s promise (launched in spring 2021) by a wide margin and reaching 5 9s in most months. But it becomes more compelling and actionable at the tenant level: what is the uptime experience of my users on my organization’s apps and devices? Is my tenant handling surges in sign-in demand?
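To put the "4 9s" and "5 9s" figures in perspective, here is a small worked calculation in Python. It is a simplification that treats availability as a share of wall-clock time over a 30-day month, which is an assumption made here for illustration and not necessarily how the SLA itself is measured. The function name is hypothetical.

```python
def allowed_downtime_minutes(availability_pct, days=30):
    """Minutes of unavailability permitted in a period at a given availability."""
    total_minutes = days * 24 * 60  # 43,200 minutes in a 30-day month
    return total_minutes * (1 - availability_pct / 100)


for label, pct in [("4 9s", 99.99), ("5 9s", 99.999)]:
    print(f"{label} ({pct}%): {allowed_downtime_minutes(pct):.2f} minutes per 30 days")
```

Under these assumptions, 99.99% leaves roughly 4.3 minutes per month and 99.999% leaves under half a minute.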

We often hear from customers about the effect on resilience insights when they move to the cloud. In the on-prem world, identity health monitoring occurred onsite and with tight control; operational awareness happened entirely within a company’s first-party IT department. Now, we need to achieve that same transparency or better in an outsourced, cloud-based identity service and with a federated set of dependencies.

IT departments and developers are working hard to ensure each of their users maintains seamless, uninterrupted access that doesn’t compromise security. Enabling access for the right users with minimal friction while stopping intrusions and risk is critical to keep the world running. When an organization outsources their identity service to Microsoft, they expect us to acknowledge degradations when they happen, then take accountability to learn and continuously improve from those events. We also recognize that human-driven communication can only take us so far.

To meet these challenges, we’re increasingly embracing granular monitoring and automation. We start from the assumption that the unexpected will find a way of happening in any complex system, no matter how resilient it is. Beyond resilience, we must detect incidents, respond to them effectively, and improve as we go—and help our customers do the same. You see examples of this approach both in our rollout of in-tenant health monitoring and in our investments behind the scenes aimed at fast incident detection and communication.

Let’s start with out-of-the-box automated health monitoring in premium tenants. Tenant-level health monitoring empowers customers to independently understand the quality of their users’ experiences with authentication and access. It also sets the stage to prompt tenant administrators with actions they can take to investigate and reduce disruptions, all from Microsoft Entra admin center or using MS Graph API calls.

We’ve taken a step in this direction by introducing a group of precomputed health metric streams that enable our premium customers to watch key authentication scenarios, an early milestone in our investments to enhance transparent visibility into tenant health and service resilience. These new health metrics isolate relevant signals from activity logs and provide pre-computed, low-latency aggregates every 15 minutes for specific high-value observability scenarios.

With their granularity and scenario-specific focus, health metrics go a step beyond the monthly tenant-level SLA reporting we released in 2023. Precomputed health metrics also supplement the activity log data that we’ve been providing and continue to improve on. With sign-in logs, customers can build their own computed metrics to monitor, like isolating a specific sign-in method to watch for increases in success and failure. With our new precomputed streams, customers can snap to Microsoft-defined indicators of health, take advantage of features we’re developing at scale, and dive into activity logs for deeper investigations. We encourage customers to make use of both options to get a full picture.
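For customers who build their own computed metrics from sign-in logs, the idea of a 15-minute aggregate can be sketched in Python as follows. This is only an illustration of the bucketing technique, not how the precomputed health metric streams are implemented. The input format (pairs of a timestamp and a success flag) and all function names are assumptions made for the example.

```python
from collections import defaultdict
from datetime import datetime, timezone

BUCKET_SECONDS = 15 * 60


def bucket_start(ts):
    """Round a timezone-aware timestamp down to the start of its 15-minute window."""
    epoch = int(ts.timestamp())
    return datetime.fromtimestamp(epoch - epoch % BUCKET_SECONDS, tz=timezone.utc)


def aggregate(events):
    """Count successes and failures per 15-minute window.

    events: iterable of (timestamp, succeeded) pairs (hypothetical input shape).
    Returns {window_start: {"success": n, "failure": m}}.
    """
    buckets = defaultdict(lambda: {"success": 0, "failure": 0})
    for ts, succeeded in events:
        buckets[bucket_start(ts)]["success" if succeeded else "failure"] += 1
    return dict(buckets)


def failure_rate(counts):
    """Share of failed sign-ins in one window, or 0.0 for an empty window."""
    total = counts["success"] + counts["failure"]
    return counts["failure"] / total if total else 0.0
```

Feeding the filtered events for one sign-in method into `aggregate` and watching `failure_rate` per window gives a simple self-built signal that can sit alongside the Microsoft-defined streams.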

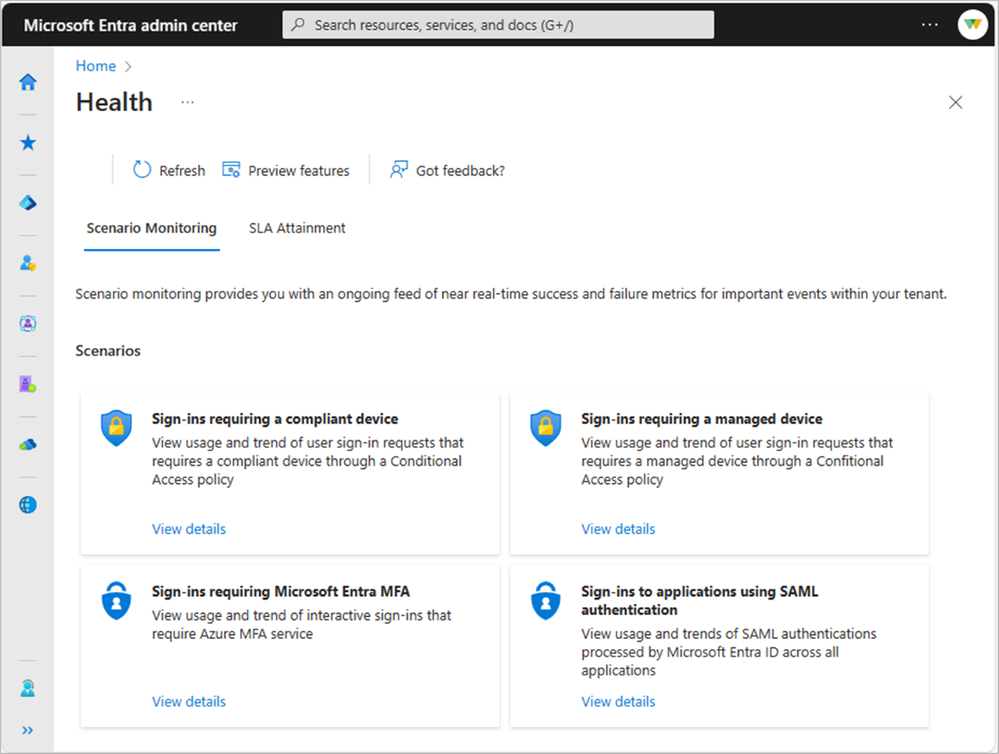

During the initial public preview offering, we’re releasing health metric streams related to maintaining highly available:

Multifactor authentication (MFA)

Sign-ins for devices that are managed under Conditional Access policies

Sign-ins for devices that are compliant with Conditional Access policies

Security Assertion Markup Language (SAML) sign-ins

We’re starting with authentication-related scenarios because they are mission critical to all our customers, but other scenarios in areas like entitlement management, directory configuration, and app health will be added in time along with intelligent alerting capabilities in response to anomalous patterns in the data. We’re publishing the health metrics in Microsoft Entra admin center, Azure Portal, and M365 admin center, as well as in Microsoft Graph for programmatic access and integration into other monitoring pipelines.

For more information about how to access the health monitoring metrics, visit the Microsoft Learn documentation.

Figure shows the Scenario monitoring landing page & the Sign in with MFA scenario details

Even as in-tenant observability improves, customers will still rely on traditional incident communications when Microsoft-side issues happen. Like all service providers, we push messages about incidents to affected customers and post service health announcements to a website and communications feed in Azure. However, when this approach relies solely on hand-crafted service monitors and human-driven communications, it has limitations. Customers are right to have concerns about the timeliness of communication and the monitoring coverage itself.

To address this challenge, we’re building increasingly sophisticated default monitoring packages attached to automated communications. The early results are promising. We’ve been able to bring times to notify customers about incidents down significantly, with service degradations and downtime being communicated within about 10 minutes of auto-detection. We’re also catching service degradations increasingly early by investing in monitoring, the results of which we track by watching customer-reported incident volumes.

The best incidents are the ones that never happen. Our goal is to find and mitigate problems before they impact our customers. So, in addition to these advances in detection and communication, we continue to prioritize building systematic resilience measures to prevent service degradations and outages or auto-mitigate them before they affect a customer environment. We will share more on this in a future blog.

To continuously improve our services in partnership with our customers, we’re combining improvements in our service-level safety net with tenant-level monitoring. We’re also expanding our monitored scenarios, boosting our out-of-the-box monitoring intelligence, and speeding up our communication. Plus, integration with Azure, M365, and Microsoft Graph ensures that Microsoft Entra observability can happen wherever it’s needed. Together, we’re making sure everyone can work securely and seamlessly.

With our already strong foundation of availability and resilience, security-enhancing recommendations, and mature service monitoring and incident communications, we’re excited to see these new capabilities take Entra health transparency to the next level.

Igor Sakhnov

CVP, Microsoft Identity & Network Access Engineering

Read more on this topic

Microsoft Entra resilience update: Workload identity authentication – Microsoft Community Hub

Learn more about Microsoft Entra

Prevent identity attacks, ensure least privilege access, unify access controls, and improve the experience for users with comprehensive identity and network access solutions across on-premises and clouds.

Microsoft Entra News and Insights | Microsoft Security Blog

Microsoft Entra blog | Tech Community

Microsoft Entra documentation | Microsoft Learn

Microsoft Entra discussions | Microsoft Community

Defender for Cloud Apps delivers new in-browser protection capabilities via Microsoft Edge

In today’s increasingly online and hybrid work environment, facilitating seamless work from any location and device is crucial, especially with the growing necessity to share data externally for enhanced collaboration. On the other hand, protecting your organization’s data and resources remains important. Additionally, the rising reliance on web browsers for enterprise tasks introduces new security challenges that require careful attention to keep data and apps secure.

To address these needs effectively, it is vital to provide centralized yet flexible solutions that empower users to control how their organization’s data is accessed, balancing protection with productivity. We are excited to deliver a new way to manage secure session access for SaaS apps. Microsoft Defender for Cloud Apps now provides new in-browser protection capabilities via Microsoft Edge to enable security teams to seamlessly manage how a user can interact with in-app data based on their risk profile. The in-browser protection removes the need for proxies, improving both security and productivity, based on session policies that are applied directly to the browser.

Depending on the risk associated with the user, such as when they are logging in from an unmanaged device, admins can restrict app access or create granular policies that prevent downloads, uploads, copying, cutting, or printing actions during a session. More importantly, protected users enjoy a smooth experience when using cloud apps without any impact on their productivity — through native integration with Edge, there are no latency or app compatibility issues, providing more flexibility in protecting your valuable data across SaaS apps.

Protect data across SaaS apps directly within Edge for Business

Microsoft Defender for Cloud Apps now enables session policies to protect data in motion within the Edge for Business browser as it traverses trust boundaries with detailed visibility into cloud app usage with real-time, session-level monitoring. This functionality is crucial for protecting data from SaaS apps such as SharePoint, Box, or Dropbox as it moves to managed or unmanaged devices within an organization.

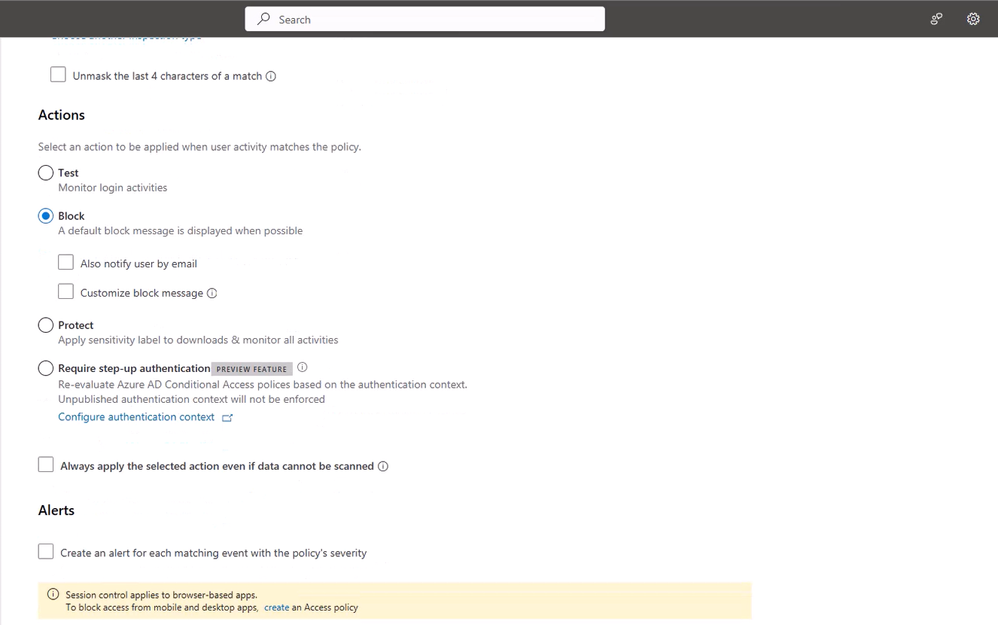

Session policies can be configured within the Microsoft Defender portal. Security admins can follow these steps to create a new session policy:

After you have created a conditional access policy that applies Defender for Cloud Apps session control, navigate to Cloud Apps -> Policies -> Policy management in the Microsoft Defender portal. Then select the Conditional access tab.

Click on Create policy and select Session policy.

In the Session policy window, assign a name for your policy, such as Block Download of Sensitive Documents in Box for Marketing Users.

Under the Session control type field, choose from the following options:

Select Monitor only if you only want to monitor activities by users. This selection creates a Monitor only policy for the apps you selected, where all sign-ins are monitored.

Select Control file download (with inspection) if you want to monitor user activities. You can take more actions like block or protect downloads for users.

Select Block activities to block specific activities, which you can select using the Activity type filter.

Figure 1: Creating a Defender for Cloud Apps session policy in Microsoft Defender portal

Seamless experience for both end users and admins

The integration of Defender for Cloud Apps with Edge for Business delivers a smooth and fast experience for both end users and admins, leveraging robust security controls from an enterprise-grade browser while streamlining workflows. The deployment is seamless for users, as this functionality is natively built into the Edge browser installed by default on the users’ Windows PC.

Once the admin establishes session policies, these policies are applied directly to the browser. For instance, admins can create session policies based on user risk profiles to prevent actions such as downloads, uploads, copying, cutting, or printing files. Specifically, when a user attempts to download a file containing sensitive credit card information from a SharePoint site via the Edge for Business browser, Defender for Cloud Apps will enforce the session policy to block this action. These restrictions are implemented seamlessly for users without affecting their productivity.

Additionally, for admins, the experience is equally seamless, requiring no additional configurations as it automatically utilizes the built-in controls of Edge for Business. If you are already using session policies today, there is no need to define new ones. The integration will work seamlessly and continue to serve 3rd party browsers through proxy while automatically using Edge after a user is signed into the work profile.

Figure 2: A block message from Defender for Cloud Apps to prevent the download of a sensitive file within the Edge browser

Users can identify that they’re using in-browser protection in Microsoft Edge for Business by the additional “lock” icon in the browser address bar as shown in the example below, indicating protection by Defender for Cloud Apps. Unlike standard conditional access app control, the .mcas.ms suffix does not appear in the browser address bar with in-browser protection, indicating that the Edge for Business browser is implementing security measures directly on the user’s device, which can provide reduced latency, tighter control, and better security.

The seamless integration of Microsoft Defender for Cloud Apps with an enterprise-grade browser ensures a safer, latency-free experience for end users. Simultaneously, security admins can effortlessly manage in-app access to SaaS applications and control user interactions with in-app data based on individual risk profiles. This integration strikes a crucial balance between protection and productivity in today’s dynamic workplace.

Learn more:

Read our documentation to get started with in-browser protection

Explore session policy in Defender for Cloud Apps documentation

Retrieving more than 30,000 records from Log Analytics Workspace using Azure Data Explorer

Introduction:

In the ever-evolving landscape of cloud computing, Log Analytics Workspace is used as a tool in Azure to collect logs, edit/run log queries and interactively analyze query results.

As organizations scale their infrastructure and applications, the volume of observability data naturally increases.

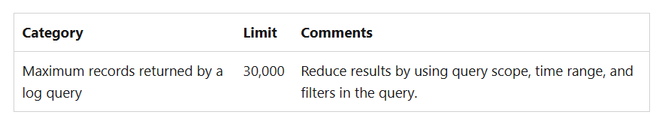

A query running in a Log Analytics workspace can return a maximum of 30,000 records. However, there are several instances where a huge amount of data needs to be extracted and analyzed. Some of the scenarios are:

Data over a long period of time: An organization needs several months of data, which is high in volume and number of records.

Monitoring Solution Design: Sometimes there is a need to capture all logs under one workspace for the Security/Compliance team to review. Hence, some organizations monitor all their subscriptions under one tenant in one workspace. This centralizes a huge volume of data in one workspace.

Larger Scope while querying data: Some organizations have monitoring data spread across several workspaces. A query can be run to fetch the data across workspaces. This results in a large number of records.

Because of the 30,000-record limit in the workspace, the same query has to be rewritten for shorter time ranges, run multiple times to get the data in batches, and the results combined at the end. Re-writing and re-running the query takes additional effort and more time than expected to fetch the logs.
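To make the cost of that batching workaround concrete, here is a minimal Python sketch of the time-window splitting it requires. It only generates the consecutive time ranges; running the query for each window and combining the results is left out. The function name and the choice of a fixed step size are assumptions for illustration.

```python
from datetime import datetime, timedelta


def split_time_range(start, end, step):
    """Yield consecutive (window_start, window_end) pairs covering [start, end)."""
    if step <= timedelta(0):
        raise ValueError("step must be positive")
    current = start
    while current < end:
        # The last window is clipped so it never runs past the requested end.
        upper = min(current + step, end)
        yield current, upper
        current = upper


# Example: a 90-day range split into daily windows means 90 separate query runs.
windows = list(split_time_range(datetime(2024, 1, 1),
                                datetime(2024, 1, 1) + timedelta(days=90),
                                timedelta(days=1)))
print(len(windows))
```

Each window still has to stay under the record limit on its own, so the step size often needs tuning by hand, which is part of the extra effort described above.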

To address this challenge and empower customers to query and fetch data in one go, the Azure Data Explorer service in Azure can be used.

What is Azure Data Explorer?

Azure Data Explorer is a high-performance platform that helps analyze high volumes of data in near real time. Azure Data Explorer provides an end-to-end solution for data ingestion, query, visualization, and management. It is ideal for enabling interactive analytics capabilities over high-velocity, diverse raw data.

Getting Started: Query data in Azure Monitor using Azure Data Explorer

1. Open https://dataexplorer.azure.com and click on “Query” in the left pane.

Then click on “Add+” and select “Connection”.

2. You will see an option to add a Connection. Enter the following values:

Connection URI: Put Log Analytics Workspace URI in the format of

Note: You will find the above details under Log Analytics Workspace -> Properties -> Resource ID

Display Name: The name of the workspace, or any other name you find convenient

Click Add to establish the connection between Azure Data Explorer and the workspace.

Once the connection is established, you can run a query and fetch the records. You need to select the database from the left pane before running the query.

Advantages:

Fetches records in one go, saving the time and manual effort of re-writing and re-running the query.

No overhead of creating a data cluster in Azure Data Explorer, which reduces cost and avoids a complex setup.

Delivers high performance across a wide variety and volume of data.

Allows a larger query scope and time range.

Microsoft Tech Community – Latest Blogs

Error with the animation of the surface plot

Hello

I want to perform a surface animation, but I constantly run into an error

Error using surf

Data dimensions must agree.

Error in surfc (line 54)

hs = surf(cax, args{:});

Error in dcSQUID_diode_efficiency_animation (line 26)

surfc(phi,theta,eff)

I would be grateful if someone could tell me where exactly I made a mistake in the code

function z1=dcSQUID_diode_efficiency_animation
tic
phi0=linspace(0.000,2*pi,100);
theta0=linspace(0.000,2*pi,100);
flux1=linspace(0,1,10);
h = figure;
axis tight manual
filename = 'dc_squid_eff.gif';
for ii = 1:10
    for i=1:100
        for j=1:100
            y=self11(phi0(i),theta0(j),flux1(ii));
            eff(i,j,ii)=y;
        end
    end
    [phi,theta]=meshgrid(phi0,theta0);
    surfc(phi,theta,eff)
    zlim([-0.5 0.5])
    clim([-0.5 0.5])
    colormap jet
    colorbar('FontSize',34,'FontName','Times New Roman')
    set(gca,'FontName','Times New Roman','FontSize',34)
    xlabel('phi','FontName','Times New Roman','fontsize',34,'fontweight','b');
    ylabel('theta','FontName','Times New Roman','fontsize',34,'fontweight','b');
    set(gca,'XTick',0:pi/2:2*pi)
    set(gca,'XTickLabel',{'0','pi/2','pi','3pi/2','2pi'})
    set(gca,'YTick',0:pi/2:2*pi)
    set(gca,'YTickLabel',{'0','pi/2','pi','3pi/2','2pi'})
    drawnow
    frame = getframe(h);
    im = frame2im(frame);
    [imind,cm] = rgb2ind(im,256);
    if ii == 1
        imwrite(imind,cm,filename,'gif', 'Loopcount',inf);
    else
        imwrite(imind,cm,filename,'gif','WriteMode','append');
    end
end
function z2=self11(phi0,theta0,flux1)
    rL1=1;
    rL2=1;
    rR0=1;
    rR1=1;
    rR2=1;
    x = -2*pi:0.001:2*pi;
    I_dc = cos(x./2).*atanh(sin(x./2))+rL1*cos((x+2*phi0)./2).*atanh(sin((x+2*phi0)./2))+rL2*cos((x+2*theta0)./2).*atanh(sin((x+2*theta0)./2))+...
        rR0*cos((x-2*pi*flux1)./2).*atanh(sin((x-2*pi*flux1)./2))+rR1*cos(((x-2*pi*flux1)+2*phi0)./2).*atanh(sin(((x-2*pi*flux1)+2*phi0)./2))+...
        rR2*cos(((x-2*pi*flux1)+2*theta0)./2).*atanh(sin(((x-2*pi*flux1)+2*theta0)./2));
    I_min=min(I_dc);
    I_max=max(I_dc);
    z2=(I_max-abs(I_min))/(I_max+abs(I_min));
end
toc

end surface plot, animation MATLAB Answers — New Questions

Why do I get “Error: The server response timed out” every time I try to review results in the Polyspace Access Web UI?

I installed and started Polyspace Access, and provided the path to the license file in the "Configure Apps" menu within the Cluster Admin dashboard.

I clicked "Restart Apps" and all the apps are running, including the Polyspace Access UI, and I uploaded results to Polyspace Access. I can see the results listed in the web UI, but when I try to review the results I get "Error: The server response timed out" every time.

There is no attempted license checkout https://www.mathworks.com/help/polyspace_access/install/configure-polyspace-access-license.html in the license manager debug log file. MATLAB Answers — New Questions