Category: News

Azure Red Hat OpenShift: Empowering Innovation at Red Hat Summit & AnsibleFest 2024

In less than a week, Azure Red Hat OpenShift (ARO), located in Booth 202, will highlight its latest updates at Red Hat Summit & AnsibleFest 2024 in Denver, CO, from May 6-9. At Red Hat Summit, where Microsoft is a platinum sponsor, Azure Red Hat OpenShift, which celebrates its 5th anniversary on May 7th, will be showcased alongside Microsoft Red Hat products and services. In this blog, we explore the significance of Azure Red Hat OpenShift at this event, provide an overview of what to expect at the summit, and give a quick tour of its capabilities.

Red Hat Summit 2024

The Red Hat Summit & AnsibleFest 2024 is a convergence of leading minds in open-source technologies, cloud computing, and automation solutions. It serves as a pivotal platform for industry leaders, developers, and IT (information technology) professionals to collaborate, learn, and network.

Additionally, AnsibleFest brings open-source experts together to exchange ideas on how to create, manage, and scale automation in ways that best address your challenges.

Why Azure Red Hat OpenShift?

Azure Red Hat OpenShift (ARO) stands out as a fully managed application platform, jointly engineered, managed, and supported by Microsoft and Red Hat. ARO seamlessly integrates the power of OpenShift with the scalability and flexibility of Azure, offering a robust cloud-native platform for developing, deploying, and managing applications. ARO streamlines operations, enhances security, and integrates seamlessly with Azure’s vast array of services. Additionally, it brings the following key benefits:

Case Study: Azure Red Hat OpenShift on Vinci Energies

Streamlined Application Development: By offering a comprehensive application platform with integrated developer tools such as CI/CD, observability, Service Mesh, etc., Azure Red Hat OpenShift streamlines the deployment of containerized applications, making it easier for organizations to manage their software stack efficiently and deploy applications quickly.

Simplified Operations: ARO automates various operational tasks like scaling, monitoring, and resource allocation, reducing manual effort and enhancing operational efficiency. With ARO, you gain access to global SRE expertise that automates deployments and day-to-day operations, alleviating the burden on internal teams, simplifying operations, and allowing them to focus on more strategic activities.

Enhanced Security: With built-in security features and robust access controls, ARO ensures that containerized applications and data are protected against threats.

Consistent Scalability Everywhere: ARO provides seamless scalability across hybrid and multi-cloud environments, allowing organizations to scale their applications as needed without disruptions.

Azure Sets ARO Apart with Consistent Scalability and Managed Services

Azure plays a pivotal role in Azure Red Hat OpenShift due to its comprehensive suite of cloud services and infrastructure capabilities, providing a robust and scalable foundation for hosting OpenShift clusters. This ensures high availability and low-latency connectivity critical for modern applications requiring seamless performance across geographies. Moreover, Azure’s integration with Azure Red Hat OpenShift simplifies hybrid cloud deployments, extending on-premises infrastructure seamlessly. Deep integration also facilitates advanced capabilities like Microsoft Entra ID authentication (formerly Azure Active Directory), Azure Monitor for monitoring and logging, and Azure DevOps for CI/CD automation, enhancing development and operational efficiency.

Case Study: Azure Red Hat OpenShift on Ortec Finance

Azure Red Hat OpenShift stands out as a fully managed platform covering infrastructure and daily operations, as validated by the Total Economic Impact™ (TEI) study commissioned by Red Hat. This study highlights the cost savings and benefits realized by organizations leveraging Microsoft Azure Red Hat® OpenShift®.

From the TEI of Azure Red Hat OpenShift 2024

The ARO platform is supported by a specialized global SRE team and backed by a 99.95% SLA. It offers enhanced security with automated patching and zero-downtime upgrades. A key strength is its consistency across hybrid cloud environments, providing a proactive platform for growth without interruptions. Beyond Kubernetes, ARO adds capabilities such as pipelines, security, service mesh, and more, streamlining application development and deployment. ARO is accessible within the Azure console as a first-party service with on-demand billing, consistent support, integration with Azure services, expanded deployment regions, certifications, regulatory compliance, and cost management against Azure spend.

Now, let us dive into the key sessions featuring Azure Red Hat OpenShift at Red Hat Summit & AnsibleFest 2024.

What is new and what is next with Red Hat OpenShift cloud services

Abstract: Discover the latest developments and upcoming trends in Red Hat OpenShift cloud services.

Date & Time: Tuesday, May 7, 4:00 PM MDT

Speakers: Brooke Jackson, Andrew Cathrow, Jerome Boutaud

Session Type: Breakout (Room 605/60 MM7 – Street level)

Adobe’s Kubernetes Journey: From fragmented adoption to future foundation

Abstract: In this session, we will explore Adobe’s 5-year Kubernetes journey from initial adoption to Ethos, its unified computing layer. We will delve into its role in platform engineering, covering various Kubernetes flavors on multiple clouds like Microsoft Azure Kubernetes Service (AKS), Amazon Elastic Kubernetes Service (Amazon EKS), and Red Hat OpenShift, including Microsoft Azure Red Hat OpenShift and Red Hat OpenShift Service on AWS.

Date & Time: Tuesday, May 7, 1:30 PM MDT

Room 201 – Street level

Abstract: Explore Goethe-Institut’s journey transitioning from legacy systems to ARO for improved workload management and cloud adoption.

Date & Time: Wednesday, May 8, 1:00 PM MDT

Speakers: Robin Walter, Sascha Beutler, Immo Goltz

Session Type: Breakout (Room 605/607 – Street level)

Securely operate and manage your RHEL and Red Hat OpenShift hybrid environments with Microsoft Azure

Abstract: Managing innovation, security, compliance, and cost in hybrid environments is a complex task for IT and DevOps teams. This session will demonstrate how Azure Arc manages on-premises environments efficiently, covering policy, configuration, updates, and security. We will also provide a preview of AI-driven operations using Microsoft Copilot for Azure in Red Hat setups.

Date and Time: Wednesday, May 8, 2:15 PM – 3:00 PM MDT.

Room 605/607 – Street level

Speakers: Jane Yan, Ryan Willis, Satya Vel

Accelerating application development and deployment with Azure Red Hat OpenShift and Azure DevOps

Abstract: Learn how ARO and Azure DevOps accelerate application development and deployment with built-in DevOps services.

Date & Time: Wednesday, May 8, 4:00 PM MDT

Speakers: Jerome Boutaud, Courtney Grosch, Mayuri Gupta

Session Type: Lightning talk (DevZone – Expo Hall)

Azure Red Hat Summit Demos

At our Azure Red Hat OpenShift (ARO) demo booth, customers will experience a comprehensive showcase of ARO’s capabilities. Demos include “ARO: Who does what?” for operational clarity and “Cost Management on ARO” for resource optimization. Other demos cover Integration with Azure Services, Hybrid Cloud Deployment, DevOps Automation, Scaling, Security, and Machine Learning Integration. These demos illustrate ARO’s versatility in seamlessly integrating with Azure services, deploying hybrid cloud solutions, automating DevOps processes, scaling applications, monitoring performance, ensuring disaster recovery, maintaining security, and leveraging AI for intelligent applications.

Red Hat Central Demos

While at the Summit, be sure to visit the OpenShift booth to meet our Azure Red Hat OpenShift experts in person, ask questions, and experience demos highlighting multi-cloud consistency, building AI-enabled applications, and integrating Azure tools such as Azure DevOps with Azure Red Hat OpenShift. You can find our Azure Red Hat OpenShift experts throughout the event, and they will be at the OpenShift booth on Wednesday from 9:30 to 11:30 for “Ask the Experts.”

The sessions at Red Hat Summit & AnsibleFest 2024 underscore the transformative impact of Azure Red Hat OpenShift and its integration with Microsoft Azure, Red Hat technologies, and automation solutions.

If you are planning to attend, be sure to join the sessions listed above, network with experts, and unlock new possibilities for innovation and automation at Red Hat Summit & AnsibleFest 2024.

See you at the Summit!

Be on the lookout on May 2nd for ARO news: How do You Operationalize Generative AI Consistently and Scale

Click here for more information on Azure Red Hat OpenShift

Click here to see all the Microsoft Red Hat product line up at Red Hat Summit

Additional Azure Red Hat OpenShift Resources:

ARO spotlight: Total Economic Impact of OpenShift cloud services

Ortec Finance customer video

Campari Group customer story (published by IBM)

ARO learning path: getting started with ARO

ARO Interactive walkthrough

Many demos on ARO lightboard video page

Four benefits of Azure Red Hat OpenShift checklist

Microsoft Tech Community – Latest Blogs –Read More

Make myself not bookable

I am creating calendars for various campaigns, and assigning different team members to the calendars. This results in me appearing as a staff member in each calendar that I create and administer. I don’t want to be available – I want to set myself to be not bookable. The help I have found says that I can set myself to not available, which is not what I want. I want my name to not appear at all.

How do I toggle myself to become “not bookable?” This seems like an incredibly basic feature that should have been implemented ages ago, so I’m sure it’s possible and I just can’t find it.

How do I change this:


Work Teams admin – close one to one conversations org wide.

I have been asked by my CEO to update our organization’s Teams so that users can no longer create one-to-one chats with other users in our organization, limiting them to established teams or groups.

I see where I can update this in the messaging policy by enabling or disabling the “Chat” and “Chat with Group” functions. However, it states that chats that existed before the option was disabled will still function. Is there a way on the back end, either through the admin center or via PowerShell scripting, to go through each user in the organization and force them to leave their one-to-one conversations?

I was looking through the PowerShell commands and experimenting, but I couldn’t find one that does this.

https://learn.microsoft.com/en-us/powershell/module/teams/get-teamuser?view=teams-ps


EXCEL FORMULAS

Hey everyone.

I have daily data that excludes weekends and public holidays. When I calculate an annual percentage change, one of the two dates may fall on a weekend or public holiday, so there is no data for it and the formula returns an error. I would like an Excel formula that calculates the percentage change normally when both the current date and the date one year earlier have a value. If not, the most recent earlier date with data should be used as the substitute. For example, if last year’s date is a Saturday and there was data on Friday, the Friday data should be used.

I hope I can get guidance on which formula to use.
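
The fallback rule described above can be pictured as a short sketch. This is Python rather than an Excel formula, and it only illustrates the lookup logic; the function names and the sample figures are made up for the illustration. It assumes the dates are sorted in ascending order and that 29 February should fall back to 28 February of the previous year.

```python
from bisect import bisect_right
from datetime import date


def value_on_or_before(dates, values, target):
    """Return the value for the latest date <= target, or None if there is none.

    `dates` must be sorted ascending and aligned with `values`.
    """
    i = bisect_right(dates, target)
    return values[i - 1] if i else None


def annual_pct_change(dates, values, current):
    """Percentage change between `current` and the same day one year earlier."""
    try:
        last_year = current.replace(year=current.year - 1)
    except ValueError:
        # 29 February has no counterpart in the previous year (assumed rule).
        last_year = current.replace(year=current.year - 1, day=28)
    now = value_on_or_before(dates, values, current)
    then = value_on_or_before(dates, values, last_year)
    if now is None or then is None or then == 0:
        return None
    return (now - then) / then * 100


# Made-up sample: Friday 5 May 2023 has data, Saturday 6 May 2023 does not.
dates = [date(2023, 5, 4), date(2023, 5, 5), date(2024, 5, 6)]
values = [100.0, 110.0, 121.0]
change = annual_pct_change(dates, values, date(2024, 5, 6))
print(change)
```

Here the lookup for 6 May 2023 lands on the Friday value, so the change is computed against 110. In a worksheet, the same "latest date on or before" idea is usually expressed with an approximate-match lookup on a sorted date column.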


Conditional Formatting – Highlight data based on a cell input value

Hi,

I’m trying to make a sheet more user friendly. I can’t seem to find any existing threads on the topic, so I might be using the wrong keywords to search. Basically, I have a list of individuals. To make it more user friendly, I have an input cell (G1). If I type Bob into G1 I’d like Bob to be highlighted in cells B2:D6.

Can anyone please provide some guidance on how to write the Conditional Formatting formula?

Out of curiosity, how would the formula differ if I wanted to highlight the row that Bob shows up in?

Thanks in advance.


API Key copilot-pro

I am a Computer Science student at the UPC and I am doing my final degree project. My job is to develop an application to help future university students choose a career.

For this, my project managers bought the copilot-pro and windows365 with the aim of customizing it. The idea is to develop a native App that communicates with the copilot-pro by sending questions and capturing their responses. I have been trying to make this communication for weeks and have not been successful. It seems that I require my copilot’s API Key but I can’t find out or have anyone tell me what it is or how to do it. On the other hand, the FAQ says that copilot does not have an API.

More precisely, I can’t obtain an API token from the copilot-pro service for the application I’m developing. I have asked Copilot itself, and each time it either sends me to Open.ai or refuses to give me a token. Other times I have been sent to the Copilot configuration page, but that page either does not exist or I cannot find it. My goal is to link a custom GPT with my application so that I can make chat queries from the application interface.

My question is how can I carry out this communication between my native App and the copilot-pro? Is it necessary to buy another Microsoft product or extension to do it?

Another problem I encounter is that copilot-pro has been updating a lot in recent months and the information available is usually obsolete and since there is no way to communicate with specialized Microsoft technical support, it becomes very difficult to do anything that is not basic.

I will be very grateful for any help and/or suggestions you can give me to solve this problem and be able to move forward with my project.


Project Resource Pop-Up

Is there a way to turn off the resource pop-up window that appears when moving the mouse over a resource in a project? This occurs in the Gantt Chart view of a project in the Microsoft Project Online Desktop Client version.


New REST API and Bing Ads SDK May 2024 Release (V13.0.20)

We are excited to release Bing Ads SDK 13.0.20 for Java and .NET, which includes performance improvements such as lower service call latency and reduced network traffic. This allows you to make service calls faster and at a lower cost.

Improvement from previous SDK version

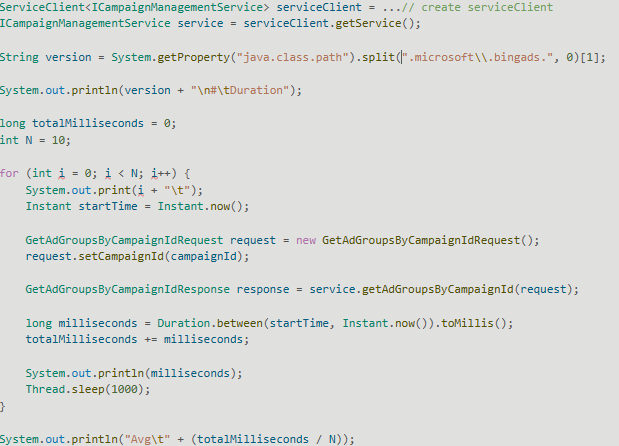

For example, when we run the following test to query ad groups by campaign Id for 1000 ad groups, the previous SDK version 13.0.19.1 has an average call duration of 284 ms:

When running the same code with the new SDK version 13.0.20, the average call duration is reduced to 67 ms. This is a 75% improvement from the previous version.

| Run | v13.0.19.1 duration (ms) | v13.0.20 duration (ms) |
| --- | --- | --- |
| 0 | 425 | 79 |
| 1 | 326 | 73 |
| 2 | 304 | 66 |
| 3 | 262 | 62 |
| 4 | 264 | 63 |
| 5 | 253 | 70 |
| 6 | 270 | 65 |
| 7 | 257 | 69 |
| 8 | 245 | 61 |
| 9 | 240 | 64 |
| Avg. | 284 | 67 |

Reduced response size

We have also reduced the response size. For the example above, it is reduced from 784 KB to 8.4 KB, which is a 99% improvement.
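
As a quick check of the arithmetic, the sketch below recomputes the averages and relative reductions from the ten durations and the two response sizes quoted in this post. The helper name `reduction_pct` is ours, not part of the SDK.

```python
from statistics import mean

# Per-call durations in milliseconds, copied from the measurements above.
old_ms = [425, 326, 304, 262, 264, 253, 270, 257, 245, 240]  # v13.0.19.1
new_ms = [79, 73, 66, 62, 63, 70, 65, 69, 61, 64]            # v13.0.20


def reduction_pct(before, after):
    """Relative reduction from `before` to `after`, in percent."""
    return (before - after) / before * 100


old_avg = mean(old_ms)
new_avg = mean(new_ms)
latency_cut = reduction_pct(old_avg, new_avg)
size_cut = reduction_pct(784, 8.4)  # response size in KB

print(f"average: {old_avg:.1f} ms -> {new_avg:.1f} ms ({latency_cut:.1f}% lower)")
print(f"size: 784 KB -> 8.4 KB ({size_cut:.1f}% smaller)")
```

Computed this way, the latency reduction comes out slightly above the rounded 75% quoted above, and the size reduction rounds to the stated 99%.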

You can expect similar improvements for other API method calls as well, especially ones that operate on many entities or return large amounts of data.

New REST API

These improvements are made possible by internally switching the SDK from the existing XML-based SOAP API to a new JSON-based REST API. This SDK release switches Campaign Management, Bulk, and Reporting services to the new API, and other services will be switched in future releases.

Upgrading to the new SDK

Given the significant internal changes we had to make to achieve these improvements, we recommend that you test and monitor your application when upgrading to this version to make sure your application is compatible with the implementation changes and dependencies introduced in this release.

We have also provided an easy way to switch Bing Ads SDK back to the old implementation in case any issues arise when using the new implementation. For more details on that and other information regarding the upgrade process, please see:

Upgrade to Bing Ads Java SDK 13.0.20

Upgrade to Bing Ads .NET SDK 13.0.20


Load DICOM image, binarize, and save a new DICOM image

I load a DICOM Image and binarize it.

Then I save a new dicom image. However, the saved image is only black, and not black and white.

Here is the code:

    % d is assumed to hold a directory listing (for example from dir); the post does not define it.
    for i = 1:length(d)
        na = d(i).name;
        TF = startsWith(na, 'Tumour');
        if TF == 1
            info = dicominfo(na);          % metadata of the source image
            greyImage = dicomread(info);   % pixel data
            BW = imbinarize(greyImage);    % logical mask (0/1)
            fname1 = strcat('bin_', na)
            dicomwrite(BW, fname1, info);  % written with the original metadata
        else
            if TF == 0
                fprintf('File do not match')
            end
        end
    end

dicom MATLAB Answers - New Questions

Installing the Simscape Multibody Link plug-in fails in MATLAB R2023a

I ran the command

>> installaddon('smlink.r2023a.win64.zip')

and the installation reported:

Installing smlink.r2023a.win64…

Error using installaddon

Archive architecture () does not match the MATLAB architecture (win64).

Installation of smlink.r2023a.win64 failed.

co-simulation; plug-in installation

Conflict status after having 2 Local user group membership Policy

Hello,

I have an issue with applying two “Local User Group Membership” policies to a PC. The Intune policy report shows a conflict between the two “Local User Group Membership” policies even though they have different configurations. For example, one is a global policy that applies an admin privilege to all PCs, and the other is specific to a certain group and only grants remote access to the PCs in that group. So, my question is: why does Intune mark these two policies as conflicting with each other? And if it is not possible to apply two “Local User Group Membership” policies to the same PC, is there a way to have a global policy for admin users plus a more specific policy for remote user access using “Local User Group Membership”?


Compare our offerings for partners: Explore our new partner benefits packages

Find the offerings you need to create customer-centric, AI-powered solutions for your customers at any stage of business growth.

Read more here


VBA to read and sort based on information in cells

Hi All,

Looking for help sorting some data in Excel 365. We’ve been able to get close using formulas and helper columns but have hit a bit of a brick wall. Below is a small sample of the data we are sorting.

We currently group and sort Paint_Color, Category, and Part Number prefix/suffix manually using formulas, helper columns, and the Sort function in the Data ribbon.

We’d like to automate this using VBA and expand the sorting functionality to look at each Description, correctly interpret the numerical and dimensional values therein, and sort largest to smallest, progressively from the left most numerical/dimensional value to the right most.

We tried adding this to our manual sorting by breaking the description down into a series of helper columns: extracting the numerical/dimensional values, then separating them using “ X “ as the delimiter. Unfortunately, Excel treated our fractional dimensions as dates and read our architectural dimensions (which contain a hyphen between the feet and inches) as feet MINUS inches.

The goal is to automatically group and sort the line items without having to manipulate the data in the cells.

Ideally we’d like to achieve 100% automatic sorting. However, given the irregular nature of our descriptions, we realize some descriptions may get missed or misread.

In that case, a code which would catch as much as possible, then allow for manual renumbering in the Item column to reposition those items which didn’t take, would be a major help.

Is there a way to handle this, using VBA to break apart and interpret the description within a reasonable shot?

Thank you, Nathan
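
To make the parsing problem concrete, here is a rough sketch of the interpretation step, written in Python rather than VBA. It is not based on the actual data: the dimension spellings it accepts (`12`, `0.75`, `3/4`, `1 1/2`, `2'-6"`, `10'-3 1/2"`), the sample strings, and the function names are all assumptions. It also assumes the dimension part of each description has already been isolated and uses " X " as the separator. Each value is converted to exact inches as text, so nothing is ever read as a date or as a subtraction, and anything that cannot be parsed is set aside for manual renumbering.

```python
import re
from fractions import Fraction


def to_inches(token):
    """Convert one dimension such as 10'-3 1/2" to inches as an exact Fraction."""
    token = token.strip().rstrip('"')
    feet = Fraction(0)
    if "'" in token:
        feet_part, token = token.split("'", 1)
        feet = Fraction(feet_part.strip())
        token = token.lstrip("-").strip()  # the hyphen is a separator, not a minus
    inches = sum((Fraction(part) for part in token.split()), Fraction(0))
    return feet * 12 + inches


def sort_key(dimensions):
    """Tuple of inch values, left to right, for a string like 1/2 X 6 X 10'-0"."""
    return tuple(to_inches(tok) for tok in re.split(r"\s+[xX]\s+", dimensions.strip()))


def sort_largest_first(items):
    """Return (sorted items, items that could not be parsed)."""
    parsed, review = [], []
    for item in items:
        try:
            parsed.append((sort_key(item), item))
        except (ValueError, ZeroDivisionError):
            review.append(item)  # left for manual renumbering
    parsed.sort(key=lambda pair: pair[0], reverse=True)
    return [item for _, item in parsed], review


# Made-up sample strings, not the poster's data.
items = [
    "1/2 X 6 X 10'-3 1/2\"",
    "3/4 X 4 X 8'-0\"",
    "1/2 X 6 X 12'-0\"",
    "MISC HARDWARE",
]
ordered, review = sort_largest_first(items)
print(ordered)
print(review)
```

Comparing tuples sorts by the leftmost value first and moves right only on ties, which matches the "left most to right most" requirement. The same structure (split, convert to a number, compare) could be ported to a VBA routine that writes the converted values into hidden helper columns.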


using calculated fields instead

I have various formulas that take the difference between the two months if the issuer shows up in both quarters.

Is it possible to set these up as calculated fields instead? I need the formulas to be dynamic, as the dates will change every quarter.

(I scrubbed out the issuer names and they’re now unique, which is why no issuer appears in both quarters.)


Filter or Formula for second to last cell

Dear all,

I have tried to find a solution for my challenge, however I haven’t managed to find the right answer.

In an Excel sheet, I have multiple rows with data divided over different amounts of columns. The only thing these rows have in common, is that the second to last filled cell in each row has a date (dd/mm/yyyy or dd-mm-yyyy).

I need a formula that shows this date. In other words, I need a formula that copies the second to last filled cell of each row into a column.

To visualize my question: looking at the table below; is there a formula (or other function) that automatically copies the dates from the yellow cells into the first (yellow) column?

Many Thanks!
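
Stated as logic rather than as a worksheet formula, the request amounts to the small sketch below. It is Python for illustration only; the sample rows are invented and "filled" is assumed to mean neither empty text nor a missing value.

```python
def second_to_last_filled(row):
    """Return the second-to-last non-empty cell of `row`, or None if fewer than two are filled."""
    filled = [cell for cell in row if cell not in (None, "")]
    return filled[-2] if len(filled) >= 2 else None


# Invented sample rows with different numbers of filled columns.
rows = [
    ["A", "B", "01/02/2024", "done", "", ""],
    ["A", "15-03-2024", "open", None, None, None],
    ["only one", "", "", "", "", ""],
]
result = [second_to_last_filled(r) for r in rows]
print(result)
```

A worksheet version would follow the same two steps: keep only the non-blank cells of the row, then take the item one position before the last.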


Open MS Project file in Project Plan 3

Hello,

My company is switching over to the online Plan 3 version of Project. Some folks in our company still have MS Project on their computers. Is there any way to open a .mpp file from my computer or my OneDrive using the online Plan 3 version?

I was given Plan 3 but only see the ability to start a new plan or open a plan shared with me.

Thank you,

Sara


MacOS Alias breaks when OneDrive syncs

I work on two computers and use OneDrive to sync between them. When I create a macOS alias, the alias breaks once it syncs to the cloud. The alias links to other files in OneDrive. Is there a better way to create aliases in OneDrive, or a reason they break in OneDrive?


Using Speech to text in Android & iOS App

I have to extract text from audio files (which are extracted from a video). Does this support mp3? The audio files can be long. Should I use the SDK or the REST API?


Protecting Containers: A Primer for Moving from an EDR-based Threat Approach

Many security teams are familiar with an EDR-based approach to security. However, container protection within their cloud ecosystem can seem much more challenging and complex.

Protecting containers requires an understanding of the complete attack surface that containers expose–whether you are running them using an orchestrator like Kubernetes or locally using Docker.

In this article, we will describe the attack surface, how it compares and aligns with the security technologies you might already have, and then make the case for a stronger focus on pre-deployment protections, adding to standard EDR post-deployment detections.

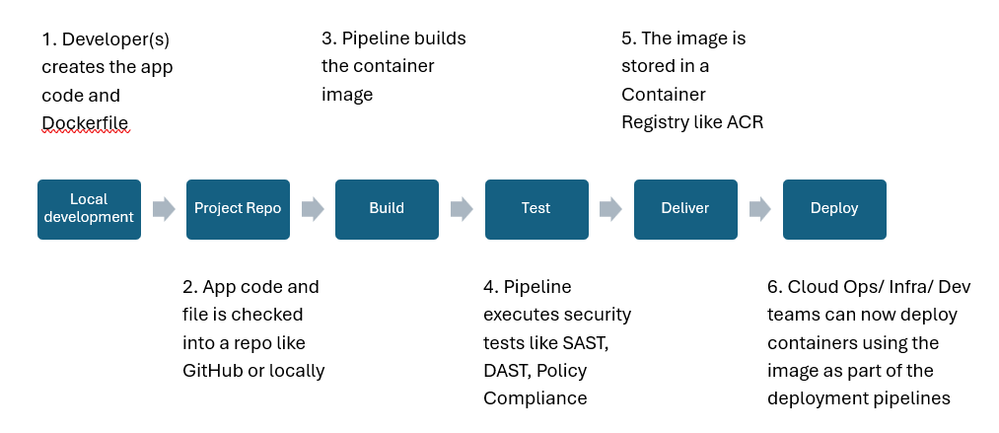

Let’s start by looking at the container-based CI/CD deployment process that we will use in the article. We will discuss security controls (preferring Cloud Native) that you may need at each phase.

Note: This is a simplistic pipeline that you can customize. The idea here is to focus more on the foundational concepts related to container driven development/deployment.

Fig. Container driven development and deployment pipeline

How Does Container Security Compare to Modern Work Security?

In general, we look to EDR to provide threat and anomaly detections and to take actions such as automated attack disruption. (Automatic attack disruption in Microsoft Defender for Business – Microsoft Defender for Business | Microsoft Learn)

We can also consider, earlier in the attack lifecycle, how to reduce the attack surface on physical and virtual assets (including mobile devices, laptops, workstations, and servers) with AV components such as Attack Surface Reduction (ASR) rules (Use attack surface reduction rules to prevent malware infection – Microsoft Defender for Endpoint | Microsoft Learn), which prevent attacks by blocking common entry points. Additionally, Microsoft Edge and Defender AV can detect and block “potentially unwanted applications” (PUA) (Block potentially unwanted applications with Microsoft Defender Antivirus – Microsoft Defender for Endpoint | Microsoft Learn).

When we think about the purpose of EDR on a system, consider when, within the MITRE kill chain, defenders typically look to this solution to take effect. Some of the benefits include (not exhaustive):

Telemetry from the endpoints (users and servers). For containers, however, an EDR solution needs to be aware of the containerization technology in use and of runtimes such as containerd.

Threat Detection: EDR needs to be sophisticated enough to detect container-specific attacks (see the MITRE Containers Matrix linked later in this article).

Compliance: When you run containers on a Docker host, an EDR can help identify security weaknesses in that host (https://learn.microsoft.com/en-us/azure/defender-for-cloud/harden-docker-hosts).

The Importance of Shifting Left, In General

As we shift left in our threat driven approach to security, even with traditional solutions, managing vulnerabilities and misconfigurations is a logical step “left” in the kill chain, i.e., not just locking doors, but adding locks and fixing cracks on the small, hidden windows which might be attractive to an attacker with determined, malicious intent.

For a continued healthy security posture at scale, we can automate some of the post-breach activities. This reduces time commitments and shifts our focus to blocking or updating vulnerable applications, fixing over-privileged browser extensions, addressing weak or self-signed certificates, and applying configuration baselines, among other activities (many of which can also be automated).

Since modern workers draw their applications from a massive library of republished SaaS solutions, shifting left can be a tall task for security teams without automation and prioritization. Therefore, we layer on a threat-driven approach to prioritization, drawing on Microsoft’s visibility into the threat landscape, so organizations can first mitigate those vulnerabilities and misconfigurations that are accessible, exploitable, and could lead to a breach of sensitive or proprietary data.

This technology has recently been described in the market as “XSPM.” With Microsoft’s native end-to-end approach, we call this “exposure management” (https://techcommunity.microsoft.com/t5/security-compliance-and-identity/introducing-microsoft-security-exposure-management/ba-p/4080907).

The Intricacies of Securing Containers Versus Endpoints or VMs Alone

Modern Work security often relies on securing proprietary applications that are deployed on end user devices. As a result, the attack vectors, corresponding techniques, and attack surface are very different from a container-based Enterprise Application. Refer to MITRE Containers Matrix: https://attack.mitre.org/matrices/enterprise/containers/

vs. MITRE Windows Matrix:

https://attack.mitre.org/matrices/enterprise/windows/

If you are using Kubernetes you should also consider https://microsoft.github.io/Threat-Matrix-for-Kubernetes/ (we will not do a deeper dive on Kubernetes in this article)

Container applications certainly complicate matters for security teams whose task is to reduce risk for the businesses they protect. Containers don’t follow the same rules as modern work environments when it comes to the existing threat landscape.

Since the purpose of using containers is efficiency, bundling application code with its dependencies for seamless, repeatable, and fast deployment at scale, protection must also support these business goals.

Does EDR Provide Any Protection for Containers?

Containers are inherently different from the end user’s SaaS driven assets because they are, by definition, DevOps assets–as we see from the figure above. Container images may be built with custom code (potentially embedded secrets) while also drawing from libraries of pre-built (and therefore potentially vulnerable) binaries.

Therefore, “shifting left” takes on new meaning and requires a process driven DevOps or “code-to-cloud” approach to security.

Considering our earlier EDR-based methods for securing and protecting, we’ll observe that containers are, at their essence, processes, running with their own potentially configured network isolations (port controls). At runtime, they do utilize the VM kernel. The image will have required application binaries as well.

So, it follows that EDR could detect certain “broken rules” even in newly built container apps: anomalies would be detected if the app begins to act outside the normal bounds for an application. One example is signals related to “Create or Modify System Process” https://attack.mitre.org/techniques/T1543/

A capable EDR solution like Microsoft’s Defender for Endpoint (MDE) will cover several of these Techniques.

Additionally, as mentioned above, Defender for Servers P2 provides a set of Docker hardening recommendations aligned with the Center for Internet Security (CIS) Docker Benchmark.

Does EDR Provide Enough Protection for Containers?

But here, also, is where the phrase “too little, too late” comes to mind, as containers are meant to deploy, shut down, and redeploy at scale at runtime.

Allowing EDR to kill a process to disrupt potential attacks might also mean shutting down entire business apps at scale, thereby disrupting the balance of risk versus business requirements. So EDR, though important on the host, won’t be enabled with all of its powerful end-user focused capabilities for container hosts and clusters.

Additionally, EDR might not be aware of the application libraries present in the containerized applications.

How Should Containers Be Secured Then?

Therefore, to properly reduce business risk, defenders, again, need to “shift left,” in this case, ensuring security as the image is being developed.

Like the concept of a layered approach for modern work, defense in depth means reducing the attack surface earlier in the kill chain and utilizing protective and detective tools for the entire kill chain. For instance, in end-user environments, you might use Defender for Office for anti-phishing policies and to paint the full picture of potential phishing or malware in Teams before it ever touches the endpoint. You’ll look to Defender for Identity and Entra ID to mitigate identity risks and add detections such as lateral movement, and to Defender for Cloud Apps to create SaaS app usage policies and alert on things like unusual addition of credentials to OAuth apps.

We will need a similar suite of tools for containers based on how they work. Cloud Native solutions like Defender for Cloud provide a suite of capabilities that help you centrally achieve defense in depth.

Linting in the developer IDE as the application and Dockerfile are built (https://docs.docker.com/develop/security-best-practices), for example with the Dockerfile linter hadolint: https://github.com/hadolint/hadolint/releases

Running Static Tests as the code is checked in to repos like GitHub https://techcommunity.microsoft.com/t5/microsoft-defender-for-cloud/microsoft-defender-for-devops-github-connector-microsoft/ba-p/3818803

Running Dynamic Application Security Tests (DAST) as the code is deployed in a test environment–like a test AKS Cluster or temporary Docker Host. These are language agnostic and can be automated in a CI/CD pipeline, automated on a schedule, or run independently by using on-demand scans.

Image vulnerability scanning as the pipeline uploads the image to a container registry like Azure Container Registry (ACR). Cloud Native solutions like Defender for Cloud integrate natively with the registry and, as a result, make this process frictionless (https://learn.microsoft.com/en-us/azure/defender-for-cloud/agentless-vulnerability-assessment-azure).

Once the application is deployed on the VM or Kubernetes Cluster, you will have EDR type technologies to monitor the container’s activities. If you are leveraging Kubernetes the solution should also protect against these techniques https://microsoft.github.io/Threat-Matrix-for-Kubernetes/. Defender for Containers, for example, provides coverage (https://learn.microsoft.com/en-us/azure/defender-for-cloud/alerts-reference#deprecated-defender-for-containers-alerts)
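To give a feel for what the linting step at the start of this list catches, here is a minimal sketch in Python. It is not hadolint and does not reproduce its rule set. The two checks (a base image without a pinned tag, and a final stage that runs as root) and the function name `lint_dockerfile` are assumptions chosen for illustration.

```python
def lint_dockerfile(text: str) -> list[str]:
    """Return human-readable findings for a Dockerfile passed as a string.

    Illustrative only: references to earlier build stages (FROM builder)
    are not resolved and would be reported as untagged images.
    """
    findings = []
    last_user = None
    for number, raw in enumerate(text.splitlines(), start=1):
        parts = raw.strip().split()
        if not parts or parts[0].startswith("#"):
            continue
        instruction = parts[0].upper()
        if instruction == "FROM":
            last_user = None  # USER applies per build stage
            # Skip flags such as --platform=linux/amd64.
            image = next((p for p in parts[1:] if not p.startswith("--")), None)
            if image is None or image.lower() == "scratch":
                continue
            name = image.rsplit("/", 1)[-1]
            pinned_by_digest = "@" in image
            if not pinned_by_digest and (":" not in name or name.endswith(":latest")):
                findings.append(f"line {number}: pin a specific tag or digest for {image}")
        elif instruction == "USER" and len(parts) > 1:
            last_user = parts[1]
    if last_user is None or last_user.split(":")[0] in ("root", "0"):
        findings.append("final stage runs as root; add a non-root USER")
    return findings


if __name__ == "__main__":
    for finding in lint_dockerfile("FROM python:latest\nRUN pip install flask\n"):
        print(finding)
```

A real linter covers far more rules; the point is only that these checks run on the developer's machine, before an image is ever built or pushed.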

Many other controls also apply to securing the pipeline, such as securing Kubernetes RBAC and ensuring images are pushed to and pulled from private repositories.

Summary

We saw that Container Security requires a holistic approach and simply relying on the traditional tools you use for securing your Modern Workspace will not suffice.

Cloud Native solutions like Defender for Cloud provide you with capabilities that allow centralized enforcement of layered security.

Microsoft Tech Community – Latest Blogs –Read More

Revisiting Enterprise Policy as Code v10

As EPAC has reached version 10, it is time to revisit Enterprise Policy as Code (EPAC for short) to give you an update from the original post (https://techcommunity.microsoft.com/t5/core-infrastructure-and-security/azure-enterprise-policy-as-code-a-new-approach/ba-p/3607843) published on September 12th, 2022.

The maintainers of the OSS project EPAC work daily with Microsoft’s customers implementing Azure governance and security in general and, more specifically, Policy implementation via EPAC. EPAC was born out of the need to manage Policy at scale while dramatically reducing the cost of implementation compared with traditional Infrastructure as Code (IaC) tools such as ARM, Bicep, and Terraform. Those tools are great for IaC in general; however, they lack knowledge of the dependencies between definitions, assignments, exemptions, and role assignments, and they lack EPAC’s simplifications for Policy Assignments and Policy Exemptions. EPAC understands the dependencies and will sequence the deployment correctly.

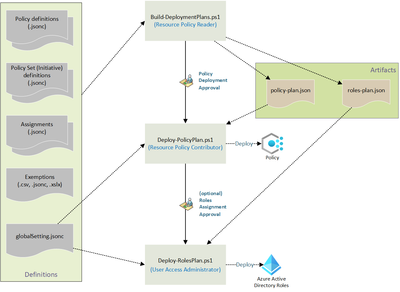

EPAC consists of PowerShell scripts and a starter kit:

Deployment scripts to create deployment plans, deploy the created Policy plan, and deploy the created role assignment plans. They can be executed manually (not recommended) or by any CI/CD tool capable of running PowerShell Core.

Scripts for operational tasks related to Policy, for example: creating remediation tasks at scale, extracting documentation, etc. Note: I’m not covering them in this article.

Hydration scripts, for the initial setup of EPAC. This is a work-in-progress. One of the scripts can extract existing Policy resources from Azure tenants in EPAC format to enable a smooth transition to EPAC.

Starter kit contains sample pipelines/workflows for Azure DevOps and GitHub.

For the details, please follow these links:

Documentation: https://aka.ms/epac.

PowerShell module in the PowerShell Gallery: https://www.powershellgallery.com/packages/EnterprisePolicyAsCode

GitHub repository with the source code: https://github.com/Azure/enterprise-azure-policy-as-code

Blog Posts:

Azure Enterprise Policy as Code – A New Approach (the original post): https://techcommunity.microsoft.com/t5/core-infrastructure-and-security/azure-enterprise-policy-as-code-a-new-approach/ba-p/3607843

Azure Enterprise Policy as Code – Azure Landing Zones Integration: https://techcommunity.microsoft.com/t5/core-infrastructure-and-security/azure-enterprise-policy-as-code-azure-landing-zones-integration/ba-p/3642784

Infrastructure as Code Testing with Azure Policy: https://techcommunity.microsoft.com/t5/core-infrastructure-and-security/infrastructure-as-code-testing-with-azure-policy/ba-p/3921765

Azure Policy Recommended Practices: https://techcommunity.microsoft.com/t5/core-infrastructure-and-security/azure-policy-recommended-practices/ba-p/3798024

Alexey Nazarov is starting a new series on Azure Policy. You can find the first entry: link will be added here when it is published.

Getting started

Decide on your approach!

EPAC is extremely flexible: you can implement any Policy development workflow, branching strategy, CI/CD tool, and organizational structure for single-tenant and multi-tenant scenarios. The key decisions are:

Consume EPAC as a PowerShell module, or by forking the GitHub repo.

Implement GitHub flow (simple) or Release flow (allows for staged deployment of changes) as your CI/CD and branching approach.

One centralized team (recommended) or multiple teams (by function, and/or hierarchical) managing Policy.

Handling existing Policy implementation by

Exporting them into the EPAC repository and subsuming all existing Policies into EPAC (recommended)

Enabling co-existence with the desired state strategy set to owned only. Owned only should be used for a short transitional period (weeks); keeping it longer leads to increasing difficulty managing your Policy deployments.

Implementing EPAC

Create an empty git repository in your favorite source control tool.

Use the hydration kit or manually create the Definitions folder.

Populate the Definitions

From scratch (see starter kit)

Export of your environment

Azure Landing Zones (https://learn.microsoft.com/en-us/azure/architecture/landing-zones/landing-zone-deploy). Note: EPAC contains great integration with Azure Landing Zones (https://azure.github.io/enterprise-azure-policy-as-code/integrating-with-alz/).

Combination of the above.

Create your CI/CD pipelines/workflows in your favorite CI/CD tool.

EPAC deployment scripts

EPAC contains three scripts to deploy Policy. They are individual scripts to enable approval gates and implement the least privilege principle for the service principals executing the job/stages in CI/CD.

EPAC environments

As with any other software development, Policy development requires a development and testing area for just Policy. This can be one or more EPAC environments.

Simple flow using GitHub Flow

In the simplest case you’ll deploy the developed Policy resources to your tenant root, or to a pseudo root beneath the tenant root, for each tenant. The downside of this approach is that any mistake in Policy development immediately impacts deployments to production, breaking your solutions’ CI/CD, and in rare cases it could even break running systems. The obvious advantage is its simplicity. You would name such an environment with the generic word tenant, prod, or something descriptive of the tenants. If you have multiple tenants, your CI/CD will run multiple deployments (one per tenant).

Release flow

If you have differentiated your Azure tenant or tenants into nonprod and prod environments, using Release flow (https://devblogs.microsoft.com/devops/release-flow-how-we-do-branching-on-the-vsts-team/) makes more sense. Steps:

Develop Policy in a feature branch.

Open a pull request into main; a successful PR merge deploys the Policy to nonprod.

Let it “soak” in for a few days and observe if it causes any issues for your solutions.

Create a releases branch, which deploys the changes to prod.

If you need to deploy prod Exemptions during the “soak” period, you need a way to fast-track those Exemptions without deploying the Policy changes being “soaked”. To do this, create a releases-prod-exemptions-fast-track branch whose pipeline plans the deployment with ‘Build-DeploymentPlans -BuildExemptionsOnly’ and deploys the resulting plan with Deploy-PolicyPlan. No role changes occur in this pipeline.
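The branch-to-environment mapping described above can be sketched as a small Python function. This is an illustration, not part of EPAC: the `Plan` type, the `plan_for_branch` function, the `releases-prod` branch prefix, and the choice to send feature branches to an `epac-dev` environment are all assumptions. Only `main`, the releases branch, and the `releases-prod-exemptions-fast-track` branch come from the steps above.

```python
from typing import NamedTuple


class Plan(NamedTuple):
    pac_selector: str      # EPAC environment the pipeline targets
    exemptions_only: bool  # plan only Exemptions (fast track)
    deploy_roles: bool     # whether the role assignment stage runs


def plan_for_branch(branch: str) -> Plan:
    """Map a git branch name to a deployment plan (hypothetical naming)."""
    # Check the fast-track prefix first: it also starts with "releases-prod".
    if branch.startswith("releases-prod-exemptions"):
        return Plan("prod", exemptions_only=True, deploy_roles=False)
    if branch.startswith("releases-prod"):
        return Plan("prod", exemptions_only=False, deploy_roles=True)
    if branch == "main":
        return Plan("nonprod", exemptions_only=False, deploy_roles=True)
    # Anything else is treated as a feature branch (assumption).
    return Plan("epac-dev", exemptions_only=False, deploy_roles=True)


if __name__ == "__main__":
    for name in ("feature/tags", "main", "releases-prod-exemptions-fast-track"):
        print(name, plan_for_branch(name))
```

In a real pipeline this decision usually lives in the CI/CD trigger configuration rather than in code; the function only makes the ordering of the rules explicit.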

Global settings file

The global-settings file ‘global-settings.jsonc’ in the ‘Definitions’ folder for release flow would look like this.

    {
        "$schema": "https://raw.githubusercontent.com/Azure/enterprise-azure-policy-as-code/main/Schemas/global-settings-schema.json",
        "pacOwnerId": "11111111-2222-3333-4444-555555555555",
        "pacEnvironments": [
            {
                "pacSelector": "epac-dev",
                "cloud": "AzureCloud",
                "tenantId": "77777777-8888-9999-1111-222222222222",
                "deploymentRootScope": "/providers/Microsoft.Management/managementGroups/mg-epac-dev",
                "desiredState": {
                    "strategy": "full",
                    "keepDfcSecurityAssignments": false
                }
            },
            {
                "pacSelector": "nonprod",
                "cloud": "AzureCloud",
                "tenantId": "77777777-8888-9999-1111-222222222222",
                "deploymentRootScope": "/providers/Microsoft.Management/managementGroups/mg-nonprod",
                "desiredState": {
                    "strategy": "full",
                    "keepDfcSecurityAssignments": false
                }
            },
            {
                "pacSelector": "prod",
                "cloud": "AzureCloud",
                "tenantId": "77777777-8888-9999-1111-222222222222",
                "deploymentRootScope": "/providers/Microsoft.Management/managementGroups/mg-enterprise",
                "managedIdentityLocation": "eastus2",
                "desiredState": {
                    "strategy": "full",
                    "keepDfcSecurityAssignments": false
                },
                "globalNotScopes": [
                    "/providers/Microsoft.Management/managementGroups/mg-nonprod",
                    "/providers/Microsoft.Management/managementGroups/mg-epac-dev"
                ]
            }
        ]
    }
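In the file above, the prod environment lists the nonprod and epac-dev management groups under globalNotScopes, so prod deployments leave those scopes alone. A minimal Python sketch of a consistency check for that pattern follows. It is not an EPAC feature; the function name `missing_not_scopes` and the trimmed `settings` dictionary are assumptions made for illustration, and it presumes the other environments sit beneath the prod root scope in the same tenant.

```python
def missing_not_scopes(settings: dict, prod_selector: str = "prod") -> list[str]:
    """Return root scopes of other environments that prod does not exclude."""
    environments = settings["pacEnvironments"]
    prod = next(e for e in environments if e["pacSelector"] == prod_selector)
    # Azure resource IDs are case-insensitive, so compare in lower case.
    excluded = {scope.lower() for scope in prod.get("globalNotScopes", [])}
    return [
        env["deploymentRootScope"]
        for env in environments
        if env["pacSelector"] != prod_selector
        and env["tenantId"] == prod["tenantId"]
        and env["deploymentRootScope"].lower() not in excluded
    ]


MG = "/providers/Microsoft.Management/managementGroups/"
TENANT = "77777777-8888-9999-1111-222222222222"

# Trimmed copy of the settings shown above (only the keys the check reads).
settings = {
    "pacEnvironments": [
        {"pacSelector": "epac-dev", "tenantId": TENANT,
         "deploymentRootScope": MG + "mg-epac-dev"},
        {"pacSelector": "nonprod", "tenantId": TENANT,
         "deploymentRootScope": MG + "mg-nonprod"},
        {"pacSelector": "prod", "tenantId": TENANT,
         "deploymentRootScope": MG + "mg-enterprise",
         "globalNotScopes": [MG + "mg-nonprod", MG + "mg-epac-dev"]},
    ]
}

if __name__ == "__main__":
    print(missing_not_scopes(settings))
```

An empty result means every non-prod root scope in the tenant is excluded from prod.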

Policy Assignment and effect parameters

Using JSON for parameters works great for smaller Initiatives and single Policy Assignments. However, when assigning the big security and compliance-oriented Initiatives, such as ‘Microsoft cloud security benchmark’, ‘NIST 800-53’, and ‘CIS’ (often several of them), defining effect parameters via JSON is cumbersome and time-consuming. You will need to define hundreds or even thousands of parameters. I had a customer who had ~5000 lines of JSON just for the effect parameters. This makes the JSON file hard to maintain and completely unreadable.

EPAC solves this problem by reading the effects from a spreadsheet (CSV file). The spreadsheet only defines the Policy name and effect; EPAC works out the parameter names and values for all the assignments driven by this spreadsheet. If the Initiative does not parameterize the effect, EPAC automatically generates ‘overrides’ to implement it. Lastly, if the effect is Deny, EPAC sets the Policy to Deny in only one of the Initiatives and sets the effect to Audit in the remaining Initiatives; this keeps the already hard-to-read error message for a deployment blocked by a Deny from becoming even more complex.
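The Deny handling described above can be illustrated with a short Python sketch. It does not use EPAC's real CSV layout or code: the column names `policyName`, `effect`, and `initiatives`, the semicolon separator, and the rule that the first listed Initiative keeps the Deny are all assumptions made for this example.

```python
import csv
import io


def effects_per_initiative(csv_text: str) -> dict[str, dict[str, str]]:
    """Map each Policy to the effect it gets in every Initiative containing it."""
    result = {}
    for row in csv.DictReader(io.StringIO(csv_text)):
        initiatives = [name for name in row["initiatives"].split(";") if name]
        effect = row["effect"]
        per_initiative = {}
        for index, initiative in enumerate(initiatives):
            # Only one Initiative keeps Deny; the rest fall back to Audit so a
            # blocked deployment reports a single denying assignment.
            if effect.lower() == "deny" and index > 0:
                per_initiative[initiative] = "Audit"
            else:
                per_initiative[initiative] = effect
        result[row["policyName"]] = per_initiative
    return result


SAMPLE = (
    "policyName,effect,initiatives\n"
    "storage-https-only,Deny,mcsb;nist-800-53;cis\n"
    "vm-backup-enabled,Audit,mcsb;nist-800-53\n"
)

if __name__ == "__main__":
    for policy, effects in effects_per_initiative(SAMPLE).items():
        print(policy, effects)
```

Each row stays one line in the spreadsheet regardless of how many Initiatives include the Policy, which is what keeps the effect definitions readable.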

Efficient Exemption definitions

Normally, when a Policy is included in multiple assigned Initiatives (a frequent occurrence with built-in security and regulatory compliance Initiatives), creating an Exemption for it means defining one exemption per Policy, per Assignment, and per Scope, and (tediously) looking up the policyDefinitionReferenceId in each Initiative definition. For an average exemption, this can be tens or even hundreds of entries in the definition files.

Starting with v10.0.0, this can be simplified to one entry that specifies the Policy definition Id or Name instead of a policyAssignmentId and policyDefinitionReferenceId. EPAC finds all the assignments that include that definition, whether it is directly assigned or included in an assigned Initiative, and creates one exemption per relevant Assignment. EPAC generates unique names and augments the displayName and description for the exemptions.

Starting in v10.1.0, instead of specifying one scope per entry, you can define a scopes array. EPAC generates a set of exemptions for each scope while augmenting the displayName and description with the last part of the scope (or a string override in the definition). For example, with five Assignments containing the Policy definition with the specified Id, the two scopes in the example below generate ten Exemptions (5 × 2). If you specified 16 scopes, that number would be an impressive 80 Exemptions (5 × 16).

    {
        "exemptions": [
            {
                "name": "short-name",
                "displayName": "Descriptive name displayed on portal",
                "description": "More details",
                "exemptionCategory": "Waiver",
                "scopes": [
                    "humanReadableName:/subscriptions/11111111-2222-3333-4444-555555555555",
                    "/subscriptions/11111111-2222-3333-4444-555555555556/resourceGroups/resourceGroupName1"
                ],
                "policyDefinitionId": "/providers/microsoft.authorization/policyDefinitions/00000000-0000-0000-0000-000000000000"
            }
        ]
    }
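The multiplication of Assignments by scopes can be sketched in Python as follows. This is not EPAC's implementation: the function names, the numeric suffix used for unique names, and the way the label is appended to displayName are assumptions. Only the scope syntax, an optional readable prefix before the path, is taken from the example above.

```python
from itertools import product


def split_scope(scope: str) -> tuple[str, str]:
    """Return (label, path); without a prefix the last path segment is the label."""
    if scope.startswith("/"):
        return scope.rstrip("/").rsplit("/", 1)[-1], scope
    label, path = scope.split(":", 1)
    return label, path


def expand_exemptions(entry: dict, assignment_ids: list[str]) -> list[dict]:
    """Generate one exemption per (Assignment, scope) pair."""
    expanded = []
    for assignment_id, scope in product(assignment_ids, entry["scopes"]):
        label, path = split_scope(scope)
        expanded.append({
            # Hypothetical naming scheme; EPAC generates its own unique names.
            "name": f"{entry['name']}-{len(expanded):03d}",
            "displayName": f"{entry['displayName']} - {label}",
            "scope": path,
            "policyAssignmentId": assignment_id,
        })
    return expanded


entry = {
    "name": "short-name",
    "displayName": "Descriptive name displayed on portal",
    "scopes": [
        "humanReadableName:/subscriptions/11111111-2222-3333-4444-555555555555",
        "/subscriptions/11111111-2222-3333-4444-555555555556/resourceGroups/resourceGroupName1",
    ],
}
assignments = [f"assignment-{n}" for n in range(5)]  # placeholder Assignment ids

if __name__ == "__main__":
    print(len(expand_exemptions(entry, assignments)))
```

With five Assignments, the two scopes yield ten generated exemptions, and 16 scopes would yield 80, matching the arithmetic in the text.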

What we learned

Security and regulatory compliance Initiatives

Limit the number of assigned Initiatives to a handful or less. Always assign ‘Microsoft cloud security benchmark’; Defender for Cloud relies on the input generated by the included Policies.

Management Groups and Policy Resources

Custom Policy/Initiative Definitions and Policy Assignments need to be deployed at a scope. They should always be deployed at the top Management Group (MG) in each tenant. That MG should be the single MG (no siblings) underneath the “Tenant root group” as recommended by Microsoft (see https://learn.microsoft.com/en-us/azure/cloud-adoption-framework/ready/landing-zone/design-areas) or at the actual “Tenant root group” if you are not following Microsoft’s recommendation verbatim. Keep the management group names and display names the same readable name to keep Policy and RBAC elements readable. Do not use GUIDs or other obfuscated names for management groups.

Policy Assignments

Policies are inert elements in Azure until you create a Policy Assignment at a scope. Each assignment should:

Define a semi-readable short name (limited to 24 characters by Azure).

Define a readable displayName (visible in Portal).

Optionally include metadata, such as a work item id.
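These naming rules are easy to check before deployment. The following Python sketch is an assumption-laden illustration, not an EPAC feature: the function name `check_assignment` and the flat dictionary shape are invented for the example, and the 24-character limit is the one stated above.

```python
MAX_NAME_LENGTH = 24  # limit quoted in the list above


def check_assignment(assignment: dict) -> list[str]:
    """Return a list of problems found in a simplified assignment definition."""
    problems = []
    name = assignment.get("name", "")
    if not name:
        problems.append("missing name")
    elif len(name) > MAX_NAME_LENGTH:
        problems.append(f"name '{name}' exceeds {MAX_NAME_LENGTH} characters")
    if not assignment.get("displayName"):
        problems.append("missing displayName")
    # Metadata is optional, so its absence is not reported.
    return problems


if __name__ == "__main__":
    print(check_assignment({"name": "pr-mcsb", "displayName": "Prod MCSB"}))
    print(check_assignment({"name": "production-microsoft-cloud-security-benchmark"}))
```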

Assignments containing Policies with Modify or DeployIfNotExists effects require a Managed Identity (MI). The MI must be granted the Azure roles specified in the details section of the Policy rule; EPAC calculates these. I prefer a system-assigned Managed Identity, since its SPN (service principal name) cannot be used outside a single assignment, which eliminates the minimal threat of malicious usage (Azure provides controls for the usage). However, to reduce the number of role assignments, a user-assigned MI can be used.

Custom Definitions

First question the need for any custom Policy/Initiative definition requested. While the built-in Policies are not perfect, the choices made are often made due to constraints and conflicts between settings and include tradeoffs in risk versus usability. If you still think you need custom definitions, sleep on it, and revisit the topic one more time.

If you have multiple tenants, the same definition should be propagated to every tenant (DRY principle) by EPAC. Do not use a separate repo, which would cause copy/paste issues (WET anti-pattern).

Policy Exemptions

Even with the best intentions some Policies may get in the way. If there is a business reason within acceptable risk parameters, you can grant an Exemption.

Exemptions come in two flavors (without any technical meaning):

Mitigated – Most often used for permanent exemptions. An example is allowing public IP addresses for a storage account that is used as an upload folder, combined with mitigations such as virus scans and deleting processed data.

Waiver – Most often used for temporary exemptions to allow a solution team to fix their non-compliant deployment. Generally granted until the Monday after the ETA (estimated time of arrival) for the fix.
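The “Monday after the ETA” rule for Waivers is simple date arithmetic. A minimal Python sketch follows; the function name `waiver_expiry` is hypothetical, and reading the rule as “the first Monday strictly after the ETA” (so an ETA that falls on a Monday expires a week later) is an assumption.

```python
from datetime import date, timedelta


def waiver_expiry(eta: date) -> date:
    """Return the first Monday strictly after the given ETA."""
    # date.weekday() returns 0 for Monday through 6 for Sunday.
    days_ahead = (7 - eta.weekday()) % 7 or 7
    return eta + timedelta(days=days_ahead)


if __name__ == "__main__":
    print(waiver_expiry(date(2024, 5, 8)))
```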

Exemptions allow metadata. Add a link in metadata to the work item (e.g., Azure DevOps work item, GitHub issue, Jira ticket, etc.) to keep a record of why the exemption was granted and who granted it.

If you exempt an entire subscription with a Mitigated exemption, it is likely that you should have used notScope (called Excluded Scope in Azure Portal) in the Assignment instead.

Warning: When you delete a Policy Assignment with Exemptions, then the Exemptions are not deleted and become orphaned.
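Orphaned Exemptions can be found by comparing each Exemption's assignment reference against the Assignments that still exist. The Python sketch below works on simplified in-memory dictionaries and makes no Azure calls; the function name `orphaned_exemptions` and the flat record shape are assumptions made for illustration.

```python
def orphaned_exemptions(exemptions: list[dict], assignment_ids: list[str]) -> list[str]:
    """Return names of exemptions whose Policy Assignment no longer exists."""
    # Azure resource IDs are case-insensitive, so compare in lower case.
    existing = {assignment_id.lower() for assignment_id in assignment_ids}
    return [
        exemption["name"]
        for exemption in exemptions
        if exemption["policyAssignmentId"].lower() not in existing
    ]


if __name__ == "__main__":
    exemptions = [
        {"name": "keep-me", "policyAssignmentId": "/assignments/PR-MCSB"},
        {"name": "orphan", "policyAssignmentId": "/assignments/deleted"},
    ]
    print(orphaned_exemptions(exemptions, ["/assignments/pr-mcsb"]))
```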

Operating Azure Policy

Operational tasks (e.g., Remediation tasks, generating documentation) must be scripted. Do not use CI/CD tools to execute operational tasks since CI/CD is intended to deploy resources, not to operate those resources.

Keeping track of built-in Policy changes

I frequently consult AzAdvertizer (https://www.azadvertizer.net/). In addition, I keep track of changes by cloning and following Microsoft’s official Azure Policy repo on GitHub (https://github.com/Azure/azure-policy/tree/master/built-in-policies). When I receive an email about a merged PR (pull request), I’ll fetch the latest version from GitHub into my clone. This allows me to use Visual Studio Code on my local clone instead of using Azure Portal or GitHub web interface.

That’s it for this round

Remember to thoroughly test the code and policies in a safe environment before deploying to production. If there are any issues with the code, please raise a GitHub Issue.

Until next time.

Disclaimer

The sample scripts are not supported under any Microsoft standard support program or service. The sample scripts are provided AS IS without warranty of any kind. Microsoft further disclaims all implied warranties including, without limitation, any implied warranties of merchantability or of fitness for a particular purpose. The entire risk arising out of the use or performance of the sample scripts and documentation remains with you. In no event shall Microsoft, its authors, or anyone else involved in the creation, production, or delivery of the scripts be liable for any damages whatsoever (including, without limitation, damages for loss of business profits, business interruption, loss of business information, or other pecuniary loss) arising out of the use of or inability to use the sample scripts or documentation, even if Microsoft has been advised of the possibility of such damages.
