Tag Archives: microsoft

Respond to trending threats and adopt zero-trust with Exposure Management

In today’s rapidly evolving threat landscape, organizations face a daunting challenge: managing their security posture effectively. With an ever-expanding attack surface, including cloud services, endpoints, apps, increasing use of SaaS applications and different types of accounts and identities, it has become more important than ever to implement proactive processes to prevent threats. As cyber threats become more sophisticated, organizations must stay ahead of the curve. The ability to implement processes to identify, assess, and remediate exposures is essential for maintaining a robust security posture.

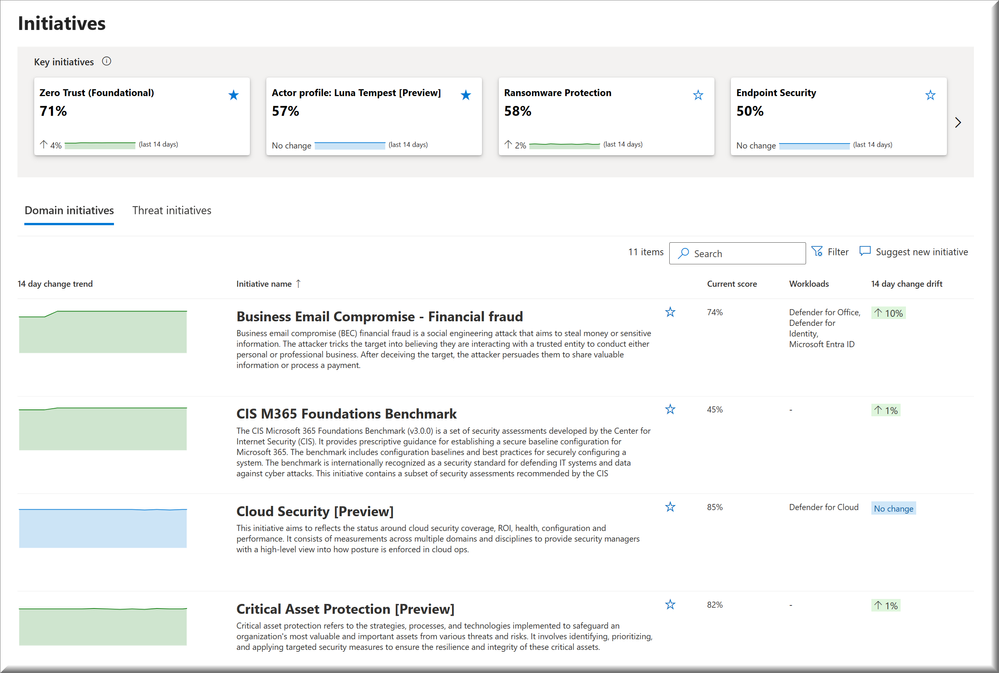

In March, we announced the public preview of Microsoft Security Exposure Management that addresses the need for a unified solution that brings together disparate data sources, enabling security teams to make informed decisions. We introduced the concept of Security Initiatives that simplifies security posture management and helps you to assess readiness and maturity in specific security domains.

Security Initiatives take a proactive approach to managing security programs towards specific risk or domain-related objectives. Domain initiatives can relate to workloads such as endpoint, cloud and identity, enabling security to work closely with IT operations teams responsible for each workload to prioritize the proper implementation of key security controls.

Once you determine the objectives of your security organization, Security Initiatives help you identify areas for improvement, real exposure, or essential security controls that have not yet been implemented. Each initiative has a score that represents the organization’s progress in implementing the recommendations and can be used to track and report on the work of managing exposure and minimizing risk.

Announcements

We have two key updates to Security Initiatives to share with you. First, we will look at an exciting new set of initiatives focused on threat actors and techniques. Second, we want to highlight how the Zero Trust initiative can help organizations track and report on progress in adopting a Zero Trust architecture.

Threat initiatives

Today, we are announcing a preview integration with Threat Analytics to enhance the set of domain security initiatives with threat-based security initiatives. These initiatives focus on specific attack techniques and active threat actors, as seen and analyzed by expert Microsoft security researchers.

Consider this scenario:

You’re part of a financial institution that has received threat intelligence about possible attacks targeting institutions like yours. The burning questions are, “How well-protected are we against this specific threat?” and “What steps can we take to mitigate this threat?”

With the threat-based security initiatives, you can follow a curated list of security recommendations essential for safeguarding against the identified threat.

Unlike existing domain initiatives that use security metrics to calculate scores, the new threat-based initiatives calculate their score based on the implementation of associated recommendations, similar to the calculation used in Microsoft Secure Score. By diligently following these recommendations, organizations can enhance their initiative scores and overall posture against specific threats and threat actors. This proactive approach empowers security teams to stay ahead of evolving cyber risks and safeguard critical assets.
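To make the scoring idea concrete, here is a minimal sketch of a recommendation-based score: the share of available points earned by implemented recommendations. The exact formula the product uses is not given in this post, so the weights, the recommendation names, and the `initiative_score` function are all illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Recommendation:
    name: str
    weight: float      # hypothetical points this recommendation is worth
    implemented: bool  # True once the control is fully in place


def initiative_score(recommendations: list[Recommendation]) -> float:
    """Return the percentage of available points that have been earned."""
    total = sum(r.weight for r in recommendations)
    if total == 0:
        return 0.0
    earned = sum(r.weight for r in recommendations if r.implemented)
    return round(100 * earned / total, 1)


# Made-up recommendations for a made-up threat initiative.
recs = [
    Recommendation("Enable MFA for admins", 10, True),
    Recommendation("Block legacy authentication", 5, False),
    Recommendation("Turn on tamper protection", 5, True),
]
print(initiative_score(recs))  # 15 of 20 points earned -> 75.0
```

Implementing the one outstanding recommendation in this example would raise the score to 100, which matches how the post describes progress: following the recommendations raises the initiative score.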

Follow these steps to try the new initiatives:

In Defender portal, navigate to Exposure Management -> Exposure Insights -> Initiatives.

In the Initiative catalog, select the Threat Initiative tab. If you don’t see this in your environment, please try again in a day or two as this update is gradually rolling out.

Explore the list of supported initiatives related to threat actors and techniques, along with their associated scores.

Click on specific initiatives to access detailed recommended actions.

For deeper insights into the specific threat, each initiative provides a link to the associated threat analytics article.

The second update we want to highlight relates to one of our key domain initiatives. Zero Trust is one of the more fundamental approaches organizations can take to improve their security posture and ability to respond to threats. The Zero Trust guidance center explains how to adopt Zero Trust and also offers specific guidance for Microsoft 365, Azure, and a newly updated section on Microsoft Copilots.

Zero Trust initiative

The Zero Trust initiative is aligned with the Microsoft Zero Trust adoption framework, allowing you to track your progress with metrics aligned with business scenarios. These metrics capture your resource coverage across prioritized actionable recommendations to help security teams protect their organization. The initiative also provides real-time data on your Zero Trust progress that can be shared with stakeholders.

Each metric includes insights that help teams understand the current state — providing teams with recommendation details, identifying which assets are affected, and measuring the impact on the overall Zero Trust maturity.

Zero Trust adoption is a team game that involves both security and IT operations teams to be aligned and work together to prioritize changes that improve overall Zero Trust maturity. At the metric and task level, you can share the recommendation with the appropriate team and owner. The owner can then link directly to the admin experience of the respective security control to configure and deploy the recommendation.

The Microsoft Zero Trust adoption framework encourages you to take a risk-based approach and/or a defensive strategy. With either of these approaches, you can target other Security Initiatives within the exposure management tool, such as Ransomware Protection or a specific threat initiative, and see your work accrue to Zero Trust maturity in the Zero Trust initiative.

Finally, we recommend that you use the Zero Trust initiative together with the Zero Trust adoption framework. The metrics and tasks within the initiative are organized by Zero Trust business scenario. The adoption framework provides rich guidance on how to plan and deploy the recommendations, including links to the best resources for each metric task.

Follow these steps to try the Zero Trust initiatives:

In Defender portal, navigate to Exposure Management -> Exposure Insights -> Initiatives.

In the Initiative catalog, select the Domain Initiative tab.

Open the Zero Trust initiative.

If your organization is focused on implementing a Zero Trust architecture, click the Favorite star so that this initiative shows up as one of your prioritized Key initiatives on the Exposure Management Overview page.

Thank you for reading this far. As a bonus, we want to share upcoming updates to Attack Path management in Microsoft Security Exposure Management. Security Initiatives are an effective way for organizations to track and report on progress within a prioritized area, but Attack Paths provide a direct view into how adversaries could exploit weaknesses or vulnerabilities.

Exposure Management offers organizations insight into potential attack paths identified within their environments. This enables a more validated approach to prioritizing vulnerabilities, misconfigurations, and other exposure-related findings. With the Exposure Management attack path management module, customers can concentrate on the security issues attackers are most likely to exploit to advance their malicious operations and target critical assets.

Upcoming Attack Path Management improvements

We are thrilled to announce the upcoming release of several new features for Attack Path Management! With these new additions, customers will gain a deeper understanding of how security issues and gaps are correlated to attack paths, along with crucial context regarding which assets are predominantly involved or responsible for creating potential routes attackers could exploit.

Attack Path Overview

Gain a comprehensive understanding of the assets and issues involved in potential attack paths. We’re introducing a new screen for attack path management designed to provide users with a high-level overview of the potential impact of attack paths on their organization. This includes a timeline of discovered attack paths, top-risk paths identified, primary entry points and targets, and more. This overview screen complements the existing attack path list experience, which offers visibility into all attack paths discovered by Microsoft Security Exposure Management.

Chokepoints

Identify which assets in your environments require focused attention. Chokepoints are assets that play a significant “role” in many attack paths leading to critical assets. With numerous security issues and findings, gaining visibility into such assets and understanding their role in multiple potential attack paths is crucial for focusing team efforts on tasks with the most impact on the organization’s exposure.
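As an illustration of the chokepoint idea, the sketch below counts how many attack paths each intermediate asset sits on and reports those shared by several paths. This is not how Exposure Management computes chokepoints; the path data, the asset names, and the `find_chokepoints` helper are hypothetical, and excluding entry points and targets is a simplifying assumption.

```python
from collections import Counter


def find_chokepoints(attack_paths, min_paths=2):
    """Return (asset, path_count) pairs for assets on at least min_paths paths.

    Each path is a list of asset names from entry point to critical target.
    Entry points and targets are excluded (an assumption of this sketch).
    """
    counts = Counter()
    for path in attack_paths:
        # Count each intermediate asset once per path.
        counts.update(set(path[1:-1]))
    hits = [(asset, n) for asset, n in counts.items() if n >= min_paths]
    # Most shared assets first; ties are broken alphabetically.
    return sorted(hits, key=lambda item: (-item[1], item[0]))


paths = [
    ["vm-web", "sp-deploy", "kv-prod", "sql-finance"],
    ["laptop-42", "sp-deploy", "sql-finance"],
    ["vm-web", "vm-jump", "kv-prod", "sql-finance"],
]
print(find_chokepoints(paths))  # [('kv-prod', 2), ('sp-deploy', 2)]
```

Fixing an issue on one of the reported assets would break two of the three example paths at once, which is the prioritization benefit the feature is aiming for.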

Asset Blast Radius

View the potential blast radius of your assets with a single click. One of the core principles of Microsoft Security Exposure Management is that “context is king.” Addressing the well-known saying “Defenders Think in Lists. Attackers Think in Graphs,” Exposure Management provides organizations with the tools to change this paradigm. With this new addition, customers can gain instant visibility into the potential blast radius of their assets, allowing them to scope the potential impact of an attack and prioritize mitigation actions accordingly. This new action is available both in the Attack Surface Map and Attack Path Management areas.
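Thinking in graphs can be sketched in a few lines: if assets are nodes and "can reach or compromise" relationships are directed edges, the blast radius of an asset is everything reachable from it. The graph below and the `blast_radius` function are illustrative assumptions, not the product's data model.

```python
from collections import deque


def blast_radius(graph, start):
    """Return the set of assets reachable from start via directed edges."""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for neighbor in graph.get(node, ()):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append(neighbor)
    seen.discard(start)  # the asset itself is not part of its own radius
    return seen


# Hypothetical environment: each key can reach the assets in its list.
graph = {
    "vm-web": ["sp-deploy", "vm-jump"],
    "sp-deploy": ["kv-prod"],
    "vm-jump": ["kv-prod"],
    "kv-prod": ["sql-finance"],
}
print(sorted(blast_radius(graph, "sp-deploy")))  # ['kv-prod', 'sql-finance']
```

A list view would show `sp-deploy` as one finding among many; the graph view shows that compromising it also exposes the key vault and the database behind it.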

For those looking to learn more about security initiatives, attack paths and exposure management in general, here are some additional resources you can explore.

Exposure Management documentation: Microsoft Security Exposure Management documentation

Exposure Management product website: Microsoft Security Exposure Management | Microsoft Security

Exposure Management public preview blog post: Introducing Microsoft Security Exposure Management

Related blog posts

Critical Asset Protection with Microsoft Security Exposure Management – Microsoft Community Hub

Microsoft Tech Community – Latest Blogs –Read More

Logic Apps Aviators Newsletter – May 2024

In this issue:

Ace Aviator of the Month

Customer Corner

News from our product group

News from our community

Ace Aviator of the Month

May’s Ace Aviator: Derek Marley

What is your role and title? What are your responsibilities associated with your position?

Integration Principal Strategist – Azure Integration Services

As an Integration Principal Strategist, I lead the charge in crafting comprehensive integration strategies tailored to meet the unique needs of businesses. With over 25 years of experience in the integration space, I bring a depth of knowledge and expertise to the table. My primary focus is on Azure Integration Services, where I excel in designing, implementing, and optimizing integration solutions.

At the heart of my role is the ability to architect robust integration frameworks that seamlessly connect disparate systems, applications, and data sources. I work closely with stakeholders to understand their objectives and translate them into actionable integration plans. From establishing integration patterns to defining API frameworks, I ensure that every aspect of the integration lifecycle is meticulously addressed.

In my capacity as a strategist, I not only devise integration roadmaps but also stay abreast of emerging technologies and industry trends to continuously refine and enhance our approach. I believe collaboration is key, and I thrive in cross-functional environments where I can work closely with development teams, architects, and business leaders to drive alignment and foster innovation.

Can you provide some insights into your day-to-day activities and what a typical day in your role looks like?

In my role as an Integration Principal Strategist, each day is diverse and packed with tasks aimed at optimizing integration and supporting our clients’ objectives. A typical day begins with connecting with my team: reviewing goals, projects, and deadlines. When it comes to project work, I strategize with clients to grasp their integration needs, communicate these back to my team, collaborate on the design, and oversee tailored solutions. Additionally, I stay updated on industry trends, foster client relationships, and promote effective teamwork to ensure successful integration outcomes and drive business growth.

What motivates and inspires you to be an active member of the Aviators/Microsoft community?

Being an active member of the Aviators/Microsoft community is motivating and important for me. The sense of a shared purpose within the community fuels my passion for collaboration and continuous learning. The opportunity to exchange ideas, insights, and best practices with fellow professionals allows me to stay at the forefront of industry trends and enhances my skills as an Integration Strategist. Doing so makes my discussions with customers way more pragmatic and, overall, more enjoyable.

Looking back, what advice do you wish you would have been told earlier on that you would give to individuals looking to become involved in STEM/technology?

The advice I would give is this: Technology is constantly evolving, and things are always shifting, so it’s key to stay flexible and willing to embrace new ideas. Connecting with mentors and communities is super valuable for learning and collaborating. And always remember, soft skills like communication and teamwork are just as vital as technology.

Imagine you had a magic wand that could create a feature in Logic Apps. What would this feature be and why?

If I had a magic wand to create a feature in Logic Apps, I’d build on the incredible potential of the Workflow Assistant/Copilot by adding organizational profiles. Imagine this: being able to input a company’s unique integration standards, preferred patterns, and specific requirements into the system. Then, when the Assistant/Copilot generates Logic Apps, it would incorporate not only the Logic App LLM Responses but also be influenced by the company-specific profile details. This feature would streamline development, ensure consistency across projects, and empower organizations to leverage their integration expertise seamlessly within the Logic Apps environment.

What are some of the most important lessons you’ve learned throughout your career that surprised you?

One of the things that really caught me off guard in my career and that became an important learning lesson occurred recently: how quickly AI and copilots have become super relevant and incredibly important to all companies. It is hard to believe that just one year ago, we knew what was unfolding, but the speed at which these technologies evolved is surprising! It’s a real eye-opener about how fast technology can change things. It reminds me how important it is to stay flexible, open-minded, and continually engrossed in learning, because one just never knows what’s coming next! For me, this learning has driven home awareness of that adage: we live in an age where big organizations no longer absorb the small; rather, the fast organizations absorb the slow. Incorporate AI and Copilots early, but please ensure you do so in a responsible manner!

Customer Corner:

Empowering prosthetic innovation: Össur’s Azure Integration Services journey

Check out this customer success story about Össur, a leading prosthetics and orthopedics manufacturer based in Iceland, who utilized Microsoft Azure and Logic Apps to enhance their manufacturing processes. By implementing Azure services, Össur improved efficiency, agility, and scalability in their production operations. Logic Apps enabled seamless integration between Össur’s systems, facilitating real-time data exchange and workflow automation. This integration streamlines order processing, inventory management, and production scheduling, resulting in faster response times and reduced costs. Read more on how Azure and Logic Apps empower Össur to optimize their manufacturing operations, ultimately enhancing customer satisfaction and competitiveness.

News from our product group:

!! Announcement !! Logic Apps In-app connectors for IBM Mainframe and Midranges Generally Available

We are pleased to announce the General Availability of the Azure Logic Apps Built-in connectors for IBM Mainframe and Midranges. Read more about these connectors and how to get started in this article.

Announcing General Availability of Azure API Management Basic v2 and Standard v2 Tiers

We’re thrilled to announce the General Availability of Azure API Management’s newest pricing tiers – Basic v2 and Standard v2. The introduction of these new tiers directly addresses common customer requests, enhancing quality-of-service and enabling businesses of all sizes to leverage API Management effectively.

A workbook for Logic App (standard) run history and resubmitting multiple runs.

Enjoyed the sample workbook for organizing Logic Apps events emitted to Application Insights but wanted to select and resubmit multiple runs from the workbook? Check out this article on the newly released workbook with multiple runs.

File Systems Connector Support on Workflow Standard Plans

We recently implemented updates that allow Workflow Standard plans to host Logic Apps Standard workflows that contain the File System connector. Check out more in this post.

TimeZone changes in Kazakhstan

In Kazakhstan the local authorities have operationalized a Time Zone Change for the country, where all cities will converge to UTC+5 (and UTC+6 is getting deprecated and converging into UTC+5). See how that affects your Logic App in this post.

Improving the DevOps Experience for Azure Logic Apps Standard

Azure Logic Apps Standard just launched a set of preview features that help you automate the steps in setting up DevOps processes for your applications. In this blog post, you will find more about these new features.

Secure GraphQL APIs with JSON Web Token validation and Authorization rules in Azure API Management

In this blog post, we walk you through how Azure API Management can effectively solve these security concerns by utilizing JWT validation, enforcing authorization rules based on the token’s claims, and deactivating introspection through the GraphQL validation policy.

Choosing the right Azure API Management tier for your networking scenarios

This blog post aims to guide you through the different options available on both the classic tiers and v2 tiers of Azure API Management, to help you decide which choice works best for your requirements.

Using logic app to Revoke Sign in session via REST API

In this article, we will share the new way to use logic app to Revoke Sign in Session via REST API.

Using File Connector – Trigger Properties to filter file types in Azure Logic Apps

Learn about the file connector and how you can filter out file extensions using trigger conditions in this video.

News from our community:

Getting Started with Azure Integration Services

Post by Stephen W. Thomas

Catch up on Stephen’s live session here as he walks us through the history of Microsoft Integration, what you can do if you still have BizTalk Server running, review the core services of AIS, and dive into a quick demo.

Friday Fact: Workflow and Trigger expressions can help monitor your Logic Apps.

Post by Luís Rigueira

In this blog/video by Luis, we learn about the workflow() and trigger() expressions and how to use them to help your Logic App as they’re great in error handling situations.

Microsoft Azure Integration Services

Post by Sebastian Meyer

Check out this podcast featuring German Aviator Sebastian Meyer as he chats about Azure Integration Services and compares it to SAP Integration Suite with the podcast host, Adam Kiwon. Don’t speak German? English translated captions work great!

Harnessing the Power of Microsoft Copilots: A Guide to Copilot Extensibility Within Your Enterprise

Post by Derek Marley

Read this post by this month’s Ace Aviator Derek as he dives into the three primary ways Microsoft Copilot can access data from within and outside the traditional Microsoft data sources.

My Azure Front Door experience

Post by Mark Brimble

New to Azure Front Door? Mark was too until he followed the Quickstart guide. Read about his impressions on getting started and any additional comments from a personal experience.

Friday Fact: It is possible to create an XML first-class experience inside Logic Apps.

Post by Sandro Pereira

By default, XML messages don’t have a first-class experience like JSON, but that doesn’t mean that you can’t create that experience. Follow along with Sandro as he shows you how.

Logic App Inline Function Troubleshooting Challenge

Post by Mike Stephenson

Watch this video from Mike as he troubleshoots a problem with Logic App Standard and the Inline Function feature and gives some good tips.

Upgrading Logic App Consumption to Logic App Standard

Post by Poojith Jain

In this post, Poojith talks about why you should consider transitioning from Logic App Consumption to Logic App Standard and the key differences and any limitations when adopting the Logic App Standard approach.

Logic App Resubmit from Action

Post by Mike Stephenson

Watch this video from Mike as he talks about the Resubmit from Action feature in both Logic Apps Standard and Consumption.

Post by Luís Rigueira

Looking for a time-saving PowerShell script to preserve Logic App details before deletion? Then read Luis’ post, which covers scenarios where you need to delete important Logic Apps but still want insights into past executions.

Logic App Best Practices, Tips, and Tricks: #42 How to convert JSON into XML inside Logic Apps

Post by Sandro Pereira

While XML to JSON is a straightforward operation, JSON to XML is not, but Sandro makes it simple in this post. Learn how to convert JSON messages to XML inside your workflows using out-of-the-box capabilities.


Leverage Service Connector to streamline connectivity to backend services on Azure

Creating connections between different compute and backend services on Azure is a common task that all developers have to deal with while implementing a piece of code. There are many considerations while planning, deploying, and using these connections: which identity to use, which network configuration to implement, and where to store the connection configuration (in Key Vault or in the App Configuration service), to name a few.

Following these practices is important so that, as a developer, you not only implement best practices but also align with the Azure security baseline and the concept of Zero Trust.

This is where a service connection can help simplify some of these design and implementation choices for you.

A service connection represents an abstraction of the link between two services and the Service Connector is an Azure extension resource provider designed to provide a simple way to create and manage connections between Azure services.

Fundamentally, a service connection:

Configures network settings, authentication, and manages connection environment variables or properties for you.

Validates connections and provides suggestions to fix faulty connections.

Source services and target services support multiple simultaneous service connections, which means that you can connect each resource to multiple resources. Service Connector manages connections in the properties of the source instance. Creating, getting, updating and deleting connections is done directly by opening the source service instance in the Azure portal, or by using the CLI commands of the source service. Connections can be made across subscriptions or tenants, meaning that source and target services can belong to different subscriptions or tenants.

Note: The identity best practice is to use a managed identity to connect two Azure services; however, a managed identity cannot span multiple Entra tenants. Therefore, when you create a service connection between resources in two separate tenants, prefer a service principal or a connection string fetched from a key vault.

Let’s try to create a new service connection. We will use the portal experience to look at all the options. We will use an azure web app to explore.

Under “Settings” for an azure web app you will find an option called “Service Connector” as shown below.

First, we will create a connection for a storage account. When you click on “Create” you will see a dialogue box open.

We see a list of services and we will select “Storage – Blob” as the choice.

When you have selected the service, a name is auto populated using a convention. You can select one of the subscriptions you have access to. You can then select a storage account from that subscription. The available client type was only “.NET” for me as my azure app service is .NET 6 based and the portal was nice enough to pick that up.

Next screen is where we setup “Authentication”.

As you can see, it gives you four options. Prefer a “System assigned managed identity”: the service connection is then tied to the identity of the Azure web app, so when you delete the web app, the service connection is deleted with it and the RBAC assignment on the target service is removed as well, which makes authentication cleanup easier to manage.

You can select which RBAC role to provide. The drop down will list roles based on the target service. Follow the principle of least privilege.

An important point to note is the two environment variables that get created as part of the flow. You will need to use them in your code when you want to create a connection to the target storage account. You can edit them if you want to follow your own naming convention.
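As a rough sketch of how application code might consume such a setting, the snippet below reads a blob endpoint from the environment and validates it before building a client. The variable name `AZURE_STORAGEBLOB_RESOURCEENDPOINT` is an assumption here; the actual names depend on the client type and authentication option you pick, so check the values shown in the portal. The helper names `blob_endpoint` and `make_blob_client` are hypothetical, and `make_blob_client` needs the `azure-identity` and `azure-storage-blob` packages installed.

```python
import os
from urllib.parse import urlparse

# Assumed variable name; confirm it against the app settings Service Connector created.
ENDPOINT_VAR = "AZURE_STORAGEBLOB_RESOURCEENDPOINT"


def blob_endpoint(env=os.environ, var=ENDPOINT_VAR) -> str:
    """Read and sanity-check the blob endpoint injected as an app setting."""
    value = env.get(var, "").strip()
    if not value:
        raise RuntimeError(f"{var} is not set; does a service connection exist for this slot?")
    parsed = urlparse(value)
    if parsed.scheme != "https" or not parsed.netloc:
        raise RuntimeError(f"{var} does not look like an https endpoint: {value!r}")
    return value.rstrip("/")


def make_blob_client(endpoint: str):
    """Build a client that authenticates with the app's managed identity."""
    # Imported lazily so the helper above works without the Azure SDK installed.
    from azure.identity import DefaultAzureCredential
    from azure.storage.blob import BlobServiceClient

    return BlobServiceClient(account_url=endpoint, credential=DefaultAzureCredential())


sample_env = {ENDPOINT_VAR: "https://demo.blob.core.windows.net/"}
print(blob_endpoint(sample_env))  # https://demo.blob.core.windows.net
```

Failing fast on a missing variable is deliberate: because the setting is created per slot, a slot without its own service connection would otherwise only fail at the first storage call.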

You can also choose to store the configuration inside of the App Configuration service. If you select the check box, then it will show you a dropdown with an option to choose from the list of available instances.

Next will be “Networking”.

As you can see, the only option available is to configure the firewall rules in the target storage account, because I have not configured my web app with VNet integration. If you want to use a private endpoint in the target service (recommended for production setups) or a service endpoint, you must configure VNet integration for the app first. I am glad the portal experience makes this intuitive, but when you do it from the CLI, the commands will fail if VNet integration is not set up.
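If you script connection creation, a small pre-flight check can surface this constraint before any CLI command runs. The sketch below only encodes the rule described above; the function names and option labels are hypothetical and do not correspond to CLI flags.

```python
def available_network_options(vnet_integrated: bool) -> list[str]:
    """List the networking choices described above for a given app state."""
    options = ["firewall rules"]
    if vnet_integrated:
        # Both of these need VNet integration on the app first.
        options += ["service endpoint", "private endpoint"]
    return options


def require_network_option(choice: str, vnet_integrated: bool) -> str:
    """Return choice if it is usable now, otherwise raise before calling the CLI."""
    allowed = available_network_options(vnet_integrated)
    if choice not in allowed:
        raise ValueError(f"'{choice}' is not available for this app; options: {allowed}")
    return choice


print(available_network_options(vnet_integrated=False))  # ['firewall rules']
```

Calling `require_network_option("private endpoint", vnet_integrated=False)` raises a `ValueError`, mirroring the failure the CLI would otherwise produce later in the deployment.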

When you hit “Review + Create”, here is what happens in the backend:

If the web app does not have a system assigned managed identity, then it gets created and is assigned the required RBAC role to the storage account.

Network configurations are updated in the firewall settings of the storage account.

An environment variable is created. Both this variable and the service connection are slot-level settings for the web app, so if you have multiple slots in your web app, you will need to create the service connection per slot. In that case, however, the steps above are not repeated; only the required environment variable is created in the slot.

Service Connector creates connections between Azure services using an on-behalf-of token. The on-behalf-of (OBO) flow describes the scenario of a web API using an identity other than its own to call another web API. Referred to as delegation in OAuth, the intent is to pass a user’s identity and permissions through the request chain. You can validate this by looking at the activity logs, where you will see all operations being executed under the identity of the person or Entra application (in the case of IaC). As a result, whoever creates the service connection needs to have the correct permissions. Refer to the linked documentation for more details.

Next, we will look at another example where we will try to connect to an SQL database. I will not repeat all the steps but try to highlight the differences and an issue which exists at the time of writing this blog post on May 7, 2024.

When creating the service connection for a SQL database with any identity option other than a connection string (and, as you will see, for many other target services), the portal asks you to run two commands in Cloud Shell, as shown in the image below.

When you click on “Create on Cloud Shell”, the portal launches the shell and tries to execute the commands. However, the second command fails with an error as discussed in linked github issue.

The workaround mentioned there, running the same set of commands in a local CLI environment, works wonderfully well. So, if you are stuck or get an error with the CLI commands, try them in a local CLI shell instead of Cloud Shell.

The sequence of operations in this case is:

I selected a system assigned managed identity and hence it checked for the presence of one.

The operations are performed using an on-behalf-of token and hence the user I had logged in with is added as an Entra administrator on the SQL server.

Post this the managed identity is added as a user in the database.

The firewall settings on the database server are updated.

The environment variable is created on the web app which can then be used in the code.

An interesting point I noted is that the operation using my identity caused an alert on the defender to fire for suspected login from an unusual location. This was because I work from India and the resources I have deployed are in East US, so when the resource provider used my identity to make the changes, the location detected was unusual.

Another positive about service connector is the built-in support for Availability Zones for HA and DR to a paired region irrespective of whether you are following a cross-region DR for your choice of compute or not.

Service Connector handles business continuity and disaster recovery (BCDR) for storage and compute. The platform strives to have as little impact as possible in case of issues in storage or compute in any region. The data layer design prioritizes availability over latency in the event of a disaster, meaning that if a region goes down, Service Connector will attempt to serve the end-user request from its paired region.

During the failover action, Service Connector handles the DNS remapping to the available regions. All data and action from customer view serves as usual after failover. Service Connector will change its DNS in about one hour. Performing a manual failover would take more time. As Service Connector is a resource provider built on top of other Azure services, the actual time depends on the failover time of the underlying services.

Refer to the linked official documentation for details. As with other services, it is highly recommended that you use availability zone support in regions where the capability is available.

The portal also has a nice “Network view” where you can see a diagram of how the service connector is connecting your compute to the backend service.

The portal also has a nice option to “Validate” the connection. As a best practice, always validate the service connection before using it. If a service connection suddenly stops working, validate it again: the validation checks for the prerequisites on the compute and the target service, so any breaking changes can be caught and fixed.

Service Connections work with other compute options as well like Function Apps, AKS, Azure Container Apps and Azure Spring Apps.

I experimented with function apps as well and the experience is the same. If you are a big fan of event-driven programming, note that service connections and triggers are two very different concepts with no overlap. One catch, however: be careful when creating a trigger and a service connection on the same instance of the target service, especially if you are working with “create” operations.

I unfortunately created a function app trigger on a storage account blob-added event and, as part of reading the file and writing it back to another storage account container using the service connection, I picked the same storage account and container. This created a cycle of events where the same file kept invoking my function app in an infinite loop.
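One way to avoid this kind of loop is to check, before writing, whether the destination is the same location the trigger watches. The following is a minimal sketch under stated assumptions: `BlobLocation`, `would_retrigger` and `copy_blob_safely` are hypothetical names made up for illustration and are not part of Service Connector or the Azure SDK, and `copy_fn` stands in for whatever code actually performs the copy.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class BlobLocation:
    """Hypothetical value type naming a storage account and a container."""
    account: str
    container: str


def would_retrigger(source: BlobLocation, destination: BlobLocation) -> bool:
    """Return True when writing to `destination` lands in the container
    that the blob trigger on `source` is watching."""
    # Compare case-insensitively so differently cased configuration values
    # still count as the same location.
    return (source.account.lower(), source.container.lower()) == (
        destination.account.lower(),
        destination.container.lower(),
    )


def copy_blob_safely(
    source: BlobLocation,
    destination: BlobLocation,
    copy_fn: Callable[[BlobLocation, BlobLocation], None],
) -> None:
    """Run `copy_fn` only if the write cannot re-fire the trigger."""
    if would_retrigger(source, destination):
        raise ValueError(
            "Destination equals the triggering container; "
            "writing here would invoke the function again."
        )
    copy_fn(source, destination)
```

Calling `copy_blob_safely` with identical source and destination raises `ValueError` instead of starting the cycle. Writing to a separate output container remains the simpler fix; the guard only catches a misconfiguration early.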

To delve deeper, refer to: https://learn.microsoft.com/en-us/azure/service-connector/concept-service-connector-internals


The New Outlook Notifications for email with rules not working. Missing features

I have to use the New Outlook to be prepared for the eventuality when Microsoft forces the change.

I’m missing notifications for incoming emails that are organized by mailbox rules.

The Notification Center in Windows is OK, but there should be a way in the rules to set a notification in a window like the Reminders window.

Being able to set a specific sound would be beneficial as well.

I’ve had to resort to using Categories and constantly checking Outlook to see if I missed anything. This has resulted in missing many time-sensitive alerts and emails, such as a midnight internet outage at a business-critical building on my day off, which led to 6 hours of downtime during business hours rather than a resolution before opening.


Azure VM Agent Status not ready

I have created a Red Hat OpenShift private cluster, but the VMs are stuck in the state “agent status not ready.”

I have followed these troubleshooting steps:

Linux Virtual Machine Agent Status “Not Ready” – Microsoft Community Hub

However, all of them point to checking what is on the VM itself. I am unable to do this because I can’t SSH into the machine. Has anyone else run into this issue and been able to resolve it? I am deploying it via CLI as I was not able to do it via the GUI for some reason. This is my script:

#az login

az account set --name "accountnamehidden"

#az provider register -n Microsoft.RedHatOpenShift --wait

#az provider register -n Microsoft.Compute --wait

#az provider register -n Microsoft.Storage --wait

#az provider register -n Microsoft.Authorization --wait

$LOCATION = "eastus" # the location of your cluster

$RESOURCEGROUP = "sample-rg" # the name of the resource group where you want to create your cluster

$CLUSTER = "K8sDev1test" # the name of your cluster

$arovnet = "sample-vnet"

$mastersubnet = "k8sDev1-master-ue-snet"

$workersubnet = "k8sDev1-worker-ue-snet"

az aro create --resource-group "samplerg" --vnet-resource-group "sample-vnet-rg" --name $CLUSTER --vnet $arovnet --master-subnet "k8sDev1-master-ue-snet" --worker-subnet "k8sDev1-worker-ue-snet" --apiserver-visibility Private --ingress-visibility Private --fips true --outbound-type UserDefinedRouting --client-id hidden --client-secret hidden


Cloud Proxy Connector certificate – not trusted

Hi Guys,

I am struggling with a CMG connection point in a disconnected state.

In the logs I see:

Starting to connect to Proxy server xxxx:10140 with client certificate 7B96C5251E6F6C5C48412E87F07749D7DB201C35 and connection ID d99de605-abbb-47cc-81df-0827ab4cb656…

Starting to connect to Proxy server xxx:443 with client certificate 7B96C5251E6F6C5C48412E87F07749D7DB201C35 and connection ID d5b1432f-2030-49cb-94a6-1bab1c4b8af8…

and then:

ERROR: Failed to build Tcp connection d99de605-abbb-47cc-81df-0827ab4cb656 with server xxx:10140. Exception: System.Net.WebException: TCP CONNECTION: Failed to connect TCP socket with proxy server ---> System.Net.Sockets.SocketException: A connection attempt failed because the connected party did not properly respond after a period of time, or established connection failed because connected host has failed to respond xxx:10140~~ at System.Net.Sockets.TcpClient.Connect(String hostname, Int32 port)~~ at Microsoft.ConfigurationManager.CloudConnection.ProxyConnector.TcpConnection.Connect()~~ --- End of inner exception stack trace ---~~ at Microsoft.ConfigurationManager.CloudConnection.ProxyConnector.TcpConnection.Connect()~~ at Microsoft.ConfigurationManager.CloudConnection.ProxyConnector.ConnectionBase.Online()~~ at Microsoft.ConfigurationManager.CloudConnection.ProxyConnector.ConnectionBase.Start()~~ at Microsoft.ConfigurationManager.CloudConnection.ProxyConnector.ConnectionManager.MaintainConnections()

ERROR: Failed to build Http connection d5b1432f-2030-49cb-94a6-1bab1c4b8af8 with server xxx:443. Exception: System.Net.WebException: HTTP CONNECTION: Failed to send data to proxy server ---> System.Net.WebException: Unable to connect to the remote server ---> System.Net.Sockets.SocketException: A connection attempt failed because the connected party did not properly respond after a period of time, or established connection failed because connected host has failed to respond xxx:443~~ at System.Net.Sockets.Socket.DoConnect(EndPoint endPointSnapshot, SocketAddress socketAddress)~~ at System.Net.ServicePoint.ConnectSocketInternal(Boolean connectFailure, Socket s4, Socket s6, Socket& socket, IPAddress& address, ConnectSocketState state, IAsyncResult asyncResult, Exception& exception)~~ --- End of inner exception stack trace ---~~ at System.Net.HttpWebRequest.GetRequestStream(TransportContext& context)~~ at System.Net.HttpWebRequest.GetRequestStream()~~ at Microsoft.ConfigurationManager.CloudConnection.ProxyConnector.HttpConnection.PopulateStream(HttpWebRequest request, IAsyncResult asynchronousResult, String requestString, Byte[] data)~~ --- End of inner exception stack trace ---~~ at Microsoft.ConfigurationManager.CloudConnection.ProxyConnector.HttpConnection.PopulateStream(HttpWebRequest request, IAsyncResult asynchronousResult, String requestString, Byte[] data)~~ at Microsoft.ConfigurationManager.CloudConnection.ProxyConnector.HttpConnection.Connect()~~ at Microsoft.ConfigurationManager.CloudConnection.ProxyConnector.ConnectionBase.Online()~~ at Microsoft.ConfigurationManager.CloudConnection.ProxyConnector.ConnectionBase.Start()~~ at Microsoft.ConfigurationManager.CloudConnection.ProxyConnector.ConnectionManager.MaintainConnections()

When I checked the Cloud Proxy Connector cert with thumbprint 7B96C5251E6F6C5C48412E87F07749D7DB201C35, I see this information:

This CA Root certificate is not trusted. To enable trust, install this certificate in the Trusted Root Certification Authorities store

I assume this is not a normal situation for this cert? Also, from what I read, this cert should only be in the SMS cert store. Is that correct? Could you please advise on the best way to fix this?

Thank you and best regards,

Damian


Get access token of a user to use it to get information

Hello guys, I want to get an access token that I can use as a specific user to get information from Microsoft Intune.

For example:

I want to authenticate by entering an email and password.

Then get an access token for this user.

Use the access token to get the applications that this user sees in their Company Portal.

I already use an application access token to get all mobileApps, but now I want a user access token. Could you please share the steps to do that and what I need to add to my app registration?

By the way, I use Postman to test my APIs.


DLL problem

I am using pcshll.dll from HLLAPI in .NET. This DLL file is present in the code, but the system still throws the exception “System.DllNotFoundException: ‘Unable to load DLL ‘C:\Windows\SysWOW64\pcshll32.dll’: The specified module could not be found. (Exception from HRESULT: 0x8007007E)’”


Bookings typo in french version

Hi,

I would like to bring to your attention a minor typo in the French version of “bookings.” When selecting an employee name, the phrase “N\’importe quel employé” contains an unnecessary backslash (\) character. The correct phrase should be “N’importe quel employé” without the backslash.

Could you please address this issue and update the text accordingly? It would greatly improve the user experience for French-speaking users.

Thank you for your prompt attention to this matter.

Regards,

Olivier


Disaster Recovery for SAP NetWeaver HA deployment with Azure Shared Disk on Windows using ASR

Overview

You have set up the SAP system on Windows to be highly available with Azure shared disk, following the steps in Cluster SAP ASCS/SCS instance on Windows Server Failover Cluster (WSFC) using shared disk in Azure. This makes the SAP system resilient to platform maintenance or hardware failure within an Azure region, but it doesn’t safeguard applications from a large-scale regional disaster. The good news is that with the public preview of ASR for Azure shared disk, you can now easily configure DR for your highly available SAP ASCS/SCS running on WSFC with Azure shared disk.

NOTE: For DR of your Windows SAP system with File share, see Disaster Recovery for SAP NetWeaver high availability deployment with File Share on Windows using ASR for details.

IMPORTANT NOTES:

The example shown in this article was tested with the following versions, cluster share and quorum options:

SAP ASCS/ERS OS version: Windows Server 2019 Datacenter.

Enqueue Server version: ENSA1.

Quorum: Cloud witness.

Cluster share: Azure shared disks.

Shared disk type: ZRS.

As ASR for Azure shared disk is still in public preview, we don’t advise implementing the scenario for critical production workloads. Carefully review the Support matrix for shared disks in Azure VM disaster recovery (preview) – Azure Site Recovery.

This article focuses on the central services and application server components of the SAP system. For the database DR approach, see Disaster Recovery recommendation for SAP workload.

Failover of other dependent services like Domain Name System (DNS) or Active Directory (AD) is not covered in this article.

To replicate VMs using ASR for DR, review supported regions.

ASR doesn’t replicate the Azure load balancer that is used as the virtual IP for the SAP ASCS/ERS cluster configuration in the source site. You need to manually create one in the DR site before or during the failover event.

The cloud witness uses Azure blob storage, so you need a separate storage account in the DR region before or during the failover event.

The procedure described here has not been tested with different OS releases. So, make sure you test and document the entire procedure thoroughly in your environment.

Read Disaster Recovery overview and infrastructure guidelines for SAP workload and Disaster Recovery recommendation for SAP workload for general guidance, strategies, and factors to consider when designing DR for SAP workload.

Disaster recovery architecture of SAP ASCS/ERS with Azure shared disk

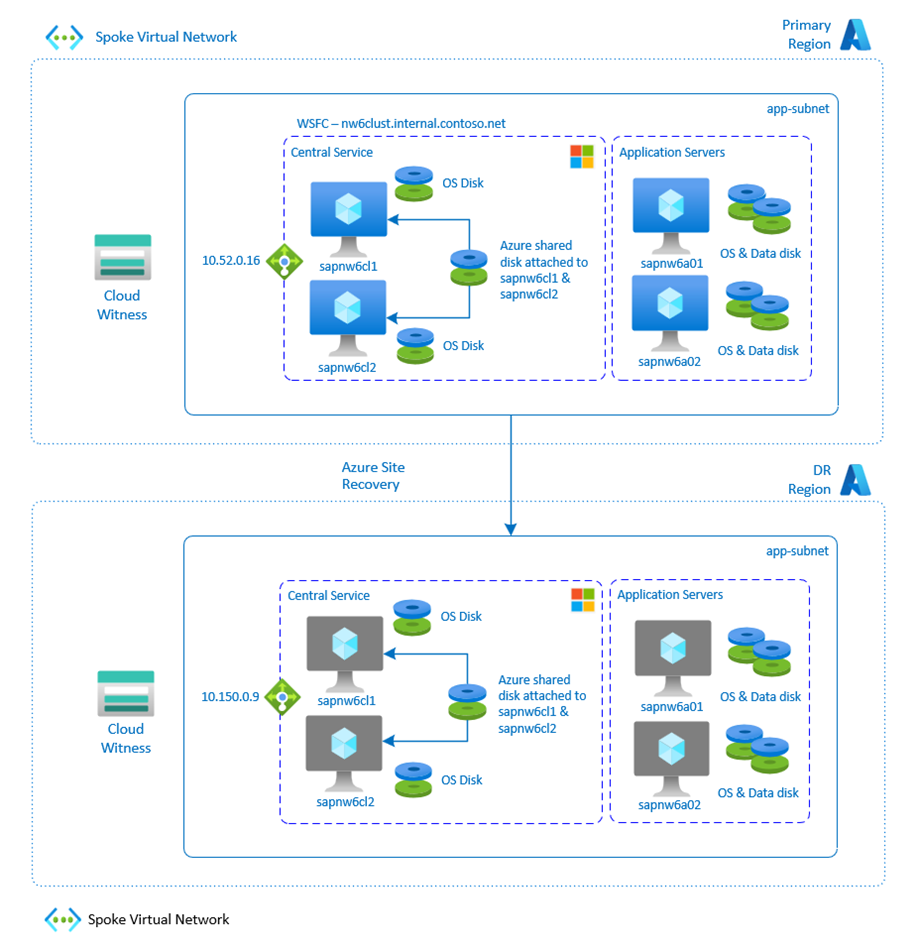

DR architecture for SAP workload on Windows with Azure shared disks

The following figure shows how ENSA1 high availability for the SAP ASCS (sapnw6cl1) and SAP ERS (sapnw6cl2) instances is set up using WSFC, with an Azure shared disk attached to both VMs. The cluster uses a cloud witness as its quorum option. To achieve DR for this setup, ASR replicates the SAP ASCS/ERS VMs across the sites, including the OS disk and the Azure shared disk. In the same way, for the application servers (sapnw6a01 and sapnw6a02) that have OS and data disks (premium managed disks), set up ASR to replicate the VMs to the DR site.

DR architecture for SAP ASCS/ERS and SAP Application Servers running on Windows using ASR

NOTE:

This article describes steps related to the ENSA1 architecture. The same DR process can also be applied to the ENSA2 architecture.

This article does not cover the use of SMB volumes on Azure Files or Azure NetApp Files in your SAP system for interfaces or anything else. If you use them, ensure that they are replicated to the DR region as well.

To have a similar highly available SAP system setup in the DR site, you need to make sure that all the components that are part of the SAP system are replicated.

| Components | DR setup |
| --- | --- |
| SAP ASCS/ERS VMs (includes OS disk and Azure shared disk) | Replicate VMs using Azure Site Recovery |
| Storage used for cloud witness | Create separate storage in the DR region |
| Load balancer used for cluster virtual IP | Create a separate load balancer in the DR region |
| SAP Application Servers VMs (include OS and data disk that uses premium managed disks) | Replicate VMs using Azure Site Recovery |

IMPORTANT: Use of Azure Site Recovery for SAP databases isn’t recommended. For more details on the DR recommendation for databases, refer to SAP database servers DR guidelines.

Disaster Recovery (DR) site preparation

To achieve a similar SAP system setup on the DR site, including the high availability setup of SAP ASCS/ERS, you need to make sure that all the components are replicated and available in the event of a failover.

Configure ASR for SAP ASCS/ERS and application server VMs

Set up the resource group, virtual network, subnet and Recovery Services vault in the secondary site that you will use for DR. To learn more about networking, see prepare networking for Azure VM disaster recovery.

Before enabling ASR on the SAP ASCS and SAP ERS VMs, it is essential that WSFC is configured and that the Azure shared disk is managed by the cluster.

Configure ASR for SAP ASCS and ERS VMs with Azure shared disk by following the steps in the Shared disks in Azure Site Recovery document. Follow Configure replication for Azure VMs in Azure Site Recovery to configure ASR for SAP application servers.

When you use ASR to set up DR for VMs, the VM’s OS, data disks, and Azure shared disk (for ASCS/ERS VMs) are copied to the DR site.

NOTE: With Azure shared disk, the SAP ASCS and ERS VMs are grouped together in ASR. This way, the VMs in the group replicate together to produce an app-consistent recovery snapshot. In the event of a failover, the VMs fail over as a group.

After the VMs are replicated, the status of the protected cluster (sapnw6) and the individual VMs (sapnw6cl1 and sapnw6cl2) changes to “Protected” and the replication health shows “Healthy”.

Status of SAP ASCS/ERS and application server VMs in vault (replicated items) after setting up ASR

Configure the cloud witness for SAP ASCS/ERS in the DR site

Tip: Based on your DR strategy, you can execute this step either while preparing your DR site (for example, when setting up ASR) or at the time of the DR failover process.

Create an Azure storage account on the DR site for use as a cloud witness.

| Site | Storage cloud witness |
| --- | --- |
| Primary | nw6cloudwitness |
| DR | nw6cloudwintess-dr |

Configure standard load balancer for SAP ASCS/ERS in the DR site

Tip: Based on your DR strategy, you can execute this step either while preparing your DR site (for example, when setting up ASR) or at the time of the DR failover process.

Create an Azure standard load balancer on the DR site, similar to the one you created in your primary site. If you create the load balancer in advance, you won’t be able to add VMs to the backend pool because the VMs don’t exist yet in the DR site. Create the backend pool as an empty pool instead, which still allows you to define the load balancing rules. You then add the VMs to the backend pool once the DR failover of the VMs through ASR has been done.

Keep the probe port of the DR site load balancer the same as in the primary site.

When VMs without public IP addresses are placed in the backend pool of an internal standard load balancer, these VMs have no outbound connectivity unless additional configuration is performed to allow routing to public endpoints. For details on how to achieve outbound connectivity, see public endpoint connectivity for Azure VMs & Standard ILB in SAP HA scenarios.

| Site | Frontend IP |
| --- | --- |
| Primary – ASCS | 10.52.0.16 |
| DR – ASCS | 10.150.0.9 |

NOTE: This example uses the ENSA1 setup. For ASR configuration on ENSA2 architecture, you need to configure additional frontend IP and load balancing rules as described in prepare Azure infrastructure for SAP HA with WSFC.

Disaster Recovery (DR) failover event

[A] – Applicable to SAP ASCS Node, [B] – Applicable to SAP ERS Node, [C] – Applicable to SAP Dialog Nodes.

The following procedure should be used for SAP ASCS/ERS with Azure shared disk and the SAP application servers in the event of a DR failover. The procedure assumes that the system in the primary site is unreachable or unavailable for some reason, so the DR failover process is started. The VMs in the primary site stay down after the failover to the DR region is triggered.

NOTE: The exact steps and the order of recovery of your SAP system must be tested, documented and fine-tuned regularly.

Perform the failover of SAP ASCS/ERS and all application server VMs that are configured in ASR to the DR region.

Central Services: If both SAP ASCS/ERS VMs (sapnw6cl1 and sapnw6cl2) that have Azure shared disk(s) in the protected cluster are up and running in the primary site, and recovery points are consistent across both VMs, follow run a failover – recovery point is consistent across all the VMs to perform the failover.

Recovery point is consistent across both the VMs in protected cluster

Central Services: If one of the VMs (sapnw6cl1 or sapnw6cl2) is down on the primary site and you need to start a failover to the DR site, follow the run a failover – recovery point is consistent only for a few VMs document. In this case, the VM that is down won’t be part of the cluster recovery point; instead, you need to select an individual recovery point of that VM to initiate the failover.

Recovery point is consistent only on one of VM in protected cluster

Application Servers: To perform the failover of application server VMs, see Tutorial to fail over Azure VMs to a secondary region for disaster recovery with Azure Site Recovery.

After the failover is completed, the status of the replicated items in the Recovery Services vault looks like this:

Status of VMs after completion of failover

Change the IP address of the VMs in DNS or in host files (if used). In this example, change the IP address for SAP ASCS/ERS and all application servers. The Windows cluster also registers the ASCS/ERS server name in DNS, so you need to change the IP address of the ASCS/ERS server name in DNS or in host files too.

| Entries in DNS | Primary Site | DR site |
| --- | --- | --- |
| nw6clust.internal.contoso.net | 10.52.0.10, 10.52.0.11 | 10.150.0.5, 10.150.0.4 |
| nw6ascscl | 10.52.0.16 (LB frontend IP) | 10.150.0.9 (LB frontend IP) |
| sapnw6cl1 | 10.52.0.10 | 10.150.0.5 |
| sapnw6cl2 | 10.52.0.11 | 10.150.0.4 |
| sapnw6a01 | 10.52.0.12 | 10.150.0.6 |
| sapnw6a02 | 10.52.0.13 | 10.150.0.7 |
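If host files are used instead of DNS, the address change can be scripted. The following is a rough sketch only, assuming a standard hosts-file layout of `<ip> <name> [aliases]`; the `remap_hosts` helper and the `DR_ADDRESSES` dictionary are hypothetical names introduced here, populated with the single-address entries from the example in this article. The cluster name nw6clust resolves to two addresses and is left out of this sketch.

```python
# DR-site addresses keyed by short host name, taken from the example above.
DR_ADDRESSES = {
    "nw6ascscl": "10.150.0.9",
    "sapnw6cl1": "10.150.0.5",
    "sapnw6cl2": "10.150.0.4",
    "sapnw6a01": "10.150.0.6",
    "sapnw6a02": "10.150.0.7",
}


def remap_hosts(hosts_text: str, dr_addresses: dict[str, str]) -> str:
    """Return hosts-file text with known host names pointed at DR addresses.

    Lines without a matching host name, blank lines and comment-only lines
    are returned unchanged.
    """
    result = []
    for line in hosts_text.splitlines():
        # Split off a trailing comment so it can be re-attached afterwards.
        body, hash_sign, comment = line.partition("#")
        fields = body.split()
        if len(fields) >= 2:
            names = fields[1:]
            for name in names:
                # Match on the short name so FQDN entries are covered too.
                short = name.split(".")[0].lower()
                if short in dr_addresses:
                    line = " ".join([dr_addresses[short], *names])
                    if hash_sign:
                        line += " #" + comment
                    break
        result.append(line)
    return "\n".join(result)
```

Run it against a copy of the hosts file first and compare the result before replacing the real file; the sketch normalises whitespace on rewritten lines and does not preserve a trailing newline.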

If you created an Azure standard load balancer in the DR site beforehand with an empty backend pool, add the ASCS/ERS VMs to the backend pool.

On DR site, add VMs in load balancer backend pool

[A] Update the IP address of ASCS server name resource configured in the cluster to the frontend IP of load balancer (the one provisioned in DR site).

Update the IP address for ASCS server name in WSFC

IMPORTANT: For ENSA2, you would need to change two IP addresses (one for ASCS, and one for ERS) to the respective frontend IP that you set up in Azure load balancer.

[A] Change the quorum to the cloud witness storage account created on the DR site.

Update of cluster quorum setting with DR site cloud witness storage account key

[A] Start cluster role.

Status of services in cluster

[C] Update the user store in all application server instances with the correct database hostname that is running in DR region. Check SAP Note 1852017 to get more insights on how to update the ‘hdbuserstore’ on Windows.

[C] Start all dialog instances.

Status of SAP system

Failback to the former primary site

Before you begin to fail back VMs to the former primary site, ensure that you have committed the failover and that the status of your virtual machines is “failover committed”.

Re-protect the failed-over protected cluster (sapnw6) and application server VMs. For more detail, see re-protect VMs with Azure shared disk to the primary site with ASR, and re-protect VMs to the primary site with ASR.

In the event of a failure, follow the same post-failover steps described above.


permission error opening excel file

When I open an Excel file that I created, I get the error below even though I gave myself permission:

“You are not signed in to Office with an account that has permission to open this workbook. You may sign in a new account into Office that has permission or request permission from the content owner.”

I am signed in with the correct account, which has a license.

No issues accessing other files; only this one file has the issue.

I made no changes, so I’m confused about why it’s an issue. One night it was working fine, and the next morning I had this issue.

After speaking to chat support, the file looks to be corrupted, so how do I fix this?


Licenses required to retain access to historic data

Hi Team,

Please validate whether the statement below is true.

We need to know the minimum number of FO licenses required to retain access to historic data on F&O as the client is migrating from on-prem FO to the Business Central cloud. We believe it should be 1 according to resources available to us, but we have been told we may require all 20 FO Licenses to retain access. Please confirm which it is.

Regards,

Kumar


Enabling Defender for Cloud for Azure Subscriptions

I’m unclear about how the enablement works if there hasn’t been any subscription in the tenant that has previously used Microsoft Defender for Cloud (MDC) despite having read through Connect Azure subscription and Enabling Microsoft Defender for Cloud.

The documentation specifies: First sign in to the portal and then open Defender for Cloud. Defender for Cloud is now enabled on your subscription and you have access to the basic features (= Foundational CSPM).

The subscription filter of the Azure portal defaults to all subscriptions of the current Entra ID directory. So when accessing MDC, there is no such thing as “your subscription”.

Imagine a new and pristine directory with a pristine subscription. Is MDC already enabled after creating the directory and the subscription?

If yes, then the documentation should state that Foundational CSPM is enabled by default and no enablement is needed.

If not, what happens when I navigate to MDC on the Azure portal (https://portal.azure.com/#view/Microsoft_Azure_Security/SecurityMenuBlade/)? Does it enable MDC for all current and future subscriptions (since there is no particular subscription “selected” when doing this)? What Azure/directory roles are required to do this? Can I trigger this action via API? How can I find out if someone already initiated this activation?

Based on my tests in my own environment, it appears that Foundational CSPM is automatically activated on new subscriptions without ever navigating to MDC. The basic CSPM features are enabled shortly after creating a new subscription, the ASC default Azure Policy initiative is automatically assigned and MDC assesses the subscription.


How to fetch??

How do I fetch the names of customers whose first letter is a capital?
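The post does not say which database is in use, so the following is only a minimal sketch under assumptions: it uses Python's built-in sqlite3 module, a hypothetical `customers` table with a `name` column, and SQLite's case-sensitive GLOB operator to keep names that start with an ASCII capital letter. Other database engines would need their own case-sensitive comparison.

```python
import sqlite3


def capitalized_names(names):
    """Return the names that start with an ASCII capital letter, sorted."""
    # Hypothetical schema: an in-memory table called customers with one column.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE customers (name TEXT)")
    conn.executemany(
        "INSERT INTO customers (name) VALUES (?)",
        [(n,) for n in names],
    )
    # GLOB is case-sensitive in SQLite, unlike LIKE, so '[A-Z]*' only
    # matches values whose first character is an uppercase ASCII letter.
    rows = conn.execute(
        "SELECT name FROM customers WHERE name GLOB '[A-Z]*' ORDER BY name"
    ).fetchall()
    conn.close()
    return [row[0] for row in rows]
```

For example, `capitalized_names(["Alice", "bob", "Carol", "dave"])` returns `["Alice", "Carol"]`.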


Copilot for M365 Demo Tenant

During the TSP March community call, an upcoming demo tenant was mentioned as a solution for Copilot for M365 labs. It was not in the slide deck, but it was said that it would be available during April.

How is this going? Is there any update on the release date, and are there any details to be shared about this demo tenant?

An update would be greatly appreciated, as it impacts our own investments in a similar solution.

Thanks!


SharePoint Online Mapped Drive Access Denied Error

Good day All

My client’s setup:

A Windows 11 PC (acting as a file server) is set up with the OneDrive client to sync files to the PC's local hard drive.

Those folders are then shared with local file share/drive mapping with everyone in the office having full access. The reason for this is, the client works with big drawings, and it is faster to save the files locally than to wait for OneDrive Client to sync files between team members.

My issue:

When I save a file via the mapped network drive everything works fine as follows:

Save file via mapped drive -> All local users can access the file -> OneDrive client syncs the file to SharePoint Online -> All users working from home can access the file via SharePoint Online.

But when a home user saves a file via SharePoint the following happens:

User working from home saves a file in SharePoint -> OneDrive client syncs the file to the local hard drive -> File is accessible on the PC (aka file server) -> Access Denied error when opening the file via the mapped drive.

To me it looks like the new file saved via SharePoint Online does not inherit the parent file permissions from the file share.

“Everyone” has full rights on the file share.

When I go to the file share's security settings on the PC (aka file server), select “Replace all child object permissions entries with inheritable permission entries from this object” and apply, I can then open the file via the mapped drive. However, for any file added after that by a home user via SharePoint Online, we get Access Denied again.

Has anyone tried a setup like this or experienced an issue like this?


Customer review: Dooap AP automation for Dynamics 365 makes high-volume invoicing quick and painless

Dooap accounts payable (AP) automation, available through Microsoft AppSource, integrates seamlessly with Microsoft Dynamics 365 Finance, reducing processing costs and errors. This mobile-first solution built on Microsoft Azure saves you time and money through automated invoice scanning, capture, and validation. Dooap simplifies your AP processes.

Microsoft AppSource interviewed Michelle Wagner, AP Director, SA Recycling, to learn what she had to say about the product.

What do you like best about Dooap?

I love the Power BI analytics that Dooap offers. Many off-the-shelf reports offer exactly what someone directing the AP team would need. Another great benefit is the way we can use the analytics for our internal training as a basis for continuous improvement. As an example, we can pull the data of invoices returned to AP, sort them by reason, dig into their root causes, and then make necessary changes.

How has the product helped your organization?

We have over 200 approvers in more than 100 locations using the Dooap mobile app, and they love the ability to review and approve, zoom into the details of the invoices, see prior history, coding, and comments, all from the mobile app. Users of the desktop application are the AP team, also approvers, and our buyers (who create the purchase orders in Dynamics 365). We all found benefits in the full audit trail and the ability to send invoices to one another through a buyer review workflow.

How is customer service and support for Dooap?

The support team at Dooap is fantastic. If we have a request or a problem, we submit a support ticket, but we also have a bi-weekly meeting with the team to talk through the support requests. They are quick to address tickets. Many of our wish-list items have been included in future releases of Dooap.

Any recommendations or insights to other users considering this product?

I would tell anyone thinking of doing AP automation with Dooap – do it! Jump onboard – it’s quick, easy, and painless and they’re with you every step of the way. There’s nothing that you cannot solve together with them. With our volume of invoices, I really cannot fathom life without Dooap AP automation. It would be chaos and a nightmare! It would mean a lot of non-value-adding activities, poor credit performance, and poor vendor relations.

What is your overall rating for this product?

5 out of 5 stars.

Cloud marketplaces are transforming the way businesses find, try, and deploy applications to help their digital transformation. Learn more about Microsoft AppSource and find ways to discover the right application for your business needs.

Microsoft Tech Community – Latest Blogs –Read More

Send data to Microsoft Sentinel using Cribl Stream

Microsoft Sentinel is a modern cloud-native SIEM, enriched by AI and threat intelligence empowering security teams with an easy and powerful security operations solution. Microsoft Sentinel offers a comprehensive toolset to collect, correlate, and analyze large volumes of security data across multicloud, multiplatform environments to detect and mitigate cyberthreats at scale.

Microsoft Sentinel has over 350 partner integrations and we are excited to highlight a recent integration with Cribl Stream. Together, Microsoft and Cribl are working to drive accelerated SIEM migrations for customers looking to modernize their security operations (SecOps) with Microsoft Sentinel.

“By combining Cribl’s leading data observability pipeline technology with Microsoft Sentinel’s next generation SecOps SIEM solution, we are collectively helping customers transform and secure their businesses,” says Clint Sharp, CEO of Cribl. “We are excited to deepen our collaboration with Microsoft and unlock value for our joint customers.”

Cribl Stream

Cribl Stream is a robust, vendor-agnostic stream processing engine focused on centralized parsing and processing of data (e.g. security, IT, observability, and telemetry data). Customers can take any source and use Cribl Stream to route, reduce, reformat, enrich, or otherwise structure data in flight, then send it to any destination, including Microsoft Sentinel.

Cribl Stream Integration with Microsoft Sentinel

The Cribl Stream integration with Microsoft Sentinel helps customers accelerate SIEM migrations through Cribl’s ability to easily route data to various Microsoft Sentinel log tiers. In addition to benefitting customers that are migrating to Sentinel, Cribl offers customers additional capabilities including simple deployment, data optimization, and normalization.

Microsoft Sentinel supports both custom data and a variety of standardized formats, all of which Cribl Stream can directly target. Cribl has created several “Cribl Packs” for Microsoft Sentinel, which are self-contained bundles of configurations that enable joint customers to solve full use cases with minimal setup and configuration. Additionally, customers can edit these configurations or build their own custom transformations.

Accelerating SIEM Migrations to Microsoft Sentinel using Cribl Stream

Migrating or standing up a SIEM solution can be a complex, time-consuming, and resource-intensive process. In addition to the recently announced SIEM Migration experience in Microsoft Sentinel for bringing Splunk detections to Microsoft Sentinel analytics rules, customers can use the Cribl Stream integration to easily and quickly bring data in the appropriate schema into Sentinel for security analysis.

Learn More

To learn more about this integration, please see Cribl’s recent blog post and technical documentation here. For the latest information on Microsoft Sentinel see:

Start Microsoft Sentinel free trial today.

Learn how Microsoft Sentinel delivered 234% ROI according to Forrester study.

Read Microsoft Sentinel customer testimonials

Engage with the Microsoft Sentinel tech community


Issues with Wireless Display Adapter

When I try to connect a new display adapter on Windows 11 for the second time, it fails. There is no problem with the first connection.

If you remove the display adapter from the Bluetooth device list, it instantly connects again, and then the issue repeats itself.

Is this a known bug?


[Teams 2.0] How can my custom Teams app cross-launch a PC application?

The main issue with Teams 2.0 is that we cannot open an external link that uses a user-defined protocol in order to cross-launch an application. For example, we registered my_protocal://url_content in the system registry and installed its related application, MyApp.exe. We can open our custom app by visiting my_protocal://url_content in classic Microsoft Teams, but not in Teams 2.0.

According to the Microsoft document: https://learn.microsoft.com/zh-cn/javascript/api/%40microsoft/teams-js/secondarybrowser?view=msteams-client-js-latest Windows Open is standard API. On web and desktop, please use the window.open() method or other native external browser methods.