Month: January 2024

Lesson Learned #464: Utilizing SMO’s Scripting Option in Azure SQL Database.

Today, I encountered a unique service request from a customer inquiring about alternative methods to script out a table and all its dependencies in Azure SQL Database. Traditionally, several approaches are employed, such as utilizing stored procedures like sp_help, sp_depends, or functions like object_definitionor SSMS GUI. These methods, while useful, but, I would like to share other options using SQL Server Management Objects (SMO).

Script:

# Define connection details

$serverName = “servername.database.windows.net”

$databaseName = “DBName”

$tableName = “Table1”

$schemaName = “dbo” # Update if using a different schema

$userId = “UserName”

$password = “Pwd!”

# Create a Server object

$serverConnection = New-Object Microsoft.SqlServer.Management.Common.ServerConnection($serverName, $userId, $password)

$server = New-Object Microsoft.SqlServer.Management.Smo.Server($serverConnection)

# Access the database

$database = $server.Databases.Item($databaseName)

# Access the specific table

$table = $database.Tables.Item($tableName, $schemaName)

# Configure scripting options

$scripter = New-Object Microsoft.SqlServer.Management.Smo.Scripter($server)

$scripter.Options.ScriptSchema = $true

$scripter.Options.Indexes = $true

$scripter.Options.Triggers = $true

$scripter.Options.ScriptDrops = $false

$scripter.Options.WithDependencies = $false

# Script the table

$scripter.Script($table) | ForEach-Object { Write-Output $_ }

Running this small PowerShell Script we are going to have the structure of the table including triggers and Indexes.

Example:

SET ANSI_NULLS ON

SET QUOTED_IDENTIFIER ON

CREATE TABLE [dbo].[PerformanceVarcharNVarchar](

[Id] [int] NOT NULL,

[TextToSearch] [varchar](200) COLLATE SQL_Latin1_General_CP1_CI_AS NOT NULL,

CONSTRAINT [PK_PerformanceVarcharNVarchar] PRIMARY KEY CLUSTERED

(

[Id] ASC

)WITH (STATISTICS_NORECOMPUTE = OFF, IGNORE_DUP_KEY = OFF)

)

SET ANSI_PADDING ON

CREATE NONCLUSTERED INDEX [PerformanceVarcharNVarchar1] ON [dbo].[PerformanceVarcharNVarchar]

(

[TextToSearch] ASC

)WITH (STATISTICS_NORECOMPUTE = OFF, DROP_EXISTING = OFF, ONLINE = OFF)

Microsoft Tech Community – Latest Blogs –Read More

Azure DevOps Pipelines: Discovering the Ideal Service Connection Strategy

About

This post is part of an overall series on Azure DevOps YAML Pipelines. The series will cover any and all topics that fall into the scope of Azure DevOps Pipelines. I encourage you to check it out if you are new to this space.

Introduction

When an organization is trying to configure their Azure DevOps (ADO) environment to deploy into Azure, they are immediately met with the dilemma on how their DevOps instance will execute the deployment against their Azure Environment. This article will go over the various configurations, decisions, and pros and cons that should be evaluated when deciding how your DevOps environment will deploy code into Azure.

This article will not talk about the nitty gritty details on how to configure the connection. This is covered in MS documentation. Nor will we discuss which type of authentication should be created. There are additional resources that will cover this. This article instead will focus on questions such as “How many Service Connections should I have?”, “What access should my Service Connection have?”, “Which pipelines can access my Service Connection?”, etc…

Deployment Scope

This question on how to architect our Service Connections, the means by which Azure DevOps communicates to Azure, will be the main focal point of this piece. Deployment Scope, for the purposes of this article, will refer to what Azure Environment and resources our Azure DevOps Service Connection can interact with.

This answer will vary depending on your organization’s security posture, scale, and maturity. The most secure will be the smallest deployment scope and will then entail the most amount of overhead, while on the flip side the least secure will have the largest deployment scope and least amount of overhead associated with it. We will cover three scenarios and the associated pros and cons: One Service Connection to Rule Them All, a Service Connection per Resource Group, as well as a Service Connection Per Environment.

As for what access the identity from ADO should have in Azure I typically recommend starting with Contributor as this will provide the ability to create Azure resources and interact with the Azure Management Plane. If your organization in leveraging Infrastructure as Code (IaC) I would also recommend leveraging User Access Administrator, to provision Role Based Access Controls and allow Azure to Azure resource communication leveraging Managed Identities. This is effectively the same permission combo as Owner; however, if you are familiar with Azure recommended practices, Owner permission assignment is not recommended in the majority of cases.

One Service Connection to Rule Them All

This scenario is pretty self-explanatory. A team would have a single Service Connection which all their pipeline would leverage across all environments. This scenario could be scoped a little more specific where a specific application will have one Service Connection that is responsible for deploying across all environments.

Pros

The pro to this approach is pretty simple. We are only concerned about one Service Connection for our application(s). This means we have to manage and provision access to one identity behind the scenes in Entra ID. Additionally, if your organization is leveraging a Service Principle and password to authenticate to Azure these credentials will expire and need to be rotated. Since there is one Service Connection for all deployments, we will only need to maintain the connection to one Service Principal. This negates the potential risk your production deployment is delayed due to credentials expiring. This overall would be considered a small concern, unless it is realized while in the middle of a production outage/hot fix.

Cons

As mentioned earlier this would be the least secure approach. By enabling one Service Connection access to everything we are effectively given every deployment regardless of the deployment scope. This also means from a deployment/audit log that all activity will report back to one identity which can make troubleshooting and tracking harder.

We also introduce the unintended risk of a deployment designed to go a development environment inadvertently going to production. Defining the Service Connection is one criteria to separate Azure Deployments across environments. This is a rather large concern as someone can easily unknowingly forget to update the service connection and deploy code in the wrong location.

Service Connection Per Resource Group

The opposite of a one Service Connection to Rule Them All would be a Service Connection Pre Resource Group. This structure would have the finest grain access control where each individual Resource Group would have a Service Connection tied to it’s deployment.

Pros

This approach is the most secure. It is the most restrictive model that is easily available when creating the Service Connection. By creating a Service Connection per Resource Group we are limiting what specific deployments can interact with. This would eliminate the risk of Team A’s deployments touching Team B’s resources since the deployment scope of the Service Connection would be limited to the resources contained in Team A’s Resource Group. This can be a rather significant gain; however, I will point out that a proper CI/CD flow should mitigate this risk.

Additionally, from a logging and auditing perspective there would be clear transparency on what deployment updated what resources. Since each deployment pipeline’s permissions are scoped to that pipeline, we can quickly trace back any changes that were made back to the orchestrating pipeline.

Cons

By the nature of this approach there will be exponentially more Service Connections to manage. If wanting to use this approach I would highly encourage using the Workload Identity Federation when setting up your connections. This will help alleviate the password expiration of your Service Connections.

If you aren’t using Workload Identity Federation this could lead to the team having to constantly watch for and update passwords since a large organization could easily have 100 Resource Groups which means typically 100 pipelines and that could be across three environments. Thus, we are talking about managing and organizing around 300 Service Connections. Not a simple task.

Another significant drawback to this approach occurs when you are deploying Infrastructure as Code or having to update resources that live outside of the designated Resource Group. Since the Service Connection can only interact with the resources in its designated Resource Group it will not be able to provision any additional RBAC access required to resources that live outside of the Resource Group. Again, the scope of this effort in the example above could mean tracking access for 300 Service Connections.

Some common scenarios for this would be activities such as being able to setup up access to retrieve secrets from a shared Key Vault, add a Managed Identity access to a share data source, and pushing images to a shared container registry. To accommodate for this one would have to manually add the extra permissions. This then will have an impact as this access provisioning would not be defined via IaC and runs the risk of being unaccounted for in an organization Azure deployment.

A Service Connection Per Environment

This configuration is a bit of a goldilocks between the two extremes. In this scenario our Service Connection will have access to all resource groups in a specific Azure Subscription/Environment. The Service Connection would be reused across a team(s) to handle Azure Deployments to a specified subscription.

Pros

This architecture will lead to higher developer enablement as developers would be able to, via Azure DevOps Deployments, make any and all changes required for their applications and/or infrastructure. Additionally, by having different Service Connections we create the need to have the Service Connection updated when moving across environments. This is a significant win as it will satisfy any audit/security requirements that environments are separated by access.

This approach limits the activity of the Service Connection’s role assignment to just one per environment as opposed to having to continuously create/update Service Connection role assignments each time a new pipeline is created. This is sizable as it will negate the overhead involved with maintaining these.

Cons

Structuring your Service Connection this way will violate the least privilege access principle. This principle is defined as:

“The information security principle of least privilege asserts that users and applications should be granted access only to the data and operations they require to perform their jobs.”

Since the deployment pipeline will have access greater than what is needed to do its specific job (i.e. access to update resources outside the scope of the defined deployment) this architecture will violate this principle. As such this approach is not the most secure. In some organizations that strictly adhere to the concept of least privilege this could be significant and a deal breaker.

Auditing your Azure Environment will also not be as granular/transparent as it would be under the Service Connection Per Resource Group model since the same Service Connection would be reused across the environment.

Conclusion

These three approaches are all options when looking to configure your Service Connections from Azure DevOps into Azure. Each approach will have its one set of pros and cons your organization should evaluate when making a decision. It is important to understand there isn’t one universal answer for everyone.

If interested in learning more on Azure DevOps YAML Pipelines I’d encourage you to check out additional posts on this topic.

Microsoft Tech Community – Latest Blogs –Read More

SharePoint Roadmap Pitstop: December 2023

Ho ho ho, Merry SharePoint! We ho ho hope you found time away from screens and unwound with fun, family, and friends. December 2023 brought a lot to review AND a lot to look forward. So, without delay, in this here beginning of 2024… let, us, go!

We’ll focus on everything that landed this past month: SharePoint eSignature, Stream: Add a survey, poll/quiz/link/text, Viva Engage: Community creation API, OneDrive app for Teams, OneDrive: Open in app, Microsoft 365 Backup (Public Preview), Microsoft Loop moves to loop.cloud.microsoft, and notification of the Delve Web retirement on Dec. 16, 2024.

We take a glance back and highlight the top 5 SharePoint tech items of 2023, peek ahead at 5 anticipated features slated for CY24-Q1 and shine a light on a few related items and the always-fun teasers. AND we’ve a special bonus, festive song within the companion podcast episode – titled, “It’s beginning to look a lot like SharePoint” – queried at number one on the Power BI charts :).

Details and screenshots below, including the full audible companion: The Intrazone Roadmap Pitstop: Month 2023 podcast episode – all to help answer, “What’s rolling out now for SharePoint and related technologies into Microsoft 365?”

All features listed below began rolling out to Targeted Release customers in Microsoft 365 as of December 2023 (possibly early January 2024).

Inform and engage with dynamic employee experiences

Build your intelligent intranet on SharePoint in Microsoft 365 and get the benefits of investing in business outcomes – reducing IT and development costs, increasing business speed and agility, and up-leveling the dynamic, personalized, and welcoming nature of your intranet.

Microsoft SharePoint eSignature

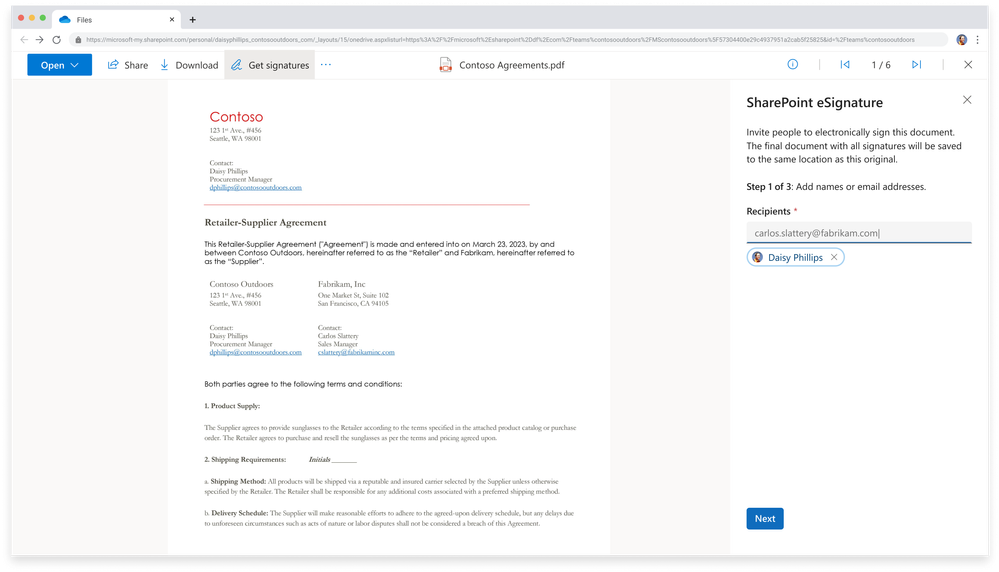

Might I get your John Hancock, please. Microsoft’s native eSignature service allows you to request simple electronic signatures from internal and external recipients for a PDF document in SharePoint. Recipients sign the documents without the document leaving the trusted boundaries of Microsoft.

From a SharePoint document library, open the document for which you want to start the eSignature process. In the document viewer, select More options (…), and then select Get signatures (pictured below). Once sent, the status of the request is set to In progress and recipients are able to add their signatures. An email notification is sent to the creator and the recipients.

On the SharePoint eSignature panel, add up to 10 internal or external recipients you want to sign the document, and then select Next.

The SharePoint eSignature service is set up in the Microsoft 365 admin center. SharePoint eSignature uses simple electronic signatures. Before you begin, determine whether this feature is appropriate for your needs and then read the Microsoft SharePoint eSignature terms of service. This is a PAY-As-You-GO service with a charge per request. In order to setup SharePoint eSignature, please read the admin support documentation: Set up SharePoint eSignature

Additional information

Help and support

Roadmap ID 168828

Stream: Add a survey, poll, or quiz to a video at specific times

Make your video interactive and more engaging by adding a survey, quiz, or poll using Microsoft Forms. You can add forms anywhere in the video timeline. Great for training scenarios or anytime you want get info and insights from your viewers in the flow of viewing.

A survey automatically overlays over the Stream video during playback, here showing the Microsoft Form that pops up inline.

Interactivity can be added, edited, and previewed in Stream Web App in Edit mode by users with editing permissions. You then go to Microsoft Forms to view responses and share a summary link with answers and scores in Microsoft Forms. Viewers can use video timeline markers to navigate in and out of interactive content.

Roadmap ID 180796

Stream: Add a hyperlink or text callout to a video at specific times

Another way to Make your video interactive and engaging is by adding annotations that can include hyperlinks and text. This could be a call out to highlight important moments or share links to related documents and other additional resources. You can add text and links anywhere in the video timeline to help guide your viewers to the right information.

Creating Callout text for a Stream video in Microsoft 365 – noting an in-point and an out-point for when the text/link appears over the video while playing.

And again, Viewers can use video timeline markers to navigate interactive content.

Roadmap ID 180795

Viva Engage Community creation API | Available within Microsoft Graph Beta

Many of our customers have requested this capability in the past to programmatically create communities instead of creation via Engage UI. We invite you to try this beta endpoint and provide us with feedback. Some things to note about the API:

It is Microsoft 365 Groups unified.

It supports app-delegated (user auth) and app-only access.

It is limited to networks in Native mode only – legacy and external Yammer networks will not be able to use this API for community creation.

Community creation for an existing Microsoft 365 group is out of scope.

Roadmap ID: 178311.

Learn more.

Top 5 tech from SharePoint and related tech shipped in 2023

It was a Banner year. Some Marvel enthusiasts might even say it was a Bruce Banner year. And to not make you angry, I shall not delay… Two of the biggest releases were undoubtably Copilot for Microsoft 365 AND New Teams… we count and credit those behemoths for sure, but not have them count against the SharePoint top five of 2023.

So, counting down, here are the top 5 SharePoint and related tech features and updates from 2023:

#5: OneDrive: Simplified sharing

#4: Stream Playlists (powered by Microsoft Lists)

#3: New Stream (on SharePoint) web part

#2: Loop components in Teams channels

#1: SharePoint news to email

Three bonuses | One for each core Microsoft 365 persona:

BONUS for everyone: Microsoft Feed

BONUS one for admins: SharePoint Advanced Management (SAM)

BONUS for devs: Advances in Microsoft Graph connectors

OK, “The sun’s getting’ real low,” time to deescalate and keep things moving into our teamwork section…

Teamwork updates across SharePoint team sites, OneDrive, and Microsoft Teams

Microsoft 365 is designed to be a universal toolkit for teamwork – to give you the right tools for the right task, along with common services to help you seamlessly work across applications. SharePoint is the intelligent content service that powers teamwork – to better collaborate on proposals, projects, and campaigns throughout your organization – with integration across Microsoft Teams, OneDrive, Yammer, Stream, Planner and much more.

OneDrive app for Microsoft Teams

By now, you may have seen or used our new OneDrive experience on the web. And if so, you know how it makes finding and organizing what’s important to you, incredibly easy – no matter where your content is stored or how it’s shared with you.

Now Microsoft Teams is giving you access to that same OneDrive experience, with all the new enhancements, directly from within Teams. The new OneDrive app for Teams is now rolling out to customers worldwide and will replace the generic Files app in Teams; this is the Files app you’ve seen on the left-hand rail of Microsoft Teams – now it’s simply branded “OneDrive” (as it should be)

Highlights of the value of access full OneDrive from within Microsoft Teams.

This new experience means you’ll have a consistent and familiar file management experience across both Teams and OneDrive Web. To learn more about the OneDrive app experience for Teams, please check out the Microsoft Teams post: Effective file management with the next generation of OneDrive app for Microsoft Teams

OneDrive: Open in app

Fewer clicks, tighter integration, less content leaving your tenant trust boundaries. In the same way that you can open a Word, PowerPoint, or Excel file in their respective desktop apps, you can now open any file in its desktop app directly from OneDrive, edit it, and the changes will sync to OneDrive. From OneDrive’s My Files or SharePoint’s Documents, users can now select Open in app to open a file in its native app.

Open any file from OneDrive for Web in its native desktop app.

Note: this feature is powered by OneDrive’s Sync – it requires having OneDrive.exe installed on the user’s machine. In case it’s not running, ‘Open in app’ will start the OneDrive executable.

Roadmap ID 124813

Learn more.

Microsoft 365 Backup (Public Preview)

Microsoft 365 Backup Service is a product offering spanning across the OneDrive, SharePoint, and Exchange Online services. This service provides customers with a consistent, cross product solution, to recover their Microsoft 365 data from cataclysmic events such as: at scale ransomware attacks, or malicious/accidental data deletion/corruption by end users.

Microsoft 365 Backup architecture | Data never leaves the Microsoft 365 data trust boundary or the geographic locations of your current data residency. The backups are immutable unless expressly deleted by the Backup tool admin via product offboarding. OneDrive, SharePoint, and Exchange have multiple physically redundant copies of your data to protect against physical disasters.

Microsoft 365 Backup Service is available on a pay-as-you-go basis and can be enabled through the Microsoft 365 admin center. Global admins can backup and restore content across the defined services (SPO/ODB/EXO). To protect content for any defined service, they need to create a backup policy for that service and add the relevant sites/accounts/mailboxes that they want to protect to that policy. SharePoint admins can backup and restore content for both SharePoint and OneDrive but not Exchange. Similarly, Exchange admins can backup and restore content only for Exchange but not SharePoint and OneDrive; per boundaries and delegation of each admin role.

Roadmap ID 188799

Learn more.

Related technology

SharePoint Embedded (Public Preview)

At its core, Microsoft 365 offers industrial strength capacity and management for your critical enterprise content in the apps you use every day. And much of that is based on the foundational content management capabilities of SharePoint. Imagine if you didn’t have to build a robust content store for your line of business application…

We’re pleased to have announced that SharePoint Embedded, a new way to build custom content apps for enterprises and ISVs, and it’s now in public preview. SharePoint Embedded offers a headless, API-only pattern to build content apps that integrate management capabilities like collaboration, security, and compliance into any app by storing content inside an enterprise’s existing Microsoft 365 tenant.

Slide as presented during Jeff Teper’s opening keynote (November 28, 2023), to highlight how SharePoint Embedded is designed, “Build rich applications that go beyond Microsoft 365 with fully managed and controlled content inside your org trust boundary.”

SharePoint Embedded is available now for public preview, with general availability planned for mid-2024. It’s available under pay-as-you-go consumption terms, meaning you pay for the storage you use and the volume of API calls and data transfers – without the need for additional user licenses.

Learn more. (See additional pricing and availability details)

Microsoft Loop app transitioning to the cloud.microsoft domain

The Microsoft Loop app will soon, if not already, become available at the *.cloud.microsoft domain, accessible at https://loop.cloud.microsoft. Note: MC699714 · Published Dec 19, 2023

loop.cloud.microsoft is the new URL/domain for Microsoft Loop in Microsoft 365, updating loop.microsoft.com.

The cloud.microsoft domain was provisioned in early 2023 to provide a unified, trusted, and dedicated DNS domain space to host Microsoft’s first-party authenticated SaaS products and experiences. The cloud.microsoft domain has been a part of standard Microsoft network guidance on domains and service endpoints since April 2023. If you are currently following this guidance, this change should not impact users in your organization using the above applications under the new domain.

Users will be redirected from https://loop.microsoft.com to https://loop.cloud.microsoft automatically. No specific user action is required. Admins should ensure that connections to *.cloud.microsoft are not blocked from their clients and enterprise networks. This should require no action if standard Microsoft network guidance on domains and service endpoints is being followed.

The legacy SharePoint Invitation Manager is being retired

This platform is currently used only when Document Libraries are shared externally or when an external user is shared with a custom role. These flows will now use Entra B2B Invitation Manager instead. This means that these flows will now also respect any policies or settings in the Entra B2B admin center.

Microsoft will retire Delve Web on December 16, 2024

[Original, official message center post, MC698136, published Dec 14, 2023]

Originally announced by Satya Nadella at the Convergence Conference on March 16, 2015, then “Office Delve” was described as “an experience within Office 365 that surfaces relevant content and insights tailored to each person. It is powered by the Office Graph, an intelligent fabric that applies machine learning to map the connections between people, content and interactions that occur across Office 365.” Plus, a small bit of trivia: The original codename for Delve was “Project Oslo” – Oslo being the hometown of the Fast product team – our original engineering team behind Delve, now driving Microsoft Search and people experiences across Microsoft 365.

Screenshot of Delve overview slide, as presented at Microsoft Ignite | May 4 – 8, 2015 | Chicago, IL.

We’ve always considered Delve as the first Microsoft app to tap into and realize the value of what we now call Microsoft Graph. And today, nearly every Microsoft 365 app and service is tuned and tied to Microsoft Graph; we learned a lot through Delve; much of what you use today was informed by it.

Screenshot of Office Graph (now Microsoft Graph) visual overview slide, as presented at Microsoft Ignite | May 4 – 8, 2015 | Chicago, IL.

We will be retiring Delve on December 16th, 2024. Most of the features and value offered by Delve today are already available and improved in other experiences in Microsoft 365. The main one being Profile Cards in Microsoft 365.

Here is a list of features offered in Delve and the experiences we recommend using instead:

Delve Home – discover relevant documents recommended on Office.com, in Office apps and in Profile Cards. [“For you” recommendation in OneDrive and Using search efficiently for organizational content]

Delve Profile – view profile data in the Profile Cards cross M365, through people in search on Office.com and search in SharePoint. [Profile cards in Microsoft 365]

Edit profile – a new edit profile experience tightly coupled with Profile Cards are being developed and will be released in second half of 2024. It is also possible for users to edit their profile data in the SharePoint profile edit experience (editprofile.aspx).

Organizational view – exists in the Profile Card and as a dedicated experience in Org Explorer [Org Explorer (requires Viva license)]

Favorites – favorites on Office.com and OneDrive is not connected to Delve and is a good option for users with similar functionality and improved availability. [Favorites in OneDrive]

Note: Boards found in Delve Web will not be replaced.

January 2024 teasers + 5 roadmap item highlights coming CY24-Q1

Now, to kick off our teasers section this month, I wanted to look-look ahead-ahead, first beyond next month, to highlight 5 interesting, anticipated features live on the public roadmap — projected to begin roll out in CY24-Q1 that’s Jan-Mar 2024. We’ll then narrow in on two teasers for January 2024.

5 interesting features live on the public roadmap, aka, long-term teasers projected to begin roll out in CY24-Q1:

Collaborate on SharePoint pages and news with co-authoring [Roadmap ID: 124853]

Microsoft Viva: Viva Amplify – Publish to Viva Engage [Roadmap ID: 185105]

Microsoft Teams: Ability for meeting participants to edit their display name [Roadmap ID: 122934]

Microsoft Graph: SharePoint Pages API (Preview) [Roadmap ID: 101166]

Microsoft Viva: Viva Goals in People Profile Cards [Roadmap ID: 117453]

BONUS long-term teaser: Microsoft Teams: Loop components in Teams chat for GCC [Roadmap ID: 93163]; coming ~ CY24-Q2

Review these and more on the public Microsoft 365 roadmap – anytime you like.

January 2024 teasers

Teaser #1: Community Campaigns in Viva Engage [Roadmap ID: N/A]

Teaser #2: Microsoft Teams: Discover Feed in Channels [Roadmap ID: 187084]

… shhh, tell everyone about everything that’s public.

Upcoming events and helpful, ongoing change management resources

Upcoming events

Microsoft Fabric Community Conference | March 26-28, 2024 in Las Vegas, NV

AIIM | April 3-5, 2024 in San Antonio, TX

North American Cloud & Collaboration Summit (NACS) | April 9-11, 2024 in Dallas, TX

Microosft 365 Conference | April 28 – May 4, 2024 in Orlando, FL

SharePoint Intranet Festival (Online)

European Collaboration Summit | May 14-16, 2024 in Weisbaden, Germany

365 EduCon – Seattle | June 3-7, 2024 in Seattle, WA

Ongoing resources:

“Stay on top of Office 365 changes“

“Message center in Office 365“

Install the Office 365 admin app; view Message Center posts and stay current with push notifications.

Microsoft 365 public roadmap + pre-filtered URL for SharePoint, OneDrive, Yammer and Stream roadmap items.

SharePoint Facebook | Twitter | SharePoint Community Blog | Feedback

Follow me to catch news and interesting SharePoint things: @mkashman; warning, occasional bad puns may fly in a tweet or two here and there.

Thanks for tuning in and/or reading this episode/blog of the Intrazone Roadmap Pitstop – December 2023. We are open to your feedback in comments below to hear how both the Roadmap Pitstop podcast episodes and blogs can be improved over time.

Engage with us. Ask those questions that haunt you. Push us where you want and need to get the best information and insights. We are here to put both our and your best change management foot forward.

Stay safe out there on the road’map ahead. And thanks for listening and reading.

Thanks for your time,

Mark Kashman – senior product manager (SharePoint/Lists) | Microsoft)

The Intrazone Roadmap Pitstop – December 2023 graphic showing some of the highlighted release features.

FYI | “It’s beginning to look a lot like SharePoint” lyrics

[Original “It’s beginning to look a lot like Christmas” song written by Meredith Willson. | Parody lyrics written and sung by Mark Kashman (lightly out of tune; right on time; embedded within the above podcast episode, “SharePoint Roadmap pitstop: December 2023”) ]

It’s beginning to look a lot like SharePoint

Everywhere you scroll

Create a site with ease

Design it as you please

Web parts make each page whole.

It’s beginning to look a lot like SharePoint

Powering the M365 apps you adore…

Oh the common collab you’ll see

Across the pages, sites, files, videos, lists, loops, whiteboards, and more… will be

In your common content store.

It’s beginning to look a lot like SharePoint

No matter where you post

A Stream video on each page

One from your CEO in Viva Engage

It’s a wonderful employee experience host.

Yes, it’s beginning to look a lot like SharePoint

The grandest of all content stores

And the prettiest home site you’ll see

Is Viva Connections under the tree

Your New Teams front door.

Sure it’s SharePoint… belov’ed SharePoint… once… more….

Microsoft Tech Community – Latest Blogs –Read More

Contributor Stories: Kristina Devochko

If you’ve ever engaged with the content on the Microsoft Learn platform, it’s highly likely that the material you utilized was written or co-authored by dedicated contributors. These contributors, often volunteers, generously offer their time and expertise to fill knowledge gaps within our portfolio and ensure the content remains up to date.

In this interview series, we aim to acquaint ourselves with some of these valuable contributors. Through these conversations, we seek to understand their motivations for sharing their knowledge and gain insights into their experiences.

Congratulations and welcome to this month’s recognized contributor, Kristina Devochko – cloud guru, tech contributor, content creator, blogger, speaker, and mentor. Kristina resides in Norway and is currently employed at Amesto Fortytwo as a principal cloud engineer. She’s the owner of the kristhecodingunicorn.com tech blog, a CNCF ambassador, Microsoft Azure MVP, CNCF TAG Environmental Sustainability tech lead, Kubernetes Unpacked podcast co-host, and co-organizer of multiple meetup groups. Throughout her career, Kristina has primarily focused on all things cloud native, Kubernetes, cloud security, and green tech. She believes in helping to make the world a better place, is passionate about volunteering with non-profit organizations, and frequently provides guidance to tech communities on building sustainable, secure, and cloud native systems.

Recently, Kristina also added “Microsoft Learn contributor” to her list of accomplishments. Her article, How to manage cost and optimize resources in AKS with Kubecost, was not only her first Microsoft Learn contribution, it was also the first article published on the new Microsoft Learn Community Content site.

Meet Kristina

Kristina Devochko, Principal Cloud Engineer, Amesto Fortytwo

Megan: Welcome Kristina! So lovely to meet you! To start us off, can you share a bit about your experience and background?

Kristina: Hi Megan! Absolutely, I’m happy to. I’ve been in the tech industry for almost eight years now, but tech wasn’t something I’d been planning to do since I was born or even right out of school! I was actually studying economics when a female friend who was already studying computer science inspired me to switch and test it out for myself. It was one of the best choices I ever made and from that point on, I never looked back! I’ve been in many roles so far in my tech career – from full stack developer to database administrator to software architect – and for the last four to five years I’ve been primarily focusing on all things cloud native, platform development, and Azure. Currently, I’m a principal cloud engineer at Amesto Fortytwo. I started sharing my knowledge with various tech communities back in 2021 and am forever grateful (and shocked) with how many wonderful experiences and opportunities I’ve had since then!

Megan: Fascinating that you started in economics but ended up in computer science – all thanks to your friend! And the knowledge sharing you began in 2021 also sounds fascinating. What prompted you to start sharing your knowledge with others and what motivates you to continue?

Kristina: Well, I was basically challenged – asked if I would consider sharing my knowledge! Back in 2021 I was contacted on LinkedIn by a Microsoft Norway employee who challenged me to start contributing to the technical community as a whole by sharing my knowledge and experiences in the form of publishing blog posts, speaking at events, and mentoring, etc. Before I was asked, this wasn’t something I’d ever thought of doing, because I thought I wasn’t that big of an expert to have anything valuable to share with the tech community. But I was wrong, and I’m so happy he helped me realize that! Lots of great things have happened to me since I started contributing to the global technical community that might not have happened if I wasn’t encouraged and supported along the way!

I love sharing my knowledge and love the tech communities! Among other things, they’re amazing, supportive, inclusive, and welcoming, and it’s why I started contributing and continue to contribute three years later! Once you become a part of the global tech community, you stay there forever. At least, that’s how I feel. We help each other grow, we share feedback and knowledge with each other, we support each other, and encourage each other in the community. It energizes me and makes me want to do more. In addition, getting feedback that something I wrote or spoke about was useful and helped others is incredibly gratifying to me as a content creator. It’s the best motivator to keep me helping the tech community. Recently, I also published my first article on the Microsoft Learn platform. It was another fantastic experience, and I’m looking forward to contributing to Microsoft Learn again very soon!

Megan: Yes! Congratulations on your very first Microsoft Learn article, Kristina! It’s great! I read it earlier today when I was preparing for our interview. I’d love to know what led you to start contributing to Microsoft Learn.

Kristina: I’ve used Microsoft Learn many times as a consumer – both for my personal competence development and also for organizing Cloud Skills Challenges activities at work in collaboration with Microsoft. Earlier this year Microsoft contacted me and asked if I was interested in joining the new Microsoft Learn Community Content site, as well as helping with the site launch and being an early contributor to it. Since I’d already gained so much value from this platform as a user, I knew it would be a great opportunity to contribute back to Microsoft Learn and to the entire community that uses it. So, I went for it – and I’m happy to say that it was a truly giving and enjoyable experience! I had room to be heard and share my suggestions and ideas of how this new site could be structured to bring the most value to the community. I was also able to recommend how to motivate more authors to join the new Community Content site and publish their own articles there. Speaking of which, not only was my article my first official Microsoft Learn contribution, it was also the first article published on this new site – an interesting fact I’m very pleased about and find to be quite a fun achievement!

Megan: I’m glad you got that little nudge to join our new Microsoft Learn Community Content site and to contribute to it – sounds like it was a great experience for you. You’ve mentioned that good things have happened since you started sharing your knowledge with the general tech communities in 2021. Can you elaborate on that?

Kristina: Sure! Sharing and contributing was a new experience for me. To ensure that my blog posts, articles, and speaking engagements, etc. were the same high-quality content the tech communities expect, I devoted a lot of time and effort to researching when I began contributing. I learned a lot from the research and tight collaboration with my resources. And it was all worth it because since I started contributing to the various tech communities three years ago, I’m now a:

Microsoft Azure MVP

CNCF ambassador

Kubernetes Unpacked podcast co-host

CNCF Technical Advisory Group Environmental Sustainability (TAG ENV) tech lead

founder of Green Software Foundation Oslo meetup group

co-organizer of two meetup groups: Norwegian .NET User Group Oslo and Cloud Native and Kubernetes Oslo

co-organizer of the Kubernetes and Cloud Native Community Days (KCD) Oslo 2024 conference

tech speaker (some highlights were speaking at NDC Oslo and KubeCon + CloudNativeCon EU 2023 and being the only guest speaker at the Microsoft Build Norway event a few months ago)

program committee member for KubeCon + CloudNativeCon North America and upcoming KubeCon + CloudNativeCon Europe that will take place in Paris in March 2024

delegate at Cloud Field Day 18, an event that was held this past October for independent thought leaders in enterprise cloud to discuss pressing issues and technology advancements with key companies in the space

mentor

Megan: Kristina! WOW! You weren’t kidding when you said a lot’s happened to you as a result of your contributions within the various tech communities. Congratulations! Can you share any learnings or advice for folks who want to start contributing to the Microsoft Learn platform?

Kristina: Yes, great question, Megan. The most important advice I can give is just to start. I know very well how one can feel as an impostor sometimes or start comparing oneself with others and end up underestimating oneself, losing confidence, and not contributing. Ask yourself:

Do I like sharing knowledge and helping others?

Does it sound fun and interesting for me to try out?

Have I ever gained value from someone else’s contribution?

If the answer to one or more of these questions is “Yes”, then you should definitely start contributing! You never know who your content can help! Also, when you’re just starting out, don’t hesitate to seek help, ask for feedback from the Microsoft Learn community, and/or ask for a second pair of eyes to look at your content and give feedback. And if you’re nervous to ask about it in public, find a contributor who you feel comfortable and confident with, and ask him/her privately. A lot of people are happy to help out, including myself!

Megan: Awesome insight and advice, thanks! One final question for you. One purpose of this interview is to get to know a little about the person behind the contributions. What do you like to do in your spare time? Do you have any hobbies?

Kristina: I spend a significant part of my free time on tech and community-related activities. But apart from that, I love reading, not only tech-related literature but also fantasy (I’m a Potterhead, a Harry Potter universe fan) and adventure genres. I also love hiking, drawing, cross stitching, and photography. And I have two cats, Penelope and Sofie, that I absolutely love spending time with, cuddling, and playing with.

Penelope Sofie

Megan: Yes! Ditto for me on the hiking, photography, and cats. Well, it was wonderful meeting you today, Kristina, and getting to know you on a professional and personal level. Before we wrap up, is there anything else you’d like to share about your contributing experience?

Kristina: Thanks, Megan. It was nice to meet you, as well. I just want to reiterate that contributing to the new Microsoft Learn Community Content site has been such a fun, educational, amazing, and gratifying experience for me. I’m looking forward to seeing and reading articles from more Microsoft contributors in the coming months, as well as creating and publishing more articles myself!

Megan: A great final thought for our readers today. Thanks again, Kristina, and I can’t wait to read your future contributions. Take care!

Keep up with Kristina:

Personal website: https://kristhecodingunicorn.com

LinkedIn: https://linkedin.com/in/krisde

Twitter: https://twitter.com/kristhecodingu1

Credly: https://credly.com/users/kristina-devochko/badges

BioDrop: https://biodrop.io/guidemetothemoon

To learn more about:

contributing to Microsoft Learn, visit Microsoft Learn Contributor Home.

joining the new Community Content site on Microsoft Learn, visit Microsoft Learn Community Content.

Microsoft Tech Community – Latest Blogs –Read More

Addressing Data Exfiltration: Token Theft Talk

Let’s continue our discussion on preventing data exfiltration. In previous blogs, we shared Microsoft’s approach to securing authentication sessions with Continuous Access Evaluation (CAE) and discussed securing cross-tenant access with Tenant Restrictions v2. Today our topic is stolen authentication artifacts.

Stolen authentication artifacts – tokens and cookies – can be used to impersonate the victim and gain access to everything the victim had access to. Up until a few years ago, token theft was a rare attack and was most often exercised by corporate Red Teams. Why? Because it’s simpler to steal a password than a cookie. However, with multifactor authentication (MFA) becoming prevalent, we’re seeing real-life attacks involving artifact theft and replay.

Before diving into details, it’s important to note that Microsoft recommends that the first line of defense against token theft is protecting your devices by deploying endpoint protections, device management, MFA (and moving towards phishing-resistant credentials), and antimalware, as described in Token tactics: How to prevent, detect, and respond to cloud token theft | Microsoft Security Blog.

Now, let’s discuss types of authentication artifacts and what techniques are recommended for each type to minimize the impact of theft. All authentication artifacts can be roughly divided into two buckets:

Renewable artifacts, also known as sign-in session artifacts, maintain single sign-on (SSO) and app state between the client and Entra ID.

Non-renewable artifacts, also known as apps session artifacts, grant data access to client applications.

It may be obvious that the first priority would be protecting the most powerful device SSO artifacts – Primary Refresh Tokens (PRT). The good news is that PRTs on all operating system platforms have been hardened against theft from day one. The level of protection depends on operated system capabilities, with Windows offering the strongest protection. PRT protection is not controllable by policy and is always on.

Offering similar protection for all artifacts is on our roadmap, but delivering these capabilities is going to be a multi-year journey. If you want to learn more about various challenges of building comprehensive protection against token theft, watch this RSA presentation. In the meantime, you can reduce token theft by carefully orchestrating Entra ID security products.

Addressing token theft of sign-in session artifacts

Conditional Access: Token protection policy offers cryptographic protection against replay of stolen tokens. This feature leverages and builds on top of already existing cryptographic protections of PRTs. When token protection policy is on, use of unprotected sign-in sessions is blocked. In combination with PRT protection always being on, it extends cryptographic protection to all renewable artifacts. Token protection is in public preview for Office and Outlook on Windows. Start in report-only mode first to evaluate the impact for your organization.

Apps that are not yet in scope of token protection can be protected by enabling compliant network check for Entra Global Secure Access. This policy will ensure that authentication artifacts always come from your organization’s network. It means that stolen tokens can only be replayed from your organization’s network, thus significantly reducing blast radius of the attack.

Addressing token theft of app session artifacts

Depending on your network configuration, you might be able block usage of stolen access tokens and workload cookies outside of your corporate network by using Conditional Access: Block access by location and strictly enforce location policies for continuous access evaluation (CAE). This new CAE enforcement mode blocks access from outside allowed IP ranges, thus blocking any usage of stolen tokens from outside your network and significantly reducing blast radius of the attack. To take advantage of this capability, your users must access both Entra ID and workloads from enumerable IP addresses. CAE strictly enforce locations policy can be enforced for corpnet users accessing data via Entra Global Secure Access (GSA) because Entra GSA is able to pass along IP address of user’s device. When configuring Named Locations in Conditional Access (CA), make sure to include range of IP addresses from which your users access both Entra ID and workloads (e.g. SharePoint Online).

Detecting token theft

To detect stolen artifacts, you can enable risk detections with Microsoft Entra ID Protection to elevate user risk when token theft is suspected. Anomalous token, token issuer anomaly, and adversary in the middle detections can be indicative of token theft. Each detection is calculated offline, whereas anomalous token can also be calculated in real-time at sign-in to catch the threat and flag the sign in as compromised. To take full advantage of these detections, we recommend configuring Risk-based Conditional Access (RBCA) to ensure your users have the proper policy controls applied when token theft is suspected. When RBCA policies are applied against token theft detections, it forces the user to complete multifactor authentication and reset their password, and when applicable, require an admin to revoke user tokens.

Continuous Access Evaluation works together with RBCA to block resource access with tainted artifacts. When user risk increases, CAE issues signals to all CAE-capable workloads to enforce RBCA policy immediately.

Pictures speak a thousand words – this infographic illustrates how different technologies work together to address token theft.

As token theft attacks are becoming more prevalent Microsoft constantly improves defenses against such attacks. Stay tuned for new updates in this area soon.

Anna Barhudarian

Principal Product Manager, Identity Division

Learn more about Microsoft Entra:

Related Articles:

Apply Zero Trust Principles to Authentication Session Management with Continuous Access Evaluation

How Tenant Restrictions v2 Can be Used to Prevent Data Exfiltration

See recent Microsoft Entra blogs

Dive into Microsoft Entra technical documentation

Join the conversation on the Microsoft Entra discussion space and Twitter

Learn more about Microsoft Security

Microsoft Tech Community – Latest Blogs –Read More

Native support for Socket.IO on Azure, scalability issue no more

This article talks about a popular open-source library called “Socket.IO”. It’s often used to build real-time web applications, like multi-player games and co-editing, co-creation applications.

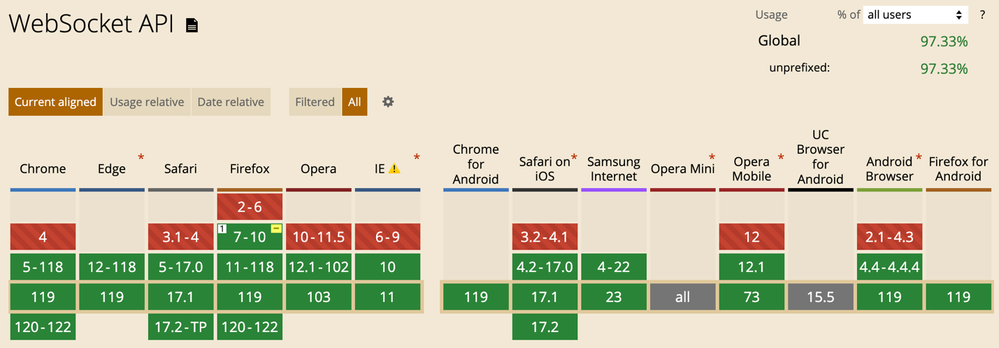

It explores the relationship between WebSocket API, Socket.IO library and an Azure service.

WebSocket API – provides the transport-level plumbing of bi-directional communication between clients and server.

Socket.IO library – builds on top of WebSocket API and provides application-level features that are common when developing real-time web apps.

Azure Web PubSub for Socket.IO – a feature from an Azure service that provides the infrastructure for massive scalability of Socket.IO apps.

What is WebSocket API?

WebSocket API allows web apps to establish a persistent, bi-directional communication channel between the server and client. Compared with polling techniques based on HTTP, WebSocket consumes less resources and offers lower latency. When we conducted performance test between using HTTP polling-based technique and using WebSocket, we saw a 10x reduction of memory reusage.

Screenshot take from caniuse.com/?search=websocket

WebSocket is a perfect fit for building real-time web experiences. It’s why it was introduced in the first place. But the API only concerns itself with establishing the communication channel, in other words, it’s an API that works at the transport level. Application developers much prefer working at a higher level, a level that brings them closer to the application they are building.

The application-level questions developers grapple with are of these sorts:

How do I send a notification to every online user when the database is updated?

How do I notify the only the users who are interested in a certain company stock?

How do I make sure the moves made by one game player are delivered to other players quickly?

What is Socket.IO library?

Socket.IO is a popular open-source library for building real-time web experiences. When we conducted a user study earlier this year, we were pleasantly surprised to learn that developers use Socket.IO for all kinds of applications, from the typical chat rooms and multi-player games to as far afield as controlling IoT devices and real-time monitoring for fraud detections.

While developers don’t necessarily need to use Socket.IO library to build real-time web experiences as these days browsers have solid support for WebSocket API, they continue to choose Socket.IO for several reasons.

Developers choose Socket.IO for productivity gains

The gain in productivity is why developers choose Socket.IO. Socket.IO removes the need to focus on transport level concerns. It offers higher-level APIs to manage client connections as a group. We can easily add a client to a group and send messages to all the clients in that group.

// Sends a message to all the clients in the room ‘room-101’. The event name is “greeting” and the message content is “Hello. Hola! 你好. “);

io.to(“room-101”).emit(“greeting”, “Hello. Hola! 你好. “);

Group membership is highly dynamic in nature. We can add and remove a client from a group as we see fit. As needs arise, we may have many groups, and a client can be in multiple groups.

// Adds a single client to “room-101”

io.in(theSocketId).socketsJoin(“room-101”);

Besides these high-level APIs, Socket.IO handles reconnect when connection drops which is common due to the nature of network connectivity.

Easy-to-learn and easy-to-use APIs

Another selling point is the design of the APIs. Server-side APIs mirror the client-side APIs so once developers become comfortable with one side of the APIs, they can easily transfer the knowledge to the other side. And it conforms to the event-based nature of the browser. Developers can define event handlers to whatever event names they define. It’s been shared with us by numerous Socket.IO users that APIs are intuitive and easy to use.

// Client defines an event handler to handle an event named “greeting”

socket.io.on(“greeting”, () => {

// …

});

To learn more about Socket.IO, visit its documentation site.

Using a high-level library like Socket.IO is not without challenges. Azure solves the scalability challenge.

It’s hard to find faults in Socket.IO as a library that abstracts away the common tasks of building real-time web experiences. Developers can hit the ground running without a problem. That’s until real users start using the applications. For one, meeting the challenge of thousands+ online users can be a pain and an unfamiliar territory for developers. It’s entirely doable and there are guides on the internet, just that it’s difficult to set up. When we are on a deadline to ship features reliably, the lines of code needed to build the infrastructure eat away the already tight time budget.

When we surveyed Socket.IO users, scaling out Socket.IO servers came up the top of the challenges. Scaling out refers to the practice of setting up more than one Socket.IO server and instrumenting a mechanism to coordinate messaging between Socket.IO servers. Plain as it sounds, the devils are in the implementation details.

This is an area Azure can add much value. We asked ourselves “how to make scalability a non-issue for developers” and “how to provide the simplest developer interface” to developers familiar with Socket.IO library?

With the input from the Socket.IO community (over 200 responses), we brought native support for Socket.IO on Azure. This means developers can continue using the Socket.IO APIs they know and love and let Azure handle scalability. You can follow the quickstarts to see how easy it is port a locally running Socket.IO app to Azure. Once it’s running on Azure, it’s ready to handle up to 100,000 concurrent connections with on single Azure resource.

Resources and references

WebSocket API

Socket.IO library documentation

Web PubSub for Socket.IO documentation

Microsoft Tech Community – Latest Blogs –Read More

Uncover the Future: Microsoft Autonomous AI Agents analyzing SAP Data Insights

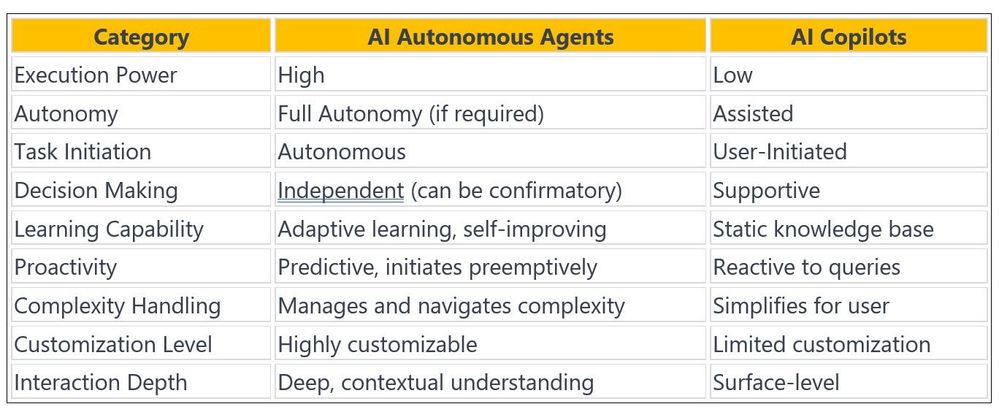

As a professional in SAP, Data and AI, intimately involved with the latest developments in Microsoft Research, our focus has been keenly set on the advancements in Generative AI (GenAI) and Large Language Models (LLMs) such as GPT, Claude, Palm, and Llama. These groundbreaking technologies represent more than mere progress in natural language processing; They embody a paradigm shift in our approach to language generation and comprehension, propelled by the most recent achievements in artificial intelligence. This evolution signifies a transformative step in our interaction with digital language. In the expanding landscape of AI, we distinguish between two types of digital assistants to get: Copilots and AI Agents, each playing a unique role in how we harness AI.

To start with, let’s understand the distinction between “AI Copilot” and “AI Autonomous Agents”, before we explore the Microsoft Groundbreaking AI Frameworks that support SAP ERP, BW Applications like SAP ECC, SAP BW, SAP S/4HANA & Microsoft Dynamics 365.

AI Copilots: Your Helper

Copilots are like navigators, offering real-time support to enhance user decision-making and productivity without overstepping into the realm of action. They’re silent whisperers of data, providing insights and answers upon request, perfect for scenarios where autonomy isn’t necessary or desired. To put it simply, Copilots are software add-ons that offer instant assistance to users of application functions. GitHub Copilot and Microsoft 365 Copilots are some examples. Let’s envision an intelligent SAP Copilot in an SAP S/4HANA or ECC system might show important inventory data to help with procurement decisions without ordering anything itself, provide comprehensive views to assist business users making a smart call without navigating through different SAP screens to get the numbers. Similarly, Microsoft’s Power BI Copilot draws upon data from the SAP ERP system to deliver predictive analytics and trends, equipping business decision makers with the information they need to make smart, proactive decisions while preserving the human touch in final strategic determinations.

AI Autonomous Agents: Your Doer

In the field of AI, Agents are autonomous doers/workers, capable of independently carrying out tasks that align with predefined goals and parameters. They’re the behind-the-scenes workhorses, streamlining workflows by taking on tasks such as auto-generating reports or proactively managing calendars based on learned user preferences. In the domain of Microsoft Dynamics, an AI Agent might not only flag a high-priority customer interaction but also draft a follow-up email, pending the user’s final approval. Additionally, it can retrieve multiple SAP business tables for immediate analysis and create a dynamic report for business decision makers to examine. In the world of smart enterprises, the distinction between Copilots and Agents is based on how they assist business and technical users. Copilots, like helpers within your software, might do things like giving you a summary of your meeting in Microsoft 365. They’re there to guide you, not to take over. Think of them as helpful chatbot in your program. However, AI Agents are different because they do things on their own, like making decisions and completing tasks with just a little bit of help from us.

In conclusion, enterprises running SAP on Azure can greatly benefit from the tailored support of AI Copilots and AI Agents. These advanced AI assistants play a crucial role in guiding enterprises through key decisions and strategies. Moreover, these Agents and Copilots have different ways of working because of the type of model engineering that is involved in answering a given prompt. Agents and Copilots differ in the training data that they use to model the LLM. Copilots usually have data from a specific domain that is relevant to the user. So, when GitHub Copilot makes a suggestion, it does so based on its knowledge of repositories. AI Agents usually have a wider range of resources that they use to form their conclusions and suggestions.

Let’s dive deeper and explore how we can harness the power of two Microsoft’s sophisticated AI frameworks to create autonomous AI agents specifically designed for analyzing complex data on SAP S/4HANA and ECC platforms in real-time scenario with practical examples. To provide some background, Microsoft research team recently released two innovative AI papers related to these sophisticated AI frameworks that have generated considerable buzz in the tech community, showcasing the commitment to pushing the boundaries of AI technology –

1. TaskWeaver: A Code-First Agent Framework, two sophisticated frameworks that are redefining the capabilities of AI in diverse scenarios and

2. AutoGen blogpost: Enabling Next-Gen LLM Applications via Multi-Agent Conversation.

TaskWeaver: Empowering LLMs with Enhanced Capabilities

As we know, Large Language Models (LLMs) have demonstrated remarkable skills in natural language understanding and generation, leading to their use in applications such as chatbots and virtual assistants. However, current LLM frameworks have limitations in dealing with domain-specific data analytics tasks with rich data structures. Furthermore, they have difficulty with flexibility to satisfy diverse user demands. To tackle these challenges, TaskWeaver is introduced as a code-first framework for building LLM-powered autonomous agents. It translates user requests into executable code and treats user-defined plugins as callable functions. TaskWeaver supports rich data structures, flexible plugin usage, dynamic plugin selection, and harnesses LLM coding capabilities for complex logic. It also incorporates domain-specific knowledge through examples and ensures the secure execution of generated code. TaskWeaver offers a strong and flexible framework for creating smart conversational agents that can handle complex tasks and adapt to domain-specific situations. TaskWeaver’s capacity to handle domain-specific data analytics tasks, default plugins and its support for rich data structures make it a distinguished framework in the field of AI. Its customizable plugins enable developers to adjust the framework to their specific needs, providing a level of refinement and efficiency that distinguishes it from other AI frameworks. By leveraging the power of LLMs and enabling developers to adapt the framework to different industries, TaskWeaver is ready to lead the way in transforming how we exploit the potential of AI, we’ll explore with an example in a moment.

Framework Components

TaskWeaver consists of several key components that work in tandem to provide a seamless workflow:

Planner Agent: Acts as the master strategist, orchestrating the workflow by creating a comprehensive list of tasks based on user requests.

Code Generator: Translates the Planner Agent’s instructions into Python code, leveraging TaskWeaver’s plugins to perform specific functions.

Code Interpreter/Executor: Executes the generated code and reports results back to the user.

This workflow demonstrates TaskWeaver’s efficiency in using AI to handle tasks from planning to execution, transforming complex requests into actionable insights.

TaskWeaver Features and Capabilities –

TaskWeaver stands out with its advanced capabilities that cater to a wide range of applications:

Integration with Large Language Models: TaskWeaver is compatible with LLMs, which are the backbone of the framework, enabling the creation of autonomous agents that can navigate through intricate logic and specialized knowledge domains.

Customizable Plugins: Users can create custom Python function-based plugins to execute domain-specific tasks, interact with external data sources, or perform specialized analytics.

Secure Execution: Microsoft ensures the secure execution of code within TaskWeaver, allowing developers to focus on their work without concern for security vulnerabilities.

User-Friendly Interface: Designed to prevent developers from getting bogged down in complicated processes, TaskWeaver offers a smooth user experience.

TaskWeaver’s ability to integrate with large language models (LLMs) and its customizable plugins sets it apart from other AI frameworks. By leveraging the power of LLMs and allowing developers to tailor the framework to their specific needs, TaskWeaver offers a level of sophistication and efficiency that is unmatched in the field.

As AI continues to advance, TaskWeaver is well-positioned to lead the way in reshaping how we harness the potential of AI in various industries.

Microsoft TaskWeaver is a framework that lets developers build and manage tasks across different platforms and applications. Frameworks such as Langchain, Semantic Kernel, Transformers Agent, Agents, AutoGen and JARVIS, which use LLMs for task-oriented conversations, are crucial in this transformation. They allow easy, natural language interactions with AI systems, making complex data analytics and domain-specific tasks more available. However, the real challenge and opportunity are in improving these frameworks to overcome current limitations, especially in specialized areas like SAP and data analytics. This involves adding domain-specific knowledge into GenAI models, improving their ability to process and analyze industry-specific data and workflows. There are many examples of how TaskWeaver can handle different tasks effectively. For example, developers can build smart agents that communicate with users fluently and helpfully, improving user satisfaction through clever conversation. TaskWeaver’s skill to understand user needs, control plugins, and run code safely makes it a very useful tool for various applications, from financial prediction to data exploration.

How to connect TaskWeaver with SAP S/4HANA for Data Insights?

To demonstrate the TaskWeaver approach, and to learn more about how TaskWeaver works in this situation, let’s examine the specific requirements for using it with SAP S/4HANA workload on Azure and HANA DB plugin creation for data analysis and insights. This example presents common actions in a data analysis task, which include getting data, analyzing the data, and displaying the results. By reviewing the steps in this use case, we can identify the main requirements that this example demands:

Rationale and Prerequisites for TaskWeaver, SAP S/4HANA and HANA DB Plugin:

Using Custom HANA Plugins: TaskWeaver should support custom plugins for doing things like getting data from SAP S/4HANA product and sales or custom tables, using specific SAP data extraction algorithms, and making the connection plugin with the ‘hdbcli’ python package deployment.

Handling Complex Data Structures: It must handle complex data formats, like pandas DataFrame, for advanced data processing and facilitate easy data transfer between plugins.

Stateful Execution: TaskWeaver should maintain state across conversations, processing user inputs and executing tasks in an iterative manner throughout the session.

Data Schema Inspection and Action: Prior to executing tasks, TaskWeaver needs to inspect the data schema in the database and use this information for actions before analyzing the SAP product and sales data.

Natural Language Responses: The system should provide user-friendly responses in natural language, summarizing execution results, such as the amount of sales order per product details.

Dynamic Code Generation: TaskWeaver must generate code on-the-fly to meet ad-hoc user requests, including tasks not covered by existing plugins, like visualizing SAP data related to Sales and product tables.

Incorporating Domain Knowledge: The framework should integrate domain-specific knowledge to enhance LLM’s planning and tool usage, ensuring accurate and reliable results in complex domains.

Persisting Results: It should offer a mechanism to save outcomes, such as DataFrames or images, to persistent storage, with options for business users to download these artifacts.

Installation and Setup

Getting started with TaskWeaver AI Agent and SAP HANA DB Plugin setup, it involves cloning the repository from GitHub and setting up a virtual environment to ensure smooth operation. AI Developers can then install the necessary requirements and begin leveraging TaskWeaver’s advanced AI functionalities.

1. Assuming SAP S/4HANA or SAP Suite on HANA(SoH) is operational.

2. Follow below link for step-by-step instructions to set up TaskWeaver on your local environment for testing.

TaskWeaver-SAP-AI-AGENT· amitlals/TaskWeaver-SAP-AI-AGENT

Example prompt –>

=========================================================

_____ _ _ __

|_ _|_ _ ___| | _ | | / /__ ____ __ _____ _____

| |/ _` / __| |/ /| | /| / / _ / __ `/ | / / _ / ___/

| | (_| __ < | |/ |/ / __/ /_/ /| |/ / __/ /

|_|__,_|___/_|_|__/|__/___/__,_/ |___/___/_/

=========================================================

TaskWeaver: I am TaskWeaver, an AI assistant. To get started, could you please enter your request?

Human: ___Fetch Product HT-1001 and related sales orders, and visualize in pie chart

Conclusion –

2024: A Year of New Opportunities and AI Agents.

It’s 2024, and the AI field is buzzing with excitement, particularly about autonomous AI Agent technology and its advancement as AI Agents as a Service (AIAaaS). Following the success of LLM apps in 2022 & 2023, AI Agents are poised to take over this year. Right now, many of these agents are still like new gadgets – fun for experimentation but not yet widely adopted in everyday life. However, the foundation is being laid, and we’re about to see these AI agents evolve from novelty concepts to practical, real-world solutions.

AI agents have the power to shape our future in amazing ways. The speed of technological progress is remarkable, and the possibilities for AI agents to change the way we work and live are huge. They can make our tasks easier, boost creativity, and improve efficiency, all with little effort. This change will surely reshape the business world, giving us a chance to grow with these innovative technologies. As we move towards this AI-led future, it will be an exciting adventure full of new findings and inventions.

For any feedback or issues related to this blog, please feel free to reach out to Amit Lal

Report Issues or Feedback: Have you encountered any problems?

Click to join the Discussion: Want to participate in discussions about this repo?

Reference –

AutoGen Official Blog: AutoGen: Enabling next-generation large language model applications – Microsoft Research

TaskWeaver Official Blog: TaskWeaver | TaskWeaver (microsoft.github.io)

GitHub

AutoGen: microsoft/autogen

TaskWeaver: microsoft/TaskWeaver

Papers:

Autogen: https://arxiv.org/pdf/2308.08155.pdf

TaskWeaver: https://arxiv.org/pdf/2311.17541.pdf

—

….Up next: Explore the synergy of AutoGen Framework with SAP platforms in our upcoming post. Stay tuned for more!

—

–Amit Lal

Disclaimer: The announcement of the Microsoft AI Framework is intended for informational purposes only. Microsoft reserves the right to make adjustments or changes to the product, its features, availability, and pricing at any time without prior notice. This blog does not constitute a legally binding offer or guarantee of specific functionalities or performance characteristics. Please refer to the official product documentation and agreements for detailed information about the product and its use. Microsoft is deeply committed to the responsible use of AI technologies. It is recommended to review and comply with all applicable laws, regulations, and organizational policies to ensure the responsible and ethical use of AI.

Microsoft Tech Community – Latest Blogs –Read More

Running AutoDock HPC application on Azure Machine Learning platform

Azure Machine Learning is a service that enables you to create, train, deploy, and manage machine learning models and experiments. You can also use it to run HPC applications, such as simulations, rendering, or data analysis.

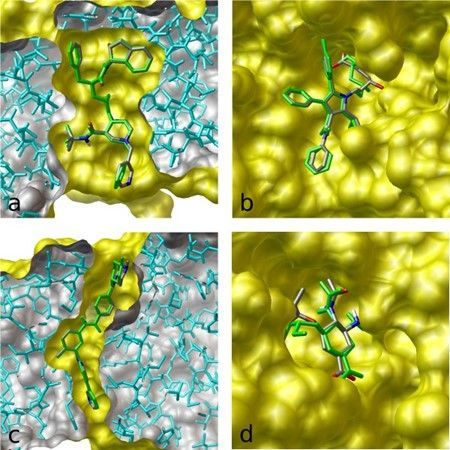

Autodock is a powerful tool for molecular simulation, essential for researchers who work with large-scale computational tasks in HPC environments. It is especially useful for drug design and biomolecular interaction studies.

Figure 1. The image illustrates the results of flexible docking (green) superimposed on the crystal structures of (a) indinavir, (b) atorvastatin, (c) imatinib, and (d) oseltamivir bound to their respective targets. (source: AutoDock Vina (scripps.edu))

This article will guide you through the process of running Autodock Vina docking simulation scripts on the Azure Machine Learning platform. You will also learn how to provision Azure NetApp Files (ANF) volumes for the Machine Learning notebooks, which can provide persistent storage for most HPC applications.

The steps are:

Prepare your Azure Machine Learning environment

Create ANF volume

Install Autodock Vina on the ANF volume

Create a compute cluster to run your job

Create your Autodock Vina docking script

Create your command job to run the docking script on the compute cluster

At the end, we will conclude this tutorial and highlight the benefits of using AML environment for HPC applications. We will also explore some customization options based on various needs and factors.

Let’s begin!

Prepare your Azure Machine Learning environment

Follow Quickstart: Get started with Azure Machine Learning to create an Azure Machine Learning workplace, sign in to studio and create a new notebook.

Set your kernel and create handle to workplace. You’ll create ml_client for a handle to the workspace. Enter your Subscription ID, Resource Group name and Workspace name and run in your notebook.

from azure.ai.ml import MLClient

from azure.identity import DefaultAzureCredential

# authenticate

credential = DefaultAzureCredential()

# Get a handle to the workspace

ml_client = MLClient(

credential=credential,

subscription_id=”<SUBSCRIPTION_ID>”,

resource_group_name=”<RESOURCE_GROUP>”,

workspace_name=”<AML_WORKSPACE_NAME>”,

)

Create ANF volume

Follow Create an NFS volume for Azure NetApp Files with Service Level “Standard” and NFSv3 protocol to create a 4TiB size volume.

To ensure accessibility from Azure Machine Learning compute to the ANF volume, we will place both the Azure Machine Learning compute resources and the Azure NetApp Files subnets within the same VNET.

Figure 2. Ensure Azure Machine Learning compute resources and Azure NetApp Files subnets are within the same VNET.

2. Open terminal of your notebook. Follow Mount NFS volumes for virtual machines | Microsoft Learn to test if you can mount the ANF volume from the Compute node successfully.

Figure 3. Test if you can mount the ANF volume successfully from the Compute node.

Install Autodock Vina on the ANF volume

Follow Installation — Autodock Vina 1.2.0 documentation (Autodock-vina.readthedocs.io) to install Autodock Vina. We will download the executable for the latest release (1.2.5):

$ cd anfvol

$ wget https://github.com/ccsb-scripps/AutoDock-Vina/releases/download/v1.2.5/vina_1.2.5_linux_x86_64

$ chmod +x vina_1.2.5_linux_x86_64

Create a compute cluster to run your job

from azure.ai.ml.entities import AmlCompute, NetworkSettings

# Name assigned to the compute cluster

cpu_compute_target = “cpu-dockingcluster”

try:

# let’s see if the compute target already exists

cpu_cluster = ml_client.compute.get(cpu_compute_target)

print(

f”You already have a cluster named {cpu_compute_target}, we’ll reuse it as is.”

)

except Exception:

print(“Creating a new cpu compute target…”)

# Let’s create the Azure Machine Learning compute object with the intended parameters

# if you run into an out of quota error, change the size to a comparable VM that is available.

# Learn more on https://azure.microsoft.com/en-us/pricing/details/machine-learning/.

network_settings = NetworkSettings(vnet_name=”/subscriptions/<SUBSCRIPTION_ID>/resourceGroups/<RESOURCE_GROUP>/providers/Microsoft.Network/virtualNetworks/<VNET_NAME>”,

subnet=”/subscriptions/<SUBSCRIPTION_ID>/resourceGroups/<RESOURCE_GROUP>/providers/Microsoft.Network/virtualNetworks/<VNET_NAME>/subnets/<SUBNET_NAME>”)

cpu_cluster = AmlCompute(

name=cpu_compute_target,

# Azure Machine Learning Compute is the on-demand VM service

type=”amlcompute”,

# VM Family

size=”STANDARD_HB120RS_V3″,

# Minimum running nodes when there is no job running

min_instances=0,

# Nodes in cluster

max_instances=4,

# How many seconds will the node running after the job termination

idle_time_before_scale_down=180,

# Dedicated or LowPriority. The latter is cheaper but there is a chance of job termination

tier=”Dedicated”,

network_settings=network_settings

)

print(

f”AMLCompute with name {cpu_cluster.name} will be created, with compute size {cpu_cluster.size}”

)

# Now, we pass the object to MLClient’s create_or_update method

cpu_cluster = ml_client.compute.begin_create_or_update(cpu_cluster)

Create your Autodock Vina docking script (basicdocking.sh)