Month: February 2024

Microsoft and open-source software

Microsoft has embraced open-source software—from offering tools for coding and managing open-source projects to making some of its own technologies open source, such as .NET and TypeScript. Even Visual Studio Code is built on open source. For March, we’re celebrating this culture of open-source software at Microsoft.

Explore some of the open-source projects at Microsoft, such as .NET on GitHub. Learn about tools and best practices to help you start contributing to open-source projects. And check out resources to help you work more productively with open-source tools, like Python in Visual Studio Code.

.NET is open source

Did you know .NET is open source? It’s also cross-platform, and it’s maintained by Microsoft and the .NET community. Check it out on GitHub.

Python Data Science Day 2024: Unleashing the Power of Python in Data Analysis

Celebrate Pi Day (3.14) with a journey into data science with Python. Set for March 14, Python Data Science Day is an online event for developers, data scientists, students, and researchers who want to explore modern solutions for data pipelines and complex queries.

C# Dev Kit for Visual Studio Code

Learn how to use the C# Dev Kit for Visual Studio Code. Get details and download the C# Dev Kit from the Visual Studio Marketplace.

Visual Studio Code: C# and .NET development for beginners

Have questions about Visual Studio Code and C# Dev Kit? Watch the C# and .NET Development in VS Code for Beginners series and start writing C# applications in VS Code.

Reactor series: GenAI for software developers

Step into the future of software development with the Reactor series. GenAI for Software Developers explores cutting-edge AI tools and techniques for developers, revolutionizing the way you build and deploy applications. Register today and elevate your coding skills.

Use GitHub Copilot for your Python coding

Discover a better way to code in Python. Check out this free Microsoft Learn module on how GitHub Copilot provides suggestions while you code in Python.

Getting started with the Fluent UI Blazor library

The Fluent UI Blazor library is an open-source set of Blazor components used for building applications that have a Fluent design. Watch this Open at Microsoft episode for an overview and find out how to get started with the Fluent UI Blazor library.

Remote development with Visual Studio Code

Find out how to tap into more powerful hardware and develop on different platforms from your local machine. Check out this Microsoft Learn path to explore tools in VS Code for remote development setups and discover tips for personalizing your own remote dev workflow.

Using GitHub Copilot with JavaScript

Use GitHub Copilot while you work with JavaScript. This Microsoft Learn module will tell you everything you need to know to get started with this AI pair programmer.

Generative AI for Beginners

Want to build your own GenAI application? The free Generative AI for Beginners course on GitHub is the perfect place to start. Work through 18 in-depth lessons and learn everything from setting up your environment to using open-source models available on Hugging Face.

Use OpenAI Assistants API to build your own cooking advisor bot on Teams

Find out how to build an AI assistant right into your app using the new OpenAI Assistants API. Learn about the open playground for experimenting and watch a step-by-step demo for creating a cooking assistant that will suggest recipes based on what’s in your fridge.

What’s new in Teams Toolkit for Visual Studio 17.9

What’s new in Teams Toolkit for Visual Studio? Get an overview of new tools and capabilities for .NET developers building apps for Microsoft Teams.

Embed a custom webpage in Teams

Find out how to share a custom web page, such as a dashboard or portal, inside a Teams app. It’s easier than you might think. This short video shows how to do this using Teams Toolkit for Visual Studio and Blazor.

Get to know GitHub Copilot in VS Code and be more productive

Get to know GitHub Copilot in VS Code and find out how to use it. Watch this video to see how incredibly easy it is to start working with GitHub Copilot. Just start coding and watch the AI go to work.

Customize Dev Containers in VS Code with Dockerfiles and Docker Compose

Dev containers offer a convenient way to deliver consistent and reproducible environments. Follow along with this video demo to customize your dev containers using Dockerfiles and Docker Compose.

Designing for Trust

Learn how to design trustworthy experiences in the world of AI. Watch a demo of an AI prompt injection attack and learn about setting up guardrails to protect the system.

AI Show: LLM Evaluations in Azure AI Studio

Don’t deploy your LLM application without testing it first! Watch the AI Show to see how to use Azure AI Studio to evaluate your app’s performance and ensure it’s ready to go live. Watch now.

What’s winget.pro?

The Windows Package Manager (winget) is a free, open-source package manager. So what is winget.pro? Watch this special edition of the Open at Microsoft show for an overview of winget.pro and to find out how it differs from the well-known winget.

Use Visual Studio for modern development

Want to learn more about using Visual Studio to develop and test apps? Start here. In this free learning path, you’ll dig into key features for debugging, editing, and publishing your apps.

Build your own assistant for Microsoft Teams

Creating your own assistant app is super easy. Learn how in under 3 minutes! Watch a demo using the OpenAI Assistants API, Teams AI Library, and the new AI Assistant Bot template in VS Code.

GitHub Copilot fundamentals – Understand the AI pair programmer

Improve developer productivity and foster innovation with GitHub Copilot. Explore the fundamentals of GitHub Copilot in this free training path from Microsoft Learn.

How to get GraphQL endpoints with Data API Builder

The Open at Microsoft show takes a look at using Data API Builder to easily create GraphQL endpoints. See how you can use this no-code solution to quickly enable advanced, efficient data interactions.

Microsoft, GitHub, and DX release new research into the business ROI of investing in Developer Experience

Investing in the developer experience has many benefits and improves business outcomes. Dive into our groundbreaking research (with data from more than 2,000 developers at companies around the world) to discover what your business can gain with better DevEx.

Build your custom copilot with your data on Teams featuring Azure the AI Dragon

Build your own copilot for Microsoft Teams in minutes. Watch this demo to see how to build an AI Dragon that will take your team on a cyber role-playing adventure.

Microsoft Graph Toolkit v4.0 is now generally available

Microsoft Graph Toolkit v4.0 is now available. Learn about its new features, bug fixes, and improvements to the developer experience.

Microsoft Mesh: Now available for creating innovative multi-user 3D experiences

Microsoft Mesh is now generally available, providing an immersive 3D experience for the virtual workplace. Get an overview of Microsoft Mesh and find out how to start building your own custom experiences.

Global AI Bootcamp 2024

Global AI Bootcamp is a worldwide annual event that runs throughout the month of March for developers and AI enthusiasts. Learn about AI through workshops, sessions, and discussions. Find an in-person bootcamp event near you.

Microsoft JDConf 2024

Get ready for JDConf 2024—a free virtual event for Java developers. Explore the latest in tooling, architecture, cloud integration, frameworks, and AI. It all happens online March 27-28. Learn more and register now.

Microsoft Tech Community – Latest Blogs –Read More

Leverage anomaly management processes with Microsoft Cost Management

The cloud comes with the promise of significant cost savings compared to on-premises costs. However, realizing those savings requires diligence to proactively plan, govern, and monitor your cloud solutions. Your ability to detect, analyze, and quickly resolve unexpected costs can help minimize the impact on your budget and operations. When you understand your cloud costs, you can make more informed decisions on how to allocate and manage them. But even with proactive cost management, surprises can still happen. That’s why we developed several tools in Microsoft Cost Management to help you set up thresholds and rules so you can detect out-of-scope changes in your cloud costs early. Let’s take a closer look at some of these tools and how you can use them to discover anomalous costs and usage patterns.

Identify atypical usage patterns with anomaly detection

Anomaly detection is a powerful tool that can help you minimize unexpected charges: it identifies atypical usage patterns, like cost spikes or dips, based on your cost and usage trends so that you can take corrective action. For example, you might notice that something has changed, but you’re not sure what. Suppose you have a subscription that consumes around $100 every day. A new service is added to the subscription by mistake, and the daily cost doubles to $200. With anomaly detection, you will be notified about the steep spike in daily cost, which you can then investigate to see whether it’s an expected increase or a mistake and take corrective measures early.
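To make the $100-to-$200 example concrete, here is a minimal sketch of one simple way to flag a spike: compare each day’s cost with the mean and standard deviation of the preceding days. This is an illustration only and is not the algorithm Cost Management uses; the function name, the 7-day window, and the threshold of 3 are assumptions chosen for the example.

```python
from statistics import mean, stdev


def find_cost_anomalies(daily_costs, window=7, threshold=3.0):
    """Return (day_index, cost) pairs that deviate sharply from the trailing window.

    Illustrative only: a trailing-window z-score check, not the Cost Management model.
    """
    anomalies = []
    for i in range(window, len(daily_costs)):
        history = daily_costs[i - window:i]
        baseline = mean(history)
        # Guard against a perfectly flat history, where the standard deviation is 0.
        spread = max(stdev(history), 0.01 * baseline)
        if abs(daily_costs[i] - baseline) > threshold * spread:
            anomalies.append((i, daily_costs[i]))
    return anomalies


# Roughly $100 a day, then a service added by mistake doubles the cost.
costs = [100, 102, 98, 101, 99, 100, 103, 200]
print(find_cost_anomalies(costs))  # → [(7, 200)]
```

A dip would be flagged the same way, because the check uses the absolute difference from the baseline.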

You can also embed time-series anomaly detection capabilities into your apps to identify problems quickly. AI Anomaly Detector ingests time-series data of all types and selects the best anomaly detection algorithm for your data to ensure high accuracy. Detect spikes, dips, deviations from cyclic patterns, and trend changes through both univariate and multivariate APIs. Customize the service to detect any level of anomaly. Deploy the anomaly detection service where you need it—in the cloud or at the intelligent edge.

Use Alerts to get notified when an anomalous usage change is detected

You can subscribe to anomaly alerts to be automatically notified when an anomalous usage change is detected, with a subscription-scope email displaying the underlying resource groups that contributed to the anomalous behavior. Alerts can also be set up for your Azure reserved instances usage to receive email notifications, so you can take remedial action when your reservations have low utilization.

Here’s an example of how to create an anomaly alert rule:

Set the scope to the subscription that needs monitoring.

Navigate to the ‘Cost alerts’ page in Cost Management. Select ‘Anomaly’ as the Alert type.

Specify the recipient email IDs.

Click on ‘Create alert rule.’

If an anomaly is detected, you will receive alert emails with basic information to help you start your investigation.

Get deeper insights with smart views

Use smart views in Cost Analysis to view anomaly insights that were automatically detected for each subscription. To drill into the underlying data for something that has changed, select the Insight link. You can also create custom views for anomalous usage detection such as unused costs from Azure reserved instances and savings plans that could point to further optimization for specific workloads.

You can also group related resources in Cost Analysis and smart views. For example, group related resources, like disks under virtual machines or web apps under App Service plans, by adding a “cm-resource-parent” tag to the child resources with a value of the parent resource ID. Or use Charts in Cost Analysis smart views to view your daily or monthly cost over time.
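As a small illustration of the tagging convention described above, the sketch below builds the tag set for a child resource. Only the tag name “cm-resource-parent” and the rule that its value is the parent resource ID come from the text; the helper name and the resource IDs are hypothetical, and the code does not call Azure.

```python
PARENT_TAG = "cm-resource-parent"  # tag name taken from the text above


def with_parent_tag(existing_tags, parent_resource_id):
    """Return a copy of a child resource's tags with the parent tag added."""
    tags = dict(existing_tags)  # copy so the caller's dict is not modified
    tags[PARENT_TAG] = parent_resource_id
    return tags


# Hypothetical parent VM ID, for illustration only.
vm_id = (
    "/subscriptions/00000000-0000-0000-0000-000000000000"
    "/resourceGroups/demo-rg/providers/Microsoft.Compute/virtualMachines/demo-vm"
)

# Tags you would then apply to the disk with your usual tooling.
disk_tags = with_parent_tag({"env": "dev"}, vm_id)
print(disk_tags)
```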

Use Copilot for AI-based assistance

For quick identification and analysis of anomalies in your cloud spend, try the AI-powered Copilot in Cost Management, available in preview in the Azure portal. For example, if a cost doubles, you can ask Copilot natural language questions to understand what happened and get the insights you need faster. You don’t need to be an expert in navigating the Cost Management UI or analyzing the data; you simply let the AI do it for you. You can ask, “Why did my cost increase this month?” or “Which service led to the increase in cost this month?” Copilot will then provide a breakdown by categories of spend and their percentage impact on your total invoice. From there, you can use the generated suggestions to investigate your bill further.

Learn more about streamlining anomaly management

Optimizing your cloud spend with Azure becomes much easier when you streamline your anomaly management processes with tools like anomaly detection, alerts, and smart views in Microsoft Cost Management. You can learn even more about using FinOps best practices to manage anomalies in your resource usage at aka.ms/finops/solutions.

Zero-trust Security for Windows Container-based application with Calico

Hello, we would like to feature our partners from Tigera Calico, who teamed up with us to co-author this blog on zero-trust security for Windows container-based applications with Calico. The co-authors are listed below.

Dhiraj Sehgal, Jen Luther Thomas

Enterprises are increasingly integrating Windows containers into their Kubernetes workflows. Much as with Linux containers, they are looking to strengthen the security posture of their Windows container-based applications by explicitly authorizing and verifying every communication request and minimizing trust assumptions. Zero-trust workload security restricts communication between pods and services at a very fine-grained level, resulting in multiple benefits that include:

Enhanced Security: Ensures each pod has limited and authorized communication access, preventing potential threats from spreading across the cluster.

Compliance: Meets compliance requirements by enforcing strict access controls and data isolation.

Isolation of Sensitive Data: Isolates sensitive data from other less sensitive workloads to reduce the risk of unauthorized access.

Workload Communication Visibility: Provides better visibility into workload-workload communication and security gaps, including network security policies.

As the number of Windows container-based workloads and associated pods running in the cluster grows, building security posture requires zero-trust workload access security with the following:

Egress access controls: Secure access from individual pods running Linux or Windows containerized workloads in a Kubernetes cluster to external resources, including cloud services, databases, and 3rd-party APIs.

DNS Policies: Enforce DNS policies at the source pod so that fully qualified domain names (FQDN/DNS) can be used to allow access from a pod or set of pods (via label selector) to external resources—eliminating the need for a firewall rule or equivalent.

Global and Namespaced Network Sets: Reference IP subnets in CIDR notation in security policies so that access controls update automatically for all the IPs those CIDRs describe.

Identity-Aware Microsegmentation: Segment workloads using workload identities to achieve workload isolation and limit lateral communication.

Application-Layer Policy: Apply security controls at the application level to secure pod-to-pod traffic, including HTTP methods and URL paths. Eliminate the operational complexity of deploying an additional service mesh.
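To give a feel for what two of the controls above (DNS policies and network sets) might look like as resources, here is a hedged sketch that builds them as Python dictionaries and prints them as JSON. The field names follow our understanding of Calico’s projectcalico.org/v3 resource format and should be checked against the Calico Cloud documentation for your version. The helper functions and every name, label, domain, and CIDR are hypothetical.

```python
import json


def egress_dns_policy(name, namespace, pod_selector, domains, port=443):
    """Build a namespaced policy that allows TCP egress only to the given domains."""
    return {
        "apiVersion": "projectcalico.org/v3",
        "kind": "NetworkPolicy",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "selector": pod_selector,  # label selector for the source pods
            "types": ["Egress"],
            "egress": [{
                "action": "Allow",
                "protocol": "TCP",
                # Assumed DNS-policy field: match destinations by domain name.
                "destination": {"domains": list(domains), "ports": [port]},
            }],
        },
    }


def global_network_set(name, labels, cidrs):
    """Build a network set so policies can refer to a group of CIDRs by label."""
    return {
        "apiVersion": "projectcalico.org/v3",
        "kind": "GlobalNetworkSet",
        "metadata": {"name": name, "labels": dict(labels)},
        "spec": {"nets": list(cidrs)},
    }


# Hypothetical names, labels, domains, and CIDRs, for illustration only.
dns_policy = egress_dns_policy(
    "allow-payments-api", "default", "app == 'checkoutservice'", ["api.example.com"])
partner_ips = global_network_set(
    "partner-ranges", {"role": "partner"}, ["203.0.113.0/24"])

print(json.dumps([dns_policy, partner_ips], indent=2))
```

Because the network set carries labels, a policy can select it by label instead of listing IP ranges, so updating the set’s CIDRs updates every policy that refers to it.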

Let’s go through an example of building zero-trust security for the demo application Online Boutique (previously known as Hipster Shop), an 11-microservice demo application, in an Azure Kubernetes Service environment connected to Calico Cloud. After Online Boutique is deployed, the associated microservices, including RecommendationService and ProductCatalogService, as shown below, are monitored for breakdown, timeouts, and slow performance.

The deployment looks like this:

Figure: Online Boutique microservices architecture

Zero-trust workload access control for CartService:

We will explore two scenarios for securing CartService, which carries products for checkoutservice once product selection from the Redis database has happened. CartService is powered by an external third-party service. It needs to be secure and accessible exclusively from checkout to prevent tampering with the contents of the cart.

Scenario 1: Building the security policy for CartService

Whether or not the DevOps engineer understands the layout of their microservice architecture or the associated label schema for those workloads, once the application is introduced into the cluster, the team can make use of Calico Cloud’s ‘Recommend a policy’ feature to automatically highlight flows between workloads as seen below:

Policy recommendation will aggregate the metadata of those flows to understand their full context and suggest a policy that allow-lists traffic between cartservice and checkoutservice based explicitly on the port, protocol, and the label’s key-pair value match.
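
The aggregation step described above can be sketched in a few lines of Python. This is only an illustration of the idea of collapsing observed flows into allow rules keyed on label, protocol, and port; it is not how Calico Cloud builds its recommendations. The Flow tuple, the recommend_ingress_rules function, the "app" label key, and the port number are all assumptions.

```python
from collections import namedtuple

# Hypothetical record of one observed flow between two labeled workloads.
Flow = namedtuple("Flow", "src_labels dst_labels protocol port")


def recommend_ingress_rules(flows, target, key="app"):
    """Collapse observed flows into unique allow rules for one destination workload.

    Each rule is a (source label value, protocol, port) tuple.
    """
    rules = set()
    for flow in flows:
        source = flow.src_labels.get(key)
        # Skip flows to other workloads and flows from unlabeled sources.
        if flow.dst_labels.get(key) == target and source is not None:
            rules.add((source, flow.protocol, flow.port))
    return sorted(rules)


# Example flows are invented for illustration.
observed = [
    Flow({"app": "checkoutservice"}, {"app": "cartservice"}, "TCP", 7070),
    Flow({"app": "checkoutservice"}, {"app": "cartservice"}, "TCP", 7070),
    Flow({"app": "frontend"}, {"app": "shippingservice"}, "TCP", 50051),
]
print(recommend_ingress_rules(observed, "cartservice"))
```

Repeated flows between the same pair collapse into a single rule, which is what keeps a recommended policy short enough to review in Preview or Stage before enforcing it.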

Users then assess the impact of the recommended policy using Preview and/or Stage to observe the effect on traffic without impacting actual traffic flows.

The preview option comes in handy because teams can collectively understand the impact from their respective roles, whether developer, security, DevOps, or network engineer. A DevOps engineer or developer can enforce the policy after understanding its impact on network flows. They can also download the Kubernetes CR YAML and check it into their Git repo to apply it as part of their code deployment. Even if the environment is rebuilt, policies that are part of the code are applied directly to the services.

Once the zero-trust security policy is enforced between trusted workloads of cartservice and checkoutservice, the user can also create a default-deny policy at the end of the namespace to deny unwanted lateral connections.

Scenario 2: How to implement a security policy (if a threat is detected and CartService is vulnerable)

If the CartService is vulnerable due to poor policy design, and an identified threat is able to probe that workload, DevOps can create a quarantine policy to log and deny those flows at the earliest possible stage of the policy tier board in Calico Cloud.
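
The ordering described in the two scenarios (a quarantine tier evaluated first, application allow rules next, and a default-deny at the end) can be modeled as a first-match evaluation. The sketch below is a simplified model under that assumption, not Calico's policy engine: it omits logging and any pass-to-next-tier behavior, and the Rule class, the evaluate function, the quarantine label, and the port number are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


def matches(selector, labels):
    """An empty selector matches any workload."""
    return all(labels.get(k) == v for k, v in selector.items())


@dataclass
class Rule:
    action: str                     # "allow" or "deny"
    src: dict                       # label selector for the source workload
    dst: dict                       # label selector for the destination workload
    protocol: Optional[str] = None  # None matches any protocol
    port: Optional[int] = None      # None matches any port


def evaluate(tiers, src_labels, dst_labels, protocol, port):
    """Return (action, tier name) from the first matching rule; tiers are checked in order."""
    for tier_name, rules in tiers:
        for rule in rules:
            if (matches(rule.src, src_labels)
                    and matches(rule.dst, dst_labels)
                    and rule.protocol in (None, protocol)
                    and rule.port in (None, port)):
                return rule.action, tier_name
    return "deny", "implicit"


# Tier names, labels, and the port are assumptions for illustration.
TIERS = [
    ("quarantine", [Rule("deny", {}, {"quarantine": "true"}),
                    Rule("deny", {"quarantine": "true"}, {})]),
    ("application", [Rule("allow", {"app": "checkoutservice"},
                          {"app": "cartservice"}, "TCP", 7070)]),
    ("default-deny", [Rule("deny", {}, {})]),
]

print(evaluate(TIERS, {"app": "checkoutservice"}, {"app": "cartservice"}, "TCP", 7070))
```

Placing the quarantine rules in the earliest tier means that labeling a workload as quarantined cuts off its traffic even when a later tier would otherwise allow it.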

Implement identity-aware microsegmentation for Frontend and ShippingService

Frontend talks to ShippingService, but only under organizational rules. ShippingService stores the mailing information for all customers. Frontend’s purpose is to provide customer login and to interact with other services, which change as new products become available and existing product inventory is updated. The two services have distinct security requirements because they are owned by different teams and hold different levels of confidential information. Let’s simplify this to the next figure, where frontend and backend are in different zones and communication between them is controlled.

Figure 1: Storefront microservices architecture

How to make sure that ‘frontend’ and ‘backend’ microsegmentation happen according to organizational requirements

In this scenario, DevOps can create a zone-based architecture via a security policy similar to traditional firewall solutions. The frontend workload is given a label match of `fw-zone=dmz` (Demilitarized Zone). Any workload with the DMZ label match can receive ingress traffic from the public internet and can then relay those flows to workloads in a trusted zone (for example, service-1).

The “trusted” zone is responsible for controlling flows between microservices within that zone, as well as securely allowing traffic to and from the DMZ and “restricted” zones. The team can implement zero trust by only allowing traffic between these pods explicitly, based on label match, port, and protocol. That way, if a new workload is introduced into this namespace, it would need to match all three of these criteria for its packets to be allowed.

Figure 2: Kubernetes insecure flat network design to rogue workloads

Finally, the team implements a “restricted” zone that ensures workloads handling sensitive data, such as databases or log event handlers, are only able to talk to workloads in a trusted zone. This applies to both ingress and egress traffic. Under no circumstances could a rogue workload in our cluster talk to this database, nor could the database interface with any third-party services or APIs. The only way it could talk to any external IP is via this secure zone-based architecture.
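
The three zones described above amount to a small table of permitted zone-to-zone flows. The following Python sketch encodes that table as read from the description; it is an illustration, not a Calico policy. Only the `fw-zone` label key and the `dmz` value come from the text. The values "trusted" and "restricted", the "internet" pseudo-zone, and the function names are assumptions.

```python
INTERNET = "internet"  # pseudo-zone for traffic that originates outside the cluster

# Permitted (source zone, destination zone) pairs, as read from the description.
ALLOWED_ZONE_FLOWS = {
    (INTERNET, "dmz"),          # DMZ workloads may receive public ingress
    ("dmz", "trusted"),         # DMZ relays flows to the trusted zone
    ("trusted", "dmz"),         # trusted zone may reply to or reach the DMZ
    ("trusted", "trusted"),     # flows between microservices inside the zone
    ("trusted", "restricted"),  # only trusted workloads reach sensitive data
    ("restricted", "trusted"),  # restricted workloads only talk back to trusted
}


def zone_of(labels):
    """Read the zone from the fw-zone label; unlabeled workloads have no zone."""
    return labels.get("fw-zone")


def zone_flow_allowed(src_zone, dst_zone):
    """Anything not explicitly listed is denied, including unlabeled workloads."""
    return (src_zone, dst_zone) in ALLOWED_ZONE_FLOWS


frontend = {"app": "frontend", "fw-zone": "dmz"}
database = {"app": "redis-cart", "fw-zone": "restricted"}
print(zone_flow_allowed(zone_of(frontend), zone_of(database)))
```

A rogue workload without a zone label resolves to no zone at all, so every lookup involving it fails and the flow is denied.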

Conclusion

Windows on AKS can be extended with partner solutions, just like Linux. By utilizing Calico’s recommended policies, policy board, and tiering, teams can reduce the attack surface of Windows-based containers deployed in a namespace and implement microsegmentation to prevent lateral movement of threats across workloads within that namespace, strengthening their application’s security posture.

Try it yourself here in the self-paced workshop.

Microsoft Tech Community – Latest Blogs –Read More

Inclusive and productive Windows 11 experiences for everyone

Today we begin to release new features and enhancements to Windows 11 Enterprise—features that offer a more intuitive and user-friendly experience for both workers and IT admins. Most of these new features will be enabled by default in the March 2024 optional non-security preview release for all editions of Windows 11, versions 23H2 and 22H2. IT admins who want to get the new Windows 11 features can enable optional updates for their managed devices via policy.

New in accessibility

One of the most exciting areas of enhancement involves voice access, a feature in Windows 11 that enables everyone, including people with mobility disabilities, to control their PC and author text using only their voice and without an internet connection. Voice access now supports multiple languages, including French, German, and Spanish. People can create custom voice shortcuts to quickly access frequently used commands. And, voice access now works across multiple displays with number and grid overlays that help people easily switch between screens using only voice commands.

Enhancements to Narrator, the built-in screen reader, are also coming. You’ll be able to preview natural voices before downloading them and utilize a new keyboard command that allows you to more easily move between images on a screen. Narrator’s detection of text in images, including handwriting, has been improved, and it now announces the presence of bookmarks and comments in Microsoft Word.

If you’re interested in learning about Windows 11 accessibility features, please check out the following resources:

Inside Windows 11 accessibility setting and tools

Skilling snack: Accessibility in Windows 11

Skilling snack: Voice access in Windows

Enhanced sharing

Sharing content is now easier with updates to Windows share and Nearby Share. The Windows share window now displays different apps for “Share using” based on the account you use to sign in. Nearby Share has also been improved, with faster transfer speeds for people on the same network and the ability to give your device a friendly name for easier identification when sharing.

Casting

Casting, the feature that allows you to wirelessly send content from your device to a nearby display, has been enhanced. You will receive notifications suggesting the use of Cast when multitasking, and the Cast menu in quick settings now provides more help in finding nearby displays and fixing connections.

Snap layouts

Snap layouts, the feature that helps you organize the apps on your screen, now allows you to hover over the minimize or maximize button of an app to open the layout box, and to view various layout options. This makes it easier for you to choose the best layout for the task at hand.

New Windows 365 features now available

Windows 365 now offers new features including a new, dedicated mode for Windows 365 Boot that allows you to sign in to your Cloud PC using passwordless authentication. A fast account switching experience has also been added. For Windows 365 Switch, which lets you sign in and connect to your Cloud PC using Windows 11 Task view, you’ll now find it easier to disconnect from your Cloud PC and see desktop indicators to help you easily see whether you are on your Cloud PC or local PC.

For more information, see today’s post, New Windows 365 Boot and Switch features now available.

Unified enterprise update management

We are also releasing enhancements to Windows Autopatch in direct response to your feedback. Several new and upcoming enhancements give you more control, extend the value of your investments, and help you streamline update management, including:

The ability to import Update rings for Windows 10 and later (preview)

Customer defined service outcomes (preview)

Improved data refresh speed and reporting accuracy

Looking ahead, one of the most noticeable changes in Windows Autopatch will be a simplified update management interface that will make the update ecosystem easier to understand. We are unifying our update management offering for enterprise organizations—bringing together Windows Autopatch and the Windows Update for Business deployment service into a single service that enterprise organizations can use to update and upgrade Windows devices as well as update Microsoft 365 Apps, Microsoft Teams, and Microsoft Edge.

We invite you to read our ongoing Windows Autopatch updates in the Windows IT Pro Blog to find out more about richer functionality planned for Windows Autopatch. For the latest, see What’s new in Windows Autopatch: February 2024.

Get familiar with the latest innovations, including Copilot, creator apps, and more

Today’s announcement from Yusuf Mehdi offers more details about new innovations coming to Windows 11 including availability and rollout plans. You can find a summary of all the new enhancements and features in the Windows Update configuration documentation and, as always, stay up to date on rollout plans and known issues (identified and resolved) via the Windows release health dashboard.

Continue the conversation. Find best practices. Bookmark the Windows Tech Community, then follow us @MSWindowsITPro on X/Twitter. Looking for support? Visit Windows on Microsoft Q&A.

What is AI? Jared Spataro at the Global Nonprofit Leaders Summit

Jared Spataro, Microsoft Corporate Vice President, AI at Work, presented an engaging keynote at the Global Nonprofit Leaders Summit that left everyone amazed and optimistic about the abilities and simplicity of AI for everyone.

Watch Jared’s session for a walk through that shows how Microsoft Copilot can be a powerful tool for productivity and creativity. From the fun and fantastic, to the practical and powerful, Jared queries Copilot in a real-time demo using his own workstreams in Outlook, Teams, and more:

Can elephants tow a car?

What will the workplace of the future look like?

Can you write a Python script to extract insights from this data?

Can you summarize and prioritize the latest emails from my boss?

Jared shares important tips for prompt engineering, previews the new “Sounds like me” feature to co-create responses in your own voice, and talks about the value of AI being “usefully wrong.”

And he reminds us to say please and thank you.

What did you learn from Jared’s session? How are you using Copilot to enhance creativity and productivity?

What’s New in Copilot for Microsoft 365

Welcome to the first edition of What’s new in Copilot for Microsoft 365. We are continuing to enhance Copilot to provide deeper experiences for users and tighter integration with your organization’s data to unlock even more capabilities. Whether you’re a Microsoft 365 admin for a large enterprise or smaller company or someone who uses Copilot for Microsoft 365 for their daily work, every month we’ll highlight updates to let you know about new and upcoming features and where you can find more information to help make your Copilot experience a great one. In addition to these monthly posts, we’ll continue to provide updates through our usual message center posts and on our public roadmap.

Today, we are highlighting Copilot support in 17 additional languages, expanded resources and coming features in Copilot Lab, the updated Copilot experience in Teams, Copilot in the Microsoft 365 mobile app, and a new feature that provides a single entry point to help you create content from scratch. We’ll also take a look at updates to Copilot in OneDrive, Stream, and Forms plus a new feature that generates content summaries when you share files with coworkers. Finally, we’ll share a bit on what’s new in the Copilot for Microsoft 365 Usage report for admins. Let’s take a closer look at what’s new this month:

Experience Copilot support for more languages

Begin your Copilot journey and build new skills with Copilot Lab

Copilot now available in the Microsoft 365 mobile app

Introducing Copilot in Forms

Extract information quickly from your files with Copilot in OneDrive

Include quick summaries when sharing documents

Get instant video summaries and insights with Copilot in Stream

Try new ways of working with Help me create

Draft emails quicker and get coaching tips for your messages with Copilot in classic Outlook for Windows

Experience the new Copilot in Microsoft Teams

Check out the improved usage reports for Microsoft Copilot in the admin center

Catch up on the Copilot for Microsoft 365 Tech Accelerator

Experience Copilot support for more languages

We are adding support for an additional 17 languages, further expanding access to Copilot worldwide. We will start rolling out Arabic, Chinese Traditional, Czech, Danish, Dutch, Finnish, Hebrew, Hungarian, Korean, Norwegian, Polish, Portuguese (Portugal), Russian, Swedish, Thai, Turkish and Ukrainian over March and April. Copilot is already supported in the following languages: English (US, GB, AU, CA, IN), Spanish (ES, MX), Japanese, French (FR, CA), German, Portuguese (BR), Italian, and Chinese Simplified. Check the public roadmap and message center to track roll out status.

Copilot in Excel (preview) is currently supported in English (US, GB, AU, CA, IN) and will be supported in Spanish (ES, MX), Japanese, French (FR, CA), German, Portuguese (BR), Italian, and Chinese Simplified starting in March.

Begin your Copilot journey and build new skills with Copilot Lab

Copilot Lab helps users get started with the art of prompting and helps organizations with onboarding and adoption by providing a single experience that meets Copilot users where they are in their journey. Today, we’re expanding Copilot Lab by transforming the current prompts library into a comprehensive learning resource that helps everyone begin their Copilot journey with confidence and to take greater advantage of Copilot in their daily work.

Start your Copilot journey with ease. We’ve learned from our earliest Copilot adopters that working with generative AI requires new skills and habits. Copilot Lab already shows up in Copilot for Microsoft 365, Word, PowerPoint, Excel, and OneNote via the small notebook icon that suggests relevant prompts to inspire you. Now, we have consolidated our best resources, training videos, ready-made prompts, and inspiration to make Copilot Lab the single resource to help you get started. To do this, we’ve brought together our own internal best practices, insights from our earliest customers, findings from the Microsoft Research team, and thought leadership published on WorkLab.

Achieve more together by sharing your favorite prompts. With Copilot Lab, we are making it even easier to create, save, and share your favorite prompts with colleagues inside your organization. Now you can share prompts with colleagues to prepare for a customer meeting or to generate ideas for a new product launch. And leaders across your organization can showcase how they’re using Copilot by sharing their favorite prompts to save time or tackle any task at hand, to help improve personal and team productivity and encourage community-centric learning and adoption. This feature is integrated into the Copilot Lab website and in-app experiences will begin rolling out by this summer.

You can access Copilot Lab today at copilot.cloud.microsoft/prompts or directly in app by selecting the notebook icon next to the Copilot prompt window.

Copilot now available in the Microsoft 365 mobile app

We’re extending Copilot to the Microsoft 365 mobile app and to the Word and PowerPoint mobile apps. With the new Microsoft 365 app look and feel, you can easily find Copilot alongside your content, apps, and shortcuts. You can use it to:

Bring your content into Copilot to complete tasks on the go. Summarize documents, translate, explain, or ask questions, and have your answer grounded in the content you select.

Start generating content wherever you work based on your ideas and existing information, and hand over to Microsoft 365 mobile apps to continue working.

Interact with Copilot in Word mobile and PowerPoint mobile to comprehend content better and skim through only the most important slides on the go (requires a Copilot license).

The Microsoft 365 mobile app complements the Copilot mobile app rolled out earlier this month, and licensed users can continue to use the Copilot mobile app to have responses grounded in web or work data. IT admins can easily deploy both the Microsoft 365 mobile app and the Copilot mobile app to corporate devices using Microsoft Intune or a third-party tool, or users can simply download the Microsoft 365 mobile app on any supported device and sign in.

Copilot integration in the Microsoft 365 mobile app and the Word and PowerPoint mobile apps is rolling out now. You can learn more here.

The iOS layout of the Microsoft 365 mobile app, showing Copilot available on the taskbar.

Create compelling surveys, polls, and forms with Copilot in Forms

Use Copilot to simplify the process of creating surveys, polls, and forms, saving you time and effort. Go to forms.microsoft.com, select New, and tell Copilot your topic, length, and any additional context. Copilot will provide relevant questions and suggestions, and then you can refine the draft by adding extra details, editing text, or removing content. Once you’ve created a solid draft with Copilot, you can then customize the background with one of the many Forms style options. With Copilot in Forms, you’ll effortlessly create well-crafted forms that capture your audience’s attention, leading to better response rates.

Copilot in Forms will be available in March. You can learn more here.

An image of a form draft with Copilot prompts displayed

Extract information quickly from your files with Copilot in OneDrive

Copilot in OneDrive gives you instant access to information contained deep within your files. Initially available from the OneDrive web experience, Copilot will provide you with smart and intuitive ways to interact with your documents, presentations, spreadsheets, and files. You can use Copilot in OneDrive to:

Get information from your files: Ask questions about your content using natural language, and Copilot will fetch the information from your files, saving you the work and time of manually searching for what you need.

Generate file summaries: Need a quick overview of a file? Copilot can summarize the contents of one or multiple files, offering you quick insights without having to even open the file.

Find files using natural language: Find files in new ways by using Copilot prompts such as “Show me all the files shared with me in the past week” or “Show files that Kat Larson has commented in.”

Copilot in OneDrive will be available in late April on OneDrive for Web. You can learn more here.

Video showing Copilot in OneDrive with a prompt to extract information from a collection of resumes.

Include quick summaries when sharing documents

Add Copilot-generated summaries when you share documents with your colleagues. These summaries, included in the document sharing notification, give your recipients immediate context around a document and a quick overview of its content without needing to open the file. Sharing summaries helps users prioritize work, increases engagement, and reduces cognitive burden.

Sharing summaries will be available in March 2024, starting when sharing a Word document from the web, with support in the desktop client and the mobile app later this year. Learn more here.

GIF showing AI-generated sharing summary when sharing a Microsoft Word doc.

Get instant video summaries and insights with Copilot in Stream

By using Copilot in Microsoft Stream, you can quickly get the information you need about videos in your organization, whether you’re viewing the latest Teams meeting recording, town hall, product demo, how-to, or onsite videos from frontline workers. Copilot helps you get what you need from your videos in seconds. You can use it to:

Summarize any video and identify relevant points you need to watch

Ask questions to get insights from long or detailed videos

Locate when people, teams, or topics are discussed so you can jump to that point in the video

Identify calls to action and where you can get involved to help

Copilot in Stream will be available in late April. You can learn more here.

Screenshot showing Copilot in Microsoft Stream. Copilot in Stream can quickly summarize a video or answer your questions about the content in the video.

Try new ways of working with Help me create

In March, we’re rolling out a new Copilot capability in the Microsoft 365 web app that helps you focus on the substance of your content while Copilot suggests the best format: a white paper, a presentation, a list, an icebreaker quiz, and so on. In the Microsoft 365 app at microsoft365.com, simply tell Help me create what you want to work on and it will suggest the best app for you and give you a boost with generative AI suggestions. Learn more here.

Help me create dialog box in the foreground, with the Microsoft 365 web app create screen in the background.

Draft emails quicker and get coaching tips for your messages with Copilot in classic Outlook for Windows

Customers of the new Outlook for Windows have been enjoying Copilot features like draft, coaching, and summary which we announced last year. Since November last year, summary by Copilot has also been available in classic Outlook for Windows. Soon, draft and coaching will be coming to classic Outlook too.

Draft with Copilot helps you reduce time spent on email by drafting new emails or responses for you with just a short prompt that explains what you want to communicate. Because you are always in control with Copilot, you can choose to adjust the proposed draft in length and tone or ask Copilot to generate a new message – and you can always go back to the previous options if you prefer.

Coaching by Copilot can help you get your point across in the best possible way, coaching you on tone (for example, too aggressive, too formal, and so on), reader sentiment (how a reader might perceive your message), and clarity. Copilot can provide coaching for drafts it created or drafts you wrote yourself.

Coaching will start rolling out in early March and draft by Copilot will start rolling out in late March.

An image of a message composed in the classic Outlook for Windows with the Copilot icon being clicked to reveal options for draft and coaching.

Experience the new Copilot in Microsoft Teams

We have recently enabled a new Copilot experience in Microsoft Teams that offers better prompts, easier access, and more functionality than the previous version. Copilot in Teams will be automatically pinned above your chats, and you can use it to catch up, create, and ask anything related to Microsoft 365. Learn more about the new Copilot experience in Teams here.

An image of the Copilot experience in Microsoft Teams, responding to a question based on the user’s Graph data

Check out the improved usage reports for Microsoft Copilot in the admin center

The Microsoft 365 admin center Usage reports offer a growing set of usage insights across your Microsoft 365 cloud services. Among these reports, the Copilot for Microsoft 365 Usage report (Preview) is built to help Microsoft 365 admins plan for rollout, inform adoption strategy, and make license allocation decisions.

The report now includes usage metrics for Microsoft Copilot with Graph-grounded chat. This allows you to see how Chat compares with usage of Copilot in other apps like Teams, Outlook, Word, PowerPoint, Excel, OneNote and Loop. You can review the enabled and active user time series chart to assess how usage is trending over time. The new metric has been added retroactively dating back to late November of 2023. To access the report, navigate to Reports > Usage and select the Copilot for Microsoft 365 product report. Learn more here.

An image of the Copilot for Microsoft 365 Usage report highlighting the addition of a new metric for Microsoft Copilot with Graph-grounded chat

Learn more about the use of Copilot for Microsoft 365 in the Financial Services Industry

Today we are releasing the new white paper for the financial services industry (FSI) with information about use cases and benefits for the FSI, information about risks and regulations, guidance for managing and governing a generative AI solution, and more information about how to prepare for Copilot. Read the paper here.

Catch up on the Copilot for Microsoft 365 Tech Accelerator

In case you missed it, you can catch up on all the sessions from the Copilot for Microsoft 365 Tech Accelerator via recordings on the event page. The event covered a range of topics including how Copilot works, how to prepare your organization for Copilot, strategies for deploying, driving adoption, and measuring impact, and deep dives on how to extend Copilot with Copilot Studio and Graph connectors. Chat Q&A is open through Friday, March 1, 12:00 P.M. PT, so watch the recordings and get any questions you might have answered.

Did you know? The Microsoft 365 Roadmap is where you can get the latest updates on productivity apps and intelligent cloud services. Check out what features are in development or coming soon on the Microsoft 365 Roadmap. All future rollout dates assume the feature availability on the Current Channel. Customers should expect these features to be available on the Monthly Enterprise Channel the second Tuesday of the upcoming month.

Azure DDoS Protection – SecOps Deep Dive

This blog is written in collaboration with @SaleemBseeu

Introduction:

Azure DDoS Protection is a security solution offered by Microsoft Azure to protect applications and resources from Distributed Denial of Service (DDoS) attacks. DDoS attacks attempt to overwhelm a target application or service by flooding it with a massive volume of malicious traffic, rendering it unavailable to legitimate users. Azure DDoS Protection addresses these concerns by providing advanced mitigation capabilities and ensuring the availability of resources.

Some of the key features of Azure DDoS Protection include:

Adaptive Tuning – Adaptive tuning sets up protection policies tuned to your application’s traffic profile. It automatically learns a baseline that represents your application’s traffic in peacetime and sets the mitigation thresholds accordingly. The profile adjusts as traffic changes over time.

Attack Analytics and Metrics – Attack analytics provides detailed reports in five-minute increments during an attack, and a complete summary after the attack ends. We can stream the mitigation flow logs to Microsoft Sentinel or an offline security information and event management (SIEM) system for near real-time monitoring during an attack. In addition, alerts can be configured for the start and stop of an attack, and over the attack’s duration, using built-in attack metrics.

DDoS Rapid Response – During an active attack, Azure DDoS Protection customers have access to the DDoS Rapid Response (DRR) team, who can help with attack investigation during an attack and post-attack analysis.

Cost Guarantee – Receive data-transfer and application scale-out service credit for resource costs incurred as a result of documented DDoS attacks.

SecOps Deep Dive Investigation:

In this blog we will be focusing on how to investigate a DDoS Attack using the logs/metrics and newly built KQL queries.

Prerequisites:

Before we can investigate a DDoS attack, the following prerequisites must be in place. More information on configuring the prerequisites can be found here.

Create a DDoS Protection Plan.

Associate the DDoS Protection Plan to an existing Virtual Network.

Set Up Diagnostic Logging for the respective Public IP resource.

Simulate a DDoS Attack using one of our Simulation Partners. For more information on attack simulation, refer to this documentation.

Once the attack simulation is completed, we can look into the following metrics, available under a public IP resource, to better understand the attack patterns.

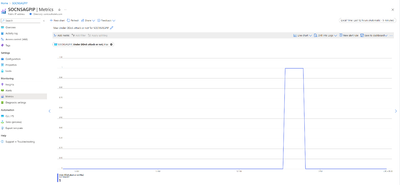

Under DDoS Attack or Not: This metric shows whether the public IP resource is under a DDoS attack. It can also be used to set up an alert that notifies about DDoS attacks via email and other available options. As we can see here, the metric changed from 0 to 1 for the duration of the attack and remained 0 the rest of the time.

Inbound SYN Packets to trigger DDoS Mitigation: This metric shows the threshold of inbound SYN packets that triggers DDoS mitigation. The threshold is unique to each public IP resource and depends on the average application traffic and the adaptive tuning functionality of Azure DDoS Protection. In this case the threshold is 10k SYN packets per second.

Inbound TCP Packets DDoS: This metric shows the number of TCP packets that came in during a DDoS attack. In this case, 49.30k packets per second.

Inbound TCP Packets Dropped DDoS: This metric shows how many of the incoming TCP packets were dropped. In this case, almost all of the 49.30k packets per second were dropped.

Inbound TCP Packets Forwarded DDoS: This metric shows the number of TCP packets forwarded to the service or application. In this case only about 19 packets were forwarded and all the rest were dropped.

In this way we can utilize the Public IP resource metrics to get deeper insights into the DDoS attacks.
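To put the numbers above in proportion, here is a small Python sketch that works out the dropped and forwarded shares from the figures read off the charts. It assumes that the forwarded count (about 19) is in the same unit as the inbound rate (packets per second); the function name `mitigation_summary` is made up for this illustration and is not part of any Azure SDK.

```python
# Values read off the metrics charts in this walkthrough. Treating both as
# packets per second is an assumption made for this sketch.
inbound_tcp_pps = 49_300   # "Inbound TCP Packets DDoS" (49.30k)
forwarded_tcp_pps = 19     # "Inbound TCP Packets Forwarded DDoS" (about 19)


def mitigation_summary(inbound: float, forwarded: float) -> dict:
    """Return the dropped volume and the dropped/forwarded shares of inbound traffic."""
    if inbound <= 0:
        raise ValueError("inbound must be positive")
    dropped = inbound - forwarded
    return {
        "dropped": dropped,
        "dropped_share": dropped / inbound,
        "forwarded_share": forwarded / inbound,
    }


summary = mitigation_summary(inbound_tcp_pps, forwarded_tcp_pps)
print(f"dropped: {summary['dropped']:.0f} pps ({summary['dropped_share']:.2%})")
print(f"forwarded: {summary['forwarded_share']:.3%} of inbound")
```

Under that assumption, roughly 99.96% of the inbound TCP traffic was dropped by mitigation, which matches the "almost all" reading of the dropped-packets chart.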

Queries for DDoS Mitigation Trends:

To make the attack patterns easier to understand, our team has developed two new KQL queries that give detailed information on total packet trends and attack vectors, as shown below. These queries are available in our Net Sec GitHub repository.

To get the trend of the total and dropped packets for each public IP address:

To get information on DDoS attack mitigation duration and attack vectors:

As demonstrated earlier, the two queries yield essential insights into recent attacks occurring within a specified time frame. For a deeper analysis of these attacks, we can explore the following three log categories:

AzureDiagnostics | where Category == "DDoSProtectionNotifications": This log category furnishes details about the initiation and cessation of DDoS mitigation. These logs serve as a basis for configuring alerts to notify the Security Operations Center (SOC) Analyst as necessary.

AzureDiagnostics | where Category == "DDoSMitigationReports": Within this category, you’ll find comprehensive post-mitigation reports and incremental updates, generated every 5 minutes during an ongoing DDoS attack. These reports encompass critical information such as packet counts, attack types, protocols, and details about the attacker’s source. To get summarized information on this, we can also use the queries from GitHub.

AzureDiagnostics | where Category == "DDoSMitigationFlowLogs": This log category provides a granular view of each packet encountered during an attack. It includes crucial data such as packet forwarding or dropping status, along with specifics like source IP, destination IP, port, and protocol.
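As a minimal sketch of how the three category filters above could be generated consistently (for example, when pasting them into Log Analytics or a script), the Python helper below builds the KQL text for one category. The category names come from this post. The helper name `build_category_query`, the `lookback` parameter, and the added `TimeGenerated > ago(...)` time filter are assumptions for illustration; they are not the queries from the GitHub repository.

```python
import re

# Log categories named in this post.
DDOS_LOG_CATEGORIES = (
    "DDoSProtectionNotifications",
    "DDoSMitigationReports",
    "DDoSMitigationFlowLogs",
)


def build_category_query(category: str, lookback: str = "1d") -> str:
    """Build a KQL query string for one DDoS log category (hypothetical helper)."""
    if category not in DDOS_LOG_CATEGORIES:
        raise ValueError(f"unknown DDoS log category: {category}")
    # Only accept simple timespans such as 30m, 12h or 7d.
    if not re.fullmatch(r"\d+[dhm]", lookback):
        raise ValueError(f"unsupported lookback: {lookback}")
    return (
        "AzureDiagnostics\n"
        f'| where Category == "{category}"\n'
        f"| where TimeGenerated > ago({lookback})"
    )


for name in DDOS_LOG_CATEGORIES:
    print(build_category_query(name, lookback="1d"))
    print()
```

Note that the generated text uses straight double quotes around the category name, which is what KQL string literals require.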

In this case, our application is situated behind an Azure Application Gateway WAF. Once we have conducted a comprehensive log analysis, we can proceed to evaluate the health of our application using the metrics furnished by the Application Gateway. These metrics offer detailed insights during the attack time, including but not limited to the metrics listed below:

Failed Requests – Count of Failed Requests that the App Gateway has served.

Throughput – Number of Bytes per second the App Gateway has served.

Backend First Byte Response Time – Approximates the processing time of the backend server.

In addition to the details provided earlier, we have a predefined workbook that provides comprehensive insights into historical Distributed Denial of Service (DDoS) attacks targeting specific sets of public IP resources. This consolidated dashboard outlines critical attack information, including recent incidents, protocols involved, drop reasons, and countries of origin.

Conclusion:

Azure DDoS Protection provides a powerful shield for your infrastructure, helping you defend against DDoS attacks. By investigating the telemetry and using the provided metrics and logs, you can gain a deeper understanding of the nature of DDoS attacks and take appropriate action to protect your resources.


What is AI? Jared Spataro at the Global Nonprofit Leaders Summit

Jared Spataro, Microsoft Corporate Vice President, AI at Work, presented an engaging keynote at the Global Nonprofit Leaders Summit that left everyone amazed and optimistic about the abilities and simplicity of AI for everyone.

Watch Jared’s session for a walkthrough that shows how Microsoft Copilot can be a powerful tool for productivity and creativity. From the fun and fantastic to the practical and powerful, Jared queries Copilot in a real-time demo using his own workstreams in Outlook, Teams, and more:

Can elephants tow a car?

What will the workplace of the future look like?

Can you write a Python script to extract insights from this data?

Can you summarize and prioritize the latest emails from my boss?

Jared shares important tips for prompt engineering, previews the new “Sounds like me” feature to co-create responses in your own voice, and talks about the value of AI being “usefully wrong.”

And he reminds us to say please and thank you.

What did you learn from Jared’s session? How are you using Copilot to enhance creativity and productivity?


Updates from 162.1 and 162.2 releases of SqlPackage and the DacFx ecosystem

Within the past 4 months, we’ve had 2 minor releases and a patch release for SqlPackage. In this article, we’ll recap the features and notable changes from SqlPackage 162.1 (October 2023) and 162.2 (February 2024). Several new features focus on giving you more control over the performance of deployments by preventing potentially costly operations and opting in to online operations. We’ve also introduced an alternative option for data portability that can provide significant speed improvements to databases in Azure. Read on for information about these improvements and more, all from the recent releases in the DacFx ecosystem. Information on features and fixes is available in the itemized release notes for SqlPackage.

.NET 8 support

The 162.2 release of DacFx and SqlPackage introduces support for .NET 8. SqlPackage installation as a dotnet tool is available with the .NET 6 and .NET 8 SDK. Install or update easily with a single command if the .NET SDK is installed:

# install

dotnet tool install -g microsoft.sqlpackage

# update

dotnet tool update -g microsoft.sqlpackage

Online index operations

Starting with SqlPackage 162.2, online index operations are supported during publish on applicable environments (including Azure SQL Database, Azure SQL Managed Instance, and SQL Server Enterprise edition). Online index operations can reduce the application performance impact of a deployment by supporting concurrent access to the underlying data. For more guidance on online index operations and to determine if your environment supports them, check out the SQL documentation on guidelines for online index operations.

Directing index operations to be performed online across a deployment can be achieved with a command line property new to SqlPackage 162.2, “PerformIndexOperationsOnline”. The property defaults to false, where just as in previous versions of SqlPackage, index operations are performed with the index temporarily offline. If set to true, the index operations in the deployment will be performed online. When the option is requested on a database where online index operations don’t apply, SqlPackage will emit a warning and continue the deployment.

An example of this property in use to deploy index changes online is:

sqlpackage /Action:Publish /SourceFile:yourdatabase.dacpac /TargetConnectionString:"yourconnectionstring" /p:PerformIndexOperationsOnline=True

More granular control over the index operations can be achieved by including the ONLINE=ON/OFF keyword in index definitions in your SQL project. The online property will be included in the database model (.dacpac file) from the SQL project build. Deployment of that object with SqlPackage 162.2 and above will follow the keyword used in the definition, superseding any options supplied to the publish command. This applies to both ONLINE=ON and ONLINE=OFF settings.
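The precedence described above can be summarized in a few lines of Python. This is only a model of the rule as stated in this article, not SqlPackage’s implementation: the function name and parameters are hypothetical, and it ignores the case where the target database doesn’t support online index operations (where SqlPackage emits a warning and continues).

```python
from typing import Optional


def index_built_online(
    definition_online: Optional[bool],
    perform_index_operations_online: bool = False,
) -> bool:
    """Model which setting wins for a single index during publish.

    definition_online: True for ONLINE=ON in the index definition, False for
        ONLINE=OFF, None when the definition has no ONLINE keyword.
    perform_index_operations_online: value of the publish property
        /p:PerformIndexOperationsOnline (defaults to false).
    """
    if definition_online is not None:
        # The keyword in the definition supersedes the publish property.
        return definition_online
    return perform_index_operations_online


# ONLINE=OFF in the definition wins even when the publish property is true.
print(index_built_online(False, perform_index_operations_online=True))
# No keyword in the definition: the publish property decides.
print(index_built_online(None, perform_index_operations_online=True))
```

In other words, the publish property acts as the deployment-wide default, and an ONLINE keyword on an individual index is an explicit override in either direction.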

DacFx 162.2 is required for SQL project inclusion of ONLINE keywords with indexes and is included with the Microsoft.Build.Sql SQL projects SDK version 0.1.15-preview. For use with non-SDK SQL projects, DacFx 162.2 will be included in future releases of SQL projects in Azure Data Studio, VS Code, and Visual Studio. The updated SDK or SQL projects extension is required to incorporate the index property into the dacpac file. Only SqlPackage 162.2 is required to leverage the publish property “PerformIndexOperationsOnline”.

Block table recreation

With SqlPackage publish operations, you can apply a new desired schema state to an existing database. You define what object definitions you want in the database and pass a dacpac file to SqlPackage, which in turn calculates the operations necessary to update the target database to match those objects. The set of operations is known as a “deployment plan”.

A deployment plan will not destroy user data in the database in the process of altering objects, but it can have computationally intensive steps or unintended consequences when features like change tracking are in use. In SqlPackage 162.1.167, we’ve introduced an optional property, /p:AllowTableRecreation, which allows you to stop any deployments from being carried out that have a table recreation step in the deployment plan.

/p:AllowTableRecreation=true (default): SqlPackage will recreate tables when necessary and use data migration steps to preserve your user data.

/p:AllowTableRecreation=false: SqlPackage will check the deployment plan for table recreation steps and stop before starting the plan if a table recreation step is included.
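When publish runs from an automated pipeline, it can help to assemble the command in code so that properties like this one are set explicitly. The following Python sketch builds the argument list for a publish with table recreation blocked. The helper `build_publish_command` is hypothetical; the file name and connection string are the same placeholders used earlier in this article; only the `/Action`, `/SourceFile`, `/TargetConnectionString`, and `/p:` arguments shown in this article are used.

```python
import shlex
from typing import Dict, List, Optional, Union


def build_publish_command(
    source_file: str,
    connection_string: str,
    properties: Optional[Dict[str, Union[bool, str]]] = None,
) -> List[str]:
    """Assemble a sqlpackage publish command as an argument list (hypothetical helper)."""
    args = [
        "sqlpackage",
        "/Action:Publish",
        f"/SourceFile:{source_file}",
        f"/TargetConnectionString:{connection_string}",
    ]
    for name, value in (properties or {}).items():
        # Render booleans in lowercase, matching the property values listed above.
        rendered = str(value).lower() if isinstance(value, bool) else str(value)
        args.append(f"/p:{name}={rendered}")
    return args


cmd = build_publish_command(
    "yourdatabase.dacpac",
    "yourconnectionstring",
    {"AllowTableRecreation": False},
)
print(shlex.join(cmd))
```

Passing the arguments as a list (for example to `subprocess.run`) avoids shell quoting problems with connection strings that contain semicolons or spaces.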

SqlPackage + Parquet files (preview)

Database portability, the ability to take a SQL database from a server and move it to a different server even across SQL Server and Azure SQL hosting options, is most often achieved through import and export of bacpac files. Reading and writing the singular bacpac files can be difficult when databases are over 100 GB and network latency can be a significant concern. SqlPackage 162.1 introduced the option to move the data in your database with parquet files in Azure Blob Storage, reducing the operation overhead on the network and local storage components of your architecture.

Data movement in parquet files is available through the extract and publish actions in SqlPackage. With extract, the database schema (.dacpac file) is written to the local client running SqlPackage and the data is written to Azure Blob Storage in Parquet format. With publish, the database schema (.dacpac file) is read from the local client running SqlPackage and the data is read from or written to Azure Blob Storage in Parquet format.