Category: Microsoft

What's new in security for Azure SQL and SQL Server | Data Exposed

Check out this episode to learn the newest information on security for Azure SQL and SQL Server!

View/share our latest episodes on Microsoft Learn and YouTube!

Microsoft Tech Community – Latest Blogs –Read More

Performance diagnostics for Azure Database for MySQL – Flexible Server

Database monitoring and troubleshooting are critical for any data-driven application, because they let you detect issues sooner and mitigate them as quickly as possible. Identifying and addressing MySQL database performance issues is often the most difficult part of troubleshooting. To help database administrators and developers identify performance bottlenecks, the Azure portal now includes a Performance diagnostics feature designed to help manage Azure Database for MySQL servers. This feature takes advantage of MySQL’s information_schema and performance_schema databases to provide a comprehensive view of the system’s internal operations. The data from the performance schema helps you diagnose performance issues and identify bottlenecks.

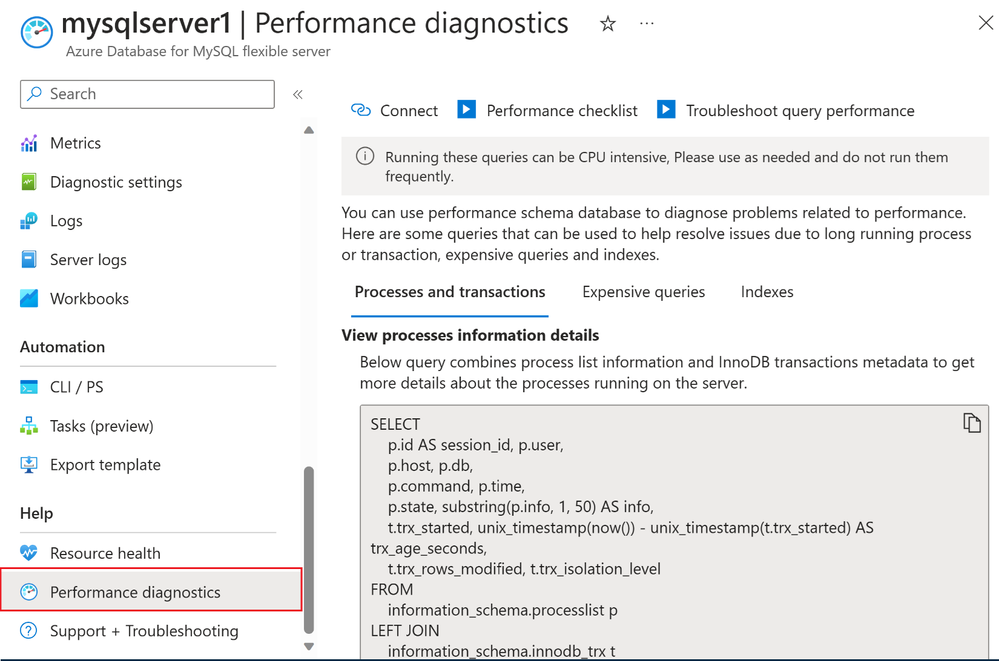

To access the functionality, in the Azure portal, navigate to an Azure Database for MySQL flexible server, and then, under Help, select Performance diagnostics:

View performance diagnostics in left navigation menu under Help.

Identifying performance issues

The performance diagnostics feature includes three tabs: Processes and transactions, Expensive queries, and Indexes. Additional information about these pages is provided in the following sections.

The Processes and transactions tab

This tab shows you queries that can help you identify long running processes and transactions. You can get this information from the following two tables:

INFORMATION_SCHEMA.PROCESSLIST table: You can get the server’s process list from this table.

INFORMATION_SCHEMA.INNODB_TRX: You can get InnoDB’s transaction metadata from this table.

An accurate description of the connection and transaction state requires information from both sources. The process list doesn’t indicate whether there’s an open transaction associated with any of the sessions, while the transaction metadata doesn’t show session state or the time spent in that state.
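As a minimal sketch of why both sources are needed, the Python below joins process-list rows to InnoDB transaction rows on the session ID. The column names (ID, STATE, TIME, trx_mysql_thread_id, trx_id) come from the two MySQL tables; the query text, the helper name `combine_sessions`, and the sample rows are illustrative assumptions, and the portal's own queries may differ.

```python
# Hypothetical query text joining the two tables named above.
# The query shown in the portal may be written differently.
LONG_RUNNING_SQL = """
SELECT p.ID, p.USER, p.COMMAND, p.TIME, p.STATE,
       t.trx_id, t.trx_state, t.trx_started
FROM INFORMATION_SCHEMA.PROCESSLIST AS p
LEFT JOIN INFORMATION_SCHEMA.INNODB_TRX AS t
       ON t.trx_mysql_thread_id = p.ID
ORDER BY p.TIME DESC
"""


def combine_sessions(processes, transactions):
    """Attach the open transaction (if any) to each session, longest-waiting first."""
    trx_by_thread = {t["trx_mysql_thread_id"]: t for t in transactions}
    combined = []
    for p in processes:
        trx = trx_by_thread.get(p["ID"])
        combined.append({
            "session_id": p["ID"],
            "state": p["STATE"],
            "seconds_in_state": p["TIME"],
            "open_trx_id": trx["trx_id"] if trx else None,
        })
    return sorted(combined, key=lambda r: r["seconds_in_state"], reverse=True)


# Made-up sample rows, shaped like results from the two tables.
sample_processes = [
    {"ID": 11, "STATE": "executing", "TIME": 3},
    {"ID": 12, "STATE": "", "TIME": 540},
]
sample_transactions = [
    {"trx_mysql_thread_id": 12, "trx_id": "4211", "trx_state": "RUNNING"},
]

report = combine_sessions(sample_processes, sample_transactions)
for row in report:
    print(row)
```

In this sample, session 12 looks idle in the process list but still holds an open transaction, which neither table reveals on its own.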

Identify long running processes and open transactions

To mitigate or debug further, consider the following recommendations:

Avoid large or long-running transactions by breaking them into smaller transactions.

Create an alert for “Host CPU Percent” so that you get notifications if the system exceeds any of the specified thresholds.

Use Query Performance Insights to identify and then optimize any problematic or slowly running queries.

The Expensive queries tab

This tab shows the expensive queries that are running on your MySQL flexible server instance. Because your application may execute a large number of queries on the server, you can create a digest so that you see the most relevant information for the expensive queries with the highest execution time.

View Expensive queries for MySQL flexible server

To mitigate or debug further, consider the following recommendations:

Use covering indexes to speed up your queries. A covering index is a regular index that provides all the data required for a query, so MySQL doesn’t need a second trip to the actual table to get the rest of the data.

You can use an EXPLAIN statement to analyze your queries and optimize them.

Learn how to troubleshoot query performance issues.
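The covering-index and EXPLAIN recommendations above can be tried out with a small, self-contained sketch. It uses Python's built-in sqlite3 module rather than MySQL, purely so that it runs without a server; the table, index, and helper names are hypothetical. SQLite reports a covering index in its EXPLAIN QUERY PLAN output, whereas MySQL's EXPLAIN indicates the same situation with "Using index" in the Extra column.

```python
import sqlite3

# In-memory SQLite database standing in for a MySQL server (an assumption
# made only so the example is runnable anywhere).
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders ("
    "id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL, note TEXT)"
)
conn.executemany(
    "INSERT INTO orders (customer_id, total, note) VALUES (?, ?, ?)",
    [(i % 10, i * 1.5, "n") for i in range(100)],
)
# The index holds customer_id and total, so queries that touch only those
# two columns can be answered from the index alone.
conn.execute("CREATE INDEX idx_customer_total ON orders (customer_id, total)")


def query_plan(sql):
    """Return the plan detail text that SQLite reports for a statement."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql).fetchall()
    return " | ".join(row[-1] for row in rows)


covered_plan = query_plan(
    "SELECT customer_id, total FROM orders WHERE customer_id = 3"
)
uncovered_plan = query_plan(
    "SELECT customer_id, note FROM orders WHERE customer_id = 3"
)
print(covered_plan)
print(uncovered_plan)
```

The second query also selects `note`, which is not in the index, so the engine has to visit the table for every matching row.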

The Indexes tab

This tab shows queries that can help you identify unused indexes. When unused indexes are present, you may see symptoms of high I/O usage in your server metrics. Unused indexes consume disk space and cache, and they slow down write operations (INSERT / DELETE / UPDATE), because the indexes must also be updated whenever table data is modified.

View indexes used for your queries

Remove unused indexes after verifying that the index is not being used. Be sure to avoid inadvertently removing an index that might be critical for a query that runs only quarterly or annually. Also, be sure to consider that some indexes are used to enforce uniqueness or ordering.
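The verification step above can be sketched as a small filter over index usage counters. This is an illustration under stated assumptions, not the portal's logic: the row shape is hypothetical, with `count_star` modeled on the COUNT_STAR column of performance_schema.table_io_waits_summary_by_index_usage and `unique` standing in for uniqueness information you would look up separately.

```python
def unused_index_candidates(usage_rows, protected=("PRIMARY",)):
    """Return (table, index) pairs that have no recorded use and are safe to review.

    Primary keys and unique indexes are skipped because they enforce
    constraints even when no query reads through them.
    """
    candidates = []
    for row in usage_rows:
        name = row["index_name"]
        if name is None or name in protected:
            continue  # rows without an index name describe table scans
        if row["unique"]:
            continue  # uniqueness is enforced through this index
        if row["count_star"] == 0:
            candidates.append((row["table"], name))
    return candidates


# Made-up sample rows for illustration only.
sample_usage = [
    {"table": "orders", "index_name": "PRIMARY", "count_star": 0, "unique": True},
    {"table": "orders", "index_name": "idx_customer", "count_star": 1520, "unique": False},
    {"table": "orders", "index_name": "idx_note", "count_star": 0, "unique": False},
    {"table": "orders", "index_name": "uq_order_ref", "count_star": 0, "unique": True},
    {"table": "orders", "index_name": None, "count_star": 87, "unique": False},
]

candidates = unused_index_candidates(sample_usage)
print(candidates)
```

Performance schema counters are reset when the server restarts, so a zero count only means the index was unused since then; treat the result as a list to review, especially for quarterly or annual workloads.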

Running queries in performance diagnostics

Based on your networking configuration, you can run the queries using Azure Cloud Shell or any MySQL client tool.

For a MySQL flexible server with public access, select Connect to run the query in the portal using Azure Cloud Shell. You can also run the queries in Azure Data Studio, MySQL Workbench, or the MySQL command-line tool.

Connect with Azure Cloud Shell to run performance diagnostic queries

For MySQL flexible servers with private access (VNet integration), Azure Cloud Shell is not supported, so you need to copy these queries and run them using a MySQL command-line tool. Remember to select performance_schema as your database before running the query.

Run your queries in Azure Data Studio

Conclusion

Using these queries, MySQL developers and DBAs can gather information to help pinpoint performance bottlenecks, optimize queries, and enhance the overall efficiency of their database applications. Regularly analyzing and interpreting the data from the performance schema contributes to proactive performance management and ensures that your MySQL database continues to function optimally.

If you have any suggestions for improving the performance diagnostics experience for MySQL flexible servers, please leave a comment below or email us at AskAzureDBforMySQL@service.microsoft.com.

To learn more about what’s new with Flexible Server, see What’s new in Azure Database for MySQL – Flexible Server. Also be sure to tune in for all of our updates and announcements by following us on social media: YouTube | LinkedIn | Twitter.

Add LLM Prompts to Reports using Power BI Copilot for Microsoft Fabric

Interested in learning more about Power BI Copilot for Microsoft Fabric? I’ve published a new video walking through the Power BI Narrative visual with Copilot that provides a no-code (SaaS) mechanism for report developers to embed Azure OpenAI (Copilot) prompts into their reports.

There are a few great videos out there on the web for building and editing reports using Power BI Copilot, but the new Copilot Narrative visual (still in preview at time of recording) deserves more attention. LLM prompts can be added to the visual, and they can be re-run every time an end user filters a report. Suppose you switch your filters from “Florida in December” to “Maine in January” and want to enhance the report with some external demographic data that ties to the data from your Power BI Semantic Model. All you need to do is push a button for a new narrative.

Also, because report developers can store prompts in the visual, you can instruct the Azure OpenAI LLM that powers Copilot to add URLs and citations for the data that was used in the response.

The demo in the video is using over 220 million rows of data from the Git Repo that I put together with Inderjit Rana for customers to try out Microsoft Fabric and the Power BI Direct Lake connector, and you can recreate it yourself at this link: https://lnkd.in/gRavJURT

New Windows 365 Boot and Switch features in public preview

We’re excited to announce new features for Windows 365 Boot and Windows 365 Switch that are now available in public preview!

Before we jump into the new features, here’s a refresher on what these features do today:

Windows 365 Boot lets you log directly into your Windows 365 Cloud PC as the primary Windows experience on a PC running Windows 11, version 22H2 or 23H2. When you power on your device, Windows 365 Boot takes you to your Windows 11 login experience. After you log in, you are connected directly to your Windows 365 Cloud PC with no additional steps. This is a great solution for shared devices, where logging in with a unique user identity takes you to your personal and secure Cloud PC.

Windows 365 Switch provides the ability to easily move between a Windows 365 Cloud PC and the local desktop using the same familiar keyboard commands, as well as a mouse click or a swipe gesture. Windows 365 Switch enables a seamless experience from within Windows 11 via the Task view feature. Windows 365 is required on the endpoint; once it is in place, all relevant elements show up automatically in Task view.

Ok, now let’s move over to all the new feature improvements!

Technical requirements

Let’s look at how to push the Windows 365 Boot components to your Windows 11 endpoints with Microsoft Intune:

Requirements:

Windows 11-based endpoints (Windows 11 Pro and Enterprise)

Microsoft Intune Administrator rights

Windows 365 Cloud PC license. See Create provisioning policies for guidance on how to create Cloud PCs

Enrollment in the Windows Insider Dev Channel and running Windows 11 Insider Preview Build 23601 or higher (see below for steps)

Note: Enrollment in the Windows Insider Program is required to join the public preview.

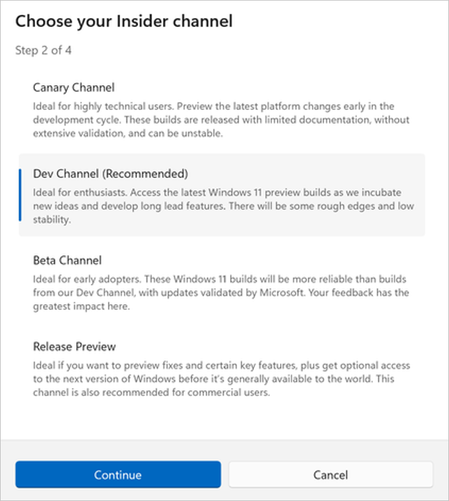

To use the new updates, you need to be enrolled on the Windows Insider Dev Channel. Here’s how:

In Settings, navigate to Windows Update > Windows Insider Program. Select Get started to initiate the enrollment process.

Sign in with your Microsoft account.

Select Dev Channel and Continue.

Screenshot of the “Choose your Insider channel box” with Dev Channel selected.

Restart the device to complete enrollment.

From the Windows Update Settings page, select Check for updates. Select Install all until you have all the latest Windows updates installed.

Screenshot of the Windows Update page in Settings.

You can also enroll your endpoints on a larger scale into the Windows Insider Program using Intune. You will need to enable pre-release builds for Windows updates and select Dev Channel as the pre-release channel.

Screenshot from the Microsoft Intune admin center, showing “Enable pre-release builds” enabled.

Please refer to our Windows Insider Program Documentation for helpful resources.

Dedicated mode for Windows 365 Boot

We’re launching a new dedicated mode for Windows 365 Boot, available in public preview. You can log in to your Windows 365 Cloud PC from your designated company-owned device, directly from the Windows 11 login screen, using passwordless authentication methods like Windows Hello for Business.

The new dedicated mode also comes with a fast account switching experience that enables you to effortlessly switch profiles to log in, personalize the experience with your username and password, display a picture on the lock and login screens, remember your username, and more.

Screenshot of the Windows login screen showing fast account switching with Windows 365 Boot.

New Microsoft Intune integration for Windows 365 Boot dedicated mode

You can start enabling Windows 365 Boot dedicated mode via Microsoft Intune. We’ve added a new configuration option that adds Windows Hello support to Windows 365 Boot.

Screenshot of the Basics menu for Windows 365 Boot in the Microsoft Intune admin center.

You can access Windows 365 Boot through the Intune guided flow scenario. Navigate to Devices > Windows 365 and select Windows 365 Boot or Windows 365 Boot – Public preview under Windows 365 Guides.

Screenshot of the Overview menu for Windows 365, accessed by selecting Windows 365 under Provisioning in the Devices pane of the Intune admin center.

New Microsoft Intune integration

In shared mode, organizations can use Intune to customize the login page to feature their company branding.

Screenshot of the customized login screen with the Contoso logo, name, and wallpaper.

You can enable customized company logo and name branding in Microsoft Intune by navigating to Home > Devices > Windows 365 Boot and looking for Personalization in the Settings menu.

Screenshot of the Settings menu for Windows 365 Boot.

Fail fast mechanism for Windows 365 Boot

You no longer need to wait for the sign-in process to the Cloud PC to complete only to find out that Windows 365 Boot failed due to network issues or incomplete setup. New smart logic proactively prompts users to resolve network issues or complete app setup so they can experience a smooth login to their Cloud PC.

Screenshot of a warning on the Windows login screen for setup issues.

Screenshot of notification on the Windows login screen when there are network issues trying to connect.

Manage local PC settings through Windows 365 Boot

With this feature, it’s now easier for you to access and manage sound, display, and other device-specific settings of your local PC directly from your Cloud PC in Windows 365 Boot.

Screenshot of a red box highlighting the Open local PC settings option while in Windows 365 Boot.

Improved disconnect experience for Windows 365 Switch

Moving on to the new capabilities in Windows 365 Switch, you can now disconnect from your Cloud PC directly from the local PC. This can be done by going to Local PC > Task view, right clicking on the Cloud PC button, and selecting Disconnect. We’ve also added tool tips that show on disconnect and sign out options in the Cloud PC Start menu so that you can differentiate between these functionalities.

Screenshot of the disconnect experience for a Cloud PC as shown in Task view.

Desktop indicators differentiate between Cloud PC and local PC for Windows 365 Switch

You’ll now see the terms “Cloud PC” and “Local PC” on the desktop indicator when you switch between your respective PCs.

Screenshot of the new Cloud PC or Local PC label on the desktop indicator.

Gracefully handling increased connection time for Windows 365 Switch to Frontline Cloud PCs

You’ll now see updates regarding the Cloud PC connection status and the connection timeout indicator while waiting on the connection screen. If there’s an error, you’ll be able to copy the correlation ID using the new copy button on the error screen for quicker resolution.

Screenshot of text indicating the Connection status on the connection screen.

Feedback

Please provide feedback in the Feedback Hub by pressing Win + F or, from Task view, navigating to Desktop Environment > Switch to Cloud PC.

Learn more with Windows in the Cloud

Register for a new episode on Unveiling new features: Windows 365 Boot for dedicated devices and more on February 14, 2024 from 08:00 AM – 09:00 AM Pacific Time. Also, check out What’s new in Windows 365 Enterprise.

Have questions? Want tips from the engineers behind the product?

Post your questions below in advance and anytime during Windows in the Cloud—or join our monthly Windows 365 Ask Microsoft Anything (AMA) events here on the Tech Community!

Continue the conversation. Find best practices. Bookmark the Windows Tech Community, then follow us @MSWindowsITPro on X/Twitter. Looking for support? Visit Windows on Microsoft Q&A.

Lesson Learned #473: Harnessing the Synergy of Linked Server, Python, and sp_execute_external_script

In an era where data management transcends individual database systems, SQL Server offers a sophisticated feature set that includes Linked Server integration, Python scripting, and the powerful sp_execute_external_script stored procedure. The main objective of this approach is to run a Python script within SQL Server, using sp_execute_external_script, that reads from databases outside the on-premises SQL Server instance, for example, Azure SQL Database or Azure SQL Managed Instance, as an alternative to using the pyodbc library. This method not only streamlines processes but also addresses key security and network configuration concerns, such as opening ports, that are prevalent when using external libraries for database connections. By focusing on querying a Linked Server, we can achieve seamless data integration and manipulation while maintaining a secure and efficient environment.

Section 1: Unpacking Linked Servers in SQL Server

Linked Servers act as bridges, enabling SQL Server to execute commands and access data across different database systems. This capability is crucial for enterprises managing data across multiple platforms, offering a unified approach to data interaction. Utilizing Linked Servers, SQL Server can effectively communicate with various data sources, ensuring flexibility and scalability in data management.

Section 2: The Power of Python in SQL Server

The integration of Python into SQL Server, particularly through the sp_execute_external_script stored procedure, marks a significant advancement in data processing capabilities. This integration lets you use Python’s comprehensive libraries and analytical capabilities directly within the SQL Server environment. It opens doors to sophisticated data analysis, complex transformations, and advanced machine learning applications, all while leveraging the robust security and performance features of SQL Server.

Section 3: Preparing the Groundwork

To embark on this integration, certain prerequisites must be met. This includes enabling SQL Server Machine Learning Services for Python support and configuring a Linked Server for external data access. Detailed steps guide you through this setup process, ensuring a smooth integration.

Section 4: Executing a Practical Use-case

We present a practical scenario where sp_execute_external_script is employed to query data from a Linked Server. The walkthrough covers creating a stored procedure that harnesses Python’s prowess to access and process data from an external database, illustrating the script’s development and execution.

Definition of Linked Server

USE [master]

GO

/****** Object: LinkedServer [MYSERVER2] Script Date: 11/01/2024 19:07:42 ******/

EXEC master.dbo.sp_addlinkedserver @server = N'MYSERVER2', @srvproduct=N'', @Provider=N'MSOLEDBSQL', @datasrc=N'tcp:servername.database.windows.net,1433', @catalog=N'dotnetexample'

/* For security reasons the linked server remote logins password is changed with ######## */

EXEC master.dbo.sp_addlinkedsrvlogin @rmtsrvname=N'MYSERVER2',@useself=N'False',@locallogin=NULL,@rmtuser=N'username',@rmtpassword='########'

GO

EXEC master.dbo.sp_serveroption @server=N'MYSERVER2', @optname=N'collation compatible', @optvalue=N'false'

GO

EXEC master.dbo.sp_serveroption @server=N'MYSERVER2', @optname=N'data access', @optvalue=N'true'

GO

EXEC master.dbo.sp_serveroption @server=N'MYSERVER2', @optname=N'dist', @optvalue=N'false'

GO

EXEC master.dbo.sp_serveroption @server=N'MYSERVER2', @optname=N'pub', @optvalue=N'false'

GO

EXEC master.dbo.sp_serveroption @server=N'MYSERVER2', @optname=N'rpc', @optvalue=N'false'

GO

EXEC master.dbo.sp_serveroption @server=N'MYSERVER2', @optname=N'rpc out', @optvalue=N'false'

GO

EXEC master.dbo.sp_serveroption @server=N'MYSERVER2', @optname=N'sub', @optvalue=N'false'

GO

EXEC master.dbo.sp_serveroption @server=N'MYSERVER2', @optname=N'connect timeout', @optvalue=N'0'

GO

EXEC master.dbo.sp_serveroption @server=N'MYSERVER2', @optname=N'collation name', @optvalue=null

GO

EXEC master.dbo.sp_serveroption @server=N'MYSERVER2', @optname=N'lazy schema validation', @optvalue=N'false'

GO

EXEC master.dbo.sp_serveroption @server=N'MYSERVER2', @optname=N'query timeout', @optvalue=N'0'

GO

EXEC master.dbo.sp_serveroption @server=N'MYSERVER2', @optname=N'use remote collation', @optvalue=N'true'

GO

EXEC master.dbo.sp_serveroption @server=N'MYSERVER2', @optname=N'remote proc transaction promotion', @optvalue=N'true'

GO

Stored procedure definition

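CREATE PROCEDURE FetchDataFromLinkedServer

AS

BEGIN

-- Run the Python script below; the query in @input_data_1 is executed through the linked server.

EXEC sp_execute_external_script

@language = N'Python',

@script = N'

import pandas as pd

# my_input_data is the DataFrame built from the @input_data_1 query

customer_data = my_input_data

# OutputDataSet is the DataFrame returned to SQL Server as the result set

OutputDataSet = customer_data

',

@input_data_1 = N'SELECT TOP 50 ID, TextToSearch FROM [MyServer2].[dotnetexample].[dbo].[PErformanceVarcharNvarchar]',

@input_data_1_name = N'my_input_data'

WITH RESULT SETS ((ID INT NOT NULL, TextToSearch VARCHAR(200) NOT NULL));

END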

We just need to call our stored procedure to obtain the data from the other data source.

EXEC dbo.FetchDataFromLinkedServer

The Intrinsic Value of DevOps for the US Department of Defense

DevOps is defined as the union of people, process, and technology that removes silos so that formerly separate roles (development, IT operations, quality engineering, and security) coordinate and collaborate to produce better, more reliable products. Every major cloud provider, Independent Software Vendor (ISV), and software consultancy has promoted this approach to reduce time to market, eliminate bugs, introduce new features rapidly, implement governance, and streamline the software development lifecycle. Microsoft has outlined the benefits of DevOps in the following online post: “By adopting a DevOps culture along with DevOps practices and tools, teams gain the ability to better respond to customer needs, increase confidence in the applications they build, and achieve business goals faster.”

Cultivating a DevOps culture requires deep changes in the way people work and collaborate. The bureaucratic nature, culture, and traditional software process management of the Department of Defense (DoD) can inhibit adoption. There are signs that the various branches of the DoD are starting to introduce DevOps as a path forward, not only with the adoption of cloud but also for traditional on-premises programs. Programs such as Iron Bank (dso.mil) and DSOP (af.mil) are positive signs that the DoD is moving away from the traditional Waterfall process, but the change is not reflected in every program. The adoption and implementation of DevOps practices are ever more crucial as we are currently at the intersection of technology and geopolitical world events.

In the last three years, we have witnessed the conflict in Ukraine advance the use of automation and computer engineering to execute military objectives. The very nature of conventional war has changed. The term “transparent battlefield” is now used to describe a setting where drones play a greater role in providing real-time intelligence updates as well as first-strike capabilities.

At the start of the war, various weapon systems were introduced and heralded as “game changing,” with measurable impact in shaping the battlefield. Take the example of the modern rocket systems provided to Ukraine. Their introduction had an immediate impact on the battlefield. Russian air defense missile batteries and radar systems could not properly intercept incoming attacks against their forward operating bases or military positions. Initial indicators suggested that Russian systems did not recognize the signatures of incoming rocket attacks. Soon after, the effectiveness of Ukrainian attacks slowly but gradually degraded, and interception of launches became more common. What changed? DevOps. The Russians learned the common technical signs of an incoming strike, developed patches for their air defense systems, evaluated and assessed the code, and deployed the necessary patches to their systems. They were able to provide an effective countermeasure during an active conflict, performing out-of-band updates across distributed systems within Ukraine and Russia to mitigate Ukrainian attacks. The Ukrainians are doing the same and paving the way in this methodology. This is the ethos of DevOps. When we think of DevOps, we tend to visualize a developer deploying code against a system on-premises or in the cloud. The same technical methodology can be implemented to support future warfighter efforts and advanced weapon systems.

As the DoD starts to introduce more complex systems, including Space, the need for continuous system updates over low-bandwidth communication takes on new significance. Military planners will need to account for a streamlined process for continuous code improvement, resiliency, and self-healing software on an active battlefield in support of the mission. Engineering rapid recovery at the macro and micro levels, with systems that fail fast and heal quickly, is essential in software design, delivery, and long-term sustainment. DevOps can also act as a force multiplier: it can optimize total cost of ownership, lower risk, introduce capability enhancements faster, lower lead time, and increase return. Although the DoD budget presently accounts for a significant percentage of the US Federal Budget, leaders may face additional pressure, now and in the near future, to streamline their software development and sustainment processes. This makes it imperative that organizations within the DoD begin to train staff and implement DevOps as part of their overall strategic plan. The traditional Waterfall model, change management, and promotion of code through various environments before it goes into production will need to go through a radical change across the department.

The goal of this article is not to promote technology to wage war, but to encourage building efficient and secure software within the DoD to support its various missions in the realities of the twenty-first-century IT landscape.

Recommended Readings and Videos

Recoding America: Why Government Is Failing in the Digital Age and How We Can Do Better

by Jennifer Pahlka

The DevOps Handbook, Second Edition: How to Create World-Class Agility, Reliability, & Security in Technology Organizations

by Gene Kim

The Phoenix Project: A Novel about IT, DevOps, and Helping Your Business Win 5th Anniversary Edition

by Gene Kim, Kevin Behr, George Spafford, and Chris Ruen.

War and Peace and DevOps – Mark Schwartz

https://youtu.be/2BM0xYfcexY

What the Military Taught Me about DevOps – Chris Short

https://youtu.be/TIE1rKkJWyY

Acknowledgements

I would like to thank Chris Ayers and Erik Munson for reviewing this article and providing edits during its formulation.

Explore the Latest Innovations for your Retail Workers with Microsoft Teams

As we ring in the start of 2024, we’re gearing up to showcase a host of new innovations across Microsoft Teams at the annual National Retail Federation (NRF) conference, taking place January 14th – January 16th in New York City.

We’re announcing new solutions designed to enable store teams to efficiently meet customers’ expectations and improve the retail experience in this new era of AI.

Keep reading below for the latest product and feature capabilities coming to Teams to help simplify operations and enable first-class retail experiences for all retail workers – including the frontline.

Enhanced Store Team Communication and Collaboration

Route announcements to frontline teams by location, department, and role

Target important announcements to the right frontline employees based on location, department, and job role information. Targeted announcements will surface on the Teams home experience so your frontline employees will never miss an important communication. This feature will be generally available in March 2024. Learn more.

Boost frontline teamwork with auto-generated role and department tagging

Reach the right person at the right time with automatic tags for your frontline teams. Tags for department and job roles can be configured and created automatically for your frontline workers in the Teams Admin Center. Frontline employees can leverage these automatic tags in their frontline teams to connect with the right person every time. This feature will be in public preview in February 2024. Learn more.

Bring answers to communities for easier information sharing

In Viva Engage in Teams, answers from Q&A conversations will now be available in communities, better enabling frontline workers to easily source needed information. This feature will be generally available January 2024.

Monitor how employee engagement drives business performance

Also coming to Viva Engage in Teams, network analytics will bring AI-powered theme extraction and employee retention metrics to users to help enhance insights into workforce dynamics and help drive informed decision making. This feature will be generally available in February 2024. Learn more.

Automatically hear push-to-talk transmissions from multiple channels

Frontline workers using Walkie Talkie in Teams now have the option to automatically hear incoming transmissions from any of their pinned favorite Teams channels. With this new feature, users can stay better connected to multiple channels without needing to switch channels manually. This feature will be generally available by end of month. Learn more on how to get started.

Use any generic wired (USB-C and 3.5mm) headset for instant team communication on Android

Frontline workers often need to instantly communicate with each other even when their phones are locked. We integrated Walkie Talkie in Teams with audio accessories partners to make this experience possible with the dedicated push-to-talk (PTT) button on headsets, which instantly brings up walkie talkie for clear and secure voice communication. In addition to select specialized headsets, we are excited to announce that Walkie Talkie in Teams will now work with any generic wired (USB-C and 3.5mm) headsets on Android. As long as the generic headsets have a control button to play/pause or to accept/decline calls, frontline workers can tap the button to start and stop transmissions on walkie talkie. Frontline organizations will be able to easily start using walkie talkie with lower-cost generic headsets. This feature will be generally available starting February 2024. Learn more.

Streamline Retail Store Operations

Allow frontline teams to set their shift availability for specific dates

Frontline workers will now have the flexibility to set their availability preferences on specific dates, enhancing their ability to manage unique scheduling needs. This added feature complements existing options for recurring weekly availability. This feature is available in January 2024. To learn more about recent enhancements to Shifts in Teams, read the latest blog – Discover the latest enhancements in Microsoft Shifts.

Easily deploy shifts at scale for your frontline

Teams admins can now standardize Shifts settings across all frontline teams and manage them centrally by deploying Shifts to frontline teams at scale in the Teams admin center. You can select which capabilities to turn on or off, such as showing open shifts, swap shift requests, offer shift requests, time off requests, and time clock.

Admins can also identify schedule owners at the tenant level and create scheduling groups and time-off reasons that are set uniformly across all frontline teams. Your frontline managers can start using Shifts straight out of the box with minimal setup required. This feature is currently in public preview and will be generally available in March 2024. Learn more.

Streamline Teams deployment for your frontline and manage at scale

Whether due to seasonality or the natural turnover seen on the frontline in retail, simplifying user membership is key to easing management needs. Now generally available, Microsoft has added new capabilities in the Teams Admin Center to deploy frontline dynamic teams at scale for your entire frontline workforce. Through the power of dynamic teams, team membership is automatically managed and always up to date with the right users as people enter, move within, or leave the organization using dynamic groups from Entra ID.

This deployment tool streamlines the admin experience of creating a Teams structure that maps the frontline workforce’s real-world structure to the digital world, and it makes it easy to set up a consistent channel structure that supports strong frontline collaboration from day one. Available in February, customers can use custom user attributes in Entra ID to define frontline and location attributes, with additional enhancements that make it easier to assign team owners by adding a people picker to the setup wizard.

Map your operational hierarchy to frontline teams

Admins will be able to set up their frontline operational hierarchy to map their organization’s structure of frontline locations and teams to a hierarchy in the Teams Admin Center. Admins can also define attributes for their teams that range from department information to brand information. The operational hierarchy coupled with this added metadata will enable frontline apps and experiences in the future like task publishing. This feature will be in public preview in January 2024. Learn more.

Leverage generative AI to streamline in-store shift management

Store managers can also identify items such as open shifts, time off, and existing shifts with a new Shifts plug-in for Microsoft 365 Copilot. Microsoft 365 Copilot can now ground prompts and retrieve insights for frontline managers leveraging data from the Shifts app in addition to user and company data it has access to such as Teams chat history, SharePoint, emails, and more. This feature will be generally available February 2024.

Automate and simplify corporate to store task publishing

With task publishing, you can now create a list of tasks and schedule them to be automatically published to your frontline teams on a regular cadence, such as every month on the 15th. Once you publish a list, the task publishing feature will handle the scheduling and ensure that the list is published at the desired cadence. This feature is useful for tasks that need to be done regularly, such as store opening and closing processes or conducting periodic inspections and compliance checks. This feature will be generally available in March 2024.

Publish a task that everyone in the team must complete

This new capability provides the option to create a task that every member of the recipient team must complete. Organizations can assign tasks like complete training or review a new policy to all or a specific set of frontline workers. The task will be created for each worker at the designated location. This feature will become generally available in March 2024.

Require additional completion requirements for submitting tasks

When you create a task within the task publishing feature, you have the option to request a form and/or photo completion. When you publish that task, each recipient team will be unable to mark the task complete until the form is submitted by a member of the team. This ensures that the task is completed properly by each team member.

Additionally, with approval completion requirements, organizations can hold frontline managers and their teams accountable for verifying the work was done to standard before reflecting that work as completed. This allows an organization to increase attention to detail and accountability for important tasks. These features will become generally available in March 2024.

Secure and Manage your Business

Simplify authentication with domain-less sign-in

Since a single device is often shared among multiple frontline workers, they need to sign in and out multiple times a day throughout a shift or across shifts. Typing out long usernames with a domain is prone to mistakes and can be time consuming. With domain-less sign-in, frontline workers can now sign in to Teams more quickly using only the first part of their username (that is, without the domain), then enter the password to access Teams on shared and corporate-managed devices. For example, if the username is 123456@microsoft.com or alland@microsoft.com, users can now sign in with only “123456” or “alland”, respectively. This feature will be in public preview in January 2024.

We’re excited to share more updates and new features throughout the calendar year. To learn more about how Microsoft Teams empowers frontline workers, please visit our webpage.

Azure OpenAI Insights: Monitoring AI with Confidence

Getting started: Step by step

Step 1: Download the workbook from here.

Step 2: Import the workbook into your Azure Monitor workspace. Here is an external guide on how to import workbooks into Azure Monitor. Alternatively, you can use this repo for additional instructions.

Step 3 (optional): Enable diagnostic settings for your Azure OpenAI resource. This will allow you to view additional dimensions and logs in the workbook. More information on the level of detail appears later in this post.

Step 4: Explore the workbook.

Please check our repository for further enhancements, issues, etc. We hope to hear from you via issues and stars.

Workbook Overview

Monitor

HTTP requests, by multiple dimensions: model name and version, status code, model deployment name, operation and API name, and region.

Token based usage – multiple metrics: Processed Inference Tokens, Processed Prompt Tokens, Generated Completion Tokens, and Active Tokens; these are displayed with a couple of dimensions, such as model name and model deployment name.

PTU Utilization – by multiple dimensions: model name & version, streaming type and model deployment name.

Fine-tuning – Here we show the ‘Processed FineTuned Training Hours’ metric by two dimensions: model name and model deployment name.

Insights

Insights Overview

Model name

Model Deployment name

Average Duration (in milliseconds)

API operation name

Figure 5: Insights Overview – more aggregative view

By Caller IP

Request/Response (Model name, Model deployment name & Operation name)

Average Duration

All Logs
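The Insights views above group request logs by dimensions such as caller IP or model deployment name and report an average duration. As a rough illustration of that aggregation only, here is a minimal Python sketch. The row layout and field names (`caller_ip`, `deployment`, `duration_ms`) are assumptions made for the example and are not the actual diagnostic log schema.

```python
from collections import defaultdict

# Hypothetical rows shaped like exported request logs.
# Field names are illustrative assumptions, not the real log schema.
SAMPLE_LOGS = [
    {"caller_ip": "10.0.0.1", "deployment": "gpt-4-prod", "duration_ms": 800},
    {"caller_ip": "10.0.0.1", "deployment": "gpt-4-prod", "duration_ms": 1200},
    {"caller_ip": "10.0.0.2", "deployment": "gpt-35-turbo-prod", "duration_ms": 300},
]


def average_duration_by(rows, key):
    """Group rows by the given field and return the mean duration per group."""
    grouped = defaultdict(list)
    for row in rows:
        grouped[row[key]].append(row["duration_ms"])
    return {group: sum(values) / len(values) for group, values in grouped.items()}


if __name__ == "__main__":
    print(average_duration_by(SAMPLE_LOGS, "caller_ip"))
    print(average_duration_by(SAMPLE_LOGS, "deployment"))
```

The same function covers both the "By Caller IP" and the per-deployment views simply by changing the grouping key.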

Why? Activating Azure OpenAI Monitoring: Cognitive Services, Metrics, and Diagnostics

Resource Allocation: As an ISV operator, monitoring and controlling the usage of cognitive services across tenants is vital for fair resource distribution.

Billing Accuracy: Keeping track of each tenant’s service consumption is crucial for accurate billing and service verification.

Monetization Strategy: For ISVs, monetizing cognitive service usage is key to recovering operational costs and maintaining profitability.

Usage Limits: Setting limits on service access for each tenant helps in preventing resource monopolization and ensuring service availability for all.

Data Segregation: Ensuring strict data segregation between tenants is paramount for maintaining privacy and preventing data leakage.

Metrics and Documentation: Having access to detailed documentation on AOAI metrics, error codes, and rate limits is essential for effective system integration.

Comprehensive Metrics: Access to extensive metrics like deployment names and hosting hours is crucial for managing usage and performance of cognitive services effectively.

Azure OpenAI Service Monitoring: Azure OpenAI Service Monitoring Guide details how to use Azure Monitor tools for tracking the availability, performance, and operation of Azure OpenAI Service resources. It covers different monitoring data types such as platform metrics, resource logs, and activity logs, explaining their collection and storage via diagnostic settings. The guide highlights out-of-box dashboards with categories like HTTP Requests and PTU Utilization, and delves into using the Kusto query language in Log Analytics for complex data queries. Additionally, it provides insights into creating alerts based on various monitoring data and outlines best practices and use cases for proactive notification, making it an essential resource for efficient Azure OpenAI Service management.

Azure OpenAI Service Overview: Understanding Azure OpenAI Service offers a comprehensive look at Microsoft’s Azure OpenAI Service, which grants access to OpenAI’s advanced language models like GPT-4, GPT-4 Turbo with Vision, and GPT-3.5-Turbo. The service is accessible via REST APIs, Python SDK, or a web-based interface and is tailored for customers with established partnerships with Microsoft, focusing on lower-risk applications and adherence to responsible AI principles. Key features include the Completions Endpoint for generating text completions from prompts, and the introduction of the DALL-E and Whisper models, which are in preview for generating images from text and transcribing or translating speech. The page also guides new users on starting with Azure OpenAI, including creating an Azure OpenAI resource, deploying models, and crafting effective prompts, making it a vital resource for anyone looking to leverage these cutting-edge AI capabilities.

How? Approaches to Provision Azure OpenAI Services for ISVs and Enterprises

Reuse: Utilizing Existing Azure Monitoring Tools

Overview: The ‘Reuse’ strategy focuses on leveraging existing Azure tools for monitoring and diagnostics, such as Azure Monitor, Azure Metrics, and diagnostic logs.

Detailed View: These tools provide detailed insights into the usage of Azure OpenAI services. By reusing these tools, ISVs can gain a comprehensive view of service utilization, performance metrics, and operational health without the need for extensive custom development.

Integration and Customization: Azure Monitor and workbooks are built into the Azure portal, which makes this approach cost-effective and time-saving.

Build: Crafting Custom Solution

Overview: This approach involves ISVs developing their own custom tools tailored to their specific requirements for controlling and monitoring Azure OpenAI services.

Considerations: When building a custom solution, ISVs must consider the integration complexity, development cost, and the ongoing maintenance. This route offers maximum flexibility and control but requires significant investment in development resources.

Leveraging Existing Platforms/Tools: While the specifics of building custom tools are beyond the scope of this discussion, it’s worth noting that these tools can often be built on top of existing platforms or frameworks, enhancing efficiency and reducing development time.

Decision Factors

Balancing Flexibility and Resource Investment: The choice between building custom tools or reusing existing Azure tools depends on several factors, including the desired level of customization, available resources, and the specific needs of the ISV or enterprise.

Scalability and Future Growth: Considerations should also include scalability and the ability to adapt to future changes in Azure OpenAI services and the broader AI landscape.

Reuse Strategies in Azure OpenAI Provisioning

Unique Deployment Names for Each Customer

Overview: In this approach, ISVs assign a unique deployment name for each customer, with individualized settings including TPM (tokens per minute) and RPM (requests per minute). This customization allows for more precise control over how each customer can utilize the service.

Controlled Management: By having distinct deployment names, ISVs can fine-tune the service parameters per customer. This ensures that each customer’s usage stays within the prescribed limits, helping to manage resource allocation effectively and prevent overutilization.

Benefits: This method delegates significant control measures to the Azure platform, reducing the management burden on the ISV. It’s particularly suitable for scenarios where customer-specific data segregation and usage monitoring are critical, and where each customer’s capacity needs are within the overall model limits.

Considerations: While this setup simplifies management for the ISV, it requires careful planning and setup for each customer to ensure that their specific needs are met within the parameters of TPM and RPM.
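To make the planning step concrete, the following Python sketch models a per-customer deployment table and checks that the allocations fit within an overall limit. It is only a planning aid under stated assumptions: the customer names, deployment names, and quota numbers are hypothetical, and the actual limits are enforced by the Azure platform, not by this code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DeploymentQuota:
    """Planned limits for one customer's dedicated deployment."""
    deployment_name: str
    tokens_per_minute: int
    requests_per_minute: int


# Hypothetical customers, deployment names, and numbers, for illustration only.
CUSTOMER_DEPLOYMENTS = {
    "contoso": DeploymentQuota("gpt4-contoso", 60_000, 360),
    "fabrikam": DeploymentQuota("gpt4-fabrikam", 20_000, 120),
}


def deployment_for(customer_id):
    """Return the deployment name that requests for this customer should use."""
    return CUSTOMER_DEPLOYMENTS[customer_id].deployment_name


def fits_model_limit(deployments, model_tpm_limit):
    """Check that the summed per-customer TPM stays within the overall limit."""
    total = sum(q.tokens_per_minute for q in deployments.values())
    return total <= model_tpm_limit


if __name__ == "__main__":
    print(deployment_for("contoso"))
    print(fits_model_limit(CUSTOMER_DEPLOYMENTS, 80_000))
```

Keeping the mapping in one place makes it easier to review each customer's allocation before a new deployment is created.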

Figure 10: Configuring deployment names

Multiple Endpoints for Increased Capacity

Overview: Alternatively, ISVs can use multiple endpoints to enhance capacity. In this scenario, all ISV customers share the same endpoints, and the ISV is responsible for load balancing and monitoring individual customer usage.

Challenges: This approach requires the ISV to actively manage load balancing and usage tracking, which can be complex but offers greater flexibility in resource allocation and scalability.

Usage Monitoring: The ISV must implement robust systems to accurately monitor and count usage per customer, ensuring fair billing and resource distribution.
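As a rough sketch of what the ISV takes on in this model, the Python example below rotates requests across a pool of endpoints and keeps a per-customer token count for billing. It assumes customers share the pool, uses simple round-robin rotation (one of many possible balancing policies), and the endpoint URLs and customer names are placeholders; a production system would also need persistence, retries, and rate-limit handling.

```python
from collections import Counter
from itertools import cycle


class EndpointPool:
    """Round-robin endpoint selection with per-customer token accounting."""

    def __init__(self, endpoints):
        if not endpoints:
            raise ValueError("at least one endpoint is required")
        self._next_endpoint = cycle(endpoints)
        self.tokens_by_customer = Counter()

    def pick_endpoint(self):
        """Return the next endpoint in rotation."""
        return next(self._next_endpoint)

    def record_usage(self, customer_id, total_tokens):
        """Add the tokens consumed by one request to the customer's running total."""
        self.tokens_by_customer[customer_id] += total_tokens


if __name__ == "__main__":
    # Placeholder endpoint URLs, not real resources.
    pool = EndpointPool(["https://aoai-east.example", "https://aoai-west.example"])
    print(pool.pick_endpoint())
    pool.record_usage("contoso", 120)
    print(pool.tokens_by_customer)
```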

Hybrid Approach

Possibility: A third alternative could be a hybrid approach, combining elements of both strategies. This could involve using unique deployment names for certain customers with specific needs while employing multiple endpoints for others to scale capacity.

Flexibility: This approach offers the greatest flexibility, allowing ISVs to tailor the provisioning strategy to the specific needs and usage patterns of each customer.

Management Complexity: While offering adaptability, this approach can increase management complexity and resource requirements for the ISV.

Conclusion and Next Steps

The Intrinsic Value of DevOps for the US Department of Defense

DevOps is defined as the union of people, process, and technology that enables formerly siloed roles (development, IT operations, quality engineering, and security) to coordinate and collaborate to produce better, more reliable products. Every major cloud provider, Independent Software Vendor (ISV), and software consultancy has promoted this approach to reduce time to market, eliminate bugs, introduce new features rapidly, implement governance, and streamline the software development lifecycle. Microsoft has outlined the benefits of DevOps in the following online post: “By adopting a DevOps culture along with DevOps practices and tools, teams gain the ability to better respond to customer needs, increase confidence in the applications they build, and achieve business goals faster.”

The challenge of cultivating a DevOps culture is that it requires deep changes in the way people work and collaborate. The bureaucratic nature, culture, and traditional software process management of the Department of Defense (DoD) can inhibit adoption. There are signs that the various branches of the DoD are starting to introduce DevOps as a path forward, not only with the adoption of cloud but also for traditional on-premises programs. Programs such as Iron Bank (dso.mil) and DSOP (af.mil) are positive signs that the DoD is moving away from the traditional Waterfall process, but the change is not reflected in every program. The adoption and implementation of DevOps practices are ever more crucial as we are currently at the intersection of technology and geopolitical world events.

In the last three years, we have witnessed the conflict in Ukraine advance the use of automation and computer engineering to execute military objectives. The very nature of conventional war has changed, and the term “transparent battlefield” is now used to describe how drones are playing a greater role in providing real-time intelligence updates as well as first-strike capabilities.

At the start of the war, various weapon systems were introduced and heralded as “game changing,” with measurable impact in shaping the battlefield. Take the example of the modern rocket systems provided to Ukraine. Their introduction had an immediate impact on the battlefield. Russian air defense missile batteries and radar systems could not properly intercept incoming attacks against their forward operating bases or military positions. Initial indicators suggested that Russian systems did not recognize the signatures of incoming rocket attacks. Soon after, there was a gradual degradation in the effectiveness of Ukrainian attacks. Interception of launches started to become more common. What changed? DevOps. The Russians learned the common technical signs of an incoming strike, developed patches for their air defense systems, evaluated and assessed the code, and deployed the necessary patches to their systems. The Russians were able to provide an effective countermeasure during an active conflict across distributed systems within Ukraine and Russia, performing out-of-band updates to mitigate attacks by the Ukrainians. The Ukrainians are doing the same and paving the way in this methodology. This is the ethos of DevOps. When we think of DevOps, we tend to visualize a developer deploying code against a system on-premises or in the cloud. The same technical methodology can be implemented to support future warfighter efforts and advanced weapon systems.

As the DoD starts to introduce more complex systems, including Space, the need for continuous system updates over low-bandwidth communication takes on new significance. A streamlined process for continuous code improvement, resiliency, and self-healing software on an active battlefield will need to be accounted for by military planners in support of the mission. Engineering rapid recovery at the macro and micro level, with systems that fail fast and heal quickly, is essential in software design, delivery, and long-term sustainment. DevOps can also be a force multiplier, optimize total cost of ownership, lower risk, introduce capability enhancements faster, lower lead time, and increase return. Although the DoD budget presently accounts for a significant percentage of the US Federal Budget, there may be additional pressure on leaders, now and in the near future, to look at ways to streamline their software development and sustainment processes. This makes it imperative that organizations within the DoD begin to train staff and implement DevOps as part of their overall strategic plan. The traditional Waterfall model, change management, and promotion of code through various environments before it goes into production will need to go through a radical change across the department.

The goal of this article is not to promote technology to wage war, but to promote building efficient and secure software within the DoD that supports its various missions in the realities of the twenty-first-century IT landscape.

Recommended Readings and Videos

Recoding America: Why Government Is Failing in the Digital Age and How We Can Do Better

by Jennifer Pahlka

The DevOps Handbook, Second Edition: How to Create World-Class Agility, Reliability, & Security in Technology Organizations

by Gene Kim

The Phoenix Project: A Novel about IT, DevOps, and Helping Your Business Win 5th Anniversary Edition

by Gene Kim, Kevin Behr, George Spafford, and Chris Ruen.

War and Peace and DevOps – Mark Schwartz

https://youtu.be/2BM0xYfcexY

What the Military Taught Me about DevOps – Chris Short

https://youtu.be/TIE1rKkJWyY

Acknowledgements

I would like to thank Chris Ayers and Erik Munson for reviewing and providing edits in the formulation of this article.

Lesson Learned #472: Why It’s Important to Add the TCP Protocol When Connecting to Azure SQL Database

In certain service requests, our customers encounter an error like the following while connecting to the database: “Connection failed: (‘08001’, ‘[08001] [Microsoft][ODBC Driver 17 for SQL Server]Named Pipes Provider: Could not open a connection to SQL Server [65]. (65) (SQLDriverConnect); [08001] [Microsoft][ODBC Driver 17 for SQL Server]Login timeout expired (0); [08001] [Microsoft][ODBC Driver 17 for SQL Server]A network-related or instance-specific error has occurred while establishing a connection to SQL Server. Server is not found or not accessible. Check if the instance name is correct and if SQL Server is configured to allow remote connections. For more information see SQL Server Books Online. (65)’)”. I would like to give some insights about this.

The crucial point to mention is that Azure SQL Database only responds to TCP, and any attempt to use Named Pipes will result in an error.

1. Understanding the Error Message:

The error message encountered by our customer is typically associated with attempts to connect using the Named Pipes protocol, which Azure SQL Database does not support. It signifies a network-related or instance-specific error in establishing a connection to SQL Server, often caused by incorrect protocol usage.

2. Azure SQL Database’s Protocol Support:

Azure SQL Database is designed to work exclusively with the TCP protocol for network communication. TCP is a reliable, standard network protocol that ensures the orderly and error-checked transmission of data between the server and client.

3. Why Specify TCP in Connection Strings:

Specifying “TCP:” in the server name within your connection strings ensures that the client application directly attempts to use the TCP protocol. This bypasses any default attempts to use Named Pipes, leading to a more straightforward and faster connection process.
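As an illustration, the Python sketch below builds an ODBC connection string whose server name carries the "tcp:" prefix and an explicit port. The helper names are hypothetical, the server, database, and credentials are placeholders, and port 1433 is assumed as the default; the code only assembles the string and does not open a connection.

```python
def with_tcp_prefix(server, port=1433):
    """Return the server name as 'tcp:<host>,<port>', adding missing parts."""
    name = server.strip()
    if name.lower().startswith("tcp:"):
        name = name[4:]
    if "," not in name:
        # No explicit port was given, so append the assumed default.
        name = f"{name},{port}"
    return f"tcp:{name}"


def build_connection_string(server, database, user, password,
                            driver="ODBC Driver 17 for SQL Server"):
    """Assemble an ODBC connection string that forces the TCP protocol.

    Values are inserted as-is; passwords containing ';' or braces would
    need escaping, which this sketch does not handle.
    """
    return (
        f"Driver={{{driver}}};"
        f"Server={with_tcp_prefix(server)};"
        f"Database={database};"
        f"Uid={user};"
        f"Pwd={password};"
        "Encrypt=yes;"
    )


if __name__ == "__main__":
    # Placeholder values, not real resources or credentials.
    print(build_connection_string(
        "myserver.database.windows.net", "mydb", "myuser", "<password>"))
```

The resulting string can be passed to an ODBC client library; normalizing the server name in one helper avoids a missing or duplicated prefix when the server name comes from configuration.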

4. Error Diagnosis and Efficiency:

By using TCP, any connectivity issues encountered will return errors specific to the TCP protocol, making diagnosis more straightforward. This direct approach eliminates the time spent on protocol negotiation and reduces the time to connect.
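A small Python sketch of how an application might flag this situation in its own error handling: it checks whether a driver error message mentions the Named Pipes provider, which suggests the connection string is missing the "tcp:" prefix. The function name is hypothetical and the check is a simple substring match on the message text, so treat it as a diagnostic hint and not a reliable classifier.

```python
def looks_like_named_pipes_failure(error_text):
    """Return True if the error message mentions the Named Pipes provider."""
    return "named pipes provider" in error_text.lower()


if __name__ == "__main__":
    # Sample text taken from the error message quoted earlier in this article.
    sample = ("[08001] [Microsoft][ODBC Driver 17 for SQL Server]Named Pipes "
              "Provider: Could not open a connection to SQL Server [65].")
    if looks_like_named_pipes_failure(sample):
        print("Hint: add 'tcp:' to the server name in the connection string.")
```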

5. Recommendations for Azure SQL Database Connectivity:

Always use TCP in your connection strings when connecting to Azure SQL Database.

Ensure that your client and network configuration are optimized for TCP/IP connectivity.

Regularly update your ODBC drivers and client software to the latest versions to benefit from improved performance and security features.

6. Prioritizing TCP to Avoid Unnecessary Delays in Connectivity:

An important aspect to consider in database connectivity is the order in which different protocols are attempted by the client or application. Depending on the configuration, the client may try to connect using Named Pipes before or after TCP in the event of a connectivity issue. This can lead to unnecessary delays in the validation process.

When Named Pipes is attempted first and fails (as it is unsupported in Azure SQL Database), the client then falls back to TCP, thereby wasting valuable time. This scenario is particularly common when default settings are left unchanged in client applications or drivers.

To mitigate this, it is strongly recommended to explicitly use “TCP:” in the server name within your connection strings. This directive ensures that the TCP protocol is prioritized from the outset, facilitating a more direct and efficient connection attempt.

By doing so, not only do we avoid the overhead of an unsuccessful attempt with Named Pipes, but we also gain clarity in error reporting. If a connectivity issue arises, the error returned will be specific to TCP, allowing for a more accurate diagnosis and faster resolution.

Additionally, this approach can significantly reduce the time taken to establish a connection. In high-performance environments or situations where rapid scaling is required, this efficiency can have a substantial impact on overall system responsiveness and resource utilization.

In summary, explicitly specifying the TCP protocol in your connection strings is a best practice for Azure SQL Database connectivity. It ensures a more streamlined connection process, clearer error diagnostics, and can contribute to overall system efficiency.

Enjoy!

New on Microsoft AppSource: December 22-31, 2023

We continue to expand the Microsoft AppSource ecosystem. For this volume, 154 new offers successfully met the onboarding criteria and went live. See details of the new offers below:

Get it now in our marketplace

Peritos Workflow Delegation and Exception Management: Peritos Solutions’ Workflow Delegation and Exception app for Microsoft Dynamics 365 Business Central helps you manage out-of-office schedules and delegate tasks to designated substitutes. It also monitors approvals and highlights exceptions for tasks above sales or purchasing limits.

Skoookum for Microsoft Excel: Skoookum streamlines communication between sales management and team members, providing a focused environment for organizing information and notifying one another about key metrics. It’s ideal for sales teams on Office 365 who currently copy and paste Excel data into PowerPoint, Teams, or Outlook.

Go further with workshops, proofs of concept, and implementations

2-Month Implementation: Endpoint Update for Windows: JBS’ support service helps Microsoft 365 users complete feature updates and Windows 11 upgrades in as little as two months, reducing the need for IT department intervention. This offer is available only in Japanese.

Beyondsoft Data Governance and Protection with Microsoft Purview Deployment: Beyondsoft will deploy Microsoft 365 and Microsoft Purview to address security challenges regarding data protection, data compliance, data governance, and risk management. Beyondsoft also offers onboarding and fine-tuning services for Microsoft Purview.

CIO on Demand: 1-Day Consulting Service: IT Partner offers professional guidance to help businesses utilize the full spectrum of Microsoft 365 services for enhanced collaboration and risk assessment. IT Partner can streamline Microsoft 365 adoption, optimize audits, and align IT strategy with Microsoft 365.

Copilot for Microsoft 365: 2-Day Workshop (Canada): Copilot for Microsoft 365 is an AI-powered assistant that boosts productivity by generating relevant content. CDW’s workshop helps businesses plan their Copilot journey, assess their readiness, and prioritize scenarios. The package includes a high-impact scenario summary, a baseline readiness assessment rating, and a prioritized scenario road map.

Custom Business Apps and Process Automation Using Office 365: Business process automation (BPA) technology, including Microsoft 365, streamlines workflows, enhances work quality, and cuts costs. IT Partner will enable your organization to elevate business through custom apps and process automation using Office 365 integrations.

Dynamics 365 Customer Insights: 1-Day Workshop: In this workshop from Strategic IT, you’ll learn how to use Microsoft Dynamics 365 Marketing to reach your customers across all channels. Together, you will identify challenges and develop a possible marketing campaign and customer journey. This offer is available only in German.

Exchange Online – PST file Migration: 3-Day Consulting Service: This service from IT Partner offers two ways to import .pst files to migrate email, tasks, calendars, and contacts to Microsoft 365. Success criteria include seamless access to Exchange Online, accurate content upload, and operational Exchange Online protection.

Fortis Activerecovery Incident Readiness Workshop: Sentinel’s workshop will assess your organization’s Microsoft 365 environment and its ability to handle a cyber attack. Eight critical areas will be examined, including data management, endpoint management, and incident response. Maturity ratings and practical recommendations will be delivered.

Fortis IR ActiveRecovery: Sentinel’s incident response consultants offer remote and on-site consultations to restore normal service to Microsoft 365 environments. They provide tooling and full-scope forensics analysis, addressing ransomware, phishing, DDoS attacks, and insider threats whenever you need help, 365 days a year.

Fortis IR Readiness Assessment: Sentinel’s security experts perform an incident response assessment to identify weaknesses in your IT environment. The assessment covers various areas within Microsoft 365, including infrastructure systems, backup, and authentication platforms. This helps optimize your technology for readiness and resilience.

Fortis IR Tabletop Exercises: Sentinel offers tabletop exercises to test your organization’s response to cyber attacks. Hypothetical scenarios will be followed up with an after-action report containing strategic recommendations. Exercises will include threat identification, incident response plan utilization, and breach disclosure.

Get Copilot for Microsoft 365 Ready: CloudWay AS will help you build your business case for Copilot for Microsoft 365. This proof of concept will identify pain points in your organization, set up a communication plan, and include coaching sessions. You’ll use Microsoft 365 and Viva Insights reports and surveys in building the business case.

Google Workspace to Microsoft 365 Migration: Dev Information Technology Limited will migrate your Google Workspace mail and documents to Microsoft Office 365, including contacts and calendars. Configuration and support services will be provided. Pricing and timeline will vary based on project scope and user count.

Hybrid Meetings and Rooms Workshop: In this workshop, which can be held virtually or on-site, Covenant Technology Partners will help customers define business priorities and scenarios for hybrid meetings and for deploying and adopting Microsoft Teams Rooms.

Identity Workshop: Sentinel’s workshop will enable your company to improve security and user experience through passwordless login, access management, and multi-factor authentication. Sentinel will address digital identity topics such as privacy engineering, rule-based access control, and self-service password reset, all of which aid in creating a better Microsoft 365 environment.

Implementation of Globalization Studio (Chile): AW Latam will implement localization configurations in Microsoft Dynamics 365 Finance so your company can comply with the regulatory and fiscal requirements of Chile. This encompasses tax determination and calculation, electronic invoicing, tax reporting, bank payments, and business documents. This offer is available in Spanish.

Implementation of Globalization Studio (Costa Rica): AW Latam will implement localization configurations in Microsoft Dynamics 365 Finance so your company can comply with the regulatory and fiscal requirements of Costa Rica. This encompasses tax determination and calculation, electronic invoicing, tax reporting, bank payments, and business documents. This offer is available in Spanish.

Implementation of Globalization Studio (Nicaragua): AW Latam will implement localization configurations in Microsoft Dynamics 365 Finance so your company can comply with the regulatory and fiscal requirements of Nicaragua. This encompasses tax determination and calculation, electronic invoicing, tax reporting, bank payments, and business documents. This offer is available in Spanish.

Implementation of Globalization Studio (Panama): AW Latam will implement localization configurations in Microsoft Dynamics 365 Finance so your company can comply with the regulatory and fiscal requirements of Panama. This encompasses tax determination and calculation, electronic invoicing, tax reporting, bank payments, and business documents. This offer is available in Spanish.

Introduction to Copilot for Microsoft 365 – Canada: Connect with a solution architect at CDW for a conversation about Copilot for Microsoft 365. Get your questions answered, hear how Copilot for Microsoft 365 compares to other AI offerings, and leave with a big-picture view of use cases for your organization.

Microsoft 365 Consultancy: Get expert guidance from Pace Infotech for deploying, securing, and optimizing Microsoft 365 to enhance productivity, collaboration, and IT efficiency. Transform your workplace into a dynamic, secure, and collaborative environment that ensures success.

Microsoft 365 Copilot: Adoption and Change Management: Noventiq offers an adoption program to help organizations fully utilize Microsoft 365 Copilot. Noventiq’s framework includes assessments, strategic readiness, immersive training, and optimization. Noventiq prioritizes people and uses the Prosci ADKAR model for change management to elevate employee adoption.

Microsoft 365 Security Workshop: Techgyan is hosting security workshops for organizations with fewer than 300 email users. Workshop attendees will learn about the security and compliance features in Microsoft 365 Business Basic, Standard, and Premium to protect data and devices, comply with regulations, and manage risks.

Microsoft Exchange Server to Microsoft 365 Migration: Dev Information Technology Limited offers expert migration services for businesses of all sizes. Its well-structured approach minimizes disruptions, addresses risks, and ensures a smooth transition from your on-premises Exchange Server environment to Microsoft 365.

Microsoft Fabric Modernization: 4-Week Proof of Concept: Try out Microsoft Fabric in this proof of concept from UB Technology Innovations. Microsoft Fabric is an all-in-one analytics solution that streamlines data management and AI processes, empowering end users to access and analyze data without relying heavily on IT assistance.

Microsoft Teams Phone Pilot Study: In this engagement, Makronet will deliver a proof of concept of Microsoft Teams Phone. Microsoft Teams Phone is a cloud-based phone system that seamlessly integrates with Microsoft Teams. It allows users to make and receive calls from any device using their existing number, and it offers features such as voice mail and call forwarding. This offer is available only in Turkish.

Migration to Microsoft Fabric Real-Time Analytics: Mandelbulb Technologies will migrate your company’s Azure data workloads to Microsoft Fabric Real-Time Analytics. A comprehensive assessment will precede the migration, and training and support will be included.

Migrating from Google to Microsoft 365: Lattine Group will transfer email, contacts, and calendars from G Suite to Microsoft 365. This will include planning, evaluation, testing, and user training and support. The project does not cover functional upgrades, troubleshooting, DevOps, or application development. This offer is available only in Portuguese.

Modern Workplace – Cloud Endpoint Management: In this service, Arxus offers device and application management to improve employee productivity and reduce IT costs. Arxus will automate device setup and configuration, provide insights into device and application inventory, and offer a centralized app store for user self-service. The service specializes in enhancing Microsoft Intune for new and existing setups.

OneDrive for Business – Document Migration: This service from IT Partner involves migrating documents from Dropbox, Google Drive, and Box to OneDrive for Business, SharePoint Online, or Microsoft Teams. IT Partner’s migration process will include creating and configuring OneDrive accounts for users, preparing the source system for data transfer, and providing informational messages for users. Training and mailbox migration are available for an extra fee.

Pilot for Microsoft 365 Copilot Graph Connector: In this pilot, Covenant Technology Partners will use Microsoft Graph connectors to bring data from non-Microsoft 365 systems into the Microsoft Copilot Semantic Index for processing. The pilot includes a review of available connectors, evaluation of client readiness, installation and configuration of a selected connector, and assessment of prompt responses from the data source.

Proof of Concept: Move into Viva Engage: CloudWay AS will help your organization plan and reorganize your internal communication ecosystem before moving to the Microsoft Viva Engage employee experience platform. Knowledge transfer and coaching sessions will be included, along with building a business case for Viva Engage.

Power Platform: 4-Day Proof of Concept: See Microsoft Power Apps in action and learn the skills you need to build great business apps without writing code. Compusoft’s workshops and instruction will enable you to easily build new apps and extend or customize the apps you already use.

Securing and Hardening Your Office 365 Environment: IT Partner’s review service will focus on aligning with Microsoft recommendations and enhancing security. The service includes Microsoft Secure Score improvement, malware protection, and multi-factor authentication. Additional services, like customer team training, are available.

SharePoint Online – Migration from File Server As-Is: IT Partner will migrate your files from your local server to SharePoint Online, keeping the folder structure intact and synchronizing all files that changed since the start of the migration. Harness the power of Microsoft 365 to elevate your organizational efficiency and maximize the benefits of cloud collaboration.

SharePoint Online – Tenant-to-Tenant Migration: IT Partner will migrate content with design, structure, and permissions from one SharePoint Online tenant to another, utilizing Microsoft 365 services to ensure a smooth transition. Prerequisites include a Microsoft 365 tenant with SharePoint Online licenses and administrator access.

Sii No-Code Solutions with Microsoft Power Platform: Using the no-code tools of the Microsoft Power Platform, Sii will create an environment for your business. Launch a new era of change in your company with full employee involvement. Sii customizes engagements to the unique requirements of each business and project.

UMB_Heatmap 365: UMB_Heatmap 365 helps Microsoft 365 administrators stay up to date with around 900 changes announced every year. The news service from UMB AG categorizes announcements by urgency and provides recommendations that can save admins up to 40 hours per month. Get access to monthly webinars with Microsoft 365 experts, on-demand access to missed webinars, and more.

UNITE: ERP Quick Start: Infinity Group offers the UNITE: ERP Quick Start program to help businesses quickly and easily implement Microsoft Dynamics 365 Business Central. The program includes data migration, comprehensive training, user acceptance testing, and expert support. The managed service provides predictable costs for technical support, eliminating the need for an in-house IT team.

UNITE: ERP Training: UNITE: ERP Training by Infinity Group offers training packages for Microsoft Dynamics 365 Business Central to increase employee efficiency, productivity, standardization, and compliance. Empower your employees to automate routine tasks and access real-time business data.

Contact our partners

acadon Configurator for Timber365

Access App Modernization: 20 Hours of Analysis and Design

Access App Modernization: Feasibility Evaluation

Acuvate’s HR NExT: A Generative AI-Powered Human Resources Chatbot

AOAI Laboratory’s Saier Education

Assessment: Copilot for Microsoft 365 Governance (Canada)

Autodesk Vault & Inventor Business Central Integration

Blog Assistant: SEO AI Writer, Translator, and Paraphraser

Briisk Instant Transaction Platform

Capital One Trade Credit Integration

Comprehensive Asset Management

Customized White Label Solutions

Cyber Security Assessment (ELEVATE Solutions)

Cybersecurity Assessment Engagement from adaQuest

Doctor Engagement (Digital Medical Rep)

Endpoint Security Assessment of Microsoft 365 Defender Landscape