Category: Microsoft

Lesson Learned #478: Identifying TLS Version Using Azure SQL Auditing

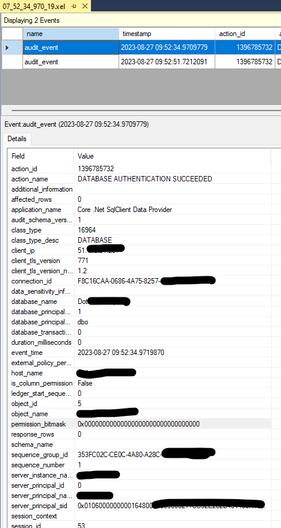

Today, we worked on a service request where our customer needed to know the TLS version that their applications were using.

Thanks to Azure SQL Auditing, we have the ability to access highly valuable information for our day-to-day work. We can identify details such as the application name, event type, IP address, TLS version, connection ID, user, and more. This article specifically focuses on the client_tls_version_n field, exploring how Azure SQL Auditing not only aids in monitoring database connection security but also plays a crucial role in maintaining regulatory compliance and identifying potential vulnerabilities associated with outdated TLS versions.

Understanding TLS and Its Role in Database Security

Transport Layer Security (TLS) is the cornerstone of secure data transmission over the internet. It safeguards data integrity and confidentiality during communication between clients and servers. In the context of Azure SQL Database, TLS plays a vital role in encrypting and securing connections, thereby thwarting potential eavesdropping and data tampering attempts.

The client_tls_version_n Field in Azure SQL Auditing

This field records the version of the TLS protocol used by the client when establishing a connection to the Azure SQL Database. Monitoring this field is crucial for several reasons:

Ensuring Compliance with Security Standards: Many industries mandate the use of specific TLS versions to meet security guidelines. The client_tls_version_n field helps verify compliance with these standards.

Identifying Outdated TLS Versions: Older TLS versions, like TLS 1.0 and 1.1, are considered less secure. This field helps in identifying connections that use these outdated protocols, signaling a need for upgrades to maintain robust security.

Troubleshooting Connection Issues: Sometimes, connection failures can result from TLS version mismatches. The client_tls_version_n field aids in diagnosing such issues.

Microsoft Tech Community – Latest Blogs –Read More

Querying Watchlists

Special thanks to @Ofer_Shezaf for showing me the new function call.

Watchlists

Watchlists are a feature of Microsoft Sentinel that provides great flexibility and usability. They allow for user-defined tables that can be used in KQL queries to provide additional data. By uploading data using CSV files, users control the data in the watchlists, and that data can be modified and new rows added as needed.

Watchlists provide a “searchkey” field that is unique across the watchlist and can be used to reference an individual row as discussed below.

Querying Watchlists

The Microsoft Sentinel portal UI provides a way to query a watchlist in Logs. Select the watchlist and then click the “View in Logs” button. This takes you to Logs, or to Advanced Hunting if you are using the Unified SOC Platform, and runs the following command:

_GetWatchlist('<Watchlist Name>')

This loads the entire watchlist and returns all the rows. While this works fine for most applications, a very large watchlist could cause a timeout, depending on the rest of the KQL query.

For those cases, use the “_ASIM_GetWatchlistRaw” function to return specific rows. It takes two parameters: the watchlist name and a dynamic list of values for the “searchkey” column that determines which rows to return.

The example below demonstrates how to use this function:

let Users = dynamic(["User1@contoso.com", "user1@contoso.com"]);
_ASIM_GetWatchlistRaw('VIP', Users)
| evaluate bag_unpack(WatchlistItem)

Note the call to “evaluate bag_unpack(WatchlistItem)” at the end. This is needed to expand the raw information stored in the watchlist rows into the same format you get using “_GetWatchlist”.

Lesson Learned #477: NEXT VALUE FOR function cannot be used if ROWCOUNT option has been set

In SQL Server, certain combinations of commands and functions can lead to unexpected conflicts and errors. A notable example is the conflict between the NEXT VALUE FOR function and the ROWCOUNT setting. This article explains why the error occurs, what it implies, and how to capture and analyze it using Extended Events in Azure SQL Database. For example, we got the following error message: Msg 11739, Level 15, State 1, Line 11: NEXT VALUE FOR function cannot be used if ROWCOUNT option has been set, or the query contains TOP or OFFSET. For details, see NEXT VALUE FOR (Transact-SQL) on Microsoft Learn.

Section 1: Understanding the Error

What is NEXT VALUE FOR? The NEXT VALUE FOR function in SQL Server is a crucial tool for generating sequential values from a defined sequence. It’s commonly used for auto-generating unique identifiers, like primary keys.

Conflict with ROWCOUNT: The error arises when NEXT VALUE FOR is used in conjunction with the ROWCOUNT option. ROWCOUNT, when set, limits the number of rows affected by a query. However, NEXT VALUE FOR expects to operate without such limitations, leading to a conflict. This issue can also manifest when using TOP or OFFSET clauses, which similarly restrict the result set.

Error Scenario: Imagine a scenario where a developer attempts to retrieve the next value from a sequence while ROWCOUNT is set to a specific limit. This operation triggers an error, as SQL Server cannot reconcile the sequence’s need for unbounded operation with the imposed row count restriction.

Section 2: Capturing the Error with Extended Events

Introduction to Extended Events: Extended Events are a lightweight, highly configurable system for monitoring and troubleshooting in SQL Server and Azure SQL Database.

Setting up an Extended Event Session: To capture this error, the session focuses on the error_reported event and targets the ring buffer for data collection.

Querying the Ring Buffer: Once the session is running, a query against the ring buffer retrieves the error number, the message, and the SQL text of each captured event. This helps in understanding the occurrence and frequency of the error in a live environment.

You can reproduce the issue with the following script (MyTable stands for any existing table):

CREATE SEQUENCE TestSequence
    AS INT
    START WITH 1
    INCREMENT BY 1;
SET ROWCOUNT 1;
SELECT NEXT VALUE FOR TestSequence, * FROM MyTable;
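
One way to avoid the error is to clear the row limit before calling NEXT VALUE FOR. The following is a minimal sketch under stated assumptions, not the only possible fix: the objects dbo.DemoSequence and dbo.DemoRows are hypothetical names created just for the demonstration, and the batches are separated with GO so that the SELECT is compiled only after ROWCOUNT has been reset.

```sql
-- Hypothetical demo objects; the names are illustrative only.
CREATE SEQUENCE dbo.DemoSequence AS INT START WITH 1 INCREMENT BY 1;
CREATE TABLE dbo.DemoRows (Id INT NOT NULL, Label NVARCHAR(20) NOT NULL);
INSERT INTO dbo.DemoRows (Id, Label) VALUES (1, N'a'), (2, N'b'), (3, N'c');
GO

-- A row limit is active in the session (for example, left over from earlier code).
SET ROWCOUNT 1;
GO

-- Running the SELECT below at this point raises Msg 11739:
-- SELECT NEXT VALUE FOR dbo.DemoSequence AS SeqValue, Id, Label FROM dbo.DemoRows;

-- Reset the limit; 0 means "no limit".
SET ROWCOUNT 0;
GO

-- With no ROWCOUNT limit (and no TOP or OFFSET), the sequence can be used.
SELECT NEXT VALUE FOR dbo.DemoSequence AS SeqValue, Id, Label
FROM dbo.DemoRows;
GO

-- Clean up the demo objects.
DROP TABLE dbo.DemoRows;
DROP SEQUENCE dbo.DemoSequence;
GO
```

If the application depends on a row limit, that limit has to be applied in a separate statement from the one that calls NEXT VALUE FOR.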

To capture this information, we can create an Extended Events session:

CREATE EVENT SESSION [CaptureError] ON DATABASE
ADD EVENT sqlserver.error_reported(
    ACTION(sqlserver.sql_text)
)
ADD TARGET package0.ring_buffer
WITH (
    MAX_MEMORY = 4096 KB,
    EVENT_RETENTION_MODE = ALLOW_SINGLE_EVENT_LOSS,
    MAX_DISPATCH_LATENCY = 30 SECONDS,
    MAX_EVENT_SIZE = 0 KB,
    MEMORY_PARTITION_MODE = NONE,
    TRACK_CAUSALITY = OFF,
    STARTUP_STATE = OFF
)
GO

ALTER EVENT SESSION [CaptureError] ON DATABASE STATE = START;

After reproducing the error, read the captured events from the ring buffer:

SELECT
    event_data.value('(@timestamp)[1]', 'DATETIME2') AS TimeStamp,
    event_data.value('(data[@name="error_number"]/value)[1]', 'INT') AS ErrorNumber,
    event_data.value('(data[@name="message"]/value)[1]', 'VARCHAR(MAX)') AS ErrorMessage,
    event_data.value('(action[@name="sql_text"]/value)[1]', 'VARCHAR(MAX)') AS SqlText
FROM
(
    SELECT CAST(target_data AS XML) AS target_data
    FROM sys.dm_xe_database_session_targets AS t
    INNER JOIN sys.dm_xe_database_sessions AS s ON t.event_session_address = s.address
    WHERE s.name = 'CaptureError'
      AND t.target_name = 'ring_buffer'
) AS tab
CROSS APPLY target_data.nodes('RingBufferTarget/event') AS q(event_data)
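
The session above records every error reported in the database. As a narrower sketch, the variant below adds a predicate on error_number so that only error 11739 should be kept, and then stops and drops the session once the investigation is over. The session name CaptureError11739 is hypothetical, and the option values are illustrative rather than recommendations.

```sql
-- Hypothetical session name; only events with error_number 11739 pass the predicate.
CREATE EVENT SESSION [CaptureError11739] ON DATABASE
ADD EVENT sqlserver.error_reported(
    ACTION(sqlserver.sql_text)
    WHERE ([error_number] = 11739)
)
ADD TARGET package0.ring_buffer
WITH (MAX_DISPATCH_LATENCY = 30 SECONDS, STARTUP_STATE = OFF);
GO

-- Start collecting.
ALTER EVENT SESSION [CaptureError11739] ON DATABASE STATE = START;
GO

-- ... reproduce the error and query the ring buffer here ...

-- Stop the session and remove its definition when it is no longer needed.
ALTER EVENT SESSION [CaptureError11739] ON DATABASE STATE = STOP;
GO
DROP EVENT SESSION [CaptureError11739] ON DATABASE;
GO
```

Filtering at the event level keeps the ring buffer from filling with unrelated errors, and dropping the session afterwards avoids leaving a diagnostic session behind in the database.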

Enjoy!

Lesson Learned #476: Identifying Sleeping Sessions with Open Transactions in Azure SQL Database

In SQL Server environments, managing session states and transactions is key to ensuring optimal database performance. A particular challenge arises with sessions in a 'sleeping' state that hold open transactions for extended periods. These sessions, while seemingly inactive, can hold locks on resources, which can block other sessions and degrade performance.

Our focus is on a SQL query designed to pinpoint such sessions. The query utilizes SQL Server’s dynamic management views: sys.dm_exec_sessions, sys.dm_exec_requests, and sys.dm_tran_session_transactions. These views provide real-time data about active sessions, their current requests, and associated transaction details.

The heart of the query lies in its ability to filter sessions based on specific criteria: sessions must be in a 'sleeping' state, have an open transaction, and be inactive for over 5 minutes. This precise filtering allows database administrators to quickly identify and address sessions that might contribute to resource locking and overall performance issues.

SELECT
    ses.session_id,
    ses.login_name,
    req.start_time,
    req.total_elapsed_time,
    req.command,
    req.status,
    trans.transaction_id,
    ses.status,
    ses.total_elapsed_time,
    ses.last_request_start_time,
    ses.last_request_end_time,
    ses.login_time,
    DATEDIFF(minute, ses.last_request_end_time, GETDATE()) AS InactiveTime
FROM sys.dm_exec_sessions ses
LEFT JOIN sys.dm_exec_requests req ON req.session_id = ses.session_id
LEFT JOIN sys.dm_tran_session_transactions trans ON ses.session_id = trans.session_id
WHERE trans.transaction_id IS NOT NULL
  AND DATEDIFF(minute, ses.last_request_end_time, GETDATE()) > 5
  AND ses.status = 'sleeping'
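
Once a session has been identified, a possible follow-up is to check which locks it holds and what it last ran before deciding how to address it. The following is a minimal sketch under stated assumptions: the session id 53 is hypothetical and stands for a value returned by the query above, and the caller is assumed to have permission to read the dynamic management views.

```sql
-- Hypothetical session id taken from the result of the previous query.
DECLARE @session_id INT = 53;

-- Locks currently held or requested by that session.
SELECT
    request_session_id,
    resource_type,
    resource_database_id,
    resource_associated_entity_id,
    request_mode,
    request_status
FROM sys.dm_tran_locks
WHERE request_session_id = @session_id;

-- Last statement submitted on the session's connection, for context.
SELECT
    c.session_id,
    t.text AS last_sql_text
FROM sys.dm_exec_connections AS c
CROSS APPLY sys.dm_exec_sql_text(c.most_recent_sql_handle) AS t
WHERE c.session_id = @session_id;

-- KILL takes a literal session id, not a variable.
-- It ends the session and rolls back its open transaction,
-- so uncomment it only after reviewing the output above.
-- KILL 53;
```

Reviewing the locks and the last statement first helps confirm that the session is really the one causing contention, and often points to the application code that left the transaction open.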

Enjoy!

Expanding availability of Copilot for Microsoft 365

Today, we announced that we are expanding Copilot for Microsoft 365 to a much broader set of organizations, available across more channels, and without a minimum seat required. We are also extending our data residency commitments for Copilot for Microsoft 365 and bringing Microsoft Copilot Graph-grounded chat to Copilot in Windows. Join the upcoming AMA and Tech Accelerator event and engage with experts from Microsoft to learn more about Copilot for Microsoft 365.

Expanding availability of Copilot for Microsoft 365

Starting today, we have removed the 300-seat minimum purchase for Copilot for Microsoft 365 commercial plans. We have also extended support so that Office 365 E3 and E5 customers are eligible to purchase Copilot, and we’re extending Semantic Index for Copilot to Office 365 users with a paid Copilot license. Finally, we have announced that Copilot for Microsoft 365 is generally available for businesses of all sizes, supported on Microsoft 365 Business Standard or Business Premium. This follows a successful early access program focused specifically on small and medium businesses, as well as the previously announced availability for staff and faculty of education institutions with Microsoft 365 A3 or A5 licenses. Commercial customers, including small and medium-sized businesses, can now purchase Copilot for Microsoft 365 through our network of Cloud Solution Provider (CSP) partners, and you can learn more about them here.

We still recommend that customers start by giving Copilot to a critical mass of their information workers. We learned during the early access program that this creates a flywheel of interest and adoption, accelerating time to value and an organization’s ability to measure impact in a meaningful way. Copilot for Microsoft 365 licenses will be capped by the total number of eligible base licenses that a customer has. That is, customers must have a product license of one of the prerequisite base SKUs for each seat of Copilot for Microsoft 365 they purchase. You can review requirements for Copilot here.

Updating data residency commitments

We’ve heard feedback from our Enterprise customers that they need assurances that Copilot data is managed appropriately across geographically diverse teams. To support this need, Copilot for Microsoft 365 upholds residency commitments as outlined in the Microsoft Product Terms and Data Protection Addendum.

We’re pleased to share that later this year Copilot for Microsoft 365 will be added as a covered workload under the data residency commitments in Microsoft Product Terms and the Microsoft Advanced Data Residency (ADR) and Multi-Geo Capabilities add-ons. For additional information on Copilot for Microsoft 365 privacy and data storage please visit Data, Privacy, and Security for Microsoft Copilot for Microsoft 365. To learn more about our commitments to data residency, see Microsoft 365 Data Residency Overview and Definitions.

Microsoft Copilot capabilities coming to Windows desktop

Organizations will soon be able to experience Copilot for Microsoft 365 integrated in Windows desktop, bringing Graph-grounded chat capabilities to Copilot in Windows for users with a Copilot for Microsoft 365 license. This update will be available to organizations with Copilot for Microsoft 365 and Copilot in Windows enabled, beginning the week of February 4th. This adds a new, simple way for users to access Copilot, in addition to the current surfaces in Teams, Edge, and copilot.microsoft.com. For information on managing Copilot in Windows, review this article.

Start preparing your organization for Copilot for Microsoft 365

There are steps you can take today to get your tenant prepared for Copilot:

Prepare your data and confirm that all relevant data security, privacy, and compliance controls are in place. Copilot inherits your existing permissions and policies, so ensuring that these are in place helps ensure a seamless deployment. Conduct access reviews for SharePoint sites, documents, and tenant data, use sensitivity labels to protect important data, and validate policies for data loss prevention, retention, and compliance.

Review the prerequisites for Copilot in the overview and requirements for Microsoft 365 Copilot to position your tenant to seamlessly deploy Copilot. This setup guide also provides a simple walkthrough of the process.

Learn more about Copilot for Microsoft 365: how it works, its benefits to your organization, and how your data is handled and protected.

Familiarize yourself with the admin controls available to manage Copilot in the Microsoft 365 admin center Copilot page.

Develop your adoption strategy by leveraging the resources available on our adoption site, including this adoption kit and user onboarding toolkit.

Check readiness and measure adoption and impact through the Microsoft Copilot Dashboard (in Preview) in Viva Insights or Power BI, which helps organizations maximize the value of Copilot for Microsoft 365. It provides actionable insights to help your organization get ready to deploy AI, drive adoption based on how AI is transforming workplace behavior, and measure the impact of Copilot.

To learn more about Copilot, you can review our documentation hub, requirements, setup, and information about privacy, security, and compliance. You can also watch our sessions at this past Ignite on getting ready for Copilot.

For small and medium business customers, join our discussion forum to collaborate with other Copilot for Microsoft 365 users, take part in community calls with open Q&A, hear directly from Microsoft Copilot engineers, and access exclusive resources. Also, check out the resources available on the small and medium business Copilot adoption site.

For a comprehensive introduction and deep dive into Copilot for Microsoft 365, join us during our Copilot for Microsoft 365 Tech Accelerator, February 28 and 29, right here in the Tech Community. Listen in as experts from Microsoft delve into preparing for Copilot with recommendations and best practices, and share strategies on driving adoption and on measuring and maximizing value for your organization. There will also be plenty of opportunities to ask questions and engage with our experts.

Finally, join our next Ask Me Anything (AMA) tomorrow at 9am PT, here in the Copilot for Microsoft 365 Tech Community. Feel free to post your questions onto the event page ahead of time, and our panel of experts will answer them during the event.

Unlock the Copilot opportunity and grow your CSP business with Microsoft 365 Lighthouse

Today we announced that Copilot for Microsoft 365 is now available through all sales channels, including the Cloud Solution Provider (CSP) program. We also removed the minimum purchase requirement and expanded prerequisite licensing to be more inclusive. This represents a unique and exciting opportunity for partners to help even more customers begin their AI journey.

To empower CSPs to take advantage of Copilot and leverage the upcoming renewal period to improve retention and accelerate growth, we’re excited to announce new capabilities now available in Microsoft 365 Lighthouse, including:

Customer targeting with propensity to buy Copilot for Microsoft 365

90-day view of upcoming renewals with a tailored set of recommendations

Seamless onboarding for all CSP direct and indirect resellers

Role-based access control (RBAC) for account managers, eliminating the need for Partner Center roles to access Sales Advisor insights

Copilot deployment management with improved security configurations

Accelerate how you go to market with Copilot

Copilot has generated a lot of customer enthusiasm, offering a great opportunity for CSPs who have a clear go-to-market strategy. Since Copilot is new in the CSP channel, the first step is to build a plan to inform your customers and get them excited about Copilot. Microsoft 365 Lighthouse can help simplify and accelerate your time to market.

Sales Advisor can quickly identify the best customers to engage, helping you identify eligible Copilot customers and, more importantly, customers with a high likelihood of benefiting from enhanced productivity. Sales Advisor also provides account managers with best practices and tailored marketing content to help you easily kickstart your customer conversations.

Screenshot of a recommendation to add Copilot for Microsoft 365.

For customers who aren’t initially eligible for Copilot, Lighthouse can help identify those customers who are ready to upgrade to one of the prerequisite offerings, such as Microsoft 365 Business Premium or Microsoft 365 E3/E5. These insights let you take the proactive steps needed to get your customers AI-ready and ensure they’re prepared to take advantage of Copilot in the future.

Getting customers licensed for Copilot is important, but equally important is supporting them in the setup process to get them ready to use Copilot. Make sure users are on the current update channel for Microsoft apps, and configure conditional access to ensure users and devices are secure. Multi-tenant management capabilities in Lighthouse can help ensure a smooth and successful rollout of Copilot. Lighthouse includes the following capabilities:

Device management controls to ensure devices are protected and secure.

Conditional access configuration to safeguard sensitive business data by regulating employee access to internal content.

The ability to establish robust security policies, so environments are prepared for a better Copilot experience.

Optimize your renewal conversations with simplified renewal insights

As a CSP, you can help your customers make the most of their licensing, switch to more appropriate offers and services, and define their expectations and objectives for the year ahead. Renewal moments are essential for establishing trust and loyalty with customers and increasing your revenue and profitability. However, managing renewals can be difficult and time consuming, especially if you have many customers with different needs.

With the new upcoming renewals experience, you get access to insights and recommendations to help you prepare and conduct effective renewal discussions with your customers.

On the Upcoming renewals page, you can view subscription renewals happening in the next 90 days. By using the filter and sort capabilities, you can prioritize customer outreach to focus on customers who have the most valuable or urgent renewal opportunities. Connecting upcoming renewals with the opportunities available in Sales Advisor can help you decide what to present to your customer during the renewal discussion. Opportunities can include recommendations to upgrade customers so they’re more productive and secure, as well as suggestions to help customers realize more value from the subscriptions they already have.

Lastly, we’re making it easy to seamlessly integrate Copilot opportunities into your renewal conversations. Leveraging the Sales Advisor recommendations, you can proactively align your renewal conversation strategy by tailoring your pitch to the customer’s needs and goals. You can also use the links and resources provided in the recommendations to learn more about the offers and services that you can propose to the customer. This holistic approach ensures that your renewal discussions address your customers’ current needs while also highlighting the potential of Copilot.

Screenshot of the Upcoming renewals page.

Get easier access to insights and recommendations

The more accessible the insights and recommendations in Lighthouse are, the more valuable they become. To allow for more users to access the insights and data within Lighthouse, we’ve streamlined the Lighthouse onboarding process. Lighthouse is now enabled by default, which means no action or extra steps are needed to access the full capabilities. CSPs can sign in to lighthouse.microsoft.com and get started immediately.

We’ve also made it easier to control who can access and use Lighthouse in your partner tenant with custom, built-in RBAC roles. The first role we’ve made available is Lighthouse Account Manager, which removes the need for a user in the partner tenant to have Partner Center permissions to access Sales Advisor insights. CSPs will soon have the flexibility to assign additional Lighthouse RBAC roles to give users extra functionality within the portal. These new roles significantly increase the flexibility you have in determining how you and your teams access and manage customer data in Lighthouse.

Get started today

Sign in at lighthouse.microsoft.com.

Continue the conversation by joining us in the Microsoft 365 community! Want to share best practices or join community events? Become a member by “Joining” the Microsoft 365 community. For tips & tricks or to stay up to date on the latest news and announcements directly from the product teams, make sure to Follow or Subscribe to the Microsoft 365 Blog space!


Comparative study of Azure Open AI GPT model and LLAMA 2

Introduction to LLMs

Large Language Models (LLMs) have revolutionized natural language processing. They are trained extensively on massive datasets, and the models themselves contain tens of millions to billions of weights. Using self-supervised and semi-supervised learning, models such as GPT-3, GPT-4, and LLAMA predict the next token or word in the input text, which lets them capture contextual relationships. Notably, recent advancements feature a uni-directional (autoregressive) Transformer architecture, as seen in GPT-3, which surpasses 100 billion parameters and significantly enhances language understanding capabilities.

GPT

OpenAI’s GPT (generative pre-trained transformer) models have been trained to understand natural language and code. GPTs provide text outputs in response to their inputs. The inputs to GPTs are also referred to as “prompts”. Designing a prompt is essentially how you “program” a GPT model, usually by providing instructions or some examples of how to successfully complete a task.
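As a minimal sketch of what "programming" a model through its prompt can look like, the snippet below assembles a few-shot prompt from an instruction and a couple of worked examples. The chat-style `role`/`content` message layout, the helper name `build_few_shot_messages`, and the sentiment task are assumptions chosen for illustration; they are not taken from this post.

```python
def build_few_shot_messages(instruction, examples, query):
    """Assemble a chat-style prompt: an instruction, worked examples, then the new input."""
    messages = [{"role": "system", "content": instruction}]
    for user_text, assistant_text in examples:
        # Each example is a (user input, desired output) pair shown to the model.
        messages.append({"role": "user", "content": user_text})
        messages.append({"role": "assistant", "content": assistant_text})
    # The real question goes last so the model continues the demonstrated pattern.
    messages.append({"role": "user", "content": query})
    return messages


# Hypothetical task and examples, for illustration only.
messages = build_few_shot_messages(
    instruction="Classify the sentiment of each review as positive or negative.",
    examples=[
        ("The battery lasts all day.", "positive"),
        ("The screen cracked within a week.", "negative"),
    ],
    query="Setup was quick and painless.",
)
for message in messages:
    print(f"{message['role']}: {message['content']}")
```

Changing the instruction or swapping the examples changes the behavior you are asking for without touching the model itself, which is the sense in which a prompt acts as a program.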

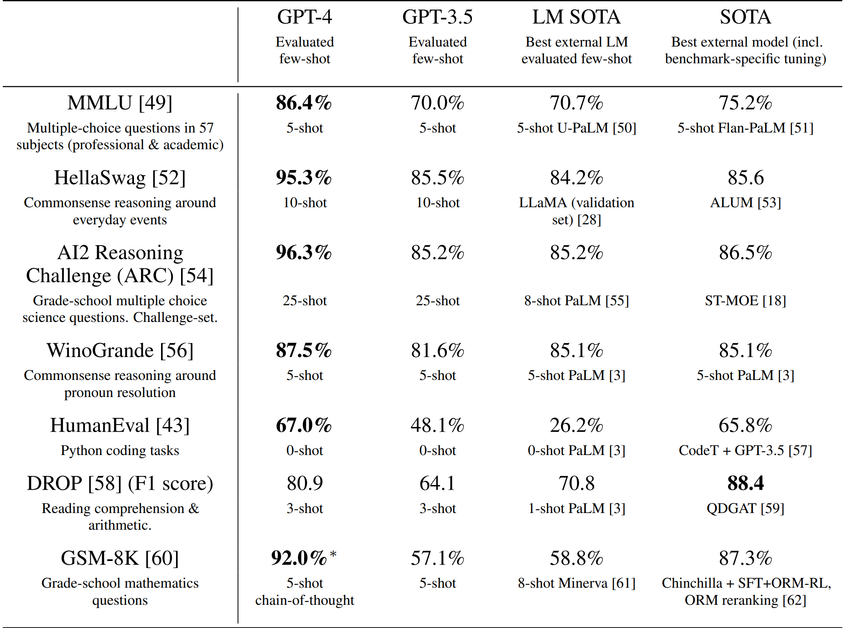

Model Evaluation

Pre-trained base GPT-4 model assessed using traditional language model benchmarks.

Contamination checks performed for test data to identify overlaps with the training set.

Few-shot prompting utilized for all benchmarks during the evaluation.

GPT-4 exhibits superior performance compared to existing language models and previous state-of-the-art systems.

Outperforms models that often employ benchmark-specific crafting or additional training protocols.

GPT-4’s performance evaluated alongside the best state-of-the-art models (SOTA) with benchmark-specific training.

GPT-4 outperforms existing models on all benchmarks, surpassing SOTA with benchmark-specific training on all datasets except DROP.

GPT-4 outperforms the English-language performance of GPT-3.5 and existing language models for the majority of languages, including low-resource languages such as Latvian, Welsh, and Swahili.

GPT-4 substantially improves over previous models in the ability to follow user intent. On a dataset of 5,214 prompts submitted to ChatGPT and the OpenAI API, the responses generated by GPT-4 were preferred over the responses generated by GPT-3.5 on 70.2% of prompts.

Azure OpenAI service

Azure OpenAI Service provides REST API access to OpenAI’s powerful language models including the GPT-4, GPT-35-Turbo, and Embeddings model series.

These models can be easily adapted to your specific task including but not limited to content generation, summarization, semantic search, and natural language to code translation.

Users can access the service through REST APIs, Python SDK, or our web-based interface in the Azure OpenAI Studio.

GPT-4

GPT-4 can solve difficult problems with greater accuracy than any of OpenAI’s previous models. Like GPT-3.5 Turbo, GPT-4 is optimized for chat and works well for traditional completions tasks. Use the Chat Completions API to use GPT-4.
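To make the Chat Completions call concrete, here is a hedged sketch that builds, but does not send, a REST request for an Azure OpenAI deployment using only the Python standard library. The resource name, deployment name, key placeholder, and `api_version` value are all assumptions for illustration; check the Azure OpenAI REST reference for the values that apply to your resource.

```python
import json
import urllib.request


def build_chat_request(resource, deployment, api_key, messages, api_version="2024-02-01"):
    """Build (but do not send) a Chat Completions request for an Azure OpenAI deployment."""
    url = (
        f"https://{resource}.openai.azure.com/openai/deployments/"
        f"{deployment}/chat/completions?api-version={api_version}"
    )
    body = json.dumps({"messages": messages}).encode("utf-8")
    headers = {"Content-Type": "application/json", "api-key": api_key}
    return urllib.request.Request(url, data=body, headers=headers, method="POST")


request = build_chat_request(
    resource="my-resource",   # hypothetical Azure OpenAI resource name
    deployment="my-gpt4",     # hypothetical name of a GPT-4 deployment
    api_key="<your-key>",     # placeholder; load real keys from a secure store
    messages=[{"role": "user", "content": "Explain what a lakehouse is in one sentence."}],
)
print(request.get_method(), request.full_url)
# To actually call the service you would pass the request to urllib.request.urlopen.
```

Building the request separately from sending it keeps the example runnable without credentials and makes the URL and payload easy to inspect.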

GPT-3.5

GPT-3.5 models can understand and generate natural language or code. The most capable and cost effective model in the GPT-3.5 family is GPT-3.5 Turbo, which has been optimized for chat and works well for traditional completions tasks as well.

LLAMA 2

Llama 2 is a large language model (LLM) developed by Meta that can generate natural language text for various applications. It is pretrained on 2 trillion tokens of public data with a context length of 4096 and has variants with 7B, 13B and 70B parameters. In this blog post, we will show you how to use Llama 2 on Microsoft Azure, the platform for the most widely adopted frontier and open models.

Comparison to closed-source models

According to the statistics below, Llama 2 is weaker at coding but goes head-to-head with ChatGPT on other tasks.

Human Evaluation

Human evaluation results for Llama 2-Chat models compared to open- and closed-source models across ~4,000 helpfulness prompts with three raters per prompt. The largest Llama 2-Chat model is competitive with ChatGPT. Llama 2-Chat 70B model has a win rate of 36% and a tie rate of 31.5% relative to ChatGPT. Note: 4k prompt set does not include any coding- or reasoning-related prompts.
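The win and tie rates quoted above also imply a loss rate. The short calculation below works it out, under the assumption that win, tie, and loss are the only possible outcomes and therefore sum to 100%.

```python
win_rate = 36.0   # percent of prompts where Llama 2-Chat 70B was preferred
tie_rate = 31.5   # percent of prompts rated as a tie

# Assumption: the three outcomes are exhaustive, so the remainder is the loss rate.
loss_rate = 100.0 - win_rate - tie_rate
print(f"loss rate vs. ChatGPT: {loss_rate:.1f}%")

# Share of prompts where Llama 2-Chat 70B was rated at least as good as ChatGPT.
win_or_tie = win_rate + tie_rate
print(f"win or tie: {win_or_tie:.1f}%")
```

Under that assumption, the win rate and the implied loss rate are close to each other, which is consistent with describing the model as competitive rather than clearly ahead.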

Language distribution in pretraining data

Most data is in English, meaning that Llama 2 will perform best for English-language use cases. The large unknown category is partially made up of programming code data. The model is not trained on enough coding data and is therefore less capable of solving coding-related problems.

The model’s performance in languages other than English remains fragile, so it should be used with caution in those languages.

Performance with tool use

Evaluation on the math datasets

Llama 2-Chat is able to understand the tools’ applications and the API arguments just through the semantics, despite never having been trained to use tools.

Example:

Ultimately, the choice between Llama 2 and GPT-4 depends on the specific requirements and budget of the user. Larger models such as GPT-4 can potentially offer improved performance and capabilities, but the free accessibility of Llama 2 may make it an attractive option for those seeking a cost-effective solution for chatbot development.


Python Data Science Day 2024: Unleashing the Power of Python in Data Analysis

Image Created with Microsoft Image Designer

Hello, fellow data enthusiasts!

We’re excited to share that Python Data Science Day is set to take place on March 14th, 2024, coinciding with Pi Day (3.14). This event is a fantastic opportunity for Python developers, entrepreneurs, data scientists, students, and researchers to come together and explore modern solutions for data pipelines and complex queries.

What to Expect?

The Python Data Science Day will feature a variety of sessions and lightning talks from experts in the field. Whether you’re interested in high-level programming topics or diving deep into specific features, there’s something for everyone.

Sessions: These 25-minute presentations, either pre-recorded or live, will be delivered by up to two people and cover a range of programming stories, approaches, and solutions.

Lightning Talks: If you’re new to public speaking or have a concise idea to share, these 5 to 7-minute talks are perfect for you. They focus on a single idea and are designed to inspire further learning.

Call for Speaker Proposals

The call for speaker proposals is open until January 25th, 2024. If you have a cool tool, product, or skill to discuss, we encourage you to submit your proposal and join the list of amazing speakers. https://aka.ms/Python/DataScienceDay/CFP

More Ways to Engage

Microsoft Fabric Global AI Hack Together: Join us from February 15th to March 4th, 2024, for live streams and real-world problem-solving with an AI-powered analytics platform.

14 Days of Python Data Science Series: Leading up to the event, we’ll release articles and recipes for using Data Science on Microsoft tools.

Data Science Cloud Skills Challenge: Participate in self-paced learning until April 15th, 2024.

Special Guest Speakers

You could be among the special guest speakers, joining the ranks of Sarah Kaiser, PhD, and Soojin Choi. Don’t miss this chance to contribute to the Python Data Science community.

Join the Conversation

Connect with us on Discord to continue the discussion and share your experiences with like-minded individuals. https://aka.ms/python-discord

We can’t wait to see you there and witness the innovative ideas and projects you’ll bring to the table. Mark your calendars for March 14th, 2024, and prepare for a day filled with Python and data science wonders!

More Data Science at Microsoft…

Data Scientist Certifications

Data Scientist Training Path

Data Science for Beginners – GitHub Repo


How to build an End-to-End Analytics Solution with Lakehouse in Microsoft Fabric

Architectural Setup

Introduction

Data is the new oil, and data analytics is the key to unlocking its value. Data analytics can help organizations to gain insights, make decisions, and optimize outcomes. However, data analytics is not a simple task. It involves various challenges, such as data quality, data integration, data processing, data modelling, data visualization, and data governance.

To address these challenges, Microsoft Fabric offers a comprehensive suite of services for data engineering, data science, data warehousing, real-time analytics, and business intelligence.

Scenario

Imagine you are a chef who wants to create delicious dishes using various ingredients and recipes. You have a kitchen with all the tools and appliances you need, such as a stove, an oven, a blender, a mixer, etc. You also have access to a pantry where you can store and retrieve different kinds of food items, such as grains, fruits, vegetables, meats, dairy, etc. You want to share your culinary creations with your customers and get their feedback and ratings. Microsoft Fabric is like your kitchen and pantry, where you can cook up data solutions using various tools and services, such as Azure Synapse, Azure Data Factory, Power BI, etc. You can also store and access different kinds of data items, such as structured, unstructured, batch, streaming, etc. You can also share your data insights and reports with your stakeholders and get their feedback and ratings.

In this blog, you will learn how to use Microsoft Fabric to create an end-to-end analytics solution using Lakehouse. You will also learn how to address some of the common challenges and considerations when working with data, such as:

How to create and manage a Lakehouse that can store and process data of any scale, type, and structure

How to ingest, transform, and load data from various sources and formats into your Lakehouse

How to perform data engineering and data science tasks

How to query and analyze data using SQL and Spark

How to create and share interactive reports and dashboards using Power BI

Pre-requisites

A Microsoft 365 Developer Account with Admin rights.

A Microsoft Fabric Free Trial

How to create and manage a Lakehouse

Follow these steps to set up a Lakehouse in Microsoft Fabric:

1. Create a Fabric workspace: A workspace is a place where colleagues collaborate to create items such as lakehouses, warehouses, and reports. From the experience switcher at the bottom left, select the Data Engineering experience.

“My workspace” is your own default workspace. You are always the sole owner of its content, and you can experiment with items there without affecting items that other collaborators have access to. For now, ignore it: select Workspaces, then New workspace.

Fill out the create workspace form with the following details:

Name: Enter a unique name for your workspace

Description: Enter an optional description for your workspace

Advanced: Under License mode, select Premium capacity and then choose a premium capacity that you have access to. Select Apply to create and open the workspace.

Note: Every Fabric item you create is stored in the currently open workspace by default. To store an item in a different workspace, go to the Workspaces tab and select that workspace first.

2. Create a Lakehouse: Navigate to the workspace you created earlier. From the top navigation ribbon, select New, and from the item drop-down menu, select Lakehouse.

Congratulations, you now have your Lakehouse set up. Next, you will ingest the sample data.

3. Ingest Sample Data: If you don’t have OneDrive configured, sign up for the Microsoft 365 free trial. Download the dimension_customer.csv file from the Fabric Samples repo. In the Lakehouse explorer, you see options to load data into the Lakehouse. Select New Dataflow Gen2. On the new dataflow pane, select Import from a Text/CSV file.

On the Connect to data source pane, drag and drop the dimension_customer.csv file that you downloaded.

4. Build a report: From the preview file data page, preview the data and select Create to proceed and return to the dataflow canvas. In the Query settings pane, update the Name field to dimension_customer. Note: Fabric adds a space and a number at the end of the table name by default. Table names must be lower case and must not contain spaces.
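The naming rule above (lower case, no spaces) is simple enough to check before you publish. The helpers below are a small sketch of that check; the function names are hypothetical and are not part of Fabric or any Fabric SDK.

```python
import re


def to_lakehouse_table_name(display_name):
    """Lower-case a name and replace runs of whitespace with underscores."""
    return re.sub(r"\s+", "_", display_name.strip()).lower()


def is_valid_table_name(name):
    """Check the two rules stated above: lower case and no spaces."""
    return name == name.lower() and " " not in name


print(to_lakehouse_table_name("Dimension Customer"))  # dimension_customer
print(is_valid_table_name("dimension_customer"))      # True
print(is_valid_table_name("dimension_customer 1"))    # False
```

The last call shows why the default name needs editing: a trailing space and number would break the no-spaces rule.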

If you have other data items that you want to associate with the Lakehouse, select Add data destination from the menu items and then select Lakehouse. If needed, on the Connect to data destination screen, sign in to your account and select Next.

From the dataflow canvas, you can transform your data based on your business requirements. Once done, select the Publish button at the bottom of the screen, or choose Publish later from the drop-down menu of the Publish button.

A spinning circle next to the dataflow’s name in the item view indicates that publishing is in progress.

When publishing is complete, select the ellipsis (…).

Select Properties. Rename the dataflow to Load Lakehouse Table and select Save.

Once the dataflow is refreshed, select your new Lakehouse in the left navigation panel to view the dimension_customer delta table. Select the table to preview its data.

The SQL analytics endpoint of the Lakehouse enables you to query the data with SQL statements. Select the SQL analytics endpoint from the Lakehouse drop-down menu.

Select the dimension_customer table to preview the data and select New SQL query at the top of the Fabric interface.

The above sample query aggregates the row count based on the BuyingGroup column of the dimension_customer table.
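The query itself is not reproduced in the text. As an illustration of the kind of aggregation described, the sketch below runs a `GROUP BY` row count over a tiny in-memory SQLite table from Python. The sample rows, the `Customer` column, and the `Total` alias are made up for the example, and SQLite stands in for the Lakehouse SQL analytics endpoint, so treat the SQL as an approximation of what you would type into the Fabric query editor.

```python
import sqlite3

# In-memory stand-in for the dimension_customer table (hypothetical sample rows).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE dimension_customer (Customer TEXT, BuyingGroup TEXT)")
conn.executemany(
    "INSERT INTO dimension_customer VALUES (?, ?)",
    [
        ("Customer A", "Group One"),
        ("Customer B", "Group One"),
        ("Customer C", "Group Two"),
    ],
)

# Count rows per buying group, as the sample query in the post is described to do.
query = """
SELECT BuyingGroup, COUNT(*) AS Total
FROM dimension_customer
GROUP BY BuyingGroup
ORDER BY BuyingGroup
"""
rows = conn.execute(query).fetchall()
for buying_group, total in rows:
    print(buying_group, total)
conn.close()
```

Only the `SELECT ... GROUP BY` statement is relevant to Fabric; the table creation and inserts exist so the example runs on its own.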

To run the script, select the Run icon at the top of the script.

In the item view of the workspace, select the “yourlakehouse” default semantic model

From the semantic model pane, you can view all the tables. You can create a report from scratch, create a paginated report, or let Power BI automatically create a report based on your data.

The report is generated in a couple of seconds. Select View report to see the automatically generated report.

Save the report by selecting Save from the top ribbon. A navigation bar on the right lets you make further changes to the report to meet your requirements, such as including or excluding other tables or columns.

To share your report, go back to the item view for your workspace and select the share icon to share the report within your organization.

Congratulations, you have just created an end-to-end Analytics solution with Microsoft Fabric in a matter of minutes. Well done! To manage your storage and costs effectively, don’t forget to clean up your resources after you are done.

Further study guides

Microsoft Fabric Learn Together, starting January 23, 2024 through February 8, 2024 (18 episodes): Expert-led live walk-throughs covering all the Learn modules to prepare you for the upcoming DP-600 exam leading to the Fabric Analytics Engineer Associate certification. 9 episodes delivered in both India and Americas time zones. Register now for this exclusive live learning experience.

Also, sign up for the Fabric Cloud Skills Challenge at https://aka.ms/fabric30dtli and complete all the modules to become eligible for a 50% discount on the DP-600 exam.

Learn how to use Copilot in Microsoft Fabric, your data insights AI assistant

Join the Fabric Community to stay updated on the latest about Microsoft Fabric

Consider joining the Fabric Career Hub so you won’t miss out on any careers in Microsoft Fabric


MVP Jonah Andersson, Nordic Women in Tech Winner

Jonah Andersson is a Microsoft Azure MVP from Sweden and the founder of Azure User Group Sweden, part of the Azure Tech Groups. She noticed the absence of an Azure user group in her area, the Mid-Norrland Region of Sweden. Driven by a passion to share her technical knowledge and contribute to the growth of others, she successfully established a new community during the pandemic despite everyone being physically apart. She shares details about her community, “The Azure user group community is where I host bi-weekly Saturday virtual tech events together with fellow Microsoft MVP (AI) Håkan Silfvernagel. We also provide 1:1 public mentorship to our community members. The result is inspiring because some of our community members have volunteered to speak in our community and share their knowledge about Azure and other developer technologies and tools. Håkan and I try our best to be inclusive and diverse in the content or session we organized.”

Jonah recently won the Developer of the Year award at the Nordic Women in Tech Awards, recognizing her achievements in the technology sector across the Nordic countries. This blog celebrates her remarkable honor, introducing her journey, community activities, and thoughts as a community leader.

“My career journey as a developer started and got triggered through life circumstances,” says Jonah. Unlike many other MVPs, she didn’t grow up with a strong background in technology but initially aspired to be a civil engineer or architect. Her path changed when she received a government scholarship to study computer science in college, opening the door to technology. However, upon graduating, she lost her mother to illness and, as the main provider for her family, focused first on her job. She didn’t start her career as a developer but learned a lot about solving technical problems along the way.

A turning point came in 2011 when she moved from the Philippines to Sweden. Determined to realize her college dreams, she relearned programming and Swedish, and finally made her debut as a developer. “Today, I may not be designing and building buildings, but I am working as a Senior Azure Consultant that designs and builds applications and infrastructures on the cloud. I also share this knowledge as a Microsoft MVP and Microsoft Certified Trainer,” she says.

Upon hearing about her award, Jonah was both happy and surprised, knowing there were other excellent candidates. Having been nominated twice before but not winning, she felt that this year was finally her time. “I was so happy that I dedicated the award back to the community and the people who supported me in my journey. I did not prepare a winning speech, but I spoke from the heart when I was announced as winner. My speech is available on Nordic Women in Tech Awards YouTube channel – Nordic Women in Tech Awards 2023 Iceland Live Stream – YouTube”.

“I would like to share with the community to share knowledge of your experiences and including lessons learned,” Jonah says. She actively participates in the community through Azure User Group Sweden, speaking at user group meetups and at local and global conferences, sharing technology inspirations on the Extend Women in Tech Podcast, and more. She recently published her first book, “Learning Microsoft Azure (O’Reilly) – Cloud Computing and Development Fundamentals by Jonah Andersson,” and shares the inspiration behind it. “I was once involved in a cloud migration journey of moving old .NET legacy applications and databases to Microsoft Azure. My challenges and lessons learned of that journey, led me to the idea of authoring a book about learning Azure – my first ever tech book project I worked on my spare time and weekends for almost 2 years. The hard work was not wasted because the book is published internationally this month of November 2023 and I am very thankful to all community tech friends, fellow Microsoft MVPs who helped and contributed!”

Jonah’s achievements are enough to make her a role model for other women in the tech industry. She provides a message for other female technologists: “I encourage women and underrepresented groups who are aspiring to build a career in tech or as a developer to know your WHY and passion. Once you know that, use them to inspire others and follow your dream job and the things you want to do. Get a career that will help you grow and help others grow, and in order to grow, choose the right and inclusive workplace for you that values you and where you can also contribute. Find mentors and inspiration from others – through the tech communities. Most of the people involved in the communities are driven by their WHYs and passion as well. Be part of the tribe and make a difference.”


Copilot for Microsoft 365 becomes available to all organizations

Copilot for Microsoft 365 and the expanded partner opportunity!

Across our broad product portfolio, AI touches nearly every product, industry, and solution area, and we expect it to drive a fundamental shift in every software category, opening a new era of productivity growth. Our commitment is to empower partners to harness the potential of this innovation for the benefit of customers and to help them navigate this emerging technology.

Since we released Copilot for Microsoft 365 to enterprise customers in November, partners have supported customers in defining, activating, and delivering their AI strategies. Now we are taking the next step, expanding access to more customers and creating more opportunities for the partners who serve them.

Today we are announcing that, starting January 16, 2024, Copilot for Microsoft 365 is generally available through all sales channels, including the Cloud Solution Provider (CSP) channel.

Starting today:

Commercial customers with Microsoft 365 E3/E5, Office 365 E3/E5, and Microsoft 365 Business Standard/Business Premium licenses can purchase the Copilot for Microsoft 365 add-on SKU through all sales channels: CSP, EA, MCA-E, EAS, and directly from Microsoft online.

Education customers with Microsoft 365 A3/A5 and Office 365 A3/A5 can purchase through EES.

We are removing the minimum license requirement for Copilot for Microsoft 365 across all channels and segments; however, customers must hold a license for one of the prerequisite base SKUs for each Copilot for Microsoft 365 license they purchase.

To help every customer get ready for AI, Office 365 E3 and E5 licenses include Semantic Index for Copilot. Semantic Index for Copilot is critical for getting relevant, actionable responses to prompts in Microsoft 365 Copilot.

Partners can see the announcement in Partner Center to learn more about how they can start transacting new Microsoft 365 Copilot business today.

Next steps: what can you do today as a partner?

The first step is to introduce your customers to Microsoft Copilot. Copilot with commercial data protection is available at no additional cost to all Entra ID users. The Copilot adoption starter kit and the Copilot for Microsoft 365 partner page provide useful guidance and resources to use with customers, and explain the difference between Microsoft Copilot and Copilot for Microsoft 365.

Next, you need to make sure your customers are eligible for Copilot for Microsoft 365 by getting them onto the prerequisite base licenses (SKUs). Remember that Microsoft 365 offers comprehensive security and management capabilities for identity, endpoints, information protection, and threat protection, which are critical for deploying AI securely.

In addition, there are great new resources and training offers to help you get ready for AI and build your Copilot for Microsoft 365 practice and sales pipeline. This is your opportunity to expand your business portfolio into AI advisory, data governance, user adoption, and change management services that customers can draw on to get the most from Copilot for Microsoft 365.

If you are not using Copilot internally, now is the time. Adopting Copilot not only improves your development opportunities, but also lets you share your experience and the value you have gained with customers. It reinforces your commitment to innovation and excellence and differentiates you in Microsoft’s competitive partner ecosystem.

For more information about this announcement, see the blog post by Jared Spataro, VP, Modern Work & Business Applications.

Getting started

Copilot for Microsoft 365 is a significant opportunity for partners in this new era of AI. To help you get started with defining your strategy, building your skills, and accelerating your sales, we recommend that you do the following:

Build your skills and develop your customer offerings. Visit the AI-Powered Future of Work site for guidance and resources to help you define your strategy, build your skills, and prepare to go to market.

Learn more about Copilot for Microsoft 365 services and solution opportunities.

Help your technical and sales teams build their Copilot for Microsoft 365 skills by registering for the Microsoft 365 Product Bootcamp to develop foundational skills, and for the upcoming CSP Masters Sales and Technical Bootcamp courses to learn how to create a successful practice, land your first Copilot for Microsoft 365 sale, and manage and deploy your first Copilot for Microsoft 365 customer in CSP.

Take advantage of key marketing resources, such as the new “Era of AI” marketing campaign and ready-to-use marketing assets, whether you are looking for a ready-made campaign in a box or want to customize your own demand-generation campaign.

Join the Copilot for Microsoft 365 CSP Acceleration Moment event on January 18, where Microsoft leaders will share more about the Copilot for Microsoft 365 partner opportunity and what comes next: January 18 at 19:00 option | January 19 at 3:00 option. Note: A recording and a copy of the presentation will be published on the M365 Modern Work for Partners site 7-10 business days after the event.

Get started with Microsoft 365 Lighthouse. Today we are also announcing new GTM experiences that support partners in successful renewals and sales of Copilot for M365, and that make Lighthouse easier to use with actionable insights and signals. For more information, see the Lighthouse blog.

Stay connected and join the Cloud Service Provider partner discussion forum and/or the Copilot for Microsoft 365 partner community. Come and listen to Kumppanitunti (Partner Hour) on February 16 at https://aka.ms/kumppanitunti, and join other local events.

The path to success involves more than just selling a new solution; it is about business transformation, responsible use of AI, and delivering value through high-value scenarios. Explore our advisory, readiness, deployment, adoption, and change management resources to improve your Copilot for Microsoft 365 practice.

We are excited to have more partners take part in this milestone, which will help redefine the future of work with AI!

You can read the English version of this post here.


QTip: Configure Azure SQL DB to receive an alert when a failover occurs in a failover group or geo replica

In Azure SQL DB, you can configure an alert so that you receive a notification when a failover happens in a failover group or geo replica.

To do this, configure the activity log to send its data to a destination. In this case, a Log Analytics workspace is used.

Azure Monitor activity log

Configure the activity log to send results to a workspace (this configuration applies to all resources, not only the current resource).

In the activity log, select Export Activity Logs.

Select Add diagnostic setting.

Provide a diagnostic setting name. In this demo, all categories are selected so that all records are visible at the beginning. Then save the setting.

Alert for failover group

Query data using Kusto in Log Analytics

Go to the workspace and run a query to see the results. It can take some time for the data to arrive.

AzureActivity

Now you can filter the data to look for failover group operations:

AzureActivity
| where OperationNameValue contains "Microsoft.Sql/servers/failoverGroups/failover/action"

Finally, you can filter on a specific activity status to get more specific records:

AzureActivity
| where OperationNameValue contains "Microsoft.Sql/servers/failoverGroups/failover/action" and ActivityStatusValue == "Start"
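To make the filter semantics concrete, here is a minimal Python sketch that applies the same two conditions to a few in-memory rows. The sample rows are hypothetical and exist only for illustration; real AzureActivity records carry many more columns. The sketch relies on two Kusto behaviors: `contains` is a case-insensitive substring match, while `==` on strings is case-sensitive.

```python
# Hypothetical sample rows; real AzureActivity records have many more columns.
SAMPLE_ROWS = [
    {"OperationNameValue": "MICROSOFT.SQL/SERVERS/FAILOVERGROUPS/FAILOVER/ACTION",
     "ActivityStatusValue": "Start"},
    {"OperationNameValue": "MICROSOFT.SQL/SERVERS/FAILOVERGROUPS/FAILOVER/ACTION",
     "ActivityStatusValue": "Success"},
    {"OperationNameValue": "MICROSOFT.SQL/SERVERS/DATABASES/WRITE",
     "ActivityStatusValue": "Start"},
]

FAILOVER_GROUP_OPERATION = "Microsoft.Sql/servers/failoverGroups/failover/action"


def kusto_contains(value: str, needle: str) -> bool:
    """Mimic Kusto's `contains`: a case-insensitive substring match."""
    return needle.lower() in value.lower()


def filter_failover_starts(rows, operation=FAILOVER_GROUP_OPERATION):
    """Keep rows that match the operation and whose status is exactly 'Start'."""
    return [
        row for row in rows
        if kusto_contains(row["OperationNameValue"], operation)
        and row["ActivityStatusValue"] == "Start"  # Kusto `==` is case-sensitive
    ]


matches = filter_failover_starts(SAMPLE_ROWS)
print(len(matches))
```

Because the operation match ignores case, the same query works whether the operation name is stored in mixed case or in upper case. The status comparison does not ignore case, so "Start" must be written exactly.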

Creating the alert

Because an alert can be created from the results of a query, the next step is to select the option to create a new alert rule.

AzureActivity
| where OperationNameValue contains "Microsoft.Sql/servers/failoverGroups/failover/action" and ActivityStatusValue == "Start"

Now you can see that the query is part of the alert (this demo uses the basic configuration).

In the alert logic, configure the alert to fire when the number of results is greater than 0.
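The threshold rule can be illustrated with a small Python sketch. It is not how Azure Monitor is implemented; `should_fire` and the list of row counts are hypothetical names and values, used only to show the "greater than 0" comparison applied to each evaluation of the query.

```python
def should_fire(result_count: int, threshold: int = 0) -> bool:
    """Return True when the query returned more rows than the threshold."""
    return result_count > threshold


# Hypothetical row counts returned by the query in successive evaluations.
evaluations = [0, 0, 1, 0]
fired = [should_fire(count) for count in evaluations]
print(fired)
```

With a threshold of 0, a single matching failover record in an evaluation window is enough to fire the alert.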

The next step is to select an action group.

Action groups

https://learn.microsoft.com/en-us/azure/azure-monitor/alerts/action-groups

Select action group

Create alert rule

Finally, create the alert.

Perform a failover in the failover group

Wait for the failover to complete.

Within a few minutes, an email with the alert is delivered to the email accounts defined in the action group. Be patient, because the alert is not immediate.

Finally, the email is received.

You can go to Azure Monitor and see the alert that was sent.

With the activity log being sent to the workspace, there are more actions and parameters that can be monitored. The same process can be used to get an alert when a geo-DR failover occurs; only the query changes.

Alert for Geo replica

Change the Kusto query to look for a different operation name value:

AzureActivity
| where OperationNameValue contains "MICROSOFT.SQL/SERVERS/DATABASES/REPLICATIONLINKS/FAILOVER/ACTION" and ActivityStatusValue == "Start"
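Since the failover group and geo replica alerts differ only in the operation name, the two queries can be generated from one template. The Python sketch below is only an illustration; `build_failover_query` is a hypothetical helper, not part of any Azure SDK, and it just assembles the query text shown in this post.

```python
# Operation names taken from the two queries in this post.
FAILOVER_GROUP = "Microsoft.Sql/servers/failoverGroups/failover/action"
GEO_REPLICA = "MICROSOFT.SQL/SERVERS/DATABASES/REPLICATIONLINKS/FAILOVER/ACTION"


def build_failover_query(operation: str, status: str = "Start") -> str:
    """Assemble the Kusto query text for a given operation name and status."""
    return (
        "AzureActivity\n"
        f'| where OperationNameValue contains "{operation}" '
        f'and ActivityStatusValue == "{status}"'
    )


failover_group_query = build_failover_query(FAILOVER_GROUP)
geo_replica_query = build_failover_query(GEO_REPLICA)
print(geo_replica_query)
```

The generated text can be pasted into the Log Analytics query editor and used as the basis of an alert rule in the same way as the hand-written queries.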

Create an alert based on the result.

Once the alert is created, go to Azure SQL DB and perform a failover.

Now wait for the email with the alert. Remember that this takes time, because data sent to the workspace is not live.

The email is received.


Expanding availability of Copilot for Microsoft 365

Today, we announced that we are expanding Copilot for Microsoft 365 to a much broader set of organizations, available across more channels, and without a minimum seat required. We are also extending our data residency commitments for Copilot for Microsoft 365 and bringing Microsoft Copilot Graph-grounded chat to Copilot in Windows. Join the upcoming AMA and Tech Accelerator event and engage with experts from Microsoft to learn more about Copilot for Microsoft 365.

Expanding availability of Copilot for Microsoft 365

Starting today, we have removed the 300-seat minimum purchase for Copilot for Microsoft 365 commercial plans. We have also extended support so that Office 365 E3 and E5 customers are eligible to purchase Copilot, and we’re extending Semantic Index for Copilot to Office 365 users with a paid Copilot license. Finally, we have announced that Copilot for Microsoft 365 is generally available for businesses of all sizes, supported on Microsoft 365 Business Standard or Business Premium. This follows a successful early access program focused specifically on small and medium businesses, as well as the previously announced availability for staff and faculty of education institutions with Microsoft 365 A3 or A5 licenses. Commercial customers, including small and medium-sized businesses, can now purchase Copilot for Microsoft 365 through our network of Cloud Solution Provider partners (CSPs), and you can learn more about them here.

We still recommend that customers start by giving Copilot to a critical mass of their information workers. We learned during the early access program that this creates a flywheel of interest and adoption, accelerating time to value and an organization’s ability to measure impact in a meaningful way. Copilot for Microsoft 365 licenses will be capped by the total number of eligible base licenses that a customer has. That is, customers must have a product license of one of the prerequisite base SKUs for each seat of Copilot for Microsoft 365 they purchase. You can review requirements for Copilot here.

Updating data residency commitments

We’ve heard feedback from our Enterprise customers that they need assurances that Copilot data is managed appropriately across geographically diverse teams. To support this, Copilot for Microsoft 365 upholds residency commitments as outlined in the Microsoft Product Terms and Data Protection Addendum.

We’re pleased to share that later this year Copilot for Microsoft 365 will be added as a covered workload under the data residency commitments in Microsoft Product Terms and the Microsoft Advanced Data Residency (ADR) and Multi-Geo Capabilities add-ons. For additional information on Copilot for Microsoft 365 privacy and data storage please visit Data, Privacy, and Security for Microsoft Copilot for Microsoft 365. To learn more about our commitments to data residency, see Microsoft 365 Data Residency Overview and Definitions.

Microsoft Copilot capabilities coming to Windows desktop

Organizations will soon be able to experience Copilot for Microsoft 365 integrated in Windows desktop, bringing Graph-grounded chat capabilities to Copilot in Windows for users with a Copilot for Microsoft 365 license. This update will be available to organizations with Copilot for Microsoft 365 and Copilot in Windows enabled, beginning the week of February 4th. This adds a new, simple way for users to access Copilot, in addition to the current surfaces in Teams, Edge, and copilot.microsoft.com. For information on managing Copilot in Windows, review this article.

Start preparing your organization for Copilot for Microsoft 365

There are steps you can take today to get your tenant prepared for Copilot:

Prepare your data and confirm that all relevant data security, privacy, and compliance controls are in place. Copilot inherits your existing permissions and policies, so having these in place helps ensure a seamless deployment. Conduct access reviews for SharePoint sites, documents, and tenant data, use sensitivity labels to protect important data, and validate policies for data loss prevention, retention, and compliance.

Review the prerequisites for Copilot in the overview and requirements for Microsoft 365 Copilot to position your tenant to seamlessly deploy Copilot. This setup guide also provides a simple walkthrough of the process.

Learn more about Copilot for Microsoft 365: how it works, the benefits to your organization, and how your data is handled and protected.

Familiarize yourself with the admin controls available to manage Copilot in the Microsoft 365 admin center Copilot page.

Develop your adoption strategy by leveraging the resources available on our adoption site, including this adoption kit and user onboarding toolkit.

Check readiness and measure adoption and impact through the Microsoft Copilot Dashboard (in Preview) in Viva Insights or Power BI, which helps organizations maximize the value of Copilot for Microsoft 365. It provides actionable insights to help your organization get ready to deploy AI, drive adoption based on how AI is transforming workplace behavior, and measure the impact of Copilot.

To learn more about Copilot, you can review our documentation hub, requirements, setup, and information about privacy, security, and compliance. You can also watch our sessions at this past Ignite on getting ready for Copilot.

For small and medium business customers, join our discussion forum to collaborate with other Copilot for Microsoft 365 users, take part in community calls with open Q&A, hear directly from Microsoft Copilot engineers, and access exclusive resources. Also, check out the resources available on the small and medium business Copilot adoption site.

For a comprehensive introduction and deep dive into Copilot for Microsoft 365, join us during our Copilot for Microsoft 365 Tech Accelerator, February 28 and 29, right here in the Tech Community. Listen in as experts from Microsoft delve into preparing for Copilot with recommendations and best practices, and share strategies for driving adoption and for measuring and maximizing value for your organization. There will also be plenty of opportunities to ask questions and engage with our experts.

Finally, join our next Ask Me Anything (AMA) tomorrow at 9am PT, here in the Copilot for Microsoft 365 Tech Community. Feel free to post your questions onto the event page ahead of time, and our panel of experts will answer them during the event.

