Category: Microsoft

Corporate Comms Discussion – January

What a great discussion with Wendy Sherwood about what was done at CBRE with Viva Connections and Viva Engage. Lots of great stuff from creating goals to thinking about the hybrid workforce. Take a listen.

Microsoft Tech Community – Latest Blogs –Read More

Azure SQL DB license-free standby replica | Data Exposed

Learn more about the License-free HADR replica in Azure SQL DB in this episode of Data Exposed with Anna Hoffman and Rajesh Setlem.

Resources:

To learn more and get started: https://aka.ms/sqldbstandby

View/share our latest episodes on Microsoft Learn and YouTube!

Securing the Clouds: Navigating Multi-Cloud Security with Advanced SIEM Strategies

Note: this is the first of a four-part blog series that explores the complexities of securing multiple clouds and the limitations of traditional Security Information and Event Management (SIEM) tools.

This first article is by a team of Microsoft experts who share their insights and experiences in establishing a comprehensive security posture in a multi-cloud environment. It explores strategies for achieving a unified security stance, implementing Microsoft’s security solutions, and realizing the benefits and greater insights of a multi-cloud SOC. It also explores how a threat-based approach is beneficial for helping organizations stay ahead of adversaries in this modern AI world.

Multi-cloud challenges and SIEM limitations

The era of cloud computing has revolutionized the way businesses operate, providing flexibility, scalability, and efficiency. However, the transition to and implementation of multi-cloud environments come with a unique set of security challenges. These include disparate data formats, varying security protocols, and the sheer volume and velocity of data traffic that traditional SIEM tools were not originally designed to handle. Organizations that take proactive measures, adopt a modern SIEM strategy with the right balance of tools (including a move from best of breed to best of platform), and work to reduce complexity will be less vulnerable to attacks and better positioned to thrive.

Diverse data and inconsistent protocols

Significant complexity arises from the need to manage and secure disparate data types across different cloud platforms. Each cloud service provider (CSP) has its own set of tools and services, with varying logging formats and protocols. Traditional SIEM solutions were often designed with a single, on-premises infrastructure in mind, so they struggle with the complexity, scale, and variety of data sources in today’s hybrid and cloud-based infrastructures. Because their architecture and capabilities are often limited to on-premises use cases, it is challenging for them to ingest, process, and analyze the wide array of data these environments generate. This, in turn, can lead to gaps in monitoring and analysis.
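To make the format problem concrete, here is a minimal sketch of mapping records from two providers onto one common schema. The sample records are heavily simplified, and the field names, the `FIELD_MAPS` table, and the `normalize` helper are assumptions made for illustration, not any product's actual ingestion pipeline.

```python
from datetime import datetime, timezone

# Simplified, illustrative records. Real provider logs carry many more fields,
# and the key names used here are assumptions made for this sketch.
AWS_STYLE = {"eventTime": "2024-01-15T10:00:00Z", "eventName": "ConsoleLogin",
             "sourceIPAddress": "203.0.113.7"}
AZURE_STYLE = {"time": "2024-01-15T10:02:30Z", "operationName": "Sign-in",
               "callerIpAddress": "203.0.113.7"}

# One mapping per source: common field name -> provider-specific key.
FIELD_MAPS = {
    "aws": {"timestamp": "eventTime", "action": "eventName",
            "source_ip": "sourceIPAddress"},
    "azure": {"timestamp": "time", "action": "operationName",
              "source_ip": "callerIpAddress"},
}


def parse_utc(value):
    """Parse a 'YYYY-MM-DDTHH:MM:SSZ' string into a timezone-aware datetime."""
    parsed = datetime.strptime(value, "%Y-%m-%dT%H:%M:%SZ")
    return parsed.replace(tzinfo=timezone.utc)


def normalize(provider, record):
    """Rename provider-specific keys to the common schema and tag the source."""
    mapping = FIELD_MAPS[provider]
    event = {common: record[key] for common, key in mapping.items()}
    event["timestamp"] = parse_utc(event["timestamp"])
    event["provider"] = provider
    return event


if __name__ == "__main__":
    for provider, record in (("aws", AWS_STYLE), ("azure", AZURE_STYLE)):
        print(normalize(provider, record))
```

Keeping the mapping in a table rather than in code means a new source only needs a new entry, which is one way to limit the per-provider effort the paragraph above describes.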

Volume and velocity

The volume of data generated by cloud services can be staggering. Most traditional SIEMs are not built to scale rapidly or cost-effectively with the exponential growth of log data, which can result in performance bottlenecks and increased costs. Moreover, the velocity at which this data is generated and needs to be analyzed is another challenge. This requires SIEMs to have high processing capabilities and advanced analytics to provide timely insights into security events.

Evolving threat landscape

Cloud services are continuously evolving, with frequent updates and new features. This constant change means that security monitoring tools must be equally agile. Traditional SIEM systems may not update as quickly, leading to outdated security measures that cannot protect against the latest threats or leverage the newest cloud security services.

Integration and correlation issues

Integrating multiple SIEM solutions across multiple clouds can lead to increased complexity in data correlation and analysis. With data silos, security teams often find it challenging to correlate events across different platforms, which is crucial for detecting sophisticated attacks. These SIEM systems may require custom configurations and extensive manual effort to achieve a unified view, consuming valuable time and resources.
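As a rough illustration of cross-platform correlation, the sketch below groups events by source IP and flags an IP when two events from different providers fall within a short time window. It assumes events have already been reduced to a common shape with `provider`, `source_ip`, and `timestamp` keys; that shape, the five-minute window, and the sample data are all hypothetical.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def correlate_by_ip(events, window=timedelta(minutes=5)):
    """Map each source IP to pairs of time-adjacent events from different providers."""
    by_ip = defaultdict(list)
    for event in events:
        by_ip[event["source_ip"]].append(event)

    flagged = {}
    for ip, group in by_ip.items():
        group.sort(key=lambda e: e["timestamp"])
        # Comparing neighbours in time order is enough to detect whether any
        # cross-provider pair exists inside the window.
        for earlier, later in zip(group, group[1:]):
            close = later["timestamp"] - earlier["timestamp"] <= window
            if close and earlier["provider"] != later["provider"]:
                flagged.setdefault(ip, []).append((earlier, later))
    return flagged


# Hypothetical sample events using documentation-reserved IP addresses.
EVENTS = [
    {"provider": "aws", "source_ip": "203.0.113.7",
     "timestamp": datetime(2024, 1, 15, 10, 0, 0)},
    {"provider": "azure", "source_ip": "203.0.113.7",
     "timestamp": datetime(2024, 1, 15, 10, 2, 30)},
    {"provider": "aws", "source_ip": "198.51.100.4",
     "timestamp": datetime(2024, 1, 15, 10, 1, 0)},
]

if __name__ == "__main__":
    for ip, pairs in correlate_by_ip(EVENTS).items():
        print(ip, "seen on multiple providers,", len(pairs), "pair(s)")
```

When each cloud's events sit in a separate SIEM, this kind of join has to be rebuilt by hand across tools, which is the manual effort the section refers to.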

Limitations in cloud-specific threat detection

Traditional SIEM tools are often limited in their ability to detect cloud-specific threats and vulnerabilities. They might lack the context or specialized detection capabilities needed to identify and respond to incidents that are unique to cloud environments, such as misconfigured storage buckets, excessive permissions, or unsecured serverless computing resources.
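The kinds of cloud-specific checks mentioned above can be sketched as simple rules over a resource inventory. The inventory format, resource types, and key names below are hypothetical and exist only for this example; a real deployment would read this context from each provider's configuration data.

```python
# Hypothetical inventory: one dictionary per cloud resource.
INVENTORY = [
    {"id": "bucket-logs", "type": "storage_bucket", "public_access": True},
    {"id": "bucket-private", "type": "storage_bucket", "public_access": False},
    {"id": "role-admin", "type": "role_assignment", "actions": ["*"]},
    {"id": "fn-webhook", "type": "serverless_function", "auth_required": False},
]


def find_misconfigurations(resources):
    """Return (resource id, finding) tuples for three illustrative rules."""
    findings = []
    for res in resources:
        kind = res["type"]
        if kind == "storage_bucket" and res.get("public_access"):
            findings.append((res["id"], "storage bucket allows public access"))
        if kind == "role_assignment" and "*" in res.get("actions", []):
            findings.append((res["id"], "role grants wildcard permissions"))
        if kind == "serverless_function" and not res.get("auth_required", True):
            findings.append((res["id"], "function accepts unauthenticated calls"))
    return findings


if __name__ == "__main__":
    for resource_id, finding in find_misconfigurations(INVENTORY):
        print(f"{resource_id}: {finding}")
```

None of these findings would appear in a traditional event stream, because nothing has "happened" yet; detecting them depends on configuration context that on-premises-oriented tools often do not collect.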

Cost and resource constraints

The cost implications of operating multiple SIEMs are not trivial. Licensing, infrastructure, and operational costs can skyrocket, particularly as data volumes grow and retention periods must extend to meet new and changing regulatory requirements. Additionally, the expertise required to manage and maintain multiple SIEMs can strain already limited cybersecurity personnel resources.

Inflexible and cumbersome upgrades

Traditional SIEM tools may also be inflexible, requiring significant downtime for upgrades and maintenance, which can be at odds with the all-day, everyday nature of cloud services. This inflexibility can hinder a business’s ability to adapt quickly to new security requirements or operational demands.

The limitations of traditional SIEM tools in the context of multi-cloud security can lead to increased risk and decreased visibility into threats. Therefore, organizations must look towards next-generation SIEM solutions that are built for modern cloud capabilities, offering the scalability, flexibility, and advanced analytics needed to secure their cloud and on-premises environments effectively.

Conclusion

Multi-cloud security is a complex and evolving challenge that requires a modern and agile approach. Traditional SIEM tools are not designed to cope with the scale, diversity, and dynamism of cloud-based environments, resulting in reduced visibility, increased risk, and inefficient operations. To overcome these limitations, organizations need to adopt next-generation SIEM solutions that are cloud-native, scalable, flexible, and intelligent.

Future posts in this series will cover the following topics:

How Microsoft has applied a threat-driven approach to enrich use-case development: a proactive, strategic way of managing cybersecurity risk that focuses on threats rather than only on the controls and vulnerabilities covered by compliance requirements.

How Microsoft has implemented its security solutions across Azure, Oracle, AWS, and on-premises environments, enabling a unified and comprehensive defense against threats for any enterprise.

Key benefits and outcome examples from some of our multi-cloud security projects, including improved detection capabilities, enhanced visibility across the enterprise, efficiency, and cost savings.

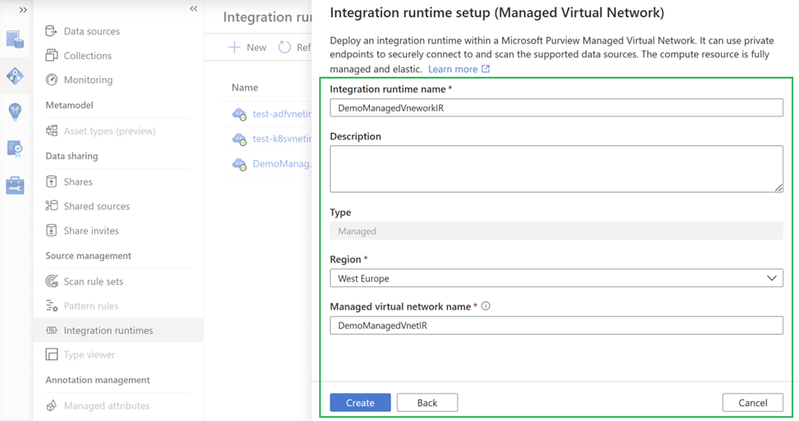

Introducing Multi-region and Multi-VNets for Managed Virtual Network in Microsoft Purview

Managed Virtual Network (VNet) in Microsoft Purview supports scenarios where your Microsoft Purview account and/or data systems restrict network access. In those cases, you can run scans on the Managed VNet Integration Runtime (IR), a service fully managed by Purview, which connects to them securely via private endpoints.

We are excited to announce a new feature enabling multi-region and multi-VNet support for Managed VNets.

The new feature provides the ability to:

Create up to five Managed VNets across different regions within a single Purview account

Isolate networks within your organization to address potential data residency or scan performance concerns

How do I use the new feature?

Open the Microsoft Purview portal by:

Browsing directly to https://web.purview.azure.com and selecting your Microsoft Purview account.

Opening the Azure portal, searching for and selecting the Microsoft Purview account, and then selecting the Microsoft Purview governance portal button.

From the Integration runtimes page, select the + New icon to create a new runtime. Select Azure and then select Continue.

In the setup window, enter a name for the Managed VNet IR, select the region, and enter a name for the Managed Virtual Network that you want to create in that region.

The managed private endpoint is created automatically, and you need to approve it in the Azure portal.

You can view the list of Managed VNet IRs and their Managed Virtual Network names under Integration runtimes.

The private endpoints are created automatically, and you can view them under Management->Manage private endpoints. Similarly, when a Managed VNet IR is deleted, the associated private endpoint, Managed VNet IR, and Managed VNet are deleted.

Additional Resources:

Learn about Microsoft Purview: What is Microsoft Purview? | Microsoft Learn

Learn about Managed VNet: Managed Virtual Network and managed private endpoints | Microsoft Learn

Modernizing Azure Automation: A 2023 Retrospective and Future outlook

Most organizations are at different stages of their cloud adoption journey as they navigate public clouds, private clouds, and on-premises data centers. Their IT landscape is often characterized by multiple applications and services spread across diverse environments. Managing this complex landscape manually or with multiple orchestration services can be daunting and inefficient. Whether organizations are completely on-premises, exploring cloud solutions for the first time, or born in the cloud, they share a common goal: to enhance efficiency and agility. Orchestration has become indispensable for streamlining management tasks, reducing cost, and allowing the business to focus on its core priorities.

Azure Automation has emerged as a pivotal service for managing complex hybrid environments by delivering a consistent user experience across multiple cloud platforms. Customers use Azure Automation for a variety of tasks, such as resource lifecycle management, mission-critical jobs that often require manual intervention, guest management at scale, and other common enterprise IT operations such as periodic maintenance. It targets orchestration on a wide array of resources such as Virtual Machines, Arc-enabled Servers, Databases, Storage, Azure Active Directory, Mailboxes, and much more, along with complex workflows involving many resources. Azure Automation provides a complete end-to-end solution: it facilitates authoring of PowerShell and Python scripts, provides a serverless platform to execute them, offers the flexibility to run them on-premises or in the customer’s local environment, and monitors those executions comprehensively.

A 2023 Retrospective

Azure Automation has made substantial investments in modernizing its platform and significantly improving the user experience over the previous year, and it promises to continue delivering value to its customers in the years to come. Here is a summary of the key enhancements so far that have laid the foundation for even greater benefits in the future:

New runtime languages: PowerShell 7.2 and Python 3.8 runbooks are Generally Available. This enables developers and IT administrators to execute runbooks in the most popular scripting languages. Customers are adopting Azure Automation to consolidate scripts that are distributed on-premises and across multiple clouds, and they gain operational efficiency by managing their Azure and Arc-enabled resources through a consistent experience.

Support for Azure CLI commands: Now Azure CLI commands can be invoked in Azure Automation runbooks (preview). The rich command set of Azure CLI expands capabilities of runbooks even further, allowing you to reap combined benefits of both to automate and streamline resource management on Azure.

Advanced script authoring experience: Azure Automation extension for Visual Studio Code is Generally Available. It offers an advanced authoring and editing experience for PowerShell and Python scripts. The extension leverages GitHub Copilot for intelligent code completion that provides suggestions directly within the editor, thereby making the coding process faster and simpler.

Granular control through Runtime environment: Module management and runbook updates have never been so hassle-free! Runtime environment (preview) allows complete configuration of the job execution environment without worrying about mixing different module versions in a single Automation account. You can upgrade runbooks to newer language versions with minimal effort to stay secure and take advantage of the latest functionality. It is strongly recommended to use Runtime environment to update runbooks on the end-of-support runtimes PowerShell 7.1 and Python 2.7, since both have been retired by their parent products, PowerShell and Python respectively.

Unified experience across diverse platforms: Hybrid Worker extension is Generally Available and supports Azure VMs, off-Azure servers registered as Arc-enabled servers, Arc-enabled SCVMM, and Arc-enabled VMware VMs. This empowers organizations to orchestrate their entire hybrid environment at scale through a single interface. You can directly install the extension on Azure or Arc-enabled servers and execute runbooks for a variety of scenarios. These include in-guest VM management, private access to other services from an Azure Virtual Network, and overcoming organizational restrictions on keeping data in the cloud.

State-of-the-art backend platform: Azure Automation has redesigned its platform, and the majority of runbooks now execute on secure, modern Hyper-V containers. With this move and additional measures taken to minimize infrastructure failures, the service has further hardened its security and improved reliability. These enhancements have established the groundwork for faster release of innovative features in the coming months. If your runbooks depend on the old platform and you observe unexpected job failures, take a look at the known issues and workarounds here.
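For the recommendation above about end-of-support runtimes, a first step is usually to find which runbooks are affected. The sketch below does that over a hand-written inventory; the `RUNBOOKS` list, its keys, and the runbook names are hypothetical, and the code does not call any Azure API.

```python
# Runtimes named in this post as end-of-support.
END_OF_SUPPORT = {("PowerShell", "7.1"), ("Python", "2.7")}

# Hypothetical inventory, for example exported by hand from an Automation account.
RUNBOOKS = [
    {"name": "Start-NightlyVMs", "language": "PowerShell", "version": "7.1"},
    {"name": "Rotate-Keys", "language": "PowerShell", "version": "7.2"},
    {"name": "cleanup_storage", "language": "Python", "version": "2.7"},
    {"name": "tag_resources", "language": "Python", "version": "3.8"},
]


def runbooks_to_upgrade(runbooks):
    """Return the names of runbooks that still target an end-of-support runtime."""
    return [rb["name"] for rb in runbooks
            if (rb["language"], rb["version"]) in END_OF_SUPPORT]


if __name__ == "__main__":
    for name in runbooks_to_upgrade(RUNBOOKS):
        print("Needs a newer runtime:", name)
```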

Future outlook

Azure Automation is continuously evolving and enhancing its capabilities, striving to become the best-in-class platform for resource management in an adaptive cloud. It is providing organizations with more efficient and reliable ways to navigate across different services and applications residing in multiple clouds (on-premises data centers, private clouds, and public clouds). In addition to its ongoing commitments to strengthen security, reliability, resiliency and scale, Azure Automation is building critical features to further improve customer experience. Here are some of the improvements currently under development and expected to be released soon:

Aligning Runbook support with latest Runtime releases: Azure Automation is working actively to reduce the time gap between release of new PowerShell and Python language versions and their support in runbooks. Stay tuned for upcoming announcements on PowerShell 7.4!

Source control integration for new runtimes: You will be able to keep runbooks in sync with scripts in a GitHub or Azure DevOps source control repository. This feature simplifies the process of promoting code that has been tested in the development environment to the production Automation account.

Native integration with Azure services: Azure Automation is already being used for creating runbooks that orchestrate across multiple resources. Keep an eye out for deeper integrations with more Azure resources for ease of management and to improve efficiency.

Richer Gallery of Runbooks: Improvements are planned in Runbook Gallery to help you search runbooks effortlessly for common scenarios and boost your productivity. Contribute to the community by sharing your scripts here.

Reminder for upcoming Retirements

Be sure to transition to the supported services and features before the retirement dates:

AzureRM PowerShell module will retire on 29 February 2024 and will be replaced by the Az PowerShell module. Update your outdated runbooks immediately.

With the retirement of the Log Analytics agent, the following dependent services and features will retire on 31 August 2024. It is strongly recommended to migrate to supported services before the retirement date:

Log Analytics agent-based Hybrid Runbook Worker will be retired in favor of extension-based Hybrid Runbook Worker. Learn more.

Azure Automation Update Management will be retired in favor of Azure Update Manager. Learn more.

Azure Automation Change Tracking & Inventory will be retired in favor of Change Tracking & Inventory with AMA. Learn more.
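To plan around the retirement dates listed above, a small countdown can help prioritize migration work. This is a minimal sketch: the dates come from this post, while the `RETIREMENTS` table and the `days_remaining` helper are names invented for the example.

```python
from datetime import date

# Retirement dates as stated in this post.
RETIREMENTS = {
    "AzureRM PowerShell module": date(2024, 2, 29),
    "Log Analytics agent-based Hybrid Runbook Worker": date(2024, 8, 31),
    "Azure Automation Update Management": date(2024, 8, 31),
    "Azure Automation Change Tracking & Inventory": date(2024, 8, 31),
}


def days_remaining(today):
    """Return {feature: days until retirement}, soonest first.

    A negative value means the retirement date has already passed.
    """
    ordered = sorted(RETIREMENTS.items(), key=lambda item: item[1])
    return {name: (when - today).days for name, when in ordered}


if __name__ == "__main__":
    for name, days in days_remaining(date.today()).items():
        status = f"{days} day(s) left" if days >= 0 else "already retired"
        print(f"{name}: {status}")
```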

For any questions or feedback, please reach out to askazureautomation@microsoft.com

New on Microsoft AppSource: January 12-18, 2024

We continue to expand the Microsoft AppSource ecosystem. For this volume, 112 new offers successfully met the onboarding criteria and went live. See details of the new offers below:

Get it now in our marketplace

Backup as a Service (BaaS): Designed for businesses of any size, Cloudserve’s Backup as a Service (BaaS) backs up on-premises servers and end-user devices to the cloud, allowing for easy data recovery. The solution is agentless, autonomous, and AI-powered, featuring real-time monitoring and compliance measures.

BESTDoc Electronic Document Management: Available only in Spanish, this Microsoft Azure-based Software as a Service (SaaS) helps organizations optimize their electronic document management processes while saving time, space, and costs. BESTDoc enables integration with other services and tools and automation of document workflows.

Document Companion: Document Companion is a cost-effective solution offering comprehensive PDF management, stamp integration, powerful editing tools, collaboration and connectivity, and industry-specific solutions. Packed with AI-powered technologies, Document Companion automates document capturing and management in numerous formats including Microsoft Word, Excel, and PowerPoint.

Employee ideas Enterprise – Idea Management Software: Ideas is a Microsoft Teams-based platform that helps businesses crowdsource ideas, engage employees in business goals, and automate idea management. Use Ideas by Sideways 6 for customizable campaign setups and automated workflows.

Imperium Job Safety Analysis: Job Safety Analysis extends Microsoft Dynamics 365 to evaluate workplace safety by using built-in surveys based on OSHA (Occupational Safety and Health Administration) standards. The package includes real-time dashboards, automated survey assignments, anonymous responses, and secured submissions. Job Safety Analysis includes score-based data evaluation for OSHA reporting.

KeeperAI (SaaS): KeeperAI enables you to build meaningful connections within your virtual workspace. Simply add KeeperAI to Microsoft Teams, Outlook, or Microsoft 365 hubs to understand social cues, every participant’s personality, and the soft skills participants bring to the table.

Real Estate: The Real Estate app enhances Microsoft Dynamics 365 to enable small capital investors to manage property investments, portfolios, debts, utilities, bills, and invoices. The app stores data on Microsoft Azure and is ideal for commercial and residential properties.

SMARTpush: SMARTpush simplifies transferring data from Microsoft Dynamics 365 Business Central to external databases. The user-friendly interface allows you to select the exact data you need and automatically creates tables and fields in the external database. SMARTpush is available in Danish and English.

Watercooler: Watercooler lets you use Microsoft Teams to create virtual watercooler breaks to connect with colleagues. Employees can choose from a variety of topics, fostering conversations they’re genuinely interested in. Watercooler automates meeting setups, making every interaction smooth and stress-free.

Go further with workshops, proofs of concept, and implementations

ACP Copilot Take Off for Microsoft 365: 10-Day Engagement: ACP IT Solutions GmbH will help you ensure that the right people in your organization start using Microsoft 365 Copilot with the right tools and knowledge. This service will propel you toward a successful deployment by building a Center of Excellence, delivering workshops for managers, and conducting training for key users.

Amplify Microsoft Teams with Viva Amplify: 45-Day Proof of Concept: UnifiedCommunications.com will remotely provide custom content for an area of Microsoft Teams you wish to amplify and use Microsoft Viva Amplify to create, publish, and track the effectiveness of your campaign. This service includes kick-off, discovery, envisioning, implementation, and closeout stages.

Custom App Development for Microsoft Teams (IN): Crayon offers tailored development of Microsoft Teams apps integrated with your Microsoft 365 ecosystem to address business challenges. The solution includes customized apps, user-friendly app interfaces, task automation, data connectivity, ongoing support, and performance analytics. This offer is available in India.

Dynamics 365 for NGOs: 1-Day Assessment: Ifunds Germany GmbH offers a free consultation for non-profit organizations to provide insight into how NGOs can gain discounted access to products such as Microsoft Dynamics 365, Microsoft 365, Power BI, Teams, SharePoint, and Forms. These tools can help organizations better understand their supporters, manage projects and finances, and focus on important tasks.

Entec Focused Training for Microsoft 365: 4-Hour Workshop: Entec offers personalized training on Microsoft 365 tools, including Teams, Outlook, OneNote, Word, Excel, PowerPoint, OneDrive, SharePoint, and more. The customized service includes consultation and up to 4 hours of training, with a focus on maximizing the benefits for your business.

ESG Board: 4-Week Implementation: ESG Board automates data collection, emissions calculations, and reporting for environmental, social, and governance (ESG) performance. Advaiya will implement its Microsoft SharePoint-based tool to help your company meet compliance requirements, track progress, and identify areas for improvement. The implementation plan includes discovery sessions, user interface setup, completion reports, and GHG emission calculation setup.

Google Drive to OneDrive for Business: 2-Week Migration: Penthara offers a smooth and secure migration from Google Drive Enterprise to Microsoft OneDrive for Business. Penthara’s experts will ensure data integrity, security, and minimal downtime during the migration process. This service is designed for up to 25 users and up to 250GB of data. The migration includes post-migration support.

Implementing Microsoft Purview: Workshop: Suri Services offers a specialized workshop for efficient deployment of Microsoft Purview with an option for implementation assistance. The planning stage involves defining configurations, users, retention periods, and compliance manager settings. Pre-deployment involves configuring roles, eDiscovery, and privacy risk management. Deployment includes DLP management and retention policies, while optional advanced deployment offers data connector exploration. This offer is available in Spanish.

Microsoft 365 Copilot: 2-Hour Workshop: This Japanese-language service from JBS will help you learn how to use Microsoft 365 Copilot with Teams, Outlook, Word, and PowerPoint to solve business challenges. The workshop features a combination of lectures and demonstrations, allowing users to experience software features and ask questions about functionality.

Microsoft Sales Copilot: Implementation: Imperium will collaborate closely with you to guarantee seamless and timely implementation of Microsoft Sales Copilot customized to meet your business needs. Microsoft Sales Copilot is an AI-powered solution integrated into Microsoft 365, Teams, and Dynamics 365.

Microsoft Sustainability Manager: Assessment to Implementation: Adastra’s comprehensive offer includes workshops, analysis of current tools, customization of dashboards, and configuration of scorecards for tracking ESG (environmental, social, and governance) performance using Microsoft Sustainability Manager. The phased engagement begins with a free workshop and the option to develop a proof of concept followed by a minimum viable product.

Modern Data Platform: 30-Day Implementation: Smartbridge offers a production-ready Microsoft Azure Synapse environment with a data landing zone and analytics layer for reporting featuring Microsoft Power BI. Smartbridge partners with clients to simplify business transformation and establish a sharper understanding of customer needs, delivering actionable insights and predictions while driving innovation.

Multi-Store Health Prediction Dashboards: 2-Week Proof of Concept: Smartbridge offers an AI-based solution for mitigating business risk around store/location success. Using Microsoft Azure Machine Learning, Azure Synapse Analytics, and Power BI, the solution incorporates revenue, customer churn, and brand reputation based on the current and future state of the company to enable risk management monitoring and protection.

Office Apps on Microsoft 365: 1-Day Training: IT Partner offers training services to help organizations optimize Microsoft 365 features. IT Partner consultants will configure services, provide expert guidance, implement security measures, and offer continuous support to ensure client satisfaction.

RPA Migration Factory: 2-Hour Workshop: Sonata Software offers the necessary tools, guidance, and services to facilitate a seamless migration of your existing RPA bots to Microsoft Power Automate. In this workshop, you’ll learn the benefits of moving to the Microsoft Power Platform, including integrations with Microsoft products, advanced AI capabilities, and empowerment of citizen developers.

Transforming Business Operations with Microsoft 365 Copilot: Half-Day Workshop: Embark on a transformative journey with BitHawk’s specialized workshop, designed to fully harness the capabilities of Microsoft 365 Copilot in your organization. This workshop is customized for forward-thinking businesses eager to integrate AI into their daily operations for enhanced productivity and collaboration. The workshop delivers a robust learning experience that prepares you to navigate the complexities of Copilot environments with ease and efficiency.

Viva Amplify: 45-Day Proof of Concept: UnifiedCommunications.com will introduce you to Microsoft Viva Amplify capabilities such as management, distribution, and assessment of corporate communications. UnifiedCommunications.com consultants will work with you to define and execute Viva Amplify campaigns and begin tracking the success of the message. All training content will be provided at the closeout stage.

Contact our partners

ACP Copilot: AI Advisory for Microsoft 365 Copilot: 4-Hour Workshop

AI Readiness, Strategy, and Roadmap: Engagement

AI Strategy and Roadmap for Power Virtual Agents: 3-Week Assessment

airfocus – Sync with Microsoft Azure DevOps

Aptean Quick Production for Food and Beverage

Belgian Local Government in the Cloud

Birthdays and Holidays Reminder Bot for Microsoft Teams

BMI SupplyAutomate EvolutionX Connector

Business Central – Finance & Distribution: 4-Day Assessment (CA)

Business Central – Finance & Distribution: 4-Day Assessment (US)

Business Central – Finance & Distribution in French: 4-Day Assessment (CA)

Business Process Automation and Modernization

Business Reporting and Insights

Consultation for Microsoft 365

Copilot for Microsoft 365: Readiness Assessment

Data Quality App for Healthcare

Devensys Cybersecurity and MXDR: 3-Day Assessment

Enable Trusted and Controlled ESG Data: 3-Week Assessment

ESG Data Requirements: 8-Week Assessment

ESG Operating Model: 3-Week Assessment

ESG Reporting Processes: 8-Week Assessment

HCL/IBM Connections Migration to Microsoft 365: 2-Week Assessment

iThink Connect Work Anniversary for Graph

Logistic Common Data Layer (IT)

Microsoft 365 Copilot Readiness: 2-Week Assessment

Microsoft 365 Copilot Readiness & Pilot Planning: 5-Week Assessment

Microsoft Data Security Value Realization: 1-Week Assessment

Microsoft Identity Value Realization: 1-Week Assessment

Microsoft Threat Protection Value Realization: 1-Week Assessment

MJ Flood Voice for Microsoft Teams

Purchase Requisition Automation

Redoflow Process Consulting: 8-Hour Assessment

RxGenius.ai – GenAI Pharmacy Assistant for E-Pharmacy

SaaS Development Support Service

StudioDesigner Inspiration Library

SyncLite Rapid IoT Data Connector

Taxxon Enhancement Pack for Guatemala

Transition to Cloud: 4-Week Assessment

Trusting Social AGI Collection Agent

Trusting Social AGI Digital Sales & Onboarding Agent

Uniserve 360 Customer Communication Management Tool

Vault Subcontractor Management

This content was generated by Microsoft Azure OpenAI and then revised by human editors.


UnifyCloud – Modernizing your .NET apps to Windows containers on Azure Kubernetes Service

This blog post has been co-authored by Microsoft and Mark Erhart and Marc Pinotti from UnifyCloud.

CLOUDATLAS OVERVIEW

UnifyCloud’s CloudAtlas is an end-to-end Azure migration platform that automates and accelerates the assessment, remediation, and migration of applications and associated databases to Azure Kubernetes Service (AKS). Using CloudAtlas, you can quickly assess your entire application portfolio for migration to Azure and automatically remediate most of the required code changes for faster migration.

UnifyCloud has been recognized by Microsoft as a Partner of the Year honoree for four consecutive years for its CloudAtlas platform. CloudAtlas has been used in over 3,500 global customer engagements, including over 200 of the Global 500, to assess the largest and most complex application portfolios for modernization and migration to Azure Kubernetes Service using Windows containers.

In this blog we will cover:

Why CloudAtlas?

How to Quickly Modernize to Windows containers on Azure Kubernetes Service with CloudAtlas

Creating a Project

Scan the Application Using CloudAtlas

Evaluating Migration Options

Application Remediation

Generating Landing Zones for Seamless Migration

Migration learnings and insights from analyzing more than 4,000 applications

Frequently Asked Questions

Conclusion

WHY CLOUDATLAS

In the past, a major deterrent to digital transformation was the extensive manual effort it required. Considering it takes an average developer 3 days to manually assess 10,000 lines of code to identify required changes [1], manual analysis of even the simplest application portfolios can be time- and cost-prohibitive.

CloudAtlas leverages AI and Machine Learning to automate cloud migration processes, including the assessment of infrastructure, scanning application source code and databases, automating remediation, and automatically generating landing zones.

An example of this automation is CloudAtlas’ static code analysis that scans portfolios of apps, databases, and workloads with millions of lines of code in just minutes – a fraction of the time a manual assessment would require. For example, one portfolio of 574 million lines of code analyzed by CloudAtlas would have required several lifetimes of manual effort for even a quick scan, let alone an assessment that delivered the detail, analytics, insights, and recommendations that CloudAtlas delivered. CloudAtlas delivered this entire analysis in 199 hours.

This analysis provides detailed insights and recommendations to develop a modernization and migration plan with output that includes:

Cloud readiness

Migration options including IaaS, Containers/AKS, and PaaS as part of a 6R analysis that includes details to rehost, refactor, rearchitect, rebuild, and more

Tasks required

Hours of effort

Line-of-code guidance on changes required for applications and databases to run effectively in the cloud

Customizable cost estimates

It does all of this without the source code ever leaving the customer environment and presents the results in a simple-to-use dashboard, with drilldowns for each component that include the recommendation, reasoning, cost, and alternative approaches.

HOW TO QUICKLY MODERNIZE TO AZURE KUBERNETES SERVICE WITH CLOUDATLAS

CREATE A PROJECT

The process starts by downloading the CloudAtlas Modernize and Migrate tool and creating a project. In this example, we walk through a project using the sample eShopModernizing (eShop) app, a set of hypothetical legacy back-office web apps (traditional ASP.NET Web Forms and MVC) created by the .NET team. As noted, with CloudAtlas your source code never leaves your environment or source code repository.

Log into CloudAtlas, and download the CloudAtlas scanning app. Once the scanner is downloaded, start it and create a new project. The CloudAtlas scanner can assess single applications or a portfolio of applications and databases, minimizing processing and saving time in conducting application assessments.

Once your project is created you will need to add your application. In this example, we add the eShop app and indicate the platform, application type and source code location to prepare the application for scanning.

SCAN THE APPLICATION USING CLOUDATLAS

Once the app is added, you will see it in your inventory within the tool. At this point, the app code can be scanned, and metadata collected for analysis by CloudAtlas. Just select the eShop application and click “Scan” to start the analysis.

CloudAtlas can scan millions of lines of code in just minutes, eliminating the need for resource- and time-intensive manual effort. As an example, CloudAtlas has scanned a portfolio of 24 applications and 65 databases comprising 6.7 million lines of code in just over 2 hours. A thorough manual assessment of this level would have required over 2,000 developer days – more than 5 years – of effort. That level of effort would cost more than $2M in time and resources. Even better, CloudAtlas scans applications and databases at a level of detail and accuracy that is much greater than any manual scan would achieve.
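The manual-effort figure quoted above follows from the footnoted rate of 3 developer days per 10,000 lines of code. Here is a minimal sketch of that arithmetic, assuming the rate scales linearly with code size and, for the conversion to years, that effort is counted in calendar days. The function and variable names are illustrative only.

```python
# Rate quoted in the article's footnote: 3 developer days per 10,000 lines.
DAYS_PER_BATCH = 3
LINES_PER_BATCH = 10_000


def manual_assessment_days(lines_of_code: int) -> float:
    """Estimate developer days for a manual scan, assuming linear scaling."""
    return lines_of_code * DAYS_PER_BATCH / LINES_PER_BATCH


# The 6.7-million-line portfolio mentioned above.
portfolio_days = manual_assessment_days(6_700_000)

# Assumption: one developer working 365 days per year.
portfolio_years = portfolio_days / 365

print(f"{portfolio_days:,.0f} developer days, about {portfolio_years:.1f} years")
# prints "2,010 developer days, about 5.5 years"
```

Under the same assumptions, the 574-million-line portfolio mentioned earlier works out to 172,200 developer days.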

A simple app like this eShop demo app is quickly scanned in just over one minute. In practice, CloudAtlas can scan multiple applications at once.

Once scanned, the metadata for the source code can be viewed and edited prior to uploading to the CloudAtlas SaaS platform to ensure that sensitive or secure elements of the code do not leave your environment or source code repository. This is possible because the lightweight CloudAtlas scanner is not connected to the internet and can be run on a Windows PC or VM in your environment. The scanner produces metadata, which is stored in an XML file. The XML file can be reviewed to confirm that it contains no IP, source code, sensitive information, or any other secure data. If necessary, sensitive or proprietary information can be masked or deleted.
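A review like this can be partly scripted before upload. The sketch below is not the CloudAtlas tooling, and the scanner's XML schema is not documented here: the element names, the sample content, and the list of sensitive keys are all hypothetical. It only illustrates the general idea of masking key=value secrets in an XML document.

```python
import re
import xml.etree.ElementTree as ET

# Hypothetical list of keys whose values should not leave the environment.
SENSITIVE = re.compile(r"(password|pwd|secret|apikey)\s*=\s*[^;\s\"']+", re.IGNORECASE)


def _mask(text):
    """Replace the value of each sensitive key=value pair with ***."""
    return SENSITIVE.subn(lambda m: f"{m.group(1)}=***", text)


def mask_sensitive(xml_text):
    """Return (masked_xml, number_of_masked_values).

    Only element text and attribute values are checked; tail text is ignored.
    """
    root = ET.fromstring(xml_text)
    hits = 0
    for element in root.iter():
        if element.text:
            element.text, count = _mask(element.text)
            hits += count
        for name, value in list(element.attrib.items()):
            element.attrib[name], count = _mask(value)
            hits += count
    return ET.tostring(root, encoding="unicode"), hits


# Made-up metadata; the real scanner output will look different.
sample = '<scan><finding file="Web.config">Server=db01;Password=hunter2;</finding></scan>'
masked, hits = mask_sensitive(sample)
print(hits, masked)
```

A pattern list like this will never be exhaustive, so a manual read-through of the file is still advisable before uploading.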

Once the XML file is reviewed and ready for analysis, it can be uploaded to the CloudAtlas SaaS platform for analysis.

Once uploaded, the eShop app (or any other apps or databases that have been uploaded) will appear in your project portfolio, where you can take further action, including initiating a full CloudAtlas analysis. This is done with the click of the “Analyze” button.

As part of the analysis process, a few pieces of information need to be collected to guide the assessment. This includes a short survey about the app or portfolio to provide additional information that is not available in the source code, including items like global load balancing, presence of PII, and criticality of the application to business operations. If you are unsure of the answers to the questions, selecting “No” provides the most comprehensive list of application remediation recommendations. Indicating the strategic importance of the application informs the recommendation for the type of remediation – rehosting, refactoring, rearchitecting, etc.

If required, the appropriate compliance standards can be selected from a list of more than fifty different global standards for incorporation into the CloudAtlas analysis.

EVALUATING MIGRATION OPTIONS

Once those items are complete, the application is ready to be analyzed by CloudAtlas to provide options, recommendations and guidance for modernization. The CloudAtlas assessment includes a multitude of analytics depending on the app, database or portfolio analyzed for multiple migration scenarios, including migration to Containers, PaaS, VMs, and Power Apps. Analysis includes an overview of all options and the readiness, tasks, effort and cost of each migration option. This allows users to make informed decisions with complete information to migrate with confidence. In this example, we’ll focus on the eShop app and the associated guidance for Windows Container modernization.

Code-level guidance is provided for every option with recommendations, count of changes required, estimated time to remediate, and sizing – a good proxy for complexity – and relative readiness. CloudAtlas also recommends the optimal migration path based on the analysis and business requirements. When you select container assessments, an overview highlights the recommendation, required tasks, hours of effort and cost for approaches with or without .NET to .NET Core conversion. In this example, CloudAtlas has identified the fastest path to modernizing the legacy .NET eShop app is to containerize it to Windows containers on AKS.

App-level guidance highlights the number of components and recommended remediation tasks, breaking those down into four different areas:

Application and Platform Design

Security

Network and Availability

Storage

Task size and level of effort in hours are provided to assist in migration planning. Cost estimates to run in Azure are also provided and can be further customized.

In this example, CloudAtlas estimates that the eShop app is 88% container-ready, subject to 15 changes (11 small and 4 medium) requiring 58 hours of effort if done manually.

APPLICATION REMEDIATION

Every remediation task is described at a detailed level to guide the effort and instill confidence in the changes to be made. CloudAtlas identifies the category, datapoint and the details of the recommendation including the reason for the change, the code block, the line of code, the file path, the recommended changes, the estimated effort, the migration impact, and authoritative Azure guidance. Where code changes are required, sample replacement code is typically provided.

As an example of the guidance, the eShop MVC app uses the InProc mode, which stores session state in memory on the web server. This prevents scaling out because the in-memory provider isn’t distributed. CloudAtlas recommends considering either the StateServer or SQLServer mode instead.
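To make the session-state recommendation concrete, here is a minimal sketch of what such a change could look like when applied to a web.config file. It is an illustration only, not the remediation code CloudAtlas generates. The function name and the state-server address are placeholders, while `mode` and `stateConnectionString` are the standard attributes of the ASP.NET `sessionState` element.

```python
import xml.etree.ElementTree as ET


def move_session_state_out_of_process(config_xml, state_server):
    """Switch <sessionState mode="InProc"> to StateServer mode.

    Returns the updated XML and a flag saying whether anything changed.
    """
    root = ET.fromstring(config_xml)
    changed = False
    for node in root.iter("sessionState"):
        # InProc is also the ASP.NET default when no mode attribute is set.
        if node.get("mode", "InProc").lower() == "inproc":
            node.set("mode", "StateServer")
            node.set("stateConnectionString", f"tcpip={state_server}")
            changed = True
    return ET.tostring(root, encoding="unicode"), changed


# Made-up configuration fragment for illustration.
web_config = """
<configuration>
  <system.web>
    <sessionState mode="InProc" timeout="20" />
  </system.web>
</configuration>
"""

# "session-host:42424" is a placeholder address for the state service.
updated, changed = move_session_state_out_of_process(web_config, "session-host:42424")
print(updated)
```

Moving session state out of process also requires every object stored in the session to be serializable, so a configuration change like this is usually paired with a code review.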

CloudAtlas can also accelerate application remediation with automated code changes that significantly reduce manual effort – often by as much as 80%. For every application, CloudAtlas provides an overview of the time savings this automated remediation capability provides.

For the eShop demo application, the readiness is improved from 88% to 91%, reducing manual effort by 25%. This may seem small, but if the 25% is applied to the 58 estimated hours, it comes to roughly 14.5 hours, more than a day’s worth of effort for this small demo application.

To provide transparency and instill confidence in the code changes being recommended, every line of code can be reviewed, edited, and approved prior to compilation as part of the automated remediation. This gives users complete control over the automated remediation process.

As part of the automated remediation, CloudAtlas can connect to Azure subscriptions, new or existing landing zones and custom resources to ensure a smooth migration.

GENERATING LANDING ZONES FOR SEAMLESS MIGRATION

Once remediated, the code can be compiled for easy migration. To ensure a seamless migration, a Cloud Adoption Framework Workshop identifies business-specific needs which are incorporated into an automatically generated landing zone designed for the specific workloads being migrated.

Migration can then be implemented by directly connecting to the Azure subscription via CloudAtlas, providing the end-to-end support needed for a successful automated cloud migration to AKS.

MIGRATION INSIGHTS FROM 4,000+ APPLICATIONS

CloudAtlas has assessed over four thousand apps in the past few months, which gives us a great data sample to analyze. From this data, it is clear that many customers would benefit from remediating apps to a modern AKS architecture in a ‘one-step’ migration versus the ‘two-step’ “lift and shift to VMs first and modernize later” approach the industry currently recommends.

FREQUENTLY ASKED QUESTIONS

While many questions may arise during a migration assessment, here are a few that we frequently see when customers consider migration to Azure Kubernetes Service.

Question: What are the key benefits of using AKS for application deployment compared to traditional hosting solutions?

Answer: AKS offers scalability, flexibility, and automated management of containerized applications. It helps optimize resource utilization, ensures high availability, and simplifies the deployment and orchestration of containers. Other AKS benefits include automatic scaling, self-healing, simplified management, and seamless integration with Azure services, enabling a more streamlined and efficient development and deployment process. And CloudAtlas simplifies the process even more, automating much of the process and making sure that your workloads and Azure environment are optimized at deployment and over time.

Question: How does AKS handle security for containerized applications?

Answer: AKS implements robust security features such as network policies, Azure Active Directory integration, and role-based access control (RBAC), and it supports Azure Key Vault for secure management of secrets. CloudAtlas ensures that these services are provisioned appropriately based on the needs of the business as defined in the modernization assessment.

Question: What considerations should be taken into account when planning migration to AKS?

Answer: Factors to consider include application architecture, data storage, networking, security requirements, and any dependencies on external services. CloudAtlas considers all these factors as part of the assessment of existing infrastructure to develop a well-defined migration plan.

Conclusion – See what CloudAtlas can do for you

CloudAtlas is the only platform in the marketplace that provides a true end-to-end automated cloud migration solution from initial assessment to modernization to migration and optimization. Built in the cloud by former Microsoft employees, CloudAtlas accelerates and facilitates the cloud migration journey to help partners and customers achieve cloud benefits faster, better, and more consistently. CloudAtlas does this for all types of digital transformation, including modernization to AKS. Learn more about CloudAtlas capabilities and AKS modernization in this short demo video.

CloudAtlas is offering a free assessment of an application for modernization and migration to AKS. Submit your information here to be contacted by a UnifyCloud Cloud Architect to get started, or ask your Account Manager at Microsoft about the Solutions Assessment Program.

For more information you can visit www.unifycloud.com or contact us at info@unifycloud.com for questions or comments.

____________

1 Source: Microsoft IT, as directly related to UnifyCloud personnel, notes that it takes an experienced developer 3 days to manually scan 10,000 lines of code for migration to Azure.


Invite You to Be a Global Azure 2024 Organizer

Global Azure, a global community effort where local Azure communities host events for local users, has been gaining popularity year by year for those interested in learning about Microsoft Azure and Microsoft AI, alongside other Azure users. The initiative saw great success last year, with the Global Azure 2023 event featuring over 100 local community-led events, nearly 500 speakers, and about 450 sessions delivered across the globe. We have highlighted these local events in our blog post, Global Azure 2023 Led by Microsoft MVPs Around the World.

Looking ahead, Global Azure 2024 is scheduled from April 18 to 20, and the call for organizers to host these local events has begun. In this blog, we showcase the latest news about Global Azure to a wider audience, including messages from the Global Azure Admin Team. This year, we will directly share the essence of Global Azure’s appeal through the words of Rik Hepworth (Microsoft Azure MVP and Regional Director) and Magnus Mårtensson (Microsoft Azure MVP and Regional Director). We invite you to consider becoming a part of this global initiative, empowering Azure users worldwide by stepping up as an organizer.

What’s New in Global Azure 2024?

For Global Azure 2024 we are doing multiple new things:

Last year we started a collaboration with the Microsoft Learn Student Ambassador (MLSA) program. This year we will build on this start to further encourage young professionals to join Global Azure and learn about our beloved cloud platform. As experienced community leaders, we can think of no task more worthy than nurturing the next generation of community leaders. We are working with the MLSA program to help young professionals arrange their first community meetups, or to join a meetup local to them and become involved in community work. We are asking experienced community leaders to mentor these young professionals as they grow into new community leaders; they need guidance in how to organize a successful first Azure learning event!

For the 2024 edition of our event, we are working on a self-service portal where both event organizers and event attendees can access and claim sponsorships that companies give to Global Azure. As a community leader, you will sign in and see the list of attendees at your location. You can share sponsorships directly with the attendees, and the people who attend your event can claim the benefits from our portal.

What benefits can the organizers gain from hosting a local Global Azure event?

There is no better way to learn about something, about anything, than to collaborate with like-minded people in the learning process. We have been in communities for tech enthusiasts for many years; some of our best friends are cloud people we have met through communities, and the way we learn the most is from deep discussions with people we trust and know. Hosting a Global Azure Community event for the first time could be the start of a new network of great people who know and like the same things and who also want and need to continuously learn more about the cloud. For us, community is work-life, and within communities we find the best and most joyful parts of being in tech.

Message to the organizers looking forward to hosting local Global Azure events

For community by community – that is our guiding motto for Global Azure. We are community, and learning happens here! As a hero, it is your job to set up a fun agenda full of learning, and to drive the event when it happens. It is hugely rewarding to be involved in community work, at least if we are to believe the people who approach us wherever we go – “I really like Global Azure, it is the most fun community event we host in our community in X each year”. This is passion, and this is tech-geekery at its best. You are part of the crowd that drives learning and that makes people enthusiastic about their work and about technology. We hope that your Global Azure event is a great success and that it leads to more learners of Azure near you becoming more active and sharing their knowledge – as our motto states!

Additional message from Rik and Magnus

Global Azure has global reach to Azure cloud tech people everywhere. We are looking for additional sponsors who want the opportunity to reach these people. To become a sponsor, you need to give something away, such as licenses or other giveaways. When you do, we can in turn ensure that everyone sees that yours is a company that backs the tech community and supports learning.

This year, we are also particularly keen to hear from our MVP friends who have struggled in the past with finding a location for their event but have a Microsoft office or event space nearby. We are keen to see if we can help, but we need people to reach out to us so we can make the right connections.

If anyone out there in the community is interested in stepping up to a global context, we are often looking for additional people to join the Global Azure Admins team.

Azure is big, broad, wide, and deep – there are so many different topics and technologies that are a part of Azure. Within Global Azure, anything goes! AI is a very valid Global Azure focus, because AI happens on the Azure platform and somehow data needs to be securely transported to, ingested, and stored in Azure. Compute can happen in so many ways in the cloud, and you can be part of using the cloud as an IT Pro management/admin community as well as a developer community. We have SecOps, FinOps, DevOps (all the Ops!!). Global Azure is also very passionate about building an inclusive and welcoming community around the world that includes young people and anybody who is underrepresented in our industry.

To find out more, head to https://globalazure.net and read our #HowTo guide. We look forward to seeing everyone’s pins appear on our map.


Modernizing Azure Automation: A 2023 Retrospective and Future outlook

Most organizations are at different stages of their cloud adoption journey as they navigate through public clouds, private clouds, and on-premises data centers. Their IT landscape is often characterized by multiple applications and services that are spread across diverse environments. Managing this complex landscape manually or with multiple orchestration services can be daunting and inefficient. Whether organizations are completely on-premises, exploring cloud solutions for the first time, or born in the cloud, all share a common goal: to enhance efficiency and agility. Orchestration has become indispensable for streamlining management tasks, reducing cost, and allowing the business to focus on its core priorities.

Azure Automation has emerged as a pivotal service for managing complex hybrid environments by delivering a consistent user experience across multiple cloud platforms. Customers use Azure Automation for a variety of tasks, such as resource lifecycle management, mission-critical jobs that often require manual intervention, guest management at scale, and other common enterprise IT operations such as periodic maintenance. It targets orchestration on a wide array of resources such as Virtual Machines, Arc-enabled Servers, Databases, Storage, Azure Active Directory, Mailboxes, and much more, along with complex workflows involving many resources. Azure Automation provides a complete end-to-end solution: it facilitates authoring of PowerShell and Python scripts, provides a serverless platform for executing them, offers the flexibility to execute them on-premises or in the customer’s local environment, and monitors those executions comprehensively.

A 2023 Retrospective

Azure Automation has made substantial investments in modernizing its platform and significantly improving user experience over the previous year and promises to continue delivering value to its customers in the years to come. Here is a summary of key enhancements so far, that have laid the foundation for even greater benefits in the future:

New runtime languages: PowerShell 7.2 and Python 3.8 runbooks are generally available. This enables developers and IT administrators to execute runbooks in the most popular scripting languages. Customers are adopting Azure Automation to consolidate scripts that are distributed on-premises and across multiple clouds, gaining operational efficiency by managing their Azure and Arc-enabled resources through a consistent experience.

Support for Azure CLI commands: Now Azure CLI commands can be invoked in Azure Automation runbooks (preview). The rich command set of Azure CLI expands capabilities of runbooks even further, allowing you to reap combined benefits of both to automate and streamline resource management on Azure.

Advanced script authoring experience: Azure Automation extension for Visual Studio Code is Generally Available. It offers an advanced authoring and editing experience for PowerShell and Python scripts. The extension leverages GitHub Copilot for intelligent code completion that provides suggestions directly within the editor, thereby making the coding process faster and simpler.

Granular control through Runtime environment: Module management and runbook updates have never been so hassle-free! Runtime environment (preview) allows complete configuration of the job execution environment without worrying about mixing different module versions in a single Automation account. You can upgrade runbooks to newer language versions with minimal effort to stay secure and take advantage of the latest functionality. It is strongly recommended to use Runtime environment to update runbooks that run on the end-of-support runtimes PowerShell 7.1 and Python 2.7, since both have been retired by their parent products, PowerShell and Python respectively.

Unified experience across diverse platforms: Hybrid Worker extension is Generally Available and supports Azure VMs, off-Azure servers registered as Arc-enabled servers, Arc-enabled SCVMM, and Arc-enabled VMware VMs. This empowers organizations to orchestrate their entire hybrid environment at scale through a single interface. You can directly install the extension on Azure or Arc-enabled servers and execute runbooks for a variety of scenarios. These include in-guest VM management, private access to other services from an Azure Virtual Network, and overcoming organizational restrictions on keeping data in the cloud.

State-of-the-art backend platform: Azure Automation has redesigned its platform, and the majority of runbooks are now executing successfully on secure and modern Hyper-V containers. With this move and additional measures taken to minimize infrastructure failures, the service has further hardened its security and improved reliability. These enhancements have established the groundwork for faster release of innovative features in the coming months. If your runbooks have taken a dependency on the old platform and you observe unexpected job failures, take a look at the known issues and workarounds here.

Future outlook

Azure Automation is continuously evolving and enhancing its capabilities, striving to become the best-in-class platform for resource management in an adaptive cloud. It is providing organizations with more efficient and reliable ways to navigate across different services and applications residing in multiple clouds (on-premises data centers, private clouds, and public clouds). In addition to its ongoing commitments to strengthen security, reliability, resiliency and scale, Azure Automation is building critical features to further improve customer experience. Here are some of the improvements currently under development and expected to be released soon:

Aligning Runbook support with latest Runtime releases: Azure Automation is working actively to reduce the time gap between release of new PowerShell and Python language versions and their support in runbooks. Stay tuned for upcoming announcements on PowerShell 7.4!

Source control integration for new runtimes: You will be able to keep runbooks updated with scripts in a GitHub or Azure DevOps source control repository. This feature simplifies the process of promoting code that has undergone testing in the development environment to the production Automation account.

Native integration with Azure services: Azure Automation is already being used for creating runbooks that orchestrate across multiple resources. Keep an eye out for deeper integrations with more Azure resources for ease of management and to improve efficiency.

Richer Gallery of Runbooks: Improvements are planned in the Runbook Gallery to help you search effortlessly for runbooks that cover common scenarios and boost your productivity. Contribute to the community by sharing your scripts here.

Reminder for upcoming Retirements

Be sure to transition to the supported services and features before their retirement dates:

The AzureRM PowerShell module will retire on 29 February 2024 and will be replaced by the Az PowerShell module. Update your outdated runbooks immediately.

With the retirement of the Log Analytics agent, the following dependent services and features will retire on 31 August 2024. It is strongly recommended that you migrate to the supported services before the retirement date:

Log Analytics agent-based Hybrid Runbook Worker will be retired in favor of extension-based Hybrid Runbook Worker. Learn more.

Azure Automation Update Management will be retired in favor of Azure Update Manager. Learn more.

Azure Automation Change Tracking & Inventory will be retired in favor of Change Tracking & Inventory with AMA. Learn more.
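For the AzureRM retirement noted above, a first step is often just finding which runbooks still call AzureRM cmdlets. The following is a minimal Python sketch of that idea, not an official migration tool. It assumes that cmdlets follow the Verb-AzureRmNoun naming pattern and that the Az equivalent is usually named Verb-AzNoun. That holds for common cmdlets such as Get-AzureRmVM and Get-AzVM, but not for every cmdlet, and parameters can differ, so treat the rewritten text as a suggestion to review. The function names and the sample runbook text are hypothetical.

```python
import re
from typing import List

# Assumption: AzureRM cmdlets look like Verb-AzureRmNoun (PowerShell is case-insensitive).
AZURERM_NAME = re.compile(r"\b[A-Za-z]+-AzureRm[A-Za-z]+", re.IGNORECASE)
AZURERM_PREFIX = re.compile(r"\b([A-Za-z]+)-AzureRm(?=[A-Za-z])", re.IGNORECASE)


def find_azurerm_cmdlets(script: str) -> List[str]:
    """Return the distinct AzureRM-style cmdlet names found in a runbook script."""
    return sorted(set(AZURERM_NAME.findall(script)), key=str.lower)


def suggest_az_names(script: str) -> str:
    """Rewrite Verb-AzureRmNoun to Verb-AzNoun as a first-pass suggestion only."""
    return AZURERM_PREFIX.sub(r"\1-Az", script)


# Hypothetical runbook content used purely for illustration.
sample_runbook = (
    "Connect-AzureRmAccount\n"
    '$vms = Get-AzureRmVM -ResourceGroupName "rg-demo"\n'
)

print(find_azurerm_cmdlets(sample_runbook))
print(suggest_az_names(sample_runbook))
```

A scan like this only flags candidates. Each flagged runbook still needs to be tested against the Az module before the retirement date.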

For any questions or feedback, please reach out to askazureautomation@microsoft.com.


