Lesson Learned #474: Identifying and Preventing Unauthorized Application Access to Azure SQL Database

In recent customer scenarios we have come across a specific need: preventing certain users from connecting to a designated database in Azure SQL Database with SQL Server Management Studio (SSMS) or other applications. The usual solution in traditional SQL Server environments, a logon trigger, is not available in Azure SQL Database, which makes this requirement harder to meet.

To address this challenge, I'd like to share an alternative that combines Extended Events in Azure SQL Database with a PowerShell script. The method captures activity from the prohibited application and alerts administrators whenever a specified user connects to the database with that application, such as SSMS.

How It Works

Extended Events Setup: We start by creating an Extended Events session in the database. The session shown later captures sql_batch_starting events together with the application name, host name, username, and session ID, so it records the batches a matching application runs rather than the login itself. By filtering on the application name (SSMS, for example), we can track unauthorized access.

PowerShell Script: A PowerShell script is then employed to query these captured events at regular intervals. This script connects to the Azure SQL Database, retrieves the relevant event data, and checks for any instances where the specified users have connected via the restricted applications.

Email Alerts: When such an event is detected, the PowerShell script sends an email notification to the database administrator. The alert contains the details of the unauthorized connection, such as the timestamp, username, and application used, so the administrator can take corrective measures promptly.
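The query used later in this article returns every captured event for the matching application, so narrowing the alert to specific users is left to the script. The following is a minimal sketch of one way to do that in PowerShell. The function name Select-ProhibitedConnection, the user names, and the sample rows are hypothetical and only illustrate the idea; the property names Username and Application match the column aliases of the ring buffer query.

```powershell
# Hypothetical watch list and application pattern (not part of the original script).
$WatchedUsers      = @('app_reader', 'report_user')
$BlockedAppPattern = '*Management Studio*'

function Select-ProhibitedConnection {
    param(
        [Parameter(Mandatory)] [AllowEmptyCollection()] [object[]] $Events,
        [Parameter(Mandatory)] [string[]] $Users,
        [Parameter(Mandatory)] [string]   $AppPattern
    )
    # Keep only events where a watched user ran a batch from a matching application.
    # Both -contains and -like compare case-insensitively.
    $Events | Where-Object {
        ($Users -contains $_.Username) -and ($_.Application -like $AppPattern)
    }
}

# Sample rows shaped like the columns returned by the ring buffer query.
$sample = @(
    [pscustomobject]@{ Username = 'app_reader'; Application = 'Microsoft SQL Server Management Studio - Query' }
    [pscustomobject]@{ Username = 'dba_admin';  Application = 'Microsoft SQL Server Management Studio - Query' }
    [pscustomobject]@{ Username = 'app_reader'; Application = 'MyWebApp' }
)

$hits = @(Select-ProhibitedConnection -Events $sample -Users $WatchedUsers -AppPattern $BlockedAppPattern)
$hits | Format-Table -AutoSize
```

In the real script the same function could be applied to the rows of the result table before deciding whether to send the email.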

Advantages

Proactive Monitoring: The script polls the captured events on a schedule, so any unauthorized access is detected and reported within one polling interval.

Customizable: The method is highly customizable. Administrators can specify which applications to monitor and can easily adjust the script to cater to different user groups or connection parameters.

No Direct Blocking: This method does not block the connection. Instead, it raises an alert shortly after the event, enabling administrators to react swiftly and enforce compliance and security policies.

This article provides a high-level overview of the solution. The session definition, query, and script below are a starting point; administrators are encouraged to tailor them to their specific environment and requirements.

Extended Event

CREATE EVENT SESSION Track_SSMS_Logins
ON DATABASE
ADD EVENT sqlserver.sql_batch_starting(
    ACTION(sqlserver.client_app_name, sqlserver.client_hostname, sqlserver.username, sqlserver.session_id)
    WHERE (sqlserver.client_app_name LIKE '%Management Studio%')
)
ADD TARGET package0.ring_buffer
    (SET max_events_limit = 1000, max_memory = 4096)
WITH (EVENT_RETENTION_MODE = NO_EVENT_LOSS, MAX_DISPATCH_LATENCY = 5 SECONDS);
GO
ALTER EVENT SESSION Track_SSMS_Logins ON DATABASE STATE = START;

Query to run using ring buffers

SELECT
    n.value('(@timestamp)[1]', 'datetime2') AS TimeStamp,
    n.value('(action[@name="client_app_name"]/value)[1]', 'varchar(max)') AS Application,
    n.value('(action[@name="username"]/value)[1]', 'varchar(max)') AS Username,
    n.value('(action[@name="client_hostname"]/value)[1]', 'varchar(max)') AS HostName,
    n.value('(action[@name="session_id"]/value)[1]', 'int') AS SessionID
FROM
    (SELECT CAST(target_data AS xml) AS event_data
     FROM sys.dm_xe_database_session_targets
     WHERE event_session_address =
           (SELECT address FROM sys.dm_xe_database_sessions WHERE name = 'Track_SSMS_Logins')
       AND target_name = 'ring_buffer') AS tab
CROSS APPLY event_data.nodes('/RingBufferTarget/event') AS q(n);
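To make the XML structure that this query navigates easier to see, here is a small PowerShell sketch that shreds the same shape of document on the client side. The sample XML is a hypothetical, hand-written fragment that follows the paths used in the query (RingBufferTarget/event, the timestamp attribute, and action/value elements); it is not real output, and ConvertFrom-RingBufferXml is an illustrative name rather than an existing cmdlet.

```powershell
# Hypothetical sample shaped like CAST(target_data AS xml) for the ring_buffer target.
$sampleXml = @'
<RingBufferTarget>
  <event name="sql_batch_starting" timestamp="2024-01-15T10:30:00.000Z">
    <action name="client_app_name"><value>Microsoft SQL Server Management Studio - Query</value></action>
    <action name="client_hostname"><value>WORKSTATION01</value></action>
    <action name="username"><value>app_reader</value></action>
    <action name="session_id"><value>87</value></action>
  </event>
</RingBufferTarget>
'@

function ConvertFrom-RingBufferXml {
    param([Parameter(Mandatory)] [string] $Xml)

    $doc = New-Object System.Xml.XmlDocument
    $doc.LoadXml($Xml)

    # One output object per <event>, using the same paths as the T-SQL query.
    foreach ($e in $doc.SelectNodes('/RingBufferTarget/event')) {
        [pscustomobject]@{
            TimeStamp   = $e.GetAttribute('timestamp')
            Application = $e.SelectSingleNode("action[@name='client_app_name']/value").InnerText
            Username    = $e.SelectSingleNode("action[@name='username']/value").InnerText
            HostName    = $e.SelectSingleNode("action[@name='client_hostname']/value").InnerText
            SessionID   = [int]$e.SelectSingleNode("action[@name='session_id']/value").InnerText
        }
    }
}

$rows = @(ConvertFrom-RingBufferXml -Xml $sampleXml)
$rows | Format-List
```

Shredding in T-SQL, as the article does, keeps the client script simpler; the client-side variant is mainly useful for inspecting or testing the XML offline.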

PowerShell Script

# Connection configuration
$Database = "DBNAme"
$Server = "Servername.database.windows.net"
$Username = "username"
$Password = "pwd!"
$emailFrom = "EmailFrom@ZYX.com"
$emailTo = "EmailTo@XYZ.com"
$smtpServer = "smtpservername"
$smtpUsername = "smtpusername"
$smtpPassword = "smtppassword"
$smtpPort = 25
$ConnectionString = "Server=$Server;Database=$Database;User Id=$Username;Password=$Password;"

# Last check date
$LastCheckFile = "C:\temp\LastCheck.txt"
$LastCheck = Get-Content $LastCheckFile -ErrorAction SilentlyContinue
if (!$LastCheck) {
    # First run: use the earliest datetime2 value so that every captured event is returned
    $LastCheck = "0001-01-01T00:00:00"
}

# SQL query
$Query = @"
SELECT
    n.value('(@timestamp)[1]', 'datetime2') AS TimeStamp,
    n.value('(action[@name="client_app_name"]/value)[1]', 'varchar(max)') AS Application,
    n.value('(action[@name="username"]/value)[1]', 'varchar(max)') AS Username,
    n.value('(action[@name="client_hostname"]/value)[1]', 'varchar(max)') AS HostName,
    n.value('(action[@name="session_id"]/value)[1]', 'int') AS SessionID
FROM
    (SELECT CAST(target_data AS xml) AS event_data
     FROM sys.dm_xe_database_session_targets
     WHERE event_session_address =
           (SELECT address FROM sys.dm_xe_database_sessions WHERE name = 'Track_SSMS_Logins')
       AND target_name = 'ring_buffer') AS tab
CROSS APPLY event_data.nodes('/RingBufferTarget/event') AS q(n)
WHERE
    n.value('(@timestamp)[1]', 'datetime2') > '$LastCheck'
"@

# Create and open SQL connection
$SqlConnection = New-Object System.Data.SqlClient.SqlConnection
$SqlConnection.ConnectionString = $ConnectionString
$SqlConnection.Open()

# Create SQL command
$SqlCommand = $SqlConnection.CreateCommand()
$SqlCommand.CommandText = $Query

# Execute SQL command (Fill returns the row count; discard it to keep the script output clean)
$SqlAdapter = New-Object System.Data.SqlClient.SqlDataAdapter $SqlCommand
$DataSet = New-Object System.Data.DataSet
$SqlAdapter.Fill($DataSet) | Out-Null
$SqlConnection.Close()

# Process the results
$Results = $DataSet.Tables[0]

# Check for new events
if ($Results.Rows.Count -gt 0) {
    # Prepare email content
    $EmailBody = $Results | Out-String
    $smtp = New-Object Net.Mail.SmtpClient($smtpServer, $smtpPort)
    $smtp.EnableSsl = $true
    $smtp.Credentials = New-Object System.Net.NetworkCredential($smtpUsername, $smtpPassword)
    $mailMessage = New-Object Net.Mail.MailMessage($emailFrom, $emailTo)
    $mailMessage.Subject = "Alert: SQL Access in database $Database"
    # Summary line followed by the captured events
    $mailMessage.Body = "SQL Access Alert in database $Database on server $Server since $LastCheck.`r`n`r`n$EmailBody"
    # Send the MailMessage object; SmtpClient.Send has no overload that takes only a body string
    $smtp.Send($mailMessage)

    # Save the current UTC time for the next check (Extended Events timestamps are in UTC)
    (Get-Date).ToUniversalTime().ToString("yyyy-MM-ddTHH:mm:ss.fffffff") | Out-File $LastCheckFile
}

# Remember to schedule this script to run every 5 minutes using Windows Task Scheduler
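The scheduling step mentioned in the last comment can also be done from PowerShell. The following is a minimal sketch, assuming a Windows host where the ScheduledTasks module is available; the script path and task name are hypothetical placeholders, and the account the task runs under is left at its default.

```powershell
# Hypothetical path and task name; replace them with your own values.
$ScriptPath = 'C:\Scripts\Check-SsmsLogins.ps1'
$TaskName   = 'Check-SsmsLogins'

# Run the monitoring script with Windows PowerShell.
$action = New-ScheduledTaskAction -Execute 'powershell.exe' `
    -Argument "-NoProfile -ExecutionPolicy Bypass -File `"$ScriptPath`""

# Start now and repeat every 5 minutes.
$trigger = New-ScheduledTaskTrigger -Once -At (Get-Date) `
    -RepetitionInterval (New-TimeSpan -Minutes 5)

Register-ScheduledTask -TaskName $TaskName -Action $action -Trigger $trigger
```

On some older Windows versions the trigger may also need an explicit -RepetitionDuration value, so check the behavior on your host before relying on it.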

Of course, using SQL auditing or Log Analytics would be another alternative.

Microsoft Tech Community – Latest Blogs

Lesson Learned #474:Identifying and Preventing Unauthorized Application Access to Azure SQL Database

In recent scenarios encountered with our customers, we have come across a specific need: restricting certain users from using SQL Server Management Studio (SSMS) or other applications to connect to a designated database in Azure SQL Database. A common solution in traditional SQL Server environments, like the use of LOGIN TRIGGERS, is not available in Azure SQL Database. This limitation poses a unique challenge in database management and security.

To address this challenge, I’d like to share an alternative that combines the power of Extended Events in Azure SQL Database with PowerShell scripting. This method effectively captures and monitors login events, providing administrators with timely alerts whenever a specified user connects to the database using a prohibited application, such as SSMS.

How It Works

Extended Events Setup: We start by setting up an Extended Event in Azure SQL Database. This event is configured to capture login activities, specifically focusing on the application name used for the connection. By filtering for certain applications (like SSMS), we can track unauthorized access attempts.

PowerShell Script: A PowerShell script is then employed to query these captured events at regular intervals. This script connects to the Azure SQL Database, retrieves the relevant event data, and checks for any instances where the specified users have connected via the restricted applications.

Email Alerts: Upon detecting such an event, the PowerShell script automatically sends an email notification to the database administrator. This alert contains details of the unauthorized login attempt, such as the timestamp, username, and application used. This prompt information allows the administrator to take immediate corrective measures.

Advantages

Proactive Monitoring: This approach provides continuous monitoring of the database connections, ensuring that any unauthorized access is quickly detected and reported.

Customizable: The method is highly customizable. Administrators can specify which applications to monitor and can easily adjust the script to cater to different user groups or connection parameters.

No Direct Blocking: While this method does not directly block the connection, it provides immediate alerts, enabling administrators to react swiftly to enforce compliance and security protocols.

This article provides a high-level overview of how to implement this solution. For detailed steps and script examples, administrators are encouraged to tailor the approach to their specific environment and requirements.

Extended Event

CREATE EVENT SESSION Track_SSMS_Logins

ON DATABASE

ADD EVENT sqlserver.sql_batch_starting(

ACTION(sqlserver.client_app_name, sqlserver.client_hostname, sqlserver.username, sqlserver.session_id)

WHERE (sqlserver.client_app_name LIKE ‘%Management Studio%’)

)

ADD TARGET package0.ring_buffer

(SET max_events_limit = 1000, max_memory = 4096)

WITH (EVENT_RETENTION_MODE = NO_EVENT_LOSS, MAX_DISPATCH_LATENCY = 5 SECONDS);

GO

ALTER EVENT SESSION Track_SSMS_Logins ON DATABASE STATE = START;

Query to run using ring buffers

SELECT

n.value(‘(@timestamp)[1]’, ‘datetime2’) AS TimeStamp,

n.value(‘(action[@name=”client_app_name”]/value)[1]’, ‘varchar(max)’) AS Application,

n.value(‘(action[@name=”username”]/value)[1]’, ‘varchar(max)’) AS Username,

n.value(‘(action[@name=”client_hostname”]/value)[1]’, ‘varchar(max)’) AS HostName,

n.value(‘(action[@name=”session_id”]/value)[1]’, ‘int’) AS SessionID

FROM

(SELECT CAST(target_data AS xml) AS event_data

FROM sys.dm_xe_database_session_targets

WHERE event_session_address =

(SELECT address FROM sys.dm_xe_database_sessions WHERE name = ‘Track_SSMS_Logins’)

AND target_name = ‘ring_buffer’) AS tab

CROSS APPLY event_data.nodes(‘/RingBufferTarget/event’) AS q(n);

Powershell Script

# Connection configuration
$Database = "DBNAme"
$Server = "Servername.database.windows.net"
$Username = "username"
$Password = "pwd!"
$emailFrom = "EmailFrom@ZYX.com"
$emailTo = "EmailTo@XYZ.com"
$smtpServer = "smtpservername"
$smtpUsername = "smtpusername"
$smtpPassword = "smtppassword"
$smtpPort = 25
$ConnectionString = "Server=$Server;Database=$Database;User Id=$Username;Password=$Password;"

# Last check date (Extended Events timestamps are in UTC)
$LastCheckFile = "C:\temp\LastCheck.txt"
$LastCheck = Get-Content $LastCheckFile -ErrorAction SilentlyContinue
if (!$LastCheck) {
    # First run: start from the earliest value datetime2 can hold
    $LastCheck = "0001-01-01T00:00:00"
}

# SQL query: return only the events newer than the last one already reported
$Query = @"
SELECT
    n.value('(@timestamp)[1]', 'datetime2') AS TimeStamp,
    n.value('(action[@name="client_app_name"]/value)[1]', 'varchar(max)') AS Application,
    n.value('(action[@name="username"]/value)[1]', 'varchar(max)') AS Username,
    n.value('(action[@name="client_hostname"]/value)[1]', 'varchar(max)') AS HostName,
    n.value('(action[@name="session_id"]/value)[1]', 'int') AS SessionID
FROM
    (SELECT CAST(target_data AS xml) AS event_data
     FROM sys.dm_xe_database_session_targets
     WHERE event_session_address =
           (SELECT address FROM sys.dm_xe_database_sessions WHERE name = 'Track_SSMS_Logins')
       AND target_name = 'ring_buffer') AS tab
CROSS APPLY event_data.nodes('/RingBufferTarget/event') AS q(n)
WHERE
    n.value('(@timestamp)[1]', 'datetime2') > '$LastCheck'
ORDER BY TimeStamp
"@

# Create and open SQL connection
$SqlConnection = New-Object System.Data.SqlClient.SqlConnection
$SqlConnection.ConnectionString = $ConnectionString
$SqlConnection.Open()

# Create SQL command
$SqlCommand = $SqlConnection.CreateCommand()
$SqlCommand.CommandText = $Query

# Execute SQL command (Fill returns the row count, which is discarded)
$SqlAdapter = New-Object System.Data.SqlClient.SqlDataAdapter $SqlCommand
$DataSet = New-Object System.Data.DataSet
[void]$SqlAdapter.Fill($DataSet)
$SqlConnection.Close()

# Process the results
$Results = $DataSet.Tables[0]

# Check for new events
if ($Results.Rows.Count -gt 0) {
    # Prepare email content
    $EmailBody = $Results | Format-Table TimeStamp, Application, Username, HostName, SessionID -AutoSize | Out-String -Width 200
    $smtp = New-Object Net.Mail.SmtpClient($smtpServer, $smtpPort)
    $smtp.EnableSsl = $true
    $smtp.Credentials = New-Object System.Net.NetworkCredential($smtpUsername, $smtpPassword)
    $mailMessage = New-Object Net.Mail.MailMessage($emailFrom, $emailTo)
    $mailMessage.Subject = "Alert: SQL Access in database $Database"
    $mailMessage.Body = "SQL Access Alert in database $Database on server $Server since $LastCheck (UTC).`r`n`r`n$EmailBody"
    $smtp.Send($mailMessage)

    # Save the timestamp of the newest reported event (UTC) for the next check
    $LastEvent = $Results.Rows[$Results.Rows.Count - 1]["TimeStamp"]
    $LastEvent.ToString("yyyy-MM-ddTHH:mm:ss.fffffff", [System.Globalization.CultureInfo]::InvariantCulture) | Out-File $LastCheckFile
}

# Remember to schedule this script to run every 5 minutes using Windows Task Scheduler

Of course, using SQL auditing or Log Analytics is another alternative.


Validate your skills with our new certification for Microsoft Fabric Analytics Engineers

We’re looking for Microsoft Fabric Analytics Engineers to take our new beta exam. Do you have subject matter expertise in designing, creating, and deploying enterprise-scale data analytics solutions? If so, and if you know how to transform data into reusable analytics assets by using Microsoft Fabric components, such as lakehouses, data warehouses, notebooks, dataflows, data pipelines, semantic models, and reports, be sure to check out this exam. Other helpful qualifications include the ability to implement analytics best practices in Fabric, including version control and deployment.

If this is your skill set, we have a new certification for you. The Microsoft Certified: Fabric Analytics Engineer Associate certification validates your expertise in this area and offers you the opportunity to prove your skills. To earn this certification, pass Exam DP-600: Implementing Analytics Solutions Using Microsoft Fabric, currently in beta.

Is this the right certification for you?

This certification could be a great fit if you have in-depth familiarity with the Fabric solution and you have experience with data modeling, data transformation, Git-based source control, exploratory analytics, and languages, including Structured Query Language (SQL), Data Analysis Expressions (DAX), and PySpark.

Review the Exam DP-600 (beta) page for details, and check out the self-paced learning paths and instructor-led training there. The Exam DP-600 study guide alerts you to key topics covered on the exam.

Ready to prove your skills?

Take advantage of the discounted beta exam offer. The first 300 people who take Exam DP-600 (beta) on or before January 25, 2024, can get 80 percent off market price.

To receive the discount, when you register for the exam and are prompted for payment, use code DP600Winfield. This is not a private access code. The seats are offered on a first-come, first-served basis. As noted, you must take the exam on or before January 25, 2024. Please note that this beta exam is not available in Turkey, Pakistan, India, or China.

The rescore process starts on the day an exam goes live, 8 to 12 weeks after the beta period, and final scores for beta exams are released approximately 10 days after that. For details on the timing of beta exam rescoring and results, read my post Creating high-quality exams: The path from beta to live.

Get ready to take Exam DP-600 (beta)

Explore the Fabric Career Hub. Access live training, skills challenges, group learning, and career insights.

Join the Fabric Cloud Skills Challenge. Complete all modules in the challenge within 30 days and become eligible for 50% off the cost of a Microsoft Certification exam. This 50% discount can’t be used toward the Exam DP-600 (beta). If you miss the beta period, you can use it later once the exam goes live or for another live certification exam.

Looking for in-depth training? Check out the new course Microsoft Fabric Analytics Engineer. Connect with Microsoft Training Services Partners in your area for in-person training.

Need other preparation ideas? Check out my blog post Just How Does One Prepare for Beta Exams?

Did you know that you can take any role-based exam online? Online delivered exams—taken from your home or office—can be less hassle, less stress, and even less worry than traveling to a test center, especially if you’re adequately prepared for what to expect. To find out more, check out my blog post Online proctored exams: What to expect and how to prepare.

Ready to get started?

Remember, the number of spots for the discounted beta exam offer is limited to the first 300 candidates taking Exam DP-600 (beta) on or before January 25, 2024.

Related announcements

9 ways Microsoft Learn helps you with the skills-first economy

Introducing a new resource for all role-based Microsoft Certification exams

Microsoft Learn: Four key features to help expand your knowledge and advance your career

Meet learners who changed their career with the help of Microsoft Learn

Introducing Automatic File and URL (Detonation) Analysis

The Microsoft Defender Threat Intelligence (MDTI) team continuously adds new threat intelligence capabilities to MDTI and Defender XDR, giving customers new ways to hunt, research, and contextualize threats.

Today, we are excited to share a new feature that enhances our file and URL analysis (detonation) capabilities in the threat intelligence blade within the Defender XDR user interface. If MDTI cannot return any results when a customer searches for a file or URL, MDTI now automatically detonates it to improve search coverage and add to our corpus of knowledge of the global threat landscape.

Here’s how it works:

The detonation request for the searched file or URL entity is processed asynchronously in the background in the United States region.

If the end user is not served reputation and detonation results at the time of the search request, a subsequent search request for the same entity is initiated in the background.

Although there are no fixed SLAs regarding the volume and availability of the auto-detonated results, we aim to provide the results within 2 hours, depending on the load.

Next time you search and don’t find anything, don’t worry. The system is working in the background to give you better results later!
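The flow described above (a search miss queues a background detonation, and a later search returns the result) can be pictured with a small simulation. This is only a sketch under stated assumptions: `ThreatIntelIndex`, `fake_detonate`, and the sample URL are hypothetical names invented for illustration, and nothing here calls or reflects the actual MDTI service or its API.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Deque, Dict, Optional


def fake_detonate(entity: str) -> str:
    """Hypothetical detonation step: returns a placeholder, not real analysis."""
    return f"detonation report for {entity}"


@dataclass
class ThreatIntelIndex:
    """Toy in-memory stand-in for a threat intelligence search service."""

    results: Dict[str, str] = field(default_factory=dict)
    pending: Deque[str] = field(default_factory=deque)

    def search(self, entity: str) -> Optional[str]:
        """Return a stored result, or queue a background detonation on a miss."""
        if entity in self.results:
            return self.results[entity]
        if entity not in self.pending:
            self.pending.append(entity)  # queue each entity only once
        return None

    def process_pending(self) -> int:
        """Simulate the asynchronous background worker draining the queue."""
        processed = 0
        while self.pending:
            entity = self.pending.popleft()
            self.results[entity] = fake_detonate(entity)
            processed += 1
        return processed


index = ThreatIntelIndex()
first = index.search("hxxp://example.test/payload")   # miss: nothing known yet
index.process_pending()                                # background work happens later
second = index.search("hxxp://example.test/payload")  # now served from stored results
print(first, "->", second)
```

In this toy model the first search returns nothing and the second returns the stored report, which mirrors the "search again later" behavior described in the post. The real service processes requests on its own schedule and offers no fixed SLA.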

Next steps

Whether you are just kick-starting a threat intelligence program or looking to augment your existing threat intelligence toolset, the MDTI standard version can add critical context to your existing security investigations, keep your organization informed on current threats through leading research and intel profiles, provide crucial brand intelligence, and help you to collect powerful threat intelligence associated with your organization or others in your industry – all free of charge.

To learn more about how you and your organization can leverage MDTI, watch our overview video and follow our “Become an MDTI Ninja” training path today.

December ’23 Monthly M365 Webinar – Microsoft Collaboration Framework

Dan Carroll and Richard Wakeman supported a great discussion around the Microsoft Collaboration Framework and explored the current state of collaboration capabilities across your partner ecosystem.

Recording here: https://www.microsoft.com/en-us/videoplayer/embed/RW1g6Zt

All GCCH M365 Webinar Recordings Here!

December ’23, Microsoft Collaboration Framework Office Hours – https://www.microsoft.com/en-us/videoplayer/embed/RW1g6Zt

October ’23, New Channels Experience and New Webhook Connector in Teams – https://www.microsoft.com/en-us/videoplayer/embed/RW1dYng

September ’23, New Teams App – https://www.microsoft.com/en-us/videoplayer/embed/RW1c5kr

August ’23, Teams Phone Device Update and Cross-Cloud Teams Collaboration Capabilities – https://www.microsoft.com/en-us/videoplayer/embed/RW1aCjk

June ’23, Demystifying Task Management in M365 – https://www.microsoft.com/en-us/videoplayer/embed/RW16V80

June ’23, Teams Premium – https://www.microsoft.com/en-us/videoplayer/embed/RW16yZi

May ’23, M365 Search and Search Analytics, Teams Panels – https://www.microsoft.com/en-us/videoplayer/embed/RW14RyF

April ’23, Company Communicator and Viva Connections – https://www.microsoft.com/en-us/videoplayer/embed/RW12prs

March ’23, Cross-Cloud Collaboration and Viva Personal Insights – https://www.microsoft.com/en-us/videoplayer/embed/RW10qiX

February ’23, 1st and 3rd Party App Teams Integration Update, Learning Pathways, and New and Coming Features – https://www.microsoft.com/en-us/videoplayer/embed/RWXhnb

January ’23, Teams as a Platform Overview (intranet concept), OneDrive session #2, and @mention functionality – https://www.microsoft.com/en-us/videoplayer/embed/RWWURs

December ’22, Cross-Cloud Collaboration Overview, OneDrive for Sharing and Teams Integration, Teams Phone and CQD Update – https://www.microsoft.com/en-us/videoplayer/embed/RE5dWYA

November ’22, App Integration with Teams, Teams Meeting Options, Microsoft Whiteboard – https://www.microsoft.com/en-us/videoplayer/embed/RE5dkYn

Announcing Public Preview of Confidential VMs with Intel TDX in Azure Virtual Desktop

We are excited to announce that Azure Virtual Desktop now supports the public preview of DCesv5 and ECesv5-series confidential VMs. These confidential VMs are powered by 4th Gen Intel® Xeon® Scalable processors with Intel® Trust Domain Extensions (Intel® TDX) and enable organizations to bring confidential workloads to the cloud without code changes to applications. Through the gated preview, we continued to enhance performance with our Intel partnership. These new virtual machines are up to 20% faster than 3rd Gen Intel Xeon virtual machines, and we expect performance for I/O intensive workloads to continue to improve as the technology matures.

Azure confidential VMs (CVMs) offer VM memory encryption with integrity protection, which strengthens guest protections to deny the hypervisor and other host management components code access to the VM memory and state. For additional CVM security benefits, please see the CVM documentation.

For more information on AVD’s support for confidential VMs, please see this blog.

For more information about Intel TDX confidential VMs, please see this blog.

Note: Intel TDX is offered in Europe West, Central US, and East US 2 regions. Europe North will be available in January 2024.

How to deploy Intel TDX Confidential VMs in AVD Host Pool Provisioning

For Virtual machine location, select “Europe West”, “Central US”, or “East US 2”.

Select Confidential Virtual Machines from the Security Type dropdown in the AVD Host Pool Virtual Machine blade.

From there, go down to Virtual machine size, and click the “Change size” link.

You are then directed to a table listing all available SKUs. Make sure that “Type” at the top is set to “Confidential Compute”.

Expand the DC or EC-Series categories and select any of the DCesv5/ECesv5 SKUs appropriate for your needs.
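The choices in the steps above can be captured as a small pre-deployment check. This is a minimal sketch, not an Azure API call: the function name `check_host_pool_choices` is hypothetical, the region strings are copied from this post, and the size-name pattern (for example `Standard_DC4es_v5`) is an assumption about how DCesv5/ECesv5 SKUs are named that should be confirmed against the size table in the portal.

```python
import re
from typing import List

# Regions this post lists as offering Intel TDX during the preview.
TDX_PREVIEW_REGIONS = {"Europe West", "Central US", "East US 2"}

# Assumed naming pattern for DCesv5/ECesv5 sizes; verify in the portal.
TDX_SKU_PATTERN = re.compile(r"^Standard_(DC|EC)\d+es_v5$")

# Security type label as written in the steps above.
REQUIRED_SECURITY_TYPE = "Confidential Virtual Machines"


def check_host_pool_choices(region: str, security_type: str, vm_size: str) -> List[str]:
    """Return a list of problems with the selections; empty means they match the steps."""
    problems = []
    if region not in TDX_PREVIEW_REGIONS:
        problems.append(f"region '{region}' is not in the listed preview regions")
    if security_type.casefold() != REQUIRED_SECURITY_TYPE.casefold():
        problems.append(f"security type must be '{REQUIRED_SECURITY_TYPE}'")
    if not TDX_SKU_PATTERN.match(vm_size):
        problems.append(f"size '{vm_size}' does not look like a DCesv5/ECesv5 SKU")
    return problems


print(check_host_pool_choices("Central US", "Confidential Virtual Machines", "Standard_DC4es_v5"))
print(check_host_pool_choices("Europe North", "Standard", "Standard_D4s_v5"))
```

An empty list means the three selections are consistent with the steps. Because the region list reflects the preview announcement, it would need updating as availability changes.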

Getting Started

Visit Azure Virtual Desktop to learn more about the various benefits AVD provides and to begin your first deployment.

Visit Create a host pool – Azure Virtual Desktop to start deploying your first confidential VM in Azure Virtual Desktop through the Azure Portal. For more information about any of these features, please visit Azure Virtual Desktop security best practices – Azure.

Continue the conversation. Find best practices. Bookmark the Azure Virtual Desktop Community. Have feedback on the service? Share your thoughts and upvote others on the Azure Virtual Desktop Feedback board.

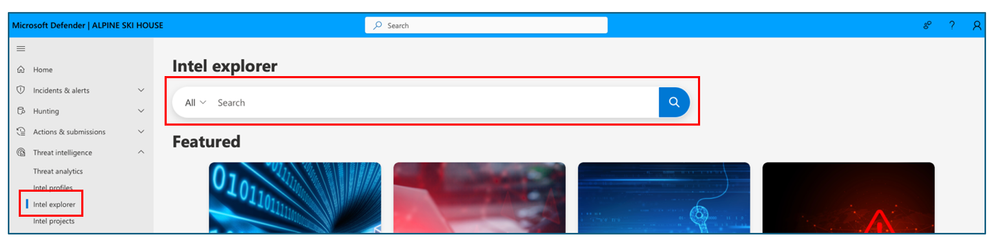

Identity in focus: Exploring the new ITDR experience within Microsoft Defender

Earlier this year I shared the news that the features and functionality of Microsoft Defender for Identity had been converged into Microsoft Defender XDR and were now a core part of that experience. Today I am excited to discuss some new enhancements to how our customers can find and engage with their Identity security capabilities.

New navigation

Identities have become an inherent part of modern security, and the latest update to the Microsoft Defender XDR navigation further elevates Identity security within the SOC experience with a new dedicated section for the domain. As illustrated in the image below, Defender for Identity customers will now see a section titled “Identities”, which today encapsulates three new identity-specific pages or views.

1. ITDR Dashboard

The new ITDR dashboard is designed to provide SOC professionals with a single, prioritized view of Identity-specific security information and recommendations. Pulling relevant alerts and insights from across their identity footprint, this pane helps SOC teams better understand their identity posture and quickly manage potential identity-related security risks.

The page itself is broken down into three main areas. At the top, users benefit from a visual representation of their unique identity landscape, breaking down the number and location of corporate identities across Entra ID, on-premises Active Directory, and hybrid identities.

Just below this area is a section dedicated to critical recommended actions. Here users will see important steps they should take immediately to minimize risks, such as eliminating lateral movement paths and removing dormant accounts from sensitive groups.

The bottom section of the page consists of different cards, each offering security professionals a focused view into a specific element of their ITDR practice. These widgets offer identity-specific filters of broader security capabilities and serve as a jumping-off point into other areas of the Defender XDR portal. For example, the “identity posture” card surfaces the Identity recommendations within Secure Score, and the “Identity-Related Incidents” card highlights security incidents with identity elements or alerts. There are also some exciting new features available through these cards, like the “highly privileged identities” widget, which summarizes sensitive accounts within the environment, including Entra ID security administrators and global admin users. This consolidated view will give SOC teams additional insight to implement more targeted and effective management strategies, helping enhance the organization’s overall security posture. Similarly, the “deployment health” card offers insight into both the deployment status and the overall health of Defender for Identity agents across the environment, and also sheds some light on available licenses for Defender for Identity and Entra ID Protection.

For more information about this page and the available widgets, see the documentation here.

2. Health Issues