Microsoft Learn AI Skills Challenge Pitch Winner: Watch Out

The Microsoft Learn AI Cloud Skills Challenge held in July wrapped up an incredible learning journey with the AI Pitch Challenge, a showcase of innovation where passionate learners brought their visions to life through the power of AI. These creators shared how they would harness Microsoft’s AI technology to craft solutions for the future in a 3-minute video pitch. Out of many, five outstanding winners emerged, each with a unique and compelling vision.

This series of blog posts spotlights each creator sharing the transformative potential of their ideas.

Hello! I’m Ahmet Dedeler, a 16-year-old high school junior from Turkey, and I’m eager to share with you not just my latest project, “Watch Out,” but also my journey in the tech world. My adventure began with a simple curiosity about coding. Python and JavaScript were my initial gateways, but they quickly became much more than just programming languages. They were the tools that helped me understand the power of technology in solving real-world issues.

From Hackathons to Hosting One

My enthusiasm for coding swiftly led me to the world of hackathons. These weren’t just competitions; they were platforms where I could test my skills, innovate, and learn from peers. Winning a bunch of hackathons was a thrilling experience, each victory not just an achievement but a stepping stone to something greater.

This journey through numerous hackathons sparked an idea – why not host my own? Thus, “Boost Hacks” was born. It was a leap from participant to organizer, from learner to leader. The event was a massive success, with 800 participants, 85 innovative projects, and a staggering $180,000 in prizes. This wasn’t just about organizing an event; it was about creating a space for like-minded individuals to collaborate, innovate, and push the boundaries of technology.

Unveiling “Watch Out”: A Vision for Safer Communities

“Watch Out” is born from a desire to enhance community safety through the power of AI. It’s an AI-driven system that uses Computer Vision to detect and alert people about potential safety hazards in their surroundings – from fallen trees to damaged sidewalks.

How “Watch Out” Works

The system operates by analyzing live street footage, continuously scanning for anomalies or potential dangers. When it detects a hazard, it immediately notifies local authorities and emergency services, ensuring quick action and a safer environment for everyone.

The Inspiration Behind the Project

The idea for “Watch Out” came from observing everyday community challenges. I wanted to create a solution that not only leverages technology but also actively involves the community in promoting safety.

The Tech Behind the Vision

Developing “Watch Out” involved several Microsoft AI technologies. The core of the project is Microsoft’s Custom Vision, a tool that enabled me to train an AI model to recognize various safety hazards with high precision.

Favorite Microsoft AI Technology

Among all the technologies I explored, Microsoft’s Custom Vision stood out. Its user-friendly interface and powerful capabilities made it not just a tool for development, but a learning experience that was both challenging and rewarding.

Looking Ahead: My Future Vision and Aspirations

Looking towards the future, my goal is to blend my coding skills with my enthusiasm for meaningful projects. “Watch Out” is a stepping stone into a world where technology serves humanity. I am excited about refining this project and exploring new technological frontiers. My aspiration is to create solutions that leave a lasting, positive impact on society.

Join me in this journey of innovation and discovery, where we’re not just coding for the sake of technology, but for building a smarter, safer, and more connected world. My story is one of a young mind’s passion for technology and a heart for community service, and I believe this is just the beginning.

Feeling inspired? The Microsoft Learn AI Skills Challenge may have ended but the learning never stops! Get started with an AI Learning Path and find a new Microsoft Learn Cloud Skills Challenge to join. Transform your innovative ideas into reality with Azure credits through the Founders Hub. And for the students who dream of making an impact, the Imagine Cup is currently underway!

Microsoft Tech Community – Latest Blogs –Read More

Benefits of moving to Azure Monitor SCOM managed instance

This blog highlights the cost benefits of moving from your existing on-premises SCOM deployment to Azure Monitor SCOM managed instance (SCOM MI).

If you are using System Center Operations Manager (SCOM) to monitor on-premises and hybrid cloud environments, you might be wondering whether you should migrate to Azure Monitor SCOM managed instance or keep your SCOM on-premises deployment. In this blog, we will compare the two options in terms of cost (savings of up to 44% when fully migrated to SCOM MI) and help you make an informed decision based on your specific needs and goals.

What is Azure Monitor SCOM managed instance?

Azure Monitor SCOM managed instance is a cloud-based service that provides the same functionality as SCOM on-premises, but without the hassle of managing and maintaining the infrastructure. You can use SCOM MI to monitor your resources on and off Azure, as well as integrate with other Azure services such as Log Analytics, Azure Managed Grafana, and Power BI. SCOM MI is fully compatible with your existing SCOM management packs and agents*, so you can migrate your existing monitoring configuration and data with minimal disruption.

What are the cost benefits of Azure Monitor SCOM managed instance?

Azure Monitor SCOM MI offers several cost benefits over SCOM on-premises, such as:

Reduced infrastructure & maintenance costs: You don’t need to maintain infrastructure such as server racks, network cables, electricity, cooling, physical security, or a datacenter lease. Moreover, hardware infrastructure depreciates over time. SCOM MI runs on Azure’s scalable and reliable infrastructure, which means you only pay for what you use, and you don’t have to worry about downtime or performance issues.

You can save further on Azure infrastructure with savings plans and reserved instances.

Reduced IT labor costs: SCOM MI is fully managed by Microsoft, which means you get updates, patches, scalability, and security. Because you don’t need to retrain your staff on SCOM management packs, and the effort required to provision, patch, and scale the SCOM MI service is significantly lower, we estimate a ~40% reduction in the time (labor cost) required to maintain and operate SCOM MI.

Optimized licensing costs: You don’t need to purchase, renew, or manage any licenses for your monitoring solution. SCOM MI is offered on a pay-as-you-go (PAYG) model, which means you only pay a monthly fee based on the number of monitored objects and the amount of data ingested. You also get access to all the features and capabilities of Azure Monitor, which can enhance your monitoring experience and provide additional insights and value.

For more information on SCOM MI licensing, refer here.

To illustrate the cost benefits of SCOM MI, we have created a comparison table of the estimated annual costs for a typical scenario of monitoring 500 VMs. The table does not include optional SCOM MI integrations, such as data ingestion to Log Analytics or usage of Grafana.

Disclaimer: The table below includes representative numbers only. For accurate Azure costs, refer to Pricing Calculator | Microsoft Azure. We also assume that the migration from SCOM to SCOM MI is completed quickly (<3 months) rather than run as a long-term migration project.

| Cost category | SCOM on-premises | Azure Monitor SCOM managed instance |
| --- | --- | --- |
| Infrastructure (hardware + software) | $13,812 (annually). To monitor 500 VMs, you need 2 SCOM servers with Windows OS, 1 SQL server with Windows OS, server racks, storage disks, etc. | $27,780 (no discount) or $12,586 (max discount) |
| Maintenance cost (security, lease, electricity, network, etc.) | $4,443 (annually) | $0 (included under infrastructure cost) |
| IT labor cost (administration) | $116,800 (annually) | $70,080 (annually) |
| Licensing | $12,625 (if all SC products are used) or $75,747 (if only SCOM is used). The System Center license to manage 500 VMs is $75,747; if you are using all SC products, the operating license cost for SCOM is at its lowest ($12,625). | $36,000 (annually). The SCOM MI license is $6/VM/month. |
| Annual cost range | $147,680 to $210,802 | $118,666 to $133,860 |

Cost savings (once you move to SCOM MI to monitor 500 VMs), computed from the annual cost ranges above:

20% if all SC products are used and maximum Azure discounts are applied

36% if only SCOM on-premises is used and no Azure discounts are applied

44% if only SCOM on-premises is used and maximum Azure discounts are applied

As you can see, Azure Monitor SCOM managed instance can save you up to 44% of the total costs of SCOM on-premises, provided you migrate to SCOM MI quickly. Of course, your actual costs may vary depending on your specific requirements and preferences, but the table gives you a general idea of the potential savings you can achieve by migrating to Azure Monitor SCOM managed instance. If you are interested in moving other System Center products to Azure and want to know the cost analysis, we recommend you build a Business case with Azure Migrate | Microsoft Learn.
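If you want to re-run the arithmetic with your own numbers, the totals and savings percentages can be recomputed from the table's figures. The sketch below is only an illustration: the dollar amounts are copied from the table, while the function and dictionary names are made up for this example.

```python
# Annual figures copied from the comparison table (USD, 500 VMs).
ON_PREM_FIXED = 13_812 + 4_443 + 116_800  # infrastructure + maintenance + IT labor
ON_PREM_LICENSE = {"all_sc_products": 12_625, "scom_only": 75_747}

SCOM_MI_LABOR = 70_080
SCOM_MI_LICENSE = 6 * 500 * 12  # $6 per VM per month
SCOM_MI_INFRA = {"no_discount": 27_780, "max_discount": 12_586}


def on_prem_total(licensing: str) -> int:
    """Annual on-premises cost for the given licensing scenario."""
    return ON_PREM_FIXED + ON_PREM_LICENSE[licensing]


def scom_mi_total(discount: str) -> int:
    """Annual SCOM MI cost for the given Azure discount scenario."""
    return SCOM_MI_INFRA[discount] + SCOM_MI_LABOR + SCOM_MI_LICENSE


def savings_pct(licensing: str, discount: str) -> float:
    """Percentage saved by moving from on-premises SCOM to SCOM MI."""
    before = on_prem_total(licensing)
    after = scom_mi_total(discount)
    return 100 * (before - after) / before


if __name__ == "__main__":
    for licensing in ON_PREM_LICENSE:
        for discount in SCOM_MI_INFRA:
            pct = savings_pct(licensing, discount)
            print(f"{licensing:16} {discount:13} {pct:5.1f}%")
```

Swapping in your own VM count, labor estimate, or negotiated discount shows quickly how sensitive the savings are to each input.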

How to get started with Azure Monitor SCOM managed instance?

If you are interested in trying out Azure Monitor SCOM managed instance, you can start here. You should talk to your Microsoft sales representative for clarity on plausible discounts and actual cost savings.

If you have any questions or feedback, you can leave your comments below. We would love to hear from you and help you with your monitoring needs.

*SCOM 2022 Agent (as of Feb’24).

References

Pricing Calculator | Microsoft Azure

Microsoft System Center | Microsoft Licensing Resources

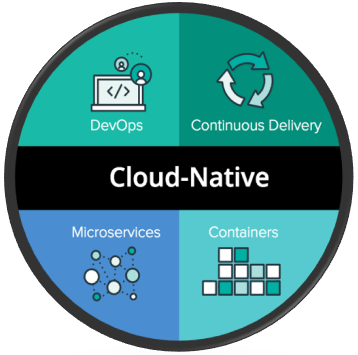

Step by Step Practical Guide to Architecting Cloud Native Applications

This is a step-by-step, no-fluff guide to building and architecting cloud native applications.

Cloud application development introduces unique challenges: applications are distributed, scale horizontally, communicate asynchronously, have automated deployments, and are built to handle failures resiliently. This demands a shift in both technical approach and mindset.

So Cloud Native is not about technologies. If you can do all of the above, then you are Cloud Native. You don’t have to use Kubernetes, for instance, to become Cloud Native.

Steps:

Say you want to architect an application in the cloud, and let’s use an e-commerce website as an example. How do you go about it, and what decisions do you need to make?

Regardless of the type of application, we have to go through six distinct steps:

Define Business Objectives.

Define Functional and Non-Functional Requirements.

Choose an Application Architecture.

Make Technology Choices.

Code using Cloud Native Design Patterns.

Adhere to the Well-Architected Framework.

Business Objectives:

Before starting any development, it’s crucial to align with business objectives, ensuring every feature and decision supports these goals. While microservices are popular, their complexity isn’t always discussed.

It’s essential to evaluate if they align with your business needs, as there’s no universal solution. For external applications, the focus is often on enhancing customer experience or generating new revenue. Internal applications, however, aim to reduce operational costs and support queries.

For instance, in E-commerce, improving customer experience may involve enhancing user interfaces or ensuring uptime, while creating new revenue streams could require capabilities in data analytics and product recommendations.

By first establishing and then rigorously adhering to the business objectives, we ensure that every functionality in the application, whether it serves external customers or internal users, delivers real value.

Functional and Non-Functional Requirements:

Now that we understand and are aligned with the business objectives, we go one step further and get more specific by defining functional and non-functional requirements.

Functional requirements define the functionalities you will have in your application:

Users should be able to create accounts, sign in, and there should be password recovery mechanisms.

The system should allow users to add, modify, or delete products.

Customers should be able to search for products and filter based on various criteria.

The checkout process should support different payment methods.

The system should check order status from processing to delivery.

Customers should be able to rate products & leave reviews.

Non-functional requirements define the “quality” of the application:

Application must support hundreds of concurrent users.

The application should load pages in under 500ms even under heavy load.

Application must be able to scale during peak times.

All internal communication between systems should happen through private IPs.

Application should be responsive to adapt to different devices.

What SLA will we support, what RTO & RPO are we comfortable with?
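To make the SLA question more tangible, it helps to translate a percentage into the downtime it actually allows. The snippet below is a small sketch of that arithmetic. The function names are made up for this example, and the composite calculation assumes the services fail independently and that all of them must be up for a request to succeed.

```python
def allowed_downtime_minutes(sla_percent: float, days: int = 30) -> float:
    """Minutes of downtime permitted over `days` at the given SLA."""
    total_minutes = days * 24 * 60
    return total_minutes * (1 - sla_percent / 100)


def composite_sla(*sla_percents: float) -> float:
    """SLA of a request path that needs every listed service to be up."""
    availability = 1.0
    for sla in sla_percents:
        availability *= sla / 100
    return availability * 100


if __name__ == "__main__":
    for sla in (99.0, 99.9, 99.99):
        minutes = allowed_downtime_minutes(sla)
        print(f"{sla}% over 30 days allows {minutes:.1f} minutes of downtime")
    # A web tier and a database chained together are less available than either alone.
    print(f"Composite SLA: {composite_sla(99.95, 99.99):.4f}%")
```

The composite number is the reason every additional dependency on the request path should be weighed against the SLA you promised.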

Application Architecture:

We understand the business objectives, we are clear on what functionalities our application will support, and we understand the constraints our applications need to adhere to.

Now, it is time to make a decision on the Application Architecture. For simplicity, you either choose a Monolith or a Microservices architecture.

Monolith: A big misconception is that all monoliths are big balls of mud; that is not necessarily the case. Monolith simply means that the entire application, with all of its functionalities, is hosted in a single deployment instance. The internal architecture, though, can be designed to keep the components of different subsystems isolated from each other, with calls between them made as in-process function calls as opposed to network calls.

Microservices: Modularity has always been a best practice in software development, whether you use a Monolith (logical modularity) or Microservices (physical modularity). Microservices is when the functionalities of your application are physically separated into different services communicating over network calls.

What to pick: Choose microservices if technical debt is high and your codebase has become so large that feature releases are slow, affecting market responsiveness, or if your application components need different scaling approaches. Conversely, if your app updates infrequently and doesn’t require detailed scalability, the investment in microservices, which introduces its own challenges, might not be justified.

Again, this is why we start with business objectives and requirements.

Technology Choices:

It is time to make some technology choices. We won’t be architecting yet, but we need to get clear on what technologies we will choose, which is now much easier because we understand the application architecture and requirements.

Encompassing all technology choices, you should be thinking about containers if you are architecting cloud native applications. Away from all the fluff, this is how containers work: The code, dependencies, and run-time are all packaged into a binary (an executable package) known as an image. That binary or image is stored in a container registry and you can run this binary on any computer that has a supported container runtime (such as Docker). With that, you have a portable and lightweight deployable package.

Compute:

N-Tier: If you are going with N-tier (a simple Web, Backend, Database for instance), the recommended option for compute is to go with a PaaS Managed service such as Azure App Service. The decision point ultimately comes down to control & productivity. IaaS gives you the most control, but least productivity as you have to manage many things. It is also easier to scale managed services as they often come with an integrated load balancer as opposed to configuring your own.

Microservices: On the other hand, the most common platform for running Microservices is Kubernetes. The reason is that Microservices are often developed using containers, and Kubernetes is the most popular container orchestrator, which can handle things such as self-healing, scaling, load balancing, service-to-service discovery, etc. The other option, however, is Azure Container Apps, a layer of abstraction above Kubernetes that allows you to run multiple containers at scale without managing Kubernetes. If you require access to the Kubernetes API, Container Apps is not a good option. Many apps, however, don’t require access to the Kubernetes API, so managing the cluster is not worth the effort and Container Apps is a good choice. You can run microservices in a PaaS service such as Azure App Service, but it becomes difficult to manage, specifically from a CI/CD perspective.

Storage

Blob Storage: Use Blobs if you want to store unstructured objects like images. Calls are made over HTTPS and blobs are generally inexpensive.

Files: If your applications would like native file shares, you can use services such as Azure Files which is accessible over SMB and HTTPS. For containers that want persistent volume, Azure Files is very popular.

Databases:

Most applications model data. We represent different entities in our applications (users, products) using objects with certain properties (name, title, description).

When we pick a database, we need to consider how we will represent our data model (JSON, tables, key/value pairs). In addition, we need to understand the relationships between different models, scalability, multi-regional replication, schema flexibility, and of course, team skillsets.

Relational Databases: Use these when you want to ensure strong consistency, you want to enforce a schema, and you have a lot of relationships between data (especially many-to-one and many-to-many).

Document Database: Use this if you want a flexible schema, you primarily have one-to-many relationships, i.e. a tree hierarchy (such as posts with multiple comments), and you are dealing with millions of transactions per second.

Key/Value Store: Suitable for simple lookups. These stores are extremely fast and extremely scalable, which makes them an excellent fit for caching.

Graph Databases: Great for complex relationships and ones that change over time. Data is organized into nodes and edges. Building a recommendation engine for an e-commerce application to implement “frequently bought together” would probably use a Graph Database under the hood.

One of the advantages of Microservices is that each service can pick its own database. A product service with reviews follows a tree-like structure (a one-to-many relationship) and would fit a document database. An inventory service, on the other hand, where each product can belong to one or more categories and each order can contain multiple products (many-to-many relationships), might use a relational database.
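The contrast between the two data models is easier to see side by side. The sketch below is only an illustration: it uses a plain JSON document for the product with its reviews and an in-memory SQLite database for the many-to-many relationship between orders and products. The table names, columns, and sample data are all made up for this example.

```python
import json
import sqlite3

# Document model: a product and its reviews form a tree, so they can live
# in one JSON document and be read back with a single lookup.
product_doc = {
    "id": "p1",
    "name": "Desk lamp",
    "reviews": [
        {"user": "ana", "rating": 5},
        {"user": "ben", "rating": 4},
    ],
}
serialized = json.dumps(product_doc)

# Relational model: orders and products are many-to-many, so a join table
# links them and the schema enforces the relationships.
conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")
conn.executescript("""
    CREATE TABLE products (id INTEGER PRIMARY KEY, name TEXT NOT NULL);
    CREATE TABLE orders (id INTEGER PRIMARY KEY, customer TEXT NOT NULL);
    CREATE TABLE order_items (
        order_id INTEGER NOT NULL REFERENCES orders(id),
        product_id INTEGER NOT NULL REFERENCES products(id),
        quantity INTEGER NOT NULL,
        PRIMARY KEY (order_id, product_id)
    );
""")
conn.executemany("INSERT INTO products VALUES (?, ?)",
                 [(1, "Desk lamp"), (2, "Notebook")])
conn.executemany("INSERT INTO orders VALUES (?, ?)",
                 [(10, "ana"), (11, "ben")])
conn.executemany("INSERT INTO order_items VALUES (?, ?, ?)",
                 [(10, 1, 1), (10, 2, 3), (11, 1, 2)])


def products_in_order(order_id):
    """One order can contain many products."""
    rows = conn.execute(
        "SELECT p.name FROM order_items oi "
        "JOIN products p ON p.id = oi.product_id "
        "WHERE oi.order_id = ? ORDER BY p.name",
        (order_id,),
    ).fetchall()
    return [name for (name,) in rows]


def orders_containing(product_id):
    """One product can appear in many orders."""
    rows = conn.execute(
        "SELECT order_id FROM order_items WHERE product_id = ? ORDER BY order_id",
        (product_id,),
    ).fetchall()
    return [order_id for (order_id,) in rows]
```

Reading the product with its reviews needs no join at all, while answering "which orders contain this product" is exactly what the join table is for.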

Messaging:

The difference between an event and a message is that with a message (apart from having a larger payload), the producer expects the message to be processed, while an event is just a broadcast with no expectation that anyone acts on it.

An event is either discrete (such as a notification) or part of a sequence, such as IoT streams. Streams are good for statistical evaluation. For instance, if you are tracking inventory through a stream of events, you can analyze how much stock has been moved in the last 2 hours.

Pick:

Azure Service Bus: If you’re dealing with a message.

Azure Event Grid: If you’re dealing with discrete events.

Azure Event Hub: If you’re dealing with a stream of events.

Many times, a combination of these services is used. Say you have an e-commerce application, and when new orders come in, you send a message to a queue that the inventory system listens to in order to confirm and update stock. If orders are infrequent, the inventory system will constantly poll for no reason. To mitigate that, we can use an Event Grid listening to the Service Bus Queue, and the Event Grid triggers the inventory system whenever a new message comes in. Event Grid helps convert this inefficient polling model into an event-driven model.
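The polling versus event-driven difference can be sketched without any cloud service at all. The code below is an in-memory illustration only: `NotifyingQueue` stands in for a queue that raises an event when a message arrives, and `InventoryService` for the inventory system. Both names are made up for this example, and neither uses the Service Bus or Event Grid SDKs.

```python
from collections import deque


class NotifyingQueue:
    """In-memory stand-in for a queue that emits an event when a message arrives."""

    def __init__(self):
        self._messages = deque()
        self._subscribers = []

    def subscribe(self, handler):
        self._subscribers.append(handler)

    def send(self, message):
        self._messages.append(message)
        for handler in self._subscribers:
            handler()  # the event only says "something arrived"

    def receive(self):
        return self._messages.popleft() if self._messages else None


class InventoryService:
    def __init__(self, orders, stock):
        self.orders = orders
        self.stock = stock
        self.receive_calls = 0  # how often we asked the queue for work

    def process_next(self):
        self.receive_calls += 1
        message = self.orders.receive()
        if message is not None:
            self.stock[message["sku"]] -= message["qty"]


if __name__ == "__main__":
    queue = NotifyingQueue()
    inventory = InventoryService(queue, {"lamp": 5})

    # Polling: five wake-ups, no orders, no useful work done.
    for _ in range(5):
        inventory.process_next()
    print("calls after polling:", inventory.receive_calls)

    # Event-driven: the service is only invoked when a message exists.
    queue.subscribe(inventory.process_next)
    queue.send({"sku": "lamp", "qty": 2})
    print("stock:", inventory.stock, "calls:", inventory.receive_calls)
```

With the subscription in place, the number of calls to the queue tracks the number of orders rather than the polling interval.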

Identity:

Identity is one of the most critical elements in your application. How you authenticate & authorize users is a considerable design decision. Oftentimes, identity is the first thing that is considered before apps are moved to the cloud. Users need to authenticate to the application, and the application itself needs to authenticate to other services like Azure.

The recommendation is to outsource identity to an identity provider. Storing credentials in your databases makes you a target for attacks. By using an Identity as a Service (IDaaS) solution, you outsource the problem of credential storage to experts who can invest the time and resources in securely managing credentials. Whichever service you choose, make sure it supports passwordless sign-in and MFA, which are more secure than passwords alone. OpenID Connect and OAuth 2.0 are the standard protocols for authentication and authorization, respectively.

Code using Cloud Native Design Patterns:

We defined requirements, picked the application architecture, made some technology choices, and now it is time to think about how to design the actual application.

No matter how good the infrastructure is and how good of a technology choice you’ve made, if the application is poorly designed or is not designed to operate in the cloud, you will not meet your requirements.

API Design:

Basic Principles: The two key principles of API design are that APIs should be platform independent (any client should be able to call the API) and designed for evolution (APIs should be updated without affecting clients, hence proper versioning should be implemented).

Nouns not verbs: URLs should be built around data models & resources, not verbs: /orders not /create-orders .

Avoid Chatty URLs: For instance, instead of fetching product details, pricing, and reviews separately, fetch them in one call. But there is a balance here: ensure the response doesn’t become too big, otherwise latency will increase.

Filtering and Pagination: It is generally not efficient to do a GET request over a large number of resources. Hence, your API should support filtering, pagination (instead of getting all orders, get a specific number), and sorting (avoid doing that on the client).

Versioning: Maintaining multiple versions can increase complexity and costs, since you have to manage multiple versions of documentation, test suites, deployment pipelines, and possibly different infrastructure configurations. It also requires a clear deprecation policy so clients know how long v1 will be available and can plan their migration to v2. It is well worth the effort, though, to ensure updates do not disrupt our clients. Versioning is often done in the URI, headers, or query strings.
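To illustrate the filtering, sorting, and pagination points above, here is a minimal, framework-free sketch of what a handler behind a versioned URL such as GET /v1/orders?status=shipped&sort=total&page=2 might do. The `Order` fields, the sample data, and the parameter names are assumptions made for this example, not a prescribed API shape.

```python
from dataclasses import asdict, dataclass


@dataclass
class Order:
    id: int
    status: str
    total: float


# Hypothetical data: odd ids are shipped, even ids are still processing.
ORDERS = [Order(i, "shipped" if i % 2 else "processing", 10.0 * i)
          for i in range(1, 8)]

SORTABLE_FIELDS = {"id", "total"}


def list_orders(status=None, sort_by="id", descending=False, page=1, page_size=3):
    """Filter, sort, and paginate on the server so clients never fetch everything."""
    if page < 1 or page_size < 1:
        raise ValueError("page and page_size must be positive")
    if sort_by not in SORTABLE_FIELDS:
        raise ValueError(f"cannot sort by {sort_by!r}")

    items = [o for o in ORDERS if status is None or o.status == status]
    items.sort(key=lambda o: getattr(o, sort_by), reverse=descending)

    start = (page - 1) * page_size
    return {
        "items": [asdict(o) for o in items[start:start + page_size]],
        "page": page,
        "page_size": page_size,
        "total_items": len(items),
    }
```

Returning `total_items` alongside the page lets a client render paging controls without a second request.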

Design for Resiliency:

In the cloud, failures are inevitable. This is the nature of distributed systems: we rarely run everything on one node. Here is a checklist of things you need to account for:

Retry Mechanism: A retry mechanism is a way to deal with transient failures, and you need to have retry logic in your code. If you are interacting with Azure services, most of that logic is embedded in the SDKs.

Circuit Breaking: Retry logic is great for transient failures, but not for persistent failures. For persistent failures, implement circuit breaking. In practical terms, we monitor calls to a service, and upon a certain failure threshold, we prevent further calls. It is as simple as that.

Throttling: The reality is, sometimes the system cannot keep up even if you have auto-scaling set up, so you need throttling to ensure your service still functions. You can implement throttling in your code logic or use a service like Azure API Management.
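The first two items of the checklist can be sketched in a few lines. The code below is a minimal illustration, not a production library: `retry` uses exponential backoff with jitter for transient failures, and `CircuitBreaker` stops calling a service after a failure threshold and allows a trial call once a cool-down has passed. All names, thresholds, and delays are assumptions chosen for this example.

```python
import random
import time


class TransientError(Exception):
    """Raised by an operation for failures that are worth retrying."""


class CircuitOpenError(Exception):
    """Raised when the breaker refuses to call the service."""


def retry(operation, attempts=3, base_delay=0.1, sleep=time.sleep):
    """Call `operation`, retrying transient failures with exponential backoff."""
    for attempt in range(attempts):
        try:
            return operation()
        except TransientError:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the failure
            # Jitter avoids many clients retrying at the same instant.
            sleep(base_delay * 2 ** attempt + random.uniform(0, base_delay))


class CircuitBreaker:
    def __init__(self, failure_threshold=3, reset_after=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_after = reset_after
        self._clock = clock
        self._failures = 0
        self._opened_at = None

    def call(self, operation):
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self.reset_after:
                raise CircuitOpenError("circuit open; call skipped")
            # Cool-down elapsed: fall through and allow one trial call.
        try:
            result = operation()
        except Exception:
            self._failures += 1
            if self._failures >= self.failure_threshold:
                self._opened_at = self._clock()  # open (or re-open) the circuit
            raise
        # Success closes the circuit and clears the failure count.
        self._failures = 0
        self._opened_at = None
        return result
```

Injecting `sleep` and `clock` keeps the sketch testable without real waiting. In practice you would usually reach for an existing resilience library or the retry policies built into the SDK you are using.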

Design for Asynchronous Communication:

Asynchronous communication is when services talk to each other using events or messages. The web was built on synchronous (direct) Request-Response communication, but it becomes problematic when you start chaining multiple requests, such as in a distributed transaction because if one call fails, the entire chain fails.

Say we have a distributed transaction in a microservices application. A customer places an order that is processed by the order service, which makes a direct call to the payment service to process the payment. If the payment service is down, the entire chain fails and the order will not go through. With asynchronous communication, a customer places an order, the order service puts an order request in a queue, and when the payment service is up again, the request is processed and an order confirmation is sent to the user. As you can see, such small design decisions can have a massive impact on revenue.
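The order and payment example can be sketched with an in-memory queue. This is only an illustration of the idea: `OrderService`, `PaymentService`, and `drain` are hypothetical names, and a real system would use a durable message broker rather than a `deque`.

```python
from collections import deque


class PaymentService:
    def __init__(self):
        self.available = True
        self.charged = []

    def charge(self, order_id):
        if not self.available:
            raise ConnectionError("payment service is down")
        self.charged.append(order_id)


class OrderService:
    def __init__(self, queue):
        self.queue = queue

    def place_order(self, order_id):
        # The order is accepted as soon as it is safely queued; the customer
        # does not wait for the payment service.
        self.queue.append(order_id)
        return "accepted"


def drain(queue, payments):
    """Process queued orders until the queue is empty or payment fails."""
    confirmed = []
    while queue:
        order_id = queue[0]
        try:
            payments.charge(order_id)
        except ConnectionError:
            break  # leave the order on the queue and try again later
        queue.popleft()
        confirmed.append(order_id)  # here the confirmation would be sent
    return confirmed


if __name__ == "__main__":
    queue = deque()
    payments = PaymentService()
    orders = OrderService(queue)

    payments.available = False
    print(orders.place_order("o-1"))   # the order still goes through
    print(drain(queue, payments))      # nothing confirmed yet
    payments.available = True
    print(drain(queue, payments))      # confirmed once payment is back
```

The order is only removed from the queue after the charge succeeds, so an outage delays the confirmation instead of losing the sale.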

Design for Scale:

You might think scalability is as simple as defining a set of auto-scaling rules. However, your application should first be designed to become scalable.

The most important design principle is having a stateless application, which means logic should not depend on a value stored on a specific instance such as a user session (which should be stored in a shared cache). The simple rule here is: don’t assume your request will always hit the same instance.
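A small sketch shows why the session belongs in a shared store. Here two hypothetical web instances handle consecutive requests from the same user. `SharedSessionStore` is an in-memory stand-in for an external cache, and all names are made up for this example.

```python
class SharedSessionStore:
    """Stand-in for an external cache that every instance can reach."""

    def __init__(self):
        self._data = {}

    def get(self, session_id):
        return self._data.get(session_id, {})

    def put(self, session_id, session):
        self._data[session_id] = session


class WebInstance:
    """Holds no per-user state of its own; everything lives in the store."""

    def __init__(self, name, sessions):
        self.name = name
        self.sessions = sessions

    def add_to_cart(self, session_id, sku):
        session = self.sessions.get(session_id)
        cart = session.get("cart", []) + [sku]
        self.sessions.put(session_id, {**session, "cart": cart})
        return cart


if __name__ == "__main__":
    store = SharedSessionStore()
    instance_a = WebInstance("a", store)
    instance_b = WebInstance("b", store)

    # The load balancer sends the two requests to different instances.
    instance_a.add_to_cart("session-1", "lamp")
    print(instance_b.add_to_cart("session-1", "pen"))
```

Because neither instance keeps the cart in its own memory, any instance can be added or removed by the autoscaler without losing user sessions.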

Consider using connection pooling as well, which is re-using established database connections instead of creating a new connection for every request. It also helps avoid SNAT exhaustion especially when you’re operating at scale.
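Connection pooling is simple enough to sketch by hand. The example below is a minimal illustration using SQLite from the standard library, and `ConnectionPool` is a made-up name. Most database drivers and ORMs ship a pool of their own, which is usually what you should use in practice.

```python
import queue
import sqlite3
from contextlib import contextmanager


class ConnectionPool:
    """Opens a fixed number of connections once and hands them out on demand."""

    def __init__(self, size, factory):
        self._idle = queue.Queue(maxsize=size)
        self.created = 0
        for _ in range(size):
            self._idle.put(factory())
            self.created += 1

    @contextmanager
    def connection(self, timeout=5.0):
        # Blocks (up to `timeout` seconds) if every connection is in use.
        conn = self._idle.get(timeout=timeout)
        try:
            yield conn
        finally:
            self._idle.put(conn)  # return it to the pool instead of closing it


if __name__ == "__main__":
    pool = ConnectionPool(size=2, factory=lambda: sqlite3.connect(":memory:"))
    for _ in range(10):
        with pool.connection() as conn:
            conn.execute("SELECT 1").fetchone()
    print("requests served: 10, connections opened:", pool.created)
```

Ten requests are served with only two connections ever opened, which is the property that keeps outbound port usage bounded at scale.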

Design for Loose Coupling:

An application consists of various pieces of logic & components working together. If these are tightly coupled, it becomes hard to change the system, because changing one component means coordinating with multiple others.

Loosely coupled services means you can change one service without changing the other. Asynchronous communication, as explained previously, helps with designing loosely coupled components.

Also, using a separate database per microservice is an example of loose coupling (changing the schema of one DB will not affect any other service, for instance).

Lastly, ensure services are deployed individually without having to re-deploy the entire system.

Design for Caching:

If performance is crucial, then caching is a no-brainer. Cache management is the responsibility of the client application or an intermediate server.

Caching involves storing frequently accessed data in fast storage to reduce latency and backend load. It’s suitable for data that’s often read but seldom changed, like product prices, minimizing database queries. Managing cache expiration is critical to ensure data remains current. Techniques include setting max-age headers for client-side caching and using ETags to identify when cached data should be refreshed.
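As an illustration of the two techniques just mentioned, the sketch below builds a response with a Cache-Control max-age header and an ETag, and answers 304 Not Modified when the client already holds the current version. The `respond` function and the way the ETag is derived (a truncated SHA-256 of the body) are assumptions made for this example. Web frameworks typically provide their own helpers for this.

```python
import hashlib
from typing import Optional


def make_etag(body: bytes) -> str:
    """Derive a validator from the content; any stable fingerprint would do."""
    return '"' + hashlib.sha256(body).hexdigest()[:16] + '"'


def respond(body: bytes, if_none_match: Optional[str] = None, max_age: int = 300):
    """Return (status, headers, body) for a GET on a cacheable resource."""
    etag = make_etag(body)
    headers = {"ETag": etag, "Cache-Control": f"max-age={max_age}"}
    if if_none_match == etag:
        # The client's copy is still current: send headers only.
        return 304, headers, b""
    return 200, headers, body


if __name__ == "__main__":
    price_v1 = b'{"sku": "lamp", "price": 19.99}'
    status, headers, _ = respond(price_v1)
    print(status, headers)

    # After max-age expires, the client revalidates with If-None-Match.
    status, _, payload = respond(price_v1, if_none_match=headers["ETag"])
    print(status, len(payload))
```

The max-age value decides how long the client may reuse its copy without asking, and the ETag makes the eventual revalidation cheap when nothing has changed.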

Server-Side Caching: Server-side caching often employs Redis, an in-memory database that is ideal for fast data retrieval because it stores data in RAM, which also makes it unsuitable for long-term persistence. Redis enhances performance by caching data that doesn’t frequently change, using key-value pairs and supporting expiration times (TTLs) for data.

Client-Side Caching: Content Delivery Networks (CDNs) are often used to cache data closer to frontend clients, and more often than not, it is static data. In web lingo, static data refers to files that do not change between requests, such as CSS, images, HTML, etc. Those are often stored in CDNs, which are nothing more than a set of reverse proxies (spread around the world) between the client and the backend. The client will usually request these static resources from the CDN URLs (the CDN servers are sometimes called edge servers), and the CDN will return the static content along with TTL headers. If the TTL expires, the CDN will request the static resources again from the origin.
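The server-side pattern described above (key-value pairs with a TTL) is often used in a "cache-aside" style: look in the cache first, fall back to the database on a miss, then store the result with an expiry. The sketch below shows that flow with a tiny in-memory `TTLCache` standing in for Redis. It does not use the Redis client library, and all names are made up for this example.

```python
import time


class TTLCache:
    """In-memory key-value store with per-key expiry (a stand-in for Redis)."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock
        self._entries = {}

    def set(self, key, value, ttl_seconds):
        self._entries[key] = (value, self._clock() + ttl_seconds)

    def get(self, key):
        entry = self._entries.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if self._clock() >= expires_at:
            del self._entries[key]  # expired: behave as if it was never cached
            return None
        return value


def get_price(sku, cache, load_from_db, ttl_seconds=60):
    """Cache-aside read: try the cache, fall back to the database on a miss."""
    cached = cache.get(sku)
    if cached is not None:
        return cached
    price = load_from_db(sku)
    cache.set(sku, price, ttl_seconds)
    return price
```

The TTL is the knob that trades freshness for load: a longer TTL means fewer database queries but a longer window in which a changed price is served stale.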

Well-Architected Framework:

You’ve defined business objectives & requirements, made technology choices, and coded your application using cloud native design patterns.

This section is about how you architect your solution in the cloud, guided by a cloud-agnostic framework known as the Well-Architected Framework, which encompasses reliability, performance, security, operational excellence, and cost optimization.

Note that many of these are related. Having scalability will help with reliability, performance, and cost optimization. Loose coupling between services will help with both reliability and performance.

Reliability:

A reliable architecture must be fault tolerant and must survive outages automatically. We discussed how to increase reliability in our application code through retry mechanisms, circuit breaking patterns, etc. This section is about how we keep reliability at the forefront of our architecture.

Here is a summary of things to think about when it comes to reliability:

Avoid Overengineering: Simplicity should be a guiding principle. Only add elements to your architecture if they directly contribute to your business goals. For instance, if having a complex recommendation engine doesn’t significantly boost sales, consider a simpler approach. Also avoid reinventing the wheel: if you can integrate a third-party payment system, why invest in building your own?

Redundancy: This helps minimize single points of failure. Depending on your business requirements, implement zonal and/or regional redundancy. For regional redundancy, choose appropriately between active-active (lowest RTO) and active-passive (warm, cold, or redeploy), and always combine automatic failover with manual failback to prevent switching back to an unhealthy region. Account for redundancy in every Azure component of your architecture by conducting a failure mode analysis (analyzing the risk, likelihood, and effect of each failure).

Backups: Every stateful part of your system such as your database should have automatic immutable backups to meet your RPO objectives. If your RPO is 1 hour (any data loss should not exceed 1 hour), you might need to schedule backups every 30 minutes. Backups are your safety archives in case things such as bugs or security breaches happen, but for immediate recovery from a failed component, consider data replication.
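
The reason a 1-hour RPO can call for 30-minute backups becomes clearer with some arithmetic. The sketch below uses a simplified model (an assumption, not a vendor formula): a backup captures the data as of its start time and only becomes usable once it finishes, so the worst-case loss is the backup interval plus the backup duration.

```python
def worst_case_data_loss_minutes(backup_interval_minutes, backup_duration_minutes=0.0):
    """Worst case: failure strikes just before the next backup completes,
    so the newest usable backup is one interval plus one duration old."""
    return backup_interval_minutes + backup_duration_minutes


def meets_rpo(rpo_minutes, backup_interval_minutes, backup_duration_minutes=0.0):
    """True if the schedule keeps worst-case data loss within the RPO."""
    loss = worst_case_data_loss_minutes(backup_interval_minutes, backup_duration_minutes)
    return loss <= rpo_minutes


# Hourly backups that take 10 minutes can lose up to 70 minutes of data,
# which misses a 60-minute RPO; 30-minute backups stay within it.
hourly_ok = meets_rpo(60, backup_interval_minutes=60, backup_duration_minutes=10)
half_hourly_ok = meets_rpo(60, backup_interval_minutes=30, backup_duration_minutes=10)
```

The 10-minute backup duration is an illustrative figure; measure your own before settling on a schedule.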

Chaos Engineering: Deliberately injecting faults into your system allows you to discover how it responds to failures before they become real problems for your customers.

Performance:

The design patterns you implement in your applications will influence performance. This section focuses more on the architecture.

Capacity Planning: You always need to start here. How do you pick the resources (CPU, memory, storage, bandwidth) you need to satisfy your performance requirements? The best place to start is by load testing against expected traffic and peak loads to validate that your capacity meets your performance needs. Most importantly, capacity planning is an ongoing process that should be reviewed and adjusted regularly.

Consider Latency: Services communicating across regions or availability zones can face increased latency. Also, ensure services are placed closer to users. Lastly, use private backbone networks (Private endpoints & service endpoints) instead of traversing the public internet.

Scale Applications: You need to choose the right auto-scaling metrics. Queue length has always been a popular metric because it shows how long the backlog of pending tasks is. However, a better metric is critical time: the total time between a message being sent to a queue and being processed. Queue length is still important, but critical time shows how efficiently the backend is working through the queue. For an e-commerce platform that sends confirmation emails based on orders in a queue, you can have 50k orders waiting, but if the critical time is 500ms (for the oldest message), the worker is keeping up despite the backlog.
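
As a rough sketch of that decision, the snippet below scales on critical time and ignores queue length. QueueSnapshot, should_scale_out, and the 5-second threshold are hypothetical, and the age of the oldest message is used as an approximation of critical time.

```python
from dataclasses import dataclass


@dataclass
class QueueSnapshot:
    length: int                        # messages currently waiting
    oldest_message_age_seconds: float  # approximates critical time


def should_scale_out(snapshot: QueueSnapshot, max_critical_time_seconds: float = 5.0) -> bool:
    """Scale out only when messages wait too long, however many are queued."""
    return snapshot.oldest_message_age_seconds > max_critical_time_seconds


# 50k queued orders but only 500 ms of waiting: workers are keeping up.
busy_but_healthy = QueueSnapshot(length=50_000, oldest_message_age_seconds=0.5)
# A short queue whose oldest message has waited 12 s: workers are falling behind.
short_but_stuck = QueueSnapshot(length=200, oldest_message_age_seconds=12.0)
```

A rule based only on queue length would have added workers in the first case and none in the second, which is the opposite of what the backlog needs.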

Scale Databases: A big chunk of performance problems are usually attributed to databases. If it is a non-relational database, horizontal scaling is straightforward, but you often need to define a partition key to split the data (for instance, if customer address is used as a partition key, users are split into different customer address partitions). If it is a relational database, partitioning can also be done through sharding (splitting tables across separate physical nodes), but it is more challenging to implement.
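
One common way to map a partition key to a partition is to hash it, sketched below. The function name partition_for and the choice of SHA-256 are assumptions for illustration; managed databases implement their own partitioning internally.

```python
import hashlib


def partition_for(partition_key: str, num_partitions: int) -> int:
    """Map a partition key to a partition index with a stable hash.

    A stable hash (unlike Python's built-in hash(), which is randomized
    per process for strings) sends the same key to the same partition
    on every machine and every run.
    """
    if num_partitions <= 0:
        raise ValueError("num_partitions must be positive")
    digest = hashlib.sha256(partition_key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_partitions
```

A key with many distinct, evenly accessed values spreads load well; a key where a few values dominate concentrates traffic on a few partitions.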

Security:

Security is about embracing two mindsets, zero trust and a defense in depth approach.

Zero Trust: In the past, we used to have all components in the same network, so we trusted any component that was within the network. Nowadays, we have the internet and various components that connect to our application from anywhere. Zero trust is about verifying explicitly: all users and applications must authenticate and be authorized, even if they are inside the network. If an application is trying to access a database in the same network, it should authenticate and be authorized. Azure Managed Identities, for instance, support this mechanism without exposing secrets. The second principle is least privilege: assign Just in Time (JIT) and Just Enough Access (JEA) to users and applications. Lastly, always assume breach. Even if an attacker has penetrated our network, how can we minimize the damage? A common methodology is network segmentation: only open specific ports between subnets, and only allow certain subnets to communicate with each other. Just because they are all in the same network does not mean you should allow all communication.

Defense in Depth: Everything in your application & architecture should embed security. Your data should be encrypted in transit and at rest, your applications should not store secrets or use vulnerable dependencies or insecure code practices (consider using a static security analysis tool to detect these), your container images should be scanned, etc.
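
Network segmentation boils down to a default-deny allow-list. The sketch below models that idea in plain Python; the subnet names, ports, and is_allowed helper are hypothetical, and in practice these rules would live in network security groups or firewall policies, not application code.

```python
# Explicitly permitted flows: (source subnet, destination subnet, port).
ALLOWED_FLOWS = {
    ("web", "app", 8080),
    ("app", "db", 5432),
}


def is_allowed(source: str, destination: str, port: int, allowed=ALLOWED_FLOWS) -> bool:
    """Default deny: traffic passes only if the exact flow is listed."""
    return (source, destination, port) in allowed
```

Here the web subnet cannot reach the database directly, and the app subnet can reach it only on the database port, so a compromised web server has a much smaller blast radius.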

Operational Excellence:

While the application, infrastructure, and architecture layers are important, we also need to ensure we have the operations in place to support our workloads. I boiled them down into four main practices.

DevOps Practice: Set of principles & tooling to allow automated, reliable, and predictable tests to ensure faster & more stable releases. Testing & Security should be shifted to the left, and zero down-time must be achieved through blue/green deployments or canary releases.

Everything as Code: Infrastructure should be defined using declarative code that is stored in source control repository for version control & rollbacks. IaC allows more reliable and re-usable infrastructure deployments.

Platform Engineering Practice: Outside of buzz words, this is when a centralized platform team creates a set of self-service tools (utilizing preconfigured IaC templates under the hood) for developers to deploy resources & experiment in a more controlled environment.

Observability: This is about gathering metrics, logs, and traces to give our engineers better visibility into our systems and, in turn, help them fix issues faster. I wrote another article about observability, so I won’t go into detail here. Make sure to check it out.

Cost Optimization:

If costs are not optimized, your solution is not well architected. It is as simple as that. Here are things you should be thinking about:

Plan for Services: Every service has a set of cost optimization best practices. For instance, if you are using Azure Cosmos DB, ensure you implement proper partitioning. If you are using Azure Kubernetes Service (AKS), ensure you define resource limits on Pods.

Accountability: All resources should be tracked back to their owners and divisions to be linked back to a cost center. We do that by enforcing tags.
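
A tag policy can be checked with a few lines of code. In this sketch the required tag names ("owner", "cost-center") and the missing_tags helper are hypothetical; cloud providers offer policy engines that enforce the same rule at deployment time.

```python
REQUIRED_TAGS = ("owner", "cost-center")


def missing_tags(resource_tags: dict, required=REQUIRED_TAGS) -> list:
    """Return the required tags that are absent or blank on a resource."""
    return [tag for tag in required if not str(resource_tags.get(tag, "")).strip()]
```

A resource tagged only with an owner would report "cost-center" as missing, which is the signal to block the deployment or flag it for follow-up.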

Utilize Reserved Instances: If you know you will have a predictable load on a production application that will always be on, utilize Reserved Instances. Every cloud provider should support that.

Leverage Automation: Switch things off during periods with no traffic.

Auto-scale: Leverage dynamic auto-scaling to use fewer resources at off-peak times.

Conclusion

I hope this overview has captured the essentials of building cloud-native applications, highlighting the shift required from traditional development practices. While this overview is not exhaustive, I’m open to exploring any specific areas in more detail based on your interest. Just let me know in the comments, and I’ll gladly delve deeper.

MVP and MLSA Collaboration Benefits Tech and Community

From November to December 2023, an online session series titled “Learn Live: Build your AI portfolio with AI Kick-off Challenge Projects” was held for individuals looking to apply AI to solve real-world problems. The series kicked off with “Build a minigame console app with GitHub Copilot” as part of the Microsoft Ignite 2023 sessions, where Microsoft Cloud Advocates demonstrated how to create a game using GitHub Copilot.

Episodes 2 and 3 were both collaborations involving Microsoft MVPs and Microsoft Learn Student Ambassadors. Episode 2 featured a session titled “Add image analysis and generation capabilities to your application” led by Ivana Tilca, an AI and Microsoft Azure MVP who has been previously featured on this MVP Communities Blog (e.g. Microsoft Ignite & Microsoft MVP – Global Experience), and a Microsoft Learn Student Ambassador, Rachel Irabor. Episode 3, “Build a Speech Translator App,” was presented by AI MVP Charles Elwood and Microsoft Learn Student Ambassador Vidushi Gupta, explaining how to develop a Speech Translator App.

Charles reflected on the collaboration as both fun and meaningful. “The comments from the audience were so positive on the Learn Live episode, I think we helped a lot of people that day. It was a lot of work though. Vidushi and I spent so many hours debugging the code and the documentation was nonexistent for the Power Apps connectors.”

He continues, “Vidushi would work on this until 4am, then send us emails, and then had to study for finals. So, she had to take breaks, and I was in LA visiting family to take breaks. Then, Carlotta Castelluccio (Cloud Advocate) brought in some help, and Someleze Diko (Cloud Advocate) and Olanrewaju Oyinbooke (MI/AI Researcher, COSMOS at UALR) guided us through Power Apps. It was the most interesting collaboration where AI brought this amazing group of people together to solve a problem (and we were in all corners of the world). I wish I could bottle this up.”

Vidushi noted the scarcity of female Student Ambassadors focused on Power Platform and expressed her ambition to become an MVP someday. “She has the persistence to solve the big problems and she has such a good and friendly teaching style. I was so impressed,” Charles praised her potential and influence, offering advice and encouragement toward achieving her goal to become an AI MVP.

The “Learn Live: Build your AI portfolio with AI Kick-off Challenge Projects” series allowed community members worldwide to embark on new journeys of AI technology. Moreover, the preparation for these sessions provided MVPs and Student Ambassadors with shared experiences from which they could learn extensively from each other. Charles’s mentorship might pave the way for Vidushi’s future inclusion in the MVP program, potentially expanding the global MVP community circle and fostering ideal ecosystems through new collaborations between MVPs and Student Ambassadors.

The impact of such collaborations is immeasurable. The joint sessions between Microsoft MVPs and Microsoft Learn Student Ambassadors are a prime example of this. We encourage you to explore different collaborations with people in various roles, gaining new perspectives, knowledge, and experiences.

If you are eager to learn more about the topics shared in this series, official Learn Modules are available on Microsoft Learn:

– Challenge project – Build a minigame with GitHub Copilot and Python – Training | Microsoft Learn

– Challenge project – Build a speech translator app – Training | Microsoft Learn

For those interested in learning more about the “Learn Live: Build your AI portfolio with AI Kick-off Challenge Projects” series, a comprehensive review is available on the Educator Developer Blog.

Learn Live – Build your AI portfolio with AI Kick-off Challenge Projects – Microsoft Community Hub

New on Microsoft AppSource: February 1-7, 2024

We continue to expand the Microsoft AppSource ecosystem. For this volume, 154 new offers successfully met the onboarding criteria and went live. See details of the new offers below:

Get it now in our marketplace

ADDU: Botnomics offers a smart framework for IT automation that reduces incidents, streamlines operations, and increases value. Suitable for businesses and OEMs of any size, the platform provides out-of-the-box automations, self-healing remediation, and self-service capabilities. Botnomics integrates with ITSM and ServiceNow to deliver non-invasive remote troubleshooting.

Advocat AI (Per User): Advocat AI simplifies legal document management for startups and small-to-midsize businesses. With Advocat AI, users can upload, create, negotiate, e-sign, store, analyze, and track legal documents. The platform offers AI summaries and real-time negotiating insights.

Annex Cloud Loyalty Experience Platform: Annex Cloud offers a highly configurable SaaS loyalty program management platform that motivates customer behavior across the entire customer journey. Annex Cloud helps marketers gain new customers, increase customer lifetime value, perform basket analysis, and reduce churn. The platform is scalable, innovative, and designed for even the most complex global brands.

Change Warden: Change Warden is a low-cost IT change management system that helps organizations track and manage IT changes, assess risks, seek approvals, and ensure successful completion of deployments. The system features change request management templates, RACI tracking, and integration with Microsoft Azure DevOps and Dynamics 365 Customer Service.

CommuteSaver: CommuteSaver is an AI-based mobile app that tracks and reports CO2 emissions for corporate commuting and business travel by detecting a user’s transport mode. The app provides data-based recommendations to reduce emissions and costs and includes scoreboards and incentives for employees to reduce CO2 emissions.

Compliance Recording for Microsoft Teams: Open Lake’s Compliance Recording provides a turnkey, managed solution for recording all modern workplace modalities, including chat, voice, video, and screen share. The service includes conversation archiving and multiple compliance services such as case management, secure recording sharing, and legal hold. Legacy recording systems can be imported into Open Lake’s Compliance Hub for replaying interactions from a single service.

ContractMatrix: ContractMatrix streamlines contract drafting, review, and analysis with generative AI-assisted interrogation and drafting. The platform provides real-time access to gold-standard precedents and policies, built-in risk management, and governance designed by Allen & Overy lawyers. ContractMatrix saves time, cost, and risk, allowing lawyers to focus on smart, fast decisions.

ControlDoc ECM/BPM/SGDEA: Available in Spanish, ControlDoc is an electronic document management system (SGDEA) that offers efficient, comprehensive, and automated content management for businesses. The system complies with MoReq (Modelo de Requisitos) standards and supports W3C accessibility standards, portability on various devices, and HTTPS protocols for secure communication.

Exclaimer: Exclaimer is an email signature management solution that allows users to design and manage professional email signatures for all emails. It offers automation and efficiency, reduces IT workload, and allows for collaboration with multiple user logins. The solution features the ability to assign different signature templates for individual users or departments.

Footprints for Commercial Properties: Footprints AI offers a retail media platform that helps commercial property owners and managers enhance profitability and growth by utilizing data on customer behavior within physical store environments. The solution can help generate a new revenue stream from retail media and provide valuable insights into consumer shopping habits.

Footprints for Retail Media: Footprints AI is an omnichannel retail media platform that helps retailers monetize their customer data by transforming anonymous shoppers into predictive media audiences. The solution offers premium retail media services across in-store, on-site, and off-site channels, generating increased profits. Footprints AI uses AI-generated customer insights, including psychographic and socio-demographic profiling to predict and influence omnichannel purchase behaviors.

Greenomy Company Portal: Greenomy is a multi-framework environmental, social, and governance (ESG) reporting solution that centralizes the collection, analysis, and reporting of ESG data. The platform offers AI-powered features to save time and costs, while ensuring compliance with the latest regulations. Greenomy helps companies advance ESG performance by identifying improvements and tracking progress over time.

Hybrid Benefits Calculator for Azure: This dashboard displays virtual machine details for your Microsoft Azure tenant, including configuration and vCPU count. Hybrid Benefits Calculator also retrieves SQL service type and calculates necessary core licenses for hybrid benefits licensing to help you manage your licensing by automating calculations.

HyperNet Sustainable Solution: HyperNym’s HyperNet solution uses IoT technology to monitor emissions, track sustainability progress, promote energy-efficient upgrades, and navigate toward carbon neutrality. Using data insights and advanced analytics, HyperNet can help you drive substantial reductions in environmental impact, resulting in better environmental stewardship, operational efficiency, and brand trust.

HyperNet Fleet Management System: HyperNym’s HyperNet fleet management solution is an IoT-powered system that uses sensors and connected devices to gather data on vehicle location, performance, and maintenance. The solution provides real-time insights on fuel consumption, driver behavior, and vehicle utilization, allowing fleet managers to make informed decisions that optimize schedules, improve safety, and reduce costs.

IBLook for Microsoft Teams: IBLook for Microsoft Teams is an attendance management app that displays team members’ presence and status, allowing for easy tracking of their location and work status. The app features include status management, message memo registration, comment registration, and integration with Microsoft Teams.

Imperium Power Sales CPQ: Built on Imperium Starter CRM using the Microsoft Power Platform, Imperium Power Sales CPQ is a quote management solution that connects businesses and customers. Businesses can use the solution to create a product catalog and provide customers with a portal for quote requests. Imperium Power Sales CPQ reduces errors, offers competitive pricing, accelerates sales cycles, and enhances customer satisfaction.

GPT SaaS Jurisprudence: Available in Portuguese, Jurisprudence GPT is an AI-powered tool trained on public documents from Brazilian courts. The tool is designed to enable easy and natural language-based searches of legal precedents.

LearnPro LMS: LearnPro is an enterprise learning management solution that offers access to a wide range of educational content, progress tracking tools, and detailed analysis. The solution enables companies to optimize their training processes, enhance staff performance, and stay competitive in an ever-changing business environment. LearnPro offers customization capabilities, personalized course recommendations, and advanced analytics tools to evaluate the impact of learning on business performance.

Pegatron Vision AI Smart Surveillance: The Vision AI Smart Surveillance system by Pegatron is a user-friendly platform that utilizes powerful AI technology to enhance surveillance capabilities without requiring coding skills.

Rapid Platform – Developer: Automate your business management with a customizable and scalable system that integrates Microsoft 365, SharePoint, Power BI, Outlook, and Azure and provides an intuitive no-code environment for process automation and documentation.

RapidStart Sales for Microsoft Dynamics 365: RapidStart Sales is a simple yet powerful app that streamlines customer interactions and sales opportunities with features like record hashtagging and shortcuts. Built on Microsoft Power Apps, the app seamlessly integrates with Microsoft 365, Outlook, and Teams while maintaining compatibility with Dynamics 365 Sales.

Skypoint AI Platform for Long-Term Care (LTC): Skypoint’s AI platform unifies structured and unstructured data as well as public external data into an Azure Data Lakehouse. Built on Microsoft Azure, Skypoint lets users query data using conversational language via Skypoint AI Private GPT. The platform offers business intelligence and reporting, data unification, task/process automation, and privacy compliance.

SmartDX: SmartDX offers a solution for tracking and identifying individual audience members across channels and platforms, enabling companies to deliver contextual omnichannel interactions and end-to-end attribution. The platform also provides a comprehensive snapshot of campaign results and marketing ROI, with an emphasis on conversion-focused customer experiences in real time.

Team Board In/Out Status List: Team Board In/Out Status List is an app for Microsoft Teams that allows you to see your entire team’s real-time availability. Use the app to improve communication, productivity, and transparency.

Velocity Learning JSC Cares Program: Velocity Learning’s JSC Cares Program offers a comprehensive learning solution for personal and professional development. The solution provides a wide range of courses and resources to help individuals and organizations achieve their goals.

Voluptuaria: QR Support: Voluptuaria provides a dedicated service based on Microsoft Azure to support businesses using dynamic QR codes so that every scan results in seamless access to your digital offerings.

Well: Available only in Australia, the Well app integrates with Microsoft Teams to catalyze daily habits and help keep everyone at work safe by delivering engaging content directly within Teams. Well drives behavioral change while aligning with the ISO 45003 global standard for psychological safety. The app has been designed to help manage the psychosocial hazards outlined in Safe Work Australia’s Code of Practice: Managing Psychosocial Hazards at Work.

WeTransact.io: WeTransact.io simplifies the process of listing products on the Microsoft commercial marketplaces by handling technical aspects and maintenance. The WeTransact platform enables quick publishing in days and compliance with the GDPR.

Go further with workshops, proofs of concept, and implementations

Beyondsoft AI-Powered Productivity for Microsoft 365 Copilot: Implementation: Beyondsoft offers AI-powered productivity services to help organizations implement Copilot for Microsoft 365. The implementation includes business case development, end-user readiness, change management programs, and more.

Catalyst Envision and Innovation for Customer Service: Workshop: The Catalyst Envision and Innovation Workshop for Microsoft Dynamics 365 Customer Service helps businesses understand whether Dynamics 365 can meet their priorities. Attendees will find the right transformation strategy, and the workshop ends with a presentation on next steps.

Catalyst Envision and Innovation for Marketing: Workshop: The Catalyst Envision and Innovation Workshop for Microsoft Dynamics 365 Marketing helps businesses understand whether Dynamics 365 can meet their priorities and identify how to build velocity toward business transformation using Microsoft Azure, Dynamics 365, and Power Platform.

Catalyst Envision and Innovation for Sales: Workshop: The Catalyst Envision and Innovation Workshop for Microsoft Dynamics 365 Sales helps businesses understand whether Dynamics 365 can meet their priorities. Attendees will learn how to transform business by using Microsoft Azure, Dynamics 365, and Power Platform.

Continual Professional Development Solution for Higher Education: 6-Month Implementation: Crimson will deploy a professional development solution built on Microsoft Dynamics 365 and Power Platform using pre-configured accelerators. The solution delivers a seamless experience for learners, improved automation, increased efficiency, and better-informed decisions.

Copilot for Microsoft 365 – Planning for Success: Workshop: UnifiedCommunications.com will identify use cases, deliver demonstrations, and discuss best practices to ensure your technical readiness, security, and user adoption of Microsoft 365 Copilot. This offer includes a pre-workshop survey to identify your key business concerns and goals.

Copilot for Microsoft 365: Deployment: True will help you take advantage of Microsoft 365 Copilot to increase creativity, boost productivity, and enhance user skills across applications. True will assess your organization’s requirements, develop a customized deployment plan, and guide your organization through training and adoption.

CRM2GO: Available in German, InsideAX’s CRM2GO engagement helps you quickly and affordably introduce a new CRM system built on Microsoft Dynamics 365 Sales. The starter package includes analysis of your processes, guidance through implementation and integration, and licensing support.

Digital Contact Centre for Housing: 6-Month Implementation: Crimson’s Digital Contact Centre solution for the housing sector is a cloud-based service that uses Microsoft Dynamics 365 Customer Service and Power Platform to connect and engage with customers across digital messaging channels. The solution offers contextual customer identification, real-time notification, integrated communication, and agent productivity tools to improve operational performance and increase revenue.

Dynamics 365 Customer Insights – Data & Journeys: 4-Week Implementation: Axazure will help you connect all your customer data with ease by resolving customer identities with AI-driven recommendations. After ingesting data, unifying profiles, and defining rules, Axazure consultants will take your implementation of Microsoft Dynamics 365 Customer Insights live. You’ll benefit from newly created customer segments for marketing campaigns, end-user training, and more.

Dynamics Go – Low-Cost Entry to Dynamics 365 Sales: Deployment: AXON-IT’s Dynamics Go is a cloud-based CRM platform designed to streamline and automate sales processes for SMBs. The platform offers a low cost of entry to Microsoft Dynamics 365, featuring pre-built templates, dashboards, and triage support. Dynamics Go includes lead and opportunity management, quotes, orders, invoices, and email integration.

Enforced Data Security: 12-Week Implementation: BDO offers data protection measures to safeguard businesses from cyberattacks, data breaches, and data loss by using Microsoft 365 data discovery tools. BDO will build a comprehensive plan to discover and protect data where it lives, as well as drive healthy ways to maintain data integrity and monitor data access against the HIPAA regulations and the widely recognized HITRUST industry standards.

Fast Forward to Dynamics 365 Cloud: Savaco’s Fast Forward Track is a migration service for companies using Microsoft Dynamics CRM on-premises that want to transition to a cloud-based solution. The service offers expertise in migrations, rich Dynamics 365 experience, in-house tools, and applications. Benefits include seamless transition, cost-effectiveness, scalability, and data security. Deliverables include a migration plan, post-migration support, and training.

Finchloom+ for Microsoft 365 Email Security: Finchloom’s email security managed service offers protection against phishing attempts with human-powered detection and swift response. Finchloom’s security operations center monitors suspicious domains and offers user training and phishing simulations. The service includes email security setup on Microsoft 365, unlimited user phishing submissions, and monthly threat reports.

Finchloom+ for Microsoft Intune: Finchloom offers setup, monitoring, and support for compliance, patch management, and software deployment using Microsoft Intune integrated with Microsoft Defender for Endpoint. This service includes an environment assessment and onboarding, as well as monthly reporting and recurring services.

Frontline Workers: Workshop: Salt’s Frontline Solution Workshop helps organizations onboard frontline employees to be productive using Microsoft 365 apps. The workshop includes training, inclusivity, support for diversity needs, adoption planning, change management planning, physical onboarding, and a report with outcomes and recommendations. This service is designed to enhance the productivity, engagement, and satisfaction of frontline employees.

Google Workspace to Microsoft 365: Migration: Overcast offers seamless migration from Google Workspace to Microsoft 365, including migrating data from email, calendar, contacts, and Google Drive. Overcast provides training, support, and documentation. The scope and cost of the solution are customized to your needs.

Leap into Copilot: 2-Hour Workshop: Core will lead you through an exploration of the potential impact and the strategies for implementing Microsoft 365 Copilot. The session covers key features along with a demo and overview of Copilot suites, integration with corporate data, practical applications, benefits, and deployment options.

Microsoft 365 Copilot: Assessment and Implementation: Eide Bailly offers expert guidance on responsible AI usage for immediate business value built on Microsoft 365 Copilot. This service includes adjusting settings to industry standards, ensuring security, privacy, and compliance, and providing training for stakeholders and end-users.

Microsoft 365 Copilot: Implementation: Forefront’s offer includes a pilot program, phased rollout plan, updated training material, and governance model for Microsoft 365 Copilot. This service will help organizations unlock the full potential of Copilot, empowering teams to work smarter and more efficiently.

Microsoft 365 Copilot: Readiness & Deployment: ConXioNOne offers a customized solution for businesses to use Microsoft 365 Copilot as a generative AI assistant. This solution includes four phases: discovery, design, deployment, and adoption, with a focus on assessing technical readiness, identifying high-value use cases, and configuring security and compliance.

Microsoft 365 On-Premises to Tenant: Migration: Overcast can help migrate on-premises server infrastructure to Microsoft 365, including Exchange mailboxes, public folders, and SharePoint sites. This offer can be performed as a single event, phased migration, or split move. Overcast provides migration training and support, custom migration, data migration assessment, planning and timeline, documentation, and training sessions. The proposal is tailored to your needs and budget.

Microsoft Teams Phone: 4-Week Proof of Concept: Red X Carbon enhances Microsoft Teams phone systems by integrating Operator Connect, Direct Route, and native Microsoft calling plans. This proof of concept will demonstrate calling capabilities in Teams and includes system configurations, pilot testing, and deployment of Teams Rooms systems and phones.

Microsoft 365 Tenant to Tenant: Migration: Overcast can help migrate data from one Microsoft 365 tenant to another, including Exchange mailboxes, SharePoint sites, and Teams data. Overcast provides migration training and support, custom migration, and complete documentation. The service is customized to your needs and budget.

Onesec.AI: ONESEC offers a complete solution for companies to integrate AI safely and efficiently using Microsoft Entra, Microsoft Purview, and Microsoft 365 Copilot. ONESEC consultants will identify security risks, provide personalized protection strategies, and assist with adoption and process improvement.

Upgrade to Dynamics 365 Business Central (Essential): 2-Month Migration: Navision Tech offers customized technical data migration services from Microsoft Dynamics NAV to Dynamics 365 Business Central. The Essential Package includes everything in the Kick-start Package as well as extension migration, custom reports, and documentation.

Upgrade to Dynamics 365 Business Central (Kick-start): 2-Week Migration: Navision Tech offers customized data migration services from Microsoft Dynamics NAV to Dynamics 365 Business Central. The Kick-start Package includes project discovery, planning, data migration, user training, and go-live monitoring.

Upgrade to Dynamics 365 Business Central (Solid Foundation): 3-Month Migration: Navision Tech offers customized data migration services from Microsoft Dynamics NAV to Dynamics 365 Business Central. The Solid Foundation Package includes everything in the Essential Package, as well as custom data migration, data integration, customization, and custom reports built on Microsoft Power BI.

Zones Data Governance and Compliance: Zones’ Data Governance and Compliance services help organizations optimize Microsoft 365 while addressing challenges such as inconsistent data quality, regulatory requirements, and data management strategies. Zones’ holistic approach ensures data becomes a catalyst for positive change, aligning with organizational objectives and extending the use of Microsoft 365 to drive growth and innovation.

Contact our partners

Advanced Manufacturing Reports

AI Candidate Assessment – MetaOPT

AI in Housing Art of Possible: 2-Day Assessment

Aptean Beverage Advanced Online Warehouse Management for Drink-IT Edition

Cloud Migration – License Assessment

Configit Ace – Configuration Lifecycle Management Solution (DE)

Configit Ace – Configuration Lifecycle Management Solution

Continia Payment Management (IN)

Copilot Spark: Readiness Check

Digital Distribution Platform for Insurance

Dynamics 365 Customer Engagement: Assessment

EX Managed Services – Essentials (Including Copilot)

Fairly AI Red-Teaming-in-a-Box

Feat Paper (Motion PDF & Analytics)

FI Acquisition and Spend Planner

Fusion5 Pack for Power Automate

Fusion5 Vendor Bank Account Approval

Gestisoft AMP Sales Accelerator

Integrated School Affairs Support System Eduo

Kovix WMS – Streamline Your Warehouse Operations

Learning Management System (UK)

Ledger Allocation Functionality

License Assessment: Optimize Your License Management

MD.ECO – Proactive Cybersecurity

Microsoft 365 Copilot Evaluation: 1- to 2-Hour Assessment

Microsoft 365 Copilot Readiness: 4-Week Assessment

Pre-Flight Check for Microsoft 365 Copilot: 2-Week Assessment

Process Digitization with Power Platform: 7-Day Assessment

Readiness for Launching Copilot for Microsoft 365: 5-Day Assessment

Redoflow ERP Requirements: 1-Week Assessment

Seamless Communication with Teams Phone

Sparxcloud Cloud Cost Management

Text, SMS, Instant Message, Chat + Dynamics 365 CRM – TextSMS4Dynamics

Trovex.ai – AI-Driven Training and Enablement

WooCommerce Integration with Dynamics 365 Finance – HexaSync Profile

Workstatz – Employee Efficiency and Productivity Monitoring Software

This content was generated by Microsoft Azure OpenAI and then revised by human editors.


Modelling Microsoft Dynamics 365 Data Using Data Vault 2.0

This article is authored by Michael Olschimke, co-founder and CEO at Scalefree International GmbH, and co-authored with Markus Lewandowski, Senior BI Consultant, and Bibhush Nepal, Technical Solutions Specialist, both from Scalefree.

The technical review was done by Ian Clarke and Naveed Hussain, GBBs (Cloud Scale Analytics) for EMEA at Microsoft.

The previous articles in this series covered individual aspects of Data Vault 2.0 on the Microsoft Azure platform. We discussed several architectures, such as the standard or base architecture, the real-time architecture, and one for self-service BI. We have also looked at individual entities, their purpose, and how to model them.

What is missing now is the big picture: we have the tools, but how do we use them in a real-world example? How do we get started on an actual project? To answer these questions, we have teamed up with consultants from Scalefree, a consulting firm specializing in Big Data projects with Data Vault 2.0, and we use some of their process patterns in this article to get you started.

Introduction

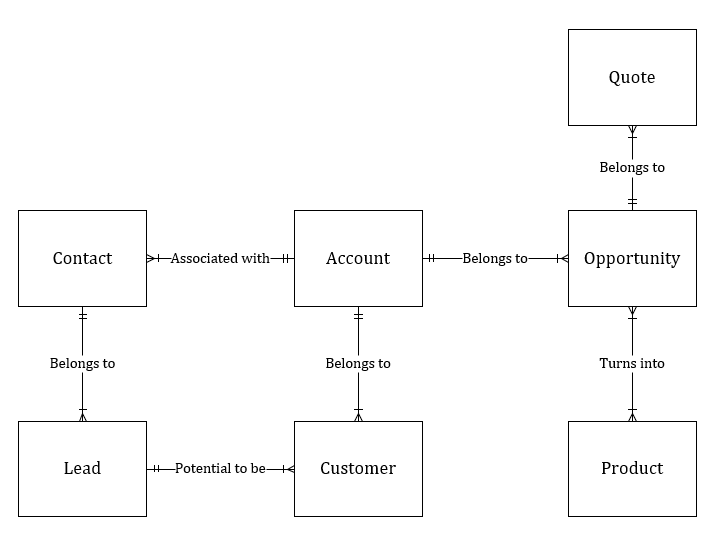

For this article, we have extracted data from the Microsoft Dynamics API. The goal of this article is to come up with the Raw Data Vault model, including important hubs, links, and satellites. We will take the standard Sales process of Microsoft Dynamics and use its business objects for this purpose. Those business objects are:

Lead

Account

Contact

Opportunity

Opportunity Product

Quote

Product

The Data Model we are using is a selection from the Microsoft Common Data Model where we picked all objects that play a role during the standard sales process.

The sales process can be described very roughly as follows:

Lead -> Account / Contact -> Opportunity -> Quote -> Opportunity Close

This Raw Data Vault could later be used to create reports, dashboards, and other data-centric solutions.
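As a rough first cut, the business objects and the process description above can be turned into a list of hub and link candidates. The following is only a sketch under stated assumptions: it treats every listed business object as a hub candidate and every pair of objects in consecutive process stages as a link candidate. The function names `parse_stages` and `link_candidates` are hypothetical, and the resulting pairs are a starting point for discussion with the source system specialists, not the final model.

```python
from itertools import product

# Business objects listed in the article (treated as hub candidates here).
BUSINESS_OBJECTS = [
    "Lead", "Account", "Contact", "Opportunity",
    "Opportunity Product", "Quote", "Product",
]

# The rough sales process from the article. A stage may hold several
# business objects, separated by "/" (for example Account / Contact).
SALES_PROCESS = "Lead -> Account / Contact -> Opportunity -> Quote -> Opportunity Close"


def parse_stages(process: str) -> list[list[str]]:
    """Split the process string into stages, each a list of object names."""
    return [
        [name.strip() for name in stage.split("/")]
        for stage in process.split("->")
    ]


def link_candidates(stages: list[list[str]], hubs: list[str]) -> list[tuple[str, str]]:
    """Pair objects of consecutive stages, keeping only known hub candidates."""
    pairs = []
    for current, following in zip(stages, stages[1:]):
        for left, right in product(current, following):
            if left in hubs and right in hubs:
                pairs.append((left, right))
    return pairs


stages = parse_stages(SALES_PROCESS)
candidates = link_candidates(stages, BUSINESS_OBJECTS)
for left, right in candidates:
    print(f"link candidate: {left} <-> {right}")
```

“Opportunity Close” is a process step rather than one of the listed business objects, so the sketch produces no link candidate for it. Opportunity Product and Product do not appear in the process string at all, so their relationships have to come from analyzing the source data.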

Note that, while more complete, this article does not describe the full capabilities of Data Vault 2.0. Data Vault 2.0 shows its full strength when data from multiple data sources must be integrated. This is not the case in this article as we only model data from Microsoft Dynamics CRM to keep the article simple. However, you can easily extend the model with additional data in the next iteration. Data Vault 2.0 has been designed for the agile development of the data analytical platform. Step by step, you would add more and more data from additional (or the same) data sources and integrate the data into the platform.

A Team Effort

Building a data analytics platform is not a job only for data modelers. Instead, a data analytics platform is a complex technical system, and building it requires expertise from technical and business areas. Therefore, it is a team effort, and many roles are involved, including data engineers, architects, testers, DevOps engineers, and more. In addition to the typical data roles, it is highly recommended to include two other types of roles: one that focuses on business value and one that focuses on the data sources.

The business role represents the user and can explain the requirements. They know the business processes and how the business value, typically a report or dashboard, is used later on. They can also explain data quality issues and know what step in the business process causes them.

On the other side of the team, there are the data source specialists. They know the source system very well from a technical perspective: they know the purpose of each data attribute, can explain data quality issues from a source system perspective, and know how the data relate to each other.

For the same reasons, we teamed up with the CRM experts at Scalefree to write this article and create a more comprehensive Data Vault 2.0 model for the Microsoft Dynamics 365 CRM solution.

Getting started with the Data Vault project is always a challenge. Typically, teams face two questions (among others):

How to start the overall project?

How to start with the modeling aspects?

The next two sections present our answers to these questions.

How to start the overall project?

At Scalefree, our practice is to initiate our client engagement with a series of sprints. The first sprint, called “sprint minus one”, is all about resources and is performed by someone in a senior role at Scalefree. Does the project have access to the right resources, including team members, business users, software and hardware, and meeting space? Would the team be able to start working as soon as they join the project? Are user credentials set up?

Once the readiness of the project has been established, the next sprint, called “sprint zero”, is used to set up the infrastructure and the initial information requirement for the first real sprint (“sprint one”). The goal is to set up the architecture for the data analytics platform, including all the required layers. As a basis, we use one of the Data Vault 2.0 architectures from our previous articles. However, in most cases, we do not apply these architectures directly: the architecture usually requires some adjustments to the circumstances of the client, for example, pre-existing components or the tool stack. With that in mind, we honestly don’t know if the architecture draft actually works in a project: many variables are unknown, some tools are new and sometimes even untested, and the team might lack skills or experience.

The worst-case scenario would be to start developing with this architecture blueprint only to realize two years from now that the architecture choice was a bad one or doesn’t work at all. Instead of wasting two years of budget, we follow a “fail hard fast” strategy. If the architecture blueprint doesn’t work, you want to fail as soon as possible to limit the wasted budget. And that is the main goal of sprint zero: set up the architecture and establish the data flow from an actual data source to the actual target. This data flow doesn’t have to use Data Vault models; at this stage, we are more concerned about the data flow itself. More important than the Data Vault model is using the sprint to test assumptions and resolve unknowns.

For example, in one of our projects, the client wanted to use binary hash keys in the information marts. It was unclear whether the dashboard application was able to join on these binary hash keys. Instead of waiting to test this later in the project, we used sprint zero to test the assumption that it would work. If it did not, we could still adjust our architecture and implementation techniques for the later sprints.
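To illustrate what was being tested, here is a minimal sketch of the same hash key in binary and in character form. The hash function and normalization used in that project are not stated here. The sketch assumes MD5 over the trimmed, upper-cased business key parts joined by a delimiter, which is a common Data Vault 2.0 convention. The names `hash_key_binary`, `hash_key_hex`, and `DELIMITER`, as well as the sample key, are hypothetical.

```python
import hashlib

DELIMITER = "||"  # assumed separator between the parts of a composite business key


def normalize(part: str) -> str:
    """Trim and upper-case one business key part (assumed convention)."""
    return part.strip().upper()


def hash_key_binary(*business_key_parts: str) -> bytes:
    """Return the 16-byte MD5 digest of the normalized, delimited business key."""
    payload = DELIMITER.join(normalize(p) for p in business_key_parts)
    return hashlib.md5(payload.encode("utf-8")).digest()


def hash_key_hex(*business_key_parts: str) -> str:
    """Return the same hash as a 32-character hexadecimal string."""
    return hash_key_binary(*business_key_parts).hex().upper()


# Hypothetical account number, delivered with different casing and whitespace.
binary_key = hash_key_binary(" acc-1001 ")
hex_key = hash_key_hex("ACC-1001")

print(len(binary_key), len(hex_key))         # 16 32
print(bytes.fromhex(hex_key) == binary_key)  # True
```

The binary form needs half the storage of the character form, but not every front-end tool can join on binary columns. That is the kind of assumption a sprint zero test can confirm or refute before the model depends on it.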

The goal of sprint one, and most subsequent sprints, is to deliver business value. However, it is not the purpose of sprint one to define the business value. Instead, this should be done in a previous sprint. Since this is sprint one, sprint zero must define the business value with a first information requirement.

Most subsequent sprints should deliver business value or at least some progress. There might be some exceptions when technical debt piles up over time. In this case, a technical sprint might focus on reducing the technical debt and not delivering any business value. However, this is the exception, not the rule.

How to get started with the Data Vault model?

While the Data Vault model is not a concern of sprint zero, it is certainly a concern in subsequent sprints. Many less experienced Data Vault practitioners face the issue of getting started with the first Data Vault model, even if they went through proper training. A good start is always to define the client’s business model and focus on the major concepts. For example, the following diagram could present the business model of a customer-centric organization:

We call this diagram a “conceptual model” or ontology. It defines the business objects and their relationships. Typically, it is defined in a meeting with business users when we ask them early in the project to explain their business.

The next piece of knowledge to extract from the business is the business keys, which are used to identify the business objects in the conceptual model. When asking business users from various departments, you will often get different answers. This is because different departments often use different operational systems with different business key definitions to identify the same business objects. Therefore, expect multiple business keys per business object, depending on who you ask. Add them to the conceptual model:

We have added the business keys using braces to the above diagram. However, we did not try to find the best business key for a certain business object. Do not judge the business keys yet. Email addresses might not be a good choice, given privacy requirements, but add them to the concept nevertheless. These business key candidates will give us choice, so the more of them (as long as they are actually in use), the better. With that in mind, make sure to invite all relevant business users to capture as many data sources as possible.
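The conceptual model with its business key candidates can also be kept in a small data structure, so that every candidate and the source that reported it is recorded without judging it. This is a minimal sketch. The concepts (Customer, Product, Order), their relationships, the key names, and the source systems are all hypothetical placeholders for whatever the business users report.

```python
from dataclasses import dataclass, field


@dataclass
class BusinessObject:
    """One concept of the conceptual model with its business key candidates."""
    name: str
    # Maps each candidate business key to the sources that reported it.
    key_candidates: dict[str, set[str]] = field(default_factory=dict)

    def add_candidate(self, key: str, reported_by: str) -> None:
        """Record a candidate without judging whether it is a good key."""
        self.key_candidates.setdefault(key, set()).add(reported_by)


def render(obj: BusinessObject) -> str:
    """Render a concept with its candidates in braces, as in the diagram."""
    keys = ", ".join(sorted(obj.key_candidates))
    return f"{obj.name} {{{keys}}}"


# Hypothetical concepts and relationships of a customer-centric organization.
concepts = {name: BusinessObject(name) for name in ("Customer", "Product", "Order")}
relationships = [("Customer", "places", "Order"), ("Order", "contains", "Product")]

# Hypothetical answers from different departments and their systems.
concepts["Customer"].add_candidate("customer_number", reported_by="ERP")
concepts["Customer"].add_candidate("email_address", reported_by="CRM")
concepts["Customer"].add_candidate("email_address", reported_by="Web shop")
concepts["Product"].add_candidate("product_number", reported_by="ERP")
concepts["Order"].add_candidate("order_number", reported_by="ERP")

for obj in concepts.values():
    print(render(obj))
```

Recording which source reported each candidate makes it easier to see later which keys are shared across systems.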

This model will be of great use when analyzing specific source systems, such as Microsoft Dynamics CRM. The basic idea of the business key, as discussed in article 7 of this series, is to be shared across source systems, so it can be used to integrate the separate data sets.
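The integration idea can be sketched with two source systems that deliver the same business key under different column names. This is only an illustration. The records, column names, and the `load_hub` helper are hypothetical. The hub is simplified to a dictionary that keeps one entry per business key together with the record source that delivered it first.

```python
# Hypothetical records from two source systems describing the same accounts.
crm_accounts = [
    {"account_number": "ACC-1001", "name": "Contoso"},
    {"account_number": "ACC-1002", "name": "Fabrikam"},
]
erp_customers = [
    {"customer_no": "acc-1001 ", "credit_limit": 5000},
    {"customer_no": "ACC-1003", "credit_limit": 1200},
]


def load_hub(hub: dict[str, str], records: list[dict], key_column: str,
             record_source: str) -> dict[str, str]:
    """Add unseen business keys to the hub; known keys stay untouched."""
    for record in records:
        business_key = str(record[key_column]).strip().upper()
        # The first source that delivers a key is kept as its record source.
        hub.setdefault(business_key, record_source)
    return hub


hub_account: dict[str, str] = {}
load_hub(hub_account, crm_accounts, "account_number", "CRM")
load_hub(hub_account, erp_customers, "customer_no", "ERP")

for business_key, record_source in hub_account.items():
    print(business_key, record_source)
```

Because both systems share the account number, the ERP record for `acc-1001` lands on the hub entry that the CRM load already created, and descriptive data from both systems can later be attached to the same key.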

Identify the Concept and Type of Data

Once the organizational context of the model is known, it is time to analyze the actual dataset. The first step is to identify the concept and type of data:

Is this dataset related to one of the concepts in the conceptual diagram?

Does this dataset represent a business object (maybe in addition to the ones on the conceptual diagram) or transactional data?

What about reference data?

Is multi-active data (refer to article 8 of this series) involved?