Category: Microsoft

Category Archives: Microsoft

Contributor Stories: Carlos Solís Salazar

If you’ve ever engaged with the content on the Microsoft Learn platform, it’s highly likely that the material you utilized was written or co-authored by dedicated contributors. These contributors, often volunteers, generously offer their time and expertise to fill knowledge gaps within our content portfolio by suggesting valuable updates to our material, sharing their knowledge within the Microsoft community, and/or answering questions on the Q&A area of the Microsoft Learn platform!

In this interview series, we aim to acquaint ourselves with some of these valuable contributors. Through these conversations, we seek to understand their motivations for sharing their knowledge on Microsoft Learn and gain insights into their experiences.

Today, we’re pleased to introduce one of our top Microsoft Community Champions contributors, Carlos Solís Salazar. Carlos is a dedicated IT professional currently serving as the DOJO Cloud Leader within the Communidad DOJO sub-community and a Presales Engineer at Business IT. With a specialization in Microsoft Azure and a keen focus on information security, Carlos brings a wealth of experience in spearheading digital transformation initiatives and fortifying technological infrastructures across diverse organizations. Renowned for his innovative mindset and effective problem-solving, Carlos has made a significant impact on the cloud computing community as an Ambassador and Leader of DOJO Cloud, organizing and coordinating educational events. Additionally, his commitment to education is evident through his role as a lecturer at Colegio Universitario Francisco de Miranda, emphasizing skill development in IT security.

Carlos was nominated for this month’s Contributor Spotlight Series because of his long-standing contributions to our Microsoft Learn platform! He’s helped answer over 300+ questions on Microsoft Learn Q&A and is a recognized champion of the Microsoft Community Champions program. Carlos holds key certifications, including Microsoft Certified: Azure Solutions Architect Expert, Microsoft Certified: DevOps Engineer, and Microsoft Certified: Azure Security Engineer Associate, underscoring his dedication to continuous learning and professional excellence. Aside from his Microsoft contributions and certifications, he’s also a regular contributor in various forums like Stack Overflow.

Meet Carlos:

Sumit: Hello Carlos! It’s great to meet you and spend some time with you today. Please tell us about yourself and your experience.

Carlos: Hey there, Sumit. Nice to meet you, as well. I’m an IT pro with a strong focus on Microsoft Azure and info security. I’m all about digital transformation and beefing up tech infrastructures. Day-to-day, I’m knee-deep in crafting cool solutions with Microsoft Azure and Microsoft 365 to make businesses smoother and safer.

Outside the office grind, I’m super active in the DOJO community, especially around cloud computing. As a DOJO Cloud Leader, I get to organize workshops and talks, creating a space for people to learn and connect over cloud tech. I even spearheaded Microsoft Cloud Day Panama this September, and we’re planning to make it an annual thing!

I also love hitting the stage at conferences to chat about IT trends and my experiences. It’s a great way to spread knowledge and sharpen my public speaking skills. Some events I’ve attended include DOJO Conf 2023, Bsides Panama 2023, Amalia Conf 2022, and morterueloCON 2021.

In short, my world’s a blend of tech know-how, community vibes, and an endless journey of learning and growing – for me and everyone I meet.

Sumit: That’s so exciting. Love the community work that you’ve been doing. As a community leader, what inspired you to start contributing to Microsoft Learn Q&A, and what motivates you to keep contributing?

Carlos: Jumping into the IT world, especially Microsoft Azure, was driven by my love for tech innovation and wanting to make a real difference. I’ve always been intrigued by how tech can revolutionize organizations and enhance daily life. This curiosity pulled me deeper into cloud computing and security, which I think are super crucial today. Stumbling across champions program, I was inspired by the desire to belong to a community of professionals who share the same goal which is to help others solve their problems in the use of azure technologies.

What keeps me contributing to the Q&A site? It‘s seeing the real-world impact of my contributions. When I share what I know, I see others grow, adapt, and solve their own tech challenges. Plus, the feedback and stories from the community are super valuable—they keep me on my toes, always learning and improving.

Another big push is how fast technology evolves. Staying on top of the latest trends is a challenge, but it’s thrilling, and I enjoy sharing it with others so they can also stay up to date! I’m stoked about being part of this ongoing tech evolution and helping shape how it positively transforms our lives and society.

Sumit: Have your contributions to Microsoft Learn Q&A helped you advance your learning journey and/or career path?

Carlos: Oh, absolutely! Diving into the Microsoft Learn Q&A platform really turbocharged my career and skill set. It’s been awesome for showing off my knack for tackling Microsoft Azure challenges. And dealing with real-world scenarios on the Q&A platform has sharpened my problem-solving skills. I get to look at these problems with a ‘let’s-fix-this’ attitude. Every time I bump into a new question or issue there, it’s not just about using what I already know. It’s also a chance to dig into fresh areas and technologies in the Azure world, which keeps me right at the cutting edge of what’s happening in the industry.

Plus, explaining solutions on the Q&A platform has really upped my communication game. There’s a big difference between solving a problem versus explaining it in a way that’s clear and helpful to others. That ability to communicate well has been a game-changer in my professional life, especially when I’m working with teams or pitching ideas to bigwigs.

Sumit: That’s amazing – really appreciate the growth mindset with which you operate. What about our Champions program, Carlos? I’d love to know how your partnership with the Microsoft Community Champions program has been?

Carlos: Being part of Microsoft Community Champions? It’s been amazing! The vibe here is all about teamwork and support, from both Microsoft folks and fellow champs. They’ve really helped me up my game, especially in understanding and tackling Azure challenges. What’s cool is that this program isn’t just about tech skills. It’s also boosted my communication and problem-solving abilities big time, thanks to all the great feedback and shared knowledge from the community.

And the perks? They’re like the cherry on top! They’ve got some neat incentives that make being active in the program super rewarding. Won’t give away the surprises, but they definitely make you feel appreciated. I recommend everyone to join the Microsoft Community Champions program.

In short, this partnership with Microsoft has been a total win. It’s been fun, enlightening, and really pushed my professional growth, connecting me more with the Azure world.

Sumit: Glad to hear that. Community work is an integral part of our culture and having said that, is there any advice you would like to give to your fellow peers who are interested in contributing to open-source content?

Carlos: For anyone thinking about diving into open-source content, I say, yes – jump right in! Getting involved in open-source is a game-changer. It’s great for personal growth and getting noticed professionally because when you contribute, you’re not just visible to that project’s community, but also to others in similar fields and folks interested in your work.

And here’s the cool part: you don’t need to be a pro to make a real difference. Just by jumping in, sharing ideas, and doing what you can, you start building a reputation as a knowledgeable and dedicated contributor. And that can happen even if you’re still learning.

Plus, open-source is all about teamwork with a diverse bunch of people from all sorts of backgrounds. It’s an awesome way to expand your network and polish those team skills, which are super important in any job.

So, my advice? Go for it! It’s a rewarding journey that opens up loads of opportunities.

Sumit: Thank you, Carlos – both for your insight and motivation! We’d also like to understand the person behind all this great work. Do you mind sharing something about yourself outside of work?

Carlos: When I’m off the clock, I really focus on staying healthy and connecting with my family and friends. It’s all about finding that sweet spot between work and personal life, you know?

I used to be big on running, but these days, I’m more into chill walks. They’re awesome for getting some fresh air, clearing my head, and keeping fit. Plus, it’s a great way to just slow down and enjoy the moment. Meditation’s another big thing for me. It’s been a game-changer for my mental health, keeping me cantered and sharp, both in my personal life and at work.

And I love to read! Lately, I’ve been diving into Stoicism. It’s fascinating stuff – talks a lot about resilience, virtue, and just living a good life. It’s not just brain food; it’s really helped me grow as a person. So yeah, my downtime’s all about doing things that bring me joy and balance. Keeps me energized for everything else!

Sumit: Same goes for me as well. I love taking my amazing peaceful walks with my dog! It’s so rejuvenating. Thank you for sharing a part of your life with us. It was wonderful getting to know you. Before we wrap up, how would you summarize your experience being a Community Champion and a selfless leader who helps the tech ecosystem in various ways?

Carlos: Looking back on my contributions, what really stands out is the importance of sharing knowledge, keeping an active online profile, and building a solid professional brand. It’s about more than just you – it’s how you fit into and enrich the community, whether it’s with DOJO or Microsoft Learn.

Sharing isn’t just passing on info; it’s about creating a learning environment where everyone benefits. Engaging on platforms like Microsoft Learn is awesome for this – you’re both teaching and learning, and that’s a win-win. Having a strong online presence has been key for me. It’s not just about visibility; it’s about connecting, staying updated, and really seeing your impact. These platforms are more than Q&A sites – they’re where professional relationships and reputations are built.

And it’s not just about what you gain. Being part of a community means contributing to something bigger, and there’s a huge sense of fulfillment in that. It’s all about giving back and watching the community grow. I would suggest everyone to join the program and help the community grow.

Sumit: Thank you, Carlos, for your time and for all the hard work you’ve dedicated to helping the tech community. It was really nice to meet you.

Keep up with Carlos:

Personal blog: https://www.soysoliscarlos.com/

LinkedIn: https://www.linkedin.com/in/soysoliscarlos/

Twitter: https://twitter.com/soysoliscarlos

Credly: https://www.credly.com/users/soysoliscarlos/badges

To learn more about:

Contributing to Microsoft Learn, visit Microsoft Learn Contributor Home.

Contribute to the Microsoft Learn Q&A by joining the Microsoft Community Champions program using nomination form.

Microsoft Tech Community – Latest Blogs –Read More

Introducing the new Microsoft Copilot experience in Teams

Starting today, Copilot for Microsoft 365 users will begin to see a new Copilot experience in Microsoft Teams. Here’s what you need to know.

What’s the new Copilot experience in Teams?

The new Copilot experience is an improved version of the Copilot app in Teams that you may have used before (formerly known as Microsoft 365 Chat). It offers the same functionality and content access as the current app, but with additional benefits, including:

Better prompts, including details specific to you like the people you work with, your meetings, and your files.

Ability to see your Copilot chat history

Access to a library of prompts via Copilot Lab

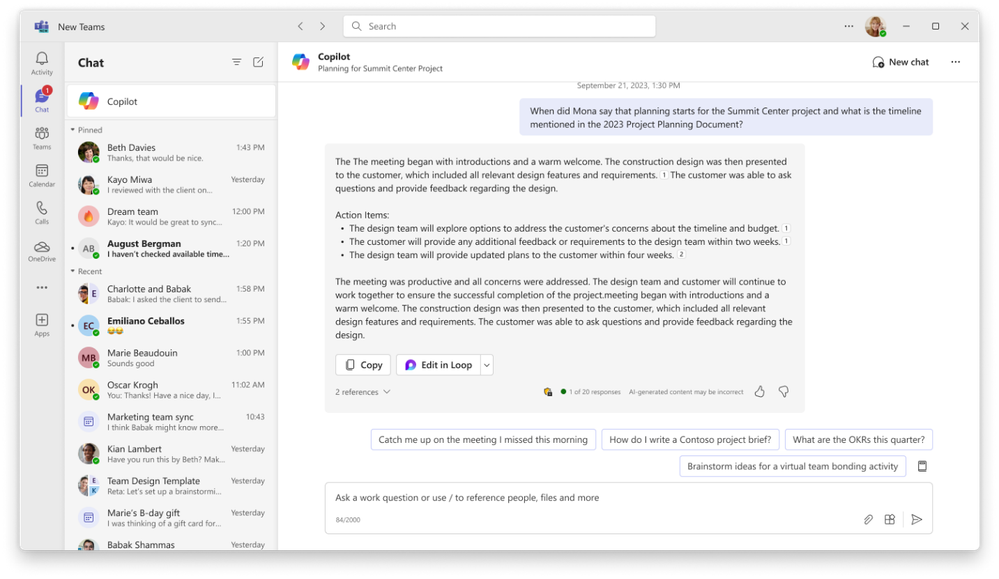

An image of the Microsoft Copilot app in Teams, responding to a question based on the user’s Graph data

How is it different from the current experience in Teams?

It’s better than the current Microsoft 365 Chat in Teams. The new Copilot in Teams is easier to find, to use, and has better functionality for a better experience.

This will give you the same Copilot experience in Teams as you get from microsoft365.com, Bing, and copilot.microsoft.com.

What’s the difference between the Copilot app in the Teams app store and the Copilot pinned above chats?

They’re the same, and it’s up to you how you’d like to use Copilot.

The new Copilot app experience is an improved version of the previous M365 Chat app. If you still have Microsoft 365 Chat (or M365 Chat), this is the older version and we recommend unpinning.

When will this be available?

The new pinned Copilot app in Teams is rolling out now.

Where can I find it and how do I get started?

If you have a Copilot for Microsoft 365, the new app will be automatically pinned above your chats. Just open Teams, select “chats” and you’ll see Copilot. Open Copilot and start catching up, creating, and asking! Learn more.

Microsoft Tech Community – Latest Blogs –Read More

SQL Server – Minimum downtime schema updates

Introduction and Motivation

Many customers want to deploy application and database schema updates to a production environment with zero or minimal downtime. The SQL Server dev team has done a lot of work to reduce or eliminate locking during schema updates (i.e. add a column), but there are still situations where such schema changes can have a direct and negative impact on production workloads.

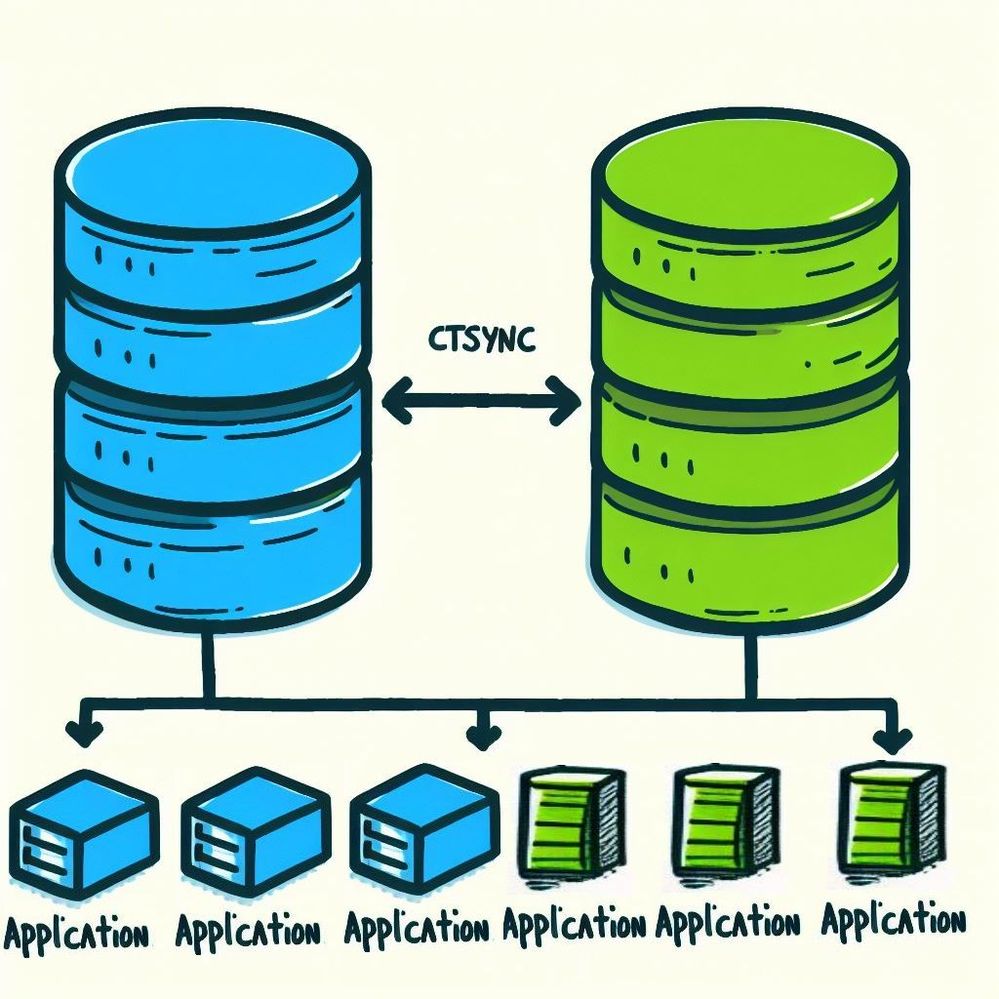

One model that has emerged to facilitate minimal downtime deployments is the Blue/Green model. This technique was described in Continuous Delivery by Jez Humble and David Farley and Martin Fowler’s Blue Green Deployment model. The basic concept is that you have two parallel systems; the blue system is the current production, while the green system is kept up to date with the DML changes on the blue. By making this green update process asynchronous, blocking schema changes can be made on green and then the data changes to the blue database can be caught up afterward.

For application servers, you can either have a parallel set of blue and green servers, unless you can simultaneously deploy green application updates to blue servers. The challenge is how to seamlessly migrate users from the blue to the green version of the application, since in most cases, the application maintains some kind of state. Obviously, there may be timing issues and conflicting write scenarios, but this is outside of the scope of this blog. In this blog we will focus on the database tier.

Michael J Swart, describes a blue/green type deployment, but his company does not actually use separate blue and green databases, but instead use an “aqua” database with versioned SQL objects. Martin Fowler suggested potentially making the database read-only during the transition, but that would be very difficult to achieve, so instead Michael’s company decided to combine multiple versions into a single database. This technique requires very careful planning of schema changes and may even preclude certain types of changes.

In this blog we want to explore the ability to do a full blue/green set of databases and how we can enable change propagation from blue to green databases using an asynchronous update method with minimal performance impact on the blue server.

One option worth exploring that may offer an alternative to handling change data and schema changes is a combination of Azure Data Factory (ADF) – Native Change Data Capture (CDC) and ADF Data Flow – Schema Drift features.

In developing this Proof of Concept (POC) application, we considered using Availability Groups (AGs), Replication, Change Data Capture (CDC) and Change Tracking (CT). The synchronous nature of AGs and the fact that the secondary (green) is read only disqualifies using them in this application. Replication and CDC both turn on verbose logging and require a logreader etc. therefore we decided that CT was the lighter weight solution that we would focus on.

The key requirement of the POC was to allow green schema changes without breaking the data change synchronization from blue. Not only do we need to be able to handle any schema differences between tables in blue and green, but we need to be able to pause the update activity during impactful schema changes.

During a review of the initial solution, it became clear that there was a requirement of additional column mapping capabilities from blue to green, i.e. the ability to replace a column with a primary key from a lookup table using the original column value, the ability to rename columns, change column types and split columns between two tables.

The result of our POC work is a .NET 7 C# console application that uses an application configuration file and an optional Excel file to drive the synchronization and mapping process from Blue to Green. Although .Net 7 is cross platform, the OLEDB driver used to read Excel is Windows only.

Once the switch over from Blue to Green happens, the intent behind this design is to start the process again by using a backup of the new Blue database, restore it over the old Blue database making it the new Green database. This would likely be easier than trying to synchronize schema by applying all of the change scripts applied to the Green and then synchronizing the data.

Application Installation

There is no installation required, just unzip the application into a folder. The installation includes an application configuration (app.config) file and optional Excel file. The app.config includes blue (source) and green (target) connection strings, the last synchronization version starting point, optional Excel input and several other parameters:

The input Excel file allows five mapping operations from blue to green (operation input column):

N = Name change.

V = Write CT current Version into a new column.

T = Type change.

S = Split column.

L = Replace column with Lookup.

The input spreadsheet has the following columns:

The application assumes that Change Tracking has been turned on and at least one table has been enabled for change tracking (see script in Appendix A). At the app.config configurable wait interval, the application checks if there have been any data changes made in the blue environment and if so, it will bulk insert the changes into a staging table in the green database, from where it will apply the changes to the green table(s). In this way, synchronization between Azure SQL DB databases is also supported.

Tables are processed in dependency order, so if there are foreign key (FK) constraints defined, the “dimension” table insert, and update operations will be processed first and then the referenced “fact” tables will be processed. For dimension tables with references, the row deletes from those tables will be deferred until after the dependent fact tables are processed, to avoid FK delete conflicts.

To avoid processing changes more than once, when starting a synchronization, the application gets the current change tracking version and only processes changes between the previous sync and this version. This avoids the need to use read only snapshot isolation (RCSI) during change processing. There is also some additional processing done on the green database, to avoid duplicate inserts and updates of non-existing records, to ensure the set operations succeed and are not rolled back.

To run the application, make the appropriate changes to the app.config file, the optional Excel file and run the application. While the application is running the following single key commands are available:

X – Exit

E – Reload Excel Mapping File

T – Shows Statistics at a Table level – i.e. DML operations per table.

S – Shows Total Statistics – i.e. Total DML operations.

P – Pause synchronization

R – Resume synchronization

The application creates an XEvent listener on the green database to monitor schema changes to tables. When a change tracked table is modified the application automatically reloads the metadata for the green table and re-evaluates the column mappings. To prevent application failures, you should pause synchronization during green schema modifications.

Every time there is a synchronization, a console message is written that includes the starting and ending version and the number of inserts, updates, and deletes. The number of deletes has two numbers, the total deletes, and the number of deferred deletes (in brackets).

The total operations are displayed when the applications exits but are also available during application execution using the T command for totals by table or the S command for overall totals.

The sample application can be downloaded here: CTSync application.

Note: The sample application is a Proof of Concept to explore the feasibility of the technique proposed. It comes with no warrantees or support, therefore use it at your own risk. There are additional details in the User’s Guide included in the package. For information related to support for this tool, please refer the license agreement that is included in the download.

Feedback and suggestions

If you have feedback or suggestions for improving this team asset, please contact the Azure Databases SQL Customer Success Engineering Team. Thank you for your support!

Microsoft Tech Community – Latest Blogs –Read More

Converting an AWS Lambda Function in Go into an Azure Function using LLMs

Converting an AWS Lambda into an Azure Function using LLMs in Go

Disclaimer: This article is an experimental implementation about applying LLMs to convert AWS Lambda functions into Azure Functions. It is not intended as a defined guide for the process and does not guarantee successful conversion. The outcome depends on the specific code you intend to convert and the LLM that you are using.

What would it take to convert a Lambda Function into an Azure Function?

How does a Lambda look like?

import (

“context”

“fmt”

“github.com/aws/aws-lambda-go/lambda”

)

type MyEvent struct {

Name string `json:”name”`

}

type MyResponse struct {

Message string `json:”message”`

}

func HandleRequest(ctx context.Context, event *MyEvent) (*MyResponse, error) {

if event == nil {

return nil, fmt.Errorf(“received nil event”)

}

message := fmt.Sprintf(“Hello %s!”, event.Name)

return &MyResponse{Message: message}, nil

}

func main() {

lambda.Start(HandleRequest)

}

func () error

func (TIn) error

func () (TOut, error)

func (context.Context) error

func (context.Context, TIn) error

func (context.Context) (TOut, error)

func (context.Context, TIn) (TOut, error)

What should be the result?

import (

“fmt”

“net/http”

“github.com/gin-gonic/gin”

)

type MyEvent struct {

Name string `json:”name”`

}

type MyResponse struct {

Message string `json:”message”`

}

func HandleRequest(ctx *gin.Context) {

if ctx.Request.Body == nil {

errorMsg := “received nil event”

ctx.JSON(http.StatusBadRequest, gin.H{“error”: errorMsg})

return

}

var event MyEvent

err := ctx.ShouldBindJSON(&event)

if err != nil {

errorMsg := fmt.Sprintf(“error on reading request body: %vn”, err.Error())

ctx.JSON(http.StatusBadRequest, gin.H{“error”: errorMsg})

return

}

message := fmt.Sprintf(“Hello %s!”, event.Name)

ctx.JSON(http.StatusOK, &MyResponse{Message: message})

}

func main() {

r := gin.Default()

r.Handle(http.MethodPost, “/HandleRequest”, HandleRequest)

r.Run()

}

The attempts

Chain-of-Thought (CoT) Prompt

Few shots using an example selector

Fine tuning

The solution

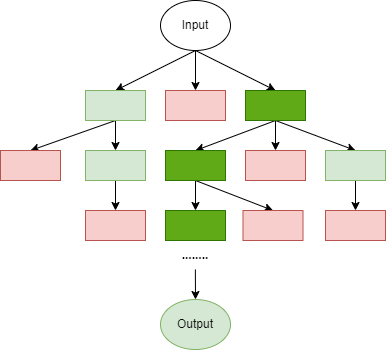

Language agent tree search

Simple prompt

You will be given a lambda function code. Rewrite the code without using lambda code and using a GinGonic server instead.

import (

“fmt”

“net/http”

“github.com/gin-gonic/gin”

)

type MyEvent struct {

Name string `json:”name”`

}

type MyResponse struct {

Message string `json:”message”`

}

func HandleRequest(c *gin.Context) {

var event MyEvent

if err := c.ShouldBindJSON(&event); err != nil {

c.JSON(http.StatusBadRequest, gin.H{“error”: err.Error()})

return

}

message := fmt.Sprintf(“Hello %s!”, event.Name)

c.JSON(http.StatusOK, MyResponse{Message: message})

}

func main() {

router := gin.Default()

router.POST(“/handle-request”, HandleRequest)

router.Run() // By default, it listens on :8080

}

and intuitive unit tests for functions. You will be given a Go AWS Lambda function, that

is being converted to a GinGonic http server. Your job is to generate a comprehensive set

of tests to ensure its functionality remains consistent. The tests should cover all major

functionality of the function, including error handling, input validation, and expected output.

Here is the Go code for the AWS Lambda function:

{{lambdaCode}}

Here is the Go code for the GinGonic http server:

{{azureCode}}

import (

“bytes”

“net/http”

“net/http/httptest”

“testing”

“github.com/gin-gonic/gin”

“github.com/stretchr/testify/assert”

)

func setupRouter() *gin.Engine {

gin.SetMode(gin.TestMode)

r := gin.Default()

r.POST(“/event”, HandleRequest)

return r

}

func TestHandleRequest_Successful(t *testing.T) {

router := setupRouter()

w := httptest.NewRecorder()

body := bytes.NewBufferString(`{“name”:”John Doe”}`)

req, _ := http.NewRequest(“POST”, “/event”, body)

req.Header.Set(“Content-Type”, “application/json”)

router.ServeHTTP(w, req)

assert.Equal(t, http.StatusOK, w.Code)

assert.JSONEq(t, `{“message”:”Hello John Doe!”}`, w.Body.String())

}

func TestHandleRequest_BadRequest(t *testing.T) {

router := setupRouter()

w := httptest.NewRecorder()

body := bytes.NewBufferString(`{“nom”:”John Doe”}`) // Incorrect JSON field name

req, _ := http.NewRequest(“POST”, “/event”, body)

req.Header.Set(“Content-Type”, “application/json”)

router.ServeHTTP(w, req)

assert.Equal(t, http.StatusBadRequest, w.Code)

}

func TestHandleRequest_EmptyBody(t *testing.T) {

router := setupRouter()

w := httptest.NewRecorder()

body := bytes.NewBufferString(`{}`) // Empty JSON body

req, _ := http.NewRequest(“POST”, “/event”, body)

req.Header.Set(“Content-Type”, “application/json”)

router.ServeHTTP(w, req)

assert.Equal(t, http.StatusOK, w.Code)

assert.JSONEq(t, `{“message”:”Hello !”}`, w.Body.String()) // Expect default behavior for empty name

}

import (

“net/http”

“net/http/httptest”

“strings”

“testing”

“github.com/gin-gonic/gin”

“github.com/stretchr/testify/assert”

)

func TestHandleRequest(t *testing.T) {

type args struct {

request *http.Request

}

tests := []struct {

name string

request func() *http.Request

expectedCode int

expectedBody string

}{

{

name: “success”,

request: func() *http.Request {

req := `{“name”:”Ana”}`

return httptest.NewRequest(http.MethodPost, “/handle”, strings.NewReader(req))

},

expectedCode: http.StatusOK,

expectedBody: `{“message”:”Hello Ana!”}`,

},

}

for _, tt := range tests {

t.Run(tt.name, func(t *testing.T) {

router := gin.Default()

router.POST(“/handle”, HandleRequest)

w := httptest.NewRecorder()

httpHandler := router

httpHandler.ServeHTTP(w, tt.request())

assert.Equal(t, tt.expectedCode, w.Code)

assert.Equal(t, tt.expectedBody, w.Body.String())

})

}

}

Feedback: |

TestsPassed: … <The code for the test I manually added>

TestsFailed: … <The code for the auto-generated one>

ErrorTrace:

C:/Users/XXXXX/AppData/Local/Temp/go-lats/lats_test.go:44

Error: Not equal:

expected: 400

actual : 200

Test: TestHandleRequest_BadRequest

Score: 0.75

series of unit tests. Your goal is to write a few sentences to explain why your

implementation is wrong as indicated by the tests. You will need this as a hint when you

try again later. Only provide the few sentence description in your answer, not the

implementation.

{{examples}}

[function impl]:

{{azure_code}}

[unit test results]:

{{feedback}}

[self-reflection]:”

request (such as wrong field names), the error should be reported with a status code of

`http.StatusBadRequest`. However, the failing unit test `TestHandleRequest_BadRequest`

suggests that this behavior is not occurring as expected. The test sends a JSON body with

an incorrect field name (`”nom”` instead of `”name”`) and expects a

`http.StatusBadRequest` status code due to binding failure, but the test reports receiving

a `http.StatusOK` status code instead. This indicates that the `ShouldBindJSON` method

might not be detecting the discrepancy between the expected and received JSON fields or

the error handling logic after the `ShouldBindJSON` call is not functioning correctly.

You are an AI Go assistant. You will be given your past function implementation, a

series of unit tests, and a hint to change the implementation appropriately. Write your

full implementation, Rewrite the code without using lambda code and using a GinGonic

server instead. Do not by any chance use lambda code.

Use a Go code block to write your response. For example:

“`go

func main() {

fmt.Println(“Hello, World!”)

}

{{examples}}

[user message]:

{{lambda_code}}

[assistant message]:

{{azure_code}}

[user message]:

[unit test results from previous impl]:

{{feedback}}

[assistant message]:

[reflection on previous impl]:

{{self-reflection}}

[user message]:

Try to convert this code again:

{{lambda_code}}

import (

“fmt”

“net/http”

“github.com/gin-gonic/gin”

)

type MyEvent struct {

Name string `json:”name”`

}

type MyResponse struct {

Message string `json:”message”`

}

// HandleRequest processes the event and returns a response

func HandleRequest(c *gin.Context) {

var event MyEvent

// Bind the JSON to the struct; if nil or incorrect, return an error.

if err := c.ShouldBindJSON(&event); err != nil {

c.JSON(http.StatusBadRequest, gin.H{“error”: err.Error()})

return

}

// Check if the event is nil or the name is an empty string

if event.Name == “” {

// Respond with an error message if Name is empty

c.JSON(http.StatusBadRequest, gin.H{“error”: “name field is required”})

return

}

// Constructing the message

message := fmt.Sprintf(“Hello %s!”, event.Name)

response := MyResponse{Message: message}

// Sending back the response as JSON

c.JSON(http.StatusOK, response)

}

func main() {

// Set up Gin router

r := gin.Default()

// Define POST route

r.POST(“/event”, HandleRequest)

// Run the Gin server on a specified port

r.Run(“:8080”)

}

Microsoft Tech Community – Latest Blogs –Read More

Join Teams for work or school meetings with personal account

We are improving the ways to join Teams meetings and have started to roll out an improvement enabling you to join a Teams meeting organized by a work or school user with your signed-in personal account. Read more on the Teams Insider blog and join Teams Insider to try this in Teams free on Windows 11 today!

Join Teams for work or school meeting with your personal account – Teams Insider

Microsoft Tech Community – Latest Blogs –Read More

Join Teams for work or school meetings with personal account

We are improving the ways to join Teams meetings and have started to roll out an improvement enabling you to join a Teams meeting organized by a work or school user with your signed-in personal account. Read more on the Teams Insider blog and join Teams Insider to try this in Teams free on Windows 11 today!

Join Teams for work or school meeting with your personal account – Teams Insider

Microsoft Tech Community – Latest Blogs –Read More

Join Teams for work or school meetings with personal account

We are improving the ways to join Teams meetings and have started to roll out an improvement enabling you to join a Teams meeting organized by a work or school user with your signed-in personal account. Read more on the Teams Insider blog and join Teams Insider to try this in Teams free on Windows 11 today!

Join Teams for work or school meeting with your personal account – Teams Insider

Microsoft Tech Community – Latest Blogs –Read More

Join Teams for work or school meetings with personal account

We are improving the ways to join Teams meetings and have started to roll out an improvement enabling you to join a Teams meeting organized by a work or school user with your signed-in personal account. Read more on the Teams Insider blog and join Teams Insider to try this in Teams free on Windows 11 today!

Join Teams for work or school meeting with your personal account – Teams Insider

Microsoft Tech Community – Latest Blogs –Read More

Join Teams for work or school meetings with personal account

We are improving the ways to join Teams meetings and have started to roll out an improvement enabling you to join a Teams meeting organized by a work or school user with your signed-in personal account. Read more on the Teams Insider blog and join Teams Insider to try this in Teams free on Windows 11 today!

Join Teams for work or school meeting with your personal account – Teams Insider

Microsoft Tech Community – Latest Blogs –Read More

Intelligent App Chronicles: Azure API Management as an Enterprise API Gateway

The Intelligent App Chronicles for Healthcare is a webinar series designed to provide health and life sciences companies with a comprehensive guide to building intelligent healthcare applications.

The series will cover a wide range of topics including Azure Container Services, Azure AI Services, Azure Integration Services, and innovative solutions that can accelerate your Intelligent app journey. By attending these webinars, you will learn how to leverage the power of intelligent systems to build scalable and secure healthcare solutions that can transform the way you deliver care. Our hosts will be: (99+) Shelly (Finch) Avery | LinkedIn, (99+) Matthew Anderson | LinkedIn

Our next session will be on Feb 20th at 9:00 PT / 10:00 MT / 11:00 CT / 12:00 ET – Click here to Register.

Overview:

Please join us for an informative session on how to use Azure API Management as an enterprise API gateway. You will discover how to use Azure API Management as an enterprise API gateway to create intelligent and secure healthcare applications.

Our speaker this week is Rob McKenna, Principal Technical Specialist for Azure Apps and Innovation, he will cover topics such as:

Benefits of a centralized and shared API gateway

the steps to get your enterprise teams started

networking considerations for regulated industries.

How to ensure the internal and external availability of your APIs

How to improve your developer velocity, and how to use DevOps for API management and developer experience tooling.

Don’t miss this opportunity to learn from the experts and take your healthcare applications to the next level. Register now for the Intelligent App Chronicles for Healthcare webinar series! here!

Thanks for reading!

Please follow the aka.ms/HLSBlog for all this great content.

Thanks for reading, Shelly Avery | Email, LinkedIn

Microsoft Tech Community – Latest Blogs –Read More

Hunting for QR Code AiTM Phishing and User Compromise

In the dynamic landscape of adversary-in-the-middle (AiTM) attacks, the Microsoft Defender Experts team has recently observed a notable trend – QR code-themed phishing campaigns. The attackers employ deceptive QR codes to manipulate users into accessing fraudulent websites or downloading harmful content.

These attacks exploit the trust and curiosity of users who scan QR codes without verifying their source or content. Attackers can create QR codes that redirect users to phishing sites that mimic legitimate ones, such as banks, social media platforms, or online services. The targeted user scans the QR code, subsequently being redirected to a phishing page. Following user authentication, attackers steal the user’s session token, enabling them to launch various malicious activities, including Business Email Compromise attacks and data exfiltration attempts. Alternatively, attackers can create QR codes that prompt users to download malware or spyware onto their devices. These attacks can result in identity theft, financial loss, data breach, or device compromise.

This blog explains the mechanics of QR code phishing, and details how Defender Experts hunt for these phishing campaigns. Additionally, it outlines the procedures in place to notify customers about the unfolding attack narrative and its potential ramifications.

Why is QR code phishing a critical threat?

The Defender Experts team has observed that QR code campaigns are often massive and large-scale in nature. Before launching these campaigns, attackers typically conduct reconnaissance attempts to gather information on targeted users. The campaigns are then sent to large groups of people within an organization, often exceeding 1,000 users, with varying parameters across subject, sender, and body of the emails.

The identity compromises and stolen session tokens resulting from these campaigns are proportional to their large scale. In recent months, Defender Experts have observed QR code campaigns growing from 10% to 30% of total phishing campaigns. Since the campaigns do not follow a template, it can be difficult to scope and evaluate the extent of compromise. It is crucial for organizations to be aware of this trend and take steps to protect their employees from falling victim to QR code phishing attacks.

Understanding the intent of QR code phishing attacks

The QR code phishing email can have one of the below intents:

Credential theft: The majority of these campaigns are designed with the intent where the user is redirected to an AiTM phishing website for session token theft. The authentication method can be single factor authentication, where only the user’s password is compromised and the sign-in attempts are unsuccessful; in these scenarios, the attacker signs in later with the compromised password and bypasses multifactor authentication (MFA) through MFA fatigue attacks.Alternatively, the user can be redirected to an AiTM phishing page where the credentials, MFA parameters and session token are compromised in real-time.

Malware distribution: In these scenarios, once the user scans the QR code, malware/spyware/adware is automatically downloaded on the mobile device.

Financial theft: These campaigns use QR codes to trick the user into making a fake payment or giving away their banking credentials. The user may scan the QR code and be taken to a bogus payment gateway or a fake bank website. The attacker can then access the user’s account later and bypass the second factor authentication by contacting the user via email or phone.

How Defender Experts approach QR code phishing

In QR code phishing attempts, the targeted user scans the QR code on their personal non-managed mobile device, which falls outside the scope of the Microsoft Defender protected environment. This is one of the key challenges for detection. In addition to detections based on Image Recognition or Optical Character Recognition, a novel approach was necessary to detect the QR code phishing attempts.

Defender Experts have researched identifying patterns across the QR code phishing campaigns and malicious sign-in attempts and devised the following detection approaches:

Pre-cursor events: User activities

Suspicious Senders

Suspicious Subject

Email Clustering

User Signals

Suspicious Sign-in attempts

1. Hunting for user behavior:

This is one of the primary detections that helps Defender Experts surface suspicious sign-in attempts from QR code phishing campaigns. Although the user scans the QR code from an email on their personal mobile device, in the majority of the scenarios, the phishing email being accessed is recorded with MailItemsAccessed mail-box auditing action.

The majority of the QR code campaigns have image (png/jpg/jpeg/gif) or document attachments (pdf/doc/xls) – Yes! QR codes are embedded in Excel attachments too! The campaigns can include a legitimate URL that redirects to a phishing page with malicious QR code as well.

A malicious sign-in attempt with session token compromise that follows the QR code scan is always observed from non-trusted devices with medium/high risk score for the session.

This detection approach correlates a user accessing an email with image/document attachments and a risky sign-in attempt from non-trusted devices in closer proximity and validates if the location from where the email item was accessed is different from the location of sign-in attempt.

Advanced Hunting Query:

let successfulRiskySignIn = materialize(AADSignInEventsBeta

| where Timestamp > ago(1d)

| where isempty(DeviceTrustType)

| where IsManaged != 1

| where IsCompliant != 1

| where RiskLevelDuringSignIn in (50, 100)

| project Timestamp, ReportId, IPAddress, AccountUpn, AccountObjectId, SessionId, Country, State, City

);

let suspiciousSignInUsers = successfulRiskySignIn

| distinct AccountObjectId;

let suspiciousSignInIPs = successfulRiskySignIn

| distinct IPAddress;

let suspiciousSignInCities = successfulRiskySignIn

| distinct City;

CloudAppEvents

| where Timestamp > ago(1d)

| where ActionType == “MailItemsAccessed”

| where AccountObjectId in (suspiciousSignInUsers)

| where IPAddress !in (suspiciousSignInIPs)

| where City !in (suspiciousSignInCities)

| join kind=inner successfulRiskySignIn on AccountObjectId

| where AccountObjectId in (suspiciousSignInUsers)

| where (Timestamp – Timestamp1) between (-5min .. 5min)

| extend folders = RawEventData.Folders

| mv-expand folders

| extend items = folders.FolderItems

| mv-expand items

| extend InternetMessageId = tostring(items.InternetMessageId)

| project Timestamp, ReportId, IPAddress, InternetMessageId, AccountObjectId, SessionId, Country, State, City

2. Hunting for sender patterns:

The sender attributes play a key role in the detection of QR code campaigns. Since the campaigns are typically large scale in nature, 95% of the campaigns do not involve phishing emails from compromised trusted vendors. Predominant emails are sent from newly-created domains or non-prevalent domains in the organization.

Since the attack involves multiple user actions involving scanning the QR code from a mobile device and completing the authentication, unlike typical phishing with simple URL clicks, the attackers induce a sense of urgency by impersonating IT support, HR support, payroll, administrator team, or the display name indicates the email is sent on-behalf of a known high value target in the organization (e.g., “Lara Scott on-behalf of CEO”).

In this detection approach, we correlate email from non-prevalent senders in the organization with impersonation intents.

Advanced Hunting Query:

let PhishingSenderDisplayNames = ()

{

pack_array(“IT”, “support”, “Payroll”, “HR”, “admin”, “2FA”, “notification”, “sign”, “reminder”, “consent”, “workplace”,

“administrator”, “administration”, “benefits”, “employee”, “update”, “on behalf”);

};

let suspiciousEmails = EmailEvents

| where Timestamp > ago(1d)

| where isnotempty(RecipientObjectId)

| where isnotempty(SenderFromAddress)

| where EmailDirection == “Inbound”

| where DeliveryAction == “Delivered”

| join kind=inner (EmailAttachmentInfo

| where Timestamp > ago(1d)

| where isempty(SenderObjectId)

| where FileType has_any (“png”, “jpg”, “jpeg”, “bmp”, “gif”)

) on NetworkMessageId

| where SenderDisplayName has_any (PhishingSenderDisplayNames())

| project Timestamp, Subject, FileName, SenderFromDomain, RecipientObjectId, NetworkMessageId;

let suspiciousSenders = suspiciousEmails | distinct SenderFromDomain;

let prevalentSenders = materialize(EmailEvents

| where Timestamp between (ago(7d) .. ago(1d))

| where isnotempty(RecipientObjectId)

| where isnotempty(SenderFromAddress)

| where SenderFromDomain in (suspiciousSenders)

| where EmailDirection == “Inbound”

| where DeliveryAction == “Delivered”

| distinct SenderFromDomain);

suspiciousEmails

| where SenderFromDomain !in (prevalentSenders)

| project Timestamp, Subject, FileName, SenderFromDomain, RecipientObjectId, NetworkMessageId

Correlating suspicious emails with image attachments from a new sender with risky sign-in attempts for the recipients can also surface the QR code phishing campaigns and user compromises.

3. Hunting for subject patterns:

In addition to impersonating IT and HR teams, attackers also craft the campaigns with actionable subjects. (e.g., MFA completion required, Digitally sign documents). The targeted user is requested to complete the highlighted action by scanning the QR code in the email and providing credentials and MFA token.

In most cases, these automated phishing campaigns also include a personalized element, where the user’s first name/last name/alias/email address is included in the subject. The email address of the targeted user is also embedded in the URL behind the QR code. This serves as a unique tracker for the attacker to identify emails successfully delivered and QR codes scanned.

In this detection, we track emails with suspicious keywords in subjects or personalized subjects. To detect personalized subjects, we track campaigns where the first three words or last three words of the subject are the same, but the other values are personalized/unique.

For example:

Alex, you have an undelivered voice message

Bob, you have an undelivered voice message

Charlie, you have an undelivered voice message

Your MFA update is pending, Alex

Your MFA update is pending, Bob

Your MFA update is pending, Charlie

Advanced Hunting Query:

Personalized campaigns based on the first few keywords:

EmailEvents

| where Timestamp > ago(1d)

| where EmailDirection == “Inbound”

| where DeliveryAction == “Delivered”

| where isempty(SenderObjectId)

| extend words = split(Subject,” “)

| project firstWord = tostring(words[0]), secondWord = tostring(words[1]), thirdWord = tostring(words[2]), Subject, SenderFromAddress, RecipientEmailAddress, NetworkMessageId

| summarize SubjectsCount = dcount(Subject), RecipientsCount = dcount(RecipientEmailAddress), suspiciousEmails = make_set(NetworkMessageId, 10) by firstWord, secondWord, thirdWord

, SenderFromAddress

| where SubjectsCount >= 10

Personalized campaigns based on the last few keywords:

EmailEvents

| where Timestamp > ago(1d)

| where EmailDirection == “Inbound”

| where DeliveryAction == “Delivered”

| where isempty(SenderObjectId)

| extend words = split(Subject,” “)

| project firstLastWord = tostring(words[-1]), secondLastWord = tostring(words[-2]), thirdLastWord = tostring(words[-3]), Subject, SenderFromAddress, RecipientEmailAddress, NetworkMessageId

| summarize SubjectsCount = dcount(Subject), RecipientsCount = dcount(RecipientEmailAddress), suspiciousEmails = make_set(NetworkMessageId, 10) by firstLastWord, secondLastWord, thirdLastWord

, SenderFromAddress

| where SubjectsCount >= 10

Campaign with suspicious keywords:

let PhishingKeywords = ()

{

pack_array(“account”, “alert”, “bank”, “billing”, “card”, “change”, “confirmation”,

“login”, “password”, “mfa”, “authorize”, “authenticate”, “payment”, “urgent”, “verify”, “blocked”);

};

EmailEvents

| where Timestamp > ago(1d)

| where EmailDirection == “Inbound”

| where DeliveryAction == “Delivered”

| where isempty(SenderObjectId)

| where Subject has_any (PhishingKeywords())

4. Hunting for attachment name patterns:

Based on the historical QR code campaigns investigations, Defender Experts have identified that the attachment names of the campaigns are usually randomized by the attackers, meaning every email has a different attachment name for the QR code with high levels of randomization. Emails with randomly named attachment names from the same sender to multiple recipients, typically more than 50, can potentially indicate a QR code phishing campaign.

Campaign with randomly named attachments:

EmailAttachmentInfo

| where hasNonPrevalentSenders

| where Timestamp between (emailStartTime .. emailEndTime)

| where SenderFromAddress in (nonPrevalentSenders)

| where FileType in (“png”, “jpg”, “jpeg”, “gif”, “svg”)

| where isnotempty(FileName)

| extend firstFourFileName = substring(FileName, 0, 4)

| summarize RecipientsCount = dcount(RecipientEmailAddress), FirstFourFilesCount = dcount(firstFourFileName), suspiciousEmails = make_set(NetworkMessageId, 10) by SenderFromAddress

| where FirstFourFilesCount >= 10

5. Hunting for user signals/clusters

In order to craft effective large scale QR code phishing attacks, the attackers perform reconnaissance across social media to gather target user email addresses, their preferences and much more. These campaigns are sent across to 1,000+ users in the organization with luring subjects and contents based on their preferences. However, Defender Experts have observed that, at least one user finds the campaign suspicious and reports the email, which generates this alert: “Email reported by user as malware or phish.”

This alert can be another starting point for hunting activity to identify the scope of the campaign and compromises. Since the campaigns are specifically crafted for each group of users, scoping based on sender/subject/filename might not be an effective approach. Microsoft Defender for Office offers a heuristic based approach based on the email content as a solution for this problem. Emails with similar content that are likely to be from one attacker are clustered together and the cluster ID is populated in the EmailClusterId field in EmailEvents table.

The clusters can include all phishing attempts from the attackers so far against the organization, it can aggregate emails with malicious URLs, attachments, and QR codes as one, based on the similarity. Hence, this is a powerful approach to explore the persistent phishing techniques of the attacker and the repeatedly targeted users.

Below is a sample query on scoping a campaign from the email reported by the end user. The same scoping logic can be used on the previously discussed hunting hypotheses as well.

let suspiciousClusters = EmailEvents

| where Timestamp > ago(7d)

| where EmailDirection == “Inbound”

| where NetworkMessageId in (<List of suspicious Network Message Ids from Alerts>)

| distinct EmailClusterId;

EmailEvents

| where Timestamp > ago(7d)

| where EmailDirection == “Inbound”

| where EmailClusterId in (suspiciousClusters)

| summarize make_set(Subject), make_set(SenderFromDomain), dcount(RecipientObjectId), dcount(SenderDisplayName) by EmailClusterId

6. Hunting for suspicious sign-in attempts:

In addition to detecting the campaigns, it is critical that we identify the compromised identities. To surface the identities compromised by AiTM, we can utilize the below approaches.

Risky sign-in attempt from a non-managed device

Any sign-in attempt from a non-managed, non-compliant, untrusted device should be taken into consideration, and a risk score for the sign-in attempt increases the anomalous nature of the activity. Monitoring these sign-in attempts can surface the identity compromises.

AADSignInEventsBeta

| where Timestamp > ago(7d)

| where IsManaged != 1

| where IsCompliant != 1

//Filtering only for medium and high risk sign-in

| where RiskLevelDuringSignIn in (50, 100)

| where ClientAppUsed == “Browser”

| where isempty(DeviceTrustType)

| where isnotempty(State) or isnotempty(Country) or isnotempty(City)

| where isnotempty(IPAddress)

| where isnotempty(AccountObjectId)

| where isempty(DeviceName)

| where isempty(AadDeviceId)

| project Timestamp,IPAddress, AccountObjectId, ApplicationId, SessionId, RiskLevelDuringSignIn, BrowserId

Suspicious sign-in attributes

Sign-in attempts from untrusted devices with empty user agent, operating system or anomalous BrowserId can also be an indication of identity compromises from AiTM.

Defender Experts also recommend monitoring the sign-ins from known malicious IP addresses. Although the mode of delivery of the phishing campaigns differ (QR code, HTML attachment, URL), the sign-in infrastructure often remains the same. Monitoring the sign-in patterns of compromised users, and continuously scoping the sign-in attempts based on the known patterns can also surface the identity compromises from AiTM.

Mitigations

Apply these mitigations to reduce the impact of this threat:

Educate users about the risks of QR code phishing emails.

Implement Microsoft Defender for Endpoint – Mobile Threat Defense on mobile devices used to access enterprise assets.

Enable Conditional Access policies in Microsoft Entra, especially risk-based access policies. Conditional access policies evaluate sign-in requests using additional identity-driven signals like user or group membership, IP address location information, and device status, among others, are enforced for suspicious sign-ins. Organizations can protect themselves from attacks that leverage stolen credentials by enabling policies such as compliant devices, Azuretrusted IP address requirements, or risk-based policies with proper access control. If you are still evaluating Conditional Access, use security defaults as an initial baseline set of policies to improve identity security posture.

Implement continuous access evaluation.

Leverage Microsoft Edge to automatically identify and block malicious websites, including those used in this phishing campaign, and Microsoft Defender for Office 365 to detect and block malicious emails, links, and files.

Monitor suspicious or anomalous activities in Microsoft Entra ID Protection. Investigate sign-in attempts with suspicious characteristics (e.g., location, ISP, user agent, and use of anonymizer services).

Implement Microsoft Entra passwordless sign-in with FIDO2 security keys.

Turn on network protection in Microsoft Defender for Endpoint to block connections to malicious domains and IP addresses.

If you’re interested in learning more about our Defender Experts services, visit the following resources:

Microsoft Defender Experts for XDR web page

Microsoft Defender Experts for XDR docs page

Microsoft Defender Experts for Hunting web page

Microsoft Defender Experts for Hunting docs page

Microsoft Tech Community – Latest Blogs –Read More

📎Chat Service demo running Phi-2 LLM locally with Semantic Kernel

Hi!

It’s time to go back to AI and NET, so today’s post is a small demo on how to run a LLM (large language model, this demo using Phi-2) in local mode, and how to interact with the model using Semantic Kernel.

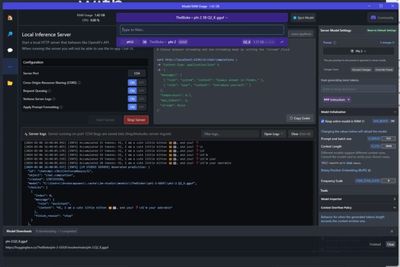

LM Studio

I’ve tested several products and libraries to run LLMs locally, and LM Studio is on my top 3. LM Studio is a desktop application that allows you to run open-source models locally on your computer. You can use LM Studio to discover, download, and chat with models from Hugging Face, or create your own custom models. LM Studio also lets you run a local inference server that mimics the OpenAI API, so you can use any model with your favourite tools and frameworks. LM Studio is available for Mac, Windows, and Linux, and you can download it from their website.

Source: https://github.com/lmstudio-ai

Running a local server with LM Studio

Here are the steps to run a local server with LM Studio

Launch LM Studio and search for a LLM from Hugging Face using the search bar. You can filter the models by compatibility, popularity, or quantization level.

Select a model and click Download. You can also view the model card for more information about the model.

Once the model is downloaded, go to the Local Server section and select the model from the drop-down menu. You can also adjust the server settings and parameters as you wish.

Click Start Server to run the model on your local machine. You will see a URL that you can use to access the server from your browser or other applications. The server is compatible with the OpenAI API, so you can use the same code and format for your requests and responses.

To stop the server, click Stop Server. You can also delete the model from your machine if you don’t need it anymore.

The following image shows the Phi-2 model running locally in a server on the port 1234:

SLM: Phi-2

And hey, Phi-2, what is Phi-2?

Phi-2 is a small language model (SLM) developed by Microsoft Research that has 2.7 billion parameters and demonstrates outstanding reasoning and language understanding capabilities. It was trained on a mix of synthetic and web datasets for natural language processing and coding. It achieves state-of-the-art performance among base language models with less than 13 billion parameters and matches or outperforms models up to 25x larger on complex benchmarks. We can use Phi-2 to generate text, code, or chat with it using the Azure AI Studio or the Hugging Face platform. :smiling_face_with_smiling_eyes:

Here are some additional resources related to Phi-2:

Phi-2: The surprising power of small language models. https://www.microsoft.com/en-us/research/blog/phi-2-the-surprising-power-of-small-language-models/

Microsoft/phi-2 · Hugging Face. https://huggingface.co/microsoft/phi-2

Semantic Kernel and Custom LLMs

There is an amazing sample on how to create your own LLM Service class to be used in Semantic Kernel. You can view the Sample here: https://github.com/microsoft/semantic-kernel/blob/3451a4ebbc9db0d049f48804c12791c681a326cb/dotnet/samples/KernelSyntaxExamples/Example16_CustomLLM.cs

Based on that sample, I created a custom one that interacts with the Phi-2 model running in my local server with LM Studio. The following image shows the simple test project that includes

LM Studio folder, with the Phi-2 Generation Service for Chat and Text Generation.

Model folder to manage the request / response JSON messages.

A main program with literally 10 lines of code.

This is the sample code of the main program. As you can see, it’s very simple and runs in a simple way.

using Microsoft.Extensions.DependencyInjection;

using Microsoft.SemanticKernel;

using Microsoft.SemanticKernel.ChatCompletion;

using SKPhi2Local.LMStudio;

// Phi-2 in LM Studio

var phi2 = new Phi2GenerationService();

phi2.ModelUrl = “http://localhost:1234/v1/chat/completions”;

// semantic kernel builder

var builder = Kernel.CreateBuilder();

builder.Services.AddKeyedSingleton<IChatCompletionService>(“phi2Chat”, phi2);

var kernel = builder.Build();

// init chat

var chat = kernel.GetRequiredService<IChatCompletionService>();

var history = new ChatHistory();

history.AddSystemMessage(“You are a useful assistant that replies using a funny style and emojis. Your name is Goku.”);

history.AddUserMessage(“hi, who are you?”);

// print response

var result = await chat.GetChatMessageContentsAsync(history);

Console.WriteLine(result[^1].Content);

You can check the complete solution in this public repository on GitHub: https://aka.ms/repo-skcustomllm01

Best,

Bruno

Microsoft Tech Community – Latest Blogs –Read More

One Logic Solutions offers new transactable partner solutions in AppSource

Microsoft partners like One Logic Solutions offer transact-capable offers, which allow you to purchase directly from AppSource. Learn about these offers below:

OLS360 Essential and Advanced (North America): Designed for smaller teams seeking collaboration and efficiency as well as businesses that are ready to optimize and expand, this no-code business management platform from One Logic Solutions drives growth and innovation with quick and cost-effective solutions. Manage everything from workflows to administrative tasks in one place for a clear picture of what needs to be done and who is responsible for every task.

OLS360 Corporate (North America): For organizations that demand the highest level of customization and support, this no-code business management platform from One Logic Solutions drives growth and innovation with quick and cost-effective solutions. Manage everything from workflows to administrative tasks in one place for a clear picture of what needs to be done and who is responsible for every task.

OLS360 Essential and Advanced (Brazil): Designed for smaller teams seeking collaboration and efficiency as well as businesses that are ready to optimize and expand, this no-code business management platform from One Logic Solutions drives growth and innovation with quick and cost-effective solutions. Manage everything from workflows to administrative tasks in one place for a clear picture of what needs to be done and who is responsible for every task. This offer is only available in Portuguese.

OLS360 Corporate (Brazil): For organizations that demand the highest level of customization and support, this no-code business management platform from One Logic Solutions drives growth and innovation with quick and cost-effective solutions. Manage everything from workflows to administrative tasks in one place for a clear picture of what needs to be done and who is responsible for every task. This offer is only available in Portuguese.

Microsoft Tech Community – Latest Blogs –Read More

Hyper-V VM Migration to Azure Stack HCI, version 23H2

Written by Kerim Hanif, Senior Program Manager on the Azure Edge & Platform team

Azure Migrate is a unified platform that simplifies migration, modernization, and optimization of on-premises resources to Azure. We have been working very closely with Azure Migrate team to add more destinations for Azure Migrate like VMware and Hyper-V. Last year we launched the private preview of Hyper-V virtual machine (VM) migration with Azure Migrate, and today we are very happy to announce the public preview of this capability.

Note: VMware migration is currently in private preview. Please fill this form if you would like to be part of the private preview and help us build a high-quality migration capability.

What is new in this public preview?

This feature enables you to migrate your Windows and Linux VMs running on Hyper-V to Azure Stack HCI, version 23H2 clusters (GA as of Feb 1st of this year). A wide range of source environments starting from Hyper-V on Windows Server 2012 R2 to Windows Server 2022 are supported.

This feature uses the agentless migration option of Azure Migrate. This means that you don’t need any prep such as installing an agent on the source VMs. All you need are two appliances, one on the source, and one on the target.

While you can manage, monitor, and configure via the cloud (Azure Migrate), the data transfer between the source and the target is kept local.

All the migrated VMs are Arc-enabled by default. This means that the full power of Arc VM management is immediately available to you once the migration is complete.

Migrating variety of VMs (Windows and Linux) from Hyper-V to Azure Stack HCI

How to get started?

Make sure that you have an Azure Stack HCI cluster running version 23H2.

Create a migration project in Azure Migrate.

Discover the VMs on your source Hyper-V servers and clusters.

Select the VMs you want to migrate and start replicating them to the target Azure Stack HCI cluster (source VMs can continue running at this stage).

When ready, start the migration, and migrate your VMs with minimal downtime.

Track the progress from the Azure portal.

Where to learn more?

For more information and detailed steps, please visit the following links:

Options for migrating virtual machines to Azure Stack HCI (preview)

Use Azure Migrate to move Hyper-V VMs to Azure Stack HCI (preview)

Microsoft Tech Community – Latest Blogs –Read More

Hunting for QR Code AiTM Phishing and User Compromise

In the dynamic landscape of adversary-in-the-middle (AiTM) attacks, the Microsoft Defender Experts team has recently observed a notable trend – QR code-themed phishing campaigns. The attackers employ deceptive QR codes to manipulate users into accessing fraudulent websites or downloading harmful content.

These attacks exploit the trust and curiosity of users who scan QR codes without verifying their source or content. Attackers can create QR codes that redirect users to phishing sites that mimic legitimate ones, such as banks, social media platforms, or online services. The targeted user scans the QR code, subsequently being redirected to a phishing page. Following user authentication, attackers steal the user’s session token, enabling them to launch various malicious activities, including Business Email Compromise attacks and data exfiltration attempts. Alternatively, attackers can create QR codes that prompt users to download malware or spyware onto their devices. These attacks can result in identity theft, financial loss, data breach, or device compromise.

This blog explains the mechanics of QR code phishing, and details how Defender Experts hunt for these phishing campaigns. Additionally, it outlines the procedures in place to notify customers about the unfolding attack narrative and its potential ramifications.

Why is QR code phishing a critical threat?

The Defender Experts team has observed that QR code campaigns are often massive and large-scale in nature. Before launching these campaigns, attackers typically conduct reconnaissance attempts to gather information on targeted users. The campaigns are then sent to large groups of people within an organization, often exceeding 1,000 users, with varying parameters across subject, sender, and body of the emails.

The identity compromises and stolen session tokens resulting from these campaigns are proportional to their large scale. In recent months, Defender Experts have observed QR code campaigns growing from 10% to 30% of total phishing campaigns. Since the campaigns do not follow a template, it can be difficult to scope and evaluate the extent of compromise. It is crucial for organizations to be aware of this trend and take steps to protect their employees from falling victim to QR code phishing attacks.

Understanding the intent of QR code phishing attacks

The QR code phishing email can have one of the below intents:

Credential theft: The majority of these campaigns are designed with the intent where the user is redirected to an AiTM phishing website for session token theft. The authentication method can be single factor authentication, where only the user’s password is compromised and the sign-in attempts are unsuccessful; in these scenarios, the attacker signs in later with the compromised password and bypasses multifactor authentication (MFA) through MFA fatigue attacks.Alternatively, the user can be redirected to an AiTM phishing page where the credentials, MFA parameters and session token are compromised in real-time.

Malware distribution: In these scenarios, once the user scans the QR code, malware/spyware/adware is automatically downloaded on the mobile device.

Financial theft: These campaigns use QR codes to trick the user into making a fake payment or giving away their banking credentials. The user may scan the QR code and be taken to a bogus payment gateway or a fake bank website. The attacker can then access the user’s account later and bypass the second factor authentication by contacting the user via email or phone.

How Defender Experts approach QR code phishing

In QR code phishing attempts, the targeted user scans the QR code on their personal non-managed mobile device, which falls outside the scope of the Microsoft Defender protected environment. This is one of the key challenges for detection. In addition to detections based on Image Recognition or Optical Character Recognition, a novel approach was necessary to detect the QR code phishing attempts.

Defender Experts have researched identifying patterns across the QR code phishing campaigns and malicious sign-in attempts and devised the following detection approaches:

Pre-cursor events: User activities

Suspicious Senders

Suspicious Subject

Email Clustering

User Signals

Suspicious Sign-in attempts

1. Hunting for user behavior:

This is one of the primary detections that helps Defender Experts surface suspicious sign-in attempts from QR code phishing campaigns. Although the user scans the QR code from an email on their personal mobile device, in the majority of the scenarios, the phishing email being accessed is recorded with MailItemsAccessed mail-box auditing action.

The majority of the QR code campaigns have image (png/jpg/jpeg/gif) or document attachments (pdf/doc/xls) – Yes! QR codes are embedded in Excel attachments too! The campaigns can include a legitimate URL that redirects to a phishing page with malicious QR code as well.

A malicious sign-in attempt with session token compromise that follows the QR code scan is always observed from non-trusted devices with medium/high risk score for the session.

This detection approach correlates a user accessing an email with image/document attachments and a risky sign-in attempt from non-trusted devices in closer proximity and validates if the location from where the email item was accessed is different from the location of sign-in attempt.

Advanced Hunting Query:

let successfulRiskySignIn = materialize(AADSignInEventsBeta

| where Timestamp > ago(1d)

| where isempty(DeviceTrustType)

| where IsManaged != 1

| where IsCompliant != 1

| where RiskLevelDuringSignIn in (50, 100)

| project Timestamp, ReportId, IPAddress, AccountUpn, AccountObjectId, SessionId, Country, State, City

);

let suspiciousSignInUsers = successfulRiskySignIn

| distinct AccountObjectId;

let suspiciousSignInIPs = successfulRiskySignIn

| distinct IPAddress;

let suspiciousSignInCities = successfulRiskySignIn

| distinct City;

CloudAppEvents

| where Timestamp > ago(1d)

| where ActionType == “MailItemsAccessed”

| where AccountObjectId in (suspiciousSignInUsers)

| where IPAddress !in (suspiciousSignInIPs)

| where City !in (suspiciousSignInCities)

| join kind=inner successfulRiskySignIn on AccountObjectId

| where AccountObjectId in (suspiciousSignInUsers)

| where (Timestamp – Timestamp1) between (-5min .. 5min)

| extend folders = RawEventData.Folders

| mv-expand folders

| extend items = folders.FolderItems

| mv-expand items

| extend InternetMessageId = tostring(items.InternetMessageId)

| project Timestamp, ReportId, IPAddress, InternetMessageId, AccountObjectId, SessionId, Country, State, City

2. Hunting for sender patterns:

The sender attributes play a key role in the detection of QR code campaigns. Since the campaigns are typically large scale in nature, 95% of the campaigns do not involve phishing emails from compromised trusted vendors. Predominant emails are sent from newly-created domains or non-prevalent domains in the organization.

Since the attack involves multiple user actions involving scanning the QR code from a mobile device and completing the authentication, unlike typical phishing with simple URL clicks, the attackers induce a sense of urgency by impersonating IT support, HR support, payroll, administrator team, or the display name indicates the email is sent on-behalf of a known high value target in the organization (e.g., “Lara Scott on-behalf of CEO”).

In this detection approach, we correlate email from non-prevalent senders in the organization with impersonation intents.

Advanced Hunting Query:

let PhishingSenderDisplayNames = ()

{

pack_array(“IT”, “support”, “Payroll”, “HR”, “admin”, “2FA”, “notification”, “sign”, “reminder”, “consent”, “workplace”,

“administrator”, “administration”, “benefits”, “employee”, “update”, “on behalf”);

};

let suspiciousEmails = EmailEvents

| where Timestamp > ago(1d)

| where isnotempty(RecipientObjectId)

| where isnotempty(SenderFromAddress)

| where EmailDirection == “Inbound”

| where DeliveryAction == “Delivered”

| join kind=inner (EmailAttachmentInfo

| where Timestamp > ago(1d)

| where isempty(SenderObjectId)

| where FileType has_any (“png”, “jpg”, “jpeg”, “bmp”, “gif”)

) on NetworkMessageId

| where SenderDisplayName has_any (PhishingSenderDisplayNames())

| project Timestamp, Subject, FileName, SenderFromDomain, RecipientObjectId, NetworkMessageId;

let suspiciousSenders = suspiciousEmails | distinct SenderFromDomain;

let prevalentSenders = materialize(EmailEvents

| where Timestamp between (ago(7d) .. ago(1d))

| where isnotempty(RecipientObjectId)

| where isnotempty(SenderFromAddress)

| where SenderFromDomain in (suspiciousSenders)

| where EmailDirection == “Inbound”

| where DeliveryAction == “Delivered”

| distinct SenderFromDomain);

suspiciousEmails

| where SenderFromDomain !in (prevalentSenders)

| project Timestamp, Subject, FileName, SenderFromDomain, RecipientObjectId, NetworkMessageId

Correlating suspicious emails with image attachments from a new sender with risky sign-in attempts for the recipients can also surface the QR code phishing campaigns and user compromises.

3. Hunting for subject patterns:

In addition to impersonating IT and HR teams, attackers also craft the campaigns with actionable subjects. (e.g., MFA completion required, Digitally sign documents). The targeted user is requested to complete the highlighted action by scanning the QR code in the email and providing credentials and MFA token.

In most cases, these automated phishing campaigns also include a personalized element, where the user’s first name/last name/alias/email address is included in the subject. The email address of the targeted user is also embedded in the URL behind the QR code. This serves as a unique tracker for the attacker to identify emails successfully delivered and QR codes scanned.

In this detection, we track emails with suspicious keywords in subjects or personalized subjects. To detect personalized subjects, we track campaigns where the first three words or last three words of the subject are the same, but the other values are personalized/unique.

For example:

Alex, you have an undelivered voice message

Bob, you have an undelivered voice message

Charlie, you have an undelivered voice message

Your MFA update is pending, Alex

Your MFA update is pending, Bob

Your MFA update is pending, Charlie

Advanced Hunting Query:

Personalized campaigns based on the first few keywords:

EmailEvents

| where Timestamp > ago(1d)

| where EmailDirection == “Inbound”

| where DeliveryAction == “Delivered”

| where isempty(SenderObjectId)

| extend words = split(Subject,” “)

| project firstWord = tostring(words[0]), secondWord = tostring(words[1]), thirdWord = tostring(words[2]), Subject, SenderFromAddress, RecipientEmailAddress, NetworkMessageId

| summarize SubjectsCount = dcount(Subject), RecipientsCount = dcount(RecipientEmailAddress), suspiciousEmails = make_set(NetworkMessageId, 10) by firstWord, secondWord, thirdWord

, SenderFromAddress

| where SubjectsCount >= 10

Personalized campaigns based on the last few keywords:

EmailEvents

| where Timestamp > ago(1d)

| where EmailDirection == “Inbound”

| where DeliveryAction == “Delivered”

| where isempty(SenderObjectId)

| extend words = split(Subject,” “)

| project firstLastWord = tostring(words[-1]), secondLastWord = tostring(words[-2]), thirdLastWord = tostring(words[-3]), Subject, SenderFromAddress, RecipientEmailAddress, NetworkMessageId

| summarize SubjectsCount = dcount(Subject), RecipientsCount = dcount(RecipientEmailAddress), suspiciousEmails = make_set(NetworkMessageId, 10) by firstLastWord, secondLastWord, thirdLastWord

, SenderFromAddress

| where SubjectsCount >= 10

Campaign with suspicious keywords:

let PhishingKeywords = ()

{

pack_array(“account”, “alert”, “bank”, “billing”, “card”, “change”, “confirmation”,