Category: Microsoft


Visually group shapes in your diagrams with containers in Visio for the web

A container in Visio is a special shape that can hold other shapes inside of it. It’s often used to group related shapes together and can be a powerful tool to help organize and manage complex diagrams. The Visio desktop app has long supported containers, and now Visio for the web does, too! Users with a Visio Plan 1 or Visio Plan 2 license can use containers in Visio for the web to create diagrams that are better organized and easier to understand and navigate.

Add a container from the new Container drop-down

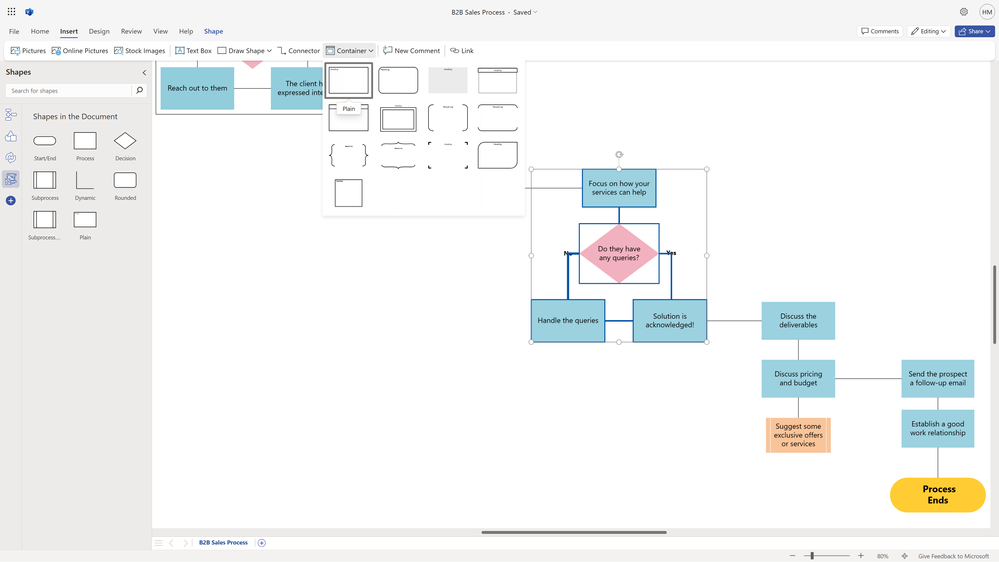

To add a container to your diagram, first select the shapes you want it to contain. Then, from the Insert tab in the ribbon, select the new Container drop-down.

An image of a flowchart in Visio for the web demonstrates how to access new Container options from the Insert tab.

A cropped image of a drawing in Visio for the web highlights the new Container styles.

From here, you will see more than a dozen container styles, including Rounded, Translucent, Horizontal Brackets, Vertical Brackets, Corner Frame, Square, and more. Hover over the container shapes, then select the preferred option to add it to the canvas.

Add a container from the right-click menu

You can also add a container directly from the right-click menu. Simply right-click a shape—or a group of shapes—that you want to add to the new container, then select Insert Container > Add to New Container from the drop-down menu.

A cropped image of a flowchart in Visio for the web highlights the new Container options in the right-click menu.

Note that when you add shapes to a container from the right-click menu, only the selected shape or shapes are contained. To include adjacent shapes, drag the container so that it encompasses all relevant shapes. Then, right-click the shapes and select Insert Container > Add to Underlying Container to add them to the new container.

A video of a flowchart in Visio for the web demonstrates how to add shapes to an underlying container.

You can also add multiple shapes to a container by dragging and dropping them into the container. As shown in the GIF above, when a selected shape is contained in a container, you will see a green highlight around the associated container. Drag the required shapes to the container shape and then, when you see the green highlight, drop (or release) the shapes to add them to the container.

Add a container from the new Container stencil

For simplicity and flexibility, we’ve added a third option for adding a container to your diagram. But first, you’ll need to add the new Container stencil to the Shapes pane. To do this, type “Container” in the Search box at the top of the Shapes pane. Scroll through the list of results and select the magnifying glass to see a preview of the Container stencil. Then, select the Add button to pin the stencil to the Shapes pane.

A video of a flowchart in Visio for the web demonstrates how to add the new Container stencil to the Shapes pane.

Once the stencil is added to the Shapes pane, you can select and drag the preferred container from the stencil onto the canvas. Then follow the steps above to enlarge the container and add more shapes to it as the underlying container.

Add a header to your container

Once the container has been added, you can replace the default “Heading” text with your own header name, format the text, and add more containers by following the steps outlined above.

An image of a flowchart in Visio for the web demonstrates how to add header text to a container by selecting the text in the heading field.

Remove a shape from a container or a container from a drawing

To remove a shape from a container, simply select the shape and drag it out of the container. If successfully removed, you’ll notice that the green highlight will no longer appear around the container when you select the removed shape.

You can also delete a container from your drawing without deleting the shapes it contains. Right-click the container that you want to delete, then select Delete from the right-click menu. A prompt then asks whether you want to delete not only the container but also all of its contents. Clearing the checkbox in this prompt keeps the contained shapes in your drawing while removing the container.

An image of a message prompt in Visio for the web asks a user, “Are you sure you want to delete this container?”

New Container tab in the ribbon

Select a container in your diagram to access the new Container tab in the ribbon. Here, you’ll see options to: fit the container to its contents, quickly change the current style of the container, rotate the heading text by 90 degrees counterclockwise, and lock the contents of the container to prevent certain actions, such as deleting contained shapes.

A cropped image of a flowchart in Visio for the web highlights the new Container tab in the ribbon.

Example user scenario: Solution architecture diagram

Containers can be used to group related shapes in an architecture diagram. The example below uses Translucent containers to group the three DevTest environments that exist under an Azure DevTest subscription, and clearly separates them from the Production environment.

An image of an Azure architecture diagram in Visio for the web demonstrates how to use containers to group components in DevTest and Production subscriptions.

Example user scenario: Large team org chart

Containers can also be a great way to visually group specific team members within an org chart. The example below uses the Classic 1 container style to indicate those who are part of a virtual team.

An image of a large team org chart in Visio for the web demonstrates how to use containers to identify virtual teams.

Learn more about how to clarify the structure of diagrams by using containers in Visio for the web.

We are listening!

We look forward to hearing your feedback and learning more about how you use containers in Visio for the web. Please share some of your use cases in the comments below or let us know how we can help to improve the experience. You can also send feedback via the Visio Feedback Portal or directly in the Visio web app using “Give Feedback to Microsoft” in the bottom right corner.

Did you know? The Microsoft 365 Roadmap is where you can get the latest updates on productivity apps and intelligent cloud services. Check out what features are in development and coming soon for Visio on the Microsoft 365 Roadmap.

Microsoft Tech Community – Latest Blogs –Read More

Avoid the complexity when utilizing Entra ID multi-tenants and School or Work/Microsoft Accounts

Ideally, you would manage the production, test, and development environments within a single Entra ID tenant. However, for governance and compliance reasons, it is common to separate the Entra ID tenant for the production environment from the tenant used by the other environments. In such cases, various complexities arise, such as inviting School or Work accounts as guests, using Microsoft accounts, and individuals using multiple accounts.

In this post, we outline several patterns for addressing issues that commonly arise in such scenarios and their corresponding solutions.

Troubleshooting Entra ID authentication and authorization issues can be time-consuming without prior knowledge, so we hope this post proves helpful.

I recently ran into challenges when I attempted to control access to Cosmos DB with RBAC, as described in the Use system-assigned managed identities to access Azure Cosmos DB data article. The topic itself is simple, but it becomes more intricate depending on the subject, that is, the identity that accesses the data.

If none of the items listed below apply to you, you’re fine. However, many of you will probably encounter issues at some point. The first item alone might not be an issue, but when the second, third, and fourth items come into play, the complexity of Entra ID shows up.

Use your local machine for development

Use multiple Entra ID tenants and guest invitations

Use a Microsoft account instead of a School or Work account

Use both a Microsoft account and a School or Work account

I tried several scenarios, and in this post I share which ones work and which do not.

#1: Single Entra ID tenant, with the subscription and the School or Work account in the same Entra ID tenant – this works well

Let’s start with the scenario from the official article. The concept is illustrated in the diagram below:

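Data-plane access to Cosmos DB is controlled by Cosmos DB’s own SQL role definitions, not by the regular Azure RBAC roles such as Contributor. Cosmos DB provides built-in data roles (Cosmos DB Built-in Data Reader and Cosmos DB Built-in Data Contributor), but in this example we create a custom role that can read, write, and delete data. The JSON file is as follows: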

```json
{
  "RoleName": "CosmosDBDataAccessRole",
  "Type": "CustomRole",
  "AssignableScopes": ["/"],
  "Permissions": [{
    "DataActions": [
      "Microsoft.DocumentDB/databaseAccounts/readMetadata",
      "Microsoft.DocumentDB/databaseAccounts/sqlDatabases/containers/items/*",
      "Microsoft.DocumentDB/databaseAccounts/sqlDatabases/containers/*"
    ]
  }]
}
```
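The DataActions list uses wildcards. To reason about which operations such a role covers, here is a simplified Python model. It is not Cosmos DB's actual evaluation logic: it assumes that an entry ending in "/*" covers every action under that prefix, that other entries must match exactly, and that matching is case-insensitive. The function name is_allowed is hypothetical.

```python
# Simplified model of wildcard DataActions in a Cosmos DB role definition.
# Assumptions (not Cosmos DB's real implementation): a trailing "/*" covers
# every action under that prefix, other entries must match exactly, and
# comparisons are case-insensitive.

ROLE_DATA_ACTIONS = [
    "Microsoft.DocumentDB/databaseAccounts/readMetadata",
    "Microsoft.DocumentDB/databaseAccounts/sqlDatabases/containers/items/*",
    "Microsoft.DocumentDB/databaseAccounts/sqlDatabases/containers/*",
]


def is_allowed(action: str, data_actions: list[str]) -> bool:
    """Return True if `action` is covered by any entry in `data_actions`."""
    wanted = action.lower()
    for entry in data_actions:
        pattern = entry.lower()
        if pattern.endswith("/*"):
            # Wildcard entry: keep the trailing "/" so that ".../containers/*"
            # does not accidentally match ".../containersX/...".
            if wanted.startswith(pattern[:-1]):
                return True
        elif wanted == pattern:
            return True
    return False


if __name__ == "__main__":
    for action in [
        "Microsoft.DocumentDB/databaseAccounts/readMetadata",
        "Microsoft.DocumentDB/databaseAccounts/sqlDatabases/containers/items/create",
        "Microsoft.DocumentDB/databaseAccounts/sqlDatabases/containers/executeQuery",
    ]:
        print(action, is_allowed(action, ROLE_DATA_ACTIONS))
```

Under this model, the containers/* entry already covers everything that items/* covers; listing both, as the role above does, is harmless.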

Then, save the JSON above as role-definition-rw.json and create the custom role in your Cosmos DB account with the following commands. The "name" field of the output contains the ID of the custom role in the format "XXXXXXX-XXX-XXX-XXXX-XXXXXXXXX" (actually a randomly generated UUID), which you will use later.

```powershell
$rgName = "your-resource-group-name"
$cosmosdbName = "your-cosmosdb-name"
az cosmosdb sql role definition create -a $cosmosdbName -g $rgName -b role-definition-rw.json
```

```json
{
  "assignableScopes": [
    "/subscriptions/your-subscription-id/resourceGroups/your-resource-group-name/providers/Microsoft.DocumentDB/databaseAccounts/your-cosmosdb-name"
  ],
  "id": "/subscriptions/your-subscription-id/resourceGroups/your-resource-group-name/providers/Microsoft.DocumentDB/databaseAccounts/your-cosmosdb-name/sqlRoleDefinitions/XXXXXXX-XXX-XXX-XXXX-XXXXXXXXX",
  "name": "XXXXXXX-XXX-XXX-XXXX-XXXXXXXXX",
  "permissions": [
    {
      "dataActions": [
        "Microsoft.DocumentDB/databaseAccounts/readMetadata",
        "Microsoft.DocumentDB/databaseAccounts/sqlDatabases/containers/items/*",
        "Microsoft.DocumentDB/databaseAccounts/sqlDatabases/containers/*"
      ],
      "notDataActions": []
    }
  ],
  "resourceGroup": "your-resource-group-name",
  "roleName": "CosmosDBDataAccessRole",
  "type": "Microsoft.DocumentDB/databaseAccounts/sqlRoleDefinitions",
  "typePropertiesType": "CustomRole"
}
```

Next, obtain the object ID of your School or Work account with the az ad user show command. The "id" field in the output is the value you need:

```powershell
az ad user show --id "myuser@normalian.xxx"
```

```json
{
  "@odata.context": "https://graph.microsoft.com/v1.0/$metadata#users/$entity",
  "businessPhones": [],
  "displayName": "aduser-normalian",
  "givenName": "aduser",
  "id": "YYYYYYY-YYY-YYY-YYYY-YYYYYYYYY",
  "jobTitle": "Principal Administrator",
  "mail": "myuser@normalian.xxx",
  "mobilePhone": null,
  "officeLocation": null,
  "preferredLanguage": null,
  "surname": "normalian",
  "userPrincipalName": "myuser@normalian.xxx"
}
```
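The two values you need for the role assignment come from the JSON printed above: the user's "id" is passed as -p, and the role definition's "name" is passed as -d. If you script these steps, a minimal sketch could look like the following. The helper names are hypothetical; they only parse the CLI's JSON output and build an argument list, and they do not call Azure themselves.

```python
import json

# Hypothetical helpers: they parse JSON printed by the Azure CLI and build the
# argument list for the role assignment; nothing here contacts Azure.


def principal_id(user_show_json: str) -> str:
    """Object ID of the user ("id" in `az ad user show` output), used for -p."""
    return json.loads(user_show_json)["id"]


def role_definition_id(role_definition_json: str) -> str:
    """Role definition GUID ("name" in `az cosmosdb sql role definition create`
    output), used for -d."""
    return json.loads(role_definition_json)["name"]


def role_assignment_command(account: str, resource_group: str,
                            principal: str, role_definition: str,
                            scope: str = "/") -> list[str]:
    """Argument list for `az cosmosdb sql role assignment create`, suitable
    for passing to subprocess.run (not executed here)."""
    return [
        "az", "cosmosdb", "sql", "role", "assignment", "create",
        "-a", account, "-g", resource_group,
        "-p", principal, "-d", role_definition, "-s", scope,
    ]


if __name__ == "__main__":
    user_json = '{"id": "YYYYYYY-YYY-YYY-YYYY-YYYYYYYYY", "userPrincipalName": "myuser@normalian.xxx"}'
    role_json = '{"name": "XXXXXXX-XXX-XXX-XXXX-XXXXXXXXX", "roleName": "CosmosDBDataAccessRole"}'
    cmd = role_assignment_command("your-cosmosdb-name", "your-resource-group-name",
                                  principal_id(user_json), role_definition_id(role_json))
    print(" ".join(cmd))
```

Alternatively, the Azure CLI's global --query and --output options can return just the value, for example az ad user show --id "myuser@normalian.xxx" --query id --output tsv.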

Finally, assign the custom role to the user.

```powershell
az cosmosdb sql role assignment create -a $cosmosdbName -g $rgName -p "YYYYYYY-YYY-YYY-YYYY-YYYYYYYYY" -d "XXXXXXX-XXX-XXX-XXXX-XXXXXXXXX" -s "/"
```

```json
{
  "id": "/subscriptions/your-subscription-id/resourceGroups/your-resource-group-name/providers/Microsoft.DocumentDB/databaseAccounts/your-cosmosdb-name/sqlRoleAssignments/787a36f9-a7f3-40c8-a860-99d4c6ae5fd9",
  "name": "787a36f9-a7f3-40c8-a860-99d4c6ae5fd9",
  "principalId": "b0bde25a-d588-410c-a16d-30fc001661c4",
  "resourceGroup": "your-resource-group-name",
  "roleDefinitionId": "/subscriptions/your-subscription-id/resourceGroups/your-resource-group-name/providers/Microsoft.DocumentDB/databaseAccounts/your-cosmosdb-name/sqlRoleDefinitions/26eeca83-1fd8-4f40-8a5e-b66dac7d3e08",
  "scope": "/subscriptions/your-subscription-id/resourceGroups/your-resource-group-name/providers/Microsoft.DocumentDB/databaseAccounts/your-cosmosdb-name",
  "type": "Microsoft.DocumentDB/databaseAccounts/sqlRoleAssignments"
}
```

In your application code, you can then authenticate with this identity. Refer to the Programmatically access the Azure Cosmos DB keys article if you need more details. This method lets you access Cosmos DB without secret strings such as account keys.

```csharp
using Azure.Identity;
using Microsoft.Azure.Cosmos;

var builder = WebApplication.CreateBuilder(args);

// Add services to the container.
builder.Services.AddControllersWithViews();

builder.Services.AddSingleton<CosmosClient>(serviceProvider =>
{
    return new CosmosClient(
        accountEndpoint: builder.Configuration["AZURE_COSMOS_DB_NOSQL_ENDPOINT"]!,
        tokenCredential: new DefaultAzureCredential()
    );
});

var app = builder.Build();
```

This scenario is straightforward.

#2: Multiple Entra ID tenants, with the subscription in a different Entra ID tenant from your School or Work account – this works well

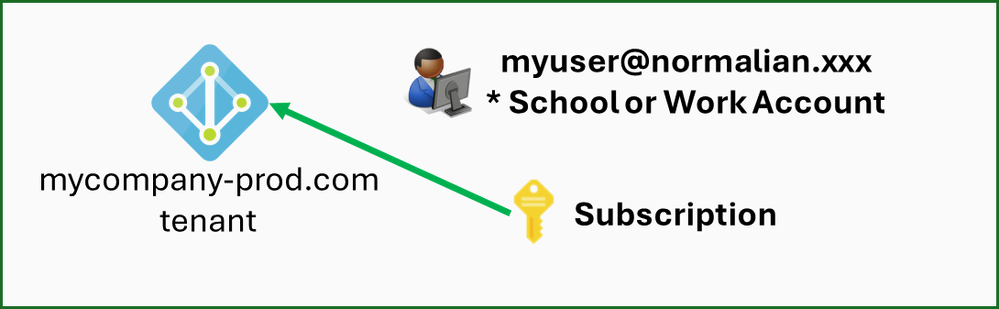

Let’s try another scenario, which I believe is very common in large companies. Here, your School or Work account belongs to the production Entra ID tenant and is invited as a guest user to the development Entra ID tenant. This scenario also assumes that your subscription is in the development Entra ID tenant. The diagram for this scenario is as follows:

Here are two key points in this scenario:

How to assign custom roles to the guest user

How to use the development Entra ID tenant with DefaultAzureCredential

First, I tried to get the object ID of the guest user as follows, but this fails:

```powershell
az ad user show --id "myuser01@normalian.xxx"
```

```text
az : ERROR: Resource 'myuser01@normalian.xxx' does not exist or one of its queried reference-property objects are not present.
At line:1 char:1
+ az ad user show --id "myuser01@normalian.xxx"
+ ~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~
+ CategoryInfo : NotSpecified: (ERROR: Resource…re not present.:String) [], RemoteException
+ FullyQualifiedErrorId : NativeCommandError
```

You can see why by checking the users in your development Entra ID tenant: the guest user’s user principal name contains "#EXT#@", as follows:

You can get the object ID there, or with the following command:

```powershell
az ad user show --id "daichi_mycompany.com#EXT#@normalianxxxxx.onmicrosoft.com"
```

```json
{
  "@odata.context": "https://graph.microsoft.com/v1.0/$metadata#users/$entity",
  "businessPhones": [],
  "displayName": "daichi",
  "givenName": null,
  "id": "4bf33ec0-dc63-4468-cc7d-edd4c9820fee",
  "jobTitle": "CLOUD SOLUTION ARCHITECT",
  "mail": "daichi@mycompany.com",
  "mobilePhone": null,
  "officeLocation": null,
  "preferredLanguage": null,
  "surname": null,
  "userPrincipalName": "daichi_mycompany.com#EXT#@normalianxxxxx.onmicrosoft.com"
}
```
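In this example, the guest's user principal name is the address daichi@mycompany.com with "@" replaced by "_", followed by "#EXT#@" and the inviting tenant's domain. If you need to guess the guest UPN before looking it up, a minimal sketch of that pattern follows. It assumes the pattern observed here holds; Entra ID may differ in edge cases, so always confirm the result in the tenant. The function name guest_upn is hypothetical.

```python
def guest_upn(external_address: str, tenant_domain: str) -> str:
    """Guess the UPN Entra ID gives an invited guest.

    Assumption: the pattern seen in this post, i.e. the guest's address with
    "@" replaced by "_", then "#EXT#@" and the inviting tenant's domain.
    Treat the result as a guess and confirm it with `az ad user show`.
    """
    local, sep, domain = external_address.rpartition("@")
    if not sep or not local or not domain:
        raise ValueError(f"not an email-style address: {external_address!r}")
    return f"{local}_{domain}#EXT#@{tenant_domain}"


if __name__ == "__main__":
    print(guest_upn("daichi@mycompany.com", "normalianxxxxx.onmicrosoft.com"))
```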

You can then assign the custom role to the guest user by passing this ID to the az cosmosdb sql role assignment create command.

Next, configure your code to use the development Entra ID tenant. Without any setting, the School or Work account authenticates against its home (production) Entra ID tenant, and you get an error because the organizational account belongs to the production Entra ID tenant while the Azure subscription is under the development Entra ID tenant, as follows. (This example uses ASP.NET Core.)

To avoid this, specify the tenant ID explicitly, as in the following code:

```csharp
using Azure.Identity;
using Microsoft.Azure.Cosmos;

var builder = WebApplication.CreateBuilder(args);

// Add services to the container.
builder.Services.AddControllersWithViews();

builder.Services.AddSingleton<CosmosClient>(serviceProvider =>
{
    // var connectionString = builder.Configuration.GetConnectionString("CosmosDB");
    // return new CosmosClient(connectionString);

    var option = new DefaultAzureCredentialOptions()
    {
        TenantId = "your-entraid-tenant-id",
    };

    return new CosmosClient(
        accountEndpoint: builder.Configuration["AZURE_COSMOS_DB_NOSQL_ENDPOINT"]!,
        tokenCredential: new DefaultAzureCredential(option)
    );
});

var app = builder.Build();
```

Setting the tenant ID here makes DefaultAzureCredential authenticate against the correct (development) Entra ID tenant.

#3: Microsoft account invited as a guest user to an Entra ID tenant – this works well

This case follows the same concept as the second scenario. The diagram for this scenario is as follows:

Run the az ad user show command with the guest’s user principal name, that is, the account name with "@" replaced by "_", followed by "#EXT#@" and your Entra ID tenant domain. You can also look up the user directly in your Entra ID tenant. Then, run the az cosmosdb sql role assignment create command to assign the custom role to the user.

```powershell
az ad user show --id "warito_test_hotmail.com#EXT#@normalianxxxxx.onmicrosoft.com"
```

```json
{
  "@odata.context": "https://graph.microsoft.com/v1.0/$metadata#users/$entity",
  "businessPhones": [],
  "displayName": "Daichi",
  "givenName": "Daichi",
  "id": "94bfd636-b2e9-4d44-b895-8d51277e7abe",
  "jobTitle": "Principal Normal",
  "mail": null,
  "mobilePhone": null,
  "officeLocation": null,
  "preferredLanguage": null,
  "surname": "Isamin",
  "userPrincipalName": "warito_test_hotmail.com#EXT#@normalianxxxxx.onmicrosoft.com"
}
```

```powershell
az cosmosdb sql role assignment create -a $cosmosdbName -g $rgName -p "your-user-object-resourceid" -d "your-customrole-resourceid" -s "/"
```

```json
{
  "id": "/subscriptions/your-subscription-id/resourceGroups/your-resource-group-name/providers/Microsoft.DocumentDB/databaseAccounts/your-cosmosdb-name/sqlRoleAssignments/7adc585c-74d6-4979-a3ed-3d968de2d27e",
  "name": "7adc585c-74d6-4979-a3ed-3d968de2d27e",
  "principalId": "b0bde25a-d588-410c-a16d-30fc001961c4",
  "resourceGroup": "your-resource-group-name",
  "roleDefinitionId": "/subscriptions/your-subscription-id/resourceGroups/your-resource-group-name/providers/Microsoft.DocumentDB/databaseAccounts/your-cosmosdb-name/sqlRoleDefinitions/7adc585c-74d6-4979-a3ed-3d968de2d27e",
  "scope": "/subscriptions/your-subscription-id/resourceGroups/your-resource-group-name/providers/Microsoft.DocumentDB/databaseAccounts/your-cosmosdb-name",
  "type": "Microsoft.DocumentDB/databaseAccounts/sqlRoleAssignments"
}
```

#4: Create a service principal in the development Entra ID tenant – this does not work

You might wonder, "Why not just create a service principal in the development Entra ID tenant?" The diagram for this scenario is as follows. This approach does not work.

Note that the client ID and the object ID of the service principal are different values. When you try to assign the custom role with each of them, you get the following errors:

```powershell
az cosmosdb sql role assignment create -a $cosmosdbName -g $rgName -p "your-serviceprincipal-clientid" -d "your-customrole-resourceid" -s "/"
```

```text
az : ERROR: (BadRequest) The provided principal ID ["your-serviceprincipal-clientid"] was not found in the AAD tenant(s) [b4301d50-52bf-43f0-bfaa-915234380b1a] which are associated with the customer's subscription.
At line:1 char:1
+ az cosmosdb sql role assignment create -a $cosmosdbName -g $rgName -p …
+ ~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~
+ CategoryInfo : NotSpecified: (ERROR: (BadRequ…s subscription.:String) [], RemoteException
+ FullyQualifiedErrorId : NativeCommandError
ActivityId: b1b3a860-c244-11ee-9296-085bd676b6c2, Microsoft.Azure.Documents.Common/2.14.0, Microsoft.Azure.Documents.Common/2.14.0, Microsoft.Azure.Documents.Common/2.14.0, Microsoft.Azure.Documents.Common/2.14.0, Microsoft.Azure.Documents.Common/2.14.0, Microsoft.Azure.Documents.Common/2.14.0
Code: BadRequest
Message: The provided principal ID ["your-serviceprincipal-clientid"] was not found in the AAD tenant(s) [b4301d50-52bf-43f0-bfaa-915234380b1a] which are associated with the customer's subscription.
ActivityId: b1b3a860-c244-11ee-9296-085bd676b6c2, Microsoft.Azure.Documents.Common/2.14.0, Microsoft.Azure.Documents.Common/2.14.0, Microsoft.Azure.Documents.Common/2.14.0, Microsoft.Azure.Documents.Common/2.14.0, Microsoft.Azure.Documents.Common/2.14.0, Microsoft.Azure.Documents.Common/2.14.0
```

```powershell
az cosmosdb sql role assignment create -a $cosmosdbName -g $rgName -p "your-serviceprincipal-objectid" -d "your-customrole-resourceid" -s "/"
```

```text
az : ERROR: (BadRequest) The provided principal ID ["your-serviceprincipal-objectid"] was found to be of an unsupported type : [Application]
At line:1 char:1
+ az cosmosdb sql role assignment create -a $cosmosdbName -g $rgName -p …
+ ~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~
+ CategoryInfo : NotSpecified: (ERROR: (BadRequ…: [Application]:String) [], RemoteException
+ FullyQualifiedErrorId : NativeCommandError
ActivityId: f24dccfd-c244-11ee-9712-085bd676b6c2, Microsoft.Azure.Documents.Common/2.14.0, Microsoft.Azure.Documents.Common/2.14.0, Microsoft.Azure.Documents.Common/2.14.0, Microsoft.Azure.Documents.Common/2.14.0, Microsoft.Azure.Documents.Common/2.14.0, Microsoft.Azure.Documents.Common/2.14.0
Code: BadRequest
Message: The provided principal ID ["your-serviceprincipal-objectid"] was found to be of an unsupported type : [Application]
ActivityId: f24dccfd-c244-11ee-9712-085bd676b6c2, Microsoft.Azure.Documents.Common/2.14.0, Microsoft.Azure.Documents.Common/2.14.0, Microsoft.Azure.Documents.Common/2.14.0, Microsoft.Azure.Documents.Common/2.14.0, Microsoft.Azure.Documents.Common/2.14.0, Microsoft.Azure.Documents.Common/2.14.0
```

As shown above, the first command, which passes the client ID, fails with an error saying that no such ID exists in the tenant. This is expected, because a client ID is not the object ID of a principal. The second command, which passes the object ID, fails with an error saying that assigning a role to a principal of type Application is unsupported. Keep this in mind.

#5: Multiple accounts across multiple Entra ID tenants – this works

This scenario is common when you work with multiple customers at the same time. Consider the following example:

Account for Project ①: myuser@normalian.xxx – School or Work account

Account for Project ②: personalxxxx@outlook.com – Microsoft account

Here, we need to revisit the order in which DefaultAzureCredential tries its credential types. Refer to the DefaultAzureCredential class documentation for details.

In this case, we switch accounts with the Azure CLI so that AzureCliCredential uses the right one. First, run the following command and sign in with the account for your development environment:

```powershell
az login
```

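Next, configure DefaultAzureCredential as in the following example. Excluding the credential types that are tried before AzureCliCredential makes AzureCliCredential the first one tried, and TenantId selects the Entra ID tenant.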

```csharp
using Azure.Identity;
using Microsoft.Azure.Cosmos;

var builder = WebApplication.CreateBuilder(args);

// Add services to the container.
builder.Services.AddControllersWithViews();

builder.Services.AddSingleton<CosmosClient>(serviceProvider =>
{
    // var connectionString = builder.Configuration.GetConnectionString("CosmosDB");
    // return new CosmosClient(connectionString);

    var option = new DefaultAzureCredentialOptions()
    {
        ExcludeEnvironmentCredential = true,
        ExcludeWorkloadIdentityCredential = true,
        ExcludeManagedIdentityCredential = true,
        ExcludeSharedTokenCacheCredential = true,
        ExcludeVisualStudioCredential = true,
        ExcludeVisualStudioCodeCredential = true,
        TenantId = "your-entraid-tenant-id",
    };

    return new CosmosClient(
        accountEndpoint: builder.Configuration["AZURE_COSMOS_DB_NOSQL_ENDPOINT"]!,
        tokenCredential: new DefaultAzureCredential(option)
    );
});
```
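To illustrate why these exclusions matter, here is a simplified Python model of the credential chain. It assumes the .NET order listed in the DefaultAzureCredential class documentation around early 2024 (check the documentation for the version you use), and it treats each credential as simply available or unavailable, whereas the real class also looks at the kind of failure when deciding whether to move on. The names first_usable and EXCLUDED_IN_EXAMPLE are hypothetical.

```python
from typing import Optional

# Simplified model of DefaultAzureCredential (Azure.Identity for .NET).
# Assumption: order as documented around early 2024; verify for your version.
# InteractiveBrowserCredential is excluded by default and omitted here.
DEFAULT_CHAIN = [
    "EnvironmentCredential",
    "WorkloadIdentityCredential",
    "ManagedIdentityCredential",
    "SharedTokenCacheCredential",
    "VisualStudioCredential",
    "VisualStudioCodeCredential",
    "AzureCliCredential",
    "AzurePowerShellCredential",
    "AzureDeveloperCliCredential",
]

# Mirrors the Exclude...Credential = true options in the C# example above.
EXCLUDED_IN_EXAMPLE = {
    "EnvironmentCredential",
    "WorkloadIdentityCredential",
    "ManagedIdentityCredential",
    "SharedTokenCacheCredential",
    "VisualStudioCredential",
    "VisualStudioCodeCredential",
}


def first_usable(excluded: set[str], available: set[str]) -> Optional[str]:
    """Return the first credential, in chain order, that is not excluded and
    can produce a token (modelled as membership in `available`)."""
    for name in DEFAULT_CHAIN:
        if name not in excluded and name in available:
            return name
    return None


if __name__ == "__main__":
    # A developer machine signed in to Visual Studio with one account and to
    # the Azure CLI (via `az login`) with another.
    signed_in = {"VisualStudioCredential", "AzureCliCredential"}
    print("default chain picks:", first_usable(set(), signed_in))
    print("with exclusions picks:", first_usable(EXCLUDED_IN_EXAMPLE, signed_in))
```

Without exclusions, the Visual Studio sign-in wins because it comes earlier in the chain; with the exclusions from the C# example, the account you selected with az login is used.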

Reference

DefaultAzureCredential Class

Use system-assigned managed identities to access Azure Cosmos DB data

How to manage Azure subscriptions with the Azure CLI


What’s new in Windows Autopatch: February 2024

The start of the new year brings a great opportunity for positive change, including the release of new features in Windows Autopatch. We heard your feedback! Here are some improvements made in response to your enterprise needs.

Import Update rings for Windows 10 and later in preview

Update rings allow you to specify how and when Windows as a service updates your Windows 10 or Windows 11 device with feature and quality updates. Update rings are available for Windows 10 and later. And if you’re a Windows Autopatch customer, you can now bring existing Update rings for Windows 10 and later policies into Windows Autopatch Management. For additional information, see Configure Update rings for Windows 10 and later policy in Intune.

Importing existing rings allows you to take advantage of the many capabilities of Windows Autopatch without impacting your existing Windows update schedules. Imported rings will automatically register all targeted devices into Windows Autopatch without the need to redeploy or change your existing update rings. Additionally, imported rings will be reflected in the reporting and release experience.

Learn how to import update rings for Windows 10 and later. If needed, brush up on Windows client updates, channels, and tools.

Customer-defined service outcomes in preview

Have you used Windows Autopatch reports to monitor the health and activity of your deployments? The insights from the reports can help you understand if your devices are maintaining update compliance targets.

Previously, deployment success was measured against a static schedule of 21 days. This meant that Windows Autopatch aimed to keep at least 95% of eligible devices on the latest Windows quality update 21 days after release.
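The previous objective boils down to simple arithmetic. A minimal sketch, assuming you already know how many eligible devices were on the latest quality update 21 days after release; the function name is hypothetical and not part of any Windows Autopatch API.

```python
def meets_quality_update_slo(devices_on_latest: int, eligible_devices: int,
                             target_percent: int = 95) -> bool:
    """Check the previous objective: at least `target_percent` of eligible
    devices on the latest quality update, measured 21 days after release.

    Integer arithmetic avoids floating-point rounding at the boundary.
    """
    if eligible_devices <= 0:
        raise ValueError("eligible_devices must be positive")
    return devices_on_latest * 100 >= eligible_devices * target_percent


if __name__ == "__main__":
    print(meets_quality_update_slo(950, 1000))
    print(meets_quality_update_slo(949, 1000))
```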

With this enhancement, the success of Windows Autopatch deployments will be based on your defined rings. We’ll also be introducing new columns in our release blade, as well as Windows quality and feature update reporting, to show the percentage complete for quality and feature updates. Devices that are up to date will remain in the “In Progress” status in reporting until they either receive the current monthly cumulative update or an alert. If an alert is received, the status will change to “Not up to date.”

To learn more, read Service level objectives.

Improved data refresh speed and reporting accuracy

Windows Autopatch reporting provides rich insights into your patch compliance status, so you can make informed choices about protecting against defects and vulnerabilities.

This release is changing the refresh cycle for Windows Autopatch reporting. The refresh cycle refers to the amount of time from when a change is made to when it’s reflected in reporting and other UX components. This time will be reduced from every 24 hours to every 30 minutes. This improvement supports the many data streams that Windows Autopatch uses to provide current update status for all devices enrolled into Windows Autopatch.

To learn more, see Windows quality update reporting.

Take your next step with Windows Autopatch

We hope these enhancements will help you keep your devices secure and up to date with less hassle and more control. Get current and stay current with automation that leads to higher security and lower costs.

The ideas behind these releases originated from conversations, input, and requests from you, our customers. We’d love to hear your feedback and suggestions on how we can continue to make Windows Autopatch even better for you. You can share your thoughts and ideas with us on our feedback hub or by joining our community forum.

If you want to learn more about Windows Autopatch:

Visit our website.

Read our documentation.

Watch our guided demos.

If you want to try Windows Autopatch for yourself, sign up for a free trial or contact us for a demo.

Thank you for choosing Windows Autopatch and stay tuned for more updates and announcements.

Continue the conversation. Find best practices. Bookmark the Windows Tech Community, then follow us @MSWindowsITPro on X/Twitter. Looking for support? Visit Windows on Microsoft Q&A.


Security review for Microsoft Edge version 121

We are pleased to announce the security review for Microsoft Edge, version 121!

We have reviewed the new settings in Microsoft Edge version 121 and determined that there are no additional security settings that require enforcement. The Microsoft Edge version 117 security baseline continues to be our recommended configuration, which can be downloaded from the Microsoft Security Compliance Toolkit.

Microsoft Edge version 121 introduced 11 new computer settings and 11 new user settings. We have included a spreadsheet listing the new settings in the release to make it easier for you to find them.

As a friendly reminder, all available settings for Microsoft Edge are documented here, and all available settings for Microsoft Edge Update are documented here.

Please continue to give us feedback through the Security Baselines Discussion site or this post.

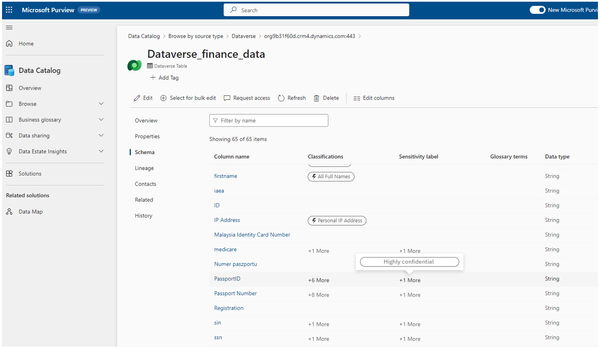

Labeling with Microsoft Purview Data Map now supports Dataverse, Azure Databricks, and Snowflake

We are pleased to announce that Labeling with Purview Data Map now supports three new data sources: Dataverse, Azure Databricks, and Snowflake.

For the complete list of supported data sources, please refer to:

Microsoft Purview Data Map supported data sources and file types | Microsoft Learn

Background

In the predictive and AI era, data has transformative potential to propel business outcomes. Organizations need to create data agility while managing risk and ensuring the right usage and compliance with regulations. To achieve this, the first step is to discover and identify sensitive data across the data estate.

The current processes for data discovery and classification are not adequate for the modern data landscape. They are fragmented by different disciplines or workloads, resulting in redundant work, poor visibility, and inconsistent data categorization across the data estate. Moreover, they often rely on manual interventions, which are time-consuming and error-prone, especially when dealing with diverse data sources on a large scale.

Hence, a unified and automated solution that can discover, classify, and label sensitive data across different data sources and domains is required.

How can Labeling in the Microsoft Purview Data Map help address these challenges?

Microsoft Purview is a comprehensive set of solutions that can help your organization secure, govern and manage your data, wherever it lives. It enables organizations to have a comprehensive and consistent view of their data assets and the sensitivity levels of their data, as well as to enforce data policies and comply with regulations.

Labeling with Purview Data Map enables organizations to identify sensitive data easily and consistently across the data estate, regardless of where it resides or how it is structured. It also reduces the manual effort and human error involved in labeling the data by using predefined rules and policies that match your business and compliance needs.

For additional details please refer to:

Labeling in the Microsoft Purview Data Map | Microsoft Learn

How does it work?

To label your data in Purview Data Map, you first register your data sources in Purview and scan them to discover and catalog your data assets. You then create sensitivity labels and define the rules and policies for applying those labels to your data assets. After that, each time you scan the data source in the Data Map, Purview automatically labels your data assets.
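Purview applies labels itself once the policy is configured. The following is only a conceptual sketch of the auto-labeling step, not Purview's implementation or API. It rests on three assumptions:

- Each scanned column carries a set of detected classifications.
- A label policy maps classifications to sensitivity labels.
- The higher-priority label wins when several rules match.

The classification names, label names, and priorities are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass

@dataclass(frozen=True)
class Label:
    name: str
    priority: int  # hypothetical: a higher number means more sensitive

# Hypothetical policy rules: classification detected by a scan -> label.
RULES = {
    "U.S. Social Security Number (SSN)": Label("Highly Confidential", 3),
    "Credit Card Number": Label("Confidential", 2),
    "Email Address": Label("General", 1),
}

def label_for_column(classifications: set[str]) -> Label | None:
    """Return the highest-priority label whose rule matches any
    classification found on the column, or None if nothing matches."""
    matches = [RULES[c] for c in classifications if c in RULES]
    return max(matches, key=lambda label: label.priority, default=None)

def label_assets(scan_results: dict[str, set[str]]) -> dict[str, str]:
    """Map each scanned column to the name of the label it would receive."""
    labeled = {}
    for column, classifications in scan_results.items():
        label = label_for_column(classifications)
        if label is not None:
            labeled[column] = label.name
    return labeled

scan = {
    "customers.ssn": {"U.S. Social Security Number (SSN)"},
    "customers.email": {"Email Address"},
    "orders.card": {"Credit Card Number", "Email Address"},
    "orders.total": set(),
}
print(label_assets(scan))
# {'customers.ssn': 'Highly Confidential', 'customers.email': 'General', 'orders.card': 'Confidential'}
```

Columns with no matching classification, such as `orders.total` here, are left unlabeled rather than given a default label. That is a design choice of this sketch.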

Steps to Label Data in Dataverse:

Register Dataverse in Purview. For details, please refer to: Connect to and manage Microsoft Dataverse in Microsoft Purview | Microsoft Learn

Configure labels and label policies for data assets in the Microsoft Purview Information Protection App, and scan your assets. For details, please refer to: How to automatically apply sensitivity labels to your data in Microsoft Purview Data Map (Preview) | Microsoft Learn

From the Catalog App, view the sensitivity labels applied to columns in Dataverse.

Steps to configure labeling for Azure Databricks:

Register Azure Databricks in Purview. For details, please refer to: Connect to and manage Azure Databricks Unity Catalog | Microsoft Learn

Configure labels and label policies for schematized assets in the Microsoft Purview Information Protection App, and scan your assets. For details, please refer to: How to automatically apply sensitivity labels to your data in Microsoft Purview Data Map (Preview) | Microsoft Learn

From the Purview Catalog App, view the sensitivity labels.

Steps to configure labeling for Snowflake:

Register Snowflake in Purview. For details, please refer to: Connect to and manage Snowflake | Microsoft Learn

Configure labels and label policies for schematized assets in the Microsoft Purview Information Protection App, and scan your assets. For details, please refer to: How to automatically apply sensitivity labels to your data in Microsoft Purview Data Map (Preview) | Microsoft Learn

From the Data Catalog App, view the sensitivity labels.

How to get started:

Labeling with Purview Data Map is currently in public preview.

Please refer to the following link to get started with Purview: Microsoft Purview | Microsoft Learn

Please refer to the following link to get started with labeling for Purview Data Map: Labeling in the Microsoft Purview Data Map | Microsoft Learn

APIs in Action: Unlocking the Potential of APIs in Today’s Digital Landscape

In today’s world, APIs (Application Programming Interfaces) are essential for connecting applications and services and driving digital innovation. But with the rise of hybrid and multi-cloud setups, effective API management becomes critical for ensuring security and efficiency. That’s where APIs in Action, a virtual event dedicated to unlocking the full potential of APIs, comes in.

Join us for a full-day virtual event focused on exploring API management for integration, hybrid and multi-cloud, and AI workloads. Learn from industry experts about the latest trends and best practices shaping the API landscape. Our immersive event delves deep into APIs and API management, highlighting innovative architectures that drive business growth. Our experts will guide you through transforming existing services and making your data easily accessible to developers, both internally and externally.

Whether you’re a seasoned professional or just starting out, APIs in Action equips you with the knowledge and tools to use APIs effectively in your hybrid and multi-cloud environment. Register now and join the conversation! Experience a day filled with insightful discussions, demos, and actionable insights that will empower you to navigate the evolving landscape of API management with confidence.

| Session | Abstract | Speaker(s) |
| --- | --- | --- |
| The role of API Management in Azure Integration Services | A successful integration platform built with Azure Integration Services will have API Management at the heart of the solution. In this session we will discuss some of the common scenarios where API Management is used. | Mike Stephenson |
| API management for microservices in a hybrid and multi-cloud world | Microservices are on the cusp of becoming the dominant style of software architecture. This hands-on demonstration will show how enterprises can make the transition to API-first architectures and microservices in a hybrid, multi-cloud world. | Tom Kerkhove |
| Leveraging API Management for OpenAI Applications/Use Azure API Management (APIM) to manage, secure, and scale your LLM-based applications | This session navigates the intersection of APIM and OpenAI technologies, discussing how APIM enhances the deployment, security, and scalability of OpenAI-powered applications. Attendees will learn about APIM basics, OpenAI’s capabilities, integration strategies, security challenges, and real-world applications. | Elena Neroslavskaya, Chris Ayers |
| Azure API Management from a developer perspective | As organizations adopt an API-first mindset, the need for good API management grows. This session will explain the benefits of Azure API Management (APIM) through the eyes of a developer. What’s in it for the developer, and how can Azure APIM help maximize the potential and security of your APIs? | Toon Vanhoutte |
| OpenAPI now vs. the future | Discover the essential role of OpenAPI in unlocking your API’s full potential and expanding your customer base. In this session, explore how OpenAPI is integral to the AI-driven future, providing crucial insights for staying ahead in the dynamic API landscape. Elevate your strategy and position your API for success by embracing OpenAPI. | Darrel Miller |
| API Design First with SwaggerHub and Azure API Management | Still designing in the dark ages with interface design documents and outdated documentation? Come see how SwaggerHub and Azure API Management can enable you to use the API Design First methodology to create live documentation that allows architects and stakeholders to design software together. | Joël Hébert |
| API DevEx | The developer experience for APIs can be difficult for new API developers and can add complexity to existing API projects due to new toolchains and evolving cloud services. In this session, we will demystify the API developer experience, leveraging tools like GitHub Copilot, Azure API Center, Azure API Management, and OpenAPI extensions. | Josh Garverick |
| Better API Governance with Azure API Center | An API catalog brings together the different roles involved in an API program and, by promoting collaboration between them, fosters API reuse, ensures compliance, and improves developer productivity. In this session we will explore what Azure API Center is and how to integrate it into your API design workflow. | Massimo Crippa |
| Leverage Postman to Collaboratively Test your APIs from design to deployment and beyond | Learn firsthand how to wield Postman effectively throughout the API lifecycle, boosting your API implementation and fortifying security from the start with the right testing strategies. Whether you’re in the business of creating or consuming APIs, discover how Postman and Azure API Management complement each other to enhance collaboration and streamline productivity. | Sandeep Murusupalli, Garrett London |
| Build a warp speed time-to-market API with DAB, APIM and Azure Container Apps | In this session we will delve into how Data API builder (DAB) enables swift and secure exposure of database objects through REST or GraphQL endpoints, allowing data access on any platform, language, or device. By combining DAB with Azure Container Apps and API Management, we will build and secure a serverless data API without writing a single line of code. | Massimo Crippa |
| Harnessing the Power of Azure API Management: Building Robust and Secure APIs | In this session, which combines theoretical knowledge with real-world scenarios, we will delve into the advanced features of Azure API Management, with a focus on building robust, secure, and scalable APIs. Attendees will learn about security best practices, policy management, and how to effectively use Azure’s tools to enhance API performance and security. | Hamida Rebai |
| Building a resilient API landscape with Azure API Management | Cloud service failure is inevitable. When building platforms, it is crucial to handle failures seamlessly by being resilient to them. Learn how Azure API Management helps you mitigate and recover from failures by using built-in load balancing and circuit-breaking capabilities. | Tom Kerkhove |
| Enhance your API security posture with Microsoft Defender for APIs | Microsoft Defender for APIs brings security insights and ML-based detections to APIs that are exposed via Azure API Management. In this session we will see how to use Defender for APIs to enhance your security posture, which scenarios are covered, and our learnings from observing production workloads. | Massimo Crippa |
| Gain Understanding of APIs and Integrations with Azure Application Insights | Use Application Insights to create a correlated, end-to-end view of integrations across APIM, Logic Apps, and Functions. Learn how to record insights, including business data, then create queries to view the data and observe it through dashboards. With Workbooks, we can create meaningful custom visuals that give support and business teams the insights they want. | Dave Phelps |
| GitOps for API Management | In this talk, we will present our experience with a GitOps workflow for implementing and managing API Management within an integration platform for an international corporation. We will describe how we automated infrastructure and deployment for the whole platform, addressing key aspects such as governance, permissions management, testing, and documentation. | Christine Robinson, Maximiliane Ott |
| APIOps: Transforming Azure APIM Deployments with GitOps and DevOps Methodologies | This talk offers a deep dive into the principles and practices of automating and managing APIs in Azure API Management. Attendees will gain insights into how APIOps applies the concepts of GitOps and DevOps to API deployment. By using practices from these two methodologies, APIOps gives everyone involved in API design, development, and deployment self-service and automated tools to ensure the quality of the specifications and APIs they’re building. | Wael Kdouh |

How to run Azure Virtual Desktop on-premises

Deliver desktop and app virtualization experiences to almost any device with Azure Virtual Desktop on Azure Stack HCI, with VMs running where you need them. This hybrid solution integrates local datacenters with Azure cloud workloads through Azure Arc, enabling flexible, secure, and regionally compliant virtualization experiences.

Take advantage of exclusive features like Windows 11 multi-session, which lets multiple users share the same session host, a capability otherwise only possible with Windows Server. Maintain precise control over where your VM hosts run, ensuring compliance and optimizing for ultra-low latency. Benefit from unified security with Microsoft Entra ID and streamlined administration through Azure Arc, for increased flexibility and control. Microsoft Azure MVP Matt McSpirit shows the steps to get it up and running.

Unified management of your virtual desktops.

Manage virtual desktops whether they run in the cloud or locally on Azure Stack HCI. Steps to get started.

Configure an Azure Stack HCI 23H2 cluster.

Ensure prerequisites are met and add virtual machine images. See how to get started.
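The video lists the prerequisites a cluster must meet before it can host Azure Virtual Desktop:

- It must run 23H2.
- It must have the related Azure Arc infrastructure (Arc Resource Bridge and Custom Location).
- It must have at least one VM image available.

The sketch below turns that checklist into code. The `HciCluster` type and its fields are hypothetical stand-ins for what you would read from the Azure Portal. It is not an Azure API.

```python
from __future__ import annotations

from dataclasses import dataclass, field

@dataclass
class HciCluster:
    """Hypothetical snapshot of an Azure Stack HCI cluster's state."""
    version: str
    arc_resource_bridge: bool
    custom_location: bool
    vm_images: list[str] = field(default_factory=list)

def missing_avd_prerequisites(cluster: HciCluster) -> list[str]:
    """List the Azure Virtual Desktop prerequisites this cluster still lacks."""
    missing = []
    # The video names 23H2 as the required version.
    if cluster.version != "23H2":
        missing.append("cluster must run Azure Stack HCI 23H2")
    if not (cluster.arc_resource_bridge and cluster.custom_location):
        missing.append("Azure Arc infrastructure (Resource Bridge and Custom Location)")
    if not cluster.vm_images:
        missing.append("at least one VM image")
    return missing

# A freshly deployed cluster has the Arc components but no images yet.
fresh = HciCluster("23H2", arc_resource_bridge=True, custom_location=True)
print(missing_avd_prerequisites(fresh))  # ['at least one VM image']

fresh.vm_images.append("Windows 11 multi-session + Microsoft 365 Apps")
print(missing_avd_prerequisites(fresh))  # []
```

This mirrors the demo. The cloud-based deployment already provides the Arc infrastructure, so adding a VM image is the only remaining step.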

Configure additional options.

Enhance Azure Virtual Desktop performance by configuring GPU resources using Windows Admin Center or PowerShell. GPU-enabled VMs provide a boost in frames-per-second for graphic-intensive applications. Check it out.
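The WebGL aquarium demo in the video quantifies this boost:

- The GPU-backed VM held about 26 to 27 frames per second at 30,000 fish.
- A similar VM without a GPU managed about 7 to 9 frames per second at 25,000 fish.

This quick check uses those approximate on-screen figures to compute the range of speedups behind the "three to four times faster" claim.

```python
# Approximate frame rates read off the demo in the video.
gpu_fps = (26, 27)  # GPU-backed VM at 30,000 fish
cpu_fps = (7, 9)    # VM without a GPU at 25,000 fish

low = min(gpu_fps) / max(cpu_fps)   # most conservative ratio
high = max(gpu_fps) / min(cpu_fps)  # most generous ratio
print(f"{low:.1f}x to {high:.1f}x")  # 2.9x to 3.9x
```

The GPU VM was also rendering 5,000 more fish, so this ratio, if anything, understates the difference.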

Watch our video here:

QUICK LINKS:

00:00 — Azure Virtual Desktop for Azure Stack HCI

01:21 — Security and management benefits

01:56 — Get it up and running

03:27 — Connect to session hosts using the new Windows App

04:15 — Configure an Azure Stack HCI 23H2 cluster to run Azure Virtual Desktop

06:28 — Deploy session hosts

08:29 — Configure additional options

09:57 — Wrap Up

Link References:

Check out https://aka.ms/AVDonHCI

Steps for setup at https://aka.ms/StackHCISetup

Unfamiliar with Microsoft Mechanics?

Microsoft Mechanics is Microsoft’s official video series for IT. Watch and share valuable content and demos of current and upcoming tech from the people who build it at Microsoft.

Subscribe to our YouTube: https://www.youtube.com/c/MicrosoftMechanicsSeries

Talk with other IT Pros, join us on the Microsoft Tech Community: https://techcommunity.microsoft.com/t5/microsoft-mechanics-blog/bg-p/MicrosoftMechanicsBlog

Watch or listen from anywhere, subscribe to our podcast: https://microsoftmechanics.libsyn.com/podcast

Keep getting this insider knowledge, join us on social:

Follow us on Twitter: https://twitter.com/MSFTMechanics

Share knowledge on LinkedIn: https://www.linkedin.com/company/microsoft-mechanics/

Enjoy us on Instagram: https://www.instagram.com/msftmechanics/

Loosen up with us on TikTok: https://www.tiktok.com/@msftmechanics

Video Transcript:

-If you’re looking at modernizing your virtual desktop infrastructure and need to ensure that your VM hosts run in your datacenter or at your specified edge locations, today I’ll show you how with Azure Virtual Desktop running on Azure Stack HCI, which is now generally available and fully-supported.

-If you’re new to Azure Stack HCI, it’s a hybrid solution running on hyperconverged server infrastructure in your local data center, and via integration with Azure Arc management, it allows you to run select Azure cloud workloads on-premises.

-And now for the first time, you can run Azure Virtual Desktop on Azure Stack HCI, which lets you deliver desktop and app virtualization experiences to almost any device, with VMs running where you need them. This is a true hybrid solution, spanning your on-prem locations and the cloud.

-This combined solution means that you can take advantage of exclusive Azure Virtual Desktop capabilities, like Windows 11 and Windows 10 multi-session, a capability otherwise only possible using Windows Server, where more than one user can simultaneously use the same session host.

-And because you control where you are running your virtual desktops on your local domain right down to a specific local subnet, you can easily meet regional or regulatory data sovereignty needs and optimize for scenarios where ultra-low latency networking is critical. Additionally, there are a number of security and management benefits that come with the native Azure integration.

-For example, as users log in, Microsoft Entra ID lets you take advantage of a common secure identity including multifactor authentication and Conditional Access. And importantly as an admin, you benefit from consistent infrastructure management with Azure Arc.

-This lets you use the same tools you would use for other workloads in Azure, such as Azure Policy for consistent configurations, Microsoft Defender for Cloud for improved multicloud security, Update Manager to ensure your VMs remain current, and more. Now, let me show you how you would get this up and running.

-In my case, I’m in the Azure Portal, and I have a local Azure Stack HCI 23H2 cluster running and connected to Azure, which is one of the prerequisite steps to get everything working. You can follow the steps to do that at aka.ms/StackHCISetup. Now, if we take a closer look, we can see this cluster consists of two new Dell APEX MC-660 nodes, and is running in a custom location, which in our case, is an on-prem site.

-Next, we need to set up a host pool in Azure Virtual Desktop. For that, I’m in the Azure Portal where you’ll manage Azure Virtual Desktop services and configurations. So here you can see we have host pools running in the Azure cloud. And importantly, you can see, as denoted with their resource names, there are also host pools running in my own data center on my Azure Stack HCI infrastructure.

-So, you have a unified management plane in the Azure cloud for your virtual desktops, whether they are running in the Cloud or locally on Azure Stack HCI. And from here, I can manage things like my host pools, application groups, workspaces, and my users, again, all from the Azure Portal. In fact, when I create a new session host, I simply need to define that it’s running in my Azure Stack HCI cluster.

-And from a deployment perspective, it’s similar to other Azure locations you’d define when you provision virtual desktops in Azure. Once I’ve added all of the necessary configurations for my host pool, the new virtual machines are provisioned through Azure Arc VM management down to my local cluster.

-And once my session hosts are up and running and added to a workspace, I can connect to them using the new Windows App, which works with Azure Virtual Desktop, and by the way, also works for Windows 365 and Microsoft Dev Box. In fact, one of the benefits of Azure Virtual Desktop compared to other services, is that in addition to full desktops, I can also access my remote apps, and back on the home screen, you’ll see I’ve already pinned a few of those apps.

-In this case, I’ll connect to my shared Windows 11 multi-session VM running on-prem using the most up-to-date Microsoft-managed VM image with Microsoft 365 apps pre-installed along with my custom apps. And if I look at my network settings in this PowerShell window, you’ll see that it’s on my local subnet, so it’s right next to my on-premises databases and file shares. So how do we configure an Azure Stack HCI 23H2 cluster to run Azure Virtual Desktop? Let me show you.

-So, we’ll start with a freshly-deployed cluster this time, which was set up using the new cloud-based deployment experience. And the good news is that the deployment experience provides all the Azure Arc infrastructure needed, including the Arc Resource Bridge, Custom Location, and other agents and management components. But you can see here that this cluster doesn’t yet meet the remaining prerequisites for Azure Virtual Desktop, which are:

-The cluster needs to be running 23H2 with related Azure Arc infrastructure. Well, we’ve got that. And you’ll also need to have at least one virtual machine image available in your cluster, which we still need to add. So over on the left-hand navigation, I’ll click on VM images, and from there, you can add an image. You have three options to choose from.

-You can use a local share to create and store a new image on the cluster, select an existing image from an Azure Storage Account to replicate it down to your cluster, or choose a Microsoft-curated image from the Azure Marketplace. I’ll do the latter to select an image with multi-session support. Then in Basics, I’ll fill in the resource group and the image name; the custom location, in this case, is already populated with my cluster name.

-In the Image to Download, I can choose from curated and supported images for installing Windows Server, Windows 10, or Windows 11. These will have the latest Cumulative Updates applied and a few with the Microsoft 365 apps pre-installed and optimized for Azure Virtual Desktop, like A/V Redirect for a better Microsoft Teams experience.

-So I’ll choose this Windows 11 image. And now I can select the storage volume on my cluster where this image will be downloaded to. And from there, I just need to review and create my new image. Now depending on your connection, that will take a few minutes to download.

-And once it’s complete, here in the VM images blade, you can see the image is available, and is the latest version with no updates available. You can also see I’ve gone ahead and downloaded a Windows Server 2022 Datacenter Azure Edition image that can also be used as a shared session host within Azure Virtual Desktop. Back on the cluster overview page, you’ll see that all prerequisites are now met. It was really that simple.

-Now from there, you just need to click deploy. And by the way, this is the same flow that’s used for host pool provisioning directly from the Azure Virtual Desktop experience. After filling in the Basics, resource group, and name (in this case, I’m building a testing and validation host pool), under Virtual Machines you’ll see the usual options like adding a name prefix, but importantly, you’ll select Azure Stack HCI virtual machine as your VM type.

-And when you do that, it will expose the Custom location control, where you’ll select your cluster, and I’ll choose the one that corresponds with our new cluster. Once you set that control, it will filter the VM images to the ones available on that cluster. Then, because this is your own cluster, you won’t see the usual Azure VM SKU selection.

-Instead, you can customize the virtual processor count, memory type, and memory size. The network selection is also filtered to show the available DHCP-based logical networks available on that cluster. Then in the Domain Join options, note that today, you can only choose Active Directory, but soon you’ll also have the option to use Microsoft Entra ID join.

-Then you’ll fill in your user principal name, your password, then the details for the VM local admin account with username, password, and confirmation. The rest of the tabs are identical to provisioning an Azure Virtual Desktop host pool for the Workspace, along with any advanced configurations, the right tagging, and from there, you can make sure everything looks good.

-And if I want to templatize this deployment, from here I can view the Azure Resource Manager template, and incorporate that into fully-automated and unattended deployment scenarios. So from there, I’ll go ahead and click Create. And that will deploy your session hosts with everything they need to be managed directly from the Azure Portal like any other Azure-hosted VM.

-So here you can see my deployed VMs on this cluster, and if we head on over to Azure Virtual Desktop and navigate to Host Pools, then open our newly deployed testing host pool, we can see that our 5 freshly deployed virtual desktops are ready for our team to use. And using Windows Admin Center or PowerShell, you can configure additional options as well, like assigning GPU resources on VMs for specific users.

-In fact, now I’ll connect to a previously deployed VM with GPU support. So you can see the RDP Shortpath is enabled by going into the remote desktop connection and if you see UDP enabled, that means it’s using RDP Shortpath. To show the GPU is available, I can open up the NVIDIA Control Panel, and I’ll head over to System Information, and you’ll see that this is an NVIDIA A16 GPU that’s been partitioned into four parts, of which one is attached to this VM.

-So, I’ll close these windows, and to show it working, I’ll open up a WebGL web app with the aquarium sample running. Now, if you’ve used this sample before, you’ll know it maxes out frames-per-second, or FPS, based on the display’s refresh rate, and in my configuration, that’s 30 hertz. But as you add more fish you can stress the GPU, so I’ll add more and more fish.

-Then as I get up to 25,000 fish it starts to slow down. And at 30,000 fish, it hovers around 26 or 27 frames. Now if I bring in a similar VM without a GPU, notice that at 25 thousand fish, it only runs around 7–9 frames per second, so the GPU VM is three to four times faster even with more fish.

-So, that was an overview of the new Azure Virtual Desktop for Azure Stack HCI, and its integration with Azure Arc, enabling you to run your virtual desktops precisely where you need them, all managed from the cloud. To learn more, check out aka.ms/AVDonHCI. And keep watching Microsoft Mechanics for the latest tech updates, and thanks for watching.
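The walkthrough notes that you can view the Azure Resource Manager template from the host pool creation flow and reuse it in fully automated deployments. Below is a minimal sketch of that step using the Azure SDK for Python. It assumes the following:

- The exported template has been saved to a local JSON file.
- The `azure-identity` and `azure-mgmt-resource` packages are installed.
- The caller has rights to deploy to the target resource group.

The file name, resource names, and the `hostpoolName` parameter are hypothetical placeholders. The real parameter names depend on the template you export.

```python
import json
from pathlib import Path

def build_deployment(template: dict, parameters: dict) -> dict:
    """Wrap an exported ARM template and plain parameter values in the
    deployment request shape ARM expects (Incremental mode)."""
    return {
        "properties": {
            "mode": "Incremental",
            "template": template,
            # ARM expects each parameter value wrapped as {"value": ...}.
            "parameters": {name: {"value": value} for name, value in parameters.items()},
        }
    }

def deploy(subscription_id: str, resource_group: str, deployment_name: str,
           template_path: str, parameters: dict) -> None:
    """Submit the deployment and wait for it to finish.
    Requires the azure-identity and azure-mgmt-resource packages."""
    from azure.identity import DefaultAzureCredential
    from azure.mgmt.resource import ResourceManagementClient

    template = json.loads(Path(template_path).read_text())
    client = ResourceManagementClient(DefaultAzureCredential(), subscription_id)
    poller = client.deployments.begin_create_or_update(
        resource_group, deployment_name, build_deployment(template, parameters)
    )
    poller.result()  # blocks until the deployment completes
```

For example, `deploy("<subscription-id>", "rg-avd-hci", "avd-testing", "hostpool-template.json", {"hostpoolName": "hp-testing"})` would submit the hypothetical template in Incremental mode. In that mode, resources already in the resource group that the template doesn't mention are left untouched.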

Upcoming February 2024 Microsoft 365 Champion Community Call

Join our next community call on February 27 to dive into the immersive 3D world of Microsoft Mesh. We will be starting the call at 5 minutes past the hour for both of our sessions (at 8:05 AM and 5:05 PM PT), and it will still end at the top of the hour (9:00 AM and 6:00 PM PT, respectively).

If you have not yet joined our Champion community, sign up here to get access to the calendar invites, program assets, and previous call recordings.

Learn from Microsoft: Best practices for engaging employees with AMAs and live events

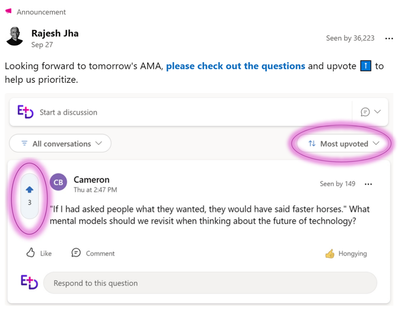

At the beginning of each fiscal year, Rajesh Jha, Executive Vice President of Experiences + Devices at Microsoft, hosts a kick-off event for his organization of more than 40,000 employees. During the event, he aims to get employees excited about what is to come, and to listen to and engage with colleagues through their questions. This year’s event was more engaging than ever because the team adopted new technology to achieve these goals: Ask Me Anything (AMA) events and live events in Viva Engage.

“Colleagues have been clear and consistent in their desire to hear directly from our leaders on the topics that matter most to them. Viva Engage Ask Me Anything events have given us a powerful tool to answer the questions that are truly top of mind for them in a transparent way that encourages constructive dialogue across different levels of the organization. Viva Engage also gives us, as corporate communications professionals, the right tools and settings to enable the right style of event from smaller team events through to scaling AMAs to tens of thousands of employees. Feedback has been positive from our leader (Rajesh), the leadership team, our event team and, most importantly, our colleagues; so much so that we are increasing the cadence of our AMAs to every other month.”

– Alexander Bradley, Director of Communications

AMAs and live events

An AMA is an event during which leaders and employees connect to ask and answer questions. It’s a two-way engagement: leaders create the space for conversation, and employees drive the agenda through their questions. AMA events are available as part of the Viva Suite or the Communications and Communities plan. AMAs can be customized with options including moderation, upvoting, and anonymous submission. Where previously you could only submit questions via forms or email, you can now generate engagement before the event with features like upvoting, which signals to moderators and communicators what is important and top of mind for employees. Participation can be limited to a specific set of people or opened to anyone in the organization.

Events bring people together to spark dialogue and engagement. They’re an opportunity for leaders to create clarity and spark energy—to inform and inspire employees, and to listen and respond to employees. Events can be in person, but hybrid events with live and on-demand video have become standard, particularly to engage a large, distributed workforce. Live events can be hosted in Viva Engage, where conversations in an event become part of the community’s ongoing discussion.

Rajesh and his team combined these two capabilities into what has proven to be an excellent, repeatable model. They created the AMA before the event to gather questions and feedback from employees, then used colleagues’ upvotes to decide which topics and questions to take live during the event. They streamed the event live on Viva Engage in their private community, enabling robust conversation alongside the live video. They also incorporated a few live questions beyond the AMA, demonstrating agility and responsiveness to colleagues’ in-the-moment questions. Afterward, they brought the recording and key content back to Viva Engage to share broadly with people who could not attend the live event. They hosted the video on Stream and launched it via a Viva Engage storyline announcement from Rajesh. Previously, this would have happened over email, limiting the potential for ongoing dialogue.

In this article, we share the best practices that Rajesh and his team learned before, during, and after the event—the ingredients that made it a success. Many of the learnings and best practices mentioned below can be found in the AMA playbook.

Peering into our crystal ball, we can share that we are working to deeply integrate the capabilities Rajesh and his team leveraged into a seamless, end-to-end events experience. We’ll have much more to share in 2024 but, for now, you can have confidence that following these best practices today will set you up for even more success in the coming months.

Before the event

As with any event, success requires planning and preparation. Rajesh's team determined that the event would be a public AMA event, promoted to the employees of his Experiences + Devices (E+D) organization but discoverable by anyone in the organization. They also decided that the event would consist of approximately 25% prepared content, in which Rajesh would share his vision and what was top of mind for him, and 75% Q&A.

1-2 weeks before the event

Before the event, Rajesh and his communications team prepared the content and videos for the vision and updates portion. From the E+D community in Viva Engage, they scheduled the live event for the interactive, video portion of the event. Learn more about live events.

To gather questions before the event, they used the new ask me anything (AMA) feature in Viva Engage. They scheduled the AMA for the same timeframe as the live event but published it so that it was “live” for several weeks leading up to the event. This gave employees time to submit questions, upvote questions they found interesting, and react to or comment on questions from coworkers. Learn more about AMAs.

To encourage attendance and engagement, the team promoted the event in relevant Engage communities, on Rajesh’s storyline, and via Outlook. We’ve included sample communications at the end of this article. Communications emphasized the importance of upvoting and mentioned that Rajesh would address the most upvoted questions live during the event.

Rajesh announced the event and encouraged employees to upvote colleagues’ questions and submit their own.

For a more recent event, Rajesh's team shared an announcement from his storyline using a visually compelling image that takes advantage of new media layouts, which place a video or image above the text of the post.

At a more recent event, Rajesh used the new layout for images in posts in his announcement. He encouraged employees to submit questions and upvote colleagues’ questions.

To create a space where employees felt comfortable expressing their concerns and questions, the team enabled anonymous submission. They found, as we see across customers, that enabling anonymous questions increased both the quantity and the quality of input from employees. The team also enabled moderation so that organizers could vet questions before they were approved and shown to attendees for upvoting.

Moderation options allow a host or organizer to approve questions before they are published.

They approved two-thirds of the anonymous questions. The team also found that moderation helped them reduce the number of duplicate or closely related questions.

The team checked the AMA daily and moderated questions regularly. Because they had a good rhythm in place, they leveraged the new notifications toggle to disable notifications for each new question. Our team is continuing to make more improvements to the , organizers, and attendees.

Invitation and notification options. Organizers and hosts can be sent a calendar invite and can receive notifications for new questions.

1-2 days before the event

There was on the AMA before the live portion of the event. The team could filter questions to the most upvoted questions. They then prepared to address those questions.

On the event’s AMA page, organizers can filter by most upvoted questions.

During the event

The live event was a broadcast viewed by attendees in the E+D community. There are several ways to produce live events in Viva Engage:

Using Teams, anyone can produce high-quality broadcasts with webcams and screen sharing. Learn more about producing a live event with Teams.

Rajesh’s team had access to a production team that used professional studio facilities to create the broadcast, streaming it using RTMP into the live event. Learn more about producing an event with an external encoder.

After the vision and update portion of the event, Rajesh and his team began answering questions from employees. They answered every AMA question that had more than 50 upvotes, delivering on their promise to address the questions that were truly top of mind for employees.
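The selection rule the team applied (answer every question with more than 50 upvotes, most upvoted first) is simple enough to express in a few lines. Viva Engage already provides this filtering in its UI, so the sketch below only illustrates the rule; the `Question` type, its fields, and the sample questions are hypothetical and are not a Viva Engage API.

```python
from dataclasses import dataclass


@dataclass
class Question:
    """Hypothetical stand-in for an AMA question (not a Viva Engage API type)."""
    text: str
    upvotes: int


def questions_to_answer_live(questions, threshold=50):
    """Return questions with MORE than `threshold` upvotes, most upvoted first."""
    selected = [q for q in questions if q.upvotes > threshold]
    # sorted() stays stable with reverse=True, so ties keep their submission order
    return sorted(selected, key=lambda q: q.upvotes, reverse=True)


# Illustrative data only
backlog = [
    Question("How will hybrid work evolve?", 75),
    Question("What is our roadmap for next year?", 120),
    Question("Can we get more team offsites?", 50),
    Question("Which tools are we retiring?", 12),
]

for q in questions_to_answer_live(backlog):
    print(q.upvotes, q.text)
# prints "120 What is our roadmap for next year?" then "75 How will hybrid work evolve?"
```

Note that the comparison is strict, matching "more than 50 upvotes": a question with exactly 50 upvotes is not selected.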

Live events in Viva Engage also support questions and conversation during the live event. Throughout this event, the team showcased questions that attendees were asking in real time, and Rajesh answered them live.

During the live event, Rajesh answered the most upvoted questions from employees.

Showcasing impact after the event

In the days following the event, a survey was sent to attendees to learn how well the new format worked. The feedback was clear: people really enjoyed the new Q&A powered by Viva Engage AMAs and were happy with how many questions Rajesh was able to answer.

The AMA insights panel assisted Rajesh and his team in evaluating the impact of this event compared to previous events.

After the event, analytics provide insights on activity and engagement.

Rajesh and his team look forward to continuing to leverage this format to engage his employees in upcoming Q&A events! In fact, they’ve committed to hosting AMA Town Hall events bi-monthly.

AMA + Live Event Communications Plan

Since the initial AMA, Rajesh’s team has hosted a few more AMAs and we’ve learned some best practices about communicating expectations about the event and how to provide the best guidance for employees. Here’s an example communications plan for this type of AMA + Live Event. We hope that this helps your teams make your first AMA event a success.

| Timeline | Type | Highlights |
| --- | --- | --- |
| 2 weeks before the event | Calendar invite | The calendar invite includes all relevant links to the live event and the AMA. |
| 1 week before the event | Storyline announcement from the leader | An announcement to the leader's audience describes the upcoming event and links to the AMA to gather questions beforehand. It also explains that the most upvoted questions will be prioritized. |
| 1 day before the event | Storyline announcement from the leader | A reminder to employees about the event and how to engage. |
| 1 hour before the event | Announcement in the community hosting the live event | A reminder that the event starts soon, with instructions for joining the live event. |
| After the event | Event summary | A recap of the high-level key points, with the event recording. |
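If you plan several AMA events, the relative timeline in this communications plan can be turned into concrete send dates for each event. A minimal sketch using Python's standard `datetime` module; the item labels are paraphrased from the plan, and placing the event summary one day after the start time is an assumption, since the plan only says "after the event".

```python
from datetime import datetime, timedelta

# Offsets relative to the event start, taken from the communications plan.
# The +1 day for the event summary is an assumption; the plan only says "after the event".
COMMS_PLAN = [
    ("Calendar invite", timedelta(weeks=-2)),
    ("Storyline announcement from leader", timedelta(weeks=-1)),
    ("Storyline reminder from leader", timedelta(days=-1)),
    ("Community announcement: event starts soon", timedelta(hours=-1)),
    ("Event summary and recording", timedelta(days=1)),
]


def build_comms_schedule(event_start):
    """Return (send_time, item) pairs for one event, in chronological order."""
    return sorted((event_start + offset, item) for item, offset in COMMS_PLAN)


# Example: an event starting 14 March 2024 at 16:00 (2024 is a leap year)
for when, item in build_comms_schedule(datetime(2024, 3, 14, 16, 0)):
    print(when.strftime("%Y-%m-%d %H:%M"), item)
```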

Try it in your own network!

Try hosting an AMA alongside your next town hall or all hands.

Resources:

AMA events in Viva Engage – Microsoft Support

AMA playbook

Microsoft Tech Community – Latest Blogs

Understanding OneLake Architecture: The OneDrive for Data

As data engineers, we grapple with numerous challenges daily. Data is often scattered across various sources, residing in a multitude of file types with varying data quality. The time spent locating specific files—figuring out which tenant they belong to and deciphering access rights—can be exasperating. This is where OneLake steps in.

OneLake streamlines data management, breaks down silos, and ensures that your data resides in one unified home—just like OneDrive for files!

[Figure: A basic OneLake setup]

What is OneLake?

OneLake is essentially the OneDrive for data within the Fabric ecosystem. Just like OneDrive, it’s automatically provisioned for every Fabric tenant, requiring no infrastructure management.
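Because there is one OneLake per tenant, every workspace and item is addressed through the same endpoint, much like paths within a single OneDrive. A minimal sketch that builds such addresses, assuming the ADLS Gen2-compatible `abfss://<workspace>@onelake.dfs.fabric.microsoft.com/<item>.<ItemType>/<path>` form and its `https://` equivalent that Fabric documents for lakehouses. The workspace, lakehouse, and table names are hypothetical, and the helpers do no URL encoding, so they assume names without spaces.

```python
ONELAKE_DFS_HOST = "onelake.dfs.fabric.microsoft.com"


def onelake_abfss_uri(workspace, item, item_type="Lakehouse", path=""):
    """abfss:// form, as used by Spark and other ADLS Gen2-compatible clients."""
    uri = f"abfss://{workspace}@{ONELAKE_DFS_HOST}/{item}.{item_type}"
    # Strip leading/trailing slashes so "Files/" and "/Files" both work
    return f"{uri}/{path.strip('/')}" if path else uri


def onelake_https_url(workspace, item, item_type="Lakehouse", path=""):
    """https:// form of the same location in the single tenant-wide lake."""
    url = f"https://{ONELAKE_DFS_HOST}/{workspace}/{item}.{item_type}"
    return f"{url}/{path.strip('/')}" if path else url


# Hypothetical names: a managed table and a raw file in the same lakehouse
print(onelake_abfss_uri("SalesWorkspace", "SalesLakehouse", path="Tables/orders"))
print(onelake_https_url("SalesWorkspace", "SalesLakehouse", path="Files/raw/orders.csv"))
```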

Key benefits of OneLake include:

Unified data storage across different domains and tenants.

Support for both managed and unmanaged data storage.

Full Delta support using VertiParq (a powerful feature for tracking changes in data).

Distributed ownership of data and security.

Integration with Direct Lake, providing robust Power BI support.

How does OneLake work?

The architecture of OneLake allows seamless connectivity to multiple cloud providers. Let’s explore the basics:

Symbolic links (Shortcuts): Using symbolic links, you can connect to both Azure and Amazon storage. These shortcuts make data from these providers accessible within the same OneLake without copying the data.

Unified management: All personas—data engineers, real-time analysts, and BI developers—can directly access data stored in OneLake.

Delta file format: Data within OneLake uses the open-source delta file format, which optimizes storage for data engineering workflows. It supports efficient storage, versioning, schema enforcement, ACID transactions, and streaming.

Ingestion methods: You can get data into OneLake through shortcuts or data pipelines. Shortcuts create symbolic links to external storage locations, so the data can be browsed in place. Data pipelines, familiar to Data Factory and Synapse users, copy data from external sources into the lakehouse, for example into its managed Tables area.
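The versioning and ACID guarantees of the Delta format come from its transaction log: each commit is a numbered JSON file in the table's `_delta_log/` folder, each line of which is an action such as `add` or `remove` for a Parquet data file. Replaying commits 0 through N gives the set of files that make up version N of the table. The sketch below is a deliberately simplified model of that replay, not a Delta reader: real logs also contain checkpoints and `metaData`, `protocol`, and `commitInfo` actions, and real `add` actions carry more fields. The file names are made up.

```python
import json


def log_file_name(version):
    """Delta commit files are named by version, zero-padded to 20 digits."""
    return f"_delta_log/{version:020d}.json"


def active_files(commits, version=None):
    """Replay simplified commits and return the data files live at `version`.

    `commits[i]` is the newline-delimited JSON body of commit i.
    With version=None, the latest version is used.
    """
    last = len(commits) - 1 if version is None else version
    live = set()
    for body in commits[: last + 1]:
        for line in body.splitlines():
            if not line.strip():
                continue
            action = json.loads(line)
            if "add" in action:
                live.add(action["add"]["path"])
            elif "remove" in action:
                live.discard(action["remove"]["path"])
            # Other action types are ignored in this simplified model
    return sorted(live)


commits = [
    '{"add": {"path": "part-000.parquet"}}\n{"add": {"path": "part-001.parquet"}}',
    '{"remove": {"path": "part-001.parquet"}}\n{"add": {"path": "part-002.parquet"}}',
]
print(active_files(commits, version=0))  # ['part-000.parquet', 'part-001.parquet']
print(active_files(commits))             # ['part-000.parquet', 'part-002.parquet']
```

Reading an older version simply stops the replay earlier, which is the idea behind Delta time travel.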

Managed Data: Tables

Tables play a crucial role in managing and organizing data within the lakehouse architecture. Once set up in the managed section of the lakehouse, you have several options:

Browse tables using the Lakehouse Explorer.

Query and analyze data efficiently.

Connecting External Data to Microsoft Fabric OneLake

Now that you understand how OneLake works, let's bring some data from an external source into OneLake. We'll use the Data Engineering experience, but feel free to choose another experience.

1. Create a workspace

Begin by creating a workspace within your Microsoft Fabric environment. This workspace will serve as the container for your data-related activities.


2. Set up a lakehouse

Next, create a lakehouse in your workspace:

Select the workspace into which you want to create the lakehouse.

In the open workspace, select New.

Select Lakehouse from the drop-down menu and give it a name.

3. Ingest the data from an external source into the lakehouse.

Use any of the following options to create a shortcut. A shortcut lets you point to other storage locations, either internal or external to OneLake.

This launches the shortcut wizard. Select the source you want to pull your data from; for this demo, select OneLake to create an internal shortcut.

Find and connect to the data you want to use with your shortcut, then select Next. The data appears in the Files section of your lakehouse.

Preview the data by selecting the Files section.
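Shortcuts can also be created programmatically. The sketch below only builds the request for an internal OneLake shortcut; it assumes the Fabric REST API's Create Shortcut operation (`POST https://api.fabric.microsoft.com/v1/workspaces/{workspaceId}/items/{itemId}/shortcuts`) and its `oneLake` target shape as we understand them, so check the current API reference before relying on it. All IDs and names are placeholders, and sending the request with a Microsoft Entra access token is deliberately left out.

```python
import json

FABRIC_API = "https://api.fabric.microsoft.com/v1"


def build_onelake_shortcut_request(workspace_id, lakehouse_id, name,
                                   target_workspace_id, target_item_id, target_path,
                                   shortcut_parent="Files"):
    """Return (url, body) for creating an internal OneLake shortcut (assumed API shape).

    The shortcut `name` is created under `shortcut_parent` in the destination lakehouse
    and points at `target_path` inside the target item.
    """
    url = f"{FABRIC_API}/workspaces/{workspace_id}/items/{lakehouse_id}/shortcuts"
    body = {
        "path": shortcut_parent,
        "name": name,
        "target": {
            "oneLake": {
                "workspaceId": target_workspace_id,
                "itemId": target_item_id,
                "path": target_path,
            }
        },
    }
    return url, body


# Placeholder IDs; real ones are GUIDs shown in the Fabric portal or returned by the API
url, body = build_onelake_shortcut_request(
    "ws-1", "lh-1", "SharedSales", "ws-2", "lh-2", "Files/raw")
print("POST", url)
print(json.dumps(body, indent=2))
```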

4. Transform the Data into Delta Tables

Once your data is in the lakehouse, create a new notebook and attach it to the lakehouse you created. Then drag and drop the file into the notebook.

5. Write the data to Delta tables using Spark in the Fabric notebook. Delta tables provide efficient change tracking and management.
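In a Fabric notebook, a `spark` session is predefined, so the conversion can be a few lines of PySpark. A minimal sketch, assuming a CSV file with a header row under the lakehouse's Files section and that relative `Files/...` paths resolve against the notebook's default lakehouse. The file path and the `to_table_name` naming rule are hypothetical. `saveAsTable` with the `delta` format stores the result as a managed table under Tables.

```python
import re


def to_table_name(file_path):
    """Hypothetical naming rule: file name without extension, lowercased,
    with runs of characters other than letters, digits, and '_' replaced by '_'."""
    stem = file_path.rsplit("/", 1)[-1].rsplit(".", 1)[0]
    return re.sub(r"[^0-9a-zA-Z_]+", "_", stem).strip("_").lower()


def load_csv_as_delta(spark, file_path, table_name=None):
    """Read a CSV from the lakehouse and save it as a managed Delta table."""
    table_name = table_name or to_table_name(file_path)
    df = (
        spark.read.format("csv")
        .option("header", "true")       # first row holds column names
        .option("inferSchema", "true")  # let Spark infer column types
        .load(file_path)
    )
    # Overwrite so re-running the cell replaces the table instead of failing
    df.write.format("delta").mode("overwrite").saveAsTable(table_name)
    return table_name


# In the notebook (spark is provided by Fabric):
# load_csv_as_delta(spark, "Files/raw/Sales Data 2023.csv")  # creates table sales_data_2023
```

Using `mode("overwrite")` is a choice for a repeatable demo; use `"append"` if each run should add rows instead.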

6. Build Reports and Analyze the Data

From the table view, click on Lakehouse and select SQL analytics endpoint.

From the SQL analytics endpoint view, select new visual to create a simple visual.

You can create the visuals manually or let Copilot generate them for you.

Clean Up Resources: After completing the task, remember to clean up any temporary or test data.

Conclusion

OneLake aims to give you the most value possible out of a single copy of data without data movement or duplication. You no longer need to copy data just to use it with another engine or to break down silos so you can analyze the data with data from other sources.

Further Guides