Category: Microsoft

Streamline SharePoint Governance with Site Lifecycle Management

Site Lifecycle Management Policies in SharePoint Advanced Management

SharePoint is a powerful platform for collaboration and content management, so it is not surprising that millions of people use it daily to perform their tasks. A good governance plan is necessary to manage this growth. While most organizations have some basic policies and procedures for creating, maintaining, and decommissioning sites, it has always been a challenge to find inactive sites that can be identified for deletion. This is especially important now that organizations are starting to deploy Microsoft 365 Copilot, which makes cleaning up outdated data more important than ever.

Site lifecycle management in SharePoint Advanced Management can help organizations to easily track and inform site owners when their site is not active, and allow them to take the appropriate steps to manage their content.

To use site lifecycle management, you must first create an inactive site policy. This will allow you to create rules that define when a site is considered inactive and which sites are included in the policy, and to manually exclude sites as needed.

The policy can be adjusted to target sites based on how they were created and on the type of site, such as group-connected sites or communication sites. This will enable administrators to apply different rules based on how the site is used. For example, you can have a stricter policy for classic sites by setting the site policy to consider a site inactive sooner than others.

Administrators can also use these policies to target only sites created by users and exclude anything created in the SharePoint admin center or via PowerShell.

Once the policy is configured, site owners will receive an email notification asking them to certify that the site is active or providing them information on how to delete the site.

Administrators can access a report of inactive sites in the portal, which is in CSV format. This format can help with further actions such as deleting the sites or sending additional messages to the site owners.
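As a rough illustration of the "further actions" a CSV report makes possible, the sketch below filters a report by last activity date and groups the stale sites by owner. The column names, URLs, and sample rows are hypothetical; the actual export from the admin center may use different headers.

```python
import csv
import io
from datetime import date

# Hypothetical sample of an exported report; real column names may differ.
SAMPLE_REPORT = """Site name,URL,Template,Last activity date,Site owner
Project Apollo,https://contoso.sharepoint.com/sites/apollo,Team site,2023-01-15,alex@contoso.com
HR News,https://contoso.sharepoint.com/sites/hrnews,Communication site,2024-02-01,dana@contoso.com
Old Wiki,https://contoso.sharepoint.com/sites/oldwiki,Classic site,2022-06-30,alex@contoso.com
"""


def sites_inactive_since(report_text, cutoff):
    """Return the report rows whose last activity is before the cutoff date."""
    reader = csv.DictReader(io.StringIO(report_text))
    return [
        row for row in reader
        if date.fromisoformat(row["Last activity date"]) < cutoff
    ]


def group_by_owner(rows):
    """Map each site owner to the list of stale site URLs they own."""
    owners = {}
    for row in rows:
        owners.setdefault(row["Site owner"], []).append(row["URL"])
    return owners


stale = sites_inactive_since(SAMPLE_REPORT, date(2023, 6, 1))
by_owner = group_by_owner(stale)
for owner, urls in by_owner.items():
    print(owner, urls)
```

Grouping by owner makes it easy to send one follow-up message per person rather than one per site.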

SharePoint Advanced Management provides a powerful tool for managing site lifecycles through its site lifecycle policies. These policies allow administrators to easily track inactive sites, inform site owners, and take appropriate actions to manage their content. By utilizing these policies, organizations can ensure that their SharePoint environment remains clean and up-to-date, improving overall efficiency and productivity.

Review the full details of site lifecycle policies and everything that’s available with SharePoint Advanced Management in the links below:

Manage site lifecycle policies – SharePoint in Microsoft 365 | Microsoft Learn

Microsoft Tech Community – Latest Blogs –Read More

Armchair Architects: Large Language Models (LLMs) & Vector Databases

David Blank-Edelman and our armchair architects Uli Homann and Eric Charran will be focusing on large language models (LLMs) and vector databases and their role in fueling AI, ML, and LLMs.

What are vector databases?

Eric defines vector databases as a way to store meaningful information about multi-dimensional aspects of data in the form of vectors, which are arrays of numbers. In other respects, they work very much like a traditional relational database system.

What’s interesting about vector databases is that they help us solve different types of queries. One type of query is the “nearest neighbor” query. For example, if Spotify knows that Eric Charran loves Def Leppard and he loves one song in particular, which other songs are most similar, based on the dimensions Spotify tracks, so that it can recommend them? It answers that question simply by using the numerical distance between the vectors.
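A minimal sketch of a nearest neighbor query using plain Euclidean distance is shown here. The song names and the three-dimensional vectors are invented for illustration; a real system would use many more dimensions and usually an approximate index rather than a full scan.

```python
import math

# Hypothetical song vectors; each dimension could stand for something
# like tempo, loudness, or how guitar-heavy the track is.
SONGS = {
    "favorite_song": [0.80, 0.90, 0.95],
    "rock_song_a": [0.78, 0.88, 0.90],
    "rock_song_b": [0.75, 0.85, 0.85],
    "piano_piece": [0.10, 0.15, 0.00],
}


def euclidean(a, b):
    """Straight-line distance between two equal-length vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def nearest(query_title, songs, k=2):
    """Return the k titles whose vectors are closest to the query song."""
    query = songs[query_title]
    distances = [
        (title, euclidean(query, vector))
        for title, vector in songs.items()
        if title != query_title
    ]
    distances.sort(key=lambda pair: pair[1])
    return [title for title, _ in distances[:k]]


recommendations = nearest("favorite_song", SONGS)
print(recommendations)
```

Swapping `euclidean` for cosine similarity is a common variation when only the direction of the vectors matters, not their length.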

Uli added that vector databases in the context of AI effectively take text and convert it into these numerical representations. In the PostgreSQL community, for example, the PostgreSQL teams have already added a plug-in that lets you take any text field, turn it into a vector, and then embed that vector into an LLM.

Vectors have been around for a very long time, as they are part of the neural network model; at the end of the day, those models are vectors as well. What is new is that this is now data specific, and it is not just databases: while databases will be prevalent, you will see search systems also expose their search index as vectors.

Azure Cognitive Search for example does that and Uli theorizes that other search systems do that as well. You can take that index and make it part, for example, of an OpenAI system or Bard or whatever AI system you like.

Vector databases are one way of implementing an AI system; the other method is embedding.

Vector Databases and Natural Language Processing (NLP)

Let’s look at how vector databases are used in the real world, starting with NLP, where embedding is used. One use case is taking word embeddings and sentence embeddings and making them numeric so that LLMs can include them in the corpus of information used for training. Another use case is the “nearest neighbor” example from earlier.

If you recall, the nearest neighbor question is: given this particular item, object, or input, what are the things closest to it or farthest from it? This can include image and video retrieval, where you take unstructured data and vectorize it so that you can find it, surface it, and run the comparisons that matter. It can also include anomaly detection, geospatial data, and machine learning.

What does embedding mean in the context of LLMs?

LLMs are trained primarily on the Internet, so if you’re looking at Bard or OpenAI, they get a copy of the Internet as the corpus of knowledge. Conceptually, that corpus is vectorized and put into the LLM.

Now that’s a great set of knowledge, and if you use ChatGPT or Bing Chat or something similar, you will effectively access that Internet. This is great, but most of these LLMs are static. For example, the OpenAI models got compiled sometime in 2021. If you ask the model without any helper about an event in 2022, it won’t know because it got compiled, conceptually speaking, with knowledge that didn’t include 2022 events.

So now what happens is you bring in models from the Internet, for example, that effectively allow these LLMs to understand “oh, there is something beyond what I already know” and bring it in. This scenario would apply for example in an internet search.

If you’re an enterprise, you care about the global knowledge, but you also want your enterprise’s specific knowledge to be part of this search, so that if somebody like Eric is looking for specific things in his new company, the company’s knowledge is available to him as well. That’s what’s called data grounding: you ground the model with the data that your enterprise has and expand the knowledge, and embedding is one technique for doing that.

Embedding simply says, take this vector of knowledge and fold it into your larger model so that every time you run a query, this embedding will be part of the query that the system evaluates before it responds to you. The way that Eric thinks about it is that the vector database stores the numerical representation of a concept found within a corpus of information, such as a web page on the Internet, and what it allows you to do is to link nearby concepts together.

That’s how an LLM, if it’s trained on these vectors and embeddings, really understands concepts. It’s the vectorization of semantic concepts, and the distance equation between them then allows the model to stitch these things together and respond accordingly.

Using One or Multi-shot training of an LLM

LLM teams now have the technology and tools to make it easy to bring vector stores and vector databases into your models through embeddings. A tip is to make sure you use the right tooling to help you. Before you do that, however, you can use prompt engineering to feed example data of what you’re looking for into the model. Part of prompt engineering is what’s called one-shot or multi-shot training. As part of the prompt you’re saying, “I’m expecting this kind of output.”

Then the system takes that into account and says “ah, that’s what you’re looking for” and responds in kind. You can feed it quite a lot of sample data to show it what you want it to look at. That’s obviously far cheaper than doing embeddings and other things because it’s part of the prompt, and it should therefore always be considered first before you go into embeddings.

Corporations will end up using embeddings, but you should start with one-shot and multi-shot training because there is a lot of knowledge in those models that can be coaxed out if you give them the right prompt.
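To make the idea concrete, here is a small sketch of assembling a multi-shot prompt as plain text. The `Input:`/`Output:` layout, the function name, and the sample messages are assumptions chosen for illustration; no particular model or API is implied.

```python
def build_few_shot_prompt(instruction, examples, new_input):
    """Assemble a prompt that shows worked examples before the real input."""
    lines = [instruction, ""]
    for example_input, example_output in examples:
        lines.append(f"Input: {example_input}")
        lines.append(f"Output: {example_output}")
        lines.append("")
    # The final block is left open so the model completes the output.
    lines.append(f"Input: {new_input}")
    lines.append("Output:")
    return "\n".join(lines)


# Hypothetical examples that tell the model what kind of answer is expected.
EXAMPLES = [
    ("The claim was paid within two days.", "positive"),
    ("I have been waiting six weeks for a reply.", "negative"),
]

prompt = build_few_shot_prompt(
    "Classify the sentiment of each customer message.",
    EXAMPLES,
    "Thanks for the quick help!",
)
print(prompt)
```

With a single example in the list this is a one-shot prompt; adding more examples makes it multi-shot, at the cost of a longer prompt.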

LLM Fine Tuning

Fine tuning is the way in which you take a model and you try to develop a fit for purpose data set. Whether it’s a pre-compiled model that you’ve downloaded or used or have already trained, you engage in additional training loops in order for it to train on a fit for purpose data set so that you can basically tune it to respond in the ways in which you intend for it to respond.

The fine tuning element is to adjust the model’s parameters based on iterative training loops around purpose-driven data sets. The key part is where you bring specific data sets, and you take a layer of the model and train it. You are adding more constraints to the general-purpose model, and it’s using your data to affect the training itself for the specific domain you are in, for example healthcare or industrial automation. Fine tuning also helps a great deal with hallucinations because you tell the system what it needs to pay attention to, and it will effectively adapt and be more precise.

Limitations of LLMs and One shot or Multi-shot Training

There are two things that large language models are really bad at. One is math, so don’t ask it to do calculus for you; that’s not going to work. The second, as of today, is that you cannot point an LLM at a structured database and have the system automatically understand the schema and the data and produce good responses. There is still much more work involved.

The state of the art right now is that you effectively build shims or workarounds: you write code that you can then integrate into the prompt. For example, OpenAI has a way for you to call a function inside your prompt, and that function can, for example, bring back relational or structured data.

From the one shot or multi-shot training, you can take the result set, which cannot be too large, and feed it into the prompt. There is also pipeline based programming, which is explained in this scenario.

You are an insurance company.

David is a customer of the insurance company and would like to understand his claim status.

You go to the website and type in the chat, and the first thing the system needs to know is: who is David? The CRM system knows who David is, what insurance policies he has, what claims are open, and so on. That is all structured information from the CRM system and maybe a claims management system.

The first phase is to parse the language, the text that David entered using GPT for example, and pull out the information that’s relevant and then feed it through structured API calls.

You get the result sets back.

You then create the prompt that you really want to use for the response using prompt engineering with the one and multi-shot training.

Then the system generates the response that you’re looking for in terms of “Hi, David, great to see you again here’s the status of the claim.”

At this point you have used the OpenAI model twice, not just once.

In summary, you use it first to understand the language and extract what you need to call the structured APIs and then you feed the response from the structured system back into the prompt so that it generates the response you’re looking for.
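The two-pass pipeline described above can be sketched as follows. Everything here is a stand-in: the regular expression plays the role of the first LLM call, the `CLAIMS` dictionary plays the role of the CRM and claims systems, and `fake_generate` plays the role of the second LLM call. None of these names come from a real API.

```python
import re

# Hypothetical stand-in for the structured CRM / claims management data.
CLAIMS = {"david": [{"claim_id": "C-1001", "status": "in review"}]}


def extract_customer_name(chat_text):
    """Stand-in for LLM call 1: pull the customer name out of free text."""
    match = re.search(r"my name is (\w+)", chat_text, re.IGNORECASE)
    return match.group(1) if match else None


def lookup_claims(customer_name):
    """Stand-in for the structured API call against the claims system."""
    return CLAIMS.get(customer_name.lower(), [])


def build_response_prompt(customer_name, claims):
    """Fold the structured result set into a one-shot prompt."""
    claim_lines = "\n".join(f"- {c['claim_id']}: {c['status']}" for c in claims)
    return (
        "You are a friendly insurance assistant.\n"
        "Example reply: Hi Sam, great to see you again. "
        "Your claim C-0001 is approved.\n"
        f"Customer: {customer_name}\n"
        f"Open claims:\n{claim_lines}\n"
        "Write the reply:"
    )


def handle_chat(chat_text, llm_extract, llm_generate):
    """Run the pipeline: parse text, call structured API, prompt, respond."""
    name = llm_extract(chat_text)
    if name is None:
        return "Could you tell me your name so I can look up your claim?"
    claims = lookup_claims(name)
    return llm_generate(build_response_prompt(name, claims))


prompts_seen = []


def fake_generate(prompt):
    """Stand-in for LLM call 2; records the prompt and returns canned text."""
    prompts_seen.append(prompt)
    return "Hi David, great to see you again. Claim C-1001 is in review."


reply = handle_chat(
    "Hi, my name is David and I want my claim status",
    extract_customer_name,
    fake_generate,
)
print(reply)
```

Passing the two model calls in as functions keeps the structured lookup in ordinary code, which is where the result set size can be checked before it goes into the prompt.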

Increased Adoption of Vector Indexes

Eric raised a question that architects face: how do I figure out whether I need a dedicated vector database, or can I use vector search indexes instead? Another question may be how to build a system that helps LLM developers be better or more efficient at their job.

Eric thinks that we’re reaching a transition point. Vector databases used to be, in effect, a Relational Database Management System (RDBMS) for vectors, dedicated to answering queries associated with vectors.

He is seeing a lot of the lake house platforms and traditional database management systems adopt vector indexes so that developers don’t have to pick the data up and move it to another specific place, vectorize it and store it. Now there are these components of relational database management systems or lake houses that create vectors on top of where the data lives, so on top of delta tables for example. That’s one architectural consideration and it should make architects happy because the heaviest thing in the world to move is data and architects hate doing it.

Architectural Considerations around Vector Databases

The other architectural consideration is how do you actually arrive at the vectorization? Is it an ETL scheme on write operation? Is there logic associated with the vectorization itself? All of that information is important to consider when you’re trying to create a platform for your organization.

If you’re creating your own foundational models, vectorization and the process by which data becomes vectorized or embedded become very important.

You also have to worry about whether you’re allowed to store specific information in your industry, such as financial services, life sciences, or health. In those cases you may need to scan and tokenize your data before it goes into the vectorization process.

Another consideration for architects is that although vectorization is a key technique, real data, in this case from Microsoft, shows that vectorization alone is not necessarily the answer. Microsoft has seen that a search index plus vectorization is actually faster and more reliable from a response perspective for an OpenAI system than just the vector or just the query of the index.
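The discussion does not say how the search index results and the vector results are combined. One widely used way to merge two ranked lists is reciprocal rank fusion (RRF), sketched here as an assumption rather than as the method behind Microsoft's observation. The document IDs and rankings are invented, and `k=60` is just a commonly used default.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Merge several ranked lists of document IDs into one fused ranking.

    Each document earns 1 / (k + position) from every list it appears in,
    so documents ranked well by both methods rise to the top.
    """
    scores = {}
    for ranking in rankings:
        for position, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + position)
    # Sort by descending score; break ties by ID to keep the order stable.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical results from a keyword search index and a vector search.
keyword_ranking = ["doc_b", "doc_a", "doc_d"]
vector_ranking = ["doc_a", "doc_c", "doc_b"]

fused = reciprocal_rank_fusion([keyword_ranking, vector_ranking])
print(fused)
```

Because RRF only looks at positions, it avoids having to normalize keyword scores and vector distances onto the same scale.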

When developing a solution, you should be much more flexible in this case where you say, “how am I going to go and get this data?” Sometimes it’s a combination of techniques, not just one technique that will work or be most efficient.

For Uli, architecture is about understanding the tools you have and picking the right ones, ideally without looking for black-or-white answers. The world is mostly gray, and combining the right tools, rather than relying on a single tool or technique, produces the right answer.

Resources

Vector search in Azure AI Search

Geospatial data processing and analytics

Microsoft Azure AI Fundamentals: Natural Language Processing

Azure Database for PostgreSQL

Vector DB Lookup tool for flows in Azure AI Studio

Related episodes

Armchair Architects: LLMs & Vector Databases (Part 2)

Watch more episodes in the Armchair Architects Series

Watch more episodes in the Well-Architected Series

Recommended Next Steps

If you’d like to learn more about the general principles prescribed by Microsoft, we recommend Microsoft Cloud Adoption Framework for platform and environment-level guidance and Azure Well-Architected Framework. You can also register for an upcoming workshop led by Azure partners on cloud migration and adoption topics and incorporate click-through labs to ensure effective, pragmatic training.

You can view the full videos below and check out more videos from the Azure Enablement Show.

Microsoft and SAP work together to transform identity for SAP customers

SAP has recently announced its collaboration with Microsoft and advises their SAP Identity Management (IDM) customers to move their identity management scenarios to Microsoft Entra ID as their IDM approaches the end of maintenance. This latest collaboration creates new possibilities for Microsoft Entra and SAP to offer enhanced integration that will support a comprehensive identity and access governance framework.

Microsoft and SAP will deepen our longstanding partnership to combine our unique areas of expertise. We are committed to delivering the best identity management solutions for our customers and users, and we’re honored to partner with SAP on delivering seamless and secure identity management experiences that will support SAP customers’ digital transformation and cloud adoption goals. Over the years we’ve worked together to integrate our products and services, such as Microsoft Azure, Microsoft 365, SAP Cloud Platform, SAP S/4HANA, and SAP SuccessFactors.

Our aim is to help SAP customers with their migration path so they can continue to connect enterprise software and collaboration tools to work and innovate effectively, quickly, and seamlessly.

To learn more about our latest collaboration, read the blog post here.

Irina Nechaeva, General Manager, Identity and Network Access

Learn more about Microsoft Entra:

Related Articles: SAP’s blog - Preparing for SAP Identity Management’s End-of-Maintenance in 2027.

See recent Microsoft Entra blogs

Dive into Microsoft Entra technical documentation

Learn more at Azure Active Directory (Azure AD) rename to Microsoft Entra ID

Join the conversation on the Microsoft Entra discussion space

Learn more about Microsoft Security

How to handle azure data factory lookup activity with more than 5000 records

Hello Experts,

The DataFlow Activity successfully copies data from an Azure Blob Storage .csv file to Dataverse Table Storage. However, an error occurs when performing a Lookup on the Dataverse due to excessive data. This issue is in line with the documentation, which states that the Lookup activity has a limit of 5,000 rows and a maximum size of 4 MB.

Also, there is a workaround mentioned (Microsoft documentation): Design a two-level pipeline where the outer pipeline iterates over an inner pipeline, which retrieves data that doesn’t exceed the maximum rows or size.

How can I do this? Is there a way to define an offset (e.g. only read 1000 rows)

Thanks,

-Sri
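The two-level idea quoted above can be pictured in plain Python. This is only a sketch of the paging logic, not Data Factory configuration: `inner_lookup` stands in for the inner pipeline's Lookup, `outer_pipeline` for the outer loop, and the list of integers for the Dataverse table. Whether a given source supports offset-style paging is an assumption to verify separately.

```python
def page_ranges(total_rows, page_size):
    """Yield (offset, limit) pairs that cover total_rows in chunks."""
    if page_size <= 0:
        raise ValueError("page_size must be positive")
    for offset in range(0, total_rows, page_size):
        yield offset, min(page_size, total_rows - offset)


def inner_lookup(rows, offset, limit):
    """Stand-in for the inner pipeline: fetch one page under the row limit."""
    return rows[offset:offset + limit]


def outer_pipeline(rows, page_size=1000):
    """Stand-in for the outer pipeline: iterate over pages and collect them."""
    collected = []
    for offset, limit in page_ranges(len(rows), page_size):
        collected.extend(inner_lookup(rows, offset, limit))
    return collected


# Hypothetical table with more rows than a single Lookup could return.
table = list(range(12000))
pages = list(page_ranges(len(table), 5000))
result = outer_pipeline(table, page_size=1000)
print(pages, len(result))
```

Keeping the page size well below the 5,000-row limit, as in the 1,000-row example from the question, also leaves headroom for the 4 MB size limit.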

Understanding the Windows Event Log and Event Log Policies

The event log is something that’s been built into Windows Server for decades. It’s one of those meat and potatoes features that we all have a cursory understanding of but rarely think about in depth. The event logs record events that happen on the computer. Examining the events in these logs can help you trace activity, respond to events, and keep your systems secure. Configuring these logs properly can help you manage the logs more efficiently and use the information that they provide more effectively.

We created the video below to explain the different Windows Event Logs and the policies that you can use to control how those logs record and store event data. It’s a topic you’re probably passingly familiar with – and the video provides a summary of what’s in the documentation that you can listen to or watch as a refresher (or introduction) to this core operating system technology.

Windows Server: Event Log and Event Log Policies


Windows 365 Healthcare Virtual Webinar

This is a virtual webinar event series for healthcare focused on Microsoft Windows 365 Cloud PC Cloud Virtualization Desktop solution (a SaaS product).

The HLS team covers a vast majority of healthcare accounts in the US region, from providers, payors, pharma, and health & science, where each of them offers different organization requirements and business blockers.

Our field team has taken the initiative to develop organic content to highlight a solution to some of these roadblocks with the goal of promoting awareness and visibility to business stakeholders.

Background

Windows 365 Cloud PC is a disruptive technology that aims to revolutionize the patient and clinician experience. Healthcare organizations must prioritize consumer experience, embrace patient-centric innovation, improve workforce productivity, and realize the true benefits of AI adoption, while their systems continue to evolve toward interoperable data exchange and a simpler experience when building a Windows ecosystem.

Agenda

We’re excited to present a Windows 365 Cloud PC virtual webinar tailored for healthcare, with in-depth business and technical content, region-scoped business scenarios, sub-domain design considerations (identity, security, data protection, etc.), and region-specific blockers.

Windows 365 Healthcare Virtual Webinar

Agenda

Tuesday

March 12, 2024

Documents

Icons

Session 1

9:00 AM

How to Break Free from Traditional VDI with W365

Speakers: Juan Sifuentes, Jesse Asmond

Teams Webinar session link

LinkedIn webinar event link

HLS Blog session link

YouTube session recording link

Microsoft Forms link

Session 2

10:00 AM

Ultimate guide to Safeguarding your Cloud PC Access

Speakers: Sean McNeill, Erfan Setork

Teams Webinar session link

LinkedIn webinar event link

HLS Blog session link

YouTube session recording link

Microsoft Forms link

Session 3

11:00 AM

Transforming Onboarding with W365 & Viva

Speakers: AJ Goldie, Dan Ramacciotti, Jenn Myers

Partners: Tom Cantrell (Kizan)

Teams Webinar session link

LinkedIn webinar event link

HLS Blog session link

YouTube session recording link

Microsoft Forms link

Session 4

12:00 PM

Empowering Healthcare Payors with W365 and Intune

Speakers: Kevin Bowlin, Lauren Nordmann

Partners: Miellette Mcfarlane, Scott Winslow (Cyclotron)

Teams Webinar session link

LinkedIn webinar event link

HLS Blog session link

YouTube session recording link

Microsoft Forms link

Session 5

1:00 PM

Say goodbye to Windows 10, Embrace NextGen

Speakers: Nicholas Aquino, Dan Ramacciotti

Teams Webinar session link

LinkedIn webinar event link

HLS Blog session link

YouTube session recording link

Microsoft Forms link

Note: The links will be updated to point to the content after the webinar is completed.

We will continue to plan more webinars aimed at helping our healthcare customers. If you want to learn more, be sure to follow these resources:

Windows 365 Healthcare Virtual Webinar Series

Windows 365 Cloud PC Healthcare Blog

Windows 365 Architecture

Windows 365 Management

Windows 365 Cloud PC Healthcare Series

Thank you for stopping by; Juan Sifuentes | CETS | Healthcare.


Join Global Power Platform Bootcamp 2024 on Feb 23-24

The 5th Power Platform Festival, Global Power Platform Bootcamp, is coming up soon on February 23rd and 24th. This time, over 100 local events are planned by the Power Platform community worldwide, with many events scheduled to be led by MVPs, RDs, and Microsoft Learn Student Ambassadors.

We encourage you to explore the details of each event on the following page and seize the opportunity to engage in an event that piques your interest.

GPPB Bootcamps 2024 · Global Power Platform Bootcamp


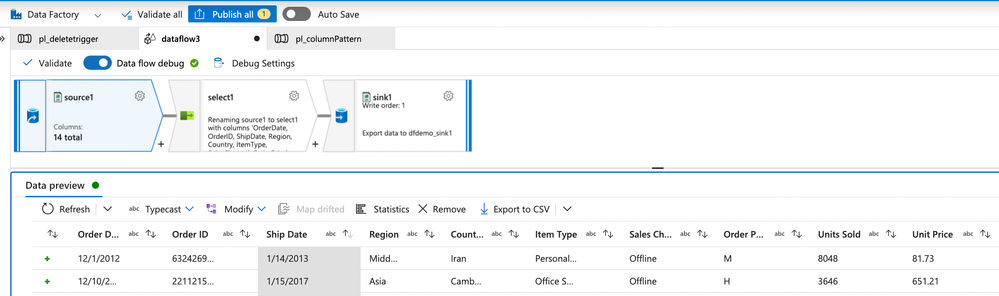

ADF/Synapse Analytics – Replace column names using rule-based mapping in Mapping data flows

In real-world scenarios, the column names from a source might not be uniform: some column names contain spaces and others do not.

For example,

Sales Channel

Item Type

Region

Country

Unit Price

It is a good practice to replace all the spaces in a column name before doing any transformation for easy handling. This also helps with auto mapping, when the sink column names do not come with spaces!

Select transformation in data flow makes it simpler to automatically detect spaces in column names and then remove them for the rest of the dataflow.

Consider the below source, with the given column names.

As we can see, some columns have spaces in their names, while others, like Region and Country, do not.

Using the below configuration in select transformation, we can get rid of the spaces in the column names with a simple expression.

In the Input columns, Click on Add mapping button, and choose Rule-based Mapping.

Then give the below expression:

on Source1’s column: true()

on Name as column: replace($$,' ','')

What does it do?

The true() condition matches every column, and replace($$,' ','') removes every space from each matched column name. Names that contain no spaces are left unchanged.
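As a rough illustration of what the rule does, the Python sketch below applies the same rename to the sample column names listed earlier. It is not data flow expression syntax: `remove_spaces` is a hypothetical helper, and Python's `str.replace` stands in for the data flow `replace` function.

```python
from typing import Dict, List


def remove_spaces(column_names: List[str]) -> Dict[str, str]:
    """Map every column name to the same name with spaces removed."""
    # Every column is matched (like the true() condition); names without
    # spaces come back unchanged because there is nothing to replace.
    return {name: name.replace(" ", "") for name in column_names}


columns = ["Sales Channel", "Item Type", "Region", "Country", "Unit Price"]
mapping = remove_spaces(columns)
for old, new in mapping.items():
    print(f"{old!r} -> {new!r}")
```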

Upon data preview, we get to see the below result,

As we can see, all the columns that had spaces in their names now appear without them.

Without rule-based mapping, one would have to remove the spaces from every column name manually, which would be a nightmare with a large number of columns. Thanks to rule-based mapping!


Democratizing Data: Unleashing Power of AI Analytics with LangChain and Azure OpenAI Services

Artificial Intelligence (AI) is revolutionizing how we analyze and interpret data, signaling a paradigm shift towards more accessible and user-friendly data analytics. Generative AI systems, like LangChain’s Pandas DataFrame agent, are at the heart of this transformation. Using the power of Large Language Models (LLMs) such as GPT-4, these agents make complex data sets understandable to the average person.

In this blog we will explore how LangChain and Azure OpenAI are revolutionizing data analytics. Discover the transformative potential of Generative AI and Large Language Models in making data analytics accessible to everyone, irrespective of their coding expertise or data science background. Dive into the behind-the-scenes magic of the LangChain agent and learn how it simplifies the user experience by dynamically generating Python code for data analysis.

Generative AI: A Game Changer for Data Analytics: Generative AI transforms the field by enabling users to communicate with data in natural language. This pivotal development breaks down barriers, making data analytics accessible to everyone, not just those with coding expertise or data science backgrounds. The result? A more inclusive and expansive understanding of data.

The LangChain Pandas DataFrame Agent: LangChain’s agent uses LLMs trained on vast text corpora to respond to data queries with human-like text. This agent mediates between complex data sources and the user, enabling a seamless and intuitive query process.

A Simplified User Experience: Imagine asking, ‘What were our top-selling products last quarter?’ and receiving an immediate, straightforward answer in natural language. That’s the level of simplicity LangChain brings to the user experience. The agent handles all the heavy lifting, dynamically generating and executing the Python code needed for data analysis and delivering results within seconds, no user coding required.

The Behind-the-Scenes Magic: When users interact with the system, they see only the surface of a deep and complex process. The LLM crafts Python code from user queries, which the LangChain agent executes against the DataFrame. The agent is responsible for running the code, resolving errors, and refining the process to ensure the answers are accurate and easily understood.

Transformative Potential for Data Analysis: This technology empowers subject-matter experts with no programming skills to glean valuable insights from their data. Providing a natural language interface accelerates data-driven decision-making and streamlines exploratory data analysis.

By democratizing analytics, AI assistants are not just providing answers but enabling a future where data-driven knowledge and decisions are within everyone’s reach.

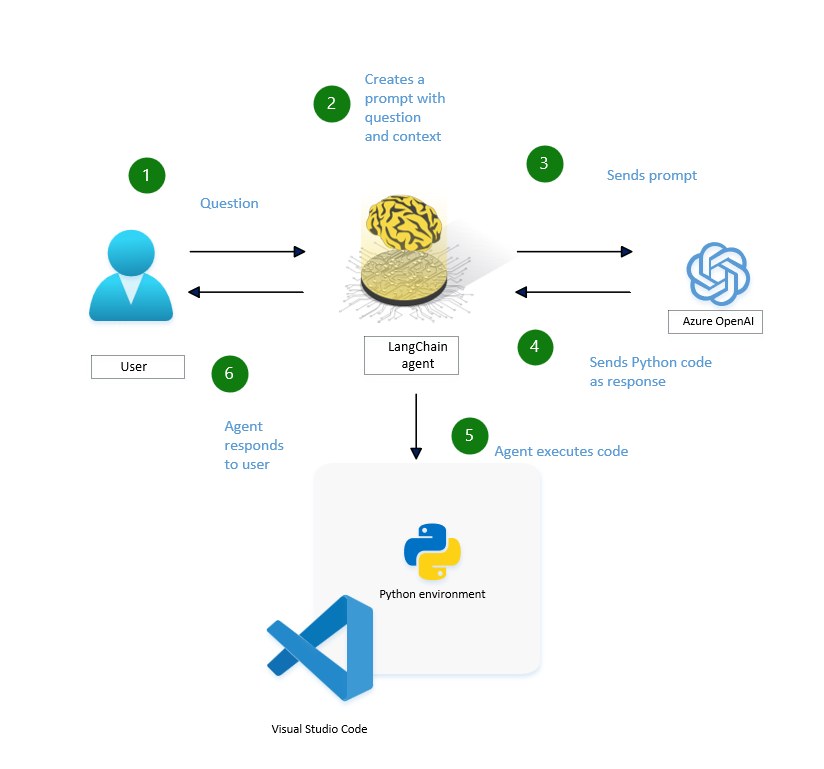

Behind the Scenes: How the LangChain Agent Works

Let’s peel back the curtain to see how the LangChain agent operates when a user sends a query:

1. User Query: It all starts with the user’s question.

2. Creating Contextual Prompts: The LangChain agent interprets the question and forms a contextual prompt, laying the groundwork for a relevant response.

3. Prompt Submission: This prompt is dispatched to Azure’s OpenAI service.

4. Python Code Generation: If necessary, Azure OpenAI service crafts Python code in response to the prompt.

5. Code Execution: The generated Python code is executed in a Python environment, such as Microsoft Fabric, which is capable of processing and outputting the required information. This environment ensures that the Python code runs efficiently and securely, facilitating the seamless transformation of data into actionable insights.

6. User Response: Finally, the user receives a response based on the analysis carried out by the Python code, closing the loop on their initial query.
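The six steps above can be sketched as a toy loop. This is only an illustration of the flow, not LangChain's implementation: `fake_model` is a hypothetical stand-in for the Azure OpenAI call, the data is a plain list of dictionaries rather than a DataFrame, and `eval` is used purely to keep the example short.

```python
from typing import Callable, Dict, List

Row = Dict[str, float]


def fake_model(prompt: str) -> str:
    """Stand-in for the hosted model: returns a Python expression as text."""
    # A real deployment would generate this code from the prompt.
    return "sum(row['units'] for row in rows)"


def answer(question: str, rows: List[Row], model: Callable[[str], str]) -> str:
    # Step 2: build a contextual prompt from the question and the data schema.
    columns = sorted(rows[0].keys())
    prompt = f"Columns: {columns}\nQuestion: {question}\nReply with Python."
    # Steps 3-4: submit the prompt and receive generated code.
    code = model(prompt)
    # Step 5: execute the generated code against the data.
    # eval() on model output is unsafe outside a sandbox; shown only as a toy.
    result = eval(code, {"__builtins__": {}, "sum": sum, "rows": rows})
    # Step 6: turn the result into a response for the user.
    return f"Answer: {result}"


data = [{"units": 10.0}, {"units": 32.5}]
reply = answer("How many units were sold in total?", data, fake_model)
print(reply)
```

Because step 5 runs code that a model wrote, a real setup would normally isolate that execution, which is why the post stresses a secure Python environment.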

1. Azure OpenAI Service

To expand on the AI-driven analytics journey, we must understand the mechanics of setting up the underlying AI services.

Creating an Azure OpenAI resource and deploying a model is a simple process through Azure’s user-friendly interface. Here are the detailed steps complemented by visuals from various sources to guide you through the process:

Step 1: Sign in to Azure Portal

Visit the Azure Portal and sign in with your credentials.

Step 2: Create a Resource Group

Type ‘resource group’ in the search bar at the top of the portal page and select ‘Resource groups’ from the options that appear.

Click ‘Create’ from the toolbar to create a new resource group.

Fill in details such as Subscription, Resource group name, and Region.

Click ‘Review + Create’ and then ‘Create’.

Wait for the resource group to be created, then open it by selecting its name.

Step 3: Create an Azure OpenAI Resource

Inside your resource group, select Create Resources.

Search for ‘Azure OpenAI’ in the Marketplace and select Create Azure OpenAI.

Fill in the details such as Subscription, Resource Group, Region, and Service Name.

Choose the Pricing tier (e.g., Standard S0).

Click through ‘Next’ until you can click ‘Create’.

Step 4: Deploy a Model

Once your Azure OpenAI resource is set up, go to Azure OpenAI Studio.

Select your resource and go to Deployments.

Click on ‘Create new deployment’.

Choose a model (like GPT-4), the model version, and enter a Deployment name.

Click Create.

Step 5: Test Your Model

After deployment, you can test your model in Azure OpenAI Studio by accessing features like Chat and Completions playgrounds.

Go to ‘Chat’ and enter some text in the box.

Step 6: Integrate with Applications

Retrieve your endpoint URL and primary API key from your Resource management page’s Keys and Endpoint section.

Use these details to integrate Azure OpenAI capabilities into your applications.

2. Visual Studio Code

Harness the full potential of data analysis by integrating Jupyter with LangChain and a GPT-4 large language model (LLM) in Visual Studio Code (VS Code). This comprehensive guide will walk you through using Anaconda as your Python kernel, from setup to executing insightful data analyses.

Step 1: Setting up your environment

To work with Python in Jupyter Notebooks, you must activate an Anaconda environment in VS Code, or another Python environment in which you’ve installed the Jupyter package. To select an environment, use Python: Select Interpreter command from the Command Palette (Ctrl+Shift+P).

Step 2: Install Visual Studio Code Extensions

Ensure VS Code is equipped with the Python and Jupyter extensions:

Open VS Code and navigate to the Extensions view (Ctrl+Shift+X).

Search for and install the “Python” and “Jupyter” extensions by Microsoft.

Step 4: Launch Jupyter Notebook in VS Code

Within your activated Anaconda environment, launch VS Code:

Run the `code` command to open VS Code directly from the Anaconda Prompt or terminal.

Create a new Jupyter Notebook in VS Code: Open the Command Palette (Ctrl+Shift+P), type “Jupyter: Create New Blank Notebook”, and select it.

Step 5: Install Required Libraries

In the first cell of your notebook, install the necessary libraries for your project:

langchain: An open-source framework for building applications on top of large language models; it provides the agent used in this project.

sqlalchemy: A SQL toolkit and ORM system for Python, upgraded to the latest version.

openai==0.28: A package to interact with the OpenAI API, installed at a specific version (0.28).

pandas: A data manipulation library in Python.

seaborn: A Python data visualization library.
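The install cell itself is not reproduced in this post, so the following is a minimal sketch of one way to install the packages listed above from Python. `pip_command` and `install` are hypothetical helpers, the `--upgrade` flag is an assumption based on the "upgraded to the latest version" remark, and only the `openai==0.28` pin comes from the text.

```python
import subprocess
import sys
from typing import List

# Package list taken from the descriptions above; only openai is pinned there.
PACKAGES = ["langchain", "sqlalchemy", "openai==0.28", "pandas", "seaborn"]


def pip_command(packages: List[str]) -> List[str]:
    """Build a pip command that targets the interpreter running the notebook."""
    return [sys.executable, "-m", "pip", "install", "--upgrade", *packages]


def install(packages: List[str], dry_run: bool = True) -> List[str]:
    """Return the command; run it only when dry_run is False."""
    command = pip_command(packages)
    if not dry_run:
        subprocess.check_call(command)
    return command


command = install(PACKAGES)  # dry run: nothing is installed
print(" ".join(command))
```

Calling `install(PACKAGES, dry_run=False)` performs the installation. The later import from langchain_experimental suggests that the langchain-experimental package is needed as well; that is an inference, not something the list above states.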

Step 6: Import Libraries and Configure OpenAI

Configure the OpenAI SDK and import other necessary libraries in a new cell:

openai: OpenAI’s API client for interacting with their machine learning models.

AzureChatOpenAI from langchain.chat_models: LangChain’s chat model class for Azure OpenAI chat deployments.

create_pandas_dataframe_agent from langchain_experimental.agents: A function to create an agent that interacts with pandas DataFrames.

pandas as pd: A library for data manipulation.

numpy as np: A library for numerical computations.

seaborn as sns: A library for data visualization.

matplotlib.pyplot as plt: A library for plotting.

Step 7: Configure OpenAI SDK for Azure deployment

Configure OpenAI SDK for Azure deployment in a new cell:

openai.api_key = "xxxxxxxxxxxx": This sets the API key for authentication. This key should be kept secret.

openai.api_type = "azure": This sets the type of API to Azure.

openai.api_base = "https://xxxxxxxx.openai.azure.com/": This sets the base URL for the API.

openai.azure_deployment = "xxxxxx": This sets the specific Azure deployment to interact with.

openai.api_version = "2023-07-01-preview": This sets the API version to use.

gpt4_endpoint = openai.api_base: This creates a variable gpt4_endpoint that stores the base URL of the API.
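Since the API key should be kept secret, one option is to read these values from environment variables instead of typing them into the notebook. The sketch below is an assumption about how that could look, not part of the original setup: the `AZURE_OPENAI_*` variable names and the `load_azure_settings` helper are hypothetical, and the default API version is the one quoted above.

```python
import os
from typing import Dict, Mapping

# Hypothetical variable names; choose whatever your team standardizes on.
REQUIRED = {
    "api_key": "AZURE_OPENAI_API_KEY",
    "api_base": "AZURE_OPENAI_ENDPOINT",
    "azure_deployment": "AZURE_OPENAI_DEPLOYMENT",
}


def load_azure_settings(env: Mapping[str, str] = os.environ) -> Dict[str, str]:
    """Collect the Azure OpenAI settings from environment variables."""
    missing = [name for name in REQUIRED.values() if not env.get(name)]
    if missing:
        raise KeyError(f"Missing environment variables: {', '.join(missing)}")
    settings = {field: env[name] for field, name in REQUIRED.items()}
    settings["api_type"] = "azure"
    settings["api_version"] = env.get("AZURE_OPENAI_API_VERSION", "2023-07-01-preview")
    return settings


# Example with an explicit mapping so the sketch runs without real secrets.
example = load_azure_settings({
    "AZURE_OPENAI_API_KEY": "xxxxxxxxxxxx",
    "AZURE_OPENAI_ENDPOINT": "https://xxxxxxxx.openai.azure.com/",
    "AZURE_OPENAI_DEPLOYMENT": "xxxxxx",
})
```

The returned dictionary can then be assigned to the corresponding `openai` settings, which keeps the key out of the notebook and out of source control.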

Step 8: Load Data, Instantiate the DataFrame Agent, and Analyze

Load your data into a DataFrame, instantiate the DataFrame agent, and begin your analysis with natural language queries:

1. Load your data into a DataFrame and instantiate the DataFrame agent

Using the pandas library and GPT-4, this Python code shows a new way to analyze data by loading a dataset into a DataFrame and adding natural language processing features. By making an agent that merges the analytical skills of pandas with the contextual comprehension of GPT-4, users can ask their data questions in natural language, making complex data operations easier. This combination improves data availability and creates new possibilities for intuitive data discovery.

This Python code does two things:

It employs the pandas library to read a dataset from a CSV file into a DataFrame, which is a two-dimensional data structure with labels. The data is kept in the variable df.

It creates an instance of the GPT-4 model using the AzureChatOpenAI class, LangChain’s wrapper around the Azure OpenAI chat API. The instance is created with several parameters, such as the deployment name, API version, API key, and API type. The created instance is kept in the variable gpt4.

This line of Python code uses a function named create_pandas_dataframe_agent to create an agent. The function takes three arguments:

gpt4: An instance of a GPT-4 model.

df: A pandas DataFrame, which is a two-dimensional labeled data structure.

verbose=True: A boolean that, when set to True, typically means the function will provide more detailed logs or messages.

The function creates an agent that uses the GPT-4 model to process and interact with the data in the DataFrame. The resulting agent is stored in the variable agent.

The following line passes the question “How many rows and how many columns are there?” to the agent. Because `agent` wraps the DataFrame df, it responds with the number of rows and columns in the loaded dataset.
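The cells described above are not reproduced in this post, so here is a hedged reconstruction based only on the description. The keyword arguments follow the older LangChain releases that pair with openai 0.28 and may differ in newer versions; `build_agent`, the `settings` dictionary (with keys `azure_deployment`, `api_version`, `api_key`, `api_base`, `api_type`), and the file name in the usage comment are hypothetical.

```python
from typing import Any, Dict


def build_agent(csv_path: str, settings: Dict[str, str]) -> Any:
    """Load a CSV into a DataFrame and wrap it in a LangChain pandas agent."""
    # Imports are local so the function can be defined without the
    # third-party packages installed; they are needed only when it is called.
    import pandas as pd
    from langchain.chat_models import AzureChatOpenAI
    from langchain_experimental.agents import create_pandas_dataframe_agent

    df = pd.read_csv(csv_path)
    gpt4 = AzureChatOpenAI(
        deployment_name=settings["azure_deployment"],
        openai_api_version=settings["api_version"],
        openai_api_key=settings["api_key"],
        openai_api_base=settings["api_base"],
        openai_api_type=settings["api_type"],
    )
    # Newer langchain_experimental releases may also require an explicit
    # opt-in argument before the agent will execute generated code.
    return create_pandas_dataframe_agent(gpt4, df, verbose=True)


QUESTION = "How many rows and how many columns are there?"
# Usage (requires the packages above and a real deployment):
# agent = build_agent("sales.csv", settings)   # "sales.csv" is a placeholder
# print(agent.run(QUESTION))
```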

2. Analysis with natural language queries

In this analysis, we delve into a dataset utilizing Python and pandas to reveal insights and patterns through natural language queries. Beginning with a preview of the dataset’s structure and contents via the head() function, we set the stage for a deeper exploration into sales data across various dimensions. We then work through tasks such as finding outliers in important financial metrics (‘Units Sold’, ‘Manufacturing Price’, and ‘Sale Price’) using both statistical and visual methods, even when the data format is not ideal. We finish our exploration by creating a Kernel Density Estimate (KDE) plot to visually compare ‘Sales’ and ‘Gross Sales’, showing how seaborn can help us overcome the challenges of a text-based analysis environment and emphasizing the important steps in data cleaning, outlier detection, and distribution comparison.

The result shows the first 5 records of a dataframe called df, which is obtained using the head() function in pandas. This function, by default, gives the first 5 records of the dataframe, offering a glimpse of the data, with columns such as Segment, Country, Product, Discount Band, Units Sold, Manufacturing Price, Sale Price, Gross Sales, Discounts, Sales, COGS, Profit, Date, Month Number, Month Name, and Year. The records present sales information for the product “Carretera” in different segments and countries, describing aspects like sales price, gross sales, discounts, cost of goods sold (COGS), and profit, among others, for the beginning period of 2014.

The following sequence shows how an Agent Executor chain makes a bar chart of how many units of each product were sold. Here is an overview:

First Observation: The agent notices that the ‘Units Sold’ column seems to be in a string format because of the dollar signs and commas. The goal is to change this column to a numeric type so that a bar chart can be made. This chart will have the ‘Product’ column as the x-axis and the numeric ‘Units Sold’ column as the y-axis.

First Action – Check Data Types: The agent runs a command (df.dtypes) to check the data types of columns in the DataFrame. The output shows that the ‘Units Sold’ column is already a float64 type, meaning it’s in numeric format.

Understanding and Next Steps: The agent realizes that the dollar signs and commas seen in a previous display (not shown in the instructions) are probably part of the display format and not the actual data. Since ‘Units Sold’ is already numeric, there’s no need for data type conversion.

Making the Bar Chart: The agent goes ahead to make the bar chart using matplotlib. It groups the data by ‘Product’, adds up the ‘Units Sold’ for each product, and plots this data as a bar chart. The chart is modified with labels for the x-axis (‘Product’), y-axis (‘Units Sold’), and a title (‘Units Sold by Product’).

The instructions describe how the agent uses and tests data types and creates a visual representation of the sales data without needing to convert data types, since the ‘Units Sold’ column is already in the correct numeric format.

The next sequence of Python code executions analyzes the dataset for outliers through the following steps:

Summary Statistics with pandas: The initial step uses df.describe() to calculate summary statistics for the dataset’s numerical columns. This function outputs essential metrics like the mean, standard deviation, quartiles, and max/min values, providing a statistical overview of the data.

Identifying Outliers Conceptually: It introduces the concept of outliers as values that lie far from the rest of the data, typically defined as those more than 1.5 times the interquartile range (IQR) above the 75th percentile or below the 25th percentile.

Attempt at Visual Inspection via matplotlib: A code snippet aiming to plot boxplots for specific columns (‘Units Sold’, ‘Manufacturing Price’, and ‘Sale Price’) encounters a KeyError, suggesting a discrepancy between the specified and actual column names.

Column Name Verification: To rectify the plotting issue, the code df.columns is executed to list all dataframe columns, revealing extra spaces in the column names, which explains the earlier KeyError.
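One way to deal with padded column names like these is to strip the whitespace once, right after loading, so that later lookups can use the clean names. The sketch below shows the idea with the standard library's `csv` module and a tiny made-up sample; the values are illustrative and are not taken from the sales file.

```python
import csv
import io

# Tiny stand-in for the sales file; note the padded header names.
raw = " Units Sold , Sale Price \n1618.5,20.0\n1321.0,20.0\n"

reader = csv.DictReader(io.StringIO(raw))
print(reader.fieldnames)  # the header as read, including the extra spaces

# Normalize the header once so later lookups can use the clean names.
reader.fieldnames = [name.strip() for name in reader.fieldnames]
rows = list(reader)
units_sold = [float(row["Units Sold"]) for row in rows]
```

With pandas, the equivalent normalization is `df.columns = df.columns.str.strip()`.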

Using LangChain and Azure OpenAI, the next steps focus on resolving the KeyError caused by incorrectly formatted column names in the dataframe and on finding possible outliers. Key steps include:

Correcting Column Names: The KeyError was resolved by including leading and trailing spaces in the column names, allowing for successful reference in subsequent analysis.

Outlier Identification: Due to visualization limitations, outliers were identified mathematically by calculating the Interquartile Range (IQR) for ‘ Units Sold ‘, ‘ Manufacturing Price ‘, and ‘ Sale Price ‘. Outlier thresholds were established as 1.5 times the IQR above the 75th percentile.

Outlier Thresholds: Potential outliers were defined as values greater than 4215.3125 for ‘ Units Sold ‘, greater than 617.5 for ‘ Manufacturing Price ‘, and greater than 732.0 for ‘ Sale Price ‘.

Conclusion: The process identified thresholds beyond which values in the specified columns could be considered outliers. Actual outlier identification would require applying these thresholds to the dataset.

This summary outlines the steps taken to correct data referencing issues and the method used to identify potential outliers in the dataset mathematically.
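The IQR rule used for the thresholds can be written in a few lines. This is a minimal sketch with made-up numbers rather than the sales data, and it uses the standard library instead of pandas; `iqr_fences` is a hypothetical helper name.

```python
import statistics
from typing import List, Tuple


def iqr_fences(values: List[float], k: float = 1.5) -> Tuple[float, float]:
    """Return (lower, upper) outlier fences based on the interquartile range."""
    # method="inclusive" interpolates linearly between data points, treating
    # the sample minimum and maximum as the 0th and 100th percentiles.
    q1, _, q3 = statistics.quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    return q1 - k * iqr, q3 + k * iqr


sample = [1, 2, 3, 4, 5, 6, 7, 8, 9, 40]  # illustrative values, not the sales data
lower, upper = iqr_fences(sample)
outliers = [v for v in sample if v < lower or v > upper]
print(lower, upper, outliers)
```

The summary above reports only the upper thresholds; the sketch also returns the lower fence so that unusually small values can be checked in the same way.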

The process described involves preparing and visualizing data to compare the distribution of ‘Sales’ versus ‘Gross Sales’ using a Kernel Density Estimate (KDE) plot. Here are the key points summarized:

Data Type Verification: Initially, it was confirmed that the ‘Sales’ and ‘Gross Sales’ columns were of float64 numerical data type, making them suitable for KDE plotting.

KDE Plot Creation: A KDE plot for ‘Sales’ and ‘Gross Sales’ was generated using the seaborn library. This type of plot is helpful for visually comparing the distribution of two variables.

Visualization Limitation: Due to the text-based nature of the interface, the KDE plot could not be inspected visually within this environment. However, the successful execution of the plotting code indicates that the KDE plot was created without errors.

Conclusion: The summary concludes that the KDE plot comparing ‘Sales’ to ‘Gross Sales’ was successfully generated. To view the plot, the code should be run in a Python environment that supports graphical output, such as Jupyter Notebook or a Python script executed in an IDE with plotting capabilities.

3. Conclusion

Integrating Generative AI systems like LangChain’s Pandas DataFrame agent is revolutionizing data analytics by simplifying user interactions with complex datasets. Powered by models such as GPT-4, these agents enable natural language queries, democratizing analytics and empowering users without coding skills to extract valuable insights.

The LangChain Pandas DataFrame agent acts as a user-friendly interface, bridging the gap between users and complex data sources. It interprets queries, generates Python code, executes analysis processes, and delivers understandable results seamlessly.

Setting up AI services, like Azure OpenAI, is made accessible through intuitive interfaces, facilitating resource creation, model deployment, and application integration. This transformative potential accelerates data-driven decision-making, streamlines analysis, and unlocks insights for users without coding or data science expertise. By democratizing analytics, AI assistants pave the way for a future where data-driven knowledge is accessible to everyone.

4. Resources

Quickstart – Getting started with Azure OpenAI Assistants (Preview) – Azure OpenAI | Microsoft Learn

Fundamentals of Azure OpenAI Service – Training | Microsoft Learn

Python and Data Science Tutorial in Visual Studio Code

Working with Jupyter Notebooks in Visual Studio Code

Introduction to Azure AI Studio (preview)

Get started with prompt flow to develop language model apps in the Azure AI Studio (preview)


SemanticKernel – 📎Chat Service demo running Llama2 LLM locally in Ubuntu

Hi!

Today’s post is a demo on how to interact with a local LLM using Semantic Kernel. In my previous post, I wrote about how to use LM Studio to host a local server. Today we will use ollama in Ubuntu to host the LLM.

Ollama

Ollama is an open-source language model platform designed for local interaction with large language models (LLMs). It provides developers with a convenient way to run LLMs on their own machines, allowing experimentation, fine-tuning, and customization. With Ollama, you can create and execute scripts directly, without relying on external tools. Notable features include Python and JavaScript libraries, integration of vision models, session management, and improved CPU support. Whether you’re a researcher, developer, or enthusiast, Ollama empowers you to explore and harness the capabilities of language models locally.

Run a local inference LLM server using Ollama

In their latest post, the Ollama team describes how to download and run locally a Llama2 model in a docker container, now also supporting the OpenAI API schema for chat calls (see OpenAI Compatibility).

They also describe the necessary steps to run this in a Linux distribution. So, I brought my Ubuntu installation on Windows Subsystem for Linux back to life.

And if you want to know more, here are my Ubuntu specs:

Now time to install ollama, run the server, and start a live journal track in a separate window using the following commands:

# install ollama

curl -fsSL https://ollama.com/install.sh | sh

# run ollama

ollama run llama2

# show journal / logs in live mode

journalctl -u ollama -f

The ollama server is up and running, hosting a llama2 model in the endpoint: http://localhost:11434/v1/chat/completions
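To check the endpoint before wiring it into an application, a request can be built by hand. The sketch below assumes the OpenAI-style chat schema mentioned above and uses only the Python standard library; `build_chat_request` and `send` are hypothetical helper names, and the call that actually contacts the server is left commented out so the snippet runs without one.

```python
import json
import urllib.request
from typing import Dict, List

OLLAMA_URL = "http://localhost:11434/v1/chat/completions"  # endpoint from the post


def build_chat_request(model: str, messages: List[Dict[str, str]]) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request for the local server."""
    body = json.dumps({"model": model, "messages": messages}).encode("utf-8")
    return urllib.request.Request(
        OLLAMA_URL,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send(request: urllib.request.Request) -> str:
    """Send the request and return the first reply (needs a running server)."""
    with urllib.request.urlopen(request) as response:
        payload = json.load(response)
    return payload["choices"][0]["message"]["content"]


request = build_chat_request("llama2", [{"role": "user", "content": "hi, who are you?"}])
# print(send(request))  # uncomment once `ollama run llama2` is serving
```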

Llama 2

In my previous post, I used Phi-2 as the LLM to test with Semantic Kernel. Ollama allows us to use a different set of models, so this time I decided to test Llama 2.

Llama 2 is a family of transformer-based autoregressive causal language models. These models take a sequence of words as input and recursively predict the next word(s).

Here are some key points about Llama 2:

Open Source: Llama 2 is Meta’s open-source large language model (LLM). Unlike some other language models, it is freely available for both research and commercial purposes.

Parameters and Features: Llama 2 comes in many sizes, with 7 billion to 70 billion parameters. It is designed to empower developers and researchers by providing access to state-of-the-art language models.

Applications: Llama 2 can be used for a wide range of applications, including text generation, inference, and fine-tuning. Its versatility makes it valuable for natural language understanding and creative tasks.

Global Support: Llama 2 has garnered support from companies, cloud providers, and researchers worldwide. These supporters appreciate its open approach and the potential it holds for advancing AI innovation.

Source: Conversation with Microsoft Copilot:

Llama. https://llama.meta.com/

Llama 2 is here – get it on Hugging Face. https://huggingface.co/blog/llama2

Download Llama. https://ai.meta.com/resources/models-and-libraries/llama-downloads/

📎 Semantic Kernel and Custom LLMs

If you want to learn more about Semantic Kernel, check the official repository here: https://aka.ms/ebsk

The whole sample can be found in: https://aka.ms/repo-skcustomllm01

In this new iteration, I added a few changes:

Create a shared class library “sk-customllm”. This class implements the Chat Completion Service from Semantic Kernel.

Added a few more fields to the models to work with the OpenAI API specification.

The new solution looks like this one:

This is the sample code of the main program. As you can see, it’s quite simple and runs in an uncomplicated way.

// Copyright (c) 2024

// Author : Bruno Capuano

// Change Log :

// – Sample console application to use llama2 LLM running locally in Ubuntu with Semantic Kernel

//

// The MIT License (MIT)

//

// Permission is hereby granted, free of charge, to any person obtaining a copy

// of this software and associated documentation files (the “Software”), to deal

// in the Software without restriction, including without limitation the rights

// to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

// copies of the Software, and to permit persons to whom the Software is

// furnished to do so, subject to the following conditions:

//

// The above copyright notice and this permission notice shall be included in

// all copies or substantial portions of the Software.

//

// THE SOFTWARE IS PROVIDED “AS IS”, WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

// IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

// FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

// AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

// LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

// OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN

// THE SOFTWARE.

using Microsoft.Extensions.DependencyInjection;

using Microsoft.SemanticKernel;

using Microsoft.SemanticKernel.ChatCompletion;

using sk_customllm;

// llama2 in Ubuntu local in WSL

var ollamaChat = new CustomChatCompletionService();

ollamaChat.ModelUrl = "http://localhost:11434/v1/chat/completions";

ollamaChat.ModelName = "llama2";

// semantic kernel builder

var builder = Kernel.CreateBuilder();

builder.Services.AddKeyedSingleton<IChatCompletionService>("ollamaChat", ollamaChat);

var kernel = builder.Build();

// init chat

var chat = kernel.GetRequiredService<IChatCompletionService>();

var history = new ChatHistory();

history.AddSystemMessage("You are a useful assistant that replies using a funny style and emojis. Your name is Goku.");

history.AddUserMessage("hi, who are you?");

// print response

var result = await chat.GetChatMessageContentsAsync(history);

Console.WriteLine(result[^1].Content);

In the next posts we’ll create a more complex system using local LLMs and Azure OpenAI services to host agents with Semantic Kernel.

Happy coding!

Greetings

Bruno


Windows AI Studio: Getting Started

Generative AI is certainly reaching greater heights every day. With the type of new innovations happening in the field, its really amazing to see the progress in this field. Large Language Models (LLMs) which are one of the foundational factors for this are very much necessary to create any application on Generative AI. OpenAI’s GPT, undoubtedly is one of the leading LLM being used in the today’s world. This can be consumed by making some API calls. The model really doesn’t reside on user’s infrastructure. Instead, it is hosted on a cloud infrastructure and user consumes it as a service. For example, Microsoft provides Azure OpenAI services through its own cloud – Microsoft Azure.

What if we want to use an LLM on our own infrastructure? Is there a way to achieve this? Certainly, there is! With the advent of open-source LLMs, this is a reality today. Hugging Face provides many open-source language models, such as Mistral, Phi-2, Orca, and Llama, to name a few.

One might now wonder how exactly we can run these models on a local machine. Will they be compatible? To answer this, we need to consider several aspects; above all, the infrastructure must be able to meet the model’s needs. An on-premises GPU is definitely a benefit!

In this regard, there are solutions that provide an environment to readily run these models on our own infrastructure. Microsoft recently launched Windows AI Studio, which helps users do exactly that!

Windows AI Studio streamlines the development of generative AI applications by integrating advanced AI development tools and models from Azure AI Studio and other repositories like Hugging Face.

Developers using Windows AI Studio can refine, customize, and deploy cutting-edge Small Language Models (SLMs) for local use within their Windows applications. The platform offers a comprehensive guided workspace setup, complete with a model configuration user interface and step-by-step instructions for fine-tuning popular SLMs (such as Phi) and state-of-the-art models like Llama 2 and Mistral.

Developers can efficiently test their fine-tuned models by utilizing the integrated Prompt Flow and Gradio templates within the workspace of Windows AI Studio.

Now that we know the benefits of Windows AI Studio, let’s get started with installing this powerful tool!

The best part of this tool is that it is available as a Visual Studio Code extension! We can add it directly from the Extensions tab in Visual Studio Code. But before doing that, we need to complete a basic checklist on our machine!

Navigate to Windows AI Studio. This should open a GitHub page with all the required documentation needed for installation. Please make sure to go through this once before proceeding ahead.

Note: Windows AI Studio will run only on NVIDIA GPUs for the preview, so please check your device specifications before installing it. A WSL Ubuntu distro, version 18.04 or greater, should be installed and set as the default before using Windows AI Studio.
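The Ubuntu version requirement in the note above can be checked from inside the distro. This is a minimal sketch, not an official check: ubuntu_version_ok and current_ubuntu_version are hypothetical helper names, and the sketch assumes the distro exposes its version as VERSION_ID in /etc/os-release, as Ubuntu releases do.

```shell
# Hypothetical helper: succeed when an Ubuntu VERSION_ID such as "20.04"
# is 18.04 or newer.
ubuntu_version_ok() {
  local version="$1" major minor
  major="${version%%.*}"   # text before the first dot, e.g. "20"
  minor="${version#*.}"    # text after the first dot, e.g. "04"
  minor="${minor#0}"       # drop one leading zero: "04" becomes "4"
  [ "$major" -gt 18 ] || { [ "$major" -eq 18 ] && [ "${minor:-0}" -ge 4 ]; }
}

# Hypothetical helper: print the VERSION_ID of the running distro.
current_ubuntu_version() {
  ( . /etc/os-release && printf '%s\n' "$VERSION_ID" )
}

# Example:
# ubuntu_version_ok "$(current_ubuntu_version)" && echo "Ubuntu version is new enough"
```

On the Windows side, wsl --list --verbose marks the default distribution with an asterisk, and wsl --set-default followed by the distribution name changes it.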

Let’s begin with the installation of Windows Subsystem for Linux (WSL). This lets developers install a Linux distribution (such as Ubuntu, OpenSUSE, Kali, Debian, Arch Linux, etc.) and use Linux applications, utilities, and Bash command-line tools directly on Windows, unmodified, without the overhead of a traditional virtual machine or dual-boot setup.

Open the Start menu on your machine and search for “Windows PowerShell”. Right-click it and select “Run as administrator”. Click “Yes” in the dialog box, and PowerShell should now launch on your machine.

Once it is ready, type in the following command:

wsl --install

Note: You must be running Windows 10 version 2004 or higher (Build 19041 or higher) or Windows 11 to use this command. If you are using an earlier version, refer to the manual installation instructions.

Now open the Start menu again and search for Microsoft Store. Once Microsoft Store has launched, search for “Ubuntu”.

Download the application. Once this is completed, reboot the machine. The reboot is crucial; otherwise the changes will not take effect.

After rebooting, you should be able to see Ubuntu in the Start menu. Type “Ubuntu” in the Start menu and launch it.

Now enter the following command in the Ubuntu terminal

sudo apt update

Once this is completed, type in the following command

sudo apt upgrade

This will ask for a Y/N confirmation. Type “Y”, and the installation of packages should begin.

Now type in,

#! /bin/bash

Note: Make sure you run sudo apt update and sudo apt upgrade to verify that the system is ready.

It’s time to get CUDA installed now. Let’s begin by adding the necessary repositories:

wget https://developer.download.nvidia.com/compute/cuda/repos/ubuntu2004/x86_64/cuda-ubuntu2004.pin

Next type in the following command,

sudo mv cuda-ubuntu2004.pin /etc/apt/preferences.d/cuda-repository-pin-600

Once this is completed, the next task is to import the keys. To do so, use the following command:

sudo apt-key adv --fetch-keys https://developer.download.nvidia.com/compute/cuda/repos/ubuntu2004/x86_64/7fa2af80.pub

Now type the following command,

sudo add-apt-repository "deb http://developer.download.nvidia.com/compute/cuda/repos/ubuntu2004/x86_64/ /"

Press “Enter” key to continue.

Note: If this doesn’t work, run the command

sudo apt-key adv --fetch-keys https://developer.download.nvidia.com/compute/cuda/repos/ubuntu2004/x86_64/3bf863cc.pub

and then retry the command above.

Now again run the following command

sudo apt update
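The commands above hard-code the ubuntu2004 repository. As a rough sketch, the repository URL can be derived from the Ubuntu version instead. cuda_repo_name and cuda_repo_url are hypothetical helpers, and the sketch assumes other Ubuntu releases follow the same directory naming as the ubuntu2004 path used above, so confirm that the resulting URL exists before relying on it.

```shell
# Base URL copied from the commands above.
CUDA_REPO_BASE="https://developer.download.nvidia.com/compute/cuda/repos"

# Hypothetical helper: turn a VERSION_ID such as "20.04" into the
# repository directory name "ubuntu2004" by removing the dot.
cuda_repo_name() {
  printf 'ubuntu%s\n' "$(printf '%s' "$1" | tr -d '.')"
}

# Hypothetical helper: full x86_64 repository URL for a given VERSION_ID.
# Assumption: the directory layout matches the ubuntu2004 path shown above.
cuda_repo_url() {
  printf '%s/%s/x86_64\n' "$CUDA_REPO_BASE" "$(cuda_repo_name "$1")"
}

# Example:
# cuda_repo_url "20.04"
```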

It’s now time to finally install CUDA! Let’s do it.

Use the following command:

sudo apt install cuda

Press “Y” when prompted.

This is going to install the CUDA components and it might take a while to complete the process.

Once the process is completed, run the following command:

sudo reboot

Restart the machine now, and that’s it: we have completed the installation steps. Once the machine has restarted, launch the Ubuntu terminal from the Start menu.
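After the restart, a quick check of whether the GPU tooling is reachable from the Ubuntu terminal can save time later. This is a minimal sketch with hypothetical helper names (report_tool, check_cuda_tools); it only looks the commands up on PATH and does not verify that CUDA itself works.

```shell
# Hypothetical helper: print "<tool>: found" or "<tool>: missing"
# depending on whether the command can be resolved on PATH.
report_tool() {
  if command -v "$1" >/dev/null 2>&1; then
    printf '%s: found\n' "$1"
  else
    printf '%s: missing\n' "$1"
  fi
}

# nvidia-smi reports the GPU and driver; nvcc is the CUDA compiler.
check_cuda_tools() {
  report_tool nvidia-smi
  report_tool nvcc
}

# Example:
# check_cuda_tools
```

A "missing" result for nvcc may only mean that the CUDA bin directory (commonly /usr/local/cuda/bin) has not been added to PATH.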

Now in the Ubuntu terminal, type

code .

This should launch Visual Studio Code. Since this is the first launch, it will collect a few things.

The Visual Studio Code window will then open.

On the activity bar of the Visual Studio Code window, there is an “Extensions” option. Click on it, search for “Windows AI Studio”, and install the extension. Once it is installed, an extra icon appears on the activity bar.

Click on the Windows AI Studio icon and let it run some tasks. After the tasks have run, we can see a tick mark on all of them except one: “VS Code running in a local session. (Remote sessions are currently not supported when running the Windows AI Studio Actions. Please switch to a local session.)”

Note: Some users might see a cross mark on “Conda detected”, which means that conda could not be detected. In such cases, simply click “Setup WSL Environment” and let the process complete. Once it is done, restart Visual Studio Code.

To make it work, the last step is to close the remote connection. To do this, simply click on the session indicator “WSL:Ubuntu” highlighted at the bottom left of the Visual Studio Code window.

Now a dropdown is shown. In the options, select “Close remote connection”.

This will open a local session of VS Code. Now again click on the extension of Windows AI Studio on the activity bar of VS Code.

Note: You need to be signed in to a GitHub account and will be prompted to do so. Log in using your GitHub account.

Finally!! The much-awaited step is here and we have our own Studio which has the Language models to be used readily on the local machine!!

We can currently see four sections:

Model-Fine Tuning

RAG Project – Coming soon

Phi-2 Playground – Coming soon

Windows optimized models

For now, I will choose “Model fine tuning”. In the given form, enter a title in the Project Name section, choose the project location, and select a model (in this case I have used Phi-2). Once this is done, the “Configure Project” button is enabled and the project is created!

It takes a while to load the project settings. Once it is done, select the model inference settings, fine-tune settings, and data settings.

Once these are done, click on Generate Project.

Provide the Hugging Face token when prompted.

Once it is completed, relaunch the window in the workspace.

The README file guides you through the project details.

That’s how an LLM can be made ready to serve our purposes on our local machines! Windows AI Studio is a great tool with some great capabilities.


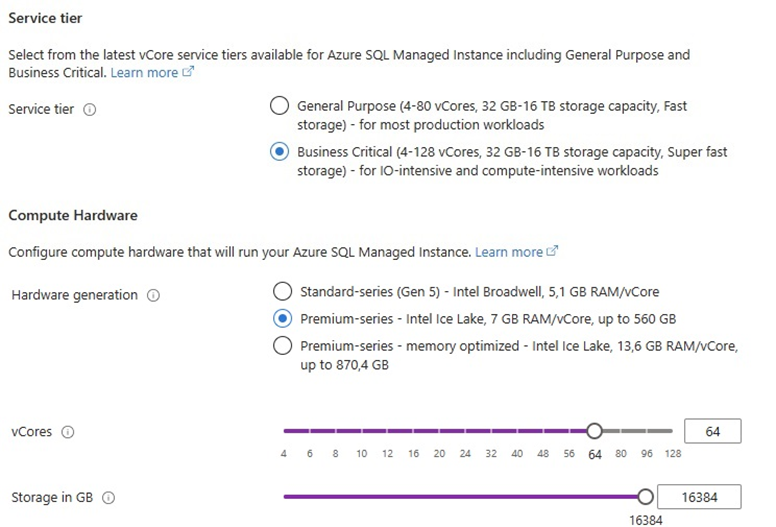

16 TB of storage is now available for Premium-series in SQL Managed Instance Business Critical

Previously, to unlock 16 TB of storage in SQL MI Business Critical, customers had to opt for the memory optimized premium-series hardware generation, but not anymore; we are happy to highlight that SQL MI Business Critical now offers 16 TB for both premium-series and premium-series memory optimized hardware generations.

What is new?

16 TB of storage used to be exclusive to the memory optimized premium-series hardware. However, not all workloads require that amount of memory. With this improvement, you can use premium-series hardware and still have the benefit of 16 TB of storage.

Why is this important?

This is another opportunity to optimize your spending if your workload does not require the throughput and the amount of memory of the premium-series memory optimized hardware.

For example, in the West Europe region, this can save you 18% of your monthly computational cost:

Regional support for Premium-series hardware with 16 TB storage

This improvement is available in most Azure regions. The list of regions can be found here.

Summary

This improvement in Azure SQL Managed Instance Business Critical offers you significant cost savings if your workload needs large storage but is not memory heavy. If you’re still new to Azure SQL Managed Instance, now is a great time to get started and take Azure SQL Managed Instance for a spin!

Next steps:

Get started with SQL Managed Instance with our Quick Start reference guide.

Learn more about the latest innovation in Azure SQL Managed Instance.

Try SQL MI free of charge for the first 12 months.


New on Azure Marketplace: February 8-14, 2024

We continue to expand the Azure Marketplace ecosystem. For this volume, 148 new offers successfully met the onboarding criteria and went live. See details of the new offers below:

Get it now in our marketplace

Account Based Marketing: The Growth Lab’s programmatic Account Based Marketing service uses automation and a human touch to create personalized campaigns for high-value accounts. The approach is efficient and ensures resources are invested where they’re most likely to pay off. The service guides businesses through every step, from identifying prime targets to engaging them with dynamic campaign content.

Atlan – Active Metadata Platform: Atlan is an active metadata platform designed for data teams, providing a collaborative workspace and creating a single source of truth. It integrates with popular tools like Snowflake, Redshift, and Looker and is used by companies like WeWork, Ralph Lauren, and Plaid. Key features include data catalog and discovery and data lineage.

Cobol on Debian 11: This reliable and consistent platform for developing and deploying Cobol applications on Microsoft Azure comes pre-configured with all the necessary tools and libraries, making it easy to get started with programming and coding. Apps4Rent offers support for deploying Cobol programming on Linux across different OSes on Azure. Key features include faster deployments, stability and reliability, and high-level programming.

Cobol on Red Hat Enterprise Linux 8.7: This reliable and consistent platform for developing and deploying Cobol applications on Microsoft Azure comes pre-configured with all the necessary tools and libraries, making it easy to get started with programming and coding. Apps4Rent offers support for deploying Cobol programming on Linux across different OSes on Azure. Key features include faster deployments, stability and reliability, and high-level programming.

Consul Platform on Debian 10: Consul Platform by HashiCorp is a suite of tools for service networking that enables secure connection, management, and monitoring of services across any environment. It offers real-time insights into service availability, allowing automated routing decisions based on health status for reliable and uninterrupted application performance. Art Group provides preconfigured and up-to-date images of applications according to industry standards.