Category: Microsoft

Microsoft Entra’s Top 50 Features of 2023

To kick off 2024, we’re revisiting the top features delivered in Microsoft Entra over the last calendar year. We served thousands of customers to verify all types of identities and secure, manage, and govern their access to any resource with multicloud identity and network access products. We introduced the latest wave of advancements from Microsoft Entra, expanding into Security Service Edge (SSE), Artificial Intelligence (AI), and accelerating innovations in other key areas like Decentralized Identities, multicloud, and non-human identities, delivering more than a hundred features. Below, you’ll find the top fifty features influenced by customer feedback and market needs. For a comprehensive list, please refer to the release notes. By adopting these latest identity innovations, you can better protect your digital estate and get more out of your security investments.

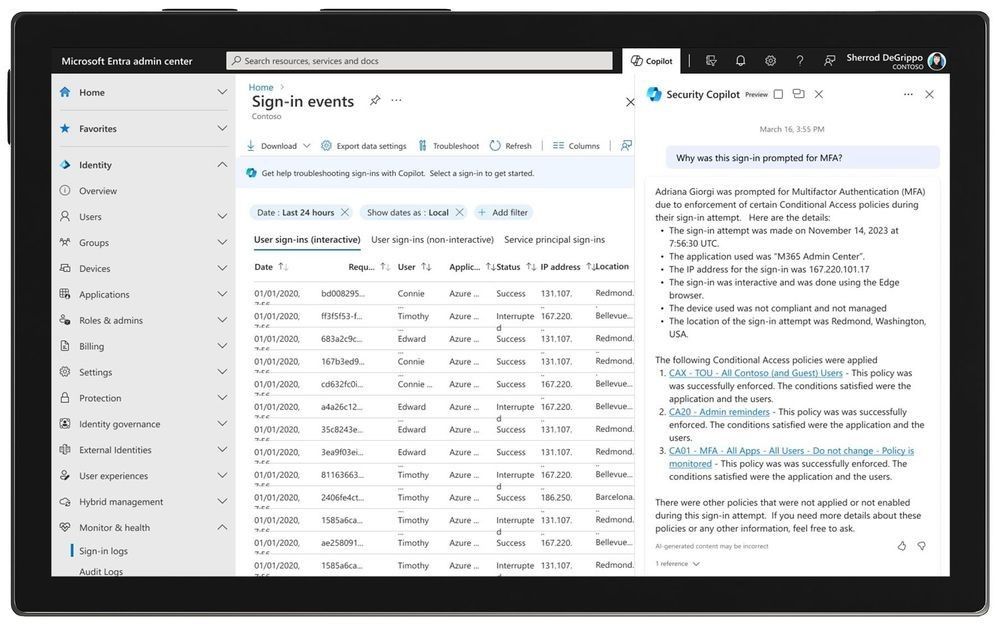

Secure Access in the Era of AI – at Microsoft Ignite 2023, we announced that Microsoft Security Copilot is coming to Microsoft Entra (in Private Preview) to help you automate common tasks, troubleshoot faster, interpret complex policies, and design workflows. This inclusion is only one component of maintaining strong and consistent identity security. Microsoft Entra’s breadth of solutions protects employees, frontline workers, customers, and partners, as well as apps, devices, and workloads across multicloud and hybrid environments.

In the Microsoft Entra admin center, Microsoft Security Copilot explains in simple, conversational language what a Conditional Access policy does or why multi-factor authentication (MFA) was triggered.

Upcoming support for passkeys, offering a phishing-resistant alternative to physical FIDO2 security keys for our enterprise and government customers.

Secure by default, through the auto-rollout of Microsoft Entra Conditional Access policies protecting tenants based on risk signals, licensing, and usage.

Conditional Access enforcement of token protection for sign-in sessions (Public Preview) to combat token theft and replay attacks.

Conditional Access for protected actions, enabling organizations to safeguard critical administrative operations such as altering Conditional Access policies, adding credentials to an application, or changing federation trust settings.

Conditional Access overview dashboard offering a comprehensive view of Conditional Access posture and templates providing a convenient method to deploy new policies aligned with Microsoft recommendations.

Conditional Access authentication strength to enable organizations to tailor authentication method requirements based on the user’s sign-in risk level or the sensitivity of the accessed resource, empowering those in highly regulated industries or with strict compliance requirements.

Implement Zero Trust access control by invalidating tokens that violate your IP-based location policies and prevent token replay attacks in near real-time through the strict enforcement of location policies (Public Preview).

The new Entra ID Protection dashboard (Public Preview) is a central hub aiding identity admins and IT practitioners in understanding security posture and implementing effective protections against identity compromises.

New Entra ID Protection signals: verified threat actor ID and attacker in the middle, to help protect organizations from malicious actors and activities.

Entra ID Protection now offers real-time threat intelligence detections to apply risk-based Conditional Access policies to protect identities.

Manage the permissions of identities across a multicloud infrastructure – Improve the security posture of your identities by managing their permissions and enforcing the principle of least privilege.

Leverage Entra ID Protection – Allow on-premises password change to reset user risk (Public Preview) to effectively manage user risk in hybrid environments.

Integration of Entra ID Protection with Microsoft 365 Defender to investigate incidents efficiently and effectively, gaining a comprehensive understanding of end-to-end attacks and facilitating a quicker response to identity compromises.

System-preferred authentication for MFA to sign in users with the most secure method they’ve registered and the method that’s enabled by admin policy.

Configure phishing-resistant MFA on mobile without having to provision certificates on the user’s mobile device using certificate-based authentication (CBA) on mobile.

New features and enhancements in certificate-based authentication (CBA) enabling government organizations to comply with Executive Order 14028 requirements and helping customers migrate from Active Directory Federation Services.

Verify your workplace on LinkedIn with Microsoft Entra Verified ID – Start your decentralized identity journey through a managed verifiable credentials service based on open standards.

Converged Authentication Methods – Manage multi-factor authentication (MFA) and self-service password reset (SSPR) in one policy alongside passwordless methods like FIDO2 security keys and certificate-based authentication (CBA).

Secure non-human identities using Microsoft Entra Workload Identities App Health Recommendations.

AD FS Application Migration – Modernize your identity estate through cloud-based identity services, enhanced security, and improved user experience.

Use FIDO2 security keys to sign in to Microsoft Entra ID federated applications on iOS and macOS web browsers.

Configure single sign-on (SSO) for Microsoft Entra ID accounts on macOS, iOS, and iPadOS across all applications using Microsoft Enterprise SSO for Apple Devices.

Configure either the phishing-resistant credential or a traditional password as the authentication method using platform SSO for macOS (Public Preview).

Protect and secure local administrator accounts using Windows Local Administrator Password Solution with Microsoft Entra ID.

Customize your authentication flows with custom claims provider to source claims from external systems.

Microsoft Entra External ID (Public Preview) – Establish more secure digital relationships for external identities, create people-centric experiences, and accelerate development of secure applications.

Personalize and help secure access to any application for customers and partners.

With Microsoft Graph Activity Logs, you can now investigate the complete picture of activity in your tenant: from token requests in sign-in logs, to API request activity (reads, writes, and deletes) in Microsoft Graph Activity Logs, to the resulting resource changes in audit logs.

Restricted management administrative units: Designate specific users, security groups, or devices in your Microsoft Entra ID tenant that you want to protect from modification by tenant-level administrators.

IPv6 support for Microsoft Entra ID, allowing customers to reach Entra services over IPv4, IPv6, or dual-stack endpoints.

Microsoft Entra Permissions Management – Microsoft Defender for Cloud (MDC) integration (Public preview): Consolidate identity and access permission insights with other cloud security information in a single interface.

Microsoft Entra Permissions Management – ServiceNow integration: Allow ServiceNow customers to request time-bound, on-demand permissions for multicloud environments (Azure, AWS, Google Cloud) via the ServiceNow portal.

Gain a centralized view of all identities and their permissions (Public Preview), regardless of the identity provider solutions they’re using.

Safeguard Network Access with Microsoft Entra – in July 2023 we announced two new products: Microsoft Entra Internet Access (Public Preview) and Microsoft Entra Private Access (Public Preview). With Identity and Network Access solutions working together, organizations don’t need to spend time deciding which tool would work better for each app, or how to bridge the policies your identity team created with the policies your networking team created. You can enforce unified adaptive access controls, simplify network access security, and deliver a great user experience anywhere with identity-centric Security Service Edge (SSE) solutions. Secure access to all internet, SaaS, and Microsoft 365 apps and resources with an identity-centric Secure Web Gateway (SWG).

Identity Platform Developer Center: One-stop shop for everything developers need to understand about identity concepts, learn the features of Microsoft Entra External ID, and how best to use the new platform to build awesome consumer-facing applications.

Enhanced company branding – Create a custom look and feel for the default sign-in pages, as well as pages targeting specific browser languages.

Cross-tenant access settings improvements – secure your cross-tenant collaboration scenarios and improve end-user experiences for partners.

Prevent data exfiltration using Tenant Restrictions v2 to secure cross-tenant access.

Lifecycle workflows land within ID Governance – On June 7, 2023, Microsoft Entra ID Governance became generally available and included one of our newest capabilities: Lifecycle Workflows (LCW). Lifecycle Workflows now offers more granular workflow execution auditing, and at any time you can navigate to the Lifecycle Workflows audit logs or the Entra Identity Governance audit logs for extensive information on workflow execution and other workflow management activities. Design workflows to ensure new employees are productive immediately, and that access is removed when employees leave.

Machine Learning-based recommendations for reviewers.

A new Microsoft Entra ID Governance dashboard that pulls information, giving you an at-a-glance view of your current state of Identity Governance, a launch-pad for IGA features, and quick access to compliance reports.

Require independently verified credentials before approving access to confidential resources.

Zero Trust access control – Just-in-time access to privileged roles with PIM for groups.

Enforce security requirements for activation using PIM integration with Conditional Access.

API-driven provisioning (Public Preview) – Enhance employee and partner productivity and help meet compliance and regulatory requirements through robust identity governance.

Automate provisioning and governance of your on-premises applications.

Extending the access lifecycle with your organization-specific processes and business logic.

Assigning access automatically to access packages instead of requiring users to request access.

Configuring Verified ID checks in entitlement management.

Entitlement Management support in Conditional Access.

Identity security is a continuous journey

The identity security market is constantly developing, but so are the threats and risks from malicious actors and hackers and the advancements of AI. To keep up with these changes, organizations need to take an active and comprehensive approach to identity security, working with reliable vendors to offer them the best solutions. Even though 2023 is over, the demand for solutions remains high, and we are eager to reveal what we have planned for 2024.

Best regards,

Shobhit Sahay

Learn more about Microsoft identity:

Related Articles:

5 ways to secure identity and access for 2024

Microsoft named a Leader in 2023 Gartner® Magic Quadrant™ for Access Management for the 7th year

See recent Microsoft Entra blogs

Dive into Microsoft Entra technical documentation

Join the conversation on the Microsoft Entra discussion space and Twitter

Learn more about Microsoft Security

Microsoft Tech Community – Latest Blogs –Read More

Protect faster with Microsoft Defender XDR’s latest UX enhancements

Organizations are facing an unprecedented surge in cyberthreats and a global shortage of security experts, and Security Operations Center (SOC) teams are struggling with managing a rising influx of alerts, incidents, and raw telemetry produced by a variety of security tools to protect endpoints, email, identity, SaaS apps, data, and cloud infrastructure. Balancing the speed of response with the precision required for effective threat detection poses an ongoing challenge for SOC teams in optimizing their operational efficiency.

To help SOC teams protect faster, this week we are excited to share the general availability (GA) of our most recent user experience (UX) enhancements within Microsoft Defender XDR to make our industry-leading XDR platform easier to use than ever. These UX enhancements not only improve efficiency but also deliver an intuitive, smooth experience throughout the incident triage, investigation, and threat hunting processes for the SOC teams.

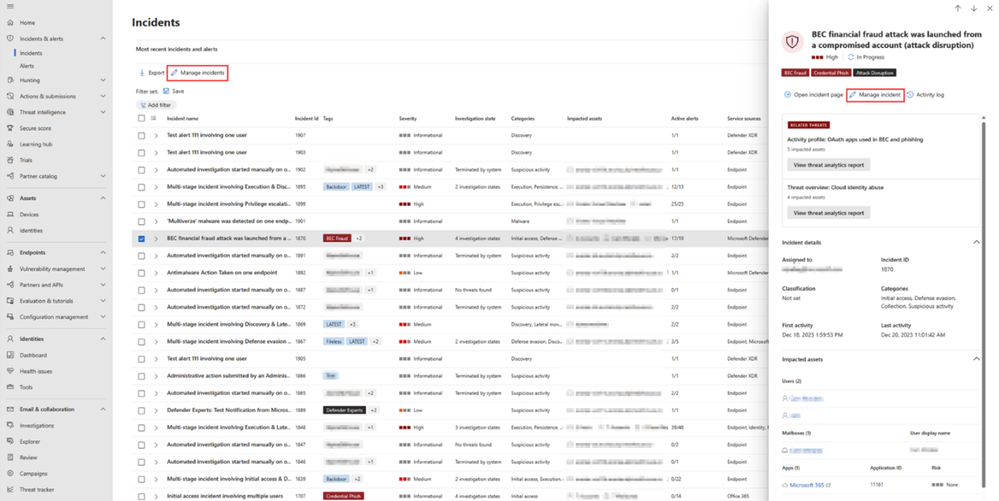

Incident customization

In the Microsoft Defender portal, security analysts can choose which incidents should be prioritized based on several factors such as incident severity, incident status, and more. To provide SOC analysts with a better and more customized incident management experience, we have added new capabilities to the “Manage incident” action of the incident page so they can now assign incidents to security groups and change incident severity manually. Once you have the Incident side panel open, select the “Manage incident” action, and you will be able to assign the incident or change the incident severity manually.

Figure 1: Manage incident action in incident details pane

Figure 2: Manage incident panel

In-page filters for the incident queue

Every second counts in cybersecurity. In a SOC team’s daily work, customization saves time because it helps security analysts swiftly retrieve their preferred filter configurations. Today we are introducing a set of powerful in-page filters that improve the way you customize your incidents queue within Microsoft Defender XDR. Now, you can easily search for specific filters and save your frequently used filter sets to tailor your incident response experience. The new in-page filters provide a user-friendly interface that allows security analysts to efficiently locate and apply the filters they need. This time-saving capability enables security analysts to manage the incident queue more efficiently in a seamless and productive incident management process.

Figure 3: In-page filters

Incident activity log

Gaining context in an easier and more efficient way represents another time-saving opportunity in security operations. Now with the new incident activity log, security analysts can thoroughly document the steps taken when investigating an incident. This not only ensures accurate reporting to management but also fosters seamless collaboration amongst coworkers. An analyst can also access records of comments on the incident made by other analysts.

Moreover, instead of plain text comments, analysts now have rich text comments for incidents. They can apply various formatting options such as bold, italics, underline, bullet points, and more, as well as add links and images to make their comments more expressive and informative. Additionally, the bi-directional synchronization feature with Microsoft Sentinel’s activity log allows SOC teams to benefit from the seamless integration of logs and comments.

Figure 4: Incident activity log and rich comment interface

Go hunt within incident page

The Go hunt feature is now available in the attack story of the incident page. This new feature allows security analysts to access the Advanced hunting function directly from the Incident Graph, providing a well-organized overview of incident-related data all within the same tab. With Go hunt, analysts can quickly gather more data on various entities such as devices, IPs, URLs, and files, as shown in Figure 5. Additionally, they can link advanced hunting query results to a specific incident, as shown in Figure 6, all from the same page.

Figure 5: “Go hunt” action on URL from incident attack story

Figure 6: Advanced hunting query results within incident page

Hunt and investigate from Advanced hunting page

The new query history feature helps SOC analysts to seamlessly resume their work from where they left off. This functionality reduces the risk of losing any unsaved work by automatically retaining security analysts’ recent queries and allows analysts to effortlessly run and modify those queries at their convenience.

Figure 7: Query history in Advanced hunting page

Another enhancement of the Advanced hunting page is the new inline results exploration functionality, which makes it easier to explore results and lets SOC analysts navigate through all the parsed data within the results grid without horizontal scrolling or side panels. SOC analysts can now expand a result record to explore its content within the results grid. In addition, they can expand specific cells of type JSON or array to easily explore their parsed content.

Figure 8: Inline results exploration in Advanced hunting page

Furthermore, SOC analysts can now right-click on any result value in a row to add it as a filter to the existing query or copy the value to the clipboard. This functionality saves valuable time for analysts, allowing them to modify their queries in seconds based on the results, all without navigating away from the results grid.

Figure 9: Right-click context menu for Advanced hunting results

Speed and efficiency are crucial in security operations. The new UX enhancements in Microsoft Defender XDR make our XDR platform more user-friendly than ever before and provide an even more seamless and streamlined experience in incident triage, investigation, and threat hunting for SOC teams. The cumulative impact of each one of these improvements contributes to a significant enhancement in SOC efficiency.

Learn more:

Read our documentation to get started:

Manage incidents

Incidents queue filters

Incident activity log

“Go hunt” from incident attack story

Advanced hunting query history

Advanced hunting results exploration

Visit our websites to learn more about Microsoft Defender XDR and unified security operations platform.

MidDay Café Episode 47 – Rundown on the Latest Copilot Announcements and Implications

This week on MidDay Café, hosts Tyrelle Barnes and Michael Gannotti discuss the latest Microsoft announcements about Copilot. They also talk about the implications and share real-world examples of the impact and value these announcements deliver.

Listen to the Audio podcast version:

Subscribe to the Audio Podcast on Spotify

Subscribe to the Audio Podcast on Apple Podcasts

Subscribe to the Audio Podcast on Google Podcasts

Resources:

Bringing the full power of Copilot to more people and businesses – The Official Microsoft Blog

Expanding Copilot for Microsoft 365 to businesses of all sizes | Microsoft 365 Blog

Thanks for visiting!

Tyrelle Barnes LinkedIn : Michael Gannotti LinkedIn | Twitter

Tell Us What You Think!

Prepare for upcoming TLS 1.3 support for Azure Storage

Azure Storage has started to enable TLS 1.3 support on public HTTPS endpoints across its platform globally to align with security best practices. Azure Storage currently supports TLS 1.0, 1.1 (scheduled for deprecation by November 2024), and TLS 1.2 on public HTTPS endpoints. This blog provides additional guidance on how to prepare for upcoming support for TLS 1.3 for Azure Storage.

TLS 1.3 introduces substantial enhancements compared to its predecessors. TLS 1.3 improvements focus on both performance and security, featuring faster handshakes and a streamlined set of more secure cipher suites, namely TLS_AES_256_GCM_SHA384 and TLS_AES_128_GCM_SHA256. Notably, TLS 1.3 prioritizes Perfect Forward Secrecy (PFS) by eliminating key exchange algorithms that don’t support it.

Clients that use the latest available TLS version will automatically pick TLS 1.3 when it is available. If you need more time to upgrade to TLS 1.3, you can continue to use TLS 1.2 by controlling TLS negotiation through client configuration (see the recommendations section below). Azure Storage will continue to support TLS 1.2 in addition to TLS 1.3.
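To see which protocol version a client actually negotiates with a storage endpoint, a small probe can help. The following is a minimal Python sketch, not part of the original guidance (which targets Java clients): the function names are hypothetical, and the host name you pass in is your own endpoint. It uses only the standard `ssl` and `socket` modules, and optionally caps the client at TLS 1.2 to mimic the short-term workaround described later.

```python
import socket
import ssl
from typing import Optional


def make_client_context(cap_at_tls12: bool = False) -> ssl.SSLContext:
    """Build a client TLS context, optionally capped at TLS 1.2."""
    context = ssl.create_default_context()
    # Refuse anything older than TLS 1.2.
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    if cap_at_tls12:
        # Mimic a client that has not moved to TLS 1.3 yet.
        context.maximum_version = ssl.TLSVersion.TLSv1_2
    return context


def negotiated_tls_version(
    host: str, cap_at_tls12: bool = False, timeout: float = 10.0
) -> Optional[str]:
    """Connect to host:443 and return the negotiated protocol name."""
    context = make_client_context(cap_at_tls12)
    with socket.create_connection((host, 443), timeout=timeout) as raw_sock:
        with context.wrap_socket(raw_sock, server_hostname=host) as tls_sock:
            return tls_sock.version()
```

Calling `negotiated_tls_version("<your-account>.blob.core.windows.net")` with and without `cap_at_tls12=True` shows whether the endpoint offers TLS 1.3 to an uncapped client and still accepts a client limited to TLS 1.2.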

We have outlined below some of the known issues with TLS 1.3 enablement, potential impact and mitigation.

Known Issues, impact and mitigation

Certain Java clients can experience high latencies, timeouts, and connections that hang for extended periods due to a bug in the Java HTTP stack. The issue manifests primarily for applications with high request concurrency. The bugs are [JDK-8293562] and [JDK-8208526].

The major JDK versions with the bug fixes are:

JDK 11 (> 11.0.17)

JDK 17 (> 17.0.6)

JDK 21
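If you manage many hosts, a quick script can flag JDK versions that are not on the list above. This is a rough Python sketch under stated assumptions: the function names are hypothetical, the ">" thresholds are read literally as "strictly newer than", and any major version the list does not mention (including releases after JDK 21) is reported as not listed rather than guessed at.

```python
import re
from typing import Tuple

# Thresholds copied from the list above: JDK 11 newer than 11.0.17,
# JDK 17 newer than 17.0.6, and JDK 21.
FIXED_AFTER = {11: (11, 0, 17), 17: (17, 0, 6)}


def parse_jdk_version(text: str) -> Tuple[int, int, int]:
    """Extract (major, minor, patch) from text such as '17.0.8'."""
    match = re.search(r"(\d+)(?:\.(\d+))?(?:\.(\d+))?", text)
    if match is None:
        raise ValueError(f"no version number found in {text!r}")
    major, minor, patch = (int(part) if part else 0 for part in match.groups())
    return major, minor, patch


def is_listed_as_fixed(version_text: str) -> bool:
    """Return True only if the version matches an entry in the list above."""
    version = parse_jdk_version(version_text)
    major = version[0]
    if major == 21:
        return True
    threshold = FIXED_AFTER.get(major)
    # Majors that are not in the list are treated as "not listed".
    return threshold is not None and version > threshold
```

For example, `is_listed_as_fixed("11.0.20")` returns `True`, while `is_listed_as_fixed("17.0.6")` and `is_listed_as_fixed("1.8.0_352")` return `False`.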

The following categories of clients could be affected while using TLS 1.3:

Clients that run on JDK versions other than JDK versions mentioned above.

Client tools such as WASB and Azure Storage SDK for Java < v12 that run on a JDK version without the fix. (Note: ABFS and Azure Storage SDK for Java > v12 are not impacted.)

Recommendations for mitigation:

Option 1: (Recommended) Upgrade your application to the latest supported JDK versions mentioned above or latest Azure Storage SDK for Java. You can refer to the following link to get the latest recommended SDK versions.

Option 2: (Short-term workaround) We understand it might not always be possible to upgrade to the latest SDK version. While you move your application to the latest SDK version, you can mitigate the issue by capping the client's maximum TLS version at TLS 1.2. There are two ways to accomplish this:

Setting system properties when invoking the Java application:

java -Djdk.tls.client.protocols=TLSv1.2 -Dhttps.protocols=TLSv1.2 -jar …

Setting system properties in code:

System.setProperty("jdk.tls.client.protocols", "TLSv1.2");

System.setProperty("https.protocols", "TLSv1.2");

When your applications are ready to work with TLS 1.3, remember to reset these settings.

Help and Support

If you have questions, get answers from community experts in Microsoft Q&A. If you have a support plan and you need technical help, create a support request:

For Issue type, select Technical.

For Subscription, select your subscription.

For Service, select My services.

For Service type, select Blob Storage.

For Resource, select the Azure resource you are creating a support request for.

For Summary, type a description of your issue.

For Problem type, select Connectivity.

For Problem subtype, select Issues using TLS.

Test your configurations and experience Defender Experts Notifications early

Threat hunting, an integral part of our Defender Experts services, helps our customers by proactively hunting across endpoints, Office 365, cloud applications and identity for emerging cyberthreats. Defender Experts will investigate anything they find and hand off contextual alert information along with remediation instructions for customers to quickly respond through a Defender Experts Notification.

We have released the Sample Defender Experts Notification feature which will enable customers to:

Experience a Defender Experts Notification before our experts send a real one upon detecting malicious activity in their environment.

Test the email notification configuration they have set up for Defender Experts Notifications.

Test the playbooks/rules set up in SIEM/SOC tools for Defender Experts Notifications.

Customers can generate Defender Experts Notifications very easily and quickly through the portal at any time.

After logging in to the portal, navigate to Settings > Defender Experts.

Figure 1. Screenshot of the settings in Microsoft Defender that highlights the Defender Experts general settings option.

Click the Sample notifications option to start generating a sample Defender Experts Notification. Once you click on the ‘Generate a Sample notification’ button, the sample notification is generated in a few minutes.

Figure 2. Screenshot of the Defender Experts section where a customer can generate a sample Defender Experts Notification.

Customers can then view the last five sample Defender Experts Notifications.

Figure 3. Screenshot showing a list of the last five generated Defender Experts Notifications.

Click one of the test notifications to open an instance of a sample notification.

Figure 4. Screenshot of a sample Defender Experts Notification.

Click on the Summary tab and then the Read more button to open the Defender Experts Notification contents which include an executive summary and recommendations.

Figure 5. Screenshot of the summary section of a sample Defender Experts Notification.

The detailed documentation for generating sample Defender Experts Notifications can be found here. To learn more about Defender Experts Notifications, visit Receive Defender Experts Notifications. To learn how to configure email notifications, visit Set up Defender Experts email notifications.

To get a deeper understanding of the threats our Defender Experts team hunts for, visit https://aka.ms/ThreatHunting101.

To learn more about our services, visit the Microsoft Defender Experts for XDR web page and Microsoft Defender Experts for Hunting web page.

Leveraging Azure Event Hub, Microsoft Fabric, and Power BI for Real-Time Data Analytics

Real-time data analytics is the process of collecting, analyzing, and using data in real time to make informed decisions. It involves capturing data as it is generated, processing it immediately, and presenting actionable insights to users without any delay. Real-time data analytics can help you improve customer satisfaction, business intelligence, business development, fraud detection, and efficiency.

In this blog, you will learn how to use Azure Event Hub, Microsoft Fabric, and Power BI to create a scalable and efficient solution for real-time data. You will also see how to use a Python script to simulate a device that sends data to Azure Event Hub every time you run it.

Prerequisites:

Azure account

Microsoft Fabric license

Visual Studio Code

Python

1. Create a resource group

Create a resource group: A resource group is a logical collection of Azure resources.

Sign in to the Azure portal.

In the left navigation, select Resource groups, and then select Create.

For Subscription, select the name of the Azure subscription in which you want to create the resource group.

Type a unique name for the resource group. The system immediately checks to see if the name is available in the currently selected Azure subscription.

Select a region for the resource group.

Select Review + Create. On the Review + Create page, select Create.

2. Create an Event Hubs namespace

An Event Hubs namespace provides a unique scoping container, in which you create one or more event hubs.

In the Azure portal, type Event Hubs in the Marketplace search box.

Select Create on the toolbar.

On the Create namespace page, take the following steps:

Select the subscription in which you want to create the namespace.

Select the resource group you created in the previous step.

Enter a name for the namespace. The system immediately checks to see if the name is available.

Select a location for the namespace.

Choose Basic for the pricing tier.

Leave the throughput units (for standard tier) or processing units (for premium tier) settings as they are.

Select Review + Create at the bottom of the page. On the Review + Create page, review the settings, and select Create.

3. Create an event hub in the namespace

To create an event hub within the namespace, do the following actions:

On the Overview page, select + Event hub on the command bar.

Type a name for your event hub, then select Review + create.

You can check the status of the event hub creation in alerts.

4. Get data from Azure Event Hubs

4.1. Set a shared access policy on your event hub

Before you can create a connection to your Event Hubs data, you need to set a shared access policy (SAS) on the event hub and collect some information to be used later in setting up the connection.

In the Azure portal, browse to the event hubs instance you want to connect.

Under Settings, select Shared access policies

Select +Add to add a new SAS policy, or select an existing policy with Manage permissions.

Enter a Policy name.

Select Manage, and then Create.

4.2. Gather information for the cloud connection

Within the SAS policy pane, take note of the following four fields. You might want to copy these fields and paste them somewhere, like a notepad, to use in a later step.

| Field reference | Field | Description | Example |
|---|---|---|---|
| a | Event Hubs instance | The name of the event hub instance. | learnfabric |
| b | SAS Policy | The SAS policy name created in the previous step. | learnfabricSAP |
| c | Primary key | The key associated with the SAS policy. | xxxxxxxxxxxxxxxxx |
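These fields are the same pieces that make up an Event Hubs connection string, which client code such as the sender script needs. The helper below is a minimal Python sketch of how they fit together; the function name is hypothetical, the endpoint suffix assumes the public Azure cloud, and the namespace is the one you created in step 2.

```python
def build_event_hubs_connection_string(
    namespace: str, policy_name: str, primary_key: str, event_hub: str
) -> str:
    """Assemble an entity-level Event Hubs connection string."""
    return (
        # Public Azure cloud endpoint for the Event Hubs namespace.
        f"Endpoint=sb://{namespace}.servicebus.windows.net/;"
        # Field b: the shared access policy name.
        f"SharedAccessKeyName={policy_name};"
        # Field c: the primary key of that policy.
        f"SharedAccessKey={primary_key};"
        # Field a: the event hub instance.
        f"EntityPath={event_hub}"
    )
```

In practice you can also copy the ready-made connection string from the SAS policy pane; the sketch only shows which field lands where. Treat the key as a secret and keep it out of source control.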

4.3. Create a consumer group

To create a new consumer group, go to the Azure portal, open Event Hubs, select your event hub, select Consumer groups, and then select “Consumer Group” to add a new one.

5. Create a workspace

To create a workspace within the Microsoft Fabric portal, do the following actions:

Sign in to Power BI.

Select Workspaces > New workspace.

Fill out the Create a workspace form as follows: Name: Enter Data Warehouse Tutorial, and some characters for uniqueness. …

Expand the Advanced section.

Choose Fabric capacity or Trial in the License mode section.

Choose a premium capacity you have access to.

Select Apply. The workspace is created and opened.

5.1. KQL Database

Next, create a KQL database by following these steps:

Ensure you have a workspace with a Microsoft Fabric-enabled capacity.

Select New > KQL Database.

Enter your database name, then select Create.

5.2. Database details

The main page of your KQL database shows an overview of the contents of your database. At this point, it is empty.

5.3. Create an eventstream

On the Workspace page, select New and then Eventstream:

Enter a name for the new eventstream and select Create.

The creation of the new eventstream in your workspace may take a few seconds. Once it’s done, you’re directed to the main editor where you can add sources and destinations to your eventstream.

5.3.1. Source

On the lower ribbon of your KQL database, select Get Data.

In the Get data window, the Source tab is selected.

Select the data source from the available list. In this example, you’re ingesting data from Azure Event Hubs.

5.3.2. Configure

Enter a source name of your choice.

Select Create new connection or select Existing connection. For this example, you will create a new connection.

Run the script from your terminal to send data from your simulated device to Azure Event Hubs:

python sender.

The script is available at PascalBurume/Event-Hub (github.com).
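The repository's script is not reproduced here. As a rough idea of what such a sender can look like, the following is a minimal Python sketch that builds JSON readings and sends them in one batch. It assumes the `azure-eventhub` package is installed; the function names and the payload fields (`deviceId`, `temperature`, `humidity`) are hypothetical and may differ from the linked script.

```python
import json
import random
from datetime import datetime, timezone


def make_reading(device_id: str = "device-001") -> dict:
    """Build one simulated telemetry reading (field names are illustrative)."""
    return {
        "deviceId": device_id,
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "temperature": round(random.uniform(18.0, 30.0), 2),
        "humidity": round(random.uniform(30.0, 70.0), 2),
    }


def send_readings(connection_string: str, event_hub_name: str, count: int = 10) -> None:
    """Send `count` JSON readings to the event hub in a single batch."""
    # Imported here so make_reading() works even without the SDK installed.
    from azure.eventhub import EventData, EventHubProducerClient

    producer = EventHubProducerClient.from_connection_string(
        conn_str=connection_string, eventhub_name=event_hub_name
    )
    with producer:
        batch = producer.create_batch()
        for _ in range(count):
            # Each event body is one JSON document.
            batch.add(EventData(json.dumps(make_reading())))
        producer.send_batch(batch)
```

Each run of `send_readings(...)` pushes a fresh batch of events. Because every event body is a JSON document, it lines up with choosing JSON as the input data format when you configure the destination.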

Create new connection

Fill out the Connection settings according to the following table:

| Setting | Description | Example value |
|---|---|---|
| Event hub namespace | Field d from the table above. | eventdatacollect |
| Event hub | Field a from the table above. The name of the event hub instance. | learnfabric |
| Connection | To use an existing cloud connection between Fabric and Event Hubs, select the name of this connection. Otherwise, select Create new connection. | Create new connection |
| Connection name | The name of your new cloud connection. This name is autogenerated but can be overwritten. Must be unique within the Fabric tenant. | Connection |
| Authentication kind | Auto populated. Currently only Shared Access Key is supported. | Shared Access Key |
| Shared Access Key Name | Field b from the table above. The name you gave to the shared access policy. | learnfabricSAP |

Select Save. A new cloud data connection between Fabric and Event Hubs is created.

Connect the cloud connection to your Event Stream

Whether you have created a new cloud connection, or you’re using an existing one, you need to define the consumer group.

Fill out the following fields according to the table:

| Setting | Description | Example value |
| --- | --- | --- |
| Destination name | A name of your choice for the destination. | Data_collection |
| Consumer group | The relevant consumer group defined in your event hub. After adding a new consumer group, you’ll then need to select this group from the drop-down. | Data |
| Workspace | The workspace where your database will be located. | RealTime_event |
| KQL Database | The name of your database. | Event_Kdb |
| Destination table | The name of the table where your data will be stored. | Data_ |
| Input data format | Select the format of the incoming data you want to ingest. Data from the eventstream that matches the selected format will be ingested into the KQL database. | JSON |

Select Save.

5.3.3. Database details

The main page of your KQL database shows an overview of the contents in your database. The following table lists the available information.

| Card | Item | Description |
| --- | --- | --- |
| Database details | Created by | User name of person who created the database. |
| | Region | Shows the region of the data and services. |
| | Created on | Date of database creation. |
| | Last ingestion | Date on which data was ingested last into the database. |
| | Query URI | URI that can be used to run queries or to store management commands. |
| | Ingestion URI | URI that can be used to get data. |
| | OneLake folder | OneLake folder path that can be used for creating shortcuts. You can also activate and deactivate data copy to OneLake. |
| Size | Compressed | Total size of compressed data. |
| | Original size | Total size of uncompressed data. |
| | Compression ratio | Compression ratio of the data. |
| Top tables | Name | Lists the names of tables in your database. Select a table to see more information. |
| | Size | Database size in megabytes. The tables are listed in a descending order according to the data size. |
| Most active users | Name | User name of most active users in the database. |
| | Queries run last month | The number of queries run per user in the last month. |
| Recently updated functions | | Lists the function name and the time it was last updated. |
| Recently used Querysets | | Lists the recently used KQL queryset and the time it was last accessed. |
| Recently created Data streams | | Lists the data stream and the time it was created. |

6. Create a report

There are three possible ways to create a report. This tutorial uses the following one:

Open the Explore your data window from a KQL database.

On the ribbon, select Build Power BI report.

6.1. Report preview

You can add visualizations in the report’s preview. In the Data pane, expand Kusto Query Result to see a summary of your query.

When you’re satisfied with the visualizations, select File on the ribbon, and then Save this report to name and save your report in a workspace.

6.2. Report details

In Name your file in Power BI, give your file a name.

Select the workspace in which to save this report. The report can be saved in a different workspace than the one you started in.

Select the sensitivity label to apply to the report.

Select Continue.

6.3. Build your report

You can build the report with visuals such as line charts and tables to view information about the real-time data.

To collect more data from the device, run the script again from the terminal:

python sender.py

After you refresh the report, the visuals update to reflect the newly ingested data.

Congratulations on completing this tutorial blog on how to leverage Azure Event Hub, Microsoft Fabric, and Power BI for real-time data analytics. You have learned how to create a scalable and reliable data pipeline that can ingest, process, and visualize streaming data from devices. You have also gained valuable skills and insights on how to use Microsoft’s powerful cloud services and tools to enhance your data analytics capabilities. We hope you enjoyed this tutorial and found it useful for your projects.

Thank you for choosing Microsoft as your partner in data analytics.

Resources:

Overview of Real-Time Analytics – Microsoft Fabric | Microsoft Learn

Get data from Azure Event Hubs – Microsoft Fabric | Microsoft Learn

Quickstart: Read Azure Event Hubs captured data (Python) – Azure Event Hubs | Microsoft Learn

Visualize data in a Power BI report – Microsoft Fabric | Microsoft Learn

CareerHubPage – Microsoft Fabric Community

Get started with Microsoft Fabric – Training | Microsoft Learn

Microsoft Tech Community – Latest Blogs –Read More

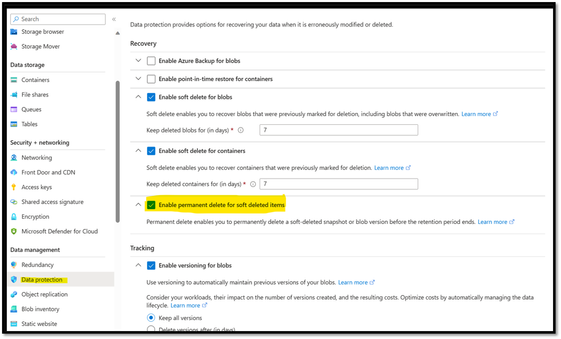

Permanent Delete of Soft deleted Snapshot and Versions without disabling Soft Delete option

Background:

This blog covers how to permanently delete soft-deleted snapshots and versions in Azure Storage before the retention period ends, without disabling the soft delete option.

Permanent Delete of Blob Snapshot and Version:

With version 2020-02-10 and later, you can permanently delete a soft-deleted snapshot or version.

Permanent delete enables you to permanently delete a soft-deleted snapshot or blob version before the retention period ends.

Note

The storage account must have versioning or snapshots enabled. Soft-delete must also be enabled on the storage account to soft-delete versions or snapshots of blobs in the account. Permanent delete only deletes soft-deleted snapshots or versions.

For details, see Delete Blob (REST API) – Azure Storage | Microsoft Learn.

Step 1:

Enable the Permanent delete for soft deleted items option from the Azure portal

Note that, at the moment, the permanent delete option can be enabled from the Azure portal only for GPv2 storage accounts that do not have hierarchical namespace enabled.

Enable permanent delete for soft-deleted items from the REST API

Use the Set Blob Service Properties REST API to enable permanent delete.

Reference document: Set Blob Service Properties (REST API) – Azure Storage | Microsoft Docs

Sample request :
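As a hedged illustration of such a request, the Python sketch below only builds the URL and XML body for Set Blob Service Properties; it does not send anything. The AllowPermanentDelete element is placed inside DeleteRetentionPolicy, following the REST reference named above. The function name, the account name, and the retention period are placeholder assumptions, and the required headers (x-ms-version, x-ms-date, Authorization) are deliberately omitted.

```python
import xml.etree.ElementTree as ET
from typing import Tuple


def build_set_properties_request(account: str, retention_days: int) -> Tuple[str, str]:
    """Build the URL and XML body that enable soft delete with permanent delete.

    Only the DeleteRetentionPolicy section is included; other service
    properties are left out of this sketch.
    """
    url = f"https://{account}.blob.core.windows.net/?restype=service&comp=properties"

    root = ET.Element("StorageServiceProperties")
    policy = ET.SubElement(root, "DeleteRetentionPolicy")
    ET.SubElement(policy, "Enabled").text = "true"
    ET.SubElement(policy, "Days").text = str(retention_days)
    ET.SubElement(policy, "AllowPermanentDelete").text = "true"

    body = ET.tostring(root, encoding="unicode")
    return url, body


if __name__ == "__main__":
    # "mystorageaccount" and 7 days are placeholder values.
    put_url, xml_body = build_set_properties_request("mystorageaccount", 7)
    print("PUT", put_url)
    print(xml_body)
```

The body would be sent with an HTTP PUT to the printed URL once the authorization headers are added.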

Use the Get Blob Service Properties REST API to check whether permanent delete is enabled on the storage account:

Get Blob Service Properties (REST API) – Azure Storage | Microsoft Docs

Step 2:

Permanently delete a snapshot or version

Using the REST API

Use the Delete Blob REST API to permanently delete a soft-deleted snapshot or version.

Storage accounts with permanent delete enabled can use the deletetype=permanent query parameter to permanently delete a soft-deleted snapshot or deleted blob version.

If the query parameter is used and any of the following conditions is true, Blob Storage returns a 409 error (Conflict):

The permanent delete feature isn’t enabled for the storage account.

Neither versionid nor snapshot is provided.

The specified snapshot or version isn’t soft-deleted.
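The three conditions can be modeled as a small client-side pre-check. This is only a sketch for reasoning about the rules: the function and parameter names are hypothetical, and the real decision is made by Blob Storage, not by local code.

```python
from typing import List, Optional


def permanent_delete_conflicts(
    feature_enabled: bool,
    versionid: Optional[str],
    snapshot: Optional[str],
    target_is_soft_deleted: bool,
) -> List[str]:
    """Return the reasons a permanent delete would be rejected with 409.

    An empty list means none of the three documented conditions applies.
    """
    reasons = []
    if not feature_enabled:
        reasons.append("permanent delete is not enabled for the storage account")
    if versionid is None and snapshot is None:
        reasons.append("neither versionid nor snapshot was provided")
    if not target_is_soft_deleted:
        reasons.append("the specified snapshot or version is not soft-deleted")
    return reasons


if __name__ == "__main__":
    # Hypothetical inputs: feature enabled, a version ID given, target soft-deleted.
    print(permanent_delete_conflicts(True, "example-version-id", None, True))
```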

Permanent delete also includes a shared access signature permission to permanently delete a blob snapshot or blob version. For more information, see Create a service SAS.

To get the ID of the snapshot or version that needs to be deleted, use the List Blobs REST API: List Blobs (REST API) – Azure Storage | Microsoft Learn. Set the URI parameter include to snapshots or versions, depending on your requirement:

- snapshots: Specifies that snapshots should be included in the enumeration. Snapshots are listed from oldest to newest in the response.

- versions: Version 2019-12-12 and later. Specifies that versions of blobs should be included in the enumeration.

Sample REST API call to get the snapshot or version ID:

Sample REST API call to permanently delete the snapshot or version:
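As a hedged sketch of what these two calls might look like, the Python helpers below only construct the request URLs; they do not send them, and the authorization headers are omitted. The helper names, the account, container, and blob names, and the version ID are placeholder assumptions. The extra include value deleted is this sketch's addition so that soft-deleted items appear in the listing, and requiring exactly one of snapshot or versionid is a simplification made here.

```python
from typing import Iterable, Optional
from urllib.parse import quote, urlencode


def list_blobs_url(account: str, container: str, include: Iterable[str]) -> str:
    """URL for a List Blobs GET request with the given include values."""
    query = urlencode(
        {"restype": "container", "comp": "list", "include": ",".join(include)},
        safe=",",  # keep the comma-separated include list readable
    )
    return f"https://{account}.blob.core.windows.net/{container}?{query}"


def permanent_delete_url(
    account: str,
    container: str,
    blob: str,
    *,
    snapshot: Optional[str] = None,
    versionid: Optional[str] = None,
) -> str:
    """URL for a Delete Blob request that uses deletetype=permanent."""
    if (snapshot is None) == (versionid is None):
        raise ValueError("provide exactly one of snapshot or versionid")
    if snapshot is not None:
        key, value = "snapshot", snapshot
    else:
        key, value = "versionid", versionid
    query = urlencode({key: value, "deletetype": "permanent"})
    return f"https://{account}.blob.core.windows.net/{container}/{quote(blob)}?{query}"


if __name__ == "__main__":
    # All names and the timestamp below are placeholders.
    print("GET", list_blobs_url("myaccount", "mycontainer", ["versions", "deleted"]))
    print(
        "DELETE",
        permanent_delete_url(
            "myaccount", "mycontainer", "myblob.txt",
            versionid="2024-01-01T00:00:00.0000000Z",
        ),
    )
```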

Using AzCopy

You can use AzCopy to permanently delete a blob version or snapshot.

Use the --permanent-delete (string) switch. This is a preview feature that PERMANENTLY deletes soft-deleted snapshots/versions. Possible values include ‘snapshots’, ‘versions’, ‘snapshotsandversions’, ‘none’. (default “none”)

Run azcopy login (with AD authentication).

azcopy rm "Blob URL" --permanent-delete=snapshots (or versions, or snapshotsandversions, based on requirement)

The above command deletes all snapshots or versions that are in the soft-deleted state.

Note: Make sure to assign the Storage Blob Data Owner role to the user who runs AzCopy, or use a SAS or access keys.
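When the command is scripted, it can help to validate the switch value before calling AzCopy. The sketch below only assembles the argument list and prints it; it does not run AzCopy. The helper name and the blob URL are placeholders, and the value none is rejected here on purpose because it would not permanently delete anything.

```python
import shlex
from typing import List

# Values of --permanent-delete that actually remove soft-deleted items.
DELETING_VALUES = ("snapshots", "versions", "snapshotsandversions")


def build_azcopy_rm(blob_url: str, permanent_delete: str) -> List[str]:
    """Return the argv list for an azcopy rm call with --permanent-delete."""
    if permanent_delete not in DELETING_VALUES:
        raise ValueError(f"permanent_delete must be one of {DELETING_VALUES}")
    return ["azcopy", "rm", blob_url, f"--permanent-delete={permanent_delete}"]


if __name__ == "__main__":
    # Placeholder URL; replace it with the URL of your own blob.
    cmd = build_azcopy_rm(
        "https://myaccount.blob.core.windows.net/mycontainer/myblob.txt",
        "snapshotsandversions",
    )
    print(shlex.join(cmd))
```

The returned list could be passed to subprocess.run after azcopy login has succeeded.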

Refer below documents for more information

https://github.com/Azure/azure-storage-azcopy/releases/tag/v10.14.0

https://learn.microsoft.com/en-us/rest/api/storageservices/delete-blob#permanent-delete

Microsoft Tech Community – Latest Blogs –Read More

New on Azure Marketplace: January 1-11, 2024

We continue to expand the Azure Marketplace ecosystem. For this volume, 267 new offers successfully met the onboarding criteria and went live. See details of the new offers below:

Get it now in our marketplace

AdvisorTarget: ForwardLane’s self-service data analytics platform provides advisors with insight into financial products, tickers, and investment strategies. The solution lets you skip laborious ETL processes and have insights delivered into an easy-to-install CRM widget. You can personalize outreach based on frequency of advisor visits.

Analytics 365 – Teams Call Analytics: Tollring’s app for Microsoft Teams Phone provides insights into inbound and outbound customer communications and helps you manage call center and customer-facing teams. The web-based delivery offers an intuitive dashboard with multiple views, metrics, and wallboards. You can set KPIs for contextual insight, visualize end-to-end call journeys, and sync with Microsoft 365.

Battle Road Atom Engine: Atom Engine from Carahsoft is a game engine that powers complex simulations from logistics and supply chain to energy networks, disaster response, and defense applications. It allows teams to collaborate, plan, and operate their scenarios in real time via a shared persistent world user experience. The platform runs in the cloud, eliminating the need for local software or expensive hardware.

Bayer Crop Water Use Maps: Bayer’s AgPowered service provides access to map layers and metadata to track crop moisture levels and potential crop loss areas due to lack of water. Maps are generated based on user identified geographic points and use NDVI imagery and crop evapotranspiration. Supported geographies include over 50 countries, and approved crops include alfalfa, corn, soybean, and more.

BeesWall (Enterprise License): BeesWall’s digital knowledge library offers seamless data governance for efficient organization and deep focus. Designed for post-merger integration and data governance teams in life sciences and financial industries, it organizes and showcases information from multiple electronic storage systems in a structured manner that allows users to find it easily.

Bigeye: Bigeye is a data observability platform that uses automated monitoring, anomaly detection, and root cause analysis to ensure data accuracy. With a library of over 70 data quality monitoring metrics and easy deployment options, businesses can proactively detect and resolve data quality issues at scale within minutes.

CentOS 7.9 Generation 2 with Python 3.9 and Support: This CentOS 7.9 minimal image with Python 3.9 is a secure and stable platform for web servers, development environments, test environments, and thin clients. Built from the official CentOS 7.9.2207 ISO image, this version from Rinne Labs is stripped down to include only essential packages for optimal performance.

CentOS 7 Generation 2 with Support: This CentOS 7 minimal image from Rinne Labs is a secure and lightweight platform built from the official CentOS 7 ISO image. It is updated with the latest security patches making it ideal for rapid deployment of web applications, efficient development and testing environments, and stable and secure server infrastructure.

ClaimBook (Standard): ClaimBook from ABI Health is a revenue cycle management (RCM) solution built to enable automated cashless health insurance claims management. It streamlines insurance claim settlement with features like smart data extraction, integrated emailing, and a rules engine that disallows incomplete submissions. It also offers comprehensive reports and notifications for every action taken.

ClaimBook (Enterprise): ClaimBook from ABI Health is a revenue cycle management (RCM) solution built to enable automated cashless health insurance claims management. It streamlines insurance claim settlement with features like smart data extraction, integrated emailing, and a rules engine that disallows incomplete submissions. It also offers comprehensive reports and notifications for every action taken.

Data Lake Connector: Intelligent Plant’s Industrial App Store Data Lake Connector transfers data from your real-time historian to Microsoft Azure Event Hub using streaming rules in Microsoft Power BI. This ensures a smooth and efficient flow of information and easy storage in your data lake for efficient management and utilization of your valuable data resources.

Dataweavers Platform Operations for Sitecore: Dataweavers helps Sitecore users achieve low TCO and high performance, security, and upgrade certainty on Microsoft Azure. The platform supports composable Sitecore XM-Cloud, traditional Sitecore XP/XM, and hybrid scenarios with prebuilt templates, pipelines, and security controls in a fully managed environment.

Docker on Ubuntu 22.04 LTS Minimal: Ideal for web applications, microservices architecture, development, testing, and CI/CD, this ready-to-deploy image of Docker on Ubuntu 22.04 LTS Minimal is offered by Yaseen’s Market. Features include optimized performance, enhanced security, seamless integration with Microsoft Azure services, and support for Docker Compose.

Docker CE on Linux Stream 9: This image of Docker CE on Linux Stream 9 by Tidal Media offers a powerful combination for seamless container management. Docker CE’s efficient resource utilization and security features make it an ideal choice for development and production environments. It supports an extensive repository of prebuilt images, accelerating the development process.

Engage: Engage from Udyamo is an app that streamlines employee recognition and communication within an organization. It allows for easy shoutouts, increased transparency, and improved company culture. The app provides managers with data on employee recognition and progress tracking.

ExpressCluster HA Clustering Software: NEC’s ExpressCluster ensures high availability and continuity of system infrastructure, protecting against failures that could lead to business interruptions. It monitors hardware components, operating systems, and applications, and synchronizes data across clusters to maintain system operations without data loss.

Extra360: This platform from Extra Loyalty Solutions enhances customer engagement and loyalty through customer-centric promotions, integrated digital wallet solutions, data-driven decisions, omnichannel engagement, gamification techniques, scalability, and robust security. It offers bespoke solutions to cater to businesses across a diverse range of sectors.

Crux: Crux from Fractal Analytics is a generative AI-powered decision intelligence platform that provides an intuitive ChatGPT-like conversational experience for business users to ask questions in simple English and get reliable, safe, and secure answers from enterprise structured datasets and unstructured documents. It offers direct connectivity to various data sources, data authorization, and customizable options.

Imply Polaris: Imply Polaris is a real-time database for modern analytics applications, built from Apache Druid and delivered as a fully managed database as a service (DBaaS). With Imply, developers can easily create interactive data experiences on real-time and historical data at unlimited scale, with access to updated Druid distribution, visualization UI, built-in management, and performance monitoring software.

Kafka on Windows Server 2022: GlobalSolutions offers a stand-alone version of Apache Kafka server for developers to quickly publish events without setting up the environment. It allows creating topics, setting partition and replication factor, and real-time processing. Features include distributed architecture, scalability, durability, load balancing, and high volume along with support, a free trial, and monitoring.

MarketServe: This offer from Cloudserve Systems streamlines your product’s journey in the Microsoft Azure Marketplace with innovative automation and expert guidance. Elevate your publication experience and maximize visibility with an intuitive interface, efficient submission process, optimization assistance, comprehensive guidance, and faster time-to-market.

Neo4j Community Edition Server on Debian 10: Neo4j is a powerful database tool that manages and queries highly interconnected data structures with lightning speed. With enterprise-grade features like ACID compliance, role-based access controls, and encryption, this image of Neo4j Community Edition Server on Debian 10 from Tidal Media ensures data security and integrity.

Neo4j Community Edition Server on Linux 7.9: Neo4j is a graph database management system that efficiently manages, stores, and processes highly connected data. It represents data as nodes, relationships, and properties, and employs Cypher, a powerful query language. Try Neo4j Community Edition Server on Linux 7.9 from Tidal Media for efficient storage and management of complex data relationships.

Neo4j Community Edition Server on Linux Stream 8: This image of Neo4j Community Edition Server on Linux Stream 8 from Tidal Media offers exceptional speed in handling complex queries related to graphs. It employs Cypher, an intuitive and powerful query language exclusively crafted for graph databases and incorporates robust security measures.

Neo4j Community Edition Server on Linux Stream 9: Neo4j is a graph database platform that visualizes connections between data points, providing insights and lightning-fast performance. It scales effortlessly and offers robust security features. This image of Neo4j Community Edition Server on Linux Stream 9 helps you effortlessly manage and traverse complex relationships within data structures.

Neo4j Community Edition Server on Ubuntu 20.04: Neo4j is a graph-based database that allows organizations to manage, explore, and derive insights from interconnected data. It features a declarative graph query language, over 20 visualization libraries, predictive analytics tooling, API access, and a developer sandbox. Try Neo4j Community Edition Server on Ubuntu 20.04 from Tidal Media to make full use of relationships between your data.

Neo4j Community Edition Server on Ubuntu 22.04: Neo4j is a graph data platform optimized for uncovering valuable intelligence found within connections across data. It simplifies querying and manipulation of connected data, making complex operations intuitive and accessible. This image of Neo4j Community Edition Server on Ubuntu 22.04 from Tidal Media is ideal for managing and traversing complex relationships within data structures.

OLS360 Platform (Essential and Advanced): This no-code business management platform from One Logic Solutions offers cost-effective and innovative strategies to grow your business. Integrated with Microsoft Power BI, it provides data mart integration, multi-level security, customized SLAs, and task scheduling. The platform has essential and advanced plans with dynamic menus and customized intranet pages.

OpenVPN Server on Debian 10: OpenVPN is an open-source VPN server that offers strong security, configuration flexibility, and scalability for networks of all sizes. Art Group’s preconfigured images are always up-to-date and secure, with updates and vulnerabilities monitored and fixed promptly. Get the OpenVPN Server on Debian 10 for reliable and customizable protection.

OpenVPN Server on Debian 11: OpenVPN is a popular open-source VPN software that emphasizes security and offers robust features and flexibility. This preconfigured and up-to-date image of OpenVPN Server on Debian 11 from Art Group uses industry-standard protocols and multiple encryption techniques to circumvent network constraints and censorship, giving users free access to the internet.

OpenVPN Server on Linux Stream 8: OpenVPN is a popular open-source VPN solution that provides secure remote access to networks. Its extensive configuration options allow for tailoring to specific needs, and it can bypass firewalls and network restrictions. This OpenVPN Server on Linux Stream 8 image from Art Group is reliable and secure.

Oracle Linux 9 with GUI: Yaseen’s Market provides this graphical user interface (GUI) image for Oracle Linux 9. Oracle Linux lacks a GUI in cloud environments, but a virtual machine with a GUI and RDP can be configured for use. Recommended clients are remmina for Linux and mRemoteNG for Windows.

Oracle Database Client 11g on Alma Linux 8: cloudimg provides this Oracle Database Client 11g on Alma Linux 8 image preinstalled with system components such as Azure Command-Line Interface (CLI), Python 3, and cloud-init. Oracle Database Client 11g on Linux is a reliable and high-performance database client that offers advanced security features and support for multiple operating systems.

Oracle Database Client 11g on CentOS Stream 8: cloudimg provides this Oracle Database Client 11g on CentOS Stream 8 image preinstalled with system components such as Azure Command-Line Interface (CLI), Python 3, and cloud-init. This software can help businesses access and manage data quickly and efficiently while ensuring data security.

Oracle Database Client 12.1 on Alma Linux 8: cloudimg provides this Oracle Database Client 12.1 on Alma Linux 8 image preinstalled with system components such as Azure Command-Line Interface (CLI), Python 3, and cloud-init. This is a reliable and high-performance database client that supports multiple operating systems. It provides advanced data access and security features, making it an excellent choice for businesses and individuals.

Oracle Database Client 12.1 on CentOS Stream 8: cloudimg provides this Oracle Database Client 12.1 on CentOS Stream 8 image preinstalled with system components such as Azure Command-Line Interface (CLI), Python 3, and cloud-init. It enables users to connect to Oracle databases from various platforms and includes advanced data access features such as multi-version read consistency and result set caching.

Oracle Database Client 12.1 on Rocky Linux 8: cloudimg provides this Oracle Database Client 12.1 on Rocky Linux 8 image preinstalled with system components such as Azure Command-Line Interface (CLI), Python 3, and cloud-init. The software includes advanced data access and security features, making it an excellent choice for businesses and individuals looking to manage data efficiently while ensuring data security.

Oracle Database Client 12.2 on Alma Linux 8: cloudimg provides this Oracle Database Client 12.2 on Alma Linux 8 image preinstalled with system components such as Azure Command-Line Interface (CLI), Python 3, and cloud-init. Oracle Database Client 12.2 on Linux is a reliable and high-performance database client that provides advanced security features and support for multiple operating systems.

Oracle Database Client 12.2 on CentOS Stream 8: cloudimg provides this Oracle Database Client 12.2 on CentOS Stream 8 image preinstalled with system components such as Azure Command-Line Interface (CLI), Python 3, and cloud-init. It provides advanced data access and security features, including multi-version read consistency, result set caching, transparent data encryption, and database vault.

Oracle Database Client 12.2 on Rocky Linux 8: cloudimg provides this Oracle Database Client 12.2 on Rocky Linux 8 image preinstalled with system components such as Azure Command-Line Interface (CLI), Python 3, and cloud-init. The software enables users to connect to Oracle databases from various platforms, providing flexibility and convenience.

Oracle Database Client 19c on Alma Linux 8: cloudimg provides this Oracle Database Client 19c on Alma Linux 8 image preinstalled with system components such as Azure Command-Line Interface (CLI), Python 3, and cloud-init. The software offers advanced features for improved query performance, data security, and real-time analytics.

Oracle Database Client 19c on CentOS Stream 8: cloudimg provides this Oracle Database Client 19c on CentOS Stream 8 image preinstalled with system components such as Azure Command-Line Interface (CLI), Python 3, and cloud-init. The software’s advanced features improve query performance, data security, and real-time analytics, while the automatic indexing feature creates and manages indexes for better performance.

Oracle Database Client 19c on OEL 8: cloudimg provides this Oracle Database Client 19c on OEL 8 image preinstalled with system components such as Azure Command-Line Interface (CLI), Python 3, and cloud-init. This powerful software solution offers advanced features for improved query performance, real-time analytics, and data security.

Oracle Database Client 19c on Rocky Linux 8: cloudimg provides this Oracle Database Client 19c on Rocky Linux 8 image preinstalled with system components such as Azure Command-Line Interface (CLI), Python 3, and cloud-init. This tool provides a secure environment for critical business applications and is essential for any organization that relies on Oracle databases for their operations.

Oracle Database Client 21c on Alma Linux 8: cloudimg provides this Oracle Database Client 21c on Alma Linux 8 image preinstalled with system components such as Azure Command-Line Interface (CLI), Python 3, and cloud-init. The software provides advanced features for managing Oracle databases on Linux, including In-Memory for faster query response times and machine learning algorithms for real-time analytics.

Oracle Database Client 21c on CentOS Stream 8: cloudimg provides this Oracle Database Client 21c on CentOS Stream 8 image preinstalled with system components such as Azure Command-Line Interface (CLI), Python 3, and cloud-init. The software’s myriad features enable high-performance queries against large datasets, while its security features provide a secure environment for critical business applications.

Oracle Database Client 21c on OEL 8: cloudimg provides this Oracle Database Client 21c on OEL 8 image preinstalled with system components such as Azure Command-Line Interface (CLI), Python 3, and cloud-init. Oracle Database Client 21c on Linux is a powerful software solution for managing Oracle databases. It offers advanced features for improved query performance, real-time analytics, and data security.

Oracle Database Client 21c on Rocky Linux 8: cloudimg provides this Oracle Database Client 21c on Rocky Linux 8 image preinstalled with system components such as Azure Command-Line Interface (CLI), Python 3, and cloud-init. The software offers advanced features for improved query performance, data security, and real-time analytics that are essential for organizations that rely on Oracle databases for operations.

Oracle Enterprise Linux 7.3: cloudimg provides this Oracle Enterprise Linux 7.3 image preinstalled with system components such as Azure Command-Line Interface (CLI), Python 3, and cloud-init. Oracle Enterprise Linux 7 is a reliable and secure operating system with advanced performance optimization capabilities and built-in technologies like Ksplice and DTrace.

OLS360 Platform (Essential and Advanced) (Portuguese): Available in Portuguese, this no-code platform from One Logic Solutions offers cost-effective and innovative strategies to grow your business. Integrated with Microsoft Power BI, it provides data mart integration, multi-level security, customized SLAs, and task scheduling. The essential plan is ideal for smaller teams, while the advanced version is better suited for enterprises.

PowerShell 7.3 on Debian 10: PowerShell 7.3 on Debian 10 offers advanced scripting capabilities for efficient automation and cross-platform compatibility for a consistent scripting experience. It also provides enhanced performance and expanded functionality, effortless system configuration, and task management on Debian 10. Art Group packages images according to industry standards, ensuring they are always up-to-date, reliable, and secure.

PowerShell 7.3 on Debian 11: PowerShell 7.3 on Debian 11 offers advanced features and cross-platform compatibility for efficient system administration. Art Group’s preconfigured and up-to-date images ensure reliability and security, with constant monitoring for updates and vulnerabilities. Try PowerShell 7.3 on Debian 11 for streamlined management of your infrastructure.

PowerShell 7.3 on Linux 7.9: PowerShell 7.3 on Linux 7.9 offers advanced features and cross-platform compatibility for efficient system administration and automation. Art Group’s preconfigured and up-to-date images adhere to industry standards and are monitored for updates and vulnerabilities.

PowerShell 7.3 on Linux Stream 8: PowerShell 7.3 on Linux Stream 8 offers a unified scripting and automation solution for IT infrastructure, with enhanced compatibility, expanded cmdlet functionality, improved performance, and secure communication. Art Group’s preconfigured and up-to-date images are reliable and secure, with constant monitoring for updates and vulnerabilities.

PowerShell 7.3 on Ubuntu 18.04: PowerShell 7.3 on Ubuntu 18.04 allows for unified scripting and automation across Windows and Linux, with optimized compatibility and expanded functionality. It offers enhanced performance and resource utilization, secure communication with remote systems, streamlined configuration and deployment processes, and integration with popular DevOps tools.

PowerShell 7.3 on Ubuntu 20.04: PowerShell 7.3 on Ubuntu 20.04 offers cross-platform compatibility, efficient scripting, security, and community support. It also has improved performance, enhanced compatibility, and containerization support. Art Group’s preconfigured and up-to-date images ensure reliability and security.

PowerShell 7.3 on Ubuntu 22.04: PowerShell 7.3 on Ubuntu 22.04 offers advanced scripting capabilities for efficient automation and system configuration. With cross-platform compatibility, it ensures a consistent scripting environment and seamless integration with Ubuntu 22.04. Art Group’s preconfigured and up-to-date images are secure and monitored for updates and vulnerabilities.

Red Hat Enterprise Linux 8.9 Generation 2: ProComputers offers a minimal ready-to-use Red Hat Enterprise Linux (RHEL) 8.9 Generation 2 Gold Image for use on Microsoft Azure. It includes Azure Linux Agent, cloud-init, and SELinux, and supports Accelerated Networking. No RHEL 8 subscription is required, and it has all security updates available at the release date.

Red Hat Enterprise Linux 8.9 with LVM: ProComputers offers a minimal ready-to-use Red Hat Enterprise Linux (RHEL) 8.9 Gold Image with LVM partitioning, including Azure Linux Agent, cloud-init, and SELinux. No RHEL 8 subscription is required, and it supports Accelerated Networking. The image is pay-as-you-go with no upfront cost and includes all security updates available at the release date.

Red Hat Enterprise Linux 9.3 with LVM: ProComputers offers a minimal ready-to-use Red Hat Enterprise Linux (RHEL) 9.3 Gold Image with LVM partitioning, including Azure Linux Agent, cloud-init, and SELinux. It requires no RHEL 9 subscription and supports accelerated networking. The image is pay-as-you-go with no upfront cost and includes all security updates available at the release date.

PyTorch on Debian 11: PyTorch is a flexible, imperative deep learning framework with a rich ecosystem of libraries for computer vision, natural language processing, and scientific computing. It features tensors, automatic differentiation, modules, and optimizers.

QuickBooks Accountant Enterprise 2023 Server with Microsoft 365: Automate io offers a prebuilt Windows Server 2022 with QuickBooks Accountant Enterprise 2023 and Microsoft 365 applications preinstalled. It simplifies the process of moving to the cloud and eliminates the cost of system failures. A QuickBooks Accountant Enterprise license must be purchased separately, and a Microsoft 365 license is required.

QuickBooks Accountant Enterprise 2024 Server with Microsoft 365: Automate io offers a prebuilt Windows Server 2022 with QuickBooks Accountant Enterprise 2024 and Microsoft 365 applications preinstalled. It simplifies the process of moving to the cloud and eliminates the cost of system failures. A QuickBooks Accountant Enterprise license must be purchased separately, and a Microsoft 365 license is required.

QuickBooks Premier 2023 Server with Microsoft 365: Automate io offers a prebuilt Windows Server 2022 with QuickBooks Premier 2023 and Microsoft 365 applications preinstalled. It simplifies the process of moving to the cloud and eliminates the cost of system failures. A QuickBooks Premier license must be purchased separately, and a Microsoft 365 license will be required.

QuickBooks Pro 2023 Server with Microsoft 365: Automate io offers a prebuilt Windows Server 2022 with QuickBooks Pro 2023 and Microsoft 365 applications preinstalled. It simplifies the process of moving to the cloud and eliminates the cost of system failures. A Microsoft 365 license is required to activate the products, which can be easily purchased through Automate io.

QuickBooks Pro 2024 Server with Microsoft 365: Automate io offers a prebuilt Windows Server 2022 with QuickBooks Pro 2024 and Microsoft 365 applications preinstalled. It simplifies the process of moving to the cloud and eliminates the cost of system failures. A QuickBooks Desktop Pro license must be purchased separately, and a Microsoft 365 license will be required to activate the products.

Rocky Linux 9 with GUI: Yaseen’s Market provides this graphical user interface (GUI) image for Rocky Linux 9. Rocky Linux is a secure Linux environment. It lacks a GUI on cloud platforms, but a virtual machine with a GUI and RDP can be configured for use.

Rocky Linux 9.3 Minimal: This image of Rocky Linux 9.3 is now available from Yaseen’s Market and offers optimized performance, enhanced security, flexibility, and control. With full root access, users can easily integrate with Microsoft Azure services for a comprehensive cloud experience. It is ideal for businesses seeking enterprise-level performance and developers requiring a reliable testing environment.

Sigil: Sigil by Arkahna is a cloud management SaaS platform for Microsoft Azure, Dynamics 365, Microsoft 365, GitHub, Atlassian tools, and custom Azure-based applications. Suitable for businesses of all sizes, Sigil offers governance and compliance assurance, proactive monitoring, best practice standards, access to expert knowledge, outcomes-based operational approach, and transparent and flexible pricing models.

Solgari Customer Engagement for Dynamics 365: Solgari’s solution connects customers to the right people quickly, allowing communication through any preferred channel. The app offers advanced self-service options and intelligent skills-based routing, with real-time conversation summary and sentiment analysis. Solgari can be deployed instantly from Microsoft Azure with transparent and flexible pricing models.

Tiger Bridge: This solution from Tiger Technology lets organizations benefit from Microsoft Azure multi-tier storage and services while preserving existing applications and workflows. It offers cost optimization, no IT complexities, and no disruption to workflows. The Tiger Bridge software-only data management solution enables hybrid workflows by seamlessly pairing file systems with Azure Blob containers.

TPM Managed Partner Program (Individual Plan): This program helps independent software vendors, systems integrators, managed services providers, and advisory/consultancy partners achieve desired business results in their partnership with Microsoft. The program includes live guidance, email support, weekly seminars, quarterly executive briefing calls, and access to an on-demand learning platform.

Webmin: Webmin is an open-source solution for managing your Linux servers in a web-based interface. This offer from Yaseen’s Market includes a user-friendly interface, comprehensive control, customizable modules, and secure management. Deploy Webmin on Microsoft Azure for efficient, browser-based Linux management and top-notch performance and reliability.

ZADIG XDR: BITCORP S.R.L offers an embedded IDS module to analyze traffic in Microsoft Azure virtual networks. The virtual machine, available as part of the ZADIG XDR managed application, captures network traffic from a mirroring router, and the search engine may be part of the node or in a dedicated cluster.

Go further with workshops, proofs of concept, and implementations

Microsoft Purview Security and Compliance: 1-Week Workshop: Sopra Steria offers a workshop to help organizations meet compliance and governance requirements using Microsoft Purview. The workshop includes identifying requirements, analyzing current setup, and presenting an implementation plan with best practices and recommended security and compliance roles to control and secure content, including Microsoft Teams, SharePoint, OneDrive, and Exchange.

Custom Azure PreStudy Solution Workshops: Accigo offers custom solutions and proposals for complex problems through a defined set of workshops. The proposed solution is based on the Microsoft Azure technology stack to maximize investment in Microsoft. The solution utilizes Azure infrastructure, security, identity, integration services, AI search, and web apps for cost-effective implementation.

Private ChatGPT: 1-Week Implementation: Advania’s Private ChatGPT solution based on Microsoft Azure OpenAI provides safe generative AI for every user, with the option of extending to company data in Microsoft and non-Microsoft platforms. The solution is hosted entirely on Azure and allows safe use of AI with tasks related to sensitive data. Advania offers a full range of AI and Microsoft 365 Copilot services.

Application Modernization: 2-Month Implementation: Kainskep’s Microsoft Azure implementation helps modernize legacy applications into cloud-native solutions. Benefits include increased agility, reduced IT costs and complexity, enhanced security and compliance, improved performance and scalability, and empowered innovation.

Application Development: 8-Week Proof of Concept: Tailored for enterprises and start-ups, this proof of concept from Aximsoft offers Microsoft Azure automation (CI/CD) services to help you validate application concepts and create prototypes for visualizing and refining ideas. Aximsoft’s solutions are designed to scale with evolving development needs and are implemented by experienced professionals.

Automation Services (CI/CD): Aximsoft offers Microsoft Azure automation (CI/CD) services for efficient and error-free code deployment, automated testing, and infrastructure management. Aximsoft’s solutions utilize customized architectures and agile methodologies for faster time-to-market, with iterative development for continuous improvement.

Azure DevOps Consulting Services: UB Technology Innovations offers comprehensive Microsoft Azure DevOps services, including consultation, training, infrastructure setup, source control, CI/CD pipeline implementation, integration and automation, best practices and governance, and ongoing support and maintenance. Benefits include accelerated time-to-market and enhanced productivity and security.

Azure Ingestion Framework: 4-Week Implementation: Adastra offers an analytics platform for organizations to analyze work and assets through near real-time data integration. The offer includes defining a Microsoft Azure ingestion framework service, integrating data from up to five source datasets, and validating results with stakeholders. The platform enables real-time event streaming to the cloud, a metadata-driven framework, and more.

Azure Migration Workshop: Resolution Technology’s Microsoft Azure Migration workshop helps businesses avoid common roadblocks in cloud adoption by focusing on understanding business objectives and infrastructure to create an actionable roadmap for migration. This approach increases project success and uncovers benefits of modernizing workloads and applications.

Azure Modern Data Platform Jumpstart: 4-Week Implementation: TL Consulting will deploy a modern data platform on Microsoft Azure, providing organizations with scalable and resilient solutions for faster data-driven decisions. Their offering includes data analytics, machine learning, and AI on Azure for an end-to-end data pipeline that accelerates analytical use cases’ time to market and time to value.

Azure Open AI Consultancy: Microsoft Azure OpenAI is a collaborative service that simplifies developer access to advanced AI technology. It enables the creation of intelligent chatbots, data analysis tools, and personalized content. Imperium Dynamics’ services offer enterprise-grade security to enhance customer support, provide natural language processing for data insights, and automate content generation.

Azure OpenAI Implementation: Blazeclan offers Microsoft Azure OpenAI implementation and integration services for Microsoft business apps. Their team of certified Azure experts provides customized AI solutions, seamless integration with Microsoft apps, data security, and compliance. Benefits include enhanced productivity, informed decision-making, scalability, and a seamless user experience.

Azure Sentinel Implementation: CloudGuard offers Microsoft Azure Sentinel MXDR services to provide real-time security visibility and improve issue detection and response performance. They also offer user behavioral analytics and abnormal activity monitoring with threat intelligence to enhance threat detection and response. Services include around-the-clock support, customized cybersecurity reports, and more.

Azure Virtual Desktop Implementation: Microsoft Azure Virtual Desktop simplifies the transition to cloud-based work, providing flexibility, cost efficiency, enhanced security, streamlined management, and scalability. RAKE Digital’s implementation includes assessment and planning, Azure resource configuration, image creation, deployment and configuration, testing, optimization, and training.

Azure Virtual Desktop Jumpstart: 4-Week Implementation: Spyglass MTG offers a cost-effective and secure approach to VDI environments. It helps customers move to the cloud and provides a solid foundation for Azure Virtual Desktop. The implementation includes strategy and planning, deployment, and user training. Deliverables include a functioning Azure Virtual Desktop environment with user access and security configurations.

Cloud Infrastructure Management (CIM): Enhance your Microsoft Azure cloud infrastructure with cloud infrastructure management (CIM) services. Aximsoft’s Microsoft-certified team offers tailored solutions for scalability, performance, security, and compliance. Benefit from continuous monitoring, cost-effective management, and around-the-clock support.

Cloud Optimization Service: This service from Audax Labs maximizes cloud infrastructure efficiency and performance for IT, e-commerce, finance, healthcare, and telecoms. It uses Microsoft Azure tools like Azure Cost Management and Billing, Azure Automation, Azure Advisor, and Azure DevOps to streamline resource allocation and the tracking of performance metrics.

CogniSights: 8-Week Proof of Concept: CogniSights is a Microsoft Azure OpenAI app that uses NLP-to-SQL conversion to make database querying easy, accelerating insights and productivity. It retrieves metadata from any database to answer natural language queries about structured data from various sources. The app is designed for non-programmers to self-serve insights from any enterprise database.

Community Training Guided Implementation: Wipfli will help your organization implement a structured community training platform that includes assessment, technical planning, execution, and content guidance. It will enable high-impact digital skilling and training across geographically disparate regions and languages and will provide an understanding of impact goals and a branded instance on Microsoft Azure.

Community Training Implementation Advisory: Wipfli’s service helps organizations implement community training by providing guidance on platform fit, implementation planning, and design approach recommendations. The advisory includes a community training overview, technology infrastructure guidance, and coaching on implementation decisions. The offering assumes administrative access to Microsoft Azure and provides advisory services only.