Category: News

Microsoft 365 online excel ledger jumbles the page on screen to where it is not legible. Why?

My excel page that is up on my computer gets jumbled when I leave it for a little bit open.

This has happened a few times now. I can restore to a previous version, but I do not want to keep doing that.

Does anyone know why this is happening?

My excel page that is up on my computer gets jumbled when I leave it for a little bit open.This has happened a few times now. I can restore to a previous version, but I do not want to keep doing that.Does anyone know why this is happening? Read More

How do I access elevation data capabilities for TI MM Wave Radar sensors (AWR1843 Boost).

The TIAWR1843Boost MM Wave Radar sensor has elevation sensing capabilities. During seup, when I create the .cfg file through the mmWave demo tool provided by Texas Instruments i can plot this data. The .cfg file stipulates that the sensor is configured for Azimuth 15 + Elevation. When i run the file though, the radar object has the elevation resolution as read only and set as NaN. It is only configurable through the .cfg file. I have verified that I am not recording any Z-axis values (only 0).

Has anybody found a work around for this?

RADAR OBJECT PROPERTIES:

BoardName: "TI AWR1843BOOST"

ConfigPort: "COM6"

DataPort: "COM5"

ConfigFile: "C:ProgramDataMATLABSupportPackagesR2023btoolboxtargetsupportpackagestimmwaveradarconfigfilesxwr18xx_BestRange_UpdateRate_1.cfg"

MMWave SDK Version: "03.06.00.00"

SensorIndex: 1

MountingLocation: [0, 0, 0] (m)

MountingAngles: [0, 0, 0] (degrees)

UpdateRate: 1 (samples/s)

RangeResolution: 2.441211e-01 (m)

RangeRateResolution: 1.242369e-01 (m/s)

AzimuthResolution: 1.447751e+01 (degrees)

ElevationResolution: NaN (degrees)

MaximumRange: 6.249500e+01 (m)

MaximumRangeRate: 9.938949e-01 (m/s)

BaudRate: 921600 (bits/s)

ReadMode: "latest"

StartTime: "25-Dec-2023 07:03:52.375"

CenterFrequency: 7.751722e+10 (Hz)

Bandwidth: 6.144492e+08 (Hz)

EnableRangeGroups: 1

EnableDopplerGroups: 1

RemoveStaticClutter: 1

RangeCFAR: 15 (dB)

DopplerCFAR: 15 (dB)

RangeLimits: [0, 4.999000e+01] (m)

RangeRateLimits: [-1, 1] (m/s)

AzimuthLimits: [-90, 90] (degrees)

ElevationLimits: [-90, 90] (degrees)

DetectionCoordinates: "Sensor rectangular"

This is the .cfg file i am using:

% ***************************************************************

% Created for SDK ver:03.06

% Created using Visualizer ver:3.6.0.0

% Frequency:77

% Platform:xWR18xx

% Scene Classifier:best_range

% Azimuth Resolution(deg):15 + Elevation

% Range Resolution(m):0.244

% Maximum unambiguous Range(m):50

% Maximum Radial Velocity(m/s):1

% Radial velocity resolution(m/s):0.13

% Frame Duration(msec):1000

% RF calibration data:None

% Range Detection Threshold (dB):15

% Doppler Detection Threshold (dB):15

% Range Peak Grouping:enabled

% Doppler Peak Grouping:enabled

% Static clutter removal:disabled

% Angle of Arrival FoV: Full FoV

% Range FoV: Full FoV

% Doppler FoV: Full FoV

% ***************************************************************

sensorStop

flushCfg

dfeDataOutputMode 1

channelCfg 15 7 0

adcCfg 2 1

adcbufCfg -1 0 1 1 1

profileCfg 0 77 296 7 28.49 0 0 30 1 256 12499 0 0 30

chirpCfg 0 0 0 0 0 0 0 1

chirpCfg 1 1 0 0 0 0 0 4

chirpCfg 2 2 0 0 0 0 0 2

frameCfg 0 2 16 0 1000 1 0

lowPower 0 0

guiMonitor -1 1 0 0 1 1 0

cfarCfg -1 0 2 8 4 3 0 15 1

cfarCfg -1 1 0 4 2 3 1 15 1

multiObjBeamForming -1 1 0.5

clutterRemoval -1 0

calibDcRangeSig -1 0 -5 8 256

extendedMaxVelocity -1 0

lvdsStreamCfg -1 0 0 0

compRangeBiasAndRxChanPhase 0.0 1 0 1 0 1 0 1 0 1 0 1 0 1 0 1 0 1 0 1 0 1 0 1 0

measureRangeBiasAndRxChanPhase 0 1.5 0.2

CQRxSatMonitor 0 3 4 63 0

CQSigImgMonitor 0 127 4

analogMonitor 0 0

aoaFovCfg -1 -90 90 -90 90

cfarFovCfg -1 0 0 49.99

cfarFovCfg -1 1 -1 1.00

calibData 0 0 0

sensorStartThe TIAWR1843Boost MM Wave Radar sensor has elevation sensing capabilities. During seup, when I create the .cfg file through the mmWave demo tool provided by Texas Instruments i can plot this data. The .cfg file stipulates that the sensor is configured for Azimuth 15 + Elevation. When i run the file though, the radar object has the elevation resolution as read only and set as NaN. It is only configurable through the .cfg file. I have verified that I am not recording any Z-axis values (only 0).

Has anybody found a work around for this?

RADAR OBJECT PROPERTIES:

BoardName: "TI AWR1843BOOST"

ConfigPort: "COM6"

DataPort: "COM5"

ConfigFile: "C:ProgramDataMATLABSupportPackagesR2023btoolboxtargetsupportpackagestimmwaveradarconfigfilesxwr18xx_BestRange_UpdateRate_1.cfg"

MMWave SDK Version: "03.06.00.00"

SensorIndex: 1

MountingLocation: [0, 0, 0] (m)

MountingAngles: [0, 0, 0] (degrees)

UpdateRate: 1 (samples/s)

RangeResolution: 2.441211e-01 (m)

RangeRateResolution: 1.242369e-01 (m/s)

AzimuthResolution: 1.447751e+01 (degrees)

ElevationResolution: NaN (degrees)

MaximumRange: 6.249500e+01 (m)

MaximumRangeRate: 9.938949e-01 (m/s)

BaudRate: 921600 (bits/s)

ReadMode: "latest"

StartTime: "25-Dec-2023 07:03:52.375"

CenterFrequency: 7.751722e+10 (Hz)

Bandwidth: 6.144492e+08 (Hz)

EnableRangeGroups: 1

EnableDopplerGroups: 1

RemoveStaticClutter: 1

RangeCFAR: 15 (dB)

DopplerCFAR: 15 (dB)

RangeLimits: [0, 4.999000e+01] (m)

RangeRateLimits: [-1, 1] (m/s)

AzimuthLimits: [-90, 90] (degrees)

ElevationLimits: [-90, 90] (degrees)

DetectionCoordinates: "Sensor rectangular"

This is the .cfg file i am using:

% ***************************************************************

% Created for SDK ver:03.06

% Created using Visualizer ver:3.6.0.0

% Frequency:77

% Platform:xWR18xx

% Scene Classifier:best_range

% Azimuth Resolution(deg):15 + Elevation

% Range Resolution(m):0.244

% Maximum unambiguous Range(m):50

% Maximum Radial Velocity(m/s):1

% Radial velocity resolution(m/s):0.13

% Frame Duration(msec):1000

% RF calibration data:None

% Range Detection Threshold (dB):15

% Doppler Detection Threshold (dB):15

% Range Peak Grouping:enabled

% Doppler Peak Grouping:enabled

% Static clutter removal:disabled

% Angle of Arrival FoV: Full FoV

% Range FoV: Full FoV

% Doppler FoV: Full FoV

% ***************************************************************

sensorStop

flushCfg

dfeDataOutputMode 1

channelCfg 15 7 0

adcCfg 2 1

adcbufCfg -1 0 1 1 1

profileCfg 0 77 296 7 28.49 0 0 30 1 256 12499 0 0 30

chirpCfg 0 0 0 0 0 0 0 1

chirpCfg 1 1 0 0 0 0 0 4

chirpCfg 2 2 0 0 0 0 0 2

frameCfg 0 2 16 0 1000 1 0

lowPower 0 0

guiMonitor -1 1 0 0 1 1 0

cfarCfg -1 0 2 8 4 3 0 15 1

cfarCfg -1 1 0 4 2 3 1 15 1

multiObjBeamForming -1 1 0.5

clutterRemoval -1 0

calibDcRangeSig -1 0 -5 8 256

extendedMaxVelocity -1 0

lvdsStreamCfg -1 0 0 0

compRangeBiasAndRxChanPhase 0.0 1 0 1 0 1 0 1 0 1 0 1 0 1 0 1 0 1 0 1 0 1 0 1 0

measureRangeBiasAndRxChanPhase 0 1.5 0.2

CQRxSatMonitor 0 3 4 63 0

CQSigImgMonitor 0 127 4

analogMonitor 0 0

aoaFovCfg -1 -90 90 -90 90

cfarFovCfg -1 0 0 49.99

cfarFovCfg -1 1 -1 1.00

calibData 0 0 0

sensorStart The TIAWR1843Boost MM Wave Radar sensor has elevation sensing capabilities. During seup, when I create the .cfg file through the mmWave demo tool provided by Texas Instruments i can plot this data. The .cfg file stipulates that the sensor is configured for Azimuth 15 + Elevation. When i run the file though, the radar object has the elevation resolution as read only and set as NaN. It is only configurable through the .cfg file. I have verified that I am not recording any Z-axis values (only 0).

Has anybody found a work around for this?

RADAR OBJECT PROPERTIES:

BoardName: "TI AWR1843BOOST"

ConfigPort: "COM6"

DataPort: "COM5"

ConfigFile: "C:ProgramDataMATLABSupportPackagesR2023btoolboxtargetsupportpackagestimmwaveradarconfigfilesxwr18xx_BestRange_UpdateRate_1.cfg"

MMWave SDK Version: "03.06.00.00"

SensorIndex: 1

MountingLocation: [0, 0, 0] (m)

MountingAngles: [0, 0, 0] (degrees)

UpdateRate: 1 (samples/s)

RangeResolution: 2.441211e-01 (m)

RangeRateResolution: 1.242369e-01 (m/s)

AzimuthResolution: 1.447751e+01 (degrees)

ElevationResolution: NaN (degrees)

MaximumRange: 6.249500e+01 (m)

MaximumRangeRate: 9.938949e-01 (m/s)

BaudRate: 921600 (bits/s)

ReadMode: "latest"

StartTime: "25-Dec-2023 07:03:52.375"

CenterFrequency: 7.751722e+10 (Hz)

Bandwidth: 6.144492e+08 (Hz)

EnableRangeGroups: 1

EnableDopplerGroups: 1

RemoveStaticClutter: 1

RangeCFAR: 15 (dB)

DopplerCFAR: 15 (dB)

RangeLimits: [0, 4.999000e+01] (m)

RangeRateLimits: [-1, 1] (m/s)

AzimuthLimits: [-90, 90] (degrees)

ElevationLimits: [-90, 90] (degrees)

DetectionCoordinates: "Sensor rectangular"

This is the .cfg file i am using:

% ***************************************************************

% Created for SDK ver:03.06

% Created using Visualizer ver:3.6.0.0

% Frequency:77

% Platform:xWR18xx

% Scene Classifier:best_range

% Azimuth Resolution(deg):15 + Elevation

% Range Resolution(m):0.244

% Maximum unambiguous Range(m):50

% Maximum Radial Velocity(m/s):1

% Radial velocity resolution(m/s):0.13

% Frame Duration(msec):1000

% RF calibration data:None

% Range Detection Threshold (dB):15

% Doppler Detection Threshold (dB):15

% Range Peak Grouping:enabled

% Doppler Peak Grouping:enabled

% Static clutter removal:disabled

% Angle of Arrival FoV: Full FoV

% Range FoV: Full FoV

% Doppler FoV: Full FoV

% ***************************************************************

sensorStop

flushCfg

dfeDataOutputMode 1

channelCfg 15 7 0

adcCfg 2 1

adcbufCfg -1 0 1 1 1

profileCfg 0 77 296 7 28.49 0 0 30 1 256 12499 0 0 30

chirpCfg 0 0 0 0 0 0 0 1

chirpCfg 1 1 0 0 0 0 0 4

chirpCfg 2 2 0 0 0 0 0 2

frameCfg 0 2 16 0 1000 1 0

lowPower 0 0

guiMonitor -1 1 0 0 1 1 0

cfarCfg -1 0 2 8 4 3 0 15 1

cfarCfg -1 1 0 4 2 3 1 15 1

multiObjBeamForming -1 1 0.5

clutterRemoval -1 0

calibDcRangeSig -1 0 -5 8 256

extendedMaxVelocity -1 0

lvdsStreamCfg -1 0 0 0

compRangeBiasAndRxChanPhase 0.0 1 0 1 0 1 0 1 0 1 0 1 0 1 0 1 0 1 0 1 0 1 0 1 0

measureRangeBiasAndRxChanPhase 0 1.5 0.2

CQRxSatMonitor 0 3 4 63 0

CQSigImgMonitor 0 127 4

analogMonitor 0 0

aoaFovCfg -1 -90 90 -90 90

cfarFovCfg -1 0 0 49.99

cfarFovCfg -1 1 -1 1.00

calibData 0 0 0

sensorStart ti mmwave radar, radar, elevation data, radar toolbox MATLAB Answers — New Questions

IWR1843BOOST Parameter: “ElevationResolution value: NaN”, which causes the z axis missing in 3D point cloud data.

Device: TI IWR1843BOOST

SDK version: 3.06

Test procedures: 1. Use hardware setup to flash the bin and load the cfg file (generated from mmWave demo visualizer 3.6.0).

2. Run mmWaveRadar() to read the properties on the device.

The value of the mmWaveRadar() output:

val =

BoardName: "TI IWR1843BOOST"

ConfigPort: "COM5"

DataPort: "COM6"

ConfigFile: "C:ProgramDataMATLABSupportPackagesR2023btoolboxtargetsupportpackagestimmwaveradarconfigfilesxwr18xx_BestRangeRateResolution_UpdateRate_10.cfg"

MMWave SDK Version: "03.06.00.00"

SensorIndex: 1

MountingLocation: [0, 0, 0] (m)

MountingAngles: [0, 0, 0] (degrees)

UpdateRate: 10 (samples/s)

RangeResolution: 2.142656e-01 (m)

RangeRateResolution: 3.849848e-02 (m/s)

AzimuthResolution: 1.447751e+01 (degrees)

ElevationResolution: NaN (degrees)

MaximumRange: 1.371300e+01 (m)

MaximumRangeRate: 2.463903e+00 (m/s)

The ElevationResolution is NaN.

However, the 3D point cloud data can be correctly read from Demo visualizer but fails to be read in the MATLAB. Is there any differences from the cfg extract procedure in MATLAB?Device: TI IWR1843BOOST

SDK version: 3.06

Test procedures: 1. Use hardware setup to flash the bin and load the cfg file (generated from mmWave demo visualizer 3.6.0).

2. Run mmWaveRadar() to read the properties on the device.

The value of the mmWaveRadar() output:

val =

BoardName: "TI IWR1843BOOST"

ConfigPort: "COM5"

DataPort: "COM6"

ConfigFile: "C:ProgramDataMATLABSupportPackagesR2023btoolboxtargetsupportpackagestimmwaveradarconfigfilesxwr18xx_BestRangeRateResolution_UpdateRate_10.cfg"

MMWave SDK Version: "03.06.00.00"

SensorIndex: 1

MountingLocation: [0, 0, 0] (m)

MountingAngles: [0, 0, 0] (degrees)

UpdateRate: 10 (samples/s)

RangeResolution: 2.142656e-01 (m)

RangeRateResolution: 3.849848e-02 (m/s)

AzimuthResolution: 1.447751e+01 (degrees)

ElevationResolution: NaN (degrees)

MaximumRange: 1.371300e+01 (m)

MaximumRangeRate: 2.463903e+00 (m/s)

The ElevationResolution is NaN.

However, the 3D point cloud data can be correctly read from Demo visualizer but fails to be read in the MATLAB. Is there any differences from the cfg extract procedure in MATLAB? Device: TI IWR1843BOOST

SDK version: 3.06

Test procedures: 1. Use hardware setup to flash the bin and load the cfg file (generated from mmWave demo visualizer 3.6.0).

2. Run mmWaveRadar() to read the properties on the device.

The value of the mmWaveRadar() output:

val =

BoardName: "TI IWR1843BOOST"

ConfigPort: "COM5"

DataPort: "COM6"

ConfigFile: "C:ProgramDataMATLABSupportPackagesR2023btoolboxtargetsupportpackagestimmwaveradarconfigfilesxwr18xx_BestRangeRateResolution_UpdateRate_10.cfg"

MMWave SDK Version: "03.06.00.00"

SensorIndex: 1

MountingLocation: [0, 0, 0] (m)

MountingAngles: [0, 0, 0] (degrees)

UpdateRate: 10 (samples/s)

RangeResolution: 2.142656e-01 (m)

RangeRateResolution: 3.849848e-02 (m/s)

AzimuthResolution: 1.447751e+01 (degrees)

ElevationResolution: NaN (degrees)

MaximumRange: 1.371300e+01 (m)

MaximumRangeRate: 2.463903e+00 (m/s)

The ElevationResolution is NaN.

However, the 3D point cloud data can be correctly read from Demo visualizer but fails to be read in the MATLAB. Is there any differences from the cfg extract procedure in MATLAB? mmwave radar, iwr1843boost, point cloud MATLAB Answers — New Questions

How to Refresh Expired Keys in Redis using Azure Functions

Introduction

Redis is an extremely popular in-memory data store that is used as a cache, session store, message broker, and more. One of the features that makes Redis really useful is the ability to set a time-to-live (TTL) value for any key. A key with a TTL value will be automatically deleted from Redis when the TTL expires. This helps reduce memory usage and ensure data freshness.

For instance, you might be storing information like inventory or pricing for your e-commerce site in Redis. This is a perfect application for caching in Redis. This type of data will be queried constantly and serving it directly from a database will either slow down your application or make your database implementation very expensive to handle the load! Using a CDN is out of the question because pricing and inventory data isn’t static. Redis can handle the throughput at low latencies, boosting the performance of your site.

There’s one problem, however—how can you be sure the price or inventory data in the cache is up to date with the official value in the database? This is a classic cache invalidation problem. TTL functionality in Redis solves half of the problem. By setting a TTL, you can ensure that stale values are periodically purged. But the other half of the problem remains—how do you gracefully update the cache with the latest value? That’s where serverless functions come in. This blog post will show you how to use the Redis bindings for Azure Functions to detect key expirations and automatically refresh the cache with the latest values.

Key Expiration and TTL in Redis

Redis has native support for several key expiration mechanisms, which enables you to have precise control over the TTL of data. Expiration commands include:

EXPIRE, which sets the TTL of a Redis key in seconds.

PEXPIRE, which sets the TLL of a Redis key in milliseconds.

EXPIREAT, which sets keys to expire at an absolute time in the future, using UNIX timestamps.

PEXPIREAT, which is the same as EXPIREAT, but using milliseconds.

Redis also has several commands that can be used to return the remaining time to live for a key, including TTL, PTTL, EXPIRETIME, and PEXPIRETIME.

So, for example, if you have a key named “apple” that is storing the apple’s price, you could use the following command to expire the price value after 60 seconds:

EXPIRE apple 60

Or this command to expire the price on December 25th, 2024:

EXPIREAT apple 1735138800

Keyspace Notifications

Expiration of stale keys is a great feature of Redis, but it still means you must check for expired keys and periodically refresh them. That’s where keyspace notifications can be used. This nifty feature will generate a notification each time an expiration event occurs. Specifically, it uses a special pub/sub channel within your cache to publish these events. This is a great feature because you can monitor the pub/sub channel and know when your keys are expiring, then proactively update them. There are a couple of things to bear in mind when using keyspace notifications:

First, you need to explicitly enable keyspace notifications since they are not enabled by default. Second, there is a performance impact from keyspace notifications, especially if your cache is firing off a lot of events. Third, keyspace notifications are fire-and-forget, which means that Redis does not guarantee the delivery or the order of the messages. You should not rely on keyspace notifications for critical or sensitive operations. Finally, keyspace notifications are asynchronous, which means that there may be some delay or inconsistency between the actual event and the notification.

Redis Triggers and Bindings for Azure Functions

Keyspace notifications are a powerful feature, but monitoring the notification channel can be a challenge. Fortunately, serverless functions offer an excellent (and low-cost) way to monitor and act upon these events. Even better, there are now triggers and bindings that allow Azure Cache for Redis to be used seamlessly with Azure Functions. This means you can trigger and automatically execute an Azure Function based on activity on your Redis cache. And, among other things, you can trigger on keyspace notifications! That means that, every time a key expires on your cache, you can trigger a Function that will pull in the latest value and update the expired key.

Putting it all Together

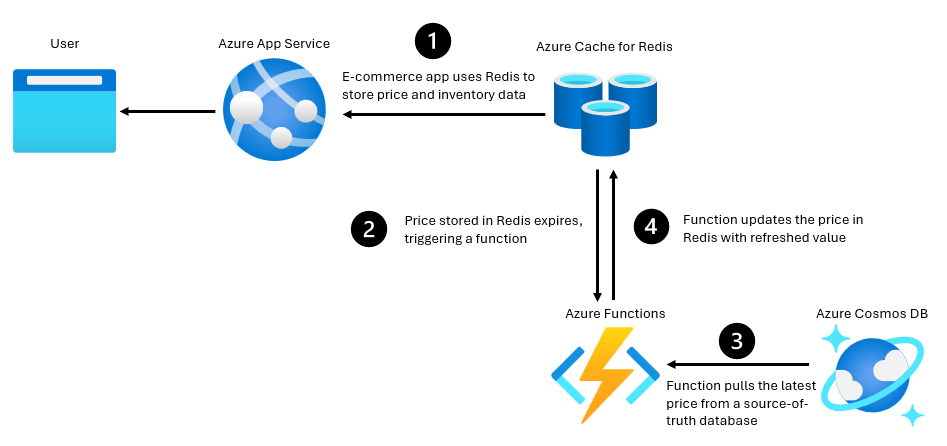

Ultimately, the architecture we’re looking for is something like this:

In this blog post, we’ll focus on three components using a very simple e-commerce use-case:

A Redis instance that will host a copy of price data for grocery items (using Azure Cache for Redis)

A primary database that will serve as the “source of truth” for the price of each item (using Azure Cosmos DB)

A serverless function that will detect key expirations in Redis, query the latest value in the database, and update the Redis instance (using Azure Functions)

Sample Code

To make things easier, the code for this sample is available on GitHub. To run the example, you’ll need:

An Azure subscription

.NET 8 or above.

The Azure Developer CLI

The Azure Developer CLI makes setting up the example simple. Follow these steps to get the demo up and running on Azure:

Open a terminal/shell

Copy the repo to a location of your choice (e.g. git clone https://github.com/MSFTeegarden/ExpirationTrigger)

In the terminal, change the directory to the project folder

Run:azd up

Follow the instructions to enter an environment name, select an Azure subscription, and choose an Azure region.

The Azure Developer CLI automation will kick in and start provisioning the resources you need in a new resource group. The following Azure resources will be created:

Azure Cache for Redis

Azure Cosmos DB

App Service plan

Function app

Storage Account

Application Insights

It will take 15-25 min for provisioning to finish. Pricing for these resources will depend on your region and usage, of course. But for me, it was around $2-$4 per day.

The automation will also enable keyspace notifications on your Redis instance, add a new database and container to your Azure Cosmos DB instance, and add connection strings for both Azure Cosmos DB and Azure Cache for Redis to your Function app environment variables. Finally, it deploys sample code to the functions instance. This is what the code looks like:

using Microsoft.Extensions.Logging;

using Microsoft.Azure.Functions.Worker;

using Microsoft.Azure.Functions.Worker.Extensions.Redis;

using Microsoft.Azure.Cosmos;

using Microsoft.Azure.Cosmos.Linq;

public class RedisTrigger

{

private readonly ILogger<RedisTrigger> logger;

public RedisTrigger(ILogger<RedisTrigger> logger)

{

this.logger = logger;

}

// This function will be invoked when a key expires in Redis. It then queries Cosmos DB for the key and its value. Finally, it uses an Azure Function output binding to write the updated key and value back to Redis .

[Function(“ExpireTrigger”)]

[RedisOutput(Common.connectionString, “SET”)] //Redis output binding. This specifies that the returned value will be written to Redis using the SET command.

//Trigger on Redis key expiration, and use an CosmosDB input binding to query the key and value from Cosmos DB.

public static async Task<String> ExpireTrigger(

[RedisPubSubTrigger(Common.connectionString, “__keyevent@0__:expired”)] Common.ChannelMessage channelMessage,

FunctionContext context,

[CosmosDBInput(

“myDatabase”, //Cosmos DB database name

“Inventory”, //Cosmos DB container name

Connection = “CosmosDbConnection” //Parameter name for the connection string in a environmental variable or local.settings.json

)] Container container

)

{

var logger = context.GetLogger(“RedisTrigger”);

var redisKey = channelMessage.Message; //The key that has expired in Redis

logger.LogInformation($”Key ‘{redisKey}’ has expired.”);

//Query Cosmos DB for the key and value

IOrderedQueryable<CosmosData> queryable = container.GetItemLinqQueryable<CosmosData>();

using FeedIterator<CosmosData> feed = queryable

.Where(b => b.item == redisKey) //item name must be the same as the key in Redis

.ToFeedIterator<CosmosData>();

FeedResponse<CosmosData> response = await feed.ReadNextAsync();

CosmosData data = response.FirstOrDefault(defaultValue: null);

if (data != null)

{

logger.LogInformation($”Key: “{data.item}”, Value: “{data.price}” added to Redis.”);

return $”{redisKey} {data.price}”; //Return the key and value to be written to Redis

}

else

{

logger.LogInformation($”Key not found”);

return $”{redisKey} false”; //set the value of the key to “false” if not found in Cosmos DB

}

}

}

With data models contained in the Common.cs file:

public class Common

{

public const string connectionString = “redisConnectionString”;

public class ChannelMessage

{

public string SubscriptionChannel { get; set; }

public string Channel { get; set; }

public string Message { get; set; }

}

}

public record CosmosData(

string id,

string item,

string price

);

The example has several key elements:

A Redis pub/sub trigger is used to monitor expiration key events, using the “__keyevent@0:expired” keyspace notification channel. This will execute the function each time an expiration event is detected.

A Cosmos DB Input binding is used to connect to the database—an easy way to manage the connection and read data on the database.

A Redis output binding is used to write new data to your Redis instance, saving the hassle of importing a Redis library and establishing a connection.

Each time the function is executed, the example will record the name of the key that expired, search for the value of this key within a specified Cosmos DB container, and then write the value from Cosmos DB back to Redis.

Running the Example

To finish setting up the example, you need to add some items to the Cosmos database.

Navigate to your Cosmos DB resource in the Azure Portal, and go to the Data Explorer menu. You’ll see a database named “myDatabase” and a container named “Inventory” which have already been created.

Select the Items entry under “Inventory” and then select New Item.

Enter in an id of your choosing, and then add entries for item and price, then select Save.

You can add as many items as you’d like—this will provide the source of truth price for entries in your Redis cache.

Next, navigate to your functions app resource in the portal and select the Log stream menu. This will allow you to monitor the execution of your functions trigger.

Finally, open a connection to your Redis instance. You can use the redis-cli command line tool, the built-in console in the Azure portal, or a standalone Redis GUI like Redis Insights.

When you have a connection to Redis open, first the value of a key, for example:

SET apple 1

If you get the value, you’ll see that our item “apple” has a value of 1:

GET apple

Now set the key to expire, for example in three seconds:

EXPIRE apple 3

Check the Log stream, and you’ll see your function execute and update the price:

Check the price again, and you get the updated value:

GET apple

Every time a key expires, your function is pulling the value from your database and updating your cache!

Next Steps

There is a lot more you can do with Azure Functions and Azure Cache for Redis! Take a look at these resources for more information and examples:

Overview of Azure Cache for Redis triggers and bindings

Tutorial: Get started with Azure Functions triggers and bindings in Azure Cache for Redis

Tutorial: Create a write-behind cache by using Azure Functions and Azure Cache for Redis

Repo: Azure Functions Redis Extension

What is Azure Cache for Redis?

Microsoft Tech Community – Latest Blogs –Read More

Maximize data protection & minimize business disruption with Microsoft Purview Data Loss Prevention

Protecting your business-critical data is of the utmost importance in today’s digital landscape. Within the last 12 months, 74% of organizations have had business data exposed during a data security incident, 65% saw operational data compromised, and 58% experienced personal data being made vulnerable [1]. However, protecting that data can seem like a daunting charter for many security teams. Between the boundless volumes of data created and transformed daily by modern organizations and the difficulty of scaling legacy data loss prevention (DLP) strategies, proper prevention, investigation, and remediation of data security incidents can be an uphill climb. Simultaneously, the breakneck adoption of Generative AI is not only an exponential multiplier of organizational data, but also a new frontier of risk that we must learn to secure. Now is the time for organizations to take a comprehensive approach to data security that supports the pace of work today and adapts as your business transforms for the future.

Microsoft Purview Data Loss Prevention (Microsoft Purview DLP) provides a unified and cloud-native DLP that prevents sensitive data loss with minimal impact to business continuity. As part of our commitment to helping security teams build resilient and adaptable data security, we are excited to announce several new Microsoft Purview DLP capabilities that enable:

Efficient investigation: Capabilities that empower admins to find, interpret, and act on key DLP incidents, including context-rich email alerts, support for custom email templates as policy actions, and new rich filters for DLP alerts in Microsoft Defender XDR.

Strengthened protection: Capabilities that aim to maximize both protection of sensitive data and employee productivity, including automatic pause and resume of user actions, improvements to device onboarding, and the addition of application allowlists.

Extended protection: Capabilities that protect your sensitive data across multiple workloads, including support for file type and file extension-based policy conditions and allowed domain groups for macOS.

Easier triage and investigation of DLP alerts

As the volume of data created, processed, and transmitted by modern business proliferates, so do the risks. In 2023, organizations experienced an average of 59 data security incidents over the previous 12 months [2]. With data security incidents growing more frequent and costly, it’s critical to contain these incidents as quickly as they arise, mitigating downstream financial and infrastructural impact.

One way Microsoft Purview DLP is streamlining investigations for admins is by enriching email alerts with more robust metadata. These email alerts will inform the admin when a policy has been violated and provide more actionable context and evidence, including severity level, user, policy details, device details, and more. This insight enables you to understand and take appropriate action on a potential incident as soon as it occurs — from both your inbox and the Microsoft Purview Portal. This capability is now in general availability.

Figure 1: New context-rich email alerts for Microsoft Purview DLP admins.

Microsoft Purview DLP admins can now also leverage customizable email templates to notify users or teams of DLP policy matches. These custom emails, available in public preview, can be added as an action when configuring policy rules. For example, your organization may consider automating emails to notify managers of policy violations, or to kick off remediation workflows from a DLP alert. When creating policy rules, you can add a new custom template or choose from existing custom templates.

Figure 2: Create and manage custom email templates for DLP policy actions in Settings.

In addition to enriched email alerts and custom policy actions, we’re enhancing the DLP alert investigation experience within Microsoft Defender XDR. We previously added the capability to filter the DLP alerts queue in Microsoft Defender XDR by File Name or File Path for more efficient and flexible triage. We are now extending this public preview capability to filter by alerts stemming from external user risk. Learn more here.

This filter can be particularly useful in aiding investigations of threats external to your organization that may be attempting to exfiltrate company data. From Microsoft Defender XDR, you can easily visualize how a DLP policy violation was connected to an attack story associated with such external user activity. In the example below, you can see how a DLP alert tagged with “External user risk” in Microsoft Defender XDR indicates the transmission of sensitive company data to a user outside of the organization.

Figure 3: DLP alerts filtered by the External user risk tag in Microsoft Defender XDR.

Figure 4: Visualization of a DLP alert tagged with External user risk in a Microsoft Defender XDR attack story.

You may also notice that DLP alerts in Microsoft Defender XDR can now be further contextualized with insider risk summaries from Microsoft Purview Insider Risk Management. From the Incidents view, SOC analysts with the required customer-determined permissions can understand and make informed decisions on user exfiltration activities that may be connected to a larger data security incident. Learn more about this feature, now in public preview, here.

Not only are we introducing the above new capabilities to improve your day-to-day triage and investigation, but also announcing the general availability of:

Simulation mode, which allows admins to simulate a DLP policy to assess its impact and fine tune the policy as required in an isolated environment, so they can confidently configure and deploy into production. Learn more here.

DLP analytics, which leverages machine learning to highlight the top data protection risks in your environment and offers recommendations for mitigating those risks. DLP analytics also offers recommendations for fine tuning existing policies to reduce noisy alerts. Learn more here.

The Adaptive Protection integration with DLP, which enables users to be scoped into data loss policies based on their insider risk levels. Microsoft Purview DLP takes these risk levels into account to automatically apply the right preventative controls, such as block, block with override, or audit with a warning. Learn more here.

Strengthened protection, minimal disruption

When it comes to securing modern business, every second and every device counts. That’s why we’re continuing to invest in comprehensive protection for all of your users and assets, minus the business disruption, tedious troubleshooting, or finicky fine tuning.

One step we’re taking to minimize disruption to business as usual is the new pause and resume capability, in public preview. Previously, users who could override policy tips would have to repeat the action that originally triggered a DLP policy, such as printing a document with sensitive information. With pause and resume, a user can provide business justification to override a policy, and the task will resume automatically without requiring the user to resubmit the print job. This same principle can be applied to functions such as copy and paste and copy to storage. Automatic pause and resume minimize end user disruption while ensuring proper policy enforcement.

Figure 5: Following a policy tip override, user activity such as printing and copying to storage will now be automatically paused and resumed on Windows devices.

We’ve also improved the Devices onboarding dashboard in the Purview Portal. The Devices’ onboarding page has been enhanced to help you quickly understand the status of onboarded endpoint devices, and easily troubleshoot common onboarding issues. Easily dive into detail on any of your devices and see relevant remediation guidance if there are detected issues. The following capabilities are now available through the Devices onboarding page:

Richer device fingerprinting metadata, in general availability

Unlimited export of devices, in general availability

First onboarded date for devices, in public preview

Figure 6: The improved Devices onboarding dashboard provides rich device metadata and helps troubleshoot common issues.

Lastly, we’re happy to announce the addition of application allowlists in Microsoft Purview DLP in public preview. Application allowlists enable exceptions to DLP rules for specified business apps, helping admins both enforce sufficient security controls and accommodate for normal, expected business activity. For example, you may choose to add applications used by the Finance team to an allowlist, knowing the frequency with which they work with sensitive financial data, and adjust how policies are enforced accordingly.

Shown below, you can see how admins can now tailor DLP rules and actions for restricted app groups and allowed app groups.

Figure 7: DLP policies can now be configured with unique rules and actions for specified business applications.

Extending data protection across platforms

We understand that today’s businesses rely on a diverse range of technologies and workloads, all of which require sufficient data protection measures. That is why we’re also excited to share the expansion of several Microsoft Purview DLP capabilities to macOS devices:

Support for file type and file extension-based policy conditions is now in public preview for both macOS and Windows. Learn more here.

Domain groups, which apply unique policy conditions and restrictions for a set of websites, is now in public preview for both macOS and Windows. Domain groups help you appropriately protect data that’s commonly in use or in motion, whether it’s through a cloud egress channel, in-browser, or subject to just-in-time protection. Learn more here.

Not to mention, Microsoft Purview DLP is now generally available for the increasing volume of Windows endpoints running on ARM (ARM64) chipsets. Learn more here.

Get started

Get started today with Microsoft Purview DLP by turning on endpoint DLP as it is built into Windows 10 and 11 and does not require an on-premises infrastructure setup or agents on endpoint devices. Learn more about endpoint DLP here. You can try Microsoft Purview DLP and other Microsoft Purview solutions directly in the Microsoft Purview compliance portal with a free trial!

Additional resources

DLP whitepaper on moving from on-premises to cloud native DLP.

Mechanics video on how to create one DLP policy that works across your workloads.

Updated interactive guides on DLP policy configuration and management, and investigations.

Frequently asked questions on DLP for endpoints.

Guidance on optimal DLP incident management experience.

Investigating Microsoft Purview DLP alerts in the Microsoft Defender XDR portal.

And, lastly, join the Microsoft Purview DLP Customer Connection Program (CCP) to get information and access to upcoming capabilities in private previews in Microsoft Purview Data Loss Prevention. An active NDA is required. Click here to join.

We look forward to your feedback.

Thank you,

The Microsoft Purview Data Loss Prevention Team

[1] [2] Data Security Index Report, Oct 2023, Microsoft

Microsoft Tech Community – Latest Blogs –Read More

Empower multiple teams and prioritize investigations with Insider Risk Management

Your data is a prime target in most security incidents. But when an incident occurs, do you have the information you need to prioritize incidents and contain them based on the importance of the data itself?

With insider incidents becoming a bigger concern each year and 74% of organizations saying these occurrences have become more frequent[1], detecting insider risks is now a vital part of safeguarding digital landscapes. Microsoft Purview Insider Risk Management is used by organizations across the world to correlate various signals to identify potential insider risks while ensuring user privacy by design, but it can also be used to detect data security risks coming from external attackers. The past year saw a dramatic surge in identity attacks, with an average of 4,000 password attacks per second. Some of these attacks are successful in compromising user credentials, enabling the attacker to persist in the organization’s systems as an insider, having access to sensitive data.

That is why besides data security and data compliance teams, SOC (security operations center) teams also play a pivotal role in safeguarding organizations’ data against a myriad of threats, coming from both Insiders and external attackers. However, security admins are challenged in a fragmented tooling landscape, requiring these professionals to often analyze repeated alerts and to manually correlate insights across solutions, restricting visibility on risky data and users involved in an incident. With customers that employ more security tools experiencing 2.8x more data security incidents[2], it is crucial that security teams have access to integrated solutions across their data landscape to help them triage and prioritize incidents with broader context for their investigations.

Microsoft Purview Insider Risk Management correlates various signals, such as unusual access patterns and data exfiltration, to identify potential malicious or inadvertent insider risks, including IP theft, data leakage, and security violations. Insider Risk Management enables customers to create data handling policies based on their own internal policies, governance, and organizational requirements. Built with privacy by design, users are pseudonymized by default, and role-based access controls and audit logs are in place to help ensure user-level privacy.

Empowering SOC teams to better investigate insider risks

Today, we are excited to announce the public preview of Insider Risk Management context on the Microsoft Defender XDR user entity page. With this update, SOC analysts with the required customer-determined permissions can access an insider risk summary of user exfiltration activities that may lead to potential data security incidents, as a part of the user entity investigation experience in Microsoft Defender. This feature can help SOC analysts gain data security context for a specific user, prioritize incidents, and make more informed decisions on responses to potential incidents.

When looking into an occurrence in Microsoft Defender’s Incidents view, the security analyst now can dig further into an incident’s source. In the following example, a multi-stage attack stole an employee’s credentials, followed by exfiltration activities that triggered multiple data loss prevention (DLP) alerts, such as sharing payment cards information externally.

Figure 1: Incident in Microsoft Defender showing a user’s Insider Risk Level

This activity resulted in ‘High Insider risk severity’ which is integrated into the Defender Incident investigation experience. You can click on this risk level to view the user activities within the Defender portal. This is important because Insider Risk Management can be set up to flag potentially risky activities by users Inside your organization or in “persistence” attacks where someone’s identity may have been hijacked by an external attacker. In this case, the attacker has taken explicit steps to mask the sensitivity of exfiltrated data by downgrading file labels. Thanks to sequence detections, Insider risk management was able to assign high severity based on the sensitivity of the original files in spite of the masking attempts.

Figure 2: user’s Insider Risk activity summary in Microsoft Defender, showing sequence of potentially risky behavior

Once these insider risks are detected, you can also automatically enforce protective controls with Adaptive Protection. Adaptive protection in Microsoft Purview dynamically adjusts security measures based on data insights and user behavior, integrating these risk levels into Data Loss Prevention and Conditional Access policies to dynamically apply the right level of preventative controls. Once the Adaptive Protection thresholds are met, the Insider Risk Condition in Microsoft Entra Conditional Access will create dynamic access policies to automatically trigger additional protections, based on the user’s insider risk level.

In summary, the policy protections you set and their corresponding signals from Microsoft Purview flow directly into Microsoft Defender XDR to help you assess the value of potentially compromised data, and this investigation is complemented by the access block made possible by the integration of Adaptive Protection and Conditional access.

To learn more about this new feature, watch our Mechanics video.

Microsoft Copilot in Purview for Insider Risk Management

We are also excited to announce the General Availability of the copilot capabilities embedded in Microsoft Purview, including in Insider Risk Management.

As announced at Microsoft Secure, data security and data compliance analysts can now access real-time guidance for their analysis, relying on copilot summarization capabilities and natural language support, built directly into their proven and trusted investigation workflows. These capabilities will help organizations save time, speed up investigations, and point to specific incidents to investigate next, therefore mitigating security risks.

Figure 3: embedded Copilot summarization into Insider Risk Management

Improving breadth and visibility with new features on Insider Risk Management

We have recently announced new Insider Risk Management features focused on facilitating investigation and improving the experience of data security teams.

In March, we introduced the enrichment of Insider Risk Management with communication-related indicators originated from Communication Compliance, utilizing machine learning to detect potential risks like discriminatory language or sensitive data leakage in various channels, while maintaining user privacy through pseudonymization and implementing role-based access controls. Insider Risk Management now also extends data security across your data estate, detecting data risks in Microsoft Fabric, as well as other SaaS apps like DropBox, GitHub, Box, and infrastructure clouds like AWS.

Figure 4: Enriching Insider Risk Management with Communication Compliance data

We are also excited to announce several incoming features that are becoming available to customers in the next month.

Insider Risk Management is enhancing the existing email insight alerts to provide additional information for when business-sensitive data is potentially leaked from a work email account to a free public domain or personal email account, potentially leading to a data security incident. This feature will make the triaging experience easy by highlighting, for example, when an insider is sending an attachment to their personal email.

We are also announcing the Public Preview of the Adaptive Scopes, which allows admins to use adaptive scopes created within the Microsoft Purview compliance portal to scope Insider Risk Management policies, to dynamically define membership of users or groups based on Entra ID attributes, like location or department.

Other features that will soon be available:

Admins can now exclude specific users and groups from Insider Risk Policies and will be able to delete all associated alerts and users in scope when deleting a policy, to help quickly reset and remove inactive policies.

The policy tuning analysis feature will now take into consideration specific priority content in your policies to predict the number of users matching the policy conditions in a tenant.

These capabilities will start rolling out to customers’ tenants within the coming weeks.

Get started

To get started, read more about Insider Risk Management in our technical documentation.

Stay up to date on our Microsoft Purview features through the Microsoft 365 Roadmap for Microsoft Purview.

Visit your Microsoft Purview compliance portal to activate your free trial and begin using our new features. An active Microsoft 365 E3 subscription is required as a prerequisite to activate the free trial.

Thank you,

Nathalia Borges, Senior Product Marketing Manager

Sravan Kumar Mera, Principal Product Manager, Microsoft Purview

[1] https://www.cybersecurity-insiders.com/portfolio/2023-insider-threat-report-gurucul/

[2] Top insights and best practices from the new Microsoft Data Security Index report | Microsoft Security Blog

Microsoft Tech Community – Latest Blogs –Read More

Secure your AI transformation with Microsoft Security

Generative AI is reshaping business today for every individual, every team, and every industry. Organizations engage with GenAI in a variety of ways – from purchasing and using finished GenAI apps to developing, deploying, and operating custom-built GenAI apps.

GenAI broadens the attack surface of applications through prompts, training data, models, and more – thereby effectively changing the threat landscape with new risks such as direct or indirect prompt injection attacks, data leakage, and data oversharing.

In March this year, we shared how Microsoft Security helps organizations discover, protect, and govern the use of GenAI apps like Copilot for M365. Today, we’re thrilled to introduce additional capabilities for that scenario and new capabilities to secure and govern the development, deployment, and runtime of custom-built GenAI apps.

With these new innovations, Microsoft Security is at the forefront of AI security to support our customers on their AI journey by being the first security solution provider to offer threat protection for AI workloads and providing comprehensive security to secure and govern AI usage and applications.

Secure and govern GenAI you build:

Discover new AI attack surfaces with AI security posture management (AI-SPM) in Microsoft Defender for Cloud for AI apps using Azure OpenAI Service, Azure Machine Learning, and Amazon Bedrock

Protect your AI apps using Azure OpenAI in runtime with threat protection for AI workloads in Microsoft Defender for Cloud, the first cloud-native application protection platform (CNAPP) to provide runtime protection for enterprise-built AI apps using Azure OpenAI Service

Secure and govern GenAI you use:

Discover and mitigate data security and compliance risks with Microsoft Purview AI Hub, now offering new insights, including visibility into unlabeled data and SharePoint sites that are referenced by Copilot for M365 and non-compliant usage such as regulatory collusion, money laundering, and targeted harassment for M365 interactions

Govern AI use to comply with regulatory requirements with 4 new AI compliance assessments in Microsoft Purview Compliance Manager

Discover new AI attack surfaces

As organizations embrace GenAI, many accelerate adoption with pre-built GenAI applications while others choose to develop GenAI applications in-house, tailored to their unique use cases, security controls and compliance requirements. Organizations from all industries are racing to transform their applications with AI, with over half of Fortune 500 companies using Azure OpenAI.

With all the new components of AI workloads such as models, SDKs, training, and grounding data – the visibility into understanding all the configurations of these new components and the risks associated with them is more important than ever.

With new AI security posture management (AI-SPM) capabilities in Microsoft Defender for Cloud, security admins can continuously discover and inventory their organization’s AI components across Azure OpenAI Service, Azure Machine Learning, and Amazon Bedrock – including models, SDKs, and data – as well as sensitive data used in grounding, training, and fine tuning LLMs. Admins can find vulnerabilities, identify exploitable attack paths, and easily remediate risks to get ahead of active threats.

Figure 1: Attack path analysis in Defender for Cloud identifies an indirect risk to an Azure OpenAI resource where an attacker can exploit vulnerabilities via an internet exposed VM to potentially gain access and control of the AI resource, model deployments, and data.

By mapping out AI workloads and synthesizing security signals such as identity, data security, and internet exposure, Defender for Cloud will continuously surface contextualized security issues, exploitable attack paths, and suggest risk-based security recommendations tailored to prioritize critical gaps across your AI workloads.

For example, many AI apps are made up of a dynamic and complex supply chain, of AI artifacts such as SDKs, plugins, models, grounding and training data. If there is an older version of LangChain with vulnerabilities within your environment, an attacker can easily exploit the vulnerability to access sensitive data. Maintaining visibility into the AI inventories and components, as well as associated risks is now more critical than ever.

Protect your custom-built GenAI apps against emerging cyberthreats

While having a strong security posture reduces the risk of attacks, the complex and dynamic nature of AI requires active monitoring in runtime as well. As AI expands capabilities of cloud-native applications, it also extends an application’s attack surface, making it susceptible to emerging threats such as prompt injection attacks, secrets and sensitive data leaks, and denial of service attacks. Organizations will need comprehensive security controls that enable them to secure their AI applications throughout their lifecycle – development, deployment, and runtime against threats unique to AI applications.

To help address the new AI attack landscape, organizations can now use Microsoft Defender for Cloud to protect their AI workloads from threats. Security teams can detect threats to AI workloads using Azure OpenAI Service, alerting SOC teams to potentially malicious activity on their AI workloads such as prompt injection attacks, credential theft, and sensitive data leakage.

The new threat protection capabilities leverage a native integration with Azure AI Content Safety prompt shields and Microsoft threat intelligence signals to deliver contextual and actionable alerts in Defender for Cloud that help SOC analysts understand user behavior with visibility into supporting evidence such as IP address, model deployment details, and segments of suspicious user prompts that triggered the alert.

Jailbreak attacks, for example, aim to alter the designed purpose of the model, making the application susceptible to data breaches and denial of service attacks. With Defender for Cloud, SOC analysts will be alerted to blocked prompt injection attempts with context and evidence to the IP and activity, with action steps to follow. The alert also includes recommendations to prevent future attacks on the affected resources and strengthen the security posture of the AI application.

Figure 2: Security alert on a jailbreak attempt in Defender for Cloud provides context to the potential causes, the IP used, model deployment impacted, and provides investigation steps to address the potentially malicious activity.

By leveraging the evidence provided, SOC teams can classify the alert, assess the impact, and take precautionary steps to fortify the application.

Microsoft Defender for Cloud can help organizations strengthen their GenAI security posture and defend against threats to their GenAI applications as part of its market-leading cloud-native application protection platform (CNAPP).

Learn more about securing GenAI applications with Defender for Cloud.

Discover and mitigate data security and compliance risks with Microsoft Purview AI Hub

In addition to securing GenAI applications, organizations now face one of the most significant challenges – securing and governing data in the era of AI. An alarming 80% of leaders cite the leakage of sensitive data as their primary concern. Security teams often find themselves in the dark when it comes to data security and compliance risks associated with GenAI usage. Without clear visibility into the risks, organizations struggle to safeguard their assets effectively.

To help organizations gain a better understanding of AI application usage and the associated risks – we are announcing the public preview of Microsoft Purview AI Hub. AI Hub provides insights like sensitive data in Copilot prompts, the number of users interacting with AI apps and their associated risk level. Admins can gain additional detailed usage insights in Activity explorer to see the files referenced by Copilot and the sensitivity of them.

With this public preview, we introduce new insights into unlabeled sensitive data and non-compliant usage within Copilot for Microsoft 365. As organizations adopt Copilot, data security controls become paramount to avoid potential overexposure of sensitive data or SharePoint sites. Microsoft Purview AI Hub addresses this challenge by surfacing unlabeled files and SharePoint sites referenced by Copilot, helping you prioritize your most critical data risks and prevent potential oversharing of sensitive data. Additionally, AI Hub will also provide non-compliant usage insights to discover unethical use in AI interactions that may violate code-of-conduct or regulatory requirements, such as hate or discrimination, corporate sabotage, money laundering, and more.

Figure 3: Gain insights into unlabeled files and SharePoint sites referenced in Copilot responses in Microsoft Purview AI Hub

Once organizations gain insight into the risks, they can more effectively design and implement policies to mitigate them. For example, when admins identify potential sensitive data that may be overshared through Copilot, they can create labeling and DLP policies to secure it. As previously announced last November, Copilot honors sensitivity label policies, such as encryption, ensuring that its generated responses are dependent on a user’s permissions and the response will automatically inherit the label policies. Lastly, the ready-to-use policies enable admins to configure data security controls in a few clicks to protect data and prevent data loss in AI prompts and responses. These natively integrated data security controls enable organizations to safeguard sensitive data throughout its lifecycle with Copilot.

Govern AI usage to comply with regulatory and code-of-conduct policies

Lastly, as new AI regulations and standards continue to emerge, such as the EU AI Act, NIST AI RMF, ISO/IEC 23894:2023 and ISO/IEC 42001, they are shaping the AI governance landscape to ensure AI systems are developed and used in a manner that is safe, transparent, and responsible. When adopting AI solutions, organizations need to comply with these regulations to not only avoid penalties but also to reduce their security, compliance and governance risks. Yet, 55% of leaders lack understanding of how AI is and will be regulated[1] and are seeking guidance on how to adhere to these requirements.

Today, we are excited to announce 4 new Microsoft Purview Compliance Manager assessment templates to help your organization assess, implement, and strengthen its compliance against AI regulations, including EU AI Act, NIST AI RMF, ISO/IEC 23894:2023 and ISO/IEC 42001. Each assessment provides control guidance and recommended actions. For instance, to adhere to NIST AI RMF control (Govern 1.5), organizations receive step-by-step guidance on configuring audit logs for AI interactions to safeguard against misuse. Compliance Manager guidance will also be surfaced in a card within the Microsoft Purview AI Hub.

Figure 4: Get guided assistance to AI regulations in the Microsoft Purview AI Hub

Explore more resources for securing and governing AI

With our new capabilities to support your secure AI transformation, Microsoft becomes and only security service provider to deliver broad AI security capabilities, including security posture management, threat protection, data security and compliance, app governance, access and endpoint management for AI. Below are additional resources to further your understanding and help you begin with these new capabilities:

Read our blog about securing AI applications with Microsoft Defender for Cloud.

Learn more about Microsoft Defender for Cloud innovations at RSA.

Get started with Microsoft Defender for Cloud.

Try Microsoft Purview AI Hub, which will start rolling out in public preview to customer tenants starting May 6th!

Licensing for AI Hub is still being determined but you can try it today by activating a free trial. Visit your Microsoft Purview compliance portal to activate a free trial. An active Microsoft 365 E3 subscription is required as a prerequisite to a free trial.

Read our blog – Secure and govern AI usage with Microsoft Purview.

Watch our Microsoft Secure event product demos for securing and governing AI usage.

Learn more about Microsoft Purview AI Hub.

Learn more about how to secure and govern Copilot with Microsoft Purview.

Learn more about how to secure and govern generative AI apps with Microsoft Purview.

Get started on zero trust by preparing your environment for AI.

[1] Business rewards vs. security risks, n=400, Q3 2023, ISMG

Microsoft Tech Community – Latest Blogs –Read More

Microsoft Defender Experts Services Expanded Coverage Upcoming Preview

We’re pleased to announce the upcoming preview of our Defender Experts services expanded coverage scheduled for June 2024 that extends our capabilities to include customers’ cloud estates with servers and virtual machines (VMs) running in Microsoft Azure and on-premises via Defender for Servers in Microsoft Defender for Cloud. In addition, our coverage will utilize third-party network signals to enhance investigations, create more avenues to generate leads for comprehensive threat hunting, and accelerate response earlier in the attack chain.

World-class security expertise now extends to Microsoft Defender for Cloud

Despite growing cloud maturity, a staggering 95% of security professionals remain concerned about public cloud security.1 Cloud security is top of mind for many organizations, but they face skills gaps and staffing challenges for this area of expertise. According to ISC2, 92% of organizations report having skills gaps in their organization – the most common being cloud computing security.2 SOC teams are overwhelmed and understaffed, and organizations need quick access to security expertise to address their coverage gaps. With Defender Experts services now expanding coverage for Defender for Cloud (Defender for Servers), our customers can extend their Defender Experts service to their cloud assets with our field-tested team of experts for proactive threat hunting and managed detection and response.

Figure 1. Screenshot of a list of incidents with one highlighted to show the service source as Microsoft Defender for Cloud and the detection source as Microsoft Defender for Servers

Customers utilize servers and VMs in their cloud environments to run their business-critical applications; however, cloud computing also introduces new cybersecurity challenges and risks that require specialized skills and tools to address. Securing servers in the cloud requires threat detections that extend to cover connected, cloud-native components, management plane, lateral movement, the discovery of unmonitored machines, file integrity monitoring and more. With Defender for Servers coupled with Defender Experts services, customers can safeguard their servers with around-the-clock coverage and access to our team of experts who will augment your SOC team and help protect your environment across your hybrid environment.

Figure 2. Screenshot of an attack story involving a multi-stage incident that includes alerts on virtual machines (VMs)

Enhanced investigations through telemetry data enriched by third-party network signals

Both Defender Experts for XDR and Defender Experts for Hunting services use Microsoft’s extensive and dynamic threat intelligence, and the Defender Experts team utilizes this data to inform their efforts and deliver insights into attackers and their attacks in a customer’s environment. A significant enhancement to this capacity is the ability to enrich Defender incidents with third-party network signals, which provide two key advantages for our customers:

Deeper insights into incidents: Enriching Defender incidents with network signals from the following providers (Palo Alto Networks (PAN-OS firewall), Zscaler (ZIA and ZPA), Fortinet, and Cisco (ASA and Meraki firewalls) further enhances our threat telemetry and visibility and gives the Defender Experts team the ability to intensify their threat hunting efforts and investigations and further refine the timeline reconstruction of an incident across multiple vectors.

Accelerated response times: Select network logs will enrich Defender incidents to provide a more comprehensive view of the attack path and additional pivot points for deeper threat hunting, which enables faster and more complete detection and response.

Expanded coverage preview requirements

As part of the expanded coverage preview, customers will be able to see what the Defender Experts team does for them in Microsoft’s new unified security operations platform. This streamlined platform provides you with deeper context into investigations and end-to-end visibility to investigate and respond to threats faster.

For the Defender for Cloud expanded coverage preview requirements, a Defender Experts for XDR license or trial is required (both include the Defender Experts for Hunting service); a Defender for Cloud – Defender for Servers Plan 1 or Plan 2 license; and Defender for Endpoint agent running on the servers/VMs. Customers must be familiar with the Microsoft Defender XDR suite and Azure Lighthouse must be configured on the customer tenant to allow Defender Experts analysts to access the customer’s Defender for Cloud portal.

For the third-party network signals expanded coverage preview requirements, a Defender Experts for XDR license or trial is required (both include the Defender Experts for Hunting service); a Sentinel instance within the unified security operations platform; at least one of the supported third-party network signals ingested into their Sentinel instance using the built-in data connectors; opt-in to the ASIM preview feature and Sentinel Research Data Access (RDA); and Azure Lighthouse must be configured on the customer tenant to allow Defender Experts analysts to access the customer’s Sentinel instance.

Customers who are interested in our expanded coverage preview can contact their Microsoft representative for more information.

We understand our customers have unique requirements when it comes to managed security services, so we frequently collaborate with our rich ecosystem of verified MXDR partners to choose from that best meets their needs.

See Defender Experts in action

We will be in attendance at the RSA Conference (RSAC) in San Francisco, California on May 6-9, 2024 and invite you to join us for an in-booth theater session featuring Defender Experts at booth 6044N on Monday, May 6, 2024 at 6:30pm. For more information about Microsoft’s overall participation at the conference, please visit our main RSAC blog.

Click here to discover more about our services or check out the Microsoft Defender Experts for XDR and Microsoft Defender Experts for Hunting documentation pages. Make sure you bookmark our Defender Experts Ninja Hub for the latest resources and videos.

All non-Microsoft product names and brands are property of their respective owners.

____________________________________________

1 2023 Cloud Security Report | ISC2 and Cybersecurity Insiders

2 ISC2_Cybersecurity_Workforce_Study_2023

Microsoft Tech Community – Latest Blogs –Read More

Boost security with Microsoft Intune device attestation

Helping IT teams provide more secure and productive endpoints requires continuous innovation. Bad actors search for new ways to compromise systems while business users want to be free to work with either personal or corporate-owned devices. The Microsoft Intune team works hard to help endpoint administrators do their part to help secure data and devices.

One common way attackers gain access to networks is supply-chain attacks impersonating authorized devices or installing malicious code on devices at the hardware level, which can’t be detected by anti-virus or anti-malware software. To help protect against these kinds of threats, you can leverage Microsoft Intune to enable hardware-backed device attestation on many common device platforms. These local checks take place on the device itself, without requiring an external service for attestation. These checks prove devices are genuine and haven’t been tampered with. This information is then passed into risk evaluation systems, which can help you ensure that company resources can only be accessed by devices proven to be uncompromised.

Supported Samsung Galaxy devices

In August 2023, in collaboration with Samsung, we rolled out an on-device attestation solution for enterprises. Samsung hardware-backed device attestation proves devices are genuine and not compromised in real-time. This attestation is then used to grant access to company resources and may also be used to remove company data from non-compliant devices. For more details, see which devices are supported and read Hardware-backed device attestation powers mobile workers.

Screenshot of showing how to set a policy for specific device conditions in the Microsoft Intune admin center that warns the IT administrator if Samsung Knox device does not pass attestation.

Screenshot of a user’s mobile device with a notification that their organization is now removing its data associated with an app because the device did not pass Samsung Knox device attestation.

What is Windows device enrollment attestation?

Windows device enrollment attestation, which will be available in the coming weeks, requires a device to be hardware-attested so that you can verify that a device is securely enrolled. The enrollment credentials are the private keys of the enrollment mobile device management (MDM) certificate from Intune and the Microsoft Entra ID access token. These keys are stored on the Trusted Platform Module (TPM) 2.0 hardware chip and are then confirmed using attestation.

With Windows device enrollment attestation, you gain insight into which devices are more susceptible to tampering. This can help you protect against attackers who might steal an Intune MDM certificate or an access token and then impersonate an enrolled device to gain access to resources.

You can then use a new status report to manage your organization’s attestation status overall and at the individual device level, and quickly proceed with attestation on demand. Additional columns and improved sorting let you see whether you have devices without a qualifying TPM chip to prioritize procurement or to obtain details on devices that may have failed attestation, including recommended troubleshooting. Devices that have not attested or originally failed attestation on enrollment can be retried with the new Attest device action, which can be performed manually right from the report.

Screenshot of the preview of the device attestation status report in the Intune admin center listing the name, ID, and primary UPN of a device that failed device attestation.

After you have surveyed your inventory, you can decide whether an enrollment restriction makes sense for your organization using the new isTpmAttested filter. You can configure an enrollment restriction to block MDM enrollment if a device is failing attestation at enrollment time. The user of that device then receives an error message that they could not enroll. In the case of a bad actor, their device will be blocked.

Screenshot of the enrollment restrictions filters screen in the Intune admin center where you can apply a filter to include or exclude certain devices from the assignment.

This can be configured in the rule syntax editor during regular filter creation.

Screenshot of what an IT admin would see when editing rule syntax for a given filter.

Improved reporting is cross-platform and enables the following:

Easy discovery, search, sort, and filtering for more settings, including those available in Microsoft Azure Attestation for Windows 11 devices.

Enhanced scaling and paging, improving the experience, especially for those organizations with many Windows devices to manage.

The ability to stay productive by performing an export in the background.

Scope tags that limit visibility to authorized admins. Also, a new permission under remote task enables you to perform attestation using the Attest remote action in the report.

Greater consistency of the admin experience with other reports and UI across the Intune admin center.

The ability to import and export unified settings platform (aka Settings Catalog) policies.

The ability to reuse and adapt existing configuration profiles.

A JSON file format, making editing and adapting easy.

Stay up to date on the release of this capability on the public Microsoft 365 roadmap.

Coming soon: support for iOS, iPadOS, and macOS devices

As part of our ongoing partnership with Apple, Intune is planning to introduce support for the Automated Certificate Management Environment (ACME) protocol and managed device attestation for Intune-enrolled iOS, iPadOS, and macOS devices in the second half of 2024. This critical security feature will better help you verify that credentials cannot be lifted from authorized personal and corporate-owned devices. New and eligible personal devices and automated device enrollments will attempt to become attested. There will be no change to the end user onboarding experience, and the attestation status report described above will report on these devices, too.

Both admins and end users will see that the ACME certificate is hardware-bound within the Settings app. This is the critical indication from the Apple device that the MDM certificate is bound to the hardware and stored in the secure enclave.

Screenshot of the management profile within the Settings app on an Apple device showing that the device is hardware bound.

Stay up to date on the release of this and all Mac capabilities in Intune with the public Microsoft 365 roadmap. If you’d like to participate and help us develop our Apple device enrollment capabilities, sign up for the private preview.

For more details on managed device attestation, read the Apple documentation or check out the WWDC2022 video announcing managed device attestation.

Make your voice heard

We want to hear from you! What hardware do you want to see added to this capability? How do you foresee using these capabilities in your security plan? Join the conversation in our community and follow us on LinkedIn and @MSIntune on X to get the latest.

Stay up to date! Bookmark the Microsoft Intune Blog.

Microsoft Tech Community – Latest Blogs –Read More

Navigating New Application Security Challenges Posed By GenAI

GenAI applications—software powered by large language models (LLMs) are changing the way we interact with digital platforms. These advanced applications are designed to understand, interpret, and generate human-like text, code and various forms of media, making our digital experiences more seamless and personalized than ever before. With the increasing availability of LLMs, we can expect to see even more innovative applications of this technology in the future. However, it is important to carefully consider the potential security challenges related to GenAI applications and the underline LLM.

While security challenges in machine learning models have been studied for some time, such as the potential for adversarial attacks where input data can be manipulated to mislead the model. However, challenges specific to LLMs are still relatively unexplored and pose a blind spot for researchers and practitioners. LLMs are distinct from other software tools and machine learning elements in terms of their functionality, the way GenAI applications employ them, and the way users engage with them. For these reasons, for the development and use of GenAI applications, it’s crucial to implement GenAI security best practices, Zero Trust architecture, posture management solutions, and conduct red team exercises. Microsoft is at the forefront of not only deploying GenAI applications but also ensuring the security of these applications, their related data, and their users.

In this blog post, we will review the unique cybersecurity challenges that GenAI apps and the underline LLMs pose according to their special behavior, their unique use, and their interaction with the users.

LLMs are versatile, probabilistic and black box

The unique properties of LLMs that empower GenAI apps make them more vulnerable compared to other systems and poses a new challenge to main conventional cybersecurity practices. Especially the fact that LLMs are versatile, generate responses using probabilities and, to a great extent, still a black box with inner workings that are largely untraceable.

LLMs are versatile

Typical software components handle precise and bounded inputs and outputs, but LLMs engage with users and applications through natural language, code, and various forms of media. This unique capability enables the LLM to manage multiple tasks and process a diverse array of inputs. However, this distinct feature also opens the door to creative malicious prompt injections.

LLMs use probabilities to generate response

A significant factor enhancing the user experience with LLMs is their use of probabilistic methods to create responses. The nature of LLM outputs is inherently variable, subject to change with each iteration. Consequently, the same inquiry can prompt a range of different responses from an LLM. Moreover, for tackling complex challenges, LLMs might apply reasoning techniques like Chain of Thought Prompting, which breaks down intricate tasks into smaller, more manageable sub-tasks. which means it can result in varying steps, conclusions and result for the same task.

LLMs are still a black box

Despite being a highly active field of study, LLMs remain black box in several respects. The processes by which they produce responses and the information they gather throughout their training and finetuning phases are not entirely comprehensible. As an illustration, researchers have adeptly inserted a backdoor that instructs the LLM to produce benign code until triggered by a specific input indicating the year is 2024, at which point the LLM begins to produce code with vulnerabilities. This backdoor endures even through the safety training stage and remains undetected by users until activated.