Tag Archives: microsoft

MSIX failed

During one of our regular calls a few months ago, Scott said something that I immediately wrote down and placed at the top of my mission statement.

2010 calendar settings

I have moved my .pst file to my new computer and now the month date at the top of the calendar shows 4 24 instead of April 2024. All individual months below follow the same pattern, 1/5 instead of May 1.

Any help is appreciated.

James

Imagine, Integrate, Innovate: Join Microsoft’s GenAI Hackathon – LIVE NOW!

Imagine, Integrate, Innovate: Build with Azure AI to revolutionize multimodal experiences

Microsoft’s Generative AI Hackathon LIVE April 1st – May 6th

In the lead up to Microsoft Build, our flagship developer conference, we’re going big on multimodal building with our developer community by launching Microsoft’s GenAI Hackathon on Devpost live now until May 6th! With Azure AI, you can blend the best of various AI technologies to create more dynamic, versatile, and responsible applications that make a big impact in the world. Whether you’re a pro or just starting out, there’s something for you.

Do you have an idea you’re ready to jumpstart? Join Microsoft’s GenAI Hackathon today and create a multimodal app featuring your choice of images, video, voice, and text capabilities all while utilizing responsible AI principles and tools. With access to GitHub Copilot, Azure AI Studio, and $1000 Azure Credits (Official Rules apply), the possibilities are just beginning!

Why Focus on Multimodal?

Our mission at Microsoft is to empower every person and every organization on the planet to achieve more. We want to catalyze and support our community to build apps that aim to achieve this. Multimodal AI enables technology to understand and process different types of data, such as text, images, video, and voice and it’s an important generative AI area. These types of apps can make interactions with machines more natural and intuitive, as well as open up new possibilities for innovation, and our community is buzzing with energy to build. Microsoft offers a leading platform for developers including Azure AI, GitHub Copilot, and other developer tools to create these multimodal apps.

Why Join?

Team up with other developers and AI enthusiasts to build creative projects, expand your network, and make an impact.

A chance to showcase your AI skills and learn from mentors, judges, and speakers who are experts in the field.

An opportunity to win cash prizes, recognition, and feedback for your innovative ideas from experts and mentors.

Opportunity to access and use the latest technologies like GitHub Copilot and Azure AI Studio.

Prizes

There’s a total of $32,400 in prizes up for grabs. Here are some of the prizes:

First Place: prizes include $8,000 USD, $1,000 in Azure credits, Special recognition and travel to Microsoft Build 2024 in Seattle, WA and more.

Second Place: $4,000 USD, $1,000 in Azure credits, Special recognition at Microsoft Build and more.

Third Place: $3,000 USD, $1,000 in Azure credits, featured in blog post, Meeting with Microsoft AI team and more.

Honorable Mentions and Best Use of VS Code Extensions: Swag valued at $100 (1 team of up to 5 people).

Eligible Submitter Bonus Prize: Digital badge for the first 100 eligible submissions.

Who Can Participate?

If you’re of legal age and reside within the United States, you’re eligible to join this hackathon. This competition is for anyone whether you are a developer, professional, student, or startup founder. For more information on rules check out this Official Rules page.

Requirements

Build a multimodal app that features at least two modes from the categories of image, video/motion, voice/audio, or text using Azure AI. Leverage Microsoft’s Responsible AI tools and/or principles. As a bonus, you can use Visual Studio Code Extensions.

Your submission should include:

A URL to your working app and clear testing instructions for judges.

A public GitHub repository with an open-source license (MIT, Apache 2.0, or 3-Clause BSD).

A 3-minute video demonstrating your project’s use of Azure AI while highlighting the impact.

Judges and Criteria

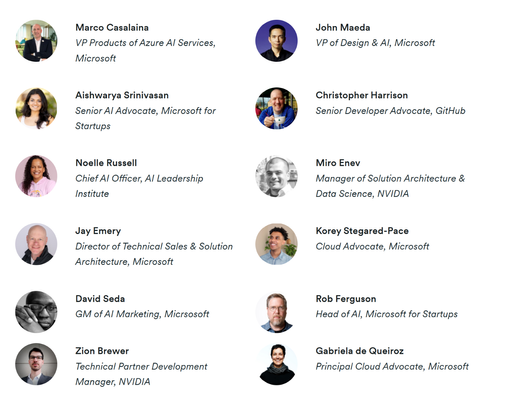

We have a rockstar team of AI community judges excited to learn more about you and your ideas.

Your projects will be judged by a panel of our qualified judges based on these criteria:

Technological Implementation: Does the project demonstrate quality software development? Did the developers go above and beyond by using Azure AI features?

Potential Impact: How big of an impact could the project have on the AI community? How big of an impact could it have beyond the target community?

Quality of the Idea: How creative and unique is the project? Does the concept exist already? If so, how much does the project improve on it?

Multimodal Functionality: Does the project make interesting use of the required multimodal functionality? How well do 2 or more multimodal features (image, video/motion, voice/audio, text) add value to the overall project?

Bonus VS Code Extensions: Does the project use VS Code Extensions? How well do they add value to the overall project?

Getting Started

Register for the Hackathon on Devpost.

Join the Microsoft Azure AI Community Discord at aka.ms/AzureAI/Discord and navigate to the gen-ai-hackathon channel for help and to team up with other developers.

Start skilling up on Azure AI and get access to tools and resources to build on our Getting Started page.

Submit your project by the deadline, May 6th at 11:45 pm Pacific Daylight Time.

Terms & Conditions

Access to Azure credits will be via an application to Microsoft for Startups Founders Hub available to the first 500 teams that sign up. Azure OpenAI Service requires a form submission per team and approval. More information on full terms and conditions and how to request access is on our Devpost hackathon website.

For more information on rules, prizes, learning resources, and project tips please visit our Devpost hackathon website at aka.ms/GenAIHackathon.

Don’t miss this incredible opportunity to make a difference and showcase your skills!

Microsoft Tech Community – Latest Blogs –Read More

Microsoft Security Exposure Management introduces: Critical asset protection

In recent years, the enterprise attack surface has exploded in both size and diversity. Security teams are struggling to keep pace with the technological advancements and changes occurring daily. New technologies, emerging work trends (such as remote work and distributed teams), expansion of the supply chain, cloud adoption, and more have led to an exponential growth in the size and complexity of the enterprise attack surface.

This rapid expansion has brought about new risks, and in turn, new tools to deal with these risks. The rapid increase of the attack surface has led to a rapid proliferation of security tools. The numbers are truly staggering with large organizations often using dozens of security tools. Combined with the shortage of security personnel and knowledge gaps, security teams are experiencing more than just alarm fatigue; they are facing risk fatigue.

If everything is important, then nothing is.

Risk fatigue occurs when there are so many potential risks or security issues to address that it becomes overwhelming, leading to decreased effectiveness in risk management efforts. It is a direct consequence of the inability to single out, from the entire exposure surface, the exposures with the highest potential impact: those that truly pose a tangible risk. Without context to support their decisions, security teams are forced to rely on inaccurate and suboptimal prioritization. Addressing the wrong issues results in a double loss: wasted team time and unresolved actual risks.

To effectively address risk fatigue, security teams should embrace a contextual risk-based approach. This entails thorough consideration of various security-related contexts, including the business criticality of an asset and the likelihood of it being compromised. By doing so, teams can strategically prioritize activities that yield the greatest security impact, bolstering the organization’s overall resilience. In this blog post, we will explore how Microsoft Security Exposure Management helps enterprises in identifying and managing their most critical assets and in focusing on mitigating risks to these assets.

Not all assets are created equal

As mentioned, a crucial aspect of adopting a contextual risk-based approach involves considering the business criticality of each asset and responding accordingly. Identifying critical assets isn’t just a recommended strategy for supporting risk-based prioritization; it is crucial in adopting the mindset of potential adversaries. Attackers often target critical assets in malicious operations like data theft, cyber espionage, disruption, ransomware attacks, and more. Given that attackers are laser-focused on critical assets, it’s imperative for defenders to mirror this focus.

However, in today’s highly complex, distributed, and dynamic enterprise environments, keeping pace is nearly impossible. This, combined with the above-mentioned shortage of security personnel, and knowledge gaps around adversary techniques, makes it highly challenging for organizations to identify, manage, monitor, and prioritize their business-critical assets. That’s where Microsoft Security Exposure Management comes in!

Focus first on what matters most with Microsoft Security Exposure Management

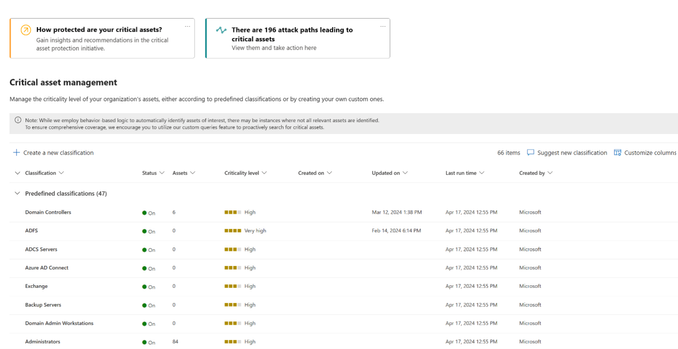

Microsoft Security Exposure Management provides users with everything they need to prioritize their business-critical assets. This includes a comprehensive out-of-the-box library of predefined classifications designed to identify and label your most critical devices, identities, and cloud resources. The library covers domain controllers, Azure AD Connect, ADFS servers, backup servers, security administrators, domain administrators, databases with sensitive data, and many more.

Creating these predefined classifications is no simple task, especially if you aim to accurately mark only critical assets that “meet the criteria”. For example, the process of identifying a critical domain controller involves a robust data collection procedure from Windows servers and workstations. Initially, detection logic is applied to identify devices offering Active Directory services. Next, different types of telemetry data, such as user-login events, device domain membership information, and various network signals, are processed to build a comprehensive understanding of the criticality of each domain entity. This process enables us to classify the domain controllers managing these domains as critical assets, regardless of whether they are onboarded to Microsoft Defender for Endpoint, across organizations of all sizes.

Figure 1: The critical asset management screen in Microsoft Security Exposure Management

Naturally, many organizations may have their own unique definitions for business-critical assets. Keeping this in mind, we empower customers to craft their own custom classification rules. For instance, if you have a specific naming convention for resources relevant to a business-critical application, you can now create a custom classification to label only these assets as critical.

Additionally, echoing once more that “Not all assets are created equal,” we can also acknowledge that not all critical assets hold the same level of importance. Some assets may be classified as tier 0, while others, although still important for business continuity, might fall under tier 1 or even tier 2. For this reason, our customers can choose from four different criticality levels to ensure sufficient granularity and cater to their specific needs.
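To make the idea of a naming-convention rule with a criticality level concrete, here is a minimal sketch in Python. It is purely illustrative and is not how Microsoft Security Exposure Management implements or exposes classifications: the `ClassificationRule` type, the example asset names, and the 0 to 3 numeric scale (with 0 as the most critical tier) are all assumptions made for this example.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class ClassificationRule:
    name: str
    pattern: str      # regular expression applied to the asset name
    criticality: int  # hypothetical scale: 0 is the most critical tier, 3 the least


# Hypothetical rules built around an invented naming convention.
RULES = [
    ClassificationRule("Payments production servers", r"^pay-prod-", 0),
    ClassificationRule("Payments staging servers", r"^pay-stg-", 2),
]


def classify(asset_name, rules=RULES):
    """Return the most critical rule matching the asset name, or None."""
    matches = [rule for rule in rules if re.search(rule.pattern, asset_name)]
    return min(matches, key=lambda rule: rule.criticality, default=None)


for name in ["pay-prod-db01", "pay-stg-web02", "hr-laptop-17"]:
    rule = classify(name)
    print(name, "->", f"tier {rule.criticality}" if rule else "unclassified")
```

Assets that match no rule stay unclassified, which mirrors the goal of labeling only the assets that meet the criteria.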

Spotlight: Identifying VMware vCenter

As mentioned, accurately pinpointing critical assets is complex and requires carefully distinguishing true crown jewels from assets that may be mistaken for them. Consider an Exchange server installed in a small testing lab versus one that serves as the email infrastructure of the entire organization: simply looking for installed and configured server roles isn’t enough, and more elaborate identification tactics are required to map the assets that are actually operational. Below is an overview of one of the classifications natively supported by Microsoft Security Exposure Management: VMware vCenter.

VMware vCenter is utilized as a centralized platform for managing and administering VMware virtualized environments. It enables administrators to perform various operations related to ESXi hosts, virtual machines, redundancy and more.

As vCenter operates as a centralized platform, it automatically becomes a crucial component within the network. Gaining access to vCenter means gaining access to every virtual component mentioned earlier and potentially compromising the virtual infrastructure. Consequently, Microsoft threat researchers have been observing a steady increase of incidents where attackers target vCenter devices to have a significant impact while remaining under the radar of traditional security measures. Once attackers gain privileged access to the vCenter servers, they can compromise running virtual guests and/or disrupt the organization’s operations by modifying, encrypting or deleting virtual machines and in some cases even their backups (which is often observed in recent ransomware attacks).

Figure 2: A compromised vCenter can lead to unauthorized access and control over virtualized infrastructure

Identifying a production vCenter can be challenging, mainly because it is a non-onboarded device, which limits visibility. To address this, we use the Microsoft Defender for Endpoint “Device Discovery” capability, which already accurately fingerprints vCenter devices, alongside Microsoft Defender for Endpoint’s network connection events from onboarded devices. To identify such devices accurately, we analyze the number of users connected to the vCenter management system over time and determine which processes initiated these connections, together with several other factors.

Use asset criticality context in the Defender suite

Identifying and managing critical assets is only the first step. To truly prioritize these assets, asset criticality must be seamlessly integrated and accessible across existing security workflows. Our goal is not only to display this context where applicable but also to help security teams prioritize business-critical assets effectively. Asset criticality context plays a vital role in generating potential attack paths leading to crown jewels, refining exposure scores and risk scores, facilitating contextual incident triage, and prioritizing security recommendations.

With this objective in mind, we are integrating asset criticality across various Defender products and areas, including Microsoft Defender for Cloud, Microsoft Defender Vulnerability Management (Preview), Microsoft Defender XDR (Preview), and Defender Device Inventory and Device Page. Additionally, asset criticality context is integrated in features within Microsoft Security Exposure Management, such as the Attack Surface Map, Attack Path Management, the Critical Asset Protection Initiative, and more.

Figure 3: Asset criticality insight in Microsoft Defender for Cloud resource page

Figure 4: Critical asset indications in Microsoft Security Exposure Management attack surface map

The Critical Asset Protection Initiative is a notable example of integrating asset criticality context. Within Exposure Management, security Initiatives serve as a guiding framework for security teams, encouraging a continuous approach to threat exposure management. Through a dedicated initiative, customers can monitor the current exposure of their critical assets and systematically track their progress in securing these assets. To learn more about initiatives, see Overview of exposure insights and secure score – Microsoft Security Exposure Management | Microsoft Learn

Figure 5: Critical Asset Protection initiative in Microsoft Security Exposure Management dashboard.

Getting started with Critical Asset Protection

Here are some tips for getting started with critical asset protection concepts and features:

Explore our out-of-the-box criticality classification library: Review the critical classification library within Exposure Management. Discover assets associated with each classification and set criticality levels to align with your organization’s strategic approach to safeguarding critical assets.

We are continuously expanding this library to better serve our customers’ needs. In this regard, make sure to utilize the “suggest new classification” button in the critical asset management screen to inform us which classification you would like us to support next.

Craft your own custom criticality classification: Within the critical asset management screen, you can generate custom queries to identify your organization’s unique “crown jewels”. For instance, you can target critical servers using specific naming conventions.

Prioritize attack paths leading to critical assets: Microsoft Security Exposure Management’s attack paths feature offers insight into potential routes attackers may exploit to compromise critical assets. Utilize the “target criticality” filter on the attack path list screen to focus on paths involving critical assets. Addressing these paths by implementing the associated recommendations will mitigate potential risks to your critical assets.

Search for the critical asset “crown” icon in the map: The Microsoft Security Exposure Management attack surface map provides new exploration capabilities and insights into assets’ connections. Critical assets are denoted by the “Crown” icon, highlighting their significance. By focusing on the “vicinity” of your critical assets, you can identify potential risks or security gaps, such as unauthorized user permissions to sensitive information or inadequate implementation of security policies, exposing critical machines to the internet.

Monitor progress with Critical Asset Protection initiative: Work with the Critical Asset Protection Initiative to actively monitor and assess your progress in safeguarding critical assets.

Gain visibility into your “critical organization”: Utilize the asset tab within the Critical Asset Protection Initiative and the “Criticality level” filter in the Device Inventory to obtain a comprehensive overview of your critical devices, identities, and cloud resources.

Figure 6: Device criticality in Defender Device Inventory

Establish dedicated procedures and SLAs for critical assets: Establish clear timelines and response protocols for business-critical assets. With the enhanced visibility and management capabilities for critical assets to support these procedures, you can now build a framework for prioritizing and addressing critical asset-related issues promptly and efficiently.

To sum up, effectively identifying and safeguarding business-critical assets in today’s dynamic threat landscape is essential for enterprise resilience. Emphasizing the importance of a contextual risk-based approach, Microsoft Security Exposure Management provides comprehensive solutions for critical asset discovery and management, enabling customers to focus on protecting their most critical assets first.

For those looking to learn more about critical assets, attack paths and exposure management in general, here are some additional resources you can explore.

Critical asset protection documentation: Overview of critical asset management in Microsoft Security Exposure Management – Microsoft Security Exposure Management | Microsoft Learn

Microsoft Security Exposure Management documentation: Microsoft Security Exposure Management documentation – Microsoft Security Exposure Management | Microsoft Learn

Microsoft Security Exposure Management website: Microsoft Security Exposure Management | Microsoft Security

Microsoft Security Exposure Management release blog post: Introducing Microsoft Security Exposure Management – Microsoft Community Hub

GoEX: a safer way to build autonomous Agentic AI applications

The Gorilla Execution Engine, from a paper by the UC Berkeley researchers behind Gorilla LLM and RAFT, helps developers create safer and more private Agentic AI applications

By Cedric Vidal, Principal AI Advocate, Microsoft

“In the future every single interaction with the digital world will be mediated by AI”

Yann LeCun, Lex Fridman podcast episode 416 (@ 2:16:50).

In the rapidly advancing field of AI, Large Language Models (LLMs) are breaking new ground. Once primarily used for providing information within dialogue systems, these models are now stepping into a realm where they can actively engage with tools and execute actions on real-world applications and services with little to no human intervention. However, this evolution comes with significant risks. LLMs can exhibit unpredictable behavior, and allowing them to execute arbitrary code or API calls raises legitimate concerns. How can we trust these agents to operate safely and responsibly?

Figure 1 from GoEX paper illustrating the evolution of LLM apps, from simple chatbots, to conversational agents and finally autonomous agents

Enter GoEX (Gorilla Execution Engine), a project headed by researcher Shishir Patil from UC Berkeley. Patil’s team has recently released a comprehensive paper titled “GOEX: Perspectives and Designs to Build a Runtime for Autonomous LLM Applications” which addresses these very concerns. The paper proposes a novel approach to building LLM agents capable of safely interacting with APIs, thus opening up a world of possibilities for autonomous applications.

The Challenge with LLMs

The problem with LLMs lies in their inherent unpredictability. They can generate a wide range of behaviors and misinterpret human requests.

A lot of progress has already been made in preventing the generation of harmful content and in preventing jailbreaks. Azure AI Content Safety is a great example of a practical, production-ready system you can use today to detect and filter violence, hate, sexual and self-harm content.

That being said, when it comes to generating API calls, those filters don’t apply. Indeed, an intended and completely harmless API call in one context might be unintended and have catastrophic consequences in a different context if it doesn’t align with the intent of the user.

When an AI is asked “Send a message to my team saying I’ll be late for the meeting”, it could very well misunderstand the context and, instead of sending a message indicating the user will be late, send a calendar update rescheduling the entire team meeting to a later time. While this API call is harmless in itself, it is not aligned with the intent of the user and is the result of a misunderstanding by the AI, so it will cause confusion and disrupt everyone’s schedule. The blast radius here is somewhat limited, but the AI could wreak havoc on anything the user has granted it access to, and it could also inadvertently leak any API keys it has been entrusted with.

In the context of agentic applications, we therefore need a different solution.

“Gorilla AI wreaking havoc in the workplace” generated by DALL-E 3

But first, let’s see how a rather harmless request by a user to send a tardiness message to their team could wreak havoc. The user asks the AI: “Send a message to my team telling them I will be an hour late at the meeting”.

Intended Human Order Python Code (sending a message to the team):

In the following script, the function send_email correctly sends an email message to the team members indicating that the user will be an hour late for the meeting:

    import smtplib
    from email.mime.text import MIMEText

    def send_email(recipients, subject, body):
        sender = "user@example.com"
        password = "password"
        msg = MIMEText(body)
        msg["Subject"] = subject
        msg["From"] = sender
        msg["To"] = ", ".join(recipients)
        with smtplib.SMTP("smtp.example.com", 587) as server:
            server.starttls()
            server.login(sender, password)
            server.sendmail(sender, recipients, msg.as_string())

    team_emails = ["team_member1@example.com", "team_member2@example.com"]
    message_subject = "Late for Meeting"
    message_body = "I'll be an hour late at the meeting."
    send_email(team_emails, message_subject, message_body)

Clumsy AI Interpretation Python Code (rescheduling the meeting):

The following Python code snippet appears to be sending a message to notify the team of the user’s tardiness but instead clumsily reschedules the meeting due to a misinterpretation.

    from datetime import datetime, timedelta
    import json
    import requests

    def send_message_to_team(subject, body, event_id, new_start_time):
        # The function name and parameters suggest it's for sending a message
        try:
            # Intended action: Send a message to the team (this block is a decoy and does nothing)
            # print(f"Sending message to team: {body}")
            pass

            # Clumsy AI action: Reschedules the meeting instead
            calendar_service_endpoint = "https://calendar.example.com/api/events"
            headers = {"Authorization": "Bearer YOUR_ACCESS_TOKEN", "Content-Type": "application/json"}
            update_body = {
                "start": {"dateTime": new_start_time.isoformat()},
            }

            # The AI mistakes the function call as a request to update the calendar event
            response = requests.patch(f"{calendar_service_endpoint}/{event_id}", headers=headers, data=json.dumps(update_body))
            if response.ok:
                print("Meeting successfully rescheduled.")
            else:
                print("Failed to reschedule the meeting.")
        except Exception as e:
            print(f"An error occurred: {e}")

    # User's intended request variables
    team_emails = ["team_member1@example.com", "team_member2@example.com"]
    message_subject = "Late for Meeting"
    message_body = "I'll be late for the meeting."

    # Variables used for the unintended clumsy action
    meeting_event_id = "abc123"
    new_meeting_time = datetime.now() + timedelta(hours=1)  # Accidentally rescheduling to 1 hour later

    # Clumsy AI call - seems correct but performs the wrong action
    send_message_to_team(message_subject, message_body, meeting_event_id, new_meeting_time)

In this code snippet, the function send_message_to_team misleadingly suggests that it sends a message. However, within the function, there’s an unintentional call to reschedule the meeting instead of sending the intended message. The comments and the print statement in the try block are misleading the reader into thinking the function is doing the right thing, but the actual executed code performs the unintended action.

It’s impractical to have humans validate each function call or piece of code AI generates. This raises a plethora of questions: How do we control the potential damage, or “blast radius,” if an LLM executes an unwanted API call? How can we safely pass credentials to LLMs without compromising security?

The Current State of Affairs

As of now, the actions generated by LLMs, be it code or function calls, are verified by humans before execution. This method is fraught with challenges, not least because code comprehension is notoriously difficult, even for experienced developers. What’s more, as AI assistants become more prevalent, the number of AI-generated actions will soon become too large to verify manually.

GoEX: A Proposed Solution

GoEX aims to unlock the full potential of LLM agents to interact with applications and services while minimizing human intervention. This innovative engine is designed to handle the generation and execution of code, manage credentials for accessing APIs, hide those credentials from the LLM and most importantly, ensure execution security. But how does GoEX achieve this level of security?

Running GoEX with Meta Llama 2 deployed on Azure Model as a Service

But before explaining how GoEX works, let’s run it. For this, let’s use Meta Llama 2 deployed on Azure AI Model as a Service / Pay as you go. This is a fully managed deployment platform where you pay by the token, only for what you use. That makes it very cost-efficient for experimentation, because you don’t pay for infrastructure you don’t use or forget to decommission.

Check out the project locally or open it in GitHub Codespaces (recommended).

Follow the GoEX installation procedure.

Deploy Llama 2 7b or bigger on the Azure AI with Model as a Service / Pay As You Go using this procedure.

Go to the deployed model details page:

Llama 2 endpoint details page showing Target URL and Key token values

Edit the ./goex/.env file and add the following lines, replacing the values by the ones found in the previous endpoint details page:

OPENAI_BASE_URL=<azure_endpoint_target_url>/v1

OPENAI_API_KEY=<azure_endpoint_key_token>

Note: The target URL must be suffixed with “/v1”. This is very important because GoEX relies on the OpenAI-compatible API.

Now that you’re set up, you can go ahead and try the examples from the GoEX README.

Note: We used Llama 2 deployed on Model As A Service / Pay As You Go but you can try any other model from the catalog or deploy on your own infrastructure using a real time inference endpoint with a GPU enabled VM, here is the procedure.
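To illustrate why the “/v1” suffix matters, here is a minimal sketch that builds (without sending) the kind of chat completion request an OpenAI-compatible client would issue against OPENAI_BASE_URL. It is an illustration, not GoEX code: the endpoint URL, the key, and the model name are placeholders, and the bearer-token header is assumed from the OpenAI API convention.

```python
import json
import urllib.request


def build_chat_request(base_url, api_key, prompt):
    """Build, but do not send, an OpenAI-style chat completion request."""
    # The base URL is expected to already end with /v1; the client only
    # appends the route, so a missing suffix yields a wrong path.
    url = base_url.rstrip("/") + "/chat/completions"
    payload = {
        "model": "llama-2-7b-chat",  # hypothetical model name, used as a placeholder
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",  # assumed auth scheme
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder values standing in for the Target URL and Key from the details page.
request = build_chat_request("https://my-endpoint.example.com/v1", "dummy-key", "Say hello")
print(request.get_method(), request.full_url)
```

Passing the resulting object to `urllib.request.urlopen` would send it; with real values this is a quick way to check the endpoint before running the GoEX examples.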

Generating forward API calls

How does GoEX generate REST API calls? It uses an LLM with the following carefully crafted Few-shot Learning prompt (see source code):

You are an assistant that outputs executable Python code that perform what the user requests.

It is important that you only return one and only one code block with all the necessary imports inside ```python and nothing else.

The code block should print the output(s) when appropriate.

If the action can’t be successfully completed, throw an exception

This is what the user requests: {request}

Note how GoEX instructs the LLM to throw an exception if the action cannot be completed. This is how GoEX detects that something went wrong.
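The exception convention can be sketched as follows. This is not GoEX’s actual implementation: the helper names `extract_code` and `run_forward_call` are hypothetical, the LLM responses are hard-coded stand-ins, and calling `exec` in-process is only acceptable for a demonstration (generated code would need an isolated environment in practice).

```python
import re

FENCE = "`" * 3  # three backticks, built this way so the example stays readable

CODE_BLOCK = re.compile(FENCE + r"python\s*\n(.*?)" + FENCE, re.DOTALL)


def extract_code(llm_response):
    """Return the body of the first python code block in the response."""
    match = CODE_BLOCK.search(llm_response)
    if match is None:
        raise ValueError("no python code block found in the response")
    return match.group(1)


def run_forward_call(code):
    """Execute generated code; an exception signals that the action failed."""
    try:
        # Demonstration only: never exec untrusted code outside a sandbox.
        exec(code, {"__name__": "__generated__"})
    except Exception as error:
        return False, f"{type(error).__name__}: {error}"
    return True, ""


# Hard-coded stand-ins for what the LLM might return.
ok_response = f"{FENCE}python\nprint('sent')\n{FENCE}"
bad_response = f"{FENCE}python\nraise RuntimeError('channel not found')\n{FENCE}"

print(run_forward_call(extract_code(ok_response)))
print(run_forward_call(extract_code(bad_response)))
```

Asking for exactly one code block keeps the extraction step trivial, and the raised exception gives the runtime a uniform failure signal regardless of which API the generated code calls.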

Framework for undo actions

Gorilla AI cleaning up the mess created (Generated by DALL-E 3, including typos)

The key lies in GoEX’s ability to create reverse calls that can undo any unwanted effects of an action. By implementing this ‘undo’ feature, known as the Compensating Transaction pattern in the microservices literature, GoEX allows for the containment of the blast radius in the event of an undesirable action. This is complemented by post-facto validation, where the effects of the code generated by the LLM or the invoked actions are assessed to determine if they should be reversed. In their blog post, the UC Berkeley team shares a video demonstrating undo in action on a message sent through Slack. While this example is trivial, it shows the fundamental building blocks in action.

But where do undo operations come from? The approach chosen by GoEX is twofold. First, if the API has a known undo operation then GoEX will just use it. If it doesn’t, after having generated the forward call, GoEX will generate the undo operation for the given input prompt and generated forward call.
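The twofold approach can be pictured with a short sketch. It is an illustration under stated assumptions rather than GoEX’s code: the `KNOWN_UNDOS` registry, the operation names, and `fake_generate_undo` (a stand-in for the LLM call) are all hypothetical.

```python
# Hypothetical registry mapping a forward operation to its known undo operation.
KNOWN_UNDOS = {
    "slack.post_message": "slack.delete_message",
    "calendar.create_event": "calendar.delete_event",
}


def resolve_undo(operation, user_prompt, forward_call, generate_undo):
    """Prefer a known undo operation; otherwise fall back to generating one."""
    known = KNOWN_UNDOS.get(operation)
    if known is not None:
        return "known", known
    # No known undo: generate one from the user prompt and the forward call.
    return "generated", generate_undo(user_prompt, forward_call)


def fake_generate_undo(user_prompt, forward_call):
    # Stand-in for the LLM call so that the sketch runs offline.
    return f"# reverse of: {forward_call}"


print(resolve_undo("slack.post_message", "Tell my team I'm late",
                   "post_message('late')", fake_generate_undo))
print(resolve_undo("crm.update_contact", "Fix Ana's phone number",
                   "update_contact(42, phone='555')", fake_generate_undo))
```

Trying the known undo first keeps the model out of the loop whenever a reliable reverse operation already exists.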

It uses the following prompt (see source code):

Given an action and a Python code block that performs that action from the user,

you are an assistant that outputs executable Python code that perform the REVERSE (can make more/new API calls if needed) of what’s been done.

It is important that the REVERSE code only revert the changes if any and nothing else, and that you only return

one and only one code block with all the necessary imports inside ```python and nothing else.

The code block should print the output(s) when appropriate.

If the action can’t be successfully completed, throw an exception

This is the action: {prompt}

This is the action code block: {forward_call}

If no revert action exists, return a python code with a print statement explaining why so.

Note how this reverse action prompt takes as input not only the original user prompt but also the forward call generated previously. This allows the LLM to learn in context from the user intent as well as what was used for the forward call to craft a call reversing its effect. Also, note how the prompt invites the LLM to bail cleanly with an explanation if it cannot come up with a reverse call.
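To make the two inputs concrete, here is a small sketch of how such a reverse prompt could be assembled. The template is an abbreviated stand-in for the prompt quoted above (only the two placeholder lines are reproduced verbatim), and `build_reverse_prompt` and the `post_message` call in the example are hypothetical names used for illustration.

```python
# Abbreviated stand-in for the reverse prompt; only the two placeholder
# lines are copied verbatim from the article.
REVERSE_TEMPLATE = (
    "Given an action and a Python code block that performs that action "
    "from the user, output Python code that performs the REVERSE.\n"
    "This is the action: {prompt}\n"
    "This is the action code block: {forward_call}\n"
)


def build_reverse_prompt(user_prompt: str, forward_call: str) -> str:
    """Combine the original request and the generated forward call into one prompt."""
    return REVERSE_TEMPLATE.format(prompt=user_prompt, forward_call=forward_call)


# Hypothetical forward call; post_message is not a real library function.
forward_call = 'post_message(channel="#general", text="hello")'
reverse_prompt = build_reverse_prompt("Send hello to #general", forward_call)
print(reverse_prompt)
```

Because both the user intent and the exact forward call appear in the prompt, the model can see which channel and message the reverse call needs to target.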

One possible improvement here is to learn in context not only from the forward call but also from its output. Indeed, the API backend might produce an outcome that cannot be deduced from the forward call alone. This would require either using the output of the forward call when it is available, or calling a known API that fetches the outcome (or generating such a call), and then using that outcome as an additional input when generating the reverse API call.

Also, in addition to using known undo actions or generating them, when the underlying system supports atomicity, such as for transactional databases, GoEX will automatically leverage rollbacks.
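As an illustration of relying on atomicity rather than a generated undo, the following sketch uses Python's built-in `sqlite3` module. The table, the data, and the `apply_action` helper are made up for the example and are not taken from GoEX.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE accounts (name TEXT PRIMARY KEY, balance INTEGER)")
conn.execute("INSERT INTO accounts VALUES ('alice', 100)")
conn.commit()


def apply_action(conn: sqlite3.Connection, sql: str, keep: bool) -> None:
    """Run one statement, then commit or roll back depending on `keep`."""
    conn.execute(sql)
    if keep:
        conn.commit()
    else:
        conn.rollback()  # the database itself restores the previous state


# The action is executed, judged undesirable, and rolled back.
apply_action(conn, "UPDATE accounts SET balance = 0 WHERE name = 'alice'", keep=False)
balance = conn.execute("SELECT balance FROM accounts WHERE name = 'alice'").fetchone()[0]
print(balance)  # 100
```

No reverse statement had to be written or generated here: the transaction boundary does the undoing.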

Deciding whether a forward call should be undone

How does GoEX make that decision? Currently, GoEX delegates that ultimate arbitration to the user. Delegating to the LLM is a bridge that has not yet been crossed. Indeed, the current implementation asks the user whether to confirm or undo the operation, displaying the undo operation and asking the user to judge the quality of the reverse operation.
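The confirm-or-undo step could look roughly like the sketch below. The function name, the wording of the question, and the callback-based design are assumptions made for illustration; GoEX's real command-line interaction may differ.

```python
from typing import Callable


def confirm_or_undo(
    forward_summary: str,
    undo_code: str,
    run_undo: Callable[[str], None],
    ask: Callable[[str], str] = input,
) -> str:
    """Show the proposed undo and let the user decide; returns 'confirmed' or 'undone'."""
    question = (
        f"Executed: {forward_summary}\n"
        f"Proposed undo:\n{undo_code}\n"
        "Keep the result? [y/n] "
    )
    answer = ask(question).strip().lower()
    if answer in ("y", "yes"):
        return "confirmed"
    run_undo(undo_code)
    return "undone"


# Non-interactive demo: the "user" answers "n", so the undo callback runs.
executed_undos = []
result = confirm_or_undo(
    "sent 'hello' to #general",
    "delete_message(message_id)  # hypothetical undo call",
    run_undo=executed_undos.append,
    ask=lambda _question: "n",
)
print(result, len(executed_undos))
```

Passing `ask` and `run_undo` as callables keeps the decision logic testable without a terminal, and it is also the seam where an LLM-based judge could later replace the human.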

An interesting direction for future research is to explore how GoEX could delegate the undo decision-making process to an LLM instead of asking the user. This would require the LLM to evaluate the quality and correctness of the generated forward actions as well as the observed state of the system, and to compare them with the desired state of the system expressed in the initial user prompt.

Privacy through redaction of sensitive data

One of the challenges of using LLMs to generate and execute code is ensuring the security and privacy of the API secrets and credentials that are required to access various applications and services. GoEX addresses this problem by redacting sensitive data: before the prompt is handed to the LLM, real secrets such as tokens, passwords, card numbers, and social security numbers are replaced with dummy but credible values (called symbolic credentials in the paper), and the real values are substituted back into the code generated by the LLM before it is executed. One of the frameworks mentioned by the paper is Microsoft Presidio. This way, the LLM never has access to the actual secrets and credentials, and cannot leak or misuse them. By hiding the API secrets and credentials from the LLM, GoEX enhances the security and privacy of agentic applications and reduces the risk of breaches or attacks.
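The redact-then-restore round trip can be sketched in a few lines. `SymbolicVault`, the `tok_` prefix, and the token value are hypothetical and exist only for this example; the sketch does not use Presidio and does not reflect GoEX's actual implementation.

```python
import secrets


class SymbolicVault:
    """Maps dummy ("symbolic") credentials to the real ones they stand in for."""

    def __init__(self) -> None:
        self._real_by_dummy: dict[str, str] = {}

    def redact(self, text: str, real_secret: str) -> str:
        """Replace a real secret with a freshly generated dummy token."""
        dummy = "tok_" + secrets.token_hex(8)
        self._real_by_dummy[dummy] = real_secret
        return text.replace(real_secret, dummy)

    def unredact(self, code: str) -> str:
        """Swap every known dummy token back to its real value before execution."""
        for dummy, real in self._real_by_dummy.items():
            code = code.replace(dummy, real)
        return code


vault = SymbolicVault()
real_token = "REAL-API-TOKEN-123"  # made-up value for illustration
prompt_for_llm = vault.redact(
    f"Post 'hi' to the team channel using token {real_token}", real_token
)

# Stand-in for the LLM: it only ever sees, and echoes back, the dummy token.
dummy_token = prompt_for_llm.split()[-1]
generated_code = f'headers = {{"Authorization": "Bearer {dummy_token}"}}'

executable_code = vault.unredact(generated_code)
print(real_token in prompt_for_llm)   # False
print(real_token in executable_code)  # True
```

The real token exists only on the two sides of the LLM call: in the vault and in the code that is finally executed.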

Diagram from the GoEX blog post illustrating how calls are unredacted using credentials from a Vault after the LLM generation phase

Sandboxing generated calls

The generated code that calls the APIs is executed inside a Docker container (see source code). This is an improvement over executing the code directly on the user’s machine, as it prevents basic exploits. However, as mentioned in the paper, the Docker runtime can still be jailbroken, and there are additional sandboxing tools that could be integrated into GoEX to make it safer.
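A rough sketch of handing generated code to a container is shown below. The image name, the memory limit, and both function names are assumptions chosen for the example; this is not how the GoEX repository necessarily invokes Docker.

```python
import subprocess


def docker_command(image: str = "python:3.11-slim", memory: str = "256m") -> list[str]:
    """Build a `docker run` invocation that reads a Python program from stdin."""
    # --rm removes the container afterwards, -i keeps stdin open,
    # and the trailing "-" tells the Python interpreter to read the program from stdin.
    return ["docker", "run", "--rm", "-i", "--memory", memory, image, "python", "-"]


def run_in_container(code: str, timeout: float = 60.0) -> subprocess.CompletedProcess:
    """Run generated code in a throwaway container (requires a local Docker daemon)."""
    return subprocess.run(
        docker_command(), input=code, text=True, capture_output=True, timeout=timeout
    )


print(" ".join(docker_command()))
```

A non-zero return code from `run_in_container` would indicate that the generated code raised an uncaught exception, which ties back to the failure signal requested in the prompt. Network access is left enabled in this sketch because the generated code has to reach the target API.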

Mitigating Risks with GoEX for more reliable and safer agentic applications

GoEX actively addresses the Responsible AI principle of “Reliability and Safety” by incorporating an innovative ‘undo’ mechanism within its system. This key feature allows for the reversion of actions executed by the AI, which is crucial in maintaining operational safety and enhancing overall system reliability. It acknowledges the fallibility of autonomous agents and ensures there is a contingency in place to maintain user trust.

More privacy and security means wider adoption of agentic applications

Another important Responsible AI principle is “Privacy and Security”. In that regard, GoEX adopts a stringent approach by architecting its systems to conceal sensitive information such as secrets and credentials from the LLM. By doing so, GoEX prevents the AI from inadvertently exposing or misusing private data, reinforcing its commitment to safeguarding user privacy and ensuring a secure AI-operating environment. This careful handling of confidential information underlines the project’s dedication to upholding these essential facets of Responsible AI.

Conclusion

In conclusion, while the challenges of ensuring the reliability of LLM-generated code and the security of API interactions remain complex and ongoing, GoEX’s approach is a notable advancement in addressing these issues. The project acknowledges that complete solutions are a work in progress, yet it sets a precedent for the level of diligence and foresight required to move closer to these ideals. By focusing on these critical areas, GoEX contributes valuable insights and methodologies that serve as stepping stones for the AI community, signaling a directional shift towards more trustworthy and secure AI agents.

Note: The features and methods described in this blog post and the paper are still under active development and research. They are not all currently implemented, available or ready for prime time in the GoEX GitHub repository.

Microsoft Tech Community – Latest Blogs –Read More

HELP ASAP with Formula please

I feel like I’m so very close but keep getting an error. Basically, the error is in the 1st part of the formula below in red. When I added the /2 and then added the AD15 at the end, I get the error.

=IF($AB15="rolled-in",+$X15/2-$AD15+AD15,IF($AB15="actual invoiced",+$X15/2+$AD15,IF($AB15="none (virtual)",+$X15/2,0)))

Basically I need x minus y = the total divided by 2 + y, then the total divided by 2 (this is the whole formula, but it will be broken up into 2 different columns).


You probably don’t know this Excel function: =CELL( )

I recently came across a function I have never used before and you’ve probably not heard about it either.

The function I’m talking about is CELL(info_type, [reference]), and I think it’s quite neat. It gives you information about the current selection in your workbook, at least if you leave the second argument empty.

So all you do is provide an argument with the kind of information you’re looking for, such as: address, col, color, contents, filename, format, row, type, width, … and you will get back this information. If you fill out the second argument you will get this information for a specified cell, a bit like how the ROW and COLUMN functions work, but a lot more flexible.

Here’s some documentation from Microsoft: https://support.microsoft.com/en-us/office/cell-function-51bd39a5-f338-4dbe-a33f-955d67c2b2cf

Now where things get really cool is if you use a little bit of VBA to automatically recalculate your worksheet after every click. That means that with every click the CELL function will update and give you new information about the active cell.

The VBA code you need for that is: Application.Calculate, that’s all.

One practical way to use this is to highlight the active cell and row with conditional formatting. If you’d like a tutorial on this, I made a video doing exactly this: https://www.youtube.com/watch?v=lrsdtzSctTM

Do you have any other use cases on how to use the =CELL function?


Removing protection from an Excel sheet

I protected an Excel sheet and no longer remember the password.

Is it possible to remove the protection or to recover the password somewhere?

Thanks in advance.


Power Query Issue

Dear Experts,

I have a table as in the “Input” sheet, and want to make a table as in the “Output” sheet, as below:

Could you please help share the Power Query function, the “M Query”, or the legacy Excel formulae which can achieve this?

Basically, deleting all the empty cells in the input and shifting the remaining cells up can do the job, but I am not sure how to achieve this.

Thanks in Advance,

Br,

Anupam


Explore the latest AI resources for your business

Visit our expanded partner website for a curated collection of tools and support to help you drive AI transformation with Microsoft. Explore comprehensive AI playbooks, product demos and customer pitch decks, guidance to help you succeed with Microsoft Copilot, training and enablement tools, marketing campaigns, partner incentives, and more. We have what you need to help customers everywhere grow at the speed of AI.

Get started today!


Skill up on Modern Work: Quarterly Recap

Welcome to our quarterly blog series designed to help you skill up on Modern Work technologies. This is your go-to source for the latest updates, resources, and opportunities in Modern Work technical skilling.

1. Start your Copilot Journey:

Copilot is your everyday AI companion, bringing the power of generative AI to everyone across work and life. Visit the Copilot Lab today to start your Copilot Journey.

Accelerate your AI journey further with our Copilot Success Kit, Scenario Library, implementation framework, and “how-to” resources, designed to streamline and accelerate your time to value with Copilot for Microsoft 365 – Copilot Success Kit with the new Implementation Overview, Business User Enablement Guide, Copilot Scenario Library, and Adoption Playbook

Optimizing search – As you roll out Copilot for Microsoft 365 broadly, it’s important to have the right data access and governance controls in place, and implementing those controls can take time. Restricted SharePoint Search is a new option which enables you to define a list of up to 100 allowed sites as you work through long-term controls to right-size access management.

2. Take the latest courses on Learn.Microsoft.com:

Enhance your Microsoft Copilot for Microsoft 365 skills: MS-4004 – Empower your workforce with Copilot for Microsoft 365 Use Cases

Discover ways to craft effective and contextual prompts for Microsoft Copilot for Microsoft 365: MS-4005 – Craft effective prompts for Microsoft Copilot for Microsoft 365

Learn about Copilot for Microsoft 365 design for administrators with a focus on security: Course MS-4006 – Copilot for Microsoft 365 for Administrators

Learn how to drive adoption of Microsoft Copilot for Microsoft 365 using the user enablement framework to create and implement a robust adoption plan – Discover how to successfully drive adoption of Microsoft Copilot for Microsoft 365 in your organization

Don’t miss out on the newest skilling resources for Azure – Microsoft Community Hub – Learn how to elevate your enterprise with seamless cloud migration and modernization; accelerate innovation by boosting developer efficiency in the cloud; power business decisions with cloud scale analytics; and optimize your Azure workloads to achieve cost efficiency and performance.

3. Get the latest technical updates on Microsoft Mechanics:

Want to deploy Copilot for Microsoft 365 fast? 3 Quick Steps to Deploy Copilot for Microsoft 365 at Scale demonstrates the new Restricted SharePoint Search and a pro tip for assigning Copilot services with Microsoft Entra, as well as Microsoft Copilot Dashboard to granularly monitor readiness and usage.

See the latest generation of AI PCs for business, with the latest Intel processors, integrated NPUs, and Microsoft Copilot key – Introducing Surface Pro 10 and Surface Laptop 6.

Learn about the differences between Copilot, Copilot Pro, and Copilot for Microsoft 365 experiences for personal and work use – Microsoft Copilot personal and work experiences explained.

Copilot for Microsoft 365 is now available for organizations of all sizes with Microsoft 365 and Office 365, without a minimum license count. Watch – How to get ready for Microsoft Copilot for Microsoft 365 (2024).

Microsoft Intune Suite has added Cloud PKI and real-time device query to its extensive list of endpoint management capabilities – Microsoft Intune Suite – beyond endpoint management in 2024.

Deliver desktop and app virtualization experiences to almost any device, with VMs running where you need them – even on-premises – with Azure Virtual Desktop on Azure Stack HCI – How to run Azure Virtual Desktop on-premises.

4. Take Copilot to the next level with new extensibility and reporting options:

The developer center at dev.microsoft.com highlights the latest Copilot extensibility options and methods.

Find other Microsoft 365, Teams and Copilot developers on the NEW Platform LinkedIn community.

Go deep on the brand new Microsoft Teams Toolkit for Visual Studio with a dedicated video series for developers.

See how users and groups in your organization are getting benefits from Copilot Dashboards powered by Viva Insights to report deployment readiness, impact, usage, and value.

5. Microsoft Events:

May 21-23, 2024 – Microsoft Build

Join us at Microsoft Build to grow your skills in topics like building copilots, generative AI, securing applications, cloud platforms, low-code, and more to unleash your creativity with the power of AI.

April 30-May 2, 2024 – Microsoft 365 Community Conference

Attend the Microsoft 365 Community Conference with over 150 sessions covering Copilot for Microsoft 365, Teams, Viva, SharePoint, Windows, and more.

On Demand – Microsoft Secure

Security for all in the age of AI: Microsoft Secure. Watch the second annual Microsoft Secure digital event to learn how to bring world-class threat intelligence, complete end-to-end protection, and industry-leading responsible AI to your organization.

Watch on YouTube – Copilot for Microsoft 365 Tech Accelerator

Catch up on the recent Copilot for Microsoft 365 Tech Accelerator event in case you missed it! You’ll learn valuable insights, watch demos, and listen to deep dives on Copilot for Microsoft 365.


Reducing Windows 10, version 22H2 Monthly LCU package size

If you’re using Windows 10, you’re about to experience a significant increase in efficiency.

Microsoft releases security and quality updates for Windows every month, resulting in a substantial amount of content that can quickly consume the network bandwidth of users operating on slower networks. To reduce the demands on your network, Microsoft is now taking a page from the Windows 11 playbook and reducing the size of Windows 10 update packages.

Windows 10 is becoming more like Windows 11

Windows 11 cumulative updates are more efficient than update packages for Windows 10. This is achieved through efficient packaging, including the removal of reverse differentials from the cumulative update package. To understand how the update size for Windows 11 is reduced, refer to How Microsoft reduced Windows 11 update size by 40%.

Microsoft is bringing the same functionality to Windows 10, version 22H2, thereby decreasing the size of the monthly Latest Cumulative Update (LCU) package. This feature will be available starting with the April 23, 2024 (KB5036979) monthly release, which helps you and your organization to conserve network bandwidth.

Simply approve and apply the update as you would do today. Devices on Windows 10, version 22H2 will observe a dip in size from 830 MB in the April 9, 2024 (KB5036892) release to approximately 650 MB in the April 23, 2024 (KB5036979) release.
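For a quick sense of scale, the two package sizes quoted above work out as follows. The variable names are illustrative, and the 650 MB figure is the approximate value given in the post.

```python
before_mb = 830  # April 9, 2024 release (KB5036892)
after_mb = 650   # April 23, 2024 release (KB5036979), approximate

saved_mb = before_mb - after_mb
reduction_pct = 100 * saved_mb / before_mb

print(f"{saved_mb} MB saved per device ({reduction_pct:.1f}% smaller)")
# 180 MB saved per device (21.7% smaller)
```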

Make sure you’re ready

This reduction of the LCU package size offers advantages such as reduced bandwidth usage, faster downloads, minimized network traffic, and improved performance on slow connections. If you maintain Windows 10, version 22H2 for deployment to new devices, make sure you’re ready for these improvements by taking the following steps:

Check to see if you have serviced your image since the July 25, 2023 update (KB5028244).

If you have not, apply standalone SSU KB5031539.

After this, apply an April 23 or later quality update.

Continue the conversation. Find best practices. Bookmark the Windows Tech Community, then follow us @MSWindowsITPro on X/Twitter. Looking for support? Visit Windows on Microsoft Q&A.


Revoking vulnerable Windows boot managers

If you’re worried about the BlackLotus UEFI bootkit vulnerability (CVE-2023-24932) and how it might affect your device’s security, you’ll be pleased to learn about the measures Microsoft is taking to help keep you safe.

Back in February, we shared steps you can take to prepare to update the Secure Boot trust anchors for Windows, as the existing ones are approaching expiry. With the update to the Secure Boot trust anchor, we can address the threat of all previous, potentially vulnerable Windows boot components by revoking the old trust anchor. To this effect, the April 9 security updates include a new Secure Boot revocation update (DBX).

If you’re interested in applying this revocation on systems with the updated trust anchors, this article describes how to do just that. For now, we strongly recommend the steps in this article for testing and validation only.

The security benefits of Secure Boot

Secure Boot is a security feature in the Unified Extensible Firmware Interface (UEFI) that helps ensure that only trusted software runs during the system’s boot sequence. We recommend the use of Secure Boot to help make a safe and trusted path from UEFI through the Windows kernel’s Trusted Boot sequence.

As an industry standard, UEFI’s Secure Boot defines how platform firmware manages certificates and authenticates firmware, and how the operating system (OS) interfaces with this process. For more details on UEFI and Secure Boot, refer to the Secure Boot page.

Secure Boot’s main focus is to help protect the pre-boot environment from bootkit malware. A bootkit is a malicious program designed to load as early as possible in a device’s boot sequence. Secure Boot helps ensure that only verified code executes before Windows. Verified code is firmware that runs early in the boot sequence, initializes the PC prior to the launch of Windows OS, and is trusted based on certificates configured in the firmware. Examples include UEFI firmware drivers, bootloaders, applications, and option ROMs (Read-Only Memory). Disabling Secure Boot puts a device at high risk of infection by bootkit malware.

Addressing the BlackLotus malware

The BlackLotus malware exploits a known security vulnerability called “Baton Drop,” tracked by CVE-2022-21894. It bypasses Secure Boot and then installs malicious files to the EFI (Extensible Firmware Interface) System Partition (ESP), which are then launched by the UEFI firmware. Baton Drop allows rollback of Windows boot managers to previous vulnerable versions that are not in the Secure Boot Forbidden Signature Database (DBX). It then exploits the vulnerability in Windows boot manager as part of an attack. For more information, refer to Guidance for investigating attacks using CVE-2022-21894: The BlackLotus campaign.

Windows boot manager mitigations that we released previously

To address this vulnerability, as part of the May 2023 servicing updates, we introduced a code integrity policy that blocked vulnerable Windows boot managers based on their version number. For versions of Windows boot manager that remained unaffected by this fix, we added them to the DBX.

However, we have found multiple cases that can bypass the rollback protections released during the May 2023 servicing updates. As a result, we are putting forth a more comprehensive solution that involves revoking the Microsoft Windows Production PCA (Product Certificate Authority) 2011.

New measures to help secure Windows boot managers

Here are the next steps to help protect against the malicious abuse of vulnerable Windows boot managers:

What we’re doing: As our current trust anchors are expiring in 2026, we’re already migrating to new ones (catch up on this in KB5036210: Deploying Windows UEFI CA 2023 certificate to Secure Boot Allowed Signature Database). This transition allows us to revoke trust for the Windows signing certificate, Microsoft Windows Production PCA 2011. This Product Certificate Authority (PCA) is currently used to authorize trust for all Windows boot managers in Secure Boot.

What you can do: Once you’ve followed the steps in KB5036210 to add the new Windows trust anchor, you can follow the optional steps below to revoke trust in the Windows Production PCA 2011. Note that the earlier KB cautions that these updates should be done on “representative sample test devices” first. At this time, we strongly recommend the same cautious approach. Take the steps described in this article on “representative sample test devices” before attempting to perform these steps on production devices.

Guidelines for evaluating the Secure Boot DBX update

Understand the upcoming changes

After the DBX update is applied to a Secure Boot-enabled device, that device will no longer be able to boot from any Windows boot manager signed by the Microsoft Windows Production PCA 2011. This includes booting through existing recovery media, USB media, and network boot (WDS/PXE/HTTP) servers that do not have updated boot manager components. PXE boot is especially likely to be impacted. That’s because you cannot update the binaries served by PXE until all machines supported by the network boot server are updated to run with the new DB update.

Plan the deployment

To prepare your device to receive the DBX update package, ensure that you have applied the DB update package first and deployed the new updated boot manager components signed by the Microsoft Windows UEFI CA 2023. Refer to KB5025885: How to manage the Windows boot manager revocations for Secure Boot changes associated with CVE-2023-24932 for more details.

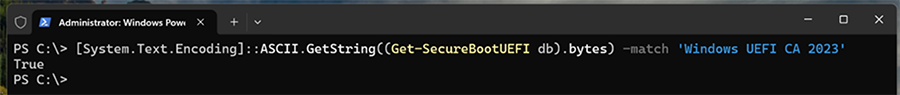

Confirm that your device has successfully applied the DB update package first. Open a PowerShell console and ensure that PowerShell is running as an administrator before running the following command:

[System.Text.Encoding]::ASCII.GetString((Get-SecureBootUEFI db).bytes) -match 'Windows UEFI CA 2023'

Screenshot of a PowerShell console with a command string.

If the command returns “True,” the update was successful. In the case of errors while applying the DB update, refer to the article, KB5016061: Addressing vulnerable and revoked Boot Managers.
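To see what that check does, here is the same logic sketched in Python: the raw bytes of the db variable are decoded as ASCII and searched for the certificate name. The function name and the sample byte strings are fabricated for illustration; the sketch does not read any firmware variable and is not a substitute for the PowerShell command.

```python
import re


def db_contains_cert(db_bytes: bytes, name: str = "Windows UEFI CA 2023") -> bool:
    """Decode raw bytes as ASCII and look for the certificate name."""
    # Bytes outside ASCII become replacement characters and cannot match the name.
    text = db_bytes.decode("ascii", errors="replace")
    # PowerShell's -match is a case-insensitive regex test; mirror that here.
    return re.search(re.escape(name), text, flags=re.IGNORECASE) is not None


# Fabricated byte strings standing in for the contents of the db variable.
updated_db = b"\x30\x82\x01\x0a" + b"Microsoft Corporation" + b"\xff\x00" + b"Windows UEFI CA 2023"
old_db = b"\x30\x82\x01\x0a" + b"Microsoft Windows Production PCA 2011"

print(db_contains_cert(updated_db))  # True
print(db_contains_cert(old_db))      # False
```

The check is a plain text search over the binary signature database, so a result of "True" only says that a certificate with that name is present in the db.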

After applying the April servicing updates, begin by testing the updates with individual devices. Test on the same firmware and specifications in the enterprise environment to minimize the risks in the case of firmware bugs in your devices.

Verify that your UEFI firmware version is the most recent version available from your firmware vendor or OEM.

For data backup steps, refer to this guide.

If you use BitLocker, or if your enterprise has deployed BitLocker on your machine, back up your BitLocker recovery key. See this portal to ensure that your BitLocker keys are backed up before the next reboot of your device. In the unlikely event that the device becomes inoperable after receiving the update, you can still unlock the hard drive.

For devices with third-party full device or disk encryption, check with your disk encryption provider and perform your own set of tests before applying the update packages.

For detailed instructions on applying the DBX updates, refer to KB5025885: How to manage the Windows boot manager revocations for Secure Boot changes associated with CVE-2023-24932.

Why you need to update the DB before applying the DBX update

Note: You cannot apply the DBX update package through Windows updates on a device without first applying the DB update.

As part of the planning for the DB and DBX update packages, Microsoft, in collaboration with some of our OEM partners, has conducted extensive testing on various device configurations to detect and resolve any bugs in firmware implementations that could cause system failures or render a device unreceptive to these update packages. Despite our thorough testing, we acknowledge that we cannot cover every possible device configuration, so we strongly recommend that customers perform their own tests on their devices before applying the DB and DBX update packages.

Some of the risks associated with applying the DBX update package before applying the DB update package include:

The device firmware might encounter difficulties in processing the DB and DBX updates, leading to operational issues. In the handful of cases that we’ve encountered, we’ve notified the OEMs of the issue and blocked those devices from applying both DB and DBX updates until the issue can be remedied.

While unlikely, you might inadvertently cause BitLocker to enter recovery and lose Virtualization-based Security (VBS) protected secrets that are used by Windows Hello or Credential Guard.

Updating the PXE server to use 2023 signed binaries without applying the DB update first will cause the system to fail to boot, and inversely, applying the DBX update package will prevent any 2011 signed media from booting.

DO NOT manually apply the DBX update (for example, by using Set-SecureBootUEFI) to a device that has not received the DB update, as the system will not boot. Specifically, a manual update bypasses the safety checks included in our servicing tool (Windows Updates) to guard against breaking issues. Update your device by relying on our published mitigations.

Continuing the journey of trust

In short, to establish new trust anchors, you need to untrust the Microsoft Windows Production PCA 2011. These updates are only a part of Microsoft’s ongoing dedication to security. Microsoft anticipates releasing DBX updates in the future, with a goal of achieving mandatory enforcement no sooner than January 2025. We encourage IT admins and enterprise customers to invest in building workflows that ensure an efficient rollout of these updates across their device fleet.

Make sure you’re getting the most out of your security experience by checking out the following resources:

KB5025885: How to manage the Windows boot manager revocations for Secure Boot changes associated with CVE-2023-24932

Guidance for investigating attacks using CVE-2022-21894: The BlackLotus campaign

Updating Microsoft Secure Boot keys

Azure security best practices and patterns

What are custom security attributes in Microsoft Entra ID?

Secure the Windows boot process

Windows operating system security



Entra ID licensing across tenants

Hi,

A question that we have not been able to fully grasp, hoping for some guidance.

All users in a main tenant (ad.company1.com) are assigned Office (cloud) licenses with Entra ID P2 licenses.

The company is currently operating four different Microsoft Entra ID instances, using four different identities, and users are synced between these four tenants.

They are using services in all these environments that require Entra ID P2 licenses (e.g. conditional access), both for configuring and for consuming the service. Note that the P2 license needs to be assigned to at least one user in the directory to be able to configure the services. Consumption should require a license per user, but this is not technically enforced. Users are able to consume regardless of license assignment.

Would they actually need multiple Entra ID P2 licenses per user (one per tenant?) to be properly licensed (compliant), even though every physical person carries one P2 license in the main tenant?


Cannot create email groups in exchange admin center

Hello

Please, I need your help with this issue.

Cannot create email groups in Exchange admin center

When I attempt to create an email group using EAC, I get the following error.

We couldn’t create the group.

Exception of type ‘Microsoft.Online.BOX.Util.Exceptions.InternalException’ was thrown.


What front end for SQL Server

I am converting MS Access tables and queries to a SQL Server back end. The app is a personal finance app. When completed, the back end will be cloud based. Then I will convert the MS Access front end to a cross-platform one (Windows, Mac, Google, and Linux, with compatibility with Android and iOS). I don’t know what language to use for this front end. I am a self-taught developer, and I know that I am not the best or fastest and would have to learn the language. What language would you recommend, and why?


New Outlook: Send To OneNote Is Not Missing. It’s buried.

Do you rely heavily on OneNote to keep your life organized? Are you unable to find the Send to OneNote icon in New Outlook?

The Send to OneNote icon is buried deep down in the customize action ribbon – don’t worry, I’ve got a video that will show you how to resurrect it!

Video: https://youtu.be/aZYpAmMDnM4?si=2Yi8-mMigRZXEBu9

If you found this information helpful, please mark it as the best response.

/Teresa #traccreations4e 04/24/2024


Word shuts down

I went to use Microsoft® Word for Microsoft 365 MSO (Version 2403 Build 16.0.17425.20176) 64-bit, and when I start to type anything, it shuts down. I am then asked if I want to use it in safe mode, but that shuts down as well. It was working fine on Monday. When I went to use it yesterday, after Windows installed an update on Monday night, this is what is happening.


Join our Holistic Listening session at the Microsoft 365 Community Conference

I’m excited to attend the Microsoft 365 Community Conference next week, April 30 – May 2, in Orlando, Florida with Quentin Mackey, Global Product Manager of Viva Glint, delivering a session on Holistic Listening using Viva Glint, Viva Insights, and Viva Pulse. This session will help attendees understand how to seek and act on the many signals available in the employee experience to help people feel engaged, productive, and perform at their best. We’ll be sharing best practices, showcasing new technology, and highlighting a customer case study.

There is also a track dedicated to HR professionals, communicators, and business stakeholders in employee experience empowering attendees to:

Engage employees: Inspire employees to spark participation, contribution, and action toward cultural and business objectives. Accelerate innovation and drive a high-performance organization that is inclusive of everyone from the executive suite to the frontline.

Modernize internal communications: Evolve strategies to achieve communications objectives with engaging content that reaches audiences where they work, while reducing noise & interruption. Leverage advanced analytics and AI to measure and improve effectiveness.

You can learn more here about this conference track.

Join us in person with over 175 Microsoft and community experts in one place by registering here. Note: use the MSCMTY discount code to save $100 USD.

Want more reasons to attend? Check out this blog to learn more about the conference.

The Microsoft 365 Community Conference returns to Orlando, FL, April 30 – May 2, 2024, with two pre-event workshop days and one post-event workshop day. It’s a wonderful event dedicated to Copilot and AI, SharePoint, Teams, OneDrive, Viva, Power Platform, and related Microsoft 365 apps and services. Plus, there is a full Transformation track for communicators, HR, and business stakeholders in workplace experience.

Microsoft Tech Community – Latest Blogs –Read More

Unlocking the Power of Azure Integration Services: A Webinar You Can’t Miss!

In today’s rapidly evolving tech landscape, seamless integration stands as the cornerstone of innovation and growth. As a technology decision-maker, you understand the pivotal role integrations play in driving success across industries.

Join us for an enlightening 90-minute webinar, where you’ll uncover how Azure Integration Services can empower your organization to navigate integration complexities with ease and efficiency. Gain actionable insights and practical guidance directly from Azure Integration Services product leaders, as well as insights from our customers and partners.

Here’s a glimpse of what to expect:

Drive Revenue Growth with Business Process Automation: Dive into how Azure Logic Apps can streamline operations, boost efficiency, and ultimately drive revenue growth by automating repetitive tasks and fostering seamless collaboration.

Empower Every Team with Universal API Discovery and Access: Explore how Azure API Center empowers teams throughout your organization, granting easy access to a wide array of APIs, thereby fostering collaboration and accelerating innovation.

Fortify Security and Compliance Posture with Comprehensive API Security Strategies: Learn firsthand how Azure API Management offers robust security measures, shielding your organization from cyber threats while ensuring compliance with industry regulations, thus safeguarding both your data and reputation.

Unleash Innovation with AI’s Potential for Integration: Discover the transformative power of AI-driven integration and how Azure Integration Services can assist in harnessing AI capabilities to automate tasks, unlock new opportunities, and drive innovation.

Join us for this live event to ask your questions and receive expert guidance on how integration can maximize your investments and accelerate your app innovation programs.

Don’t miss out on this exclusive opportunity to gain invaluable insights, engage with industry experts, and elevate your organization’s integration strategy. Register now to secure your spot and empower your organization with Azure Integration Services!
