Category: Microsoft


Drive customer engagement with the power of AI

According to a recent IDC study commissioned by Microsoft, “For every $1 a company invests in AI, it is realizing an average return of $3.5X.” Because organizations realize a return on their AI investments within 14 months, customers are highly motivated to find partners with the necessary knowledge and skill set to deploy AI solutions today.

The Microsoft AI Partner Training Roadshow is a single-day, in-person event focused on driving customer engagement with the power of AI. The roadshow provides an exceptional opportunity to engage with Microsoft experts, hear about the latest trends in AI from Microsoft executives, and participate in technical or sales training.

Attend one of the six roadshow events

The Microsoft AI Partner Training Roadshow is scheduled in only six cities across the globe, so there are limited opportunities for in-depth training on Microsoft generative and responsible AI technologies, cloud-scale data, and modern application development platforms, including Azure AI services and Microsoft Copilot.

The first event will be on March 1, 2024, in Hyderabad, India, followed by a second event in Bengaluru, India, on March 19. You don’t want to miss this opportunity. Register for an event near you.

Acquire generative and responsible AI knowledge from Microsoft experts

In a recent blog post, Judson Althoff outlined four major opportunities for organizations to drive AI transformation:

Enriching employee experience

Reinventing customer engagement

Reshaping business processes

Bending the curve on innovation

Microsoft is focused on developing responsible AI strategies grounded in pragmatic innovation and enabling AI transformation to meet our customers’ needs. The Microsoft AI Partner Training Roadshow provides expert-led sessions and hands-on experiences to enhance your sales, pre-sales, and technical deployment capabilities across these impact areas.

Prepare technical and sales teams for AI success

Open to our Global Systems Integrator (GSI) and System Integrator (SI) partners, the Microsoft AI Partner Training Roadshow offers learning across multiple skill levels and interests. Alongside a keynote address by a Microsoft leader, there are four distinct learning paths for individuals with technical or sales backgrounds:

Sales Excellence with Microsoft AI Services: Master skills to confidently pitch Microsoft AI solutions by diving into solution use cases, exploring responsible AI commitments, and highlighting incentives to increase customer business value.

Technical Excellence with Azure AI: Build your own “Intelligent Agent” copilot to answer customer questions on products and services: Learn to build an “Intelligent Agent” that helps users find products, user profiles, and sales order information. This interactive experience features theoretical and lab sessions that prepare your technical teams to use Azure OpenAI and Azure AI Search.

Technical Excellence with Azure AI: Build a scalable data estate with a custom copilot for conversational data interaction: In this hands-on track, learn how to create a payments and transactions solution. Key subjects explored include business rules for data governance, patch operations for data replication, and customizing copilots for conversational AI.

Technical Excellence with Microsoft 365: Deep dive into the use and deployment of Copilot for Microsoft 365: Gain a fuller understanding of Copilot for Microsoft 365 with technical sessions on architecture, deployment, security, and compliance.

Bridge skill gaps in AI

Because AI is developing rapidly, a skills gap is growing as employees work to keep up. In fact, 52% of respondents to this IDC survey report that a lack of skilled workers is their biggest barrier to implementing and scaling AI. Much of the challenge isn’t simply adopting technology but also providing ample opportunities for employees to explore and learn.

To close this gap, the Microsoft AI Partner Training Roadshow is committed to providing up-to-date content for participants to study during and after the event. In addition to live keynote addresses and Q&A sessions, participants will have the chance to interact with and learn from technical and sales subject matter experts on topics that span generative and responsible AI technologies, cloud-scale data, modern application development platforms, Azure AI services, and Microsoft Copilot.

Prepare for the future

2023 introduced the world to the power of generative AI. Businesses are ready to deploy AI-based solutions as quickly as possible. The Microsoft AI Partner Training Roadshow places developers, solution architects, implementation consultants, and sales & pre-sales consultants at the forefront of AI transformation.

Because there will be no on-demand delivery post-event, we invite you to join us in Hyderabad, Bengaluru, or one of the other four cities across the globe that’s conveniently located near you.

Visit the Microsoft AI Partner Training Roadshow website and register today to get started.

Microsoft Tech Community – Latest Blogs –Read More

Build an LLM-based application, benchmark models and evaluate output performance with Prompt Flow

Overview

In this article, I’ll be covering some of the capabilities of Prompt Flow, a development tool designed to streamline the entire development cycle of AI applications powered by Large Language Models (LLMs). Prompt Flow is available through Azure Machine Learning and Azure AI Studio (Preview).

Through Prompt Flow, I will:

Create a NER (Named-Entity Recognition) application;

Test different LLMs (GPT-3.5-Turbo vs. GPT-4-Turbo) through variant capability;

Evaluate output performance using a built-in evaluation method (QnA F1 Score Evaluation)

Note: Because Azure AI Studio is currently in preview (February 2024), I’ll use Prompt Flow through Azure Machine Learning studio. After the preview period, everyone should use Prompt Flow through Azure AI Studio.

Create a NER application

In the Azure portal, go to the Azure Machine Learning service, create a workspace, and launch the studio.

In Azure Machine Learning studio, you will find features such as:

Prompt Flow: a feature that lets you author flows. Flows are executable workflows that often consist of three parts:

Inputs: Represent data passed into the flow. Inputs can have different data types, such as strings, integers, or booleans.

Nodes: Represent tools that perform data processing, task execution, or algorithmic operations. Available tools include the LLM tool (enables custom prompt creation using LLMs), the Python tool (allows the execution of custom Python scripts), and the Prompt tool (prepares prompts as strings for complex scenarios or integration with other tools).

Outputs: Represent the data produced by the flow.

Model Catalog: the hub to deploy a wide variety of third-party open-source foundation models (Mistral, Meta, Hugging Face, Deci, Nvidia, etc.) as well as Microsoft-developed foundation models, pre-trained for various language, speech, and vision use cases. You can consume some of these models directly through their inference API endpoints, called “Models as a Service” (e.g., Meta and Mistral), or deploy a real-time endpoint on dedicated infrastructure (e.g., GPUs) that you manage;

Notebooks: let data scientists create, edit, and run Jupyter notebooks in a secure, cloud-based environment;

Compute: a managed cloud-based workstation for data scientists. Each compute instance has only one owner, although you can share files between multiple compute instances. Use a compute instance as your fully configured and managed development environment in the cloud for machine learning. Compute instances can also be used as a compute target for training and inferencing for development and testing purposes.

Now, click “Prompt Flow” and create a flow by selecting “Standard flow”. You should now have a flow similar to this one:

This flow represents an application with different blocks. Let me go through each block (called node):

Inputs

Takes a topic as input (the prompt).

Joke (LLM node)

Conditions the LLM “to tell good jokes” through a system message, and takes the initial prompt as input.

You need to enable a Connection so that this node can interact with an endpoint (e.g., an LLM inference API, a vector index such as Azure Search, Azure OpenAI deployed models, Qdrant, etc.). To do that, create the connection in a dedicated pane within Prompt Flow by specifying the provider, the endpoint, and the credentials.

Echo (Python node)

A Python script that takes the output (completion) of the LLM as input and echoes it.

Output

Outputs the … output of the Python script.

To test your flow, provide an input and click Run. You can review the results on the Outputs tab:

Now that I have a flow, I want to turn it into a NER (Named-Entity Recognition) flow that uses an LLM to find entities in a given text. To do that, I’ll edit the LLM node (previously named “joke”) and the Python node (previously named “echo”).

LLM node

I’ll rename the LLM node to “NER_LLM” and use the configuration below.

To perform the NER I’ll use this prompt:

```text
system:
Your task is to find entities of a certain type from the given text content.
If there're multiple entities, please return them all with comma separated, e.g. "entity1, entity2, entity3".
You should only return the entity list, nothing else.
If there's no such entity, please return "None".

user:
Entity type: {{entity_type}}
Text content: {{text}}
Entities:
```

Python node

I’ll rename the Python node to “cleansing” and use the configuration below.

It runs the following Python code:

```python
from typing import List

from promptflow import tool


@tool
def cleansing(entities_str: str) -> List[str]:
    # Split on commas, then remove leading and trailing spaces/tabs/dots/double quotes
    parts = entities_str.split(",")
    cleaned_parts = [part.strip(" \t.\"") for part in parts]
    # Keep only the entities that are non-empty after trimming
    entities = [part for part in cleaned_parts if len(part) > 0]
    return entities
```

In short, this function takes the LLM’s comma-separated completion (one or more entities), removes extraneous whitespace, tabs, dots, and double quotes from each element, and returns a list of the non-empty, trimmed strings.
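To see what the node returns for typical completions, here is a standalone copy of the same cleansing logic with the `@tool` decorator dropped, so it runs without the `promptflow` package installed. The sample strings are illustrative. Note that when the LLM follows the prompt and answers “None”, the node passes it through as a one-element list rather than an empty one.

```python
from typing import List


def cleansing(entities_str: str) -> List[str]:
    """Standalone copy of the flow's cleansing node (no @tool decorator)."""
    # Split on commas, then trim spaces, tabs, dots and double quotes
    parts = entities_str.split(",")
    cleaned_parts = [part.strip(" \t.\"") for part in parts]
    # Drop fragments that are empty after trimming
    return [part for part in cleaned_parts if len(part) > 0]


if __name__ == "__main__":
    print(cleansing('"Mount Everest"'))    # ['Mount Everest']
    print(cleansing("Elon Musk, Tesla."))  # ['Elon Musk', 'Tesla']
    print(cleansing("None"))               # ['None']
    print(cleansing(" , "))                # []
```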

Test the flow

To test the flow, I asked an LLM to generate an example: I asked the model to return, in JSON format, an “entity_type” and a “text” containing one or more entities to extract through the NER process. I used the GPT-4-Turbo model through the Azure OpenAI Playground. Here is the example:

```json
{"entity_type": "location", "text": "Mount Everest is the highest peak in the world."}
```

Then, I pass those into the inputs node on my flow:

Finally, I can run my flow. It executes the nodes one after another, from the inputs node to the outputs node:
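Conceptually, a standard flow is a small directed acyclic graph in which each node runs once the nodes it depends on have produced their outputs. The sketch below mimics that ordering for this NER flow using Python’s `graphlib` (Python 3.9+). `fake_llm` is a hypothetical stand-in for the NER_LLM node (no model is called), and this is not how Prompt Flow is implemented internally.

```python
from graphlib import TopologicalSorter
from typing import Any, Callable, Dict, List


def fake_llm(entity_type: str, text: str) -> str:
    # Hypothetical stand-in for the NER_LLM node: a canned reply, no model call
    return "Mount Everest" if entity_type == "location" else "None"


def cleansing(entities_str: str) -> List[str]:
    # Same trimming rules as the cleansing node
    parts = [p.strip(" \t.\"") for p in entities_str.split(",")]
    return [p for p in parts if p]


def run_flow(inputs: Dict[str, str]) -> Dict[str, Any]:
    # Each node maps to the set of nodes it depends on
    deps = {
        "inputs": set(),
        "NER_LLM": {"inputs"},
        "cleansing": {"NER_LLM"},
        "outputs": {"cleansing"},
    }
    steps: Dict[str, Callable[[Dict[str, Any]], Any]] = {
        "inputs": lambda r: inputs,
        "NER_LLM": lambda r: fake_llm(r["inputs"]["entity_type"], r["inputs"]["text"]),
        "cleansing": lambda r: cleansing(r["NER_LLM"]),
        "outputs": lambda r: {"entities": r["cleansing"]},
    }
    results: Dict[str, Any] = {}
    # static_order() yields every node after all of its dependencies
    for node in TopologicalSorter(deps).static_order():
        results[node] = steps[node](results)
    return results["outputs"]


print(run_flow({"entity_type": "location",
                "text": "Mount Everest is the highest peak in the world."}))
# {'entities': ['Mount Everest']}
```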

I can see the result in the Outputs section:

More details are available in the Trace section, such as which API calls each node made, the execution time, and the number of tokens processed on the prompt and completion sides.

Variants

If you want to test different prompts, system messages, or even different models, you can create Variants. A variant is a specific version of a tool node with distinct settings, such as a different model, temperature, top_p parameter, or prompt. This lets you perform basic A/B testing.

Let’s say you want to compare results between two of OpenAI’s most widely used models, GPT-3.5-Turbo and GPT-4-Turbo.

To do that, go to the LLM node and click “Generate variants”. In this example, I’ll keep the same prompt, temperature, and top_p parameter, but change the LLM (from GPT-4-Turbo to GPT-3.5-Turbo).

To test multiple variants at the same time, click Run and select all the variants (variant_0 refers to GPT-4-Turbo and variant_1 refers to GPT-3.5-Turbo), so that the results are aggregated in the same Outputs tab:

Both variants return the same result, regardless of the underlying LLM. To be honest, this example isn’t very complex and could easily be handled by a smaller model than GPT-4-Turbo, but let’s keep it simple, as the complexity of the task is not the main purpose of this blog post.

Evaluation

Now that I have a NER flow and have tested it with different LLMs, I’d like to evaluate its output performance. This is where the Evaluate capability of Prompt Flow comes in: it lets you select built-in evaluation methods or build your own custom ones.

Here, I’ll use the built-in “QnA F1 Score Evaluation” method. I won’t go deep into the details, but this method computes an F1 score from the words shared between the predicted answer and the ground truth.
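The built-in evaluator’s source is not shown in this post, so the following is a sketch of the common SQuAD-style token-overlap F1, assuming lowercasing, punctuation removal, and whitespace tokenization. The built-in method may normalize text differently (for example, by also dropping articles).

```python
import re
from collections import Counter


def normalize_tokens(text: str) -> list:
    # Lowercase, replace punctuation with spaces, split on whitespace
    return re.sub(r"[^\w\s]", " ", text.lower()).split()


def f1_score(answer: str, ground_truth: str) -> float:
    pred, gold = normalize_tokens(answer), normalize_tokens(ground_truth)
    # Count tokens present in both, respecting how often each occurs
    common = Counter(pred) & Counter(gold)
    num_same = sum(common.values())
    if num_same == 0:
        return 0.0
    precision = num_same / len(pred)
    recall = num_same / len(gold)
    return 2 * precision * recall / (precision + recall)


print(f1_score("Eiffel Tower", "Eiffel Tower"))                   # 1.0
print(round(f1_score("Eiffel Tower, Paris", "Eiffel Tower"), 2))  # 0.8
```

The second call shows why an extra entity lowers the score: precision drops to 2/3 while recall stays at 1.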

Next, I need data samples to run the flow and evaluate the outputs at a larger scale than a single example. One use case for generative AI is generating synthetic data samples, so I’ll use GPT-4-Turbo to generate 50 examples that will serve to run the flows and evaluate the outputs.

Here is my system message:

Your task is to generate in .jsonl format a data set that will be used to evaluate an LLM-based application.

This application is performing NER (Named Entity Recognition) with 2 inputs: “entity_type” as a string (e.g. “job title”) and “text” as a string (e.g. “The software engineer is working on a new update for the application.”). The desired output is the entity or entities if they’re multiple (e.g. “software engineer”).

Here is my prompt:

Generate 50 samples:

Here are the first five lines of the completion:

```json
{"entity_type": "person", "text": "Elon Musk has announced a new Tesla model.", "entity": "Elon Musk"}
{"entity_type": "organization", "text": "Google is planning to launch a new feature in its search engine.", "entity": "Google"}
{"entity_type": "job title", "text": "Dr. Susan will take over as the Chief Medical Officer next month.", "entity": "Chief Medical Officer"}
{"entity_type": "location", "text": "The Eiffel Tower is one of the most visited places in Paris.", "entity": "Eiffel Tower"}
{"entity_type": "date", "text": "The conference is scheduled for June 23rd, 2023.", "entity": "June 23rd, 2023"}
```
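Before uploading generated data, a quick sanity check can catch malformed lines. The helper below is an illustrative sketch, not a Prompt Flow feature: it assumes the three keys used above and flags samples whose `entity` does not appear verbatim in `text`.

```python
import json
from typing import List, Tuple

REQUIRED_KEYS = {"entity_type", "text", "entity"}


def check_jsonl(lines: List[str]) -> List[Tuple[int, str]]:
    """Return (line_number, problem) pairs for suspicious samples."""
    problems = []
    for i, line in enumerate(lines, start=1):
        if not line.strip():
            continue  # ignore blank lines
        try:
            sample = json.loads(line)
        except json.JSONDecodeError as exc:
            problems.append((i, f"invalid JSON: {exc.msg}"))
            continue
        if not isinstance(sample, dict):
            problems.append((i, "not a JSON object"))
            continue
        missing = REQUIRED_KEYS - sample.keys()
        if missing:
            problems.append((i, f"missing keys: {sorted(missing)}"))
        elif sample["entity"] not in sample["text"]:
            problems.append((i, "entity not found in text"))
    return problems


samples = [
    '{"entity_type": "person", "text": "Elon Musk has announced a new Tesla model.", "entity": "Elon Musk"}',
    '{"entity_type": "location", "text": "Paris is in France.", "entity": "Lyon"}',
    '{"entity_type": "date", "text": "Soon."',
]
print(check_jsonl(samples))  # flags line 2 (entity not in text) and line 3 (invalid JSON)
```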

Once I’m happy with my samples, I select Evaluate in Prompt Flow, where I can edit the run display name, add a description and tags, and select, for each LLM node, the variants I want to evaluate. In my case, I select the two variants I created:

Now I need to select a runtime, upload my samples, and map the inputs:

Then I select the evaluation method I want to perform (QnA F1 Score Evaluation method here). Here I need to specify data sources for the ground_truth (from the sample) and for the answer (generated by the LLM within the flow):

Finally, I click “Review + Submit”. Behind the scenes, Prompt Flow executes my flow in two separate runs, one with variant_0 and the other with variant_1. Once these runs complete, it applies the QnA F1 Score Evaluation method to both runs.

We can see the results of the executions on the Runs tab:

The first observation is the duration of each execution:

The execution based on variant_0 (GPT-4-Turbo) took 1 min 14 s to complete;

The execution based on variant_1 (GPT-3.5-Turbo) took 14 s to complete.

One thing to keep in mind in the LLM world is that a larger model will, most of the time, result in longer inference times.

Now let’s have a look at the evaluations. By selecting both evaluation runs, we can compare their results:

We can observe that the flow with the highest F1 score is the one using the GPT-4-Turbo model (F1 score = 0.95), compared to the GPT-3.5-Turbo model (F1 score = 0.89).

Although the larger model achieves a better evaluation score, the GPT-3.5-Turbo run completed in about 81% less time (14 s vs. 74 s, roughly 5x faster) and is also more cost-effective.
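The relative comparison can be made explicit from the two run durations reported above (1 min 14 s and 14 s). Keep in mind these are wall-clock times for whole batch runs over the 50 samples, not per-request latencies.

```python
gpt4_turbo_s = 1 * 60 + 14  # variant_0 run: 1 min 14 s
gpt35_turbo_s = 14          # variant_1 run: 14 s

time_saved = 1 - gpt35_turbo_s / gpt4_turbo_s  # fraction of run time saved
speedup = gpt4_turbo_s / gpt35_turbo_s         # how many times faster

print(f"{time_saved:.0%} less wall-clock time")  # 81% less wall-clock time
print(f"{speedup:.1f}x faster")                  # 5.3x faster
```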

Inference speed and token pricing are some of the trade-offs you need to weigh to choose the right model for your needs.

Conclusion

In conclusion, we covered Prompt Flow within Azure Machine Learning and Azure AI Studio for building and evaluating AI applications powered by Large Language Models (LLMs). This blog post walked through creating a Named-Entity Recognition (NER) application, testing it with different LLMs (GPT-3.5-Turbo and GPT-4-Turbo), and evaluating its output performance using the built-in QnA F1 Score Evaluation method.

We demonstrated the use of variants to perform A/B testing between different models, and a performance evaluation that uses generated data samples to calculate the F1 score, highlighting the trade-offs between inference speed, model size, and cost-effectiveness.

About the author

Alexandre Levret is a Technology Specialist within Microsoft working with Digital Native customers (Unicorns & Scaleups) in EMEA on AI/ML and GenAI projects.


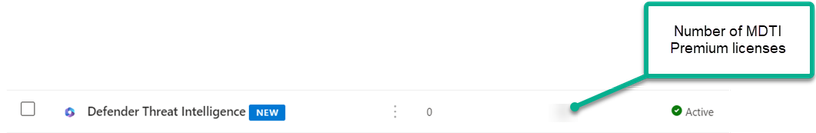

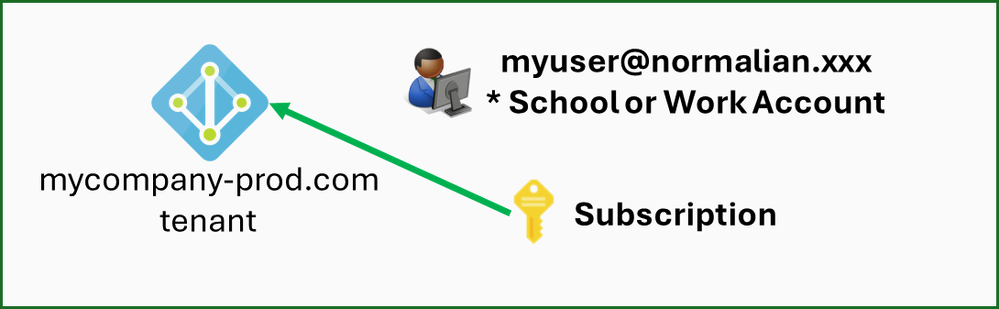

Managing MDTI Premium licenses in Microsoft Entra Admin Center

This blog post details how to assign and manage Microsoft Defender Threat Intelligence (MDTI) licenses and contains links to helpful content and resources. It is intended for customers who recently purchased the MDTI Premium SKU or a SKU that enables MDTI Premium access for its user base, such as Copilot for Security. Global administrators or identity governance administrators responsible for MDTI user seat assignments will find it particularly useful.

Prerequisites to assigning MDTI premium licenses

Your Microsoft account team should have notified you that your MDTI procurement has been processed and asked you to view the available licenses within your tenant. If your agreement has not been fully processed, you will not be able to see the “Defender Threat Intelligence” licenses.

Instructions to assign MDTI Premium Licenses

As mentioned above, global administrators or identity governance administrators are responsible for assigning MDTI Premium licenses to users, and should review the following Microsoft Learn resources for best practices on assigning licenses to users within Microsoft Entra Admin Center:

Tutorial – Manage access to resources in entitlement management – Microsoft Entra ID Governance | Microsoft Learn

Microsoft Entra built-in roles – Microsoft Entra ID | Microsoft Learn
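If you prefer to script seat assignments rather than use the admin center, Microsoft Graph exposes a `POST /users/{id}/assignLicense` action. The sketch below only builds the request and does not send it. The SKU ID is a placeholder you would look up yourself (for example, via `GET /subscribedSkus`), and the access token must belong to an account or app with sufficient license-management permissions. Treat it as an untested starting point rather than official guidance for MDTI.

```python
import json
import urllib.request

GRAPH = "https://graph.microsoft.com/v1.0"


def build_assign_license_request(user_id: str, sku_id: str, token: str) -> urllib.request.Request:
    """Build (but do not send) a Graph assignLicense call for one user."""
    body = {
        "addLicenses": [{"skuId": sku_id, "disabledPlans": []}],
        "removeLicenses": [],
    }
    return urllib.request.Request(
        url=f"{GRAPH}/users/{user_id}/assignLicense",
        data=json.dumps(body).encode("utf-8"),
        headers={"Authorization": f"Bearer {token}", "Content-Type": "application/json"},
        method="POST",
    )


# Placeholder values: replace with a real user, the tenant's SKU ID, and a valid token
req = build_assign_license_request("user@contoso.com", "00000000-0000-0000-0000-000000000000", "TOKEN")
# Sending it requires network access and a valid token: urllib.request.urlopen(req)
```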

Figure 1 – This is how your “Defender Threat Intelligence” MDTI Premium SKU licenses appear in Microsoft Entra Admin Center.

Troubleshooting MDTI Premium Seat Assignments

Ensure that you have the permissions to assign “Defender Threat Intelligence” licenses. Only global administrators or identity governance administrators have the appropriate permissions to assign user licenses.

Check with your Microsoft Account team that your MDTI Premium SKU agreement has been processed.

If you have completed the troubleshooting steps above and still cannot locate your “Defender Threat Intelligence” licenses in Microsoft Entra Admin Center, please work with your Microsoft account team to engage a CSA or another technical resource for further support.

We Want to Hear from You!

Be sure to join our fast-growing community of security pros and experts to provide product feedback and suggestions. Let us know how MDTI is helping your team stay on top of threats. With an open dialogue, we can create a safer internet together. Also, learn more about how to use MDTI to unmask adversaries and address threats here.


Streamlining Azure Marketplace Deployments


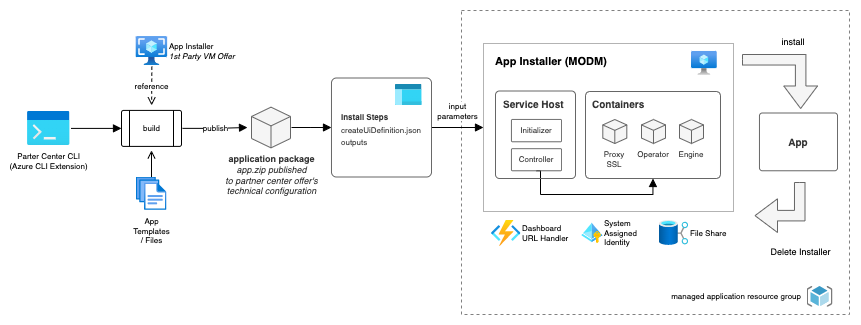

Navigating the complexity of deploying solutions to the Azure Marketplace is a common challenge faced by many of our partners at Microsoft. Recognizing this, our Global Partner Services team has developed a powerful tool to simplify this process: the Commercial Marketplace Offer Deployment Manager, or MODM.

Introducing MODM

MODM is a dedicated, first-party installer designed to streamline the deployment of intricate solutions in the Azure Marketplace. It is especially crafted to support deployments using HashiCorp’s Terraform and Azure Bicep, enhancing the versatility and efficiency of the deployment process.

How MODM Simplifies Deployment

The deployment process with MODM is straightforward, involving two main steps:

Step 1: Create Your Application Package

The initial phase involves packaging your solution into an application package using the Azure CLI Partnercenter Extension. MODM accommodates two types of solutions for packaging:

1. HashiCorp’s Terraform: This popular open-source infrastructure as code tool is now seamlessly supported for Azure Marketplace deployments. Previously, Terraform-based solutions needed conversion to Azure Resource Manager templates, a process that demanded significant development and testing efforts. MODM eliminates this requirement.

2. Azure Bicep: Azure Bicep offers a more readable and concise syntax compared to the JSON of Azure Resource Manager templates. With MODM, converting your Azure Bicep templates to ARM templates is a thing of the past.

Both Terraform and Bicep solutions require minimal prerequisites to be compatible with MODM. Place your solution in a directory with a main entry point file (main.tf for Terraform, main.bicep for Bicep), install the Azure CLI extension for Partnercenter, and execute a single command to create an application package ready for Azure Marketplace.

Simply execute:

```shell
az partnercenter marketplace offer package build --id simpleterraform --src $src_dir --package-type AppInstaller
```
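The prerequisites above come down to a single entry-point file in the source directory. The helper below is a hypothetical pre-flight check (not part of MODM or the Partnercenter CLI extension) that detects which entry point is present and assembles the same `az partnercenter` arguments shown above. It assumes a package should contain exactly one of `main.tf` or `main.bicep`.

```python
from pathlib import Path
from typing import Iterable, List

# Entry-point file names taken from the MODM prerequisites above
ENTRY_POINTS = {"main.tf": "terraform", "main.bicep": "bicep"}


def solution_type_from_files(filenames: Iterable[str]) -> str:
    """Map the files in a source directory to 'terraform' or 'bicep'."""
    found = sorted({ENTRY_POINTS[name] for name in filenames if name in ENTRY_POINTS})
    # Assumption: a package should have exactly one entry point
    if len(found) != 1:
        raise ValueError(f"expected exactly one of {sorted(ENTRY_POINTS)}, found {found}")
    return found[0]


def build_package_command(offer_id: str, src_dir: str) -> List[str]:
    """Check the entry point, then return the CLI arguments shown above."""
    solution_type_from_files(p.name for p in Path(src_dir).iterdir() if p.is_file())
    return ["az", "partnercenter", "marketplace", "offer", "package", "build",
            "--id", offer_id, "--src", src_dir, "--package-type", "AppInstaller"]
```

The returned list can be passed to `subprocess.run(..., check=True)` once the Partnercenter extension is installed.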

Step 2: Publish Your Application Package

Publishing your application package follows the same protocol as any other Marketplace solution. Utilize the Azure CLI Extension for Partnercenter or the Partnercenter Portal for this purpose.

Post-Deployment: Installing Your Published Package

Installing a marketplace offer deployed with MODM is as straightforward as installing any other managed app. A unique aspect of MODM is the inclusion of a user-friendly front-end experience that allows you to monitor the installation progress and troubleshoot any issues that arise. Detailed documentation and a helpful video tutorial on this process are available for further guidance.

MODM’s Architecture Overview

MODM’s architecture is anchored by the App Installer, a virtual machine that plays a pivotal role in the deployment process. This component takes the packaged app.zip from the Partnercenter CLI command and oversees the installation, managing aspects like retries and machine restarts. A detailed breakdown of MODM’s architecture is available in our GitHub documentation.

Educational Resources and Tutorials

To assist you further, we have prepared video tutorials covering various aspects of using MODM:

Packaging Terraform Solutions

Installing the Published Offer

Source Code Repositories

MODM Installer

Azure CLI Partnercenter Extension


Leverage Secure Multi-Party Computation (SMPC) for machine learning inference on rs-fMRI datasets

@Alberto Santamaria-Pang, @Ivan Tarapov, @Yonas Woldesenbet, @Sam Preston, @Rahul Sharma, @Nishanth Chandran, @Divya Gupta, @Kashish Mittal, and @Ajay Manchepalli.

Machine learning models are useful for analyzing patient data, helping detect diseases early, and enabling clinicians to create personalized treatments. However, using these models in healthcare is challenging because it requires accessing and processing sensitive patient data while ensuring patient privacy and complying with strict regulations.

Traditional encryption methods protect data when it is stored, but not while it is being used for computation. One way to compute on encrypted data is to decrypt it inside a trusted region such as a secure enclave, which is the approach taken by Microsoft’s Azure Confidential Computing offering. Alternatively, a cryptographic technique known as Secure Multi-Party Computation (SMPC) can operate directly on encrypted data without decrypting it. SMPC helps ensure that sensitive healthcare data remains secure while enabling healthcare professionals to perform the computations they need to provide better care for patients.

Traditional encryption vs. SMPC

While both traditional encryption methods and Secure Multi-Party Computation (SMPC) offer similar levels of data security, SMPC has the added capability of allowing computations on encrypted data. For instance, in the case of wanting to conduct model inference on an encrypted DICOM image, it’s possible to directly use the encrypted image with SMPC. The additional computational load or overhead of using SMPC depends on the specific function or computation being performed on the encrypted data.

| Comparison criteria | Traditional encryption methods | Secure Multi-Party Computation (SMPC) |
|---|---|---|
| Data exposure | Raw data needs to be decrypted for analysis or use. | Computation is performed on encrypted data. |
| Inference speed | Encryption and decryption overhead is minimal. | Joint computation on encrypted data can introduce latency overhead. |
| Trust assumptions | Relies on a trusted third party or secure infrastructure. | Distributed computation with privacy assurance. |

Figure 1: Traditional encryption methods vs. Secure Multi-Party Computation (SMPC).

SMPC transforms healthcare data analysis and ML

SMPC provides a solution that allows multiple parties to work together on their data without revealing any sensitive information. It helps healthcare providers and researchers securely analyze patient data and use ML models while maintaining patient privacy.

Here are some key benefits of SMPC in the healthcare sector:

Privacy preservation. SMPC protects individual patient data during the computation process. Each party only sees their own data, and the others’ data is hidden. This lets healthcare providers and researchers work together and use more data without risking privacy.

Collaborative research. SMPC facilitates collaborative research among healthcare institutions, enabling them to pool their data resources without compromising privacy. Multiple parties can train ML models together on their combined data while keeping patient records and information safe. This helps improve the ML models in healthcare by using more and different data sources and larger samples.

Secure data sharing. SMPC helps enable healthcare providers to more securely share specific information from their datasets with other authorized parties. For example, when studying rare diseases, healthcare organizations may be able to share some patient data points or features while helping preserve their identity and privacy. This controlled sharing mechanism helps enhance research and contributes to the advancement of medical knowledge.
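One common building block behind “each party only sees its own data” is additive secret sharing. The toy sketch below is illustrative only (EzPC’s actual protocols are more involved and are not reproduced here): it splits a value into two shares modulo 2^64, each of which alone is uniformly random, yet the shares sum back to the secret. Parties can add shared values locally without communicating. The two-hospital scenario is hypothetical.

```python
import secrets

MOD = 2 ** 64  # arithmetic is done in the ring of integers modulo 2^64


def share(value: int) -> tuple:
    """Split value into two additive shares; each share alone looks uniformly random."""
    r = secrets.randbelow(MOD)
    return r, (value - r) % MOD


def reconstruct(s0: int, s1: int) -> int:
    return (s0 + s1) % MOD


# Two hospitals each secret-share a private count; server 0 holds a0, b0 and server 1 holds a1, b1
a0, a1 = share(120)
b0, b1 = share(45)
# Addition needs no communication: each server adds the shares it holds
sum0, sum1 = (a0 + b0) % MOD, (a1 + b1) % MOD
print(reconstruct(sum0, sum1))  # 165
```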

Privacy-preserving ML to improve the security of fMRI data analysis in healthcare

In this blog we explore the application of SMPC to medical image analysis via machine learning techniques for a specific use case of functional Magnetic Resonance Imaging (fMRI) analysis. Applying ML to fMRI data has the potential to revolutionize healthcare by providing insights into brain function and diagnosing neurological disorders. However, the sensitive nature of fMRI data raises significant privacy concerns. To address these challenges, one may employ privacy‑preserving ML techniques, such as data anonymization, secure data encryption, federated learning, and differential privacy, which would allow leveraging the benefits of ML in fMRI analysis while maintaining patient confidentiality and adhering to regulatory requirements.

Before diving into how OnnxBridge (an end-to-end compiler that converts ONNX models to secure cryptographic backends) enables secure machine learning for fMRI data, it is important to understand why fMRI matters for neuroscience research. Functional magnetic resonance imaging (fMRI) is a technique that measures brain activity by detecting changes in blood flow. By using fMRI, researchers can identify which brain regions are involved in different cognitive functions, such as memory, language, or emotion. This is known as functional localization. However, fMRI data is often sensitive and confidential, as it can reveal personal information about the participants’ health, preferences, or personality. Therefore, it is essential to protect the privacy and security of fMRI data when performing machine learning analysis on it.

In the rest of this blog post, we cover these topics:

What rs‑fMRI is and how it measures brain activity by detecting changes in blood flow.

How SMPC protects the privacy and security of fMRI data when performing machine learning analysis using EzPC‑OnnxBridge, a crucial part of the EzPC project from Microsoft Research India (MPC-MSRI, 2021).

How to use EzPC‑OnnxBridge for rs‑fMRI to identify brain regions involved in different cognitive functions.

What is rs‑fMRI and how is it used to localize brain networks?

Unlike traditional fMRI, which captures brain activity during specific tasks or stimuli, rs‑fMRI delves into the spontaneous fluctuations of the brain when it is in a state of rest or free thinking. It explores the intricate networks of communication among different brain regions, shedding light on the underlying functional architecture that forms the foundation of our cognition.

The power of rs-fMRI lies in its ability to measure and analyze blood-oxygen-level-dependent (BOLD) signals. By detecting changes in blood flow and oxygenation, rs-fMRI provides a window into the brain’s dynamic activity during rest. The correlations among these BOLD fluctuations across regions, known as resting-state connectivity, are like whispers of communication between various regions of the brain, even when we are not consciously engaged in any cognitive task.

Through advanced computational algorithms and sophisticated statistical analysis, researchers can map and visualize these functional connections within the brain. However, rs-fMRI has its own challenges and limitations. Interpreting resting-state connectivity requires care, as it represents correlations between brain regions rather than direct causality. Moreover, factors such as participant motion, physiological noise, and data pre-processing methods can influence the results and must be rigorously addressed to help ensure data quality and reliability. This is where ML algorithms can help neuroradiologists efficiently map and visualize brain networks for a range of clinical applications. In this blog post, we provide an example of how to use SMPC to automatically identify and localize brain networks, based on the work published in [3].
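Because resting-state connectivity is expressed as correlations between regional BOLD time series, a minimal illustration is a Pearson correlation matrix across regions. The time series below are hypothetical toy values, and this is generic connectivity analysis, not the dual-regression pipeline used in [3]. Regions with constant signals would make the correlation undefined, which this sketch does not handle.

```python
from math import sqrt
from typing import List


def pearson(x: List[float], y: List[float]) -> float:
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def connectivity_matrix(series: List[List[float]]) -> List[List[float]]:
    """Pairwise correlation between every region's BOLD time series."""
    return [[pearson(a, b) for b in series] for a in series]


# Toy BOLD signals for three regions over six time points (hypothetical values)
regions = [
    [1.0, 2.0, 3.0, 2.0, 1.0, 2.0],
    [2.0, 4.0, 6.0, 4.0, 2.0, 4.0],  # scaled copy of region 0: correlation 1
    [3.0, 2.0, 1.0, 2.0, 3.0, 2.0],  # mirror image of region 0: correlation -1
]
for row in connectivity_matrix(regions):
    print([round(v, 2) for v in row])
# [1.0, 1.0, -1.0]
# [1.0, 1.0, -1.0]
# [-1.0, -1.0, 1.0]
```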

Figure 2: Visualization of brain networks from 3D dual regression volumes.

How SMPC works using EzPC-OnnxBridge

We begin with an overview of how secure multi-party computation (SMPC) works and then describe how EzPC-OnnxBridge can be used in the application described above. EzPC-OnnxBridge makes it possible to use SMPC without any knowledge of cryptography. We will now walk through the steps for using EzPC-OnnxBridge in this application.

SMPC is a cryptographic primitive introduced in the 1980s [4,5] that helps enable two or more parties who hold private data to collaborate (that is, compute joint functions) on their private data without revealing it in the clear to any entity. This is done through an interactive cryptographic protocol: each party performs computations on its data and iteratively exchanges seemingly random messages with the other parties. At the end of this interaction, the parties learn only the output of the joint function. For example, if two parties A and B have private inputs a and b and wish to compute the function y = f(a, b), which outputs 1 if a > b and 0 otherwise, they can run an SMPC protocol to compute exactly y and nothing else. SMPC protocols have been studied extensively in the cryptography community over the last four decades, and recent research, such as the EzPC technology [6,7,8,9], has made SMPC practical for large-scale ML models. In the application of secure machine learning for fMRI data, there are two parties: one holds the machine learning model and the other holds an input data point for inference. For the first party, the weights of the ML model are private, while for the second party, the input data point is private. In typical applications, including ours, the model architecture is public and known to both parties.

1. Identify sensitive data

We first identify the data involved in a single inference between the two parties:

Machine Learning Model (Model Weights + Model Architecture).

Input data for inference.

Image by author using [2].

Of these, the secret (private) data is:

Model weights (obtained by training a publicly available model architecture on private data), held by one party.

Input data, held by the other party.

Image by author using [2].

Typically, model architectures are openly available and contain no proprietary data belonging to either party.

2. Strip ML model of weights

Now that we know which data involved in an inference is secret, the next step is to strip the ML model of its weights so that the model architecture can be shared. This is shown in the figure below.

Image by author using [2].

This step ensures that the secret data plays no part in generating the cryptographic protocols, and it gives us full control over our data, which we supply only at the time of secure inference.

In the image above, we can see the mlp.onnx model before and after its secret data (i.e., the weights and biases of all layers) is stripped and represented as an input value. The resulting model architecture contains no secret data and expects it at runtime.
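In ONNX, trained weights are usually stored as graph initializers, so stripping a model amounts to moving those initializers out of the file and declaring them as runtime inputs instead. The sketch below mimics that idea on a hypothetical `ToyModel` class; it is not the `onnx` Python API and not OnnxBridge's actual code, just an illustration of the split between public architecture and secret weights.

```python
from dataclasses import dataclass, field
from typing import Dict, List

@dataclass
class ToyModel:
    """Hypothetical stand-in for an ONNX graph: operators plus named constant tensors."""
    nodes: List[str]                      # human-readable operator descriptions
    initializers: Dict[str, List[float]]  # weights/biases baked into the model file
    inputs: List[str] = field(default_factory=list)  # values supplied at runtime

def strip_weights(model: ToyModel) -> tuple[ToyModel, Dict[str, List[float]]]:
    """Return (architecture-only model, secret weights).

    Every initializer becomes a runtime input, so the shareable model
    describes only the public architecture."""
    secret = dict(model.initializers)
    public = ToyModel(nodes=list(model.nodes), initializers={},
                      inputs=model.inputs + sorted(secret))
    return public, secret

# Hypothetical two-layer MLP with made-up weights.
mlp = ToyModel(
    nodes=["h = Relu(Gemm(x, W1, b1))", "y = Gemm(h, W2, b2)"],
    initializers={"W1": [0.5, -0.2], "b1": [0.0], "W2": [1.0], "b2": [0.1]},
    inputs=["x"],
)
public, secret = strip_weights(mlp)
print(public.inputs)  # ['x', 'W1', 'W2', 'b1', 'b2']
```

Only `public` would be shared with the other parties; `secret` stays with the model owner and is fed into the secure protocol at inference time.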

3. Generate SMPC protocols from architecture

Once we have the model architecture without weights, we need to convert it into cryptographically secure protocols that run on the secret data and produce the output as if the model were run in plaintext, without any cryptographic protections. This is done with EzPC-OnnxBridge, as depicted below.

Image by author using [2].
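One caveat on "as if run in plaintext": secure computation in the EzPC line of work typically operates on integers in a ring, so real-valued weights and activations are first encoded as fixed-point numbers, and results match plaintext inference up to a small precision loss. The sketch below shows plaintext fixed-point encoding and multiplication with truncation; `SCALE = 12` fractional bits and the 64-bit ring are illustrative assumptions, not confirmed EzPC defaults, and in a real protocol the truncation is itself carried out on secret shares.

```python
SCALE = 12       # fractional bits (assumed for illustration)
MOD = 2 ** 64    # 64-bit ring (assumed for illustration)

def encode(x: float) -> int:
    """Map a real number to a ring element with SCALE fractional bits (two's complement)."""
    return round(x * (1 << SCALE)) % MOD

def decode(v: int) -> float:
    """Inverse of encode; the upper half of the ring represents negative numbers."""
    if v >= MOD // 2:
        v -= MOD
    return v / (1 << SCALE)

def fixed_mul(u: int, v: int) -> int:
    """Multiply two encodings, then drop the extra SCALE fractional bits."""
    prod = (u * v) % MOD
    if prod >= MOD // 2:
        prod -= MOD
    return (prod >> SCALE) % MOD  # arithmetic shift keeps the sign

print(decode(fixed_mul(encode(1.5), encode(-2.25))))  # -3.375
print(decode(encode(0.1)))  # close to, but not exactly, 0.1
```

Values such as 1.5 and -2.25 are exactly representable, so their product survives unchanged, while 0.1 is rounded to the nearest multiple of 2^-12.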

4. Secure inference on private data

Finally, we run the generated cryptographic protocols for each of the two parties involved. These protocols take the secret data as input and exchange encrypted (masked) pieces of data with each other. Strong mathematical guarantees ensure that, at any point, the data being communicated reveals no information about the secret data.

At the end of the computation, the output is revealed to the specified party or parties (one or both) involved in the computation.
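The masked messages, together with the dealer's precomputed randomness described later in the setup steps, can be illustrated with Beaver multiplication triples, a classic textbook technique. A dealer hands out shares of random a, b and c = a*b before any input is known; the parties then multiply secret-shared values by opening only masked differences. This is a minimal sketch assuming two honest-but-curious parties and a trusted dealer; it is not the protocol EzPC generates, whose constructions [6,7,8,9] are considerably more involved.

```python
import secrets

MOD = 2 ** 64  # arithmetic over the ring Z_{2^64} (an assumption for this sketch)

def share(x: int) -> tuple[int, int]:
    """Additively secret-share x between party 0 and party 1."""
    r = secrets.randbelow(MOD)
    return r, (x - r) % MOD

def reconstruct(s0: int, s1: int) -> int:
    return (s0 + s1) % MOD

def dealer_triple():
    """Run by the dealer, independent of any input: shares of random a, b and c = a*b."""
    a, b = secrets.randbelow(MOD), secrets.randbelow(MOD)
    return share(a), share(b), share(a * b % MOD)

def beaver_mul(x_sh, y_sh, triple):
    """Return shares of x*y given shares of x and y and one dealer triple.

    Both parties are simulated in one process here; in a real run each party
    computes its own masked values and they exchange them."""
    (a0, a1), (b0, b1), (c0, c1) = triple
    # Opened values d = x - a and e = y - b are masked by random a and b, so they leak nothing.
    d = reconstruct((x_sh[0] - a0) % MOD, (x_sh[1] - a1) % MOD)
    e = reconstruct((y_sh[0] - b0) % MOD, (y_sh[1] - b1) % MOD)
    # Local step: x*y = c + d*b + e*a + d*e; only party 0 adds the public d*e term.
    z0 = (c0 + d * b0 + e * a0 + d * e) % MOD
    z1 = (c1 + d * b1 + e * a1) % MOD
    return z0, z1

z = beaver_mul(share(6), share(7), dealer_triple())
print(reconstruct(*z))  # 42
```

Expanding c + (x - a)b + (y - b)a + (x - a)(y - b) gives exactly xy, which is why the two output shares recombine to the product while neither party ever sees x or y.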

Using EzPC OnnxBridge for rs-fMRI

EzPC offers an inference app that serves as a front end for SMPC operations. The app provides a graphical user interface (GUI) through which users can upload images and obtain results securely. Next, we'll walk through the steps required to get the app running.

Internally, the app uses OnnxBridge, an end-to-end compiler, to convert ONNX files into SMPC cryptographic protocols. The compiler removes confidential data from models before converting them into SMPC protocols. In this way, EzPC provides a user-friendly interface that facilitates more secure compilation and execution of machine learning models.

Let’s take a look at the practical implementation of OnnxBridge to conduct secure inference using the mlp.onnx model specifically designed for rs‑fMRI (resting‑state functional magnetic resonance imaging) images.

The setup steps in the EzPC GitHub repository will help us get the inference app running. The steps are executed in the following order:

1. Install dependencies for:

Cryptographic backend

Compiler OnnxBridge

2. Set up server (model owner and model processing).

Extract the MLP model from the JHU GitHub repository.

Strip the model of its weights and save them to a file.

Load the stripped model architecture.

Generate the secure backend code for the model architecture.

Share the stripped model architecture with the dealer and client.

3. Set up dealer.

Compile the model architecture received from the server.

Precompute randomness and share it with the server and client; this drastically reduces communication and speeds up inference.

Note: No secret data is involved in generating this randomness.

4. Set up client (acting as image owner).

Compile the model architecture received from the server.

5. Set up inference app.

Encrypt the input image and send it to the client VM, which starts the inference. See the screenshots below.

Step 1: Upload the image.

Step 2: Receive encryption from dealer.

Step 3: Encrypt the image.

Step 4: Start secure inference.

The steps above show how EzPC provides an easy-to-use interface and strong cryptographic backends that let us apply SMPC end to end, without the setup and compilation steps ever interacting with the secret data.

References

MPC-MSRI. (2021). EzPC: Easy Secure Multi-party Computation. GitHub. Retrieved from https://github.com/MPC-MSRI/EzPC.

AmmarPL. (2021). fMRI Classification JHU. GitHub. Retrieved from https://github.com/AmmarPL/fMRI-Classification-JHU.

[1] Empower Medical Innovations: Intel Accelerates PadChest & fMRI Models on Microsoft Azure* Machine Learning. https://www.intel.com/content/www/us/en/developer/articles/technical/intel-accelerates-padchest-fmri-models-on-azure-ml.html

[2] Dsouza, T. Machine Learning Icon, distributed under CC BY 3.0.

[3] Ghate, S., Santamaria-Pang, A., Tarapov, I., Sair, H., & Jones, C. (2022). Deep Labeling of fMRI Brain Networks Using Cloud Based Processing. In: Bebis, G., et al. (eds.) Advances in Visual Computing. ISVC 2022. Lecture Notes in Computer Science, vol 13598. Springer, Cham. https://doi.org/10.1007/978-3-031-20713-6_21

[4] Yao, A. (1982). Protocols for Secure Computations. In Proceedings of the 23rd Annual Symposium on Foundations of Computer Science (pp. 160-164). IEEE.

[5] Goldreich, O., Micali, S., & Wigderson, A. (1987). How to play any mental game or A completeness theorem for protocols with honest majority. In Proceedings of the 19th Annual ACM Symposium on Theory of Computing (pp. 218-229). ACM.

[6] Kumar, N., Rathee, M., Chandran, N., Gupta, D., Rastogi, A., & Sharma, R. (2020). CrypTFlow: Secure TensorFlow Inference. In Proceedings of the 41st IEEE Symposium on Security and Privacy (pp. 1247-1264). IEEE.

[7] Rathee, D., Rathee, M., Kumar, N., Chandran, N., Gupta, D., Rastogi, A., & Sharma, R. (2020). CrypTFlow2: Practical 2 Party Secure Inference. In Proceedings of the 27th ACM Conference on Computer and Communications Security (pp. 1639-1656). ACM.

[8] Chandran, N., Gupta, D., Rastogi, A., Sharma, R., & Tripathi, S. (2019). EzPC: Programmable and Efficient Secure Two-Party Computation for Machine Learning. In Proceedings of the 4th IEEE European Symposium on Security and Privacy (pp. 123-138). IEEE.

[9] Gupta, K., Kumaraswamy, D., Chandran, N., & Gupta, D. (2022). LLAMA: A Low Latency Math Library for Secure Inference. In Proceedings of the Privacy Enhancing Technologies Symposium (PoPETS).

Do more with your data with Microsoft Cloud for Healthcare

With Azure AI Health Insights, health organizations can transform their patient experience.

Microsoft Tech Community – Latest Blogs –Read More

Drive customer engagement with the power of AI

According to a recent IDC study commissioned by Microsoft, “For every $1 a company invests in AI, it is realizing an average return of $3.5X.” Because organizations realize a return on their AI investments within 14 months, customers are highly motivated to find partners with the necessary knowledge and skill set to deploy AI solutions today.

The Microsoft AI Partner Training Roadshow is a single-day, in-person event focused on driving customer engagement with the power of AI. The roadshow provides an exceptional opportunity to engage with Microsoft experts, hear about the latest trends in AI from Microsoft executives, and participate in technical or sales training.

Attend one of the six roadshow events

The Microsoft AI Partner Training Roadshow is scheduled in just six cities across the globe, so there are only a few opportunities for in-depth learning on Microsoft generative and responsible AI technologies, cloud-scale data, and modern application development platforms, including Azure AI services and Microsoft Copilot.

The first event will be on March 1, 2024, in Hyderabad, India, followed by a second event in Bengaluru, India, on March 19. You don’t want to miss this opportunity. Register for an event near you.

Acquire generative and responsible AI knowledge from Microsoft experts

In a recent blog, Judson Althoff outlined four major opportunities where organizations can empower AI transformation:

Enriching employee experience

Reinventing customer engagement

Reshaping business processes

Bending the curve on innovation

Microsoft is focused on developing responsible AI strategies grounded in pragmatic innovation and enabling AI transformation to meet our customers’ needs. The Microsoft AI Partner Training Roadshow provides expert-led sessions and hands-on experiences to enhance your sales, pre-sales, and technical deployment capabilities across these impact areas.

Prepare technical and sales teams for AI success

Open to our Global Systems Integrator (GSI) and System Integrator (SI) partners, the Microsoft AI Partner Training Roadshow offers learning across multiple skill levels and interests. Alongside a keynote address by a Microsoft leader, there are four distinct learning paths for individuals with technical or sales backgrounds:

Sales Excellence with Microsoft AI Services: Master skills to confidently pitch Microsoft AI solutions by diving into solution use cases, exploring responsible AI commitments, and highlighting incentives to increase customer business value.

Technical Excellence with Azure AI: Build your own “Intelligent Agent” copilot to answer customer questions on products and services: Learn to build an “Intelligent Agent” that helps users find products, user profiles, and sales order information. This interactive experience features theoretical and lab sessions that prepare your technical teams to use Azure OpenAI and Azure AI Search.

Technical Excellence with Azure AI: Build a scalable data estate with a custom copilot for conversational data interaction: In this hands-on track, learn how to create a payments and transactions solution. Key subjects explored include business rules for data governance, patch operations for data replication, and customizing copilots for conversational AI.

Technical Excellence with Microsoft 365: Deep dive into the use and deployment of Copilot for Microsoft 365: Gain a fuller understanding of Copilot for Microsoft 365 with technical sessions on architecture, deployment, security, and compliance.

Bridge skill gaps in AI

Because AI is developing rapidly, there is a growing skills gap as employees work to keep up. In fact, 52% of participants in this IDC survey report that a lack of skilled workers is their biggest barrier to implementing and scaling AI. Much of the challenge isn't simply adopting technology but also providing ample opportunities for employees to explore and learn.

To close this gap, the Microsoft AI Partner Training Roadshow is committed to providing up-to-date content for participants to study during and after the event. In addition to live keynote addresses and Q&A sessions, participants will have the chance to interact with and learn from technical and sales subject matter experts on topics that span generative and responsible AI technologies, cloud-scale data, and modern application development platforms, including Azure AI services and Microsoft Copilot.

Prepare for the future

2023 introduced the world to the power of generative AI. Businesses are ready to deploy AI-based solutions as quickly as possible. The Microsoft AI Partner Training Roadshow places developers, solution architects, implementation consultants, and sales & pre-sales consultants at the forefront of AI transformation.

Because there will be no on-demand delivery after the event, we invite you to join us in Hyderabad, Bengaluru, or whichever of the other four cities is most convenient for you.

Visit the Microsoft AI Partner Training Roadshow website and register today to get started.

Microsoft Tech Community – Latest Blogs –Read More

Drive customer engagement with the power of AI

According to a recent IDC study commissioned by Microsoft, “For every $1 a company invests in AI, it is realizing an average return of $3.5X.” Because organizations realize a return on their AI investments within 14 months, customers are highly motivated to find partners with the necessary knowledge and skill set to deploy AI solutions today.

The Microsoft AI Partner Training Roadshow is a single-day, in-person event focused on driving customer engagement with the power of AI. The roadshow provides an exceptional opportunity to engage with Microsoft experts, hear about the latest trends in AI from Microsoft executives, and participate in technical or sales training.

Attend one of the six roadshow events

The Microsoft AI Partner Training Roadshow is scheduled in six cities across the globe, so there are only a few opportunities for deep learning on Microsoft generative and responsible AI technologies, cloud-scale data, and modern application development platforms, including Azure AI services and Microsoft Copilot.

The first event will be on March 1, 2024, in Hyderabad, India, followed by a second event in Bengaluru, India, on March 19. You don’t want to miss this opportunity. Register for an event near you.

Acquire generative and responsible AI knowledge from Microsoft experts

In a recent blog, Judson Althoff outlined four major opportunities where organizations can empower AI transformation:

Enriching employee experience

Reinventing customer engagement

Reshaping business processes

Bending the curve on innovation

Microsoft is focused on developing responsible AI strategies grounded in pragmatic innovation and enabling AI transformation to meet our customers’ needs. The Microsoft AI Partner Training Roadshow provides expert-led sessions and hands-on experiences to enhance your sales, pre-sales, and technical deployment capabilities across these impact areas.

Prepare technical and sales teams for AI success

Open to our Global Systems Integrator (GSI) and System Integrator (SI) partners, the Microsoft AI Partner Training Roadshow offers learning across multiple skill levels and interests. Alongside a keynote address by a Microsoft leader, there are four distinct learning paths for individuals with technical or sales backgrounds:

Sales Excellence with Microsoft AI Services: Master skills to confidently pitch Microsoft AI solutions by diving into solution use cases, exploring responsible AI commitments, and highlighting incentives to increase customer business value.

Technical Excellence with Azure AI: Build your own “Intelligent Agent” copilot to answer customer questions on products and services: Learn to build an “Intelligent Agent” that helps users find products, user profiles, and sales order information. This interactive experience features theoretical and lab sessions that prepare your technical teams to use Azure OpenAI and Azure AI Search.

Technical Excellence with Azure AI: Build a scalable data estate with a custom copilot for conversational data interaction: In this hands-on track, learn how to create a payments and transactions solution. Key subjects explored include business rules for data governance, patch operations for data replication, and customizing copilots for conversational AI.

Technical Excellence with Microsoft 365: Deep dive into the use and deployment of Copilot for Microsoft 365: Gain a fuller understanding of Copilot for Microsoft 365 with technical sessions on architecture, deployment, security, and compliance.

Bridge skill gaps in AI

Because AI is rapidly developing, there is a growing skills gap as employees work to keep up. In fact, 52% of participants of this IDC survey report that the lack of skilled workers is their biggest barrier to implementing and scaling AI. Much of the challenge isn’t simply adopting technology but also providing ample opportunities for employees to explore and learn.

To reconcile this divide, the Microsoft AI Partner Training Roadshow is committed to providing recent, up-to-date content for participants to study during and after the event. In addition to live keynote addresses and Q&A sessions, participants will have the chance to interact with and learn from technical and sales subject matter experts on topics that span generative and responsible AI technologies, cloud-scale data, and modern application development platforms, Azure AI services, and Microsoft Copilot

Prepare for the future

2023 introduced the world to the power of generative AI. Businesses are ready to deploy AI-based solutions as quickly as possible. The Microsoft AI Partner Training Roadshow places developers, solution architects, implementation consultants, and sales & pre-sales consultants at the forefront of AI transformation.

Because there will be no on-demand delivery post-event, we invite you to join us in Hyderabad, Bengaluru, or one of the other four cities across the globe that’s conveniently located near you.

Visit the Microsoft AI Partnership Roadshow website and register today to get started.

Microsoft Tech Community – Latest Blogs –Read More

Drive customer engagement with the power of AI

According to a recent IDC study commissioned by Microsoft, “For every $1 a company invests in AI, it is realizing an average return of $3.5X.” Because organizations realize a return on their AI investments within 14 months, customers are highly motivated to find partners with the necessary knowledge and skill set to deploy AI solutions today.

The Microsoft AI Partner Training Roadshow is a single-day, in-person event focused on driving customer engagement with the power of AI. The roadshow provides an exceptional opportunity to engage with Microsoft experts, hear about the latest trends in AI from Microsoft executives, and participate in technical or sales training.

Attend one of the six roadshow events

The Microsoft AI Partner Training Roadshow is scheduled in six cities across the globe, so there are only a few opportunities for deep learning on Microsoft generative and responsible AI technologies, cloud-scale data, and modern application development platforms, including Azure AI services and Microsoft Copilot.

The first event will be on March 1, 2024, in Hyderabad, India, followed by a second event in Bengaluru, India, on March 19. You don’t want to miss this opportunity. Register for an event near you.

Acquire generative and responsible AI knowledge from Microsoft experts

In a recent blog, Judson Althoff outlined four major opportunities where organizations can empower AI transformation:

Enriching employee experience

Reinventing customer engagement

Reshaping business processes

Bending the curve on innovation

Microsoft is focused on developing responsible AI strategies grounded in pragmatic innovation and enabling AI transformation to meet our customers’ needs. The Microsoft AI Partner Training Roadshow provides expert-led sessions and hands-on experiences to enhance your sales, pre-sales, and technical deployment capabilities across these impact areas.

Prepare technical and sales teams for AI success

Open to our Global Systems Integrator (GSI) and System Integrator (SI) partners, the Microsoft AI Partner Training Roadshow offers learning across multiple skill levels and interests. Alongside a keynote address by a Microsoft leader, there are four distinct learning paths for individuals with technical or sales backgrounds:

Sales Excellence with Microsoft AI Services: Master skills to confidently pitch Microsoft AI solutions by diving into solution use cases, exploring responsible AI commitments, and highlighting incentives to increase customer business value.

Technical Excellence with Azure AI: Build your own “Intelligent Agent” copilot to answer customer questions on products and services: Learn to build an “Intelligent Agent” that helps users find products, user profiles, and sales order information. This interactive experience features theoretical and lab sessions that prepare your technical teams to use Azure OpenAI and Azure AI Search.

Technical Excellence with Azure AI: Build a scalable data estate with a custom copilot for conversational data interaction: In this hands-on track, learn how to create a payments and transactions solution. Key subjects explored include business rules for data governance, patch operations for data replication, and customizing copilots for conversational AI.

Technical Excellence with Microsoft 365: Deep dive into the use and deployment of Copilot for Microsoft 365: Gain a fuller understanding of Copilot for Microsoft 365 with technical sessions on architecture, deployment, security, and compliance.

Bridge skill gaps in AI

Because AI is rapidly developing, there is a growing skills gap as employees work to keep up. In fact, 52% of participants of this IDC survey report that the lack of skilled workers is their biggest barrier to implementing and scaling AI. Much of the challenge isn’t simply adopting technology but also providing ample opportunities for employees to explore and learn.

To reconcile this divide, the Microsoft AI Partner Training Roadshow is committed to providing recent, up-to-date content for participants to study during and after the event. In addition to live keynote addresses and Q&A sessions, participants will have the chance to interact with and learn from technical and sales subject matter experts on topics that span generative and responsible AI technologies, cloud-scale data, and modern application development platforms, Azure AI services, and Microsoft Copilot

Prepare for the future

2023 introduced the world to the power of generative AI. Businesses are ready to deploy AI-based solutions as quickly as possible. The Microsoft AI Partner Training Roadshow places developers, solution architects, implementation consultants, and sales & pre-sales consultants at the forefront of AI transformation.

Because there will be no on-demand delivery post-event, we invite you to join us in Hyderabad, Bengaluru, or one of the other four cities across the globe that’s conveniently located near you.

Visit the Microsoft AI Partnership Roadshow website and register today to get started.

Microsoft Tech Community – Latest Blogs –Read More

Drive customer engagement with the power of AI

According to a recent IDC study commissioned by Microsoft, “For every $1 a company invests in AI, it is realizing an average return of $3.5X.” Because organizations realize a return on their AI investments within 14 months, customers are highly motivated to find partners with the necessary knowledge and skill set to deploy AI solutions today.

The Microsoft AI Partner Training Roadshow is a single-day, in-person event focused on driving customer engagement with the power of AI. The roadshow provides an exceptional opportunity to engage with Microsoft experts, hear about the latest trends in AI from Microsoft executives, and participate in technical or sales training.

Attend one of the six roadshow events

The Microsoft AI Partner Training Roadshow is scheduled in six cities across the globe, so there are only a few opportunities for deep learning on Microsoft generative and responsible AI technologies, cloud-scale data, and modern application development platforms, including Azure AI services and Microsoft Copilot.

The first event will be on March 1, 2024, in Hyderabad, India, followed by a second event in Bengaluru, India, on March 19. You don’t want to miss this opportunity. Register for an event near you.

Acquire generative and responsible AI knowledge from Microsoft experts

In a recent blog, Judson Althoff outlined four major opportunities where organizations can empower AI transformation:

Enriching employee experience

Reinventing customer engagement

Reshaping business processes

Bending the curve on innovation

Microsoft is focused on developing responsible AI strategies grounded in pragmatic innovation and enabling AI transformation to meet our customers’ needs. The Microsoft AI Partner Training Roadshow provides expert-led sessions and hands-on experiences to enhance your sales, pre-sales, and technical deployment capabilities across these impact areas.

Prepare technical and sales teams for AI success

Open to our Global Systems Integrator (GSI) and System Integrator (SI) partners, the Microsoft AI Partner Training Roadshow offers learning across multiple skill levels and interests. Alongside a keynote address by a Microsoft leader, there are four distinct learning paths for individuals with technical or sales backgrounds:

Sales Excellence with Microsoft AI Services: Master skills to confidently pitch Microsoft AI solutions by diving into solution use cases, exploring responsible AI commitments, and highlighting incentives to increase customer business value.

Technical Excellence with Azure AI: Build your own “Intelligent Agent” copilot to answer customer questions on products and services: Learn to build an “Intelligent Agent” that helps users find products, user profiles, and sales order information. This interactive experience features theoretical and lab sessions that prepare your technical teams to use Azure OpenAI and Azure AI Search.

Technical Excellence with Azure AI: Build a scalable data estate with a custom copilot for conversational data interaction: In this hands-on track, learn how to create a payments and transactions solution. Key subjects explored include business rules for data governance, patch operations for data replication, and customizing copilots for conversational AI.

Technical Excellence with Microsoft 365: Deep dive into the use and deployment of Copilot for Microsoft 365: Gain a fuller understanding of Copilot for Microsoft 365 with technical sessions on architecture, deployment, security, and compliance.

Bridge skill gaps in AI

Because AI is rapidly developing, there is a growing skills gap as employees work to keep up. In fact, 52% of participants of this IDC survey report that the lack of skilled workers is their biggest barrier to implementing and scaling AI. Much of the challenge isn’t simply adopting technology but also providing ample opportunities for employees to explore and learn.

To reconcile this divide, the Microsoft AI Partner Training Roadshow is committed to providing recent, up-to-date content for participants to study during and after the event. In addition to live keynote addresses and Q&A sessions, participants will have the chance to interact with and learn from technical and sales subject matter experts on topics that span generative and responsible AI technologies, cloud-scale data, and modern application development platforms, Azure AI services, and Microsoft Copilot

Prepare for the future

2023 introduced the world to the power of generative AI. Businesses are ready to deploy AI-based solutions as quickly as possible. The Microsoft AI Partner Training Roadshow places developers, solution architects, implementation consultants, and sales & pre-sales consultants at the forefront of AI transformation.

Because there will be no on-demand delivery post-event, we invite you to join us in Hyderabad, Bengaluru, or one of the other four cities across the globe that’s conveniently located near you.

Visit the Microsoft AI Partnership Roadshow website and register today to get started.

Microsoft Tech Community – Latest Blogs –Read More

Drive customer engagement with the power of AI

According to a recent IDC study commissioned by Microsoft, “For every $1 a company invests in AI, it is realizing an average return of $3.5X.” Because organizations realize a return on their AI investments within 14 months, customers are highly motivated to find partners with the necessary knowledge and skill set to deploy AI solutions today.

The Microsoft AI Partner Training Roadshow is a single-day, in-person event focused on driving customer engagement with the power of AI. The roadshow provides an exceptional opportunity to engage with Microsoft experts, hear about the latest trends in AI from Microsoft executives, and participate in technical or sales training.

Attend one of the six roadshow events

The Microsoft AI Partner Training Roadshow is scheduled in six cities across the globe, so there are only a few opportunities for deep learning on Microsoft generative and responsible AI technologies, cloud-scale data, and modern application development platforms, including Azure AI services and Microsoft Copilot.

The first event will be on March 1, 2024, in Hyderabad, India, followed by a second event in Bengaluru, India, on March 19. You don’t want to miss this opportunity. Register for an event near you.

Acquire generative and responsible AI knowledge from Microsoft experts

In a recent blog, Judson Althoff outlined four major opportunities where organizations can empower AI transformation:

Enriching employee experience

Reinventing customer engagement

Reshaping business processes

Bending the curve on innovation

Microsoft is focused on developing responsible AI strategies grounded in pragmatic innovation and enabling AI transformation to meet our customers’ needs. The Microsoft AI Partner Training Roadshow provides expert-led sessions and hands-on experiences to enhance your sales, pre-sales, and technical deployment capabilities across these impact areas.

Prepare technical and sales teams for AI success

Open to our Global Systems Integrator (GSI) and System Integrator (SI) partners, the Microsoft AI Partner Training Roadshow offers learning across multiple skill levels and interests. Alongside a keynote address by a Microsoft leader, there are four distinct learning paths for individuals with technical or sales backgrounds:

Sales Excellence with Microsoft AI Services: Master skills to confidently pitch Microsoft AI solutions by diving into solution use cases, exploring responsible AI commitments, and highlighting incentives to increase customer business value.

Technical Excellence with Azure AI: Build your own “Intelligent Agent” copilot to answer customer questions on products and services: Learn to build an “Intelligent Agent” that helps users find products, user profiles, and sales order information. This interactive experience features theoretical and lab sessions that prepare your technical teams to use Azure OpenAI and Azure AI Search.

Technical Excellence with Azure AI: Build a scalable data estate with a custom copilot for conversational data interaction: In this hands-on track, learn how to create a payments and transactions solution. Key subjects explored include business rules for data governance, patch operations for data replication, and customizing copilots for conversational AI.

Technical Excellence with Microsoft 365: Deep dive into the use and deployment of Copilot for Microsoft 365: Gain a fuller understanding of Copilot for Microsoft 365 with technical sessions on architecture, deployment, security, and compliance.

Bridge skill gaps in AI

Because AI is rapidly developing, there is a growing skills gap as employees work to keep up. In fact, 52% of participants of this IDC survey report that the lack of skilled workers is their biggest barrier to implementing and scaling AI. Much of the challenge isn’t simply adopting technology but also providing ample opportunities for employees to explore and learn.

To reconcile this divide, the Microsoft AI Partner Training Roadshow is committed to providing recent, up-to-date content for participants to study during and after the event. In addition to live keynote addresses and Q&A sessions, participants will have the chance to interact with and learn from technical and sales subject matter experts on topics that span generative and responsible AI technologies, cloud-scale data, and modern application development platforms, Azure AI services, and Microsoft Copilot

Prepare for the future

2023 introduced the world to the power of generative AI, and businesses now want to deploy AI-based solutions as quickly as possible. The Microsoft AI Partner Training Roadshow places developers, solution architects, implementation consultants, and sales and pre-sales consultants at the forefront of AI transformation.

Because sessions will not be available on demand after the event, we invite you to join us in Hyderabad, Bengaluru, or whichever of the other four host cities is most convenient for you.

Visit the Microsoft AI Partner Training Roadshow website and register today to get started.

Microsoft Tech Community – Latest Blogs