Tag Archives: microsoft

Excel what if analysis issues etc

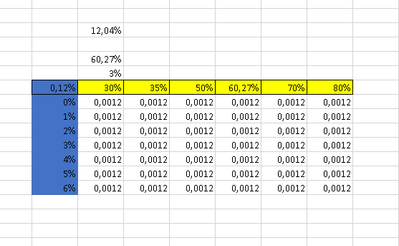

Hi, I have an issue with What-If Analysis: I try to insert the numbers, but the table outcome answers are all the same. Is there an issue with the settings? Thanks in advance. Kind regards, Alex


Microsoft Fabric: Integration with ADO Repos and Deployment Pipelines – A Power BI Case Study.

Introduction

In the dynamic landscape of software development, collaboration stands as a cornerstone for success. Harnessing the power of cutting-edge tools and methodologies, teams strive to streamline their processes, elevate their efficiency, and deliver unparalleled results. In this pursuit, the convergence of GIT, Azure DevOps, and Microsoft Fabric Deployment Strategies emerges as a beacon of innovation, revolutionizing the way organizations approach collaborative development and deployment.

Fabric is an end-to-end, human-centered analytics product that unifies an organization’s data and analytics in one place.

It combines the best features of Microsoft Power BI, Azure Synapse, and Azure Data Factory into a single unified software-as-a-service (SaaS) platform.

This article presents two features of Fabric that make it very powerful for building business intelligence solutions:

Collaborative development and Agile application publishing in the Azure cloud.

Considering Fabric’s extensive array of product items already compatible with source control and deployment pipelines, our focus lies on a flagship product empowered by Fabric: Microsoft Power BI. We firmly believe that short-term development efforts will enable most, if not all, Fabric items to benefit from these features.

In this post we delve into the integration of Fabric with Azure DevOps Repos and the possibilities of Fabric Deployment Pipelines, aimed at enhancing both collaborative development and agile release cycles of Power BI solutions. Our goal is to elucidate the practical application of these technologies through examples, emphasizing their advantages within the Fabric ecosystem, and outline key best practices for utilizing Fabric-GIT and Fabric Deployment Pipelines effectively.

Collaborative development and deployment using Microsoft Fabric.

Suppose there are two developers who must collaborate to obtain a business intelligence application where trend analysis and key performance indicators about Medicines distributed to Hospitals are displayed.

The developers want to keep their work under source control [1], so they need to share a repository. They need to deploy the solution from a DEV Stage to a TEST Stage for testing and, after successful testing, deploy it to a PRODUCTION Stage.

What is the scenario when these developers use Microsoft Fabric?

In Fabric, development and deployment tasks are done using workspaces.

Fabric Workspaces [2] are places to collaborate with colleagues to create collections of items such as lakehouses, warehouses, and reports. A workspace is like a container of the work results of the tools provided by Fabric.

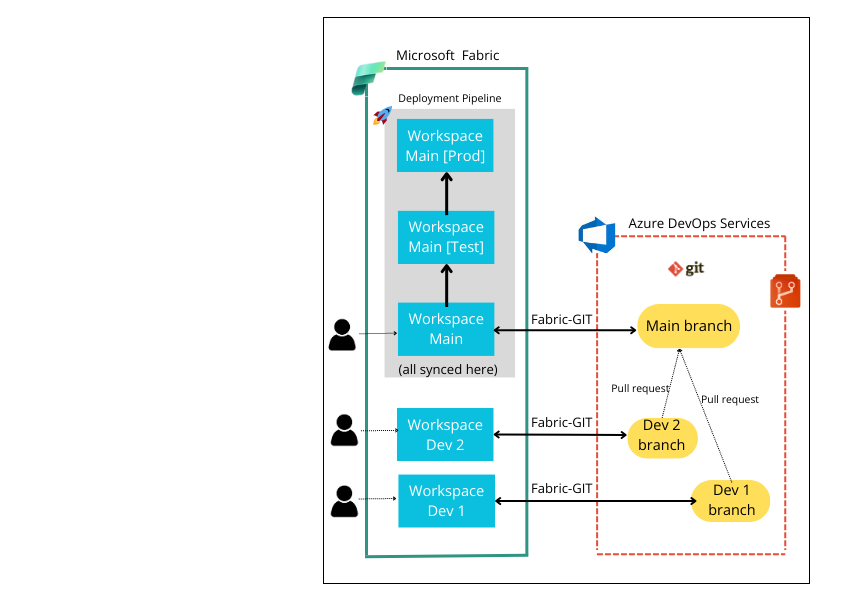

We will describe the architecture of this scenario by means of Figure 1 and provide a step-by-step guide to make the Fabric and GIT integration via an Azure DevOps Repo, and to build the desired deployment pipeline.

Figure 1. Development and Deployment Scenario using Microsoft Fabric and an Azure DevOps Repo.

These are the steps to set up the Fabric and GIT integration:

1. Create a new project and a GIT Repo for this project in Azure DevOps Services.

2. Create one or multiple workspaces within Fabric and grant access to as many developers as needed. In this case, create two workspaces named ‘Workspace Dev 1’ and ‘Workspace Dev 2’. Additionally, create a third workspace, ‘Workspace Main’, intended to store the combined contents of both developers.

3. Create as many branches in the ADO GIT Repo as workspaces in Fabric. Alternatively, create folders within a branch to continue maintaining files when merging to the same branch. In Figure 1, we have created the branches Dev 1, Dev 2 and Main.

4. Integrate each workspace with GIT, pointing to the Organization, the Project, the Branch and the Folder inside the branch.

Figure 2 shows the UI used to set up the Fabric-GIT integration [3]. You will see how to integrate Workspace Dev 1 with GIT.

Similarly, the workspaces Dev 2 and Main can be integrated and synchronized.

Figure 2. Integrating Workspace Dev 1 with GIT.

Each developer can commit changes to their items from their own workspace in Fabric. This is done by using the Source Control option of the workspace. See Figures 3 and 4.

Figure 3. Workspace Dev 1 syncing with the GIT branch of ADO Repo.

Figure 4. Workspace with its content synced.

5. Developers must create pull requests [4] in Azure DevOps to merge the commits to the main branch. See Figure 5.

6. Don’t delete any branch after a merge, so that developers can keep maintaining their items independently.

Figure 5. Creating a pull request in Azure DevOps.

Figure 6. Completing the merging process.

Many developers inquire about collaborating on making changes to the same report.

If multiple developers modify the same report, merge conflicts can arise when attempting to combine their changes into the same branch. [5]

What should be done to combine both contents into a single Power BI report?

1. Clone the branch locally and use VS Code to see the differences between the two versions of the same file.

2. Merge conflicts can be resolved more easily by installing the Pull Request Merge Conflict extension from Microsoft. The extension adds special lines (marking the conflicts) to the report.json file.

3. Navigate to Azure DevOps > Repos > Files, delete all the lines that mark merge conflicts, and commit those changes to the report.json file.

4. Then complete the merge anyway.

5. When trying to sync in Fabric, an error message will be displayed. Once the content is synced, developers may find that the report reflects the changes from one developer but not from the other.

6. To ensure that all developers can view all changes, the process must be repeated: each developer creates a pull request, the changes are merged, and then adjustments are made in the JSON file. Once the necessary modifications are made, the changes are committed to the JSON file, and synchronization in Fabric can be performed.
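The step of deleting the conflict lines can be sketched in Python. This is only an illustration under assumptions, not the extension's own behavior: it assumes the added lines are standard Git conflict markers (`<<<<<<<`, `=======`, `>>>>>>>`), and the function name `strip_conflict_markers` and the sample text are hypothetical.

```python
# Assumption: the conflict lines are standard Git conflict markers.
CONFLICT_PREFIXES = ("<<<<<<<", "=======", ">>>>>>>")


def strip_conflict_markers(text: str) -> str:
    """Return text with conflict-marker lines removed, keeping both sides."""
    kept = [
        line
        for line in text.splitlines()
        if not line.startswith(CONFLICT_PREFIXES)
    ]
    return "\n".join(kept)


# Hypothetical fragment of a conflicted report.json
sample = "\n".join([
    '{',
    '<<<<<<< dev-1',
    '  "theme": "light",',
    '=======',
    '  "theme": "dark",',
    '>>>>>>> main',
    '  "pages": 2',
    '}',
])

cleaned = strip_conflict_markers(sample)
print(cleaned)
```

Because the sketch keeps the content from both sides of each conflict, the resulting JSON still has to be reviewed by hand before it is committed.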

Figure 7. Edition of a report.json file in Azure DevOps.

To prevent merging conflicts, each developer should work on separate reports. Through deployment pipelines, the changes made by all developers can be deployed to test and production environments. A single dashboard can be created by pinning visuals from multiple reports.

Up to this point, we have shown how to obtain updated content in the Main Branch, based on the changes that developers have made in their respective workspaces.

“Main branch” is ready to be deployed now. The tools for the deployment in Fabric are the deployment pipelines.

Deployment Pipelines in Fabric.

Deployment Pipelines are a Fabric feature that can work independently of the integration of workspaces with GIT. [6]

The goal of deployment pipelines is to efficiently transfer content from one workspace to another in an agile manner.

The process followed by Fabric deployment pipelines is completely codeless.

Let’s look at it.

You can create a deployment pipeline outside of all workspaces, or within one of the workspaces.

Figure 8. Creating a New Deployment pipeline out of workspaces.

The first thing is to define a meaningful name for the purpose of the pipeline.

By default, Fabric proposes to use three stages, called DEVELOPMENT, TEST and PRODUCTION.

The stages are essentially Fabric workspaces that you can either associate with existing ones or create when deploying content from the space on the left to the one on the right.

You can also deploy backward, that is, move content from the stage on the right to the stage on the left, to undo changes.

Never has there been such agility in publishing applications in the cloud.

Currently, a Fabric pipeline can be scheduled to run at a specified time. This is not as flexible as the event-based triggers that automate the execution of ADO Pipelines, although ADO Pipelines typically require more coding.

It is convenient to create the deployment pipeline in the workspace where most changes have already been synchronized. In our case that would be “Workspace Main”, but it’s possible to create a pipeline for deploying the content from any of the workspaces.

Figure 9 illustrates the creation of a deployment pipeline in Workspace Main, utilizing this workspace as the initial stage (the DEVELOPMENT Stage). See Figure 1 to see the pipeline.

However, you have the flexibility to change the assigned workspace to any stage as needed. Figure 10 shows an assignment process.

Figure 9. Creation of a deployment pipeline inside a Fabric workspace.

Figure 10. Assign a workspace to a stage.

TEST and PRODUCTION workspaces can either be selected from previously created workspaces or generated by Fabric when deploying content. They are assigned names based on the chosen development stage workspace, followed by either [Test] or [Production].

It’s possible to compare content [7] between stages. Figure 11 shows that Workspace Dev 1 contains two fewer items than the workspace assigned to TEST. This indicates that there are still deployments pending.
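Conceptually, this comparison is a difference between the sets of items in two workspaces. The following Python sketch illustrates the idea only; the item names are hypothetical, and matching items purely by name is a simplification of what Fabric actually compares.

```python
# Hypothetical item names; real workspaces would list reports, semantic models, etc.
dev_items = {"Medicines Report", "Medicines Model"}
test_items = {"Medicines Report", "Medicines Model", "Hospitals Report", "Hospitals Model"}

# Items present in one stage but missing from the other.
only_in_test = sorted(test_items - dev_items)
only_in_dev = sorted(dev_items - test_items)

print("Only in TEST:", only_in_test)
print("Only in DEV:", only_in_dev)
```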

Figure 11. Compare content between stages.

You need to refresh the data in the Power BI service to see the complete report in the target workspace, because Fabric deploys only metadata.

Another amazing feature is the possibility to publish an app from any of the workspaces in the pipeline. You can update the content of your Power BI apps using a deployment pipeline, giving you more control and flexibility when it comes to your app’s lifecycle. Create an app for each deployment pipeline stage, so that you can test each update from an end user’s point of view. Use the publish or view button in the workspace card to publish or view the app in a specific pipeline stage. See Figure 12.

This opens the door to greater participation: individuals can review and exchange views on the specific aspects they wish to submit for review, without the need to create a complex solution to be reviewed later.

Figure 12. Publish the app from any workspace.

Summary of Good Practices.

Collaborative development and agile deployment are two key aspects for the software industry. Microsoft Fabric delivers faster, lower-code scenarios than ever before for business intelligence solutions.

Microsoft Fabric powers the use of Azure DevOps Repos and has realized a content-based application deployment strategy, allowing engineers to focus on everything that matters to the business.

To take full advantage of these benefits, we offer this best practice guide:

1. Create as many branches in the ADO Repo as there are developers collaborating. The Main branch should preferably be handled by whoever oversees development direction.

2. Developers should preferably deal with aspects of the solution that are relatively independent, to avoid merge conflicts over the same file.

3. Create as many workspaces in Fabric as there are participating developers, plus the workspace where all the content will be joined.

4. Keep the contents of each workspace synced with the corresponding Repo branch.

5. Use the workspace that consolidates content as the initial stage in the Fabric pipeline. This approach reduces the number of workspaces required in the solution.

6. Now that continuous integration is easy and requires minimal code, it’s crucial to publish your app frequently to the cloud to gather feedback quickly. Even if it doesn’t include all agreed-upon features, this approach ensures greater end-user satisfaction and commitment to the team.

Resources:

[1] Microsoft, “Seamless Source Control Management,” https://blog.fabric.microsoft.com/en-us/blog/introducing-git-integration-in-microsoft-fabric-for-seamless-source-control-management.

[2] Microsoft, “Getting Started with Workspaces,” https://learn.microsoft.com/en-us/fabric/get-started/workspaces.

[3] Microsoft, “Overview of Fabric Git Integration,” Microsoft Learn.

[4] Microsoft, “Deploy Pull Requests,” https://learn.microsoft.com/en-us/azure/devops/pipelines/release/deploy-pull-request-builds?view=azure-devops.

[5] M. Adnan, “How to solve Fabric and Power BI Azure DevOps GIT Merge Conflict,” https://www.youtube.com/watch?v=Ed1NwUOIoHs.

[6] Microsoft, “Getting Started with Deployment Pipelines,” https://learn.microsoft.com/en-us/fabric/cicd/deployment-pipelines/get-started-with-deployment-pipelines.

[7] Microsoft, “Understand the deployment process,” https://learn.microsoft.com/en-us/fabric/cicd/deployment-pipelines/understand-the-deployment-process.

Microsoft Tech Community – Latest Blogs –Read More

Possible to still save some videos

Hi,

I know deprecation has occurred.

Is it still possible to download some remaining videos from Stream (Classic)?

These are the ones I am interested in

Watch ‘Service Fabric First Party Day – Part 4’ | Microsoft Stream (Classic)

Watch ‘Compute Platform: Goal Seeking and The Concurrent Workflow Framework’ | Microsoft Stream (Classic)

Watch ‘Compute Platform: Goal Seeking in CRP’ | Microsoft Stream (Classic)

Watch ‘Compute Tech talk – Overview of Guest Agent (Zhidong Peng) and Extension (Nathan Kuchta)’ | Microsoft Stream (Classic)

Watch ‘Compute Platform: Dynamic Config’ | Microsoft Stream (Classic)

Watch ‘Azure Compute Platform Speaker Series – Friday, September 7, 2018 10.58.26 PM’ | Microsoft Stream (Classic)

Watch ‘Compute Tech talk: CRP UTDP/R – Unit Test Performance and Reliability – Sean Zimmerman’ | Microsoft Stream (Classic)

Access Releases 11 Issue Fixes in Version 2403 (Released March 27th, 2024)

In this blog post, we highlight some of the fixed issues that our Access engineers released in the current monthly channel.

If you see a bug that impacts you, and if you are on the current monthly channel Version 2403, you should have these fixes. Make sure you are on Access Build # 16.0.17425.20138 or greater.

| Bug Name | Issue Fixed |
| --- | --- |
| Error “Primary key already exists.” when refreshing a link to a SQL Server table. | This error could be generated when using the Refresh Link menu item for a linked table and would also produce Error 3283 when using the TableDef.RefreshLink method in VBA (Visual Basic for Applications) code. This error was also fixed in Version 2402. |
| Data in the extended range of supported characters is not displayed correctly when imported from an Outlook folder. | Some characters would display as ‘?’ rather than the correct character in the import/link wizard. |
| Options in the Paste Special dialog are not displayed correctly in some languages when pasting a hyperlink. | Options should now display in the correct language. |
| When pasting from a Hyperlink field to a Rich Text field, data in the extended range of supported characters may not display correctly. | Some characters would display as ‘?’ rather than the correct character in the Rich Text field. |
| When specifying the description of a database template, data in the extended range of supported characters may not display correctly when using the template to create a new database. | Some characters would display as ‘?’ rather than the correct character in the new database dialog when using the template. |
| Cannot create a new database from a template if a table name contains an ampersand (&). | If a database template were created from a database that contained a table with an ampersand (&) in the name then trying to create a new database using the template would generate an error, “Template could not be instantiated.” Instead, you will now get an error when trying to save the template that the table name that contains an ampersand is not supported. |
| Error when trying to import/link text or Excel files. | Access was always attempting to open text/Excel files exclusively (denying any other access) when importing/linking to the file. This meant that if another application had opened the file for shared access, then the import/link operation would fail. Access will now open the file in a shared mode to allow the operation to succeed. |
| Filename for exported file is not correct when it uses the extended range of supported characters. | Some characters were replaced with an underscore (_) in the filename, rather than using the character specified by the user. |
| No error displayed when there is a failure trying to switch tabs in a Navigation Form. | When switching tabs in a navigation form, a new form may be loaded. If an error occurs when Access attempts to load the new form, then an error message indicating the cause of failure will now be displayed. Previously the form failed to load, but no notification was given. |
| Text for the ribbon button to set the alternate row color for a datasheet reads “Alternative Row Colour” in British English. | The text now reads “Alternate Row Colour.” |
| The term Report is incorrectly localized in some versions of Access. | Some terms need to remain in English for expressions to function correctly, so the localization caused the expression builder to fail to build valid expressions. |

Please continue to let us know if this is helpful and share any feedback you have.


Support tip: Migrate your classic Conditional Access policies

Azure Active Directory (Azure AD) Graph has been deprecated since mid-2023 and is in its retirement phase to allow applications time to migrate to Microsoft Graph. As part of our ongoing efforts to prepare for this, we’ll be updating the Intune Company Portal infrastructure to move to Microsoft Graph. With this update, by July 10, 2024, admins must migrate classic Conditional Access (CA) policies to the new policies and disable or delete the classic ones for the Company Portal and Intune apps to continue working.

For instructions on migrating these policies, see Migrate from a classic policy – Microsoft Entra ID | Microsoft Learn.

How does this affect you or your users?

If you are using classic CA policies, you will need to migrate these policies.

User impact: If you don’t migrate your policies, users won’t be able to enroll new devices via the Company Portal and they won’t be able to make non-compliant devices compliant (if non-compliance is caused by a classic CA policy or a condition within a classic CA policy). This applies to:

Windows Company Portal

Intune Company Portal website

Android Company Portal

Intune app for Android Enterprise

Intune app for Android (AOSP)

iOS Company Portal

macOS Company Portal

If you have questions or comments for the Intune team, reply to this post or reach out on X @IntuneSuppTeam.


Think this requires the IF function?

I have a table (table1) of the list of members with attributes in cells.

| member id | car | country |
| --- | --- | --- |
| 1 | ford | united states |
| 2 | chrysler | ukraine |
| 3 | rolls royce | ukraine |
| 4 | porche | ukraine |

I also have another table (table2) with the list of members and when they visit a cafe which is only open for 3 days a week.

| member id | Mon | Thu | Sat |
| --- | --- | --- | --- |
| 1 | Y | Y | N |
| 2 | Y | Y | Y |
| 3 | N | N | N |
| 4 | N | Y | N |

The question is: “How many Ukrainians have visited the cafe at least once this week?” (The answer is 2.)

What’s the best way to do this?
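One way to reason about the count is to combine two conditions per member: the country matches, and at least one day is marked "Y". The Python sketch below only illustrates that logic with the data from the two tables; it is not an Excel formula, and the function name `count_visitors` is hypothetical.

```python
# Data copied from the two tables in the question.
countries = {1: "united states", 2: "ukraine", 3: "ukraine", 4: "ukraine"}
visits = {
    1: {"Mon": "Y", "Thu": "Y", "Sat": "N"},
    2: {"Mon": "Y", "Thu": "Y", "Sat": "Y"},
    3: {"Mon": "N", "Thu": "N", "Sat": "N"},
    4: {"Mon": "N", "Thu": "Y", "Sat": "N"},
}


def count_visitors(country: str) -> int:
    """Count members from `country` with at least one "Y" in their visit row."""
    return sum(
        1
        for member_id, member_country in countries.items()
        if member_country == country
        and "Y" in visits.get(member_id, {}).values()
    )


print(count_visitors("ukraine"))  # prints 2
```

Members 2 and 4 satisfy both conditions, which matches the expected answer of 2.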


Index/Match or Something Else

I have a worksheet that will look something like this:

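| Last Name | Worked Department | Hours |
| --- | --- | --- |
| Employee 1 | Project 7 | 2 |
| Employee 1 | Project 4 | 3 |
| Employee 1 | Project 5 | 1 |
| Employee 1 | Project 2 | 2 |
| Employee 1 | Project 4 | 1.5 |
| Employee 1 | Project 1 | 2 |
| Employee 1 | Project 2 | 2 |
| Employee 1 | Project 3 | 1 |
| Employee 1 | Project 10 | 1.5 |
| Employee 1 | Project 10 | 3 |
| Employee 1 | Project 4 | 2 |
| Employee 2 | Project 5 | 2 |
| Employee 2 | Project 4 | 1 |
| Employee 2 | Project 1 | 2 |
| Employee 2 | Project 3 | 3 |
| Employee 2 | Project 2 | 2 |
| Employee 2 | Project 4 | 1 |
| Employee 2 | Project 4 | 3 |
| Employee 2 | Project 2 | 2 |
| Employee 2 | Project 3 | 1 |
| Employee 2 | Project 3 | 2 |
| Employee 2 | Project 7 | 8 |
| Employee 3 | Project 5 | 1.5 |
| Employee 3 | Project 5 | 2.5 |
| Employee 3 | Project 5 | 2 |
| Employee 3 | Project 1 | 1 |
| Employee 3 | Project 4 | 1 |
| Employee 3 | Project 3 | 1.5 |
| Employee 3 | Project 4 | 2.5 |
| Employee 3 | Project 4 | 2 |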

This worksheet will show the hours worked for thirty employees by Project worked.

I will be creating another worksheet that will look something like this:

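| | Employee 1 | Employee 2 | Employee 3 | Totals |
| --- | --- | --- | --- | --- |
| Project 1 | | | | – |
| Project 2 | | | | |
| Project 3 | | | | – |
| Project 4 | | | | – |
| Project 5 | | | | – |
| Project 6 | | | | – |
| Project 7 | | | | – |
| Project 8 | | | | – |
| Project 9 | | | | – |
| Project 10 | | | | – |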

I want to write formulas in this worksheet such that it will look at the first worksheet and populate the second worksheet with the hours charged by each employee to each of the projects. In this case the final worksheet would look like this:

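| | Employee 1 | Employee 2 | Employee 3 | Totals |
| --- | --- | --- | --- | --- |
| Project 1 | 2.00 | 2.00 | 2.00 | 6.00 |
| Project 2 | 4.00 | 4.00 | | |
| Project 3 | 1.00 | 6.00 | 2.50 | 9.50 |
| Project 4 | 6.50 | 5.00 | 5.50 | 17.00 |
| Project 5 | 1.00 | 2.00 | 6.00 | 9.00 |
| Project 6 | – | – | – | – |
| Project 7 | 2.00 | 8.00 | – | 10.00 |
| Project 8 | – | – | – | – |
| Project 9 | – | – | – | – |
| Project 10 | – | – | – | – |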

I believe that a pivot table could be used, but I need to do additional calculations using this data that would not work in a pivot table. The second worksheet will be a template for future months’ use.

I would appreciate any help/suggestions.
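The calculation being asked for is a conditional sum: for each project and employee pair, add up the hours of the matching rows. The Python sketch below illustrates that logic on a few rows taken from the first worksheet; the variable names are hypothetical, and it is not a worksheet formula.

```python
from collections import defaultdict

# A few rows from the first worksheet: (employee, project, hours).
rows = [
    ("Employee 1", "Project 7", 2),
    ("Employee 1", "Project 4", 3),
    ("Employee 1", "Project 4", 1.5),
    ("Employee 2", "Project 4", 1),
    ("Employee 2", "Project 7", 8),
]

# totals[project][employee] holds the summed hours for that pair.
totals = defaultdict(lambda: defaultdict(float))
for employee, project, hours in rows:
    totals[project][employee] += hours

# Print one summary row per project: hours per employee, then the row total.
employees = ["Employee 1", "Employee 2", "Employee 3"]
for project in ["Project 4", "Project 7"]:
    per_employee = [totals[project][e] for e in employees]
    print(project, per_employee, sum(per_employee))
```

In a worksheet, SUMIFS is the usual function for this kind of two-condition sum; the exact ranges would depend on how the two sheets are laid out.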


Office 365 Extra File Storage

Hello

Please, I need your help with this issue.

This is for the “Office 365 Extra File Storage” license, which is currently displayed under the Office “F3” licenses.

I noticed the area to manage is grayed out. The accounts checking the status are both tenant and Global admins so we should have the correct permissions.

Here is the link we used to reference the case originally – https://learn.microsoft.com/en-us/microsoft-365/commerce/add-storage-space?view=o365-worldwide

The new license according to the link is supposed to fall under “Your Products” as a separate line item located within the “Billing” in the 365 Admin Console.

We opened the case Feb 21, 2024, and have been working on getting this issue corrected with our subscription to be able to add storage to SharePoint.

For another reference, tenant purchased the license through Insight back in December 2023 using this item – QTY 1000 6WT-00001 O365 Extra File Storage Sub Add-on Extra Storage 1 GB.

Insight then proceeded to push this through to the subscription we have set up for the company.


Outlook 2019 for Mac – Group / Lists?

Hi,

I’m using Outlook for Mac 2019 in classic mode.

I’m looking to create and use a group / list (group is greyed out – see graphic) to send emails to around 25-30 people.

I can create and name a list, but can’t find out where it’s gone or how to use it in an email to communicate with the list members.

Unsure as to the difference between a group and a list.

Any thoughts on what may be happening?

Thanks


Shared calling policy enabled user can not login to teams sip gateway enabled phones like VVX400/500

We have enabled a Shared Calling policy with Rogers Operator Connect and assigned it to users, which works great. However, when logging in to a SIP Gateway-enabled device such as a Polycom VVX400/VVX500, it throws an error asking to assign a phone number to the user in order to log in to this device.

So I ran the Get-CsOnlineUser command for one of the users enabled with Shared Calling, and the LineUri was empty even after assigning the Shared Calling policy, although incoming and outgoing calls were working. Even the user profile in the Teams admin center shows that there is an assigned phone number, but because the LineUri for that user is empty, the user is not allowed to log in to SIP devices.

Is there any workaround or solution to this? I opened a ticket with the Microsoft engineering and product teams, but even they don’t know the reason or the solution. Any help is really appreciated. Thank you.


Referral Sheet With Dependent Drop Downs

Good day.

I’m trying to build a referral sheet for our business. I want to be able to choose a service and then have companies displayed that do those services. Once I choose a company, I want to display their logo and contact information to print out and hand to customers. Is this doable with my existing workbook that contains all of


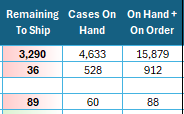

How can I use Pivot Table References for Conditional Formatting?

Hello!

I have a pivot table where the following columns are repeated for several states, so the easiest way to create the conditional formatting would be one that works for the entire pivot table, even as states are selected and deselected and as both the columns and rows grow and shrink. I want the “On Hand + On Order” values to be highlighted when they are less than the “Remaining To Ship” value in the same row. Is it possible to use table references to these columns? If not, is there a clever / elegant way to write a single Conditional Formatting formula that would cover the entire pivot table as the table expands and contracts (both rows and columns)?


Migrate

Hello

Please, I need your help with this issue.

I forgot to migrate my other user.

Do I need to provide an additional license (MS Exchange Online Plan 1), or can this be done at the time of migration?


Outlook iOS Advanced Setup Config Bug/Issue

I came across a bug/issue with how the “advanced setup” section is set up. I use Exchange for work and it goes to our personal mail server. So the settings show as follows:

Email: Example.domain.com

Password

Description: Example

Server: Mail.domain.com

Domain (for my case has to be this): domain\username

Username (Needs to be blank or it will not work)

That all being said, it stopped working recently because I needed to change my password on my local PC, which is also the password to that domain email. Once prompted for new credentials in the Outlook iOS app, I enter them and it fails. I believe with little doubt this is due to the “Username” field in the app, which is REQUIRED to proceed. In my case it has to be blank, with the user name included in the “Domain” field as shown above.

Please Advise,

Tyler J

Learn how to apply Microsoft’s Generative AI technologies to your business

Get ready to dive deep into the world of Generative AI, explore the extensive Microsoft Copilot Ecosystem, and delve into the models and capabilities of Azure AI!

AI Visionaries Circle proudly presents:

“Unlocking Infinite Potential with Microsoft Generative AI”

Boost Your Productivity, Efficiency, and Creativity with Generative AI, Azure AI Models, and Discover Microsoft’s Copilot Ecosystem

Join us for an enlightening series of sessions by registering through the link below:

April 23rd at 9:00am PST

May 8th at 9:00am PST

June 25th at 9:00am PST

Discover how Microsoft’s Generative AI technologies can transform your business, enhancing productivity, efficiency, and fostering creativity. Throughout these sessions, we’ll delve into Azure AI Studio, our product offerings, multi-modal capabilities, the Azure AI model catalog, and provide an overview of Microsoft’s Copilot Ecosystem.

What you can expect:

Inspirational Insights: Witness how Generative AI is reshaping industries and learn how to integrate AI into your business, product, or sector.

Comprehensive Exploration of Microsoft’s Copilot Ecosystem: Uncover the potential of creating your own Copilots, understanding existing Copilots within Microsoft, and the vast opportunities they offer for you and your business.

Azure AI Showcase: Discover the diverse array of models in our model catalog within Azure AI Studio and gain hands-on experience with various Azure AI models to elevate your projects.

Interactive Q&A Session: Engage with our experts and inquire about anything related to Microsoft Generative AI!

Thank you for your interest! We look forward to your participation!

__________________________________________________________________________________________________________________________________________________

What is the AI Visionaries Circle?

The AI Visionaries Circle is an exclusive community that brings together Digital Natives, ISVs (Independent Software Vendors), and Startups. It’s your gateway to explore the cutting-edge Azure AI technologies and stay ahead of the curve.

Image Analysis with Azure Open AI GPT-4V and Azure Data Factory

Earlier this year, I published an article on building a solution with Azure Open AI GPT-4 Turbo with Vision (GPT-4V) to analyze videos with chat completions, all orchestrated with Azure Data Factory. Azure Data Factory is a great framework for making calls to Azure Open AI deployments since ADF offers:

A low-code solution without having to write and deploy apps or web services

Easy and secure integration with other Azure resources with Managed Identities

Features which aid in parameterization, making a single data factory reusable for many different scenarios (for example, an insurance company could use the same data factory to analyze videos or images for car damage as well as for fire damage, storm damage, etc.)

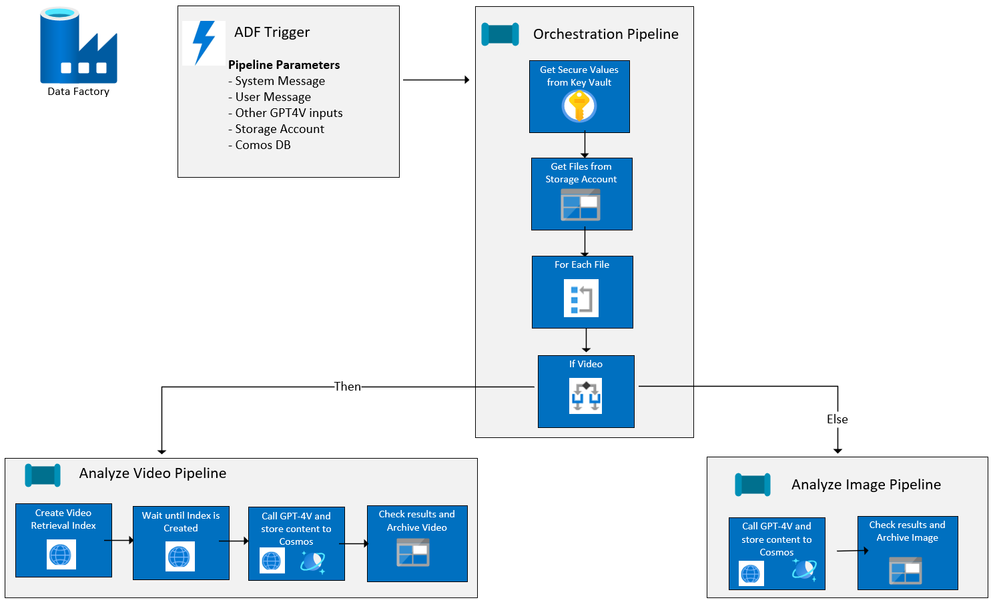

Since the first article was published, I have added image analysis to the ADF solution and have made it easy to deploy to your Azure Subscription from our Github repo, AI-in-a-Box, using azd. Below is the current flow of the ADF solution:

Solution resources and workflow

If you read the original blog, you will see that the Azure resources deployed for this solution are exactly the same. The only thing that has changed is the ADF pipelines. The resources used are:

Azure Key Vault for holding API keys as secrets

Azure Open AI with a GPT-4V Deployment (Preview)

Check here for available models and regions for deployment

Azure Computer Vision with Image Analysis 4.0 (Preview)

Follow the prerequisites in the above link and note the region availability and supported video formats

Azure Data Factory for orchestration

Azure Blob Storage for holding images and videos to be processed

This must be in the same region as your Azure Open AI resource

Azure Cosmos DB Account (NoSQL API), database and container for logging GPT-4V completion response

ADF/GPT-4V for Image and Video Processing – Orchestrator Pipeline

An orchestrator pipeline is the main pipeline that is called by event or at a scheduled time, executing all the activities to be performed, including running other pipelines. The orchestrator pipeline has changed slightly since the original article was written. It now includes image processing and tells GPT-4V to return the results in JSON format.

1. Takes in the specified parameters for the system message, user message, image and/or video storage account, and Cosmos DB account and database information.

Though GPT-4V does not support the JSON response_format at this time, you can still return a string result in JSON format.

In the system_message parameter on the orchestrator pipeline, specify that the results should be formatted in JSON:

The system message says:

Your task is to analyze vehicles for damage. You will be presented videos or images of vehicles. Each video or image will only show a portion of the vehicle and there may be glare on the video or image. You need to inspect the video or image closely and determine if there is any damage to the vehicle, such as dents, scratches, broken lights, broken windows, etc. You will provide the following information in JSON format: {"summary":"", "damage_probability":"", "damage_type":"", "damage_location":""}. Do not return as code block. The definitions for each JSON field are as follows: summary = a description of the vehicle and any damage found; damage_probability = a value between 1 and 10 where 1 is no damage found, 5 is some likelihood of damage, and 10 is obvious damage; damage_type = the type of damage on the vehicle, such as scratches, chips, dents, broken glass; damage_location = the location of the damage on the vehicle such as passenger front door, rear bumper.

At the end of this post, you will see how easy it is to query the results and return the content as a JSON object.

2. Get the secrets from key vault and store them as return variables

3. Set a variable which contains the name/value pair for temperature. The parameter above for temperature returns “temperature” : 0.5

4. Set a variable which contains the name/value pair for top_p. The parameter above is not set so it will be blank.

5. Gets a list of the videos and/or images in the storage account

6. Then for each video or image, checks the file type and executes the appropriate pipeline depending on whether it is a video or image, passing in the appropriate file details and other values for parameters.
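Steps 3 to 6 above can be sketched outside ADF as a few lines of Python. This is only an illustration of the logic, not the pipeline's actual expressions: the function names and the extension sets are hypothetical (the article does not list which file types it checks), while the pipeline names childAnalyzeVideo and childAnalyzeImage come from the article.

```python
from pathlib import PurePosixPath
from typing import Optional

# Hypothetical extension sets; the article does not say which ones it checks.
VIDEO_EXTENSIONS = {".mp4", ".mov", ".avi"}
IMAGE_EXTENSIONS = {".jpg", ".jpeg", ".png", ".gif"}


def format_optional_parameter(name: str, value: Optional[float]) -> str:
    """Return a '"name": value' pair, or an empty string when no value is set."""
    if value is None:
        return ""
    return f'"{name}": {value}'


def choose_pipeline(filename: str) -> Optional[str]:
    """Pick the child pipeline for a file based on its extension."""
    suffix = PurePosixPath(filename).suffix.lower()
    if suffix in VIDEO_EXTENSIONS:
        return "childAnalyzeVideo"
    if suffix in IMAGE_EXTENSIONS:
        return "childAnalyzeImage"
    return None  # neither a video nor an image, so the file is skipped


if __name__ == "__main__":
    print(format_optional_parameter("temperature", 0.5))
    print(repr(format_optional_parameter("top_p", None)))
    for blob in ["car1.mp4", "door.JPG", "notes.txt"]:
        print(blob, "->", choose_pipeline(blob))
```

Leaving an unset parameter as an empty string mirrors the blank top_p variable described in step 4, so the request only carries the values that were actually supplied.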

Video Ingestion/GPT-4V with Pipeline childAnalyzeVideo

The core logic for this pipeline has not changed from the first article. The childAnalyzeVideo pipeline is called from the orchestrator pipeline for each file that is a video rather than an image. It creates a video retrieval index with Azure AI Vision Image Analysis 4.0 and passes that along with the link to the video to GPT-4V, returning the completion results to Cosmos DB. Please refer to the first article if you want more details.

Image Ingestion/GPT-4V with Pipeline childAnalyzeImage

The childAnalyzeImage pipeline is called from the orchestrator pipeline for each file that is an image rather than a video. Below are the details:

Input parameters for the pipeline. Note that ‘Secure input’ is checked on subsequent pipeline activities that use API keys or SAS tokens so their values will not be exposed in the pipeline output.

filename – the image to be analyzed

computer_vision_url – url to your Azure AI Vision resource

vision_api_key – Azure AI Vision token

gpt_4v_deployment_name – deployment name of GPT-4V model

open_ai_key – API key for your Azure Open AI resource

openai_api_base – url to your Azure Open AI resource

sys_message – initial instructions to the model about the task GPT-4V is expected to perform

user_prompt – the query to be answered by GPT-4V

sas_token – Shared Access Signature token

storageaccounturl – endpoint for the storage account

storageaccountfolder – the container/file that contains the images

temperature – formatted temperature value

top_p – formatted top_p value

cosmosaccount – Azure Cosmos DB endpoint

cosmosdb – Azure Cosmos DB name

cosmoscontainer – Azure Cosmos DB container name

temperaturevalue – value between 0 and 2 where 0 is the most accurate and consistent result and 2 is the most creative

top_pvalue – value between 0 and 1 to consider a subset of tokens

Call GPT-4V with inputs including system message and user prompt and store results in Cosmos DB

Copy Data Activity, General settings– note that Secure Input is checked

Copy Data Source settings – REST API Linked Service to GPT-4V Deployment. Check out Use Vision enhancement with images, and Chat Completion API Reference for more detail

Additional columns with pipeline information were added to the source:

Sink to Cosmos DB

Mapping tab includes GPT-4V completion content, number of prompt and completion tokens plus the additional fields:

3. Perform lookup to get Damage Probability

4. Get the Damage Probability value to set the value for the processfolder variable

5. Move the file from the Source folder to the appropriate Sink folder using a binary integration dataset:

Source Settings:

Sink Settings:

That’s it!
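For readers who want to see the image pipeline's logic in code, here is a minimal Python sketch of two of its steps: assembling a chat completions request body that carries an image URL, and mapping the returned damage probability to a folder. It makes several assumptions that are not in the article: the helper names, the max_tokens default, the threshold of 5, and the folder names "damaged" and "undamaged" are all hypothetical, and the body omits the Vision enhancement fields the pipeline may also send.

```python
from typing import Optional


def image_url_with_sas(storageaccounturl: str, storageaccountfolder: str,
                       filename: str, sas_token: str) -> str:
    """Build a blob URL that the model can read by appending the SAS token."""
    base = storageaccounturl.rstrip("/")
    folder = storageaccountfolder.strip("/")
    return f"{base}/{folder}/{filename}?{sas_token.lstrip('?')}"


def build_chat_request(sys_message: str, user_prompt: str, image_url: str,
                       temperature: Optional[float] = None,
                       top_p: Optional[float] = None,
                       max_tokens: int = 800) -> dict:
    """Assemble a chat completions body with one text part and one image part."""
    body = {
        "messages": [
            {"role": "system", "content": sys_message},
            {"role": "user", "content": [
                {"type": "text", "text": user_prompt},
                {"type": "image_url", "image_url": {"url": image_url}},
            ]},
        ],
        "max_tokens": max_tokens,  # assumed default, not taken from the article
    }
    # Only include sampling parameters that were actually supplied.
    if temperature is not None:
        body["temperature"] = temperature
    if top_p is not None:
        body["top_p"] = top_p
    return body


def choose_process_folder(damage_probability: int, threshold: int = 5) -> str:
    """Map the 1-10 damage probability to a sink folder (hypothetical names)."""
    return "damaged" if damage_probability >= threshold else "undamaged"


if __name__ == "__main__":
    url = image_url_with_sas("https://example.blob.core.windows.net",
                             "images", "door.jpg", "sv=placeholder")
    request = build_chat_request("Analyze vehicles for damage.",
                                 "Is there damage?", url, temperature=0.5)
    print(request["messages"][1]["content"][1]["image_url"]["url"])
    print(choose_process_folder(int("7")))
```

In the pipeline itself the equivalent of choose_process_folder is the lookup plus the processfolder variable; the sketch only shows the shape of that decision.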

Query in Cosmos DB:

After running the solution, we can query the results from Cosmos DB. Since we specified to format the results as a JSON string, we can run a simple query to convert the content field from a string to an object:

SELECT c.filename, c.fileurl, c.shortdate,

StringToObject(c.content) as results

FROM c
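If the documents are read by an application instead, the same conversion can be done client-side. The sketch below is an assumption-laden illustration rather than part of the solution: it mirrors the query's projection with json.loads, checks for the four fields named in the system message, and uses a made-up sample document whose values are placeholders.

```python
import json

# The four fields the system message asks GPT-4V to return.
REQUIRED_FIELDS = ("summary", "damage_probability", "damage_type", "damage_location")


def parse_completion(content: str) -> dict:
    """Convert the completion string to a dict and check the expected fields."""
    results = json.loads(content)  # raises json.JSONDecodeError on invalid JSON
    missing = [field for field in REQUIRED_FIELDS if field not in results]
    if missing:
        raise ValueError(f"completion is missing fields: {missing}")
    return results


def project(document: dict) -> dict:
    """Mirror the query's projection: three fields plus the parsed content."""
    return {
        "filename": document["filename"],
        "fileurl": document["fileurl"],
        "shortdate": document["shortdate"],
        "results": parse_completion(document["content"]),
    }


if __name__ == "__main__":
    # Made-up sample document; real values come from the Cosmos DB container.
    sample = {
        "filename": "door.jpg",
        "fileurl": "https://example.blob.core.windows.net/images/door.jpg",
        "shortdate": "2024-04-01",
        "content": '{"summary": "Silver sedan with a dent", '
                   '"damage_probability": "8", "damage_type": "dent", '
                   '"damage_location": "passenger front door"}',
    }
    print(project(sample)["results"]["damage_location"])
```

Because the model returns a plain string, a completion wrapped in a code block or containing extra text will fail to parse, which is why the system message says not to return a code block.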

Deploying the Image and Video Solution in your environment

You can easily deploy this solution, including all the Azure resources cited earlier in this article plus all the Azure Data Factory Pipelines and code, in your own environment!

First, your subscription must be enabled for GPT-4 Turbo with Vision. If it isn’t already, you can go here to apply. This usually takes just a few days.

Then go to Image and Video Analysis-Azure Open AI in-a-box and follow the simple instructions to deploy either from your local Git repo or from Azure Cloud Shell.

Test it out using the videos and images at the end of this article. Or better yet – upload your own videos and images to the Storage Account and change the system and user message parameters to do analysis for your own use cases!

And of course, since you have the entire AI-in-a-Box repo deployed locally or in Azure Cloud Shell, check out the other insightful and easy-to-deploy solutions including chat bots, AI assistants, semantic kernel solutions and more. Follow the instructions and deploy into your subscription to learn, test, and adapt for your own use cases!

Resources:

Azure/AI-in-a-Box (github.com)

How to use the GPT-4 Turbo with Vision model – Azure OpenAI Service | Microsoft Learn

What is Image Analysis? – Azure AI services | Microsoft Learn

Azure Cosmos Db Materialized View general availability

Hi Azure Cosmos DB Team,

Can you please confirm whether materialized views for Cosmos DB are generally available now and can be recommended for production workloads? Also, does the lag for a materialized view to catch up depend only on SKU allocation and provisioned RUs in the source and destination containers? Does consistency have any impact when querying the materialized view, or on the materialized view catch-up in case of heavy writes and updates in the source container? If the account is set up with bounded staleness consistency, will queries against the materialized view also have bounded staleness consistency when using the Cosmos Java SDK? We are using the SQL API.

With Regards,

Nitin Rahim

“gettype()” function in KQL – “double” result

“double” is supposedly not a datatype in Kusto (Copilot says it is a synonym for “real”), but the gettype function will return it as a value…

gettype(123.45) -> “real”

gettype(cm.total) -> “double”

(where cm was a container of measurements used to contain a number of C# double values)

MS should either return “real” or mention “real” in the gettype documentation so programmers writing switch statements will realize that “double” is a possible value that should be handled.
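Until that is settled, client code that branches on the reported type name can treat the two names as one. The following Python sketch is only an illustration of that workaround: the alias table and the category labels are assumptions of this example, not anything documented for Kusto.

```python
# Assumed alias table: treat "double" as another name for "real".
TYPE_ALIASES = {"double": "real"}


def canonical_type(type_name: str) -> str:
    """Collapse alias names so a single branch handles both spellings."""
    return TYPE_ALIASES.get(type_name, type_name)


def describe(type_name: str) -> str:
    """Example switch over type names, as a client of gettype() might write."""
    kind = canonical_type(type_name)
    if kind == "real":
        return "floating-point"
    if kind in ("int", "long"):
        return "integer"
    if kind == "string":
        return "text"
    return "other"


if __name__ == "__main__":
    for name in ("real", "double", "long"):
        print(name, "->", describe(name))
```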

How to check the remaining size of recoverable items folder of a mailbox using powershell

Set-ExecutionPolicy RemoteSigned #changes PowerShell execution policies for Windows computers

Install-Module exchangeonlinemanagement #Install exchange online modules

Import-Module exchangeonlinemanagement #Import exchange online modules

Connect-ExchangeOnline #connect to exchange online service

Get-MailboxFolderStatistics -Identity “use UPN” -FolderScope RecoverableItems | Format-Table Name, FolderAndSubfolderSize, ItemsInFolderAndSubfolders -Auto
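The command above reports how much space the folder uses, not how much is left. To get a remaining figure, the reported size has to be subtracted from the mailbox's recoverable items quota. The Python sketch below shows that arithmetic under two assumptions: that the size is displayed in the form "4.118 MB (4,318,201 bytes)", and that the quota is 30 GB, which should be replaced with the mailbox's actual RecoverableItemsQuota value.

```python
import re


def parse_size_bytes(size_text: str) -> int:
    """Extract the exact byte count from text like '4.118 MB (4,318,201 bytes)'."""
    match = re.search(r"\(([\d,]+) bytes\)", size_text)
    if match is None:
        raise ValueError(f"unrecognized size format: {size_text!r}")
    return int(match.group(1).replace(",", ""))


def remaining_bytes(used_text: str, quota_gb: float = 30.0) -> int:
    """Subtract the used size from the quota (the 30 GB default is an assumption)."""
    quota = int(quota_gb * 1024 ** 3)
    return quota - parse_size_bytes(used_text)


if __name__ == "__main__":
    used = "4.118 MB (4,318,201 bytes)"
    left = remaining_bytes(used)
    print(f"{left / 1024 ** 3:.2f} GB remaining")
```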

new to teams

I would like to know if Teams has anything that is comparable to Monday.com for both individual and group planning?

Thanks

steve
